Airflow Tools

Collection of Operators, Hooks and utility functions aimed at facilitating ELT pipelines.

Overview

This is an opinionated library focused on ELT, which means that our goal is to facilitate loading data from various data sources into a data lake, as well as loading from a data lake to a data warehouse and running transformations inside a data warehouse.

Airflow's operators notoriously suffer from an NxM problem, where if you have N data sources and M destinations you end up with NxM different operators (FTPToS3, S3ToFTP, PostgresToS3, FTPToPostgres etc.). We aim to mitigate this issue in two ways:

- ELT focused: cuts down on the number of possible sources and destinations, as we always want to do source -> data lake -> data warehouse.

- Building common interfaces: where possible we want to treat similar data sources in the same way. Airflow has recently done a good job of this by deprecating all specific SQL operators (PostgresOperator, MySQLOperator, etc.) in favour of a more generic SQLExecuteQueryOperator that works with any hook compatible with the dbapi2 interface. We take this philosophy and apply it any time we can, like providing a unified interface for all filesystem data sources that then enables us to have much more generic operators like SQLToFilesystem and FilesystemToFilesystem.
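To make the arithmetic concrete, here is a rough sketch that counts point-to-point operators against a hub layout in which every source loads into the data lake and the lake loads into every destination. The system names are purely illustrative, and N + M is an idealised count rather than a statement about how many operators this library ships.

```python
from itertools import product

sources = ["ftp", "sftp", "postgres", "http"]         # N = 4 (illustrative names)
destinations = ["snowflake", "bigquery", "redshift"]  # M = 3 (illustrative names)

# Point-to-point: one operator class per (source, destination) pair.
point_to_point = [f"{s}_to_{d}" for s, d in product(sources, destinations)]

# Hub layout: each source loads into the data lake,
# and the data lake loads into each destination.
via_data_lake = [f"{s}_to_data_lake" for s in sources] + [
    f"data_lake_to_{d}" for d in destinations
]

print(len(point_to_point))  # N * M
print(len(via_data_lake))   # N + M
```

A shared filesystem interface could shrink the count further, since several of the "source to data lake" operators would collapse into one generic operator.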

Filesystem Interface/Protocol

We provide a thin wrapper over many hooks for filesystem data sources. These wrappers use each hook's specific methods to implement a set of common methods, which the operators then call without needing to know the hook's concrete type. For now we support the following filesystem hooks. Some are native to Airflow or belong to other providers, and others are implemented in this library:

- WasbHook (Blob Storage/ADLS)

- S3Hook (S3)

- SFTPHook (SFTP)

- FSHook (Local Filesystem)

- AzureFileShareServicePrincipalHook (Azure Fileshare with support for service principal authentication)

- AzureDatabricksVolumeHook (Unity Catalog Volumes)

Operators can create the correct hook at runtime by passing a connection ID with a connection type of aws or adls. Example code:

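from airflow.hooks.base import BaseHook

conn = BaseHook.get_connection(conn_id)  # look up the connection by its ID
hook = conn.get_hook()  # returns the hook that matches the connection type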
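The wrapper idea can be sketched with a structural interface. The example below is a minimal illustration, not the library's actual code: the names FilesystemProtocol, LocalFilesystem, copy_file and the methods read, write and check_file are all hypothetical, and a local temporary directory stands in for a real hook.

```python
import tempfile
from pathlib import Path
from typing import Protocol


class FilesystemProtocol(Protocol):
    """Hypothetical common interface; the library's real method names may differ."""

    def write(self, data: bytes, path: str) -> None: ...
    def read(self, path: str) -> bytes: ...
    def check_file(self, path: str) -> bool: ...


class LocalFilesystem:
    """Toy wrapper around a local directory, standing in for a hook wrapper."""

    def __init__(self, root: str) -> None:
        self.root = Path(root)

    def write(self, data: bytes, path: str) -> None:
        target = self.root / path
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_bytes(data)

    def read(self, path: str) -> bytes:
        return (self.root / path).read_bytes()

    def check_file(self, path: str) -> bool:
        return (self.root / path).is_file()


def copy_file(src: FilesystemProtocol, dst: FilesystemProtocol, path: str) -> None:
    """Generic transfer that relies only on the shared interface."""
    dst.write(src.read(path), path)


with tempfile.TemporaryDirectory() as a, tempfile.TemporaryDirectory() as b:
    source, destination = LocalFilesystem(a), LocalFilesystem(b)
    source.write(b"id,email\n1,a@example.com\n", "entity1/data.csv")
    copy_file(source, destination, "entity1/data.csv")
    copied = destination.read("entity1/data.csv")
    exists = destination.check_file("entity1/data.csv")
```

Because copy_file depends only on the protocol, the same function would work for any pair of wrappers that implement those three methods, which is the property that makes a generic operator such as FilesystemToFilesystem possible.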

Operators

HTTP to Filesystem (Data Lake)

Example usage:

HttpToFilesystem(
    task_id='test_http_to_data_lake',
    http_conn_id='http_test',
    data_lake_conn_id='data_lake_test',
    data_lake_path=s3_bucket + '/source1/entity1/{{ ds }}/',
    endpoint='/api/users',
    method='GET',
    jmespath_expression='data[:2].{id: id, email: email}',
)

Sensors

Filesystem (generic) File Sensor

This sensor checks if a file exists in a generic filesystem. The type of filesystem is determined by the connection type.

Currently supported filesystem connections (conn_type parameter) are:

- aws (s3)

- azure_databricks_volume

- azure_file_share_sp

- sftp

- fs (LocalFileSystem)

- google_cloud_platform

- wasb (BlobStorageFilesystem)

For example, given a connection to an S3 filesystem:

AIRFLOW_CONN_TEST_S3_FILESYSTEM='{"conn_type": "aws", "extra": {"endpoint_url": "http://localhost:9090"}}'

You can use a sensor like this:

FilesystemFileSensor(
    task_id='check_file_existence_exist_in_s3',
    filesystem_conn_id='test_s3_filesystem',
    source_path='data_lake/2023/10/01/test.csv',
    poke_interval=60,
    timeout=300,
)
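Airflow resolves a connection ID from the environment by upper-casing it and prefixing it with AIRFLOW_CONN_, which is why filesystem_conn_id='test_s3_filesystem' picks up the variable defined above. The sketch below shows, outside Airflow and with the standard library only, how the conn_type in that JSON could select a filesystem. The helper conn_type_for and the set SUPPORTED_CONN_TYPES are hypothetical and are not the library's actual dispatch code.

```python
import json
import os

# Same JSON-serialised connection as in the example above.
os.environ["AIRFLOW_CONN_TEST_S3_FILESYSTEM"] = (
    '{"conn_type": "aws", "extra": {"endpoint_url": "http://localhost:9090"}}'
)

# conn_type values taken from the list of supported filesystem connections.
SUPPORTED_CONN_TYPES = {
    "aws",
    "azure_databricks_volume",
    "azure_file_share_sp",
    "sftp",
    "fs",
    "google_cloud_platform",
    "wasb",
}


def conn_type_for(conn_id: str) -> str:
    """Hypothetical helper: read AIRFLOW_CONN_<ID> and validate its conn_type."""
    raw = os.environ[f"AIRFLOW_CONN_{conn_id.upper()}"]
    conn_type = json.loads(raw)["conn_type"]
    if conn_type not in SUPPORTED_CONN_TYPES:
        raise ValueError(f"Unsupported filesystem connection type: {conn_type}")
    return conn_type


resolved = conn_type_for("test_s3_filesystem")
print(resolved)
```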

JMESPATH expressions

APIs often return the response we are interested in wrapped in a key. JMESPath is a query language for JSON that we can use to select the part of the response we are interested in. You can find more information on JMESPath expressions and test them here.

The expression used in the HttpToFilesystem example above, data[:2].{id: id, email: email}, selects the first two objects inside the key data, and then only the id and email attributes of each object. An example response can be found here.
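To illustrate what that expression selects, here is a plain-Python equivalent applied to a made-up response. The payload is hypothetical and the real one depends on the endpoint you call. With the jmespath package installed, jmespath.search('data[:2].{id: id, email: email}', response) should give the same result.

```python
# Hypothetical API response; the real payload depends on the endpoint.
response = {
    "page": 1,
    "data": [
        {"id": 1, "email": "a@example.com", "first_name": "A"},
        {"id": 2, "email": "b@example.com", "first_name": "B"},
        {"id": 3, "email": "c@example.com", "first_name": "C"},
    ],
}

# Plain-Python equivalent of: data[:2].{id: id, email: email}
# 1. take the list under the "data" key, 2. keep the first two objects,
# 3. keep only the "id" and "email" attributes of each object.
selected = [
    {"id": item.get("id"), "email": item.get("email")}
    for item in response["data"][:2]
]

print(selected)
```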

Tests

Integration tests

To guarantee that the library works as intended we have an integration test that attempts to install it in a fresh virtual environment, and we aim to have a test for each Operator.

Running integration tests locally

The lint-and-test.yml workflow sets up the necessary environment variables, but if you want to run them locally you will need the following environment variables:

AIRFLOW_CONN_DATA_LAKE_TEST='{"conn_type": "aws", "extra": {"endpoint_url": "http://localhost:9090"}}'

AIRFLOW_CONN_SFTP_TEST='{"conn_type": "sftp", "host": "localhost", "port": 22, "login": "test_user", "password": "pass"}'

AWS_ACCESS_KEY_ID=AKIAIOSFODNN7EXAMPLE

AWS_SECRET_ACCESS_KEY=wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY

AWS_DEFAULT_REGION=us-east-1

TEST_BUCKET=data_lake

S3_ENDPOINT_URL=http://localhost:9090

You can also set them inline when invoking pytest:

AIRFLOW_CONN_DATA_LAKE_TEST='{"conn_type": "aws", "extra": {"endpoint_url": "http://localhost:9090"}}' AIRFLOW_CONN_SFTP_TEST='{"conn_type": "sftp", "host": "localhost", "port": 22, "login": "test_user", "password": "pass"}' AWS_ACCESS_KEY_ID=AKIAIOSFODNN7EXAMPLE AWS_SECRET_ACCESS_KEY=wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY TEST_BUCKET=data_lake S3_ENDPOINT_URL=http://localhost:9090 poetry run pytest tests/ --doctest-modules --junitxml=junit/test-results.xml --cov=com --cov-report=xml --cov-report=html

And you also need to run Adobe's S3 mock container like this:

docker run --rm -p 9090:9090 -e initialBuckets=data_lake -e debug=true -t adobe/s3mock

and the SFTP container like this:

docker run -p 22:22 -d atmoz/sftp test_user:pass:::root_folder

Notifications

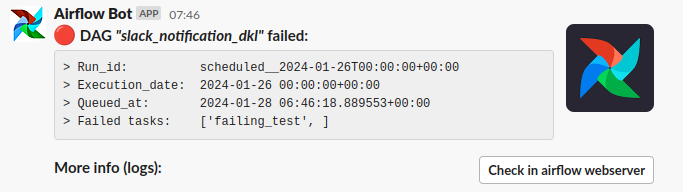

Slack (incoming webhook)

If your or your team are using slack, you can send and receive notifications about failed dags using dag_failure_slack_notification_webhook method

(in notifications.slack.webhook). You need to create a new Slack App and enable the "Incoming Webhooks". More info about sending messages using

Slack Incoming Webhooks here.

You need to create a new Airflow connection with the name SLACK_WEBHOOK_NOTIFICATION_CONN (or AIRFLOW_CONN_SLACK_WEBHOOK_NOTIFICATION_CONN

if you are using environment variables.)

Default message will have the format below:

But you can custom this message providing the below parameters:

- text (str)[optional]: the main message that will appear in the notification. If you provide it, your Slack blocks will be ignored.

- blocks (dict)[optional]: your custom Slack blocks for the message.

- include_blocks (bool)[optional]: indicates whether the default blocks should be used. If you provide your own blocks, this is ignored.

- source (typing.Literal['DAG', 'TASK'])[optional]: source of the failure (DAG or task). Default: DAG.

- image_url (str)[optional]: image URL for your notification (accessory). You can use AIRFLOW_TOOLS__SLACK_NOTIFICATION_IMG_URL instead.

Example of use in a DAG

from datetime import datetime, timedelta

from airflow import DAG
from airflow.operators.bash import BashOperator

from airflow_tools.notifications.slack.webhook import (
    dag_failure_slack_notification_webhook,  # <--- IMPORT
)

with DAG(
    "slack_notification_dkl",
    description="Slack notification on fail",
    schedule=timedelta(days=1),
    start_date=datetime(2021, 1, 1),
    catchup=False,
    tags=["example"],
    on_failure_callback=dag_failure_slack_notification_webhook(),  # <--- HERE
) as dag:
    t = BashOperator(
        task_id="failing_test",
        depends_on_past=False,
        bash_command="exit 1",
        retries=1,
    )

if __name__ == "__main__":
    dag.test()

You can also use it for a single task by passing the parameter source='TASK':

t = BashOperator(
    task_id="failing_test",
    depends_on_past=False,
    bash_command="exit 1",
    retries=1,
    on_failure_callback=dag_failure_slack_notification_webhook(source='TASK'),
)

You can add a custom message (ignoring the slack blocks for a formatted message):

with DAG(
    ...
    on_failure_callback=dag_failure_slack_notification_webhook(
        text='The task {{ ti.task_id }} failed',
        include_blocks=False
    ),
) as dag:
    ...

Or you can pass your own Slack blocks:

custom_slack_blocks = {
    "type": "section",
    "text": {
        "type": "mrkdwn",
        "text": "<https://api.slack.com/reference/block-kit/block|This is an example using custom Slack blocks>"
    }
}

with DAG(
    ...
    on_failure_callback=dag_failure_slack_notification_webhook(
        blocks=custom_slack_blocks
    ),
) as dag:
    ...
