Open world survival game for reinforcement learning.


Status: Stable release

Crafter

Open world survival game for evaluating a wide range of agent abilities within a single environment.

Overview

Crafter features randomly generated 2D worlds where the player needs to forage for food and water, find shelter to sleep, defend against monsters, collect materials, and build tools. Crafter aims to be a fruitful benchmark for reinforcement learning by focusing on the following design goals:

- Research challenges: Crafter poses substantial challenges to current methods, evaluating strong generalization, wide and deep exploration, representation learning, and long-term reasoning and credit assignment.
- Meaningful evaluation: Agents are evaluated by semantically meaningful achievements that can be unlocked in each episode, offering insights into the ability spectrum of both reward-based and unsupervised agents.
- Iteration speed: Crafter evaluates many agent abilities within a single environment, vastly reducing the computational requirements compared to benchmark suites that require training on many separate environments from scratch.

See the research paper to find out more: Benchmarking the Spectrum of Agent Capabilities

```
@article{hafner2021crafter,
  title={Benchmarking the Spectrum of Agent Capabilities},
  author={Danijar Hafner},
  year={2021},
  journal={arXiv preprint arXiv:2109.06780},
}
```

Play Yourself

```sh
python3 -m pip install crafter  # Install Crafter
python3 -m pip install pygame   # Needed for human interface
python3 -m crafter.run_gui      # Start the game
```

Keyboard mapping

| Key | Action |
|---|---|
| WASD | Move around |
| SPACE | Collect material, drink from lake, hit creature |
| TAB | Sleep |
| T | Place a table |
| R | Place a rock |
| F | Place a furnace |
| P | Place a plant |
| 1 | Craft a wood pickaxe |
| 2 | Craft a stone pickaxe |
| 3 | Craft an iron pickaxe |
| 4 | Craft a wood sword |
| 5 | Craft a stone sword |
| 6 | Craft an iron sword |
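Since agents act through 17 discrete action indices rather than keys, it can help to see how the keyboard mapping lines up with them. The sketch below is a stand-alone illustration: `ACTIONS`, `KEYMAP`, and `key_to_action` are hypothetical names, and the action order is an assumption that should be checked against the installed package (to our knowledge it ships its own list, e.g. `crafter.constants.actions`).

```python
# Assumed action order (17 actions); verify against the installed package
# before relying on these indices.
ACTIONS = [
    "noop", "move_left", "move_right", "move_up", "move_down", "do", "sleep",
    "place_stone", "place_table", "place_furnace", "place_plant",
    "make_wood_pickaxe", "make_stone_pickaxe", "make_iron_pickaxe",
    "make_wood_sword", "make_stone_sword", "make_iron_sword",
]

# Hypothetical key-to-action-name table mirroring the keyboard mapping.
KEYMAP = {
    "a": "move_left", "d": "move_right", "w": "move_up", "s": "move_down",
    "space": "do", "tab": "sleep",
    "t": "place_table", "r": "place_stone", "f": "place_furnace", "p": "place_plant",
    "1": "make_wood_pickaxe", "2": "make_stone_pickaxe", "3": "make_iron_pickaxe",
    "4": "make_wood_sword", "5": "make_stone_sword", "6": "make_iron_sword",
}


def key_to_action(key: str) -> int:
    """Return the discrete action index for a key; unmapped keys become noop."""
    name = KEYMAP.get(key.lower(), "noop")
    return ACTIONS.index(name)


print(key_to_action("SPACE"))  # 5
```

Falling back to `noop` for unmapped keys keeps every keypress a valid action, which is convenient when replaying human input through the same action space an agent uses.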

Interface

To install Crafter, run `pip3 install crafter`. The environment follows the OpenAI Gym interface. Observations are images of size (64, 64, 3), and the action space consists of 17 discrete (categorical) actions.

```python
import gym
import crafter  # Importing crafter registers the Crafter environments with Gym.

env = gym.make('CrafterReward-v1')  # Or CrafterNoReward-v1
env = crafter.Recorder(
    env, './path/to/logdir',
    save_stats=True,
    save_video=False,
    save_episode=False,
)

obs = env.reset()
done = False
while not done:
    action = env.action_space.sample()  # Random agent.
    # Classic Gym step API: (observation, reward, done, info).
    obs, reward, done, info = env.step(action)
```
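Agents built on convolutional networks usually rescale and reorder the (64, 64, 3) image before feeding it to the model. The following is a minimal sketch of one common preprocessing choice, not something Crafter requires: it assumes the frame holds uint8 pixel values in [0, 255] and that the model expects channels-first float input (as PyTorch-style convolutions do). `preprocess` is a hypothetical helper, and the random frame only stands in for a real observation from `env.reset()` or `env.step()`.

```python
import numpy as np


def preprocess(obs: np.ndarray) -> np.ndarray:
    """Convert a (64, 64, 3) uint8 frame to a (3, 64, 64) float32 array in [0, 1]."""
    assert obs.shape == (64, 64, 3), obs.shape
    x = obs.astype(np.float32) / 255.0  # scale pixel values to [0, 1]
    return np.transpose(x, (2, 0, 1))   # HWC -> CHW (channels first)


rng = np.random.default_rng(0)
# Stand-in frame with the documented observation shape.
frame = rng.integers(0, 256, size=(64, 64, 3), dtype=np.uint8)
x = preprocess(frame)
print(x.shape, x.dtype)  # (3, 64, 64) float32
```

Frameworks that expect channels-last input can simply skip the transpose.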

Evaluation

Agents are allowed a budget of 1M environment steps and are evaluated by their success rates on the 22 achievements and by their geometric mean score. Example scripts for computing these are included in the `analysis` directory of the repository.
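For a quick check outside the repository's analysis scripts, the two metrics can be computed from per-episode statistics roughly as below. This is a stand-alone sketch under stated assumptions, not the official implementation: it assumes `Recorder(save_stats=True)` writes one JSON object per episode (to our understanding to `stats.jsonl`) with `achievement_<name>` counters, and it assumes the score is S = exp(mean_i ln(1 + s_i)) - 1 with success rates s_i in percent, which is how we read the paper's definition (the offset of 1 keeps a zero rate from collapsing the geometric mean). The achievement list is to our knowledge the package's set of 22; `success_rates` and `crafter_score` are hypothetical helpers.

```python
import json
import math

# The 22 achievement names, to our knowledge as defined by the crafter package.
ACHIEVEMENTS = [
    "collect_coal", "collect_diamond", "collect_drink", "collect_iron",
    "collect_sapling", "collect_stone", "collect_wood", "defeat_skeleton",
    "defeat_zombie", "eat_cow", "eat_plant", "make_iron_pickaxe",
    "make_iron_sword", "make_stone_pickaxe", "make_stone_sword",
    "make_wood_pickaxe", "make_wood_sword", "place_furnace", "place_plant",
    "place_stone", "place_table", "wake_up",
]


def success_rates(lines):
    """Percent of episodes in which each achievement was unlocked at least once.

    Assumes one JSON object per episode with 'achievement_<name>' counters.
    """
    episodes = [json.loads(line) for line in lines if line.strip()]
    if not episodes:
        raise ValueError("no episodes to evaluate")
    return {
        name: 100.0 * sum(ep.get(f"achievement_{name}", 0) > 0 for ep in episodes)
        / len(episodes)
        for name in ACHIEVEMENTS
    }


def crafter_score(rates):
    """Geometric mean of success rates (in percent), offset by 1 so zeros stay finite."""
    logs = [math.log(1.0 + r) for r in rates.values()]
    return math.exp(sum(logs) / len(logs)) - 1.0


# Two made-up episodes for illustration: wood collected in both, table in one.
stats = [
    '{"length": 120, "reward": 1.1, "achievement_collect_wood": 3}',
    '{"length": 90, "reward": 2.0, "achievement_collect_wood": 1, "achievement_place_table": 1}',
]
rates = success_rates(stats)
print(rates["collect_wood"], rates["place_table"])  # 100.0 50.0
print(round(crafter_score(rates), 2))
```

With a real log directory, the `stats` list would be replaced by the lines of the stats file written by the Recorder.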

-

Reward: The sparse reward is

+1for unlocking an achievement during the episode and-0.1or+0.1for lost or regenerated health points. Results should be reported not as reward but as success rates and score. -

Success rates: The success rates of the 22 achievemnts are computed as the percentage across all training episodes in which the achievement was unlocked, allowing insights into the ability spectrum of an agent.

-

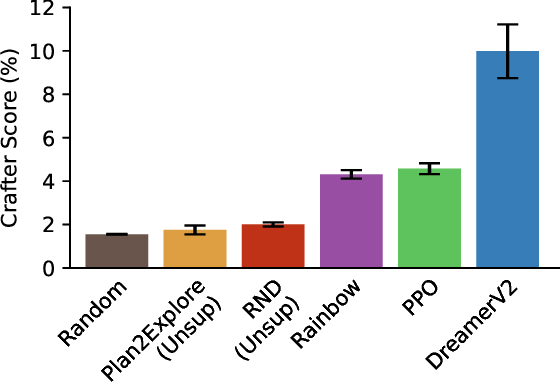

Crafter score: The score is the geometric mean of success rates, so that improvements on difficult achievements contribute more than improvements on achievements with already high success rates.
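The reward rule in the first bullet can be written down as a per-step function. The sketch below follows that rule as stated, it is not taken from the package source: `step_reward` is a hypothetical helper, health is assumed to be an integer count of points, and each achievement earns +1 only the first time it is unlocked in an episode.

```python
def step_reward(prev_health, health, prev_unlocked, achievements):
    """Reward for one step under the stated rule (a sketch, not the package source).

    prev_health, health: health points before and after the step.
    prev_unlocked: set of achievement names already unlocked this episode.
    achievements: mapping from achievement name to its count after the step.
    Returns (reward, updated set of unlocked achievements).
    """
    reward = 0.1 * (health - prev_health)  # -0.1 per lost, +0.1 per regained point
    unlocked = {name for name, count in achievements.items() if count > 0}
    newly = unlocked - prev_unlocked       # only the first unlock per episode counts
    reward += 1.0 * len(newly)
    return reward, prev_unlocked | unlocked


r, seen = step_reward(9, 8, set(), {"collect_wood": 1})
print(round(r, 1))  # 0.9
r, seen = step_reward(8, 8, seen, {"collect_wood": 2})
print(round(r, 1))  # 0.0
```

Collecting wood a second time yields nothing, which is why the reward is sparse and why success rates, not reward, are the recommended metric.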

Scoreboards

Please create a pull request if you would like to add your own or another algorithm to the scoreboards. For the reinforcement learning and unsupervised agents categories, the interaction budget is 1M environment steps. The external knowledge category is defined more broadly.

Reinforcement Learning

| Algorithm | Score (%) | Reward | Open Source |
|---|---|---|---|
| Curious Replay | 19.4±1.6 | — | AutonomousAgentsLab/cr-dv3 |
| PPO (ResNet) | 15.6±1.6 | 10.3±0.5 | snu-mllab/Achievement-Distillation |
| DreamerV3 | 14.5±1.6 | 11.7±1.9 | danijar/dreamerv3 |
| LSTM-SPCNN | 12.1±0.8 | — | astanic/crafter-ood |
| EDE | 11.7±1.0 | — | yidingjiang/ede |
| OC-SA | 11.1±0.7 | — | astanic/crafter-ood |
| DreamerV2 | 10.0±1.2 | 9.0±1.7 | danijar/dreamerv2 |
| PPO | 4.6±0.3 | 4.2±1.2 | DLR-RM/stable-baselines3 |
| Rainbow | 4.3±0.2 | 6.0±1.3 | Kaixhin/Rainbow |

Unsupervised Agents

| Algorithm | Score (%) | Reward | Open Source |
|---|---|---|---|
| Plan2Explore | 2.1±0.1 | 2.1±1.5 | danijar/dreamerv2 |
| RND | 2.0±0.1 | 0.7±1.3 | alirezakazemipour/PPO-RND |
| Random | 1.6±0.0 | 2.1±1.3 | — |

External Knowledge

| Algorithm | Score (%) | Reward | Uses | Interaction | Open Source |
|---|---|---|---|---|---|
| Human | 50.5±6.8 | 14.3±2.3 | Life experience | 0 | crafter_human_dataset |
| SPRING | 27.3±1.2 | 12.3±0.7 | LLM, scene description, Crafter paper | 0 | ❌ |
| Achievement Distillation | 21.8±1.4 | 12.6±0.3 | Reward structure | 1M | snu-mllab/Achievement-Distillation |
| ELLM | — | 6.0±0.4 | LLM, scene description | 5M | ❌ |

Baselines

Baseline scores of various agents are available for Crafter, both with and without rewards. The scores are available in JSON format in the `scores` directory of the repository. For comparison, the score of human expert players is 50.5%. The baseline implementations are available as a separate repository.

Questions

Please open an issue on GitHub.


Source Distribution

File details

Details for the file crafter-1.8.3.tar.gz.

File metadata

- Download URL: crafter-1.8.3.tar.gz

- Upload date:

- Size: 107.6 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.11.5

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | efd72a7e0b8945cfd00eca6c8caf99f746be5f69072853a2f173fa1f85b4088d |
| MD5 | e2571ca239d91cd0cdfb4e0a1c7d5c76 |
| BLAKE2b-256 | 3250dad93d72f9cb97320d9817c34181d3f76e41a934c3c7b91ef4adc7a5ef36 |