Fairness Indicators


Fairness Indicators is designed to support teams in evaluating, improving, and comparing models for fairness concerns, in conjunction with the broader TensorFlow toolkit.

The tool is actively used internally by many of our products. We would love to partner with you to understand where Fairness Indicators is most useful and where added functionality would be valuable. Please reach out at tfx@tensorflow.org. You can provide feedback and feature requests here.

Key links

- Introductory Video

- Fairness Indicators Case Study

- Fairness Indicators Example Colab

- Pandas DataFrame to Fairness Indicators Case Study

- Fairness Indicators: Thinking about Fairness Evaluation

What is Fairness Indicators?

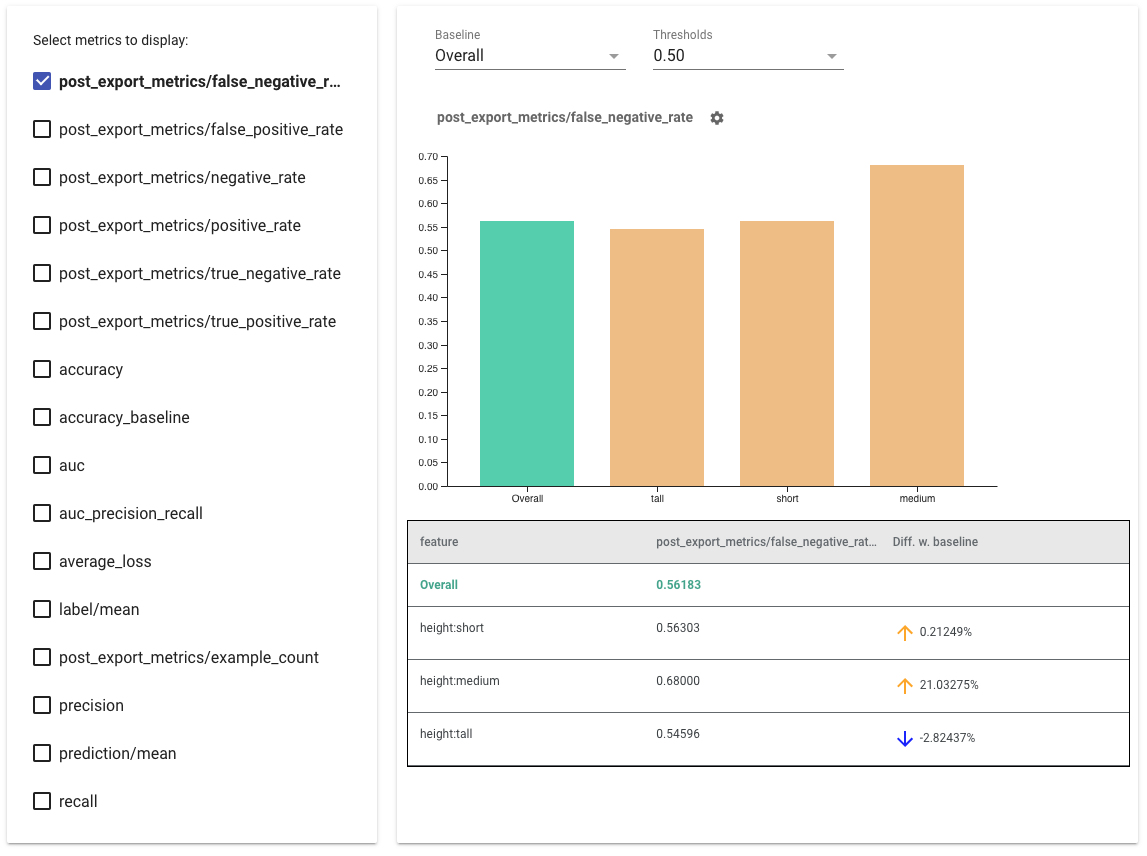

Fairness Indicators enables easy computation of commonly identified fairness metrics for binary and multiclass classifiers.
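To make "fairness metrics" concrete: most of them are ordinary confusion-matrix rates computed separately for each slice (group) and compared across slices at one or more decision thresholds. The sketch below is plain Python, not the Fairness Indicators or TFMA API; the function name `rates_by_slice`, the `(slice_value, label, score)` record shape, and the "score at or above threshold is positive" rule are assumptions for illustration, and it covers only the binary case.

```python
import math
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# Hypothetical record shape for this sketch: (slice_value, label, score),
# where label is 0 or 1 and score is the model's predicted probability.
Example = Tuple[str, int, float]


def rates_by_slice(
    examples: Iterable[Example], thresholds: List[float]
) -> Dict[Tuple[str, float], Dict[str, float]]:
    """Compute false positive/negative rates per (slice, threshold) pair."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for slice_value, label, score in examples:
        for threshold in thresholds:
            # In this sketch a score at or above the threshold counts as positive.
            predicted_positive = score >= threshold
            cell = counts[(slice_value, threshold)]
            if label == 1:
                cell["tp" if predicted_positive else "fn"] += 1
            else:
                cell["fp" if predicted_positive else "tn"] += 1

    results = {}
    for key, c in counts.items():
        negatives = c["fp"] + c["tn"]
        positives = c["tp"] + c["fn"]
        results[key] = {
            # NaN when the slice has no examples of the relevant class.
            "false_positive_rate": c["fp"] / negatives if negatives else math.nan,
            "false_negative_rate": c["fn"] / positives if positives else math.nan,
        }
    return results


data = [
    ("group_a", 0, 0.2), ("group_a", 0, 0.6), ("group_a", 1, 0.8),
    ("group_b", 0, 0.1), ("group_b", 1, 0.4), ("group_b", 1, 0.9),
]
for (group, threshold), metrics in sorted(rates_by_slice(data, [0.3, 0.5]).items()):
    print(group, threshold, metrics)
```

Comparing the same rate across slices at each threshold is what surfaces a disparity; a single overall rate can hide a slice where the model behaves very differently.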

Many existing tools for evaluating fairness concerns don’t work well on large-scale datasets and models. At Google, it is important for us to have tools that can work on billion-user systems. Fairness Indicators allows you to evaluate fairness metrics for use cases of any size.

In particular, Fairness Indicators includes the ability to:

- Evaluate the distribution of datasets

- Evaluate model performance, sliced across defined groups of users

- Gain confidence in your results with confidence intervals and evaluations at multiple thresholds

- Dive deep into individual slices to explore root causes and opportunities for improvement
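Confidence intervals matter most for small slices, where a metric can swing widely from sample noise alone. As a generic illustration (a plain percentile bootstrap, not necessarily the method TFMA uses internally), the sketch below resamples one slice's examples with replacement and reports an interval for its false positive rate at a given threshold. The function names and default parameters are hypothetical.

```python
import math
import random
from typing import List, Sequence, Tuple


def false_positive_rate(labels: Sequence[int], scores: Sequence[float], threshold: float) -> float:
    """Share of negative examples (label 0) scored at or above the threshold."""
    negative_scores = [s for y, s in zip(labels, scores) if y == 0]
    if not negative_scores:
        return math.nan
    return sum(s >= threshold for s in negative_scores) / len(negative_scores)


def bootstrap_ci(
    labels: Sequence[int],
    scores: Sequence[float],
    threshold: float,
    num_resamples: int = 1000,
    alpha: float = 0.05,
    seed: int = 0,
) -> Tuple[float, float]:
    """Percentile bootstrap interval for the false positive rate of one slice."""
    n = len(labels)
    if n == 0:
        return math.nan, math.nan
    rng = random.Random(seed)
    estimates: List[float] = []
    for _ in range(num_resamples):
        # Resample examples with replacement and recompute the metric.
        idx = [rng.randrange(n) for _ in range(n)]
        value = false_positive_rate([labels[i] for i in idx], [scores[i] for i in idx], threshold)
        if not math.isnan(value):  # skip resamples that contain no negatives
            estimates.append(value)
    if not estimates:
        return math.nan, math.nan
    estimates.sort()
    lower = estimates[int((alpha / 2) * (len(estimates) - 1))]
    upper = estimates[int((1 - alpha / 2) * (len(estimates) - 1))]
    return lower, upper


labels = [0, 0, 0, 0, 1, 1, 0, 1, 0, 0]
scores = [0.1, 0.7, 0.3, 0.6, 0.9, 0.4, 0.2, 0.8, 0.55, 0.05]
for t in (0.5, 0.7):
    point = false_positive_rate(labels, scores, t)
    low, high = bootstrap_ci(labels, scores, t)
    print(f"threshold={t}: FPR={point:.2f} (95% CI {low:.2f}-{high:.2f})")
```

A wide interval on a slice is a signal to collect more data for that group before drawing conclusions from a gap between slices.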

This case study, complete with videos and programming exercises, demonstrates how Fairness Indicators can be used on one of your own products to evaluate fairness concerns over time.

Installation

pip install fairness-indicators

The pip package includes:

- TensorFlow Data Validation (TFDV) - analyze the distribution of your dataset

- TensorFlow Model Analysis (TFMA) - analyze model performance

- Fairness Indicators - an addition to TFMA that adds fairness metrics and easy performance comparison across slices

- [The What-If Tool (WIT)](https://github.com/PAIR-code/what-if-tool) - an interactive visual interface designed to help you probe your models

Nightly Packages

Fairness Indicators also hosts nightly packages at https://pypi-nightly.tensorflow.org on Google Cloud. To install the latest nightly package, please use the following command:

pip install --extra-index-url https://pypi-nightly.tensorflow.org/simple fairness-indicators

This installs the nightly packages for the major dependencies of Fairness Indicators, such as TensorFlow Data Validation (TFDV) and TensorFlow Model Analysis (TFMA).

How can I use Fairness Indicators?

TensorFlow Models

- Access Fairness Indicators as part of the Evaluator component in TensorFlow Extended [docs]

- Access Fairness Indicators in TensorBoard when evaluating other real-time metrics [docs]

Not using existing TensorFlow tools? No worries!

- Download the Fairness Indicators pip package, and use TensorFlow Model Analysis as a standalone tool [docs]

- Model Agnostic TFMA enables you to compute Fairness Indicators based on the output of any model [docs]

The examples directory contains several examples:

- Fairness_Indicators_Example_Colab.ipynb gives an overview of Fairness Indicators in TensorFlow Model Analysis and how to use it with a real dataset. This notebook also goes over TensorFlow Data Validation and What-If Tool, two tools for analyzing TensorFlow models that are packaged with Fairness Indicators.

- Fairness_Indicators_on_TF_Hub.ipynb demonstrates how to use Fairness Indicators to compare models trained on different text embeddings. This notebook uses text embeddings from TensorFlow Hub, TensorFlow's library to publish, discover, and reuse model components.

- Fairness_Indicators_TensorBoard_Plugin_Example_Colab.ipynb demonstrates how to visualize Fairness Indicators in TensorBoard.

More questions?

For more information on how to think about fairness evaluation in the context of your use case, see this link.

If you have found a bug in Fairness Indicators, please file a GitHub issue with as much supporting information as you can provide.

Compatible versions

The following table shows the package versions that are compatible with each other. This is determined by our testing framework, but other untested combinations may also work.

| fairness-indicators | tensorflow | tensorflow-data-validation | tensorflow-model-analysis |
|---|---|---|---|
| GitHub master | nightly (1.x/2.x) | 1.17.0 | 0.48.0 |
| v0.48.0 | 2.17 | 1.17.0 | 0.48.0 |
| v0.47.0 | 2.16 | 1.16.1 | 0.47.1 |
| v0.46.0 | 2.15 | 1.15.1 | 0.46.0 |
| v0.44.0 | 2.12 | 1.13.0 | 0.44.0 |
| v0.43.0 | 2.11 | 1.12.0 | 0.43.0 |
| v0.42.0 | 1.15.5 / 2.10 | 1.11.0 | 0.42.0 |
| v0.41.0 | 1.15.5 / 2.9 | 1.10.0 | 0.41.0 |
| v0.40.0 | 1.15.5 / 2.9 | 1.9.0 | 0.40.0 |
| v0.39.0 | 1.15.5 / 2.8 | 1.8.0 | 0.39.0 |
| v0.38.0 | 1.15.5 / 2.8 | 1.7.0 | 0.38.0 |
| v0.37.0 | 1.15.5 / 2.7 | 1.6.0 | 0.37.0 |
| v0.36.0 | 1.15.2 / 2.7 | 1.5.0 | 0.36.0 |
| v0.35.0 | 1.15.2 / 2.6 | 1.4.0 | 0.35.0 |
| v0.34.0 | 1.15.2 / 2.6 | 1.3.0 | 0.34.0 |
| v0.33.0 | 1.15.2 / 2.5 | 1.2.0 | 0.33.0 |
| v0.30.0 | 1.15.2 / 2.4 | 0.30.0 | 0.30.0 |
| v0.29.0 | 1.15.2 / 2.4 | 0.29.0 | 0.29.0 |
| v0.28.0 | 1.15.2 / 2.4 | 0.28.0 | 0.28.0 |
| v0.27.0 | 1.15.2 / 2.4 | 0.27.0 | 0.27.0 |
| v0.26.0 | 1.15.2 / 2.3 | 0.26.0 | 0.26.0 |
| v0.25.0 | 1.15.2 / 2.3 | 0.25.0 | 0.25.0 |
| v0.24.0 | 1.15.2 / 2.3 | 0.24.0 | 0.24.0 |
| v0.23.0 | 1.15.2 / 2.3 | 0.23.0 | 0.23.0 |
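If you want to check an existing environment against the compatibility table above, a small script can read installed versions with the standard library's `importlib.metadata`. This is a minimal sketch, not a tool shipped with the package: it transcribes only the three most recent release rows, omits the `tensorflow` column (which lists only major.minor versions), and the helper names are hypothetical. Because the table reflects tested combinations, a reported mismatch means "untested," not necessarily "broken."

```python
from importlib import metadata
from typing import Dict, Optional

# Rows transcribed from the compatibility table above (tensorflow column omitted).
# A mismatch only means the combination is untested, not that it is broken.
TESTED_COMBINATIONS: Dict[str, Dict[str, str]] = {
    "0.48.0": {"tensorflow-data-validation": "1.17.0", "tensorflow-model-analysis": "0.48.0"},
    "0.47.0": {"tensorflow-data-validation": "1.16.1", "tensorflow-model-analysis": "0.47.1"},
    "0.46.0": {"tensorflow-data-validation": "1.15.1", "tensorflow-model-analysis": "0.46.0"},
}


def installed_version(package: str) -> Optional[str]:
    """Return the installed distribution version, or None if it is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def check_compatibility(fi_version: str, installed: Dict[str, Optional[str]]) -> Dict[str, str]:
    """Return {package: problem} for every dependency that differs from the table."""
    expected = TESTED_COMBINATIONS.get(fi_version)
    if expected is None:
        return {"fairness-indicators": f"{fi_version} is not in the transcribed rows"}
    problems = {}
    for package, wanted in expected.items():
        found = installed.get(package)
        if found != wanted:
            problems[package] = f"tested with {wanted}, found {found}"
    return problems


if __name__ == "__main__":
    fi_version = installed_version("fairness-indicators")
    if fi_version is None:
        print("fairness-indicators is not installed")
    else:
        deps = {
            name: installed_version(name)
            for name in ("tensorflow-data-validation", "tensorflow-model-analysis")
        }
        print(check_compatibility(fi_version, deps) or "matches a tested combination")
```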


File details

Details for the file fairness_indicators-0.48.0-py3-none-any.whl.

File metadata

- Download URL: fairness_indicators-0.48.0-py3-none-any.whl

- Upload date:

- Size: 27.0 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.10.18

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 4421288e9454ac985115963720edaa2f6cad9e836023922b21e432554c4c5677 |
| MD5 | 885cf10448496f05c09fd25da0f8ac2a |
| BLAKE2b-256 | 925c6d79bc584f4decca88fa0a4c2a752de983659afc2954d4033c47f7e9eca4 |