FASTopic


Petr Korab's blog Topic Modelling in Business Intelligence: FASTopic and BERTopic in Code

[NeurIPS 2024 paper] [Video] [TowardsDataScience Blog] [Huggingface Blog]

FASTopic is a fast, adaptive, stable, and transferable topic model, different from the previous conventional (LDA), VAE-based (ProdLDA, ETM), or clustering-based (Top2Vec, BERTopic) methods. It leverages optimal transport between the document, topic, and word embeddings from pretrained Transformers to model topics and topic distributions of documents.
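To give a feel for what "optimal transport between embeddings" means, here is a minimal sketch of generic entropic-regularized optimal transport solved with Sinkhorn iterations. It is not taken from the FASTopic code base and may differ from the formulation in the paper. The function name `sinkhorn`, the toy positions, and the parameters `eps` and `n_iters` are assumptions made for illustration.

```python
import math


def sinkhorn(cost, a, b, eps=0.5, n_iters=200):
    """Entropic-regularized optimal transport via Sinkhorn iterations.

    cost: n x m list of lists, a: n source weights, b: m target weights.
    Returns the n x m transport plan as a list of lists.
    """
    n, m = len(a), len(b)
    # Gibbs kernel: K[i][j] = exp(-cost[i][j] / eps)
    K = [[math.exp(-cost[i][j] / eps) for j in range(m)] for i in range(n)]
    u = [1.0] * n
    v = [1.0] * m
    for _ in range(n_iters):
        # Rescale v so column sums match b, then u so row sums match a.
        v = [b[j] / sum(K[i][j] * u[i] for i in range(n)) for j in range(m)]
        u = [a[i] / sum(K[i][j] * v[j] for j in range(m)) for i in range(n)]
    return [[u[i] * K[i][j] * v[j] for j in range(m)] for i in range(n)]


# Toy setup (hypothetical): 3 "documents" and 2 "topics" placed on a line,
# with squared distance between their positions as the transport cost.
doc_pos = [0.0, 0.1, 1.0]
topic_pos = [0.0, 1.0]
cost = [[(d - t) ** 2 for t in topic_pos] for d in doc_pos]
a = [1 / 3] * 3   # each document carries equal mass
b = [0.5, 0.5]    # each topic receives equal mass
plan = sinkhorn(cost, a, b)
```

Each row of `plan` says how a document's mass is split across topics. Documents that sit close to a topic in the embedding space send most of their mass to it.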

If you want to use FASTopic, please cite our NeurIPS 2024 paper as

@inproceedings{wu2024fastopic,

title={FASTopic: Pretrained Transformer is a Fast, Adaptive, Stable, and Transferable Topic Model},

author={Wu, Xiaobao and Nguyen, Thong Thanh and Zhang, Delvin Ce and Wang, William Yang and Luu, Anh Tuan},

booktitle={The Thirty-eighth Annual Conference on Neural Information Processing Systems},

year={2024}

}

https://github.com/user-attachments/assets/42fc1f2a-2dc9-49c0-baf2-97b6fd6aea70

Tutorials

| Tutorial | Link |

|---|---|

| A complete tutorial on FASTopic. |  |

| FASTopic with other languages. |  |

Installation

Install FASTopic with pip:

pip install fastopic

Otherwise, install FASTopic from the source:

pip install git+https://github.com/bobxwu/FASTopic.git

Quick Start

Discover topics from the 20newsgroups dataset with the number of topics set to 50.

from fastopic import FASTopic

from topmost import Preprocess

from sklearn.datasets import fetch_20newsgroups

docs = fetch_20newsgroups(subset='all', remove=('headers', 'footers', 'quotes'))['data']

preprocess = Preprocess(vocab_size=10000)

model = FASTopic(50, preprocess)

top_words, doc_topic_dist = model.fit_transform(docs)

top_words is a list containing the top words of discovered topics.

doc_topic_dist is the topic distributions of documents (doc-topic distributions), a numpy array with shape $N \times K$ (number of documents $N$ and number of topics $K$).
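A common next step is to find the highest-weight topic of each document. The sketch below does this on a small nested list that stands in for doc_topic_dist, so it runs without FASTopic installed. `dominant_topics` and the toy values are hypothetical and only for illustration.

```python
def dominant_topics(doc_topic_dist):
    """Return (topic index, weight) of the highest-weight topic per document."""
    result = []
    for row in doc_topic_dist:
        best = max(range(len(row)), key=lambda k: row[k])
        result.append((best, row[best]))
    return result


# Stand-in for doc_topic_dist with N = 3 documents and K = 4 topics.
toy_doc_topic_dist = [
    [0.70, 0.10, 0.10, 0.10],
    [0.05, 0.05, 0.80, 0.10],
    [0.25, 0.40, 0.20, 0.15],
]

print(dominant_topics(toy_doc_topic_dist))
# prints [(0, 0.7), (2, 0.8), (1, 0.4)]
```

With the real numpy array, `doc_topic_dist.argmax(axis=1)` gives the same topic indices in one call.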

Usage

Try FASTopic on your dataset

from fastopic import FASTopic

from topmost.preprocess import Preprocess

# Prepare your dataset.

docs = [

'doc 1',

'doc 2', # ...

]

# Preprocess the dataset. This step tokenizes docs, removes stopwords, and sets max vocabulary size, etc.

# preprocess = Preprocess(vocab_size=your_vocab_size, tokenizer=your_tokenizer, stopwords=your_stopwords_set)

preprocess = Preprocess()

model = FASTopic(50, preprocess)

top_words, doc_topic_dist = model.fit_transform(docs)

Save and Load

path = "./tmp/fastopic.zip"

model.save(path)

loaded_model = FASTopic.from_pretrained(path)

beta = loaded_model.get_beta()

doc_topic_dist = loaded_model.transform(docs)

# Keep training

loaded_model.fit_transform(docs, epochs=1)

Topic info

We can get the top words and their probabilities of a topic.

model.get_topic(topic_idx=36)

(('cancer', 0.004797671),

('monkeypox', 0.0044828397),

('certificates', 0.004410268),

('redfield', 0.004407463),

('administering', 0.0043857736))
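The returned value is a tuple of (word, probability) pairs, so it can be unpacked with plain Python. The sketch below reuses the output shown above. `format_topic` is a hypothetical helper, not part of FASTopic.

```python
# Output of model.get_topic(topic_idx=36), copied from the example above.
topic = (
    ('cancer', 0.004797671),
    ('monkeypox', 0.0044828397),
    ('certificates', 0.004410268),
    ('redfield', 0.004407463),
    ('administering', 0.0043857736),
)


def format_topic(topic, precision=4):
    """Render (word, probability) pairs as one readable line."""
    return ", ".join(f"{word} ({prob:.{precision}f})" for word, prob in topic)


# Keep only the words, in their original order.
words = [word for word, _ in topic]

print(words)
print(format_topic(topic))
```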

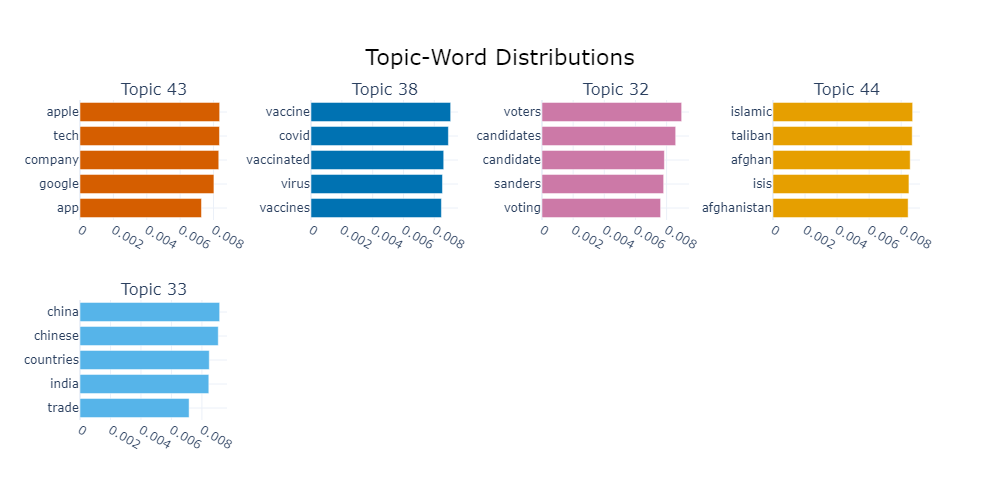

We can visualize this topic information.

fig = model.visualize_topic(top_n=5)

fig.show()

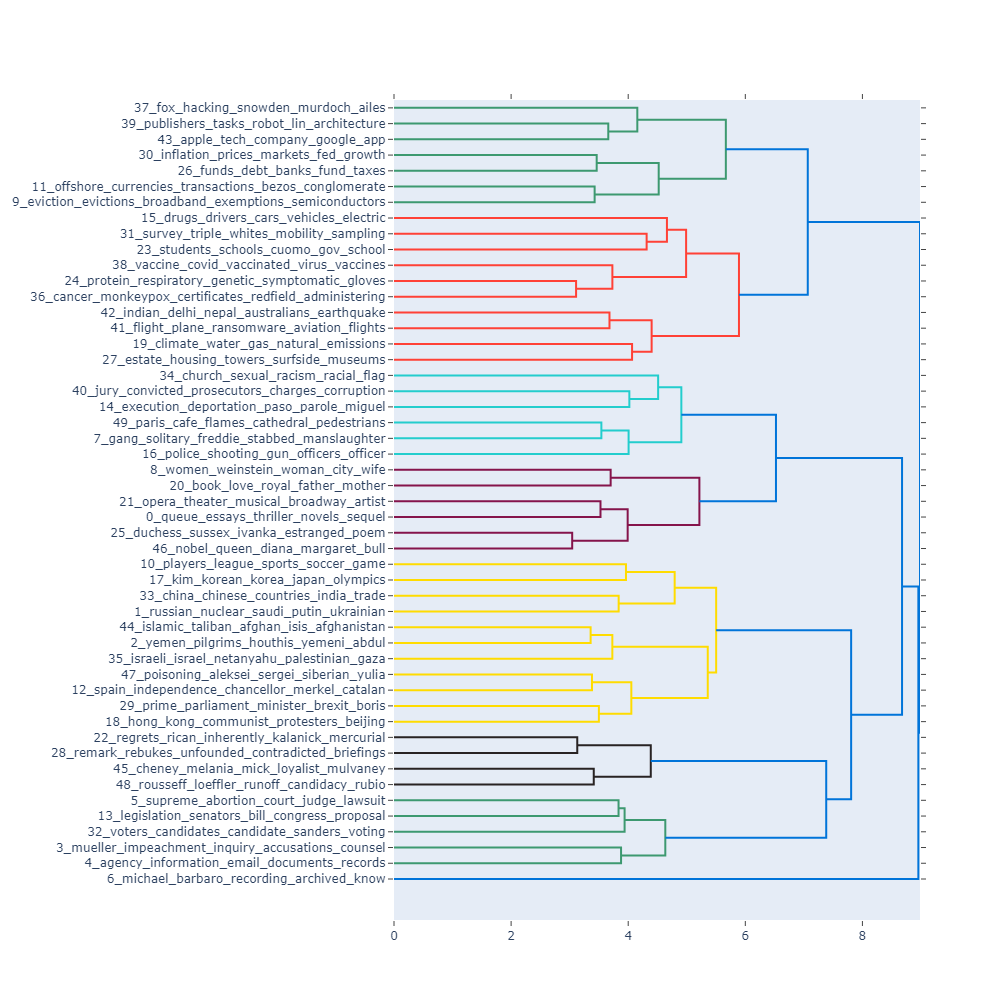

Topic hierarchy

We use the learned topic embeddings and scipy.cluster.hierarchy to build a hierarchy of discovered topics.

fig = model.visualize_topic_hierarchy()

fig.show()

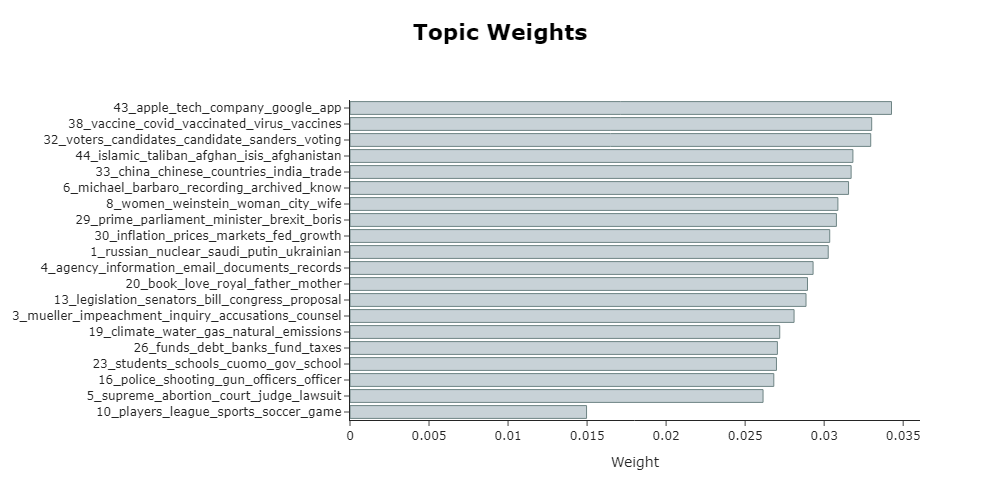

Topic weights

We plot the weights of topics in the given dataset.

fig = model.visualize_topic_weights(top_n=20, height=500)

fig.show()

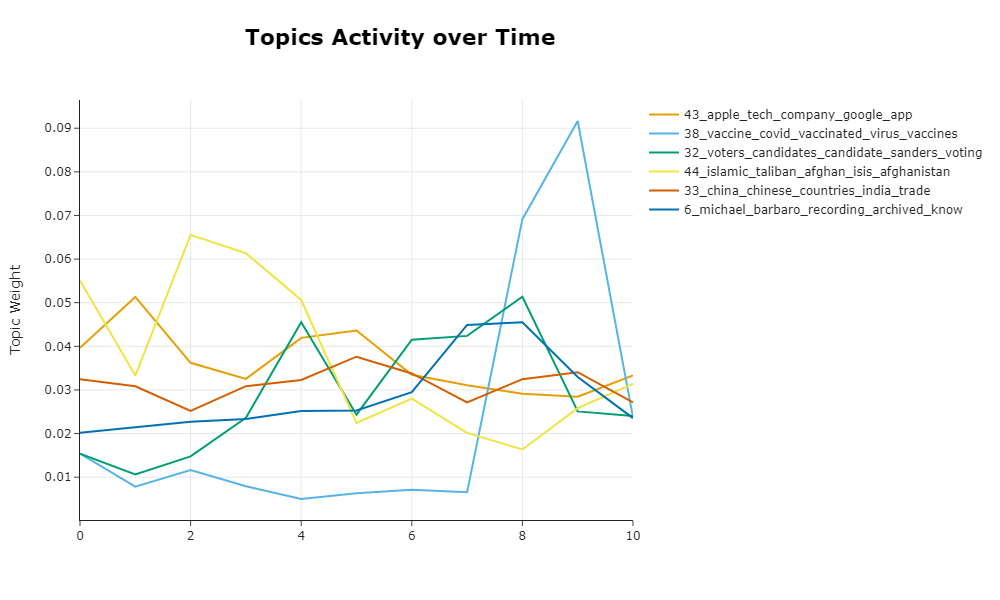
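As a rough illustration of what a dataset-level topic weight could mean, the sketch below averages the doc-topic distributions over all documents and ranks topics by the result. This definition is an assumption made for illustration; get_topic_weights may compute the weights differently. `topic_weights`, `top_n_topics`, and the toy matrix are hypothetical.

```python
def topic_weights(doc_topic_dist):
    """Average each topic's weight over all documents (assumed definition)."""
    n_docs = len(doc_topic_dist)
    n_topics = len(doc_topic_dist[0])
    return [
        sum(row[k] for row in doc_topic_dist) / n_docs
        for k in range(n_topics)
    ]


def top_n_topics(weights, top_n):
    """Return the top_n (topic index, weight) pairs, heaviest first."""
    order = sorted(range(len(weights)), key=lambda k: weights[k], reverse=True)
    return [(k, weights[k]) for k in order[:top_n]]


# Stand-in for doc_topic_dist with 3 documents and 4 topics.
toy_doc_topic_dist = [
    [0.70, 0.10, 0.10, 0.10],
    [0.05, 0.05, 0.80, 0.10],
    [0.25, 0.40, 0.20, 0.15],
]

weights = topic_weights(toy_doc_topic_dist)
top_two = top_n_topics(weights, top_n=2)
```

Here topic 2 ranks first and topic 0 second, mirroring what a plot limited by top_n would keep.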

Topic activity over time

Topic activity refers to the weight of a topic at a time slice.

We additionally pass the time slices of the documents, time_slices, to compute and plot topic activity over time.

act = model.topic_activity_over_time(time_slices)

fig = model.visualize_topic_activity(top_n=6, topic_activity=act, time_slices=time_slices)

fig.show()
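To make the idea concrete, the sketch below sums doc-topic weights within each time slice. It assumes time_slices holds one label per document, in the same order as the documents. The aggregation (a plain sum) and the helper name `topic_activity` are assumptions for illustration and may not match what topic_activity_over_time does internally.

```python
from collections import defaultdict


def topic_activity(doc_topic_dist, time_slices):
    """Sum topic weights of the documents in each time slice.

    Returns {time slice: [weight of topic 0, ..., weight of topic K-1]}.
    """
    if len(doc_topic_dist) != len(time_slices):
        raise ValueError("need exactly one time slice per document")
    n_topics = len(doc_topic_dist[0])
    activity = defaultdict(lambda: [0.0] * n_topics)
    for row, t in zip(doc_topic_dist, time_slices):
        for k, weight in enumerate(row):
            activity[t][k] += weight
    return dict(sorted(activity.items()))


# Toy data (hypothetical): 4 documents, 2 topics, 2 yearly time slices.
toy_doc_topic_dist = [
    [0.9, 0.1],
    [0.7, 0.3],
    [0.2, 0.8],
    [0.4, 0.6],
]
toy_time_slices = [2020, 2020, 2021, 2021]

activity = topic_activity(toy_doc_topic_dist, toy_time_slices)
```

In this toy data topic 0 dominates 2020 and topic 1 dominates 2021, which is the kind of shift the activity plot is meant to reveal.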

APIs

We summarize the frequently used APIs of FASTopic here for easy lookup.

Common

| Method | API |

|---|---|

| Fit the model | .fit(docs) |

| Fit the model and predict documents | .fit_transform(docs) |

| Predict new documents | .transform(new_docs) |

| Get topic-word distribution matrix | .get_beta() |

| Get top words of all topics | .get_top_words() |

| Get top words and probabilities of a topic | .get_topic(topic_idx=10) |

| Get topic weights over the input dataset | .get_topic_weights() |

| Get topic activity over time | .topic_activity_over_time(time_slices) |

| Save model | .save(path=path) |

| Load model | .from_pretrained(path=path) |

Visualization

| Method | API |

|---|---|

| Visualize topics | .visualize_topic(top_n=5) or .visualize_topic(topic_idx=[1, 2, 3]) |

| Visualize topic weights | .visualize_topic_weights(top_n=5) or .visualize_topic_weights(topic_idx=[1, 2, 3]) |

| Visualize topic hierarchy | .visualize_topic_hierarchy() |

| Visualize topic activity | .visualize_topic_activity(top_n=5, topic_activity=topic_activity, time_slices=time_slices) |

Q&A

- I get an out of memory error. My GPU memory is not enough for large datasets. What should I do?

You can set low_memory=True and low_memory_batch_size in FASTopic. low_memory_batch_size should not be too small, otherwise it may hurt performance.

model = FASTopic(50, low_memory=True, low_memory_batch_size=2000)

Or you can run FASTopic on the CPU:

model = FASTopic(50, device='cpu')

- Can I use FASTopic with languages other than English?

Yes! You can pass a multilingual document embedding model, like paraphrase-multilingual-MiniLM-L12-v2, together with a tokenizer and stop words for your language, for example from spaCy pipelines.

Please refer to the tutorial in Colab.

- The loss value does not decrease, and the discovered topics are all repetitive.

This may be caused by document embeddings that are not discriminative enough, due to the document embedding model used or to extremely short input texts.

Try to (1) normalize document embeddings with FASTopic(50, normalize_embeddings=True); or (2) increase DT_alpha to 5.0, 10.0, or 15.0, as in FASTopic(num_topics, DT_alpha=10.0).

- How can I reproduce the results and get the same results every time?

Since FASTopic mainly depends on pytorch and sentence-transformers, you can set random seeds before running FASTopic to get the same results every time:

import numpy as np

import torch

torch.manual_seed(0)

np.random.seed(0)

model.fit_transform(docs)

- Can I use my own document embedding models?

Yes! You can wrap your model and pass it to FASTopic:

from typing import List

class YourDocEmbedModel:

    def __init__(self):

        ...

    def encode(self, docs: List[str], show_progress_bar: bool=False, normalize_embeddings: bool=False):

        ...

        return embeddings

your_model = YourDocEmbedModel()

model = FASTopic(50, doc_embed_model=your_model)

- Can I use my own preprocess module?

Yes! You can wrap your module and pass it to FASTopic:

from typing import List

class YourPreprocess:

    def __init__(self):

        ...

    def preprocess(self, docs: List[str]):

        ...

        train_bow = ...

        vocab = ...

        return {

            "train_bow": train_bow, # sparse matrix

            "vocab": vocab # List[str]

        }

your_preprocess = YourPreprocess()

model = FASTopic(50, preprocess=your_preprocess)
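For the reproducibility question above, the seed calls can be gathered into one helper that is called before every run. The sketch below is one possible arrangement: `set_seed` is a hypothetical helper, not part of FASTopic, and it skips NumPy or PyTorch when they are not installed so the snippet runs on its own. Seeding alone may not remove every source of nondeterminism on a GPU.

```python
import random


def set_seed(seed=0):
    """Seed Python's RNG, plus NumPy and PyTorch when they are installed."""
    random.seed(seed)
    try:
        import numpy as np
        np.random.seed(seed)
    except ImportError:
        pass
    try:
        import torch
        torch.manual_seed(seed)
        if torch.cuda.is_available():
            torch.cuda.manual_seed_all(seed)
    except ImportError:
        pass


# Re-seeding restores the same random stream.
set_seed(0)
first = random.random()
set_seed(0)
second = random.random()
```

Call `set_seed(0)` immediately before `model.fit_transform(docs)` so that every run starts from the same random state.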

Contact

- We welcome your contributions to this project. Please feel free to submit pull requests.

- If you encounter any issues, please either directly contact Xiaobao Wu (xiaobao002@e.ntu.edu.sg) or leave an issue in the GitHub repo.

Related Resources

- TopMost: a topic modeling toolkit, including preprocessing, model training, and evaluations.

- A Survey on Neural Topic Models: Methods, Applications, and Challenges
