Static image export for web-based visualization libraries with zero dependencies

Project description

Overview

Kaleido is a cross-platform library for generating static images (e.g. png, svg, pdf) for web-based visualization libraries, with a particular focus on eliminating external dependencies. The project's initial focus is on the export of plotly.js images from Python for use by plotly.py, but it is designed to be relatively straightforward to extend to other web-based visualization libraries and other programming languages. The primary focus of Kaleido (at least initially) is to serve as a dependency of web-based visualization libraries like plotly.py. As such, the focus is on providing a programmatic-friendly, rather than user-friendly, API.

Try it out from Python

Install the kaleido wheel.

$ pip install kaleido

Install plotly.

$ pip install plotly

Export a plotly figure as a png image.

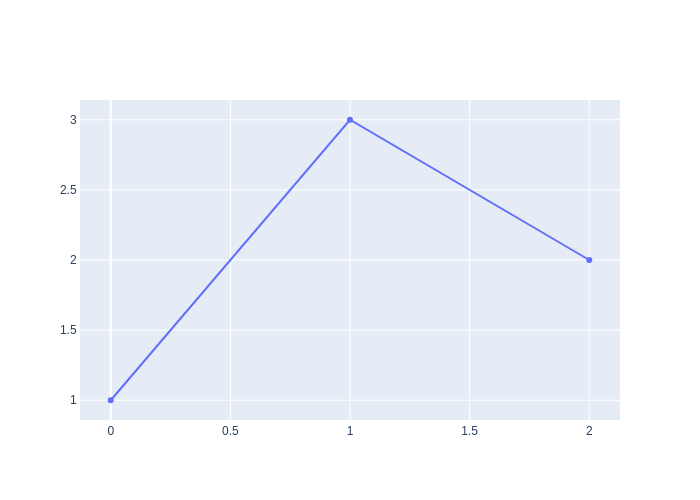

from kaleido.scopes.plotly import PlotlyScope
import plotly.graph_objects as go

scope = PlotlyScope()
fig = go.Figure(data=[go.Scatter(y=[1, 3, 2])])

with open("figure.png", "wb") as f:
    f.write(scope.transform(fig, format="png"))

Then, open figure.png in the current working directory.
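As a small convenience on top of the example above, the following sketch infers the image format from the output file name. The helper name `export_figure` is hypothetical and not part of Kaleido; it only assumes that the scope object exposes `transform(fig, format=...)` returning bytes, as in the snippet above.

```python
from pathlib import Path


def export_figure(scope, fig, path):
    """Write `fig` to `path`, inferring the image format from the suffix.

    `scope` is any object with a `transform(fig, format=...)` method that
    returns bytes, such as the PlotlyScope instance created above.
    `export_figure` itself is a hypothetical helper, not a Kaleido API.
    """
    path = Path(path)
    fmt = path.suffix.lstrip(".").lower()
    if not fmt:
        raise ValueError(f"cannot infer image format from {path.name!r}")
    # Delegate rendering to the scope, then write the raw bytes to disk.
    path.write_bytes(scope.transform(fig, format=fmt))
    return path
```

With the `scope` and `fig` objects from above, `export_figure(scope, fig, "figure.svg")` would request an svg export, assuming the scope supports that format.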

Background

As simple as it sounds, programmatically generating static images from web-based visualization libraries is a difficult problem. The core difficulty is that these libraries don't actually render plots (i.e. color the pixels) on their own. Instead, they delegate this work to web technologies like SVG, Canvas, and WebGL. This means that they are entirely dependent on the presence of a complete web browser to operate.

When the figure is displayed in a browser window, it's relatively straightforward for a visualization library to provide an export-image button because it has full access to the browser for rendering. The difficulty arises when trying to export an image programmatically (e.g. from Python) without displaying it in a browser and without user interaction. To accomplish this, the Python portion of the visualization library needs programmatic access to a full web browser.

There are three main approaches that are currently in use among Python web-based visualization libraries.

1. bokeh, altair, bqplot, and ipyvolume rely on the Selenium Python library to control a system web browser such as Firefox or Chrome/Chromium to perform image rendering.

2. plotly.py relies on Orca, which is a custom headless Electron application that uses the Chromium browser engine built into Electron to perform image rendering. Orca runs as a local web server and plotly.py sends requests to it using a local port.

3. When operating in the Jupyter notebook or JupyterLab, ipyvolume also supports programmatic image export by sending export requests to the browser using the ipywidgets protocol.

While options 1 and 2 can both be installed using conda, they still rely on the presence of some components that must be installed externally. For example, on Linux both require the installation of system libraries like libXss that are not typically included in headless Linux installations like you find in JupyterHub installations, Binder, Colab, Azure notebooks, SageMaker, etc. Also, conda is not as universally available as the pip package manager and neither approach is installable using pip packages.

Additionally, both 1 and 2 communicate between the Python process and the web browser over a local network port. While not typically a problem, certain firewall and container configurations can interfere with this local network connection.

The advantage of option 3 is that it introduces no additional system dependencies. The disadvantage is that it only works when running in a notebook, not in standalone Python scripts.

The end result is that all of these libraries have in-depth documentation pages on how to get image export working, and how to troubleshoot the inevitable failures and edge cases. While this is a great improvement over the state of affairs just a couple of years ago, and a lot of excellent work has gone into making these approaches work as seamlessly as possible, the fundamental limitations detailed above still result in sub-optimal user experiences. This is especially true when comparing web-based plotting libraries to traditional plotting libraries like matplotlib and ggplot2, where there's never a question of whether image export will work.

The goal of Kaleido is to make static image export of web-based visualization libraries as universally available and reliable as that of matplotlib and ggplot2.

Approach

To accomplish this goal, Kaleido introduces a new approach. The core of Kaleido is a standalone C++ application that embeds Chromium as a library. This architecture allows Kaleido to communicate with the browser engine using the C++ API rather than requiring a local network connection. A thin Python wrapper runs the Kaleido C++ application as a subprocess and communicates with it by writing image export JSON requests to standard-in and retrieving results by reading from standard-out. Other language wrappers (e.g. R, Julia, Scala, Rust) can fairly easily be written in the future because the interface relies only on standard-in / standard-out communication using JSON requests.
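The standard-in / standard-out pattern described above can be sketched without Kaleido itself. In the example below a tiny Python child process stands in for the C++ executable; the message fields (`id`, `format`, `figure`, `ok`) and the one-JSON-object-per-line framing are assumptions made for illustration, not Kaleido's actual wire format.

```python
import json
import subprocess
import sys

# Stand-in for the Kaleido executable: reads one JSON request per line on
# standard-in and answers with one JSON line on standard-out. The real
# executable's message format is NOT shown here; these fields are made up.
CHILD_SOURCE = r"""
import json, sys
for line in sys.stdin:
    request = json.loads(line)
    response = {"id": request["id"], "format": request["format"], "ok": True}
    sys.stdout.write(json.dumps(response) + "\n")
    sys.stdout.flush()
"""


class PipeClient:
    """Send JSON requests to a subprocess and read JSON responses back."""

    def __init__(self, argv):
        self._proc = subprocess.Popen(
            argv,
            stdin=subprocess.PIPE,
            stdout=subprocess.PIPE,
            text=True,
        )
        self._next_id = 0

    def request(self, payload):
        # Tag each request with an id, write it as a single line, and
        # block until the child answers with a single line.
        self._next_id += 1
        message = dict(payload, id=self._next_id)
        self._proc.stdin.write(json.dumps(message) + "\n")
        self._proc.stdin.flush()
        return json.loads(self._proc.stdout.readline())

    def close(self):
        # Closing standard-in ends the child's read loop.
        self._proc.stdin.close()
        self._proc.wait()


def demo():
    client = PipeClient([sys.executable, "-c", CHILD_SOURCE])
    try:
        return client.request({"format": "png", "figure": {"data": []}})
    finally:
        client.close()
```

Because nothing here depends on a network port, the same shape of wrapper could be written in any language that can spawn a subprocess and read and write its pipes.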

By compiling Chromium as a library, we have a degree of control over what is included in the Chromium build. In particular, on Linux we can build Chromium in headless mode, which eliminates a large number of runtime dependencies (e.g. the libXss library mentioned above). The remaining dependencies are small enough to bundle with the library, making it possible to run Kaleido in the most minimal Linux environments with no additional dependencies required. So for example, the C++ Kaleido executable can run inside an ubuntu:16.04 docker container without anything being installed using apt.

The Python wrapper and the Kaleido executable can then be packaged as operating system dependent Python wheels that can be distributed on PyPI.

Disadvantages

While this approach has many advantages, the main disadvantage is that building Chromium is not for the faint of heart. Even on powerful workstations, downloading and building the Chromium code base takes 50+ GB and several hours. On Linux this work can be done once and distributed as a docker container, but we don't have a similar shortcut for Windows and MacOS. Because of this, we're still working on finding a CI solution for MacOS and Windows.

Scope (Plugin) architecture

While motivated by the needs of plotly.py, we made the decision early on to design Kaleido to make it fairly straightforward to add support for additional libraries. Plugins in Kaleido are called "scopes". For more information, see https://github.com/plotly/Kaleido/wiki/Scope-(Plugin)-Architecture.

Language wrapper architecture

While Python is the initial target language for Kaleido, it has been designed to make it fairly straightforward to add support for additional languages. For more information, see https://github.com/plotly/Kaleido/wiki/Language-wrapper-architecture.

Building Kaleido

Instructions for building Kaleido differ slightly across operating systems. All of these approaches assume that the Kaleido repository has been cloned and that the working directory is set to the repository root.

$ git clone git@github.com:plotly/Kaleido.git

$ cd Kaleido

Update version

Before building, generate the version string based on the git commit log with

$ python repos/version/build_pep440_version.py
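The script itself is not reproduced here. As a rough illustration of what deriving a PEP 440 version from git history can look like, the sketch below converts the output of `git describe --tags` into a version string. The function name and the chosen output format (a local version segment with the commit count and short hash) are assumptions for illustration, not the behavior of build_pep440_version.py.

```python
import re

# Matches e.g. "v0.0.1rc8-3-gabc1234" (tag, commits since tag, short hash)
# as well as a bare tag such as "v0.0.1rc8".
_DESCRIBE = re.compile(
    r"^v?(?P<tag>.+?)(?:-(?P<count>\d+)-g(?P<sha>[0-9a-f]+))?$"
)


def pep440_from_describe(describe):
    """Turn `git describe --tags` output into a PEP 440 style version.

    Hypothetical helper: a build exactly on a tag yields the tag itself,
    otherwise the commit count and hash go into a local version segment.
    """
    match = _DESCRIBE.match(describe.strip())
    if match is None:
        raise ValueError(f"unrecognized describe output: {describe!r}")
    tag, count, sha = match.group("tag", "count", "sha")
    if count is None or int(count) == 0:
        return tag
    return f"{tag}+{int(count)}.g{sha}"
```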

Linux

There are two approaches to building Kaleido on Linux, both of which rely on Docker.

Method 1

This approach relies on the jonmmease/kaleido-builder docker image, and the scripts in repos/linux_full_scripts, to compile Kaleido. This docker image is over 30GB, but it includes a precompiled instance of the Chromium source tree, making it possible to compile Kaleido in just a few tens of seconds. The downside of this approach is that the chromium source tree is not visible outside of the docker image, so it may be difficult for development environments to index it. This is the approach used for Continuous Integration on Linux.

Download docker image

$ docker pull jonmmease/kaleido-builder:0.7

Build Kaleido

$ /repos/linux_full_scripts/build_kaleido

Method 2

This approach relies on the jonmmease/chromium-builder docker image, and the scripts in repos/linux_scripts, to download the chromium source to a local folder and then build it. This takes longer to get set up than method 1 because the Chromium source tree must be compiled from scratch, but it downloads a copy of the chromium source tree to repos/src, which makes it possible for development environments like CLion to index the Chromium codebase to provide code completion.

Download docker image

$ docker pull jonmmease/chromium-builder:0.7

Fetch the Chromium codebase and checkout the specific tag, then sync all dependencies

$ /repos/linux_scripts/fetch_chromium

Then build the kaleido application to repos/build/kaleido, and bundle shared libraries and fonts. The input source for this application is stored under repos/kaleido/cc/. The build step will also create the Python wheel under repos/kaleido/py/dist/

$ /repos/linux_scripts/build_kaleido

MacOS

To build on MacOS, first install Xcode version 11.0+, nodejs 12, and Python 3. See https://chromium.googlesource.com/chromium/src/+/master/docs/mac_build_instructions.md for more information on build requirements.

Then fetch the chromium codebase

$ /repos/mac_scripts/fetch_chromium

Then build Kaleido to repos/build/kaleido. The build step will also create the Python wheel under repos/kaleido/py/dist/

$ /repos/mac_scripts/build_kaleido

Windows

To build on Windows, first install Visual Studio 2019 (community edition is fine), nodejs 12, and Python 3. See https://chromium.googlesource.com/chromium/src/+/master/docs/windows_build_instructions.md for more information on build requirements.

Then fetch the chromium codebase from a PowerShell command prompt

$ /repos/win_scripts/fetch_chromium.ps1

Then build Kaleido to repos/build/kaleido. The build step will also create the Python wheel under repos/kaleido/py/dist/

$ /repos/win_scripts/build_kaleido.ps1

Building Docker containers

chromium-builder

The chromium-builder container mostly follows the instructions at https://chromium.googlesource.com/chromium/src/+/master/docs/linux/build_instructions.md to install depot_tools and run install-build-deps.sh to install the required build dependencies for the appropriate stable version of Chromium. The image is based on Ubuntu 16.04, which is the recommended OS for building Chromium on Linux.

Build container with:

$ docker build -t jonmmease/chromium-builder:0.7 -f repos/linux_scripts/Dockerfile .

kaleido-builder

This container contains a pre-compiled version of the chromium source tree. It takes several hours to build!

$ docker build -t jonmmease/kaleido-builder:0.7 -f repos/linux_full_scripts/Dockerfile .

Updating chromium version

To update the version of Chromium in the future, the docker images will need to be updated. Follow the instructions for the DEPOT_TOOLS_COMMIT and CHROMIUM_TAG environment variables in linux_scripts/Dockerfile.

Find a stable chromium version tag from https://chromereleases.googleblog.com/search/label/Desktop%20Update. Look up the date of the associated tag in GitHub at https://github.com/chromium/chromium/. E.g. for the stable chrome version tag on 05/19/2020, 83.0.4103.61, set

CHROMIUM_TAG="83.0.4103.61"

Search through the depot_tools commit log (https://chromium.googlesource.com/chromium/tools/depot_tools/+log) for the commit hash of a commit from the same day. E.g. for the depot_tools commit hash from 05/19/2020, e67e41a, set

DEPOT_TOOLS_COMMIT=e67e41a

The environment variable must also be updated in the repos/linux_scripts/checkout_revision, repos/mac_scripts/fetch_chromium, and repos/win_scripts/fetch_chromium.ps1 scripts.
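Since the same value has to be kept in sync by hand across several scripts, a quick consistency check can catch a missed file. The sketch below is not part of the Kaleido repository; it assumes each file contains a line of the form `CHROMIUM_TAG=<version>`, optionally quoted and with optional spaces around `=`, which may not match how every script actually sets the variable.

```python
import re
from pathlib import Path

# Assumed assignment shape: CHROMIUM_TAG=83.0.4103.61, optionally quoted,
# optionally with spaces around "=". Adjust if a script differs.
_ASSIGNMENT = re.compile(r'CHROMIUM_TAG\s*=\s*["\']?([0-9.]+)')


def find_chromium_tags(paths):
    """Map each file path to the first CHROMIUM_TAG value found in it.

    Files without a recognizable assignment map to None.
    """
    found = {}
    for path in map(Path, paths):
        match = _ASSIGNMENT.search(path.read_text())
        found[str(path)] = match.group(1) if match else None
    return found


def tags_are_consistent(found):
    """True when every file yielded the same, non-missing tag."""
    values = set(found.values())
    return len(values) == 1 and None not in values
```

Run from the repository root, `find_chromium_tags` could be given repos/linux_scripts/Dockerfile together with the three scripts named above, and the returned mapping shows at a glance which file still carries the old tag.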

CMakeLists.txt

The CMakeLists.txt file in repos/ is only there to help IDEs like CLion/KDevelop figure out how to index the chromium source tree. It can't be used to actually build chromium. Using this approach, it's possible to get full completion and code navigation from repos/kaleido/cc/kaleido.cc in CLion.


Download files


Built Distributions


File details

Details for the file kaleido-0.0.1rc8-py2.py3-none-win_amd64.whl.

File metadata

- Download URL: kaleido-0.0.1rc8-py2.py3-none-win_amd64.whl

- Upload date:

- Size: 55.3 MB

- Tags: Python 2, Python 3, Windows x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.1.1 pkginfo/1.5.0.1 requests/2.24.0 setuptools/47.3.1.post20200622 requests-toolbelt/0.9.1 tqdm/4.46.1 CPython/3.7.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | c31d7d7bdd8ec9b4342e04f71ae391ff91e7e8b422e12829f5b304c469a1a52d |
| MD5 | 2de5c4ba9a622a114d757394632df723 |
| BLAKE2b-256 | 0a2fd56390a581afcc69977e332f3c723738ff5443512e24873b6f2b2c77904d |

File details

Details for the file kaleido-0.0.1rc8-py2.py3-none-manylinux2014_x86_64.whl.

File metadata

- Download URL: kaleido-0.0.1rc8-py2.py3-none-manylinux2014_x86_64.whl

- Upload date:

- Size: 73.9 MB

- Tags: Python 2, Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.1.1 pkginfo/1.5.0.1 requests/2.24.0 setuptools/47.3.1.post20200622 requests-toolbelt/0.9.1 tqdm/4.46.1 CPython/3.7.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | db629f924c3d541e78b0367db8ddd7edc616e375e29e54132c0e3f0e8837e8d4 |
| MD5 | 9a1fe21ba7db30037cfebc82dc5bd881 |
| BLAKE2b-256 | 5803e490b649a9c87392887fec5afb11a6f5c72a603ec0ce83a9837fa4a0b5c4 |

File details

Details for the file kaleido-0.0.1rc8-py2.py3-none-macosx_10_15_x86_64.whl.

File metadata

- Download URL: kaleido-0.0.1rc8-py2.py3-none-macosx_10_15_x86_64.whl

- Upload date:

- Size: 68.8 MB

- Tags: Python 2, Python 3, macOS 10.15+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.1.1 pkginfo/1.5.0.1 requests/2.24.0 setuptools/47.3.1.post20200622 requests-toolbelt/0.9.1 tqdm/4.46.1 CPython/3.7.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 95d80d7d372191c8030b81683224a4e5a219c2d64cdb6f6f0cb5f42734466af7 |
| MD5 | 4dcedd8789e932730fb51cbdcba51080 |
| BLAKE2b-256 | 04409042d97451ed02358ab689ebe91fd338d9c91d0c75725bf6d4f5257d809a |
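The digests listed for each wheel can be checked against a downloaded file with Python's standard `hashlib` module. The sketch below is one way to do that; the function names are illustrative, and it takes BLAKE2b-256 to mean BLAKE2b with a 32-byte digest.

```python
import hashlib


def file_digests(path, chunk_size=1 << 20):
    """Compute SHA256, MD5 and BLAKE2b-256 hex digests of a file.

    The file is read in chunks so large wheels need not fit in memory.
    """
    hashers = {
        "SHA256": hashlib.sha256(),
        "MD5": hashlib.md5(),
        "BLAKE2b-256": hashlib.blake2b(digest_size=32),
    }
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            for hasher in hashers.values():
                hasher.update(chunk)
    return {name: hasher.hexdigest() for name, hasher in hashers.items()}


def digest_matches(path, algorithm, expected):
    """Compare one computed digest with the published hex string."""
    return file_digests(path)[algorithm] == expected.strip().lower()
```

For example, `digest_matches("kaleido-0.0.1rc8-py2.py3-none-win_amd64.whl", "SHA256", "c31d7d7b...")` with the full published digest should return True for an intact download of that file.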