Kaskade

Kaskade is a text user interface (TUI) for Apache Kafka, built with Textual by Textualize.

It includes features like:

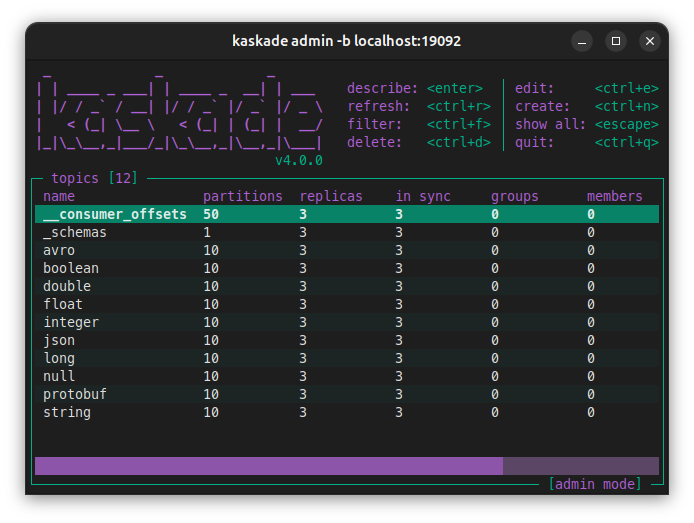

Admin

- List topics, partitions, groups and group members.

- Topic information such as lag, replicas and record count.

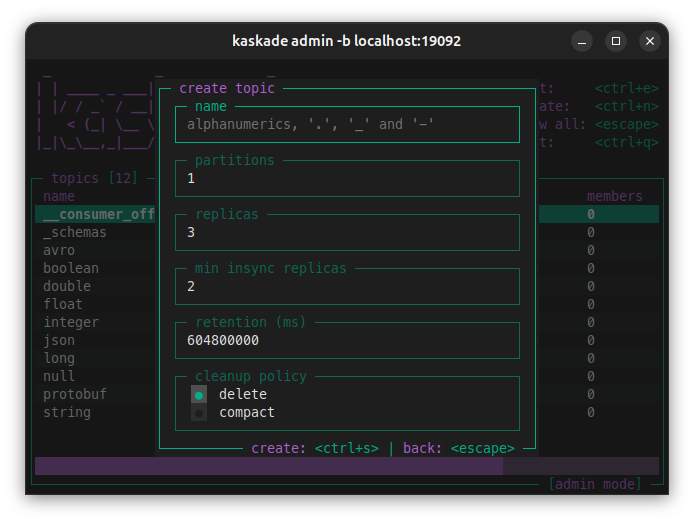

- Create, edit and delete topics.

- Filter topics by name.

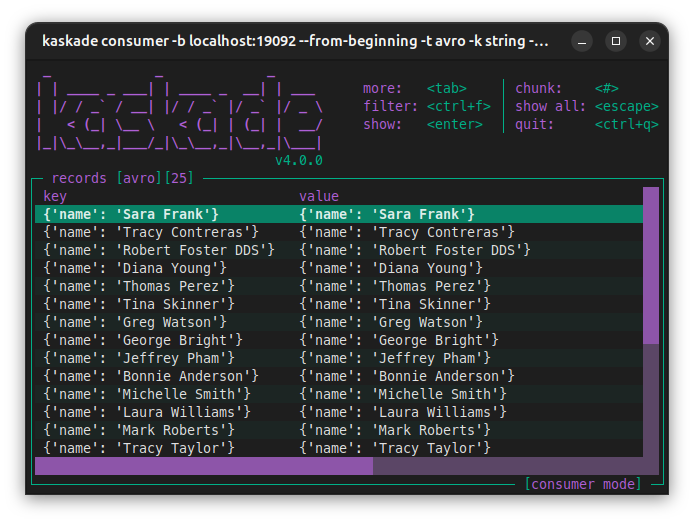

Consumer

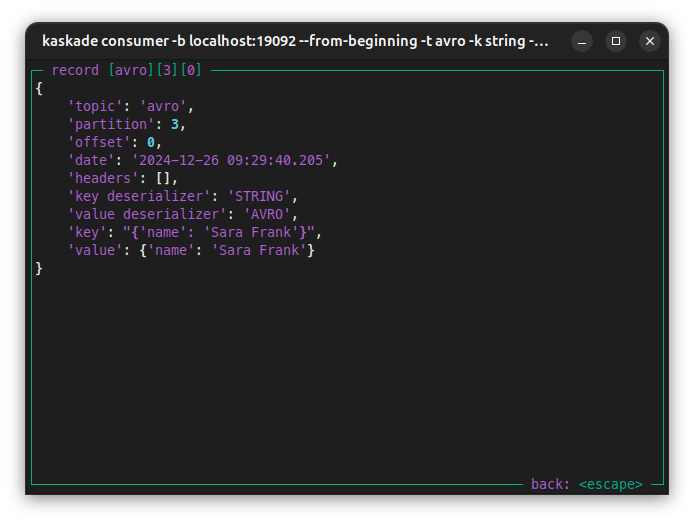

- JSON, string, integer, long, float, boolean and double deserialization.

- Filter by key, value, header and/or partition.

- Schema Registry support for Avro and JSON.

- Protobuf deserialization support without Schema Registry.

- Avro deserialization without Schema Registry.

Limitations

Kaskade does not include:

- Schema Registry support for Protobuf.

- Runtime auto-refresh.

Installation

Install it with brew:

brew install kaskade

Install it with pipx:

pipx install kaskade

Running kaskade

Admin view:

kaskade admin -b my-kafka:9092

Consumer view:

kaskade consumer -b my-kafka:9092 -t my-topic

Configuration examples

Multiple bootstrap servers:

kaskade admin -b my-kafka:9092,my-kafka:9093

Consume and deserialize:

kaskade consumer -b my-kafka:9092 -t my-json-topic -k json -v json

Supported deserializers:

[bytes, boolean, string, long, integer, double, float, json, avro, protobuf, registry]

Consuming from the beginning:

kaskade consumer -b my-kafka:9092 -t my-topic --from-beginning

Schema Registry deserialization with a simple connection:

kaskade consumer -b my-kafka:9092 -t my-avro-topic \
  -k registry -v registry \
  --registry url=http://my-schema-registry:8081

For more information about Schema Registry configurations go to: Confluent Schema Registry client.

Apicurio registry:

kaskade consumer -b my-kafka:9092 -t my-avro-topic \
  -k registry -v registry \
  --registry url=http://my-apicurio-registry:8081/apis/ccompat/v7

For more information about Apicurio go to: Apicurio Registry.

SSL encryption example:

kaskade admin -b my-kafka:9092 -c security.protocol=SSL

For more information about SSL encryption and SSL authentication go to: Configure librdkafka client.

Confluent Cloud admin and consumer:

kaskade admin -b ${BOOTSTRAP_SERVERS} \
  -c security.protocol=SASL_SSL \
  -c sasl.mechanism=PLAIN \
  -c sasl.username=${CLUSTER_API_KEY} \
  -c sasl.password=${CLUSTER_API_SECRET}

kaskade consumer -b ${BOOTSTRAP_SERVERS} -t my-avro-topic \
  -k string -v registry \
  -c security.protocol=SASL_SSL \
  -c sasl.mechanism=PLAIN \
  -c sasl.username=${CLUSTER_API_KEY} \
  -c sasl.password=${CLUSTER_API_SECRET} \
  --registry url=${SCHEMA_REGISTRY_URL} \
  --registry basic.auth.user.info=${SR_API_KEY}:${SR_API_SECRET}

For more information about Confluent Cloud configuration go to: Kafka client quick start for Confluent Cloud.
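The Confluent Cloud commands rely on several environment variables, and an unset variable silently expands to an empty string. A minimal sketch of a pre-flight check follows; `require_env` is a hypothetical helper written for this example, not part of kaskade.

```shell
# Hypothetical helper (not part of kaskade): fail early when any of the
# named environment variables is unset or empty.
require_env() {
  for name in "$@"; do
    # Indirect lookup of the variable called $name, POSIX-compatible.
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "missing environment variable: $name" >&2
      return 1
    fi
  done
}

# Intended usage before the commands above:
#   require_env BOOTSTRAP_SERVERS CLUSTER_API_KEY CLUSTER_API_SECRET \
#     && kaskade admin -b "${BOOTSTRAP_SERVERS}" ...
```

For the consumer command the same check would also list `SCHEMA_REGISTRY_URL`, `SR_API_KEY` and `SR_API_SECRET`.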

Running with docker:

docker run --rm -it --network my-network sauljabin/kaskade:latest \
  admin -b my-kafka:9092

docker run --rm -it --network my-network sauljabin/kaskade:latest \
  consumer -b my-kafka:9092 -t my-topic
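To avoid repeating the `docker run` options, the image can be wrapped in a small shell function. This is only a sketch: the function name `kaskade_docker` and the `DOCKER` and `KASKADE_NETWORK` variables are assumptions made for this example, while the image name and options are the ones shown above.

```shell
# Hypothetical wrapper: forwards all arguments to kaskade inside the container.
# DOCKER and KASKADE_NETWORK are illustrative overrides, not kaskade settings.
kaskade_docker() {
  "${DOCKER:-docker}" run --rm -it \
    --network "${KASKADE_NETWORK:-my-network}" \
    sauljabin/kaskade:latest "$@"
}

# Intended usage:
#   kaskade_docker admin -b my-kafka:9092
#   kaskade_docker consumer -b my-kafka:9092 -t my-topic
```

The network name defaults to `my-network`; it should match the Docker network your Kafka brokers are attached to.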

Avro consumer:

Consume using the my-schema.avsc file:

kaskade consumer -b my-kafka:9092 --from-beginning \
  -k string -v avro \
  -t my-avro-topic \
  --avro value=my-schema.avsc
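The command above expects an Avro schema file. As a sketch of what `my-schema.avsc` could contain, the snippet below writes a small record schema; the record name, namespace and fields are invented for illustration and must be replaced with the schema your producer actually uses.

```shell
# Write a hypothetical Avro record schema to my-schema.avsc.
# The record name, namespace and fields are placeholders.
cat > my-schema.avsc <<'EOF'
{
  "type": "record",
  "name": "User",
  "namespace": "com.example",
  "fields": [
    {"name": "id", "type": "long"},
    {"name": "name", "type": "string"},
    {"name": "email", "type": ["null", "string"], "default": null}
  ]
}
EOF
```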

Protobuf consumer:

Install the protoc command:

brew install protobuf

Generate a Descriptor Set file from your .proto file:

protoc --include_imports \
  --descriptor_set_out=my-descriptor.desc \
  --proto_path=${PROTO_PATH} \
  ${PROTO_PATH}/my-proto.proto

Consume using the my-descriptor.desc file:

kaskade consumer -b my-kafka:9092 --from-beginning \
  -k string -v protobuf \
  -t my-protobuf-topic \
  --protobuf descriptor=my-descriptor.desc \
  --protobuf value=mypackage.MyMessage
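The value `mypackage.MyMessage` presumably refers to the message by its package-qualified name. A minimal sketch of a `my-proto.proto` that would produce that name follows; the fields and the default `proto` directory are assumptions for illustration.

```shell
# Directory holding the .proto file; "proto" is an assumed default.
PROTO_PATH="${PROTO_PATH:-proto}"
mkdir -p "$PROTO_PATH"

# Hypothetical message definition: package "mypackage" plus message "MyMessage"
# gives the qualified name mypackage.MyMessage. The fields are placeholders.
cat > "$PROTO_PATH/my-proto.proto" <<'EOF'
syntax = "proto3";

package mypackage;

message MyMessage {
  string id = 1;
  string name = 2;
}
EOF
```

With this file in place, the protoc command shown earlier can generate `my-descriptor.desc` from it.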

More about protobuf and FileDescriptorSet at: Protocol Buffers documentation.

Questions

For Q&A go to GitHub Discussions.

Development

For development instructions see DEVELOPMENT.md.

Download files

File details

Details for the file kaskade-4.0.7.tar.gz.

File metadata

- Download URL: kaskade-4.0.7.tar.gz

- Upload date:

- Size: 22.6 kB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | cd907eb673d733ba27f4f89b649ad6bf19cd829745ae0dd8879989eb5a6bcc36 |
| MD5 | 34edc779562847d80fdbf15690093e90 |
| BLAKE2b-256 | 373b88be2113f39216a6ad36680ad599927cbb57dbd9eef9f4af3af138134187 |

Provenance

The following attestation bundles were made for kaskade-4.0.7.tar.gz:

Publisher: release.yml on sauljabin/kaskade

- Statement type: https://in-toto.io/Statement/v1

- Predicate type: https://docs.pypi.org/attestations/publish/v1

- Subject name: kaskade-4.0.7.tar.gz

- Subject digest: cd907eb673d733ba27f4f89b649ad6bf19cd829745ae0dd8879989eb5a6bcc36

- Sigstore transparency entry: 1009322661

- Sigstore integration time:

- Permalink: sauljabin/kaskade@a0311acedee1f04b4dccae5b1bba965f3a3f563f

- Branch / Tag: refs/tags/v4.0.7

- Owner: https://github.com/sauljabin

- Access: public

- Token Issuer: https://token.actions.githubusercontent.com

- Runner Environment: github-hosted

- Publication workflow: release.yml@a0311acedee1f04b4dccae5b1bba965f3a3f563f

- Trigger Event: push

File details

Details for the file kaskade-4.0.7-py3-none-any.whl.

File metadata

- Download URL: kaskade-4.0.7-py3-none-any.whl

- Upload date:

- Size: 25.5 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 16215cb53a177c5169e5ac9ff443b1c425a2147120010493357377752e90fa54 |
| MD5 | b3f6a51ad740c9a7ef777eecc47b6e98 |
| BLAKE2b-256 | 06cc75b8c1ae50d4acd41fb1b18c927b546bb340edad6dc29c901190ef304cb9 |

Provenance

The following attestation bundles were made for kaskade-4.0.7-py3-none-any.whl:

Publisher: release.yml on sauljabin/kaskade

- Statement type: https://in-toto.io/Statement/v1

- Predicate type: https://docs.pypi.org/attestations/publish/v1

- Subject name: kaskade-4.0.7-py3-none-any.whl

- Subject digest: 16215cb53a177c5169e5ac9ff443b1c425a2147120010493357377752e90fa54

- Sigstore transparency entry: 1009322676

- Sigstore integration time:

- Permalink: sauljabin/kaskade@a0311acedee1f04b4dccae5b1bba965f3a3f563f

- Branch / Tag: refs/tags/v4.0.7

- Owner: https://github.com/sauljabin

- Access: public

- Token Issuer: https://token.actions.githubusercontent.com

- Runner Environment: github-hosted

- Publication workflow: release.yml@a0311acedee1f04b4dccae5b1bba965f3a3f563f

- Trigger Event: push