🧑‍🏫 Implementations/tutorials of deep learning papers with side-by-side notes 📝; including transformers (original, xl, switch, feedback, vit), optimizers (adam, radam, adabelief), gans (dcgan, cyclegan, stylegan2), 🎮 reinforcement learning (ppo, dqn), capsnet, distillation, diffusion, etc. 🧠

Project description

labml.ai Deep Learning Paper Implementations

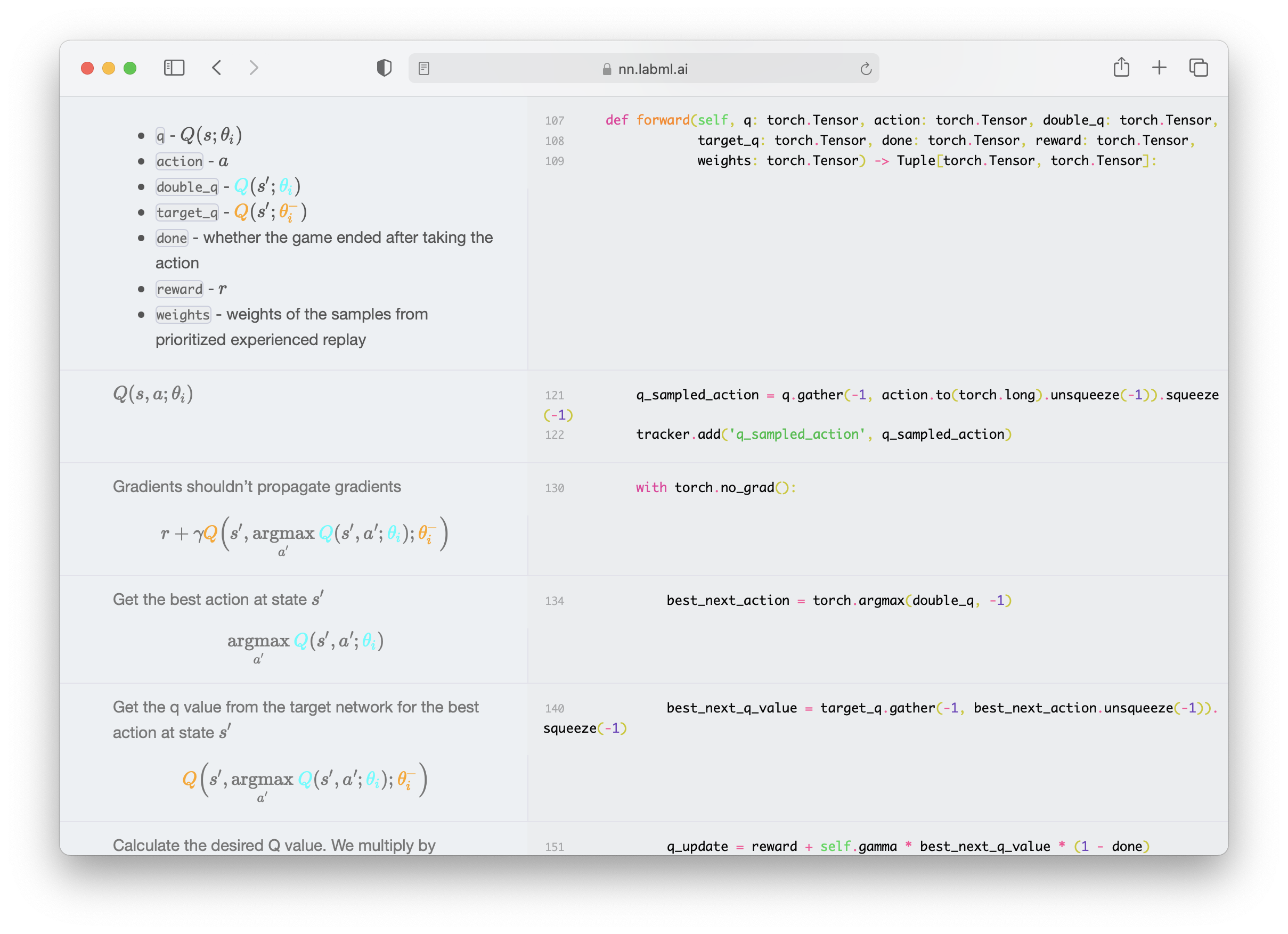

This is a collection of simple PyTorch implementations of neural networks and related algorithms. These implementations are documented with explanations, and the website renders them as side-by-side formatted notes. We believe these will help you understand the algorithms better.

We are actively maintaining this repo and adding new implementations almost weekly.

Paper Implementations

✨ Transformers

- Multi-headed attention

- Triton Flash Attention

- Transformer building blocks

- Transformer XL

- Rotary Positional Embeddings

- Attention with Linear Biases (ALiBi)

- RETRO

- Compressive Transformer

- GPT Architecture

- GLU Variants

- kNN-LM: Generalization through Memorization

- Feedback Transformer

- Switch Transformer

- Fast Weights Transformer

- FNet

- Attention Free Transformer

- Masked Language Model

- MLP-Mixer: An all-MLP Architecture for Vision

- Pay Attention to MLPs (gMLP)

- Vision Transformer (ViT)

- Primer EZ

- Hourglass

✨ Low-Rank Adaptation (LoRA)

✨ Eleuther GPT-NeoX

✨ Diffusion models

- Denoising Diffusion Probabilistic Models (DDPM)

- Denoising Diffusion Implicit Models (DDIM)

- Latent Diffusion Models

- Stable Diffusion

✨ Generative Adversarial Networks

- Original GAN

- GAN with deep convolutional network

- Cycle GAN

- Wasserstein GAN

- Wasserstein GAN with Gradient Penalty

- StyleGAN 2

✨ Recurrent Highway Networks

✨ LSTM

✨ HyperNetworks - HyperLSTM

✨ ResNet

✨ ConvMixer

✨ Capsule Networks

✨ U-Net

✨ Sketch RNN

✨ Graph Neural Networks

✨ Counterfactual Regret Minimization (CFR)

Solving games with incomplete information such as poker with CFR.

✨ Reinforcement Learning

- Proximal Policy Optimization with Generalized Advantage Estimation

- Deep Q Networks with Dueling Network, Prioritized Replay and Double Q Network.

✨ Optimizers

- Adam

- AMSGrad

- Adam Optimizer with warmup

- Noam Optimizer

- Rectified Adam Optimizer

- AdaBelief Optimizer

- Sophia-G Optimizer

✨ Normalization Layers

- Batch Normalization

- Layer Normalization

- Instance Normalization

- Group Normalization

- Weight Standardization

- Batch-Channel Normalization

- DeepNorm

✨ Distillation

✨ Adaptive Computation

✨ Uncertainty

✨ Activations

✨ Language Model Sampling Techniques

✨ Scalable Training/Inference

Installation

pip install labml-nn
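To confirm that the install succeeded, one option is to query the installed distribution metadata from Python. The snippet below is a minimal sketch using only the standard library; the helper name `installed_version` is hypothetical and not part of `labml-nn`.

```python
from importlib import metadata
from typing import Optional


def installed_version(dist_name: str) -> Optional[str]:
    """Return the installed version of a distribution, or None if it is absent.

    `installed_version` is an illustrative helper, not a labml-nn API.
    """
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    version = installed_version("labml-nn")
    if version is None:
        print("labml-nn is not installed; run: pip install labml-nn")
    else:
        print(f"labml-nn {version} is installed")
```

The lookup reads package metadata only, so it does not import PyTorch or any of the implementations.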


File details

Details for the file labml_nn-0.5.1.tar.gz.

File metadata

- Download URL: labml_nn-0.5.1.tar.gz

- Upload date:

- Size: 334.2 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.10.11

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 14a23a126e3da62ddb38a2a4e3fd082581b42ab4b82f270882e0a6378be1546b |
| MD5 | 1094f88ebcf219f9e83d0e43765b6ef7 |
| BLAKE2b-256 | 07e64f9eeb84fb5a37d5efa7ae637e337f12e3e59acf3359ea2d0985dc874740 |

File details

Details for the file labml_nn-0.5.1-py3-none-any.whl.

File metadata

- Download URL: labml_nn-0.5.1-py3-none-any.whl

- Upload date:

- Size: 461.9 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.10.11

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | ba6b6d4efb2590636f237aa6204ffe789b3ec484088226248890393e225a5761 |
| MD5 | 2bda829cd68f4ce337d28b14dc10d528 |
| BLAKE2b-256 | a1ed058a18295819037c4ae8ad2b273aa287d4afacc324a883e3ed95ae890bfc |
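The SHA256 digests listed above can be used to check a manually downloaded file before installing it. The following is a minimal sketch using Python's standard `hashlib`; the helper names `sha256_of` and `matches_published_hash` are hypothetical, and it assumes the downloaded file keeps its original file name.

```python
import hashlib
from pathlib import Path

# SHA256 digests copied from the file details above.
EXPECTED_SHA256 = {
    "labml_nn-0.5.1.tar.gz":
        "14a23a126e3da62ddb38a2a4e3fd082581b42ab4b82f270882e0a6378be1546b",
    "labml_nn-0.5.1-py3-none-any.whl":
        "ba6b6d4efb2590636f237aa6204ffe789b3ec484088226248890393e225a5761",
}


def sha256_of(path, chunk_size: int = 65536) -> str:
    """Compute the SHA256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_hash(path) -> bool:
    """Compare a downloaded file against the digest listed for its file name."""
    path = Path(path)
    expected = EXPECTED_SHA256.get(path.name)
    if expected is None:
        raise KeyError(f"no published digest recorded for {path.name}")
    return sha256_of(path) == expected
```

For example, `matches_published_hash("labml_nn-0.5.1.tar.gz")` returns `True` only if the local file's digest equals the listed value. When installing from a requirements file, pip's hash-checking mode offers a built-in alternative.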