A Simple Trial on Tensor-Graph-based Network...

Project description

Leo/need

Requirements

Ensure that NumPy (NumPy on GitHub) and Matplotlib (Matplotlib on GitHub) are already installed before installing Leo/need.

One of the simplest ways to install them is with conda:

$ conda install numpy matplotlib

Installation

The latest version of Leo/need can be installed with pip as follows:

$ pip install leoneed --upgrade

or installed from source, like any other Python package.

Importation

To access Leo/need and its functions, import it in your Python code like this:

>>> import leoneed as ln

どうだっていい存在じゃない、簡単に愛は終わらないよ。 (You are not someone who doesn't matter; love does not end that easily.)

Components

needle: Nodes in Tensor-Graph

>>> mulmat = ln.needle.Mul_Matrix(( 3 , 4 ))

>>> mulmat.tensor

matrix([[ 1.40483957,  0.22112104, -0.14532731,  0.12319917],
        [ 0.60602697,  2.42277001, -1.91660854, -2.42252709],
        [ 0.64629422,  0.20150064, -0.15671318,  0.77204576]])
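The example above only shows that a node holds a `tensor`. As a rough sketch of what a matrix-multiplication node in a tensor graph might do, the following pairs a forward product with the gradients it would pass back. The class name `MulMatrixSketch`, its `forward`/`backward` methods, and the `lr` argument are hypothetical and are not Leo/need's API.

```python
import numpy as np


class MulMatrixSketch:
    """Hypothetical stand-in for a matrix-multiplication node (not the leoneed class)."""

    def __init__(self, shape, seed=0):
        rng = np.random.default_rng(seed)
        self.tensor = rng.standard_normal(shape)  # weight matrix W
        self._x = None                            # input cached for the backward pass

    def forward(self, x):
        # y = x @ W, with x treated as a row vector (or a batch of row vectors).
        self._x = np.atleast_2d(np.asarray(x, dtype=float))
        return self._x @ self.tensor

    def backward(self, grad_out, lr=0.1):
        # Given dL/dy, compute dL/dW = x^T @ dL/dy and dL/dx = dL/dy @ W^T,
        # then take one gradient-descent step on W.
        grad_out = np.atleast_2d(grad_out)
        grad_w = self._x.T @ grad_out
        grad_x = grad_out @ self.tensor.T
        self.tensor -= lr * grad_w
        return grad_x


node = MulMatrixSketch((3, 4))
y = node.forward([1.0, 0.0, -1.0])
grad_x = node.backward(np.ones((1, 4)), lr=0.0)  # lr=0.0 leaves W untouched
print(y.shape, grad_x.shape)
```

With `lr=0.0` the call only propagates the gradient, so `grad_x` equals the row sums of the weight matrix.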

stella: Instances of Loss Functions

Calling an instance returns the value and the gradient of the specified loss function.

>>> simploss = ln.stella.Loss_Simple(3) # Loss Function: (y_pred - y_true)^2 / 2

>>> simploss([ 1, 3, 4 ], [ 5, 7, 1 ])

(matrix([[8. , 8. , 4.5]]), matrix([[-4, -4, 3]]))
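The numbers above can be checked by hand: the value is (y_pred - y_true)^2 / 2 per element, and the gradient with respect to y_pred is y_pred - y_true. A minimal NumPy sketch of that calculation follows; the function name `simple_loss` is an illustrative assumption and not part of Leo/need.

```python
import numpy as np


def simple_loss(y_pred, y_true):
    """Return (value, gradient) of (y_pred - y_true)^2 / 2, element-wise."""
    diff = np.asarray(y_pred, dtype=float) - np.asarray(y_true, dtype=float)
    return diff ** 2 / 2, diff  # the gradient w.r.t. y_pred is the difference itself


value, grad = simple_loss([1, 3, 4], [5, 7, 1])
print(value)  # values 8, 8 and 4.5
print(grad)   # values -4, -4 and 3
```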

stage: Models' Containers

import numpy as np
import leoneed as ln

BATCH_SIZE = 128
NUM_EPOCHES = 39
MINI_BATCH = 5

# Auto-encoder with 3 visible units and 2 hidden units.
ae = ln.stage.Auto_Encoder(3, 2)
print("W_Encoder(pre-training):", ae[0].tensor, sep="\n")
print("b_Encoder(pre-training):", ae[1].tensor, sep="\n")
print("W_Decoder(pre-training):", ae[3].tensor, sep="\n")
print("b_Decoder(pre-training):", ae[4].tensor, sep="\n")

# Construct a dataset of sample vectors of the form (+a, 0, -a) manually.
randdata = np.random.randn(BATCH_SIZE)
randdata /= np.abs(randdata).max() * 1.28
traindata = np.zeros((BATCH_SIZE, 3))
traindata[:, 0] += randdata
traindata[:, 2] -= randdata

# Fit one sample at a time, using each sample as its own target.
ae_history = []
for idx_epoch in range(NUM_EPOCHES):
    for k in range(BATCH_SIZE):
        ae, ae_loss = ae.fit_sample(traindata[k:(k + 1), :], traindata[k:(k + 1), :])
        ae_history.append(ae_loss)

print("W_Encoder(pst-training):", ae[0].tensor, sep="\n")
print("b_Encoder(pst-training):", ae[1].tensor, sep="\n")
print("W_Decoder(pst-training):", ae[3].tensor, sep="\n")
print("b_Decoder(pst-training):", ae[4].tensor, sep="\n")

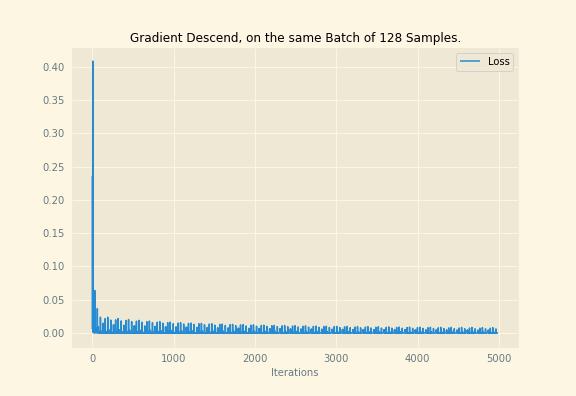

import matplotlib.pyplot as plt

# Plot the loss recorded for each training step.
with plt.rc_context({}):
    plt.plot(ae_history, label="Loss")
    plt.legend()
    plt.xlabel("Iterations")
    plt.title("Gradient Descent, on the same Batch of %d Samples." % BATCH_SIZE)
    plt.savefig("./gradloss-ae.jpeg")
    plt.show()

Output:

W_Encoder(pre-training):
[[ 1.40483957  0.22112104]
 [-0.14532731  0.12319917]
 [ 0.60602697  2.42277001]]

b_Encoder(pre-training):
[[0. 0.]]

W_Decoder(pre-training):
[[-1.91660854 -2.42252709  0.64629422]
 [ 0.20150064 -0.15671318  0.77204576]]

b_Decoder(pre-training):
[[0. 0. 0.]]

W_Encoder(pst-training):
[[ 0.87722083  0.77930038]
 [-0.14532731  0.12319917]
 [ 1.13364571  1.86459068]]

b_Encoder(pst-training):
[[-0.00498058  0.00632017]]

W_Decoder(pst-training):
[[-1.88554672 -2.21171336  0.71931055]
 [-0.56403606  0.55549326  0.85221518]]

b_Decoder(pst-training):
[[ 0.00564453 -0.01603693 -0.01378995]]
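In the output above, the second row of W_Encoder is identical before and after training. That is what one would expect when the second input component is always zero, because the gradient of each encoder row is scaled by the matching input component. The plain-NumPy sketch below reproduces this behaviour under stated assumptions and is not Leo/need's implementation: the linear hidden layer, the learning rate `lr`, the initial weight scale, and all variable names are chosen for illustration only.

```python
import numpy as np

rng = np.random.default_rng(0)
num_samples, num_epochs, lr = 128, 39, 0.1  # lr is an assumed value

# Dataset of vectors (+a, 0, -a), scaled as in the example above.
a = rng.standard_normal(num_samples)
a /= np.abs(a).max() * 1.28
data = np.zeros((num_samples, 3))
data[:, 0] += a
data[:, 2] -= a

# Linear auto-encoder 3 -> 2 -> 3 (assumed: no activation function).
w_enc = 0.5 * rng.standard_normal((3, 2))
b_enc = np.zeros((1, 2))
w_dec = 0.5 * rng.standard_normal((2, 3))
b_dec = np.zeros((1, 3))
w_enc_init = w_enc.copy()

history = []
for _ in range(num_epochs):
    for k in range(num_samples):
        x = data[k:k + 1, :]
        h = x @ w_enc + b_enc
        y = h @ w_dec + b_dec
        err = y - x                   # gradient of sum((y - x)^2) / 2 w.r.t. y
        history.append(float((err ** 2).sum() / 2))
        grad_h = err @ w_dec.T        # back-propagate through the decoder
        w_dec -= lr * (h.T @ err)
        b_dec -= lr * err
        w_enc -= lr * (x.T @ grad_h)  # row i is scaled by x[0, i]
        b_enc -= lr * grad_h

first = np.mean(history[:num_samples])
last = np.mean(history[-num_samples:])
print("mean loss, first epoch:", first)
print("mean loss, last epoch: ", last)
print("row 1 of w_enc unchanged:", np.array_equal(w_enc[1], w_enc_init[1]))
```

Because `x[0, 1]` is exactly zero for every sample, the update to row 1 of `w_enc` is zero at every step, so that row keeps its initial values.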

Changelog

Version 0.0.3

- Finished Implementation of Gradient after Matrix-Multiplication;

- Added API of Generating AE (Auto-Encoder): .stage.Auto_Encoder(numvisible, numhidden, w1=None, w2=None);

Version 0.0.2

- Added sub-modules needle (Nodes of Tensor-Graph), stella (Loss Functions), and stage;

References

[^extra-1]: Harry-P (針原 翼), Issenkou, 2017, av17632876;

Extra

息吹く炎、君の鼓動の中。

Flames Breathing, in your Heartbeat. 火炎般的氣息,綻放於你跳動的心臟。

---- Harry-P in "Issenkou"[^extra-1]


Download files


File details

Details for the file leoneed-0.0.3.tar.gz.

File metadata

- Download URL: leoneed-0.0.3.tar.gz

- Upload date:

- Size: 17.3 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.0 CPython/3.9.0

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | d5a37abd927c00cada76d687269ac6bcc9f6efaf0f9cb72564cf1b07fddc23f7 |
| MD5 | 6b67dc3e805cff924c90f93814aa605b |
| BLAKE2b-256 | 7cf2a5c3bdc9b92f6f1df05ba1f045bb1fe425a4873e100a68361ae710baaf76 |

File details

Details for the file leoneed-0.0.3-py3-none-any.whl.

File metadata

- Download URL: leoneed-0.0.3-py3-none-any.whl

- Upload date:

- Size: 18.0 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.0 CPython/3.9.0

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 14494beca66e296db4aca358c0138304c37548d5bb2d36bfd3e0c252c316b05a |
| MD5 | 7e3cac2649687acb22b4e13a6a6cb3be |
| BLAKE2b-256 | 23a99203592a90741c247317c39f4e5b9ffe65afc3ae29e727ecdba5d315eca3 |