An accurate natural language detection library, suitable for short text and mixed-language text

1. What does this library do?

Its task is simple: It tells you which language some text is written in. This is very useful as a preprocessing step for linguistic data in natural language processing applications such as text classification and spell checking. Other use cases, for instance, might include routing e-mails to the right geographically located customer service department, based on the e-mails' languages.

2. Why does this library exist?

Language detection is often done as part of large machine learning frameworks or natural language processing applications. In cases where you don't need the full-fledged functionality of those systems or don't want to learn how to use them, a small flexible library comes in handy.

Python is widely used in natural language processing, so there are a couple of comprehensive open source libraries for this task, such as Google's CLD 2 and CLD 3, Langid, Simplemma and Langdetect. Unfortunately, except for the last one, they have two major drawbacks:

- Detection only works with quite lengthy text fragments. For very short text snippets such as Twitter messages, they do not provide adequate results.

- The more languages take part in the decision process, the less accurate the detection results are.

Lingua aims at eliminating these problems. She requires hardly any configuration and yields pretty accurate results on both long and short text, even on single words and phrases. She draws on both rule-based and statistical Naive Bayes methods but does not use neural networks or any dictionaries of words. She does not need a connection to any external API or service either. Once the library has been downloaded, it can be used completely offline.

3. A short history of this library

This library started as a pure Python implementation. Python's quick prototyping capabilities made an important contribution to its improvements. Unfortunately, there was always a tradeoff between performance and memory consumption. At first, Lingua's language models were stored in dictionaries during runtime. This led to quick performance at the cost of large memory consumption (more than 3 GB). Because of that, the language models were then stored in NumPy arrays instead of dictionaries. Memory consumption was reduced to approximately 800 MB, but CPU performance dropped significantly. Neither approach was satisfactory.

Starting from version 2.0.0, the pure Python implementation was replaced with compiled Python bindings to the native Rust implementation of Lingua. This decision has led to both quick performance and a small memory footprint. The pure Python implementation is still available in a separate branch in this repository and will be kept up-to-date in subsequent 1.* releases. Some environments, such as Juno, do not support native Python extensions, so a pure Python implementation is still useful. Both 1.* and 2.* versions will remain available on the Python Package Index (PyPI).

4. Which languages are supported?

Compared to other language detection libraries, Lingua's focus is on quality over quantity, that is, getting detection right for a small set of languages first before adding new ones. Currently, the following 75 languages are supported:

- A
  - Afrikaans
  - Albanian
  - Arabic
  - Armenian
  - Azerbaijani
- B
  - Basque
  - Belarusian
  - Bengali
  - Norwegian Bokmal
  - Bosnian
  - Bulgarian
- C
  - Catalan
  - Chinese
  - Croatian
  - Czech
- D
  - Danish
  - Dutch
- E
  - English
  - Esperanto
  - Estonian
- F
  - Finnish
  - French
- G
  - Ganda
  - Georgian
  - German
  - Greek
  - Gujarati
- H
  - Hebrew
  - Hindi
  - Hungarian
- I
  - Icelandic
  - Indonesian
  - Irish
  - Italian
- J
  - Japanese
- K
  - Kazakh
  - Korean
- L
  - Latin
  - Latvian
  - Lithuanian
- M
  - Macedonian
  - Malay
  - Maori
  - Marathi
  - Mongolian
- N
  - Norwegian Nynorsk
- P
  - Persian
  - Polish
  - Portuguese
  - Punjabi
- R
  - Romanian
  - Russian
- S
  - Serbian
  - Shona
  - Slovak
  - Slovene
  - Somali
  - Sotho
  - Spanish
  - Swahili
  - Swedish
- T
  - Tagalog
  - Tamil
  - Telugu
  - Thai
  - Tsonga
  - Tswana
  - Turkish
- U
  - Ukrainian
  - Urdu
- V
  - Vietnamese
- W
  - Welsh
- X
  - Xhosa
- Y
  - Yoruba
- Z
  - Zulu

5. How accurate is it?

Lingua is able to report accuracy statistics for some bundled test data available for each supported language. The test data for each language is split into three parts:

- a list of single words with a minimum length of 5 characters

- a list of word pairs with a minimum length of 10 characters

- a list of complete grammatical sentences of various lengths

Both the language models and the test data have been created from separate documents of the Wortschatz corpora offered by Leipzig University, Germany. Data crawled from various news websites have been used for training, each corpus comprising one million sentences. For testing, corpora made of arbitrarily chosen websites have been used, each comprising ten thousand sentences. From each test corpus, a random unsorted subset of 1000 single words, 1000 word pairs and 1000 sentences has been extracted, respectively.

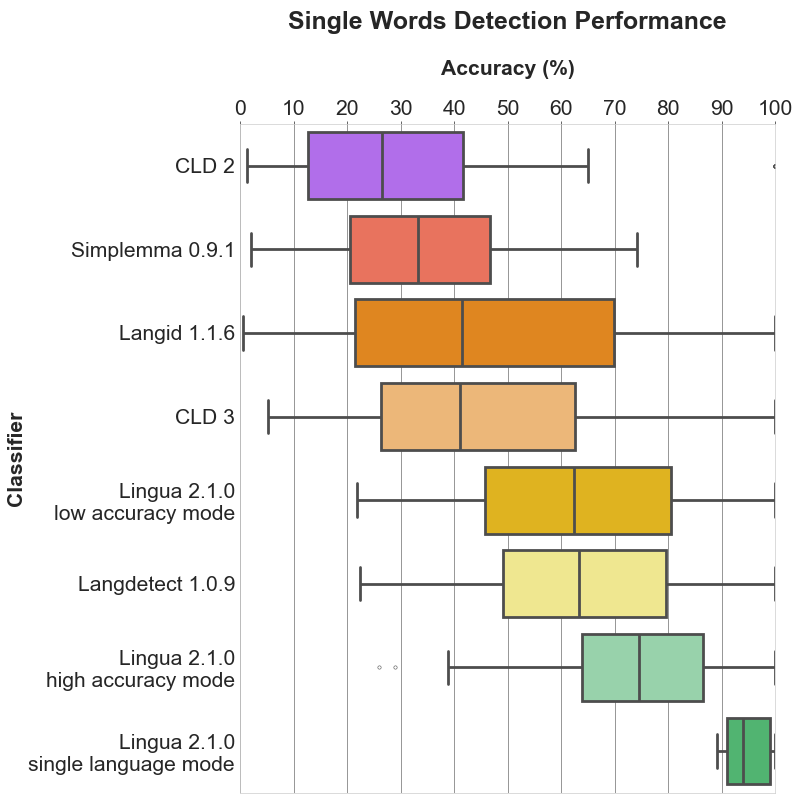

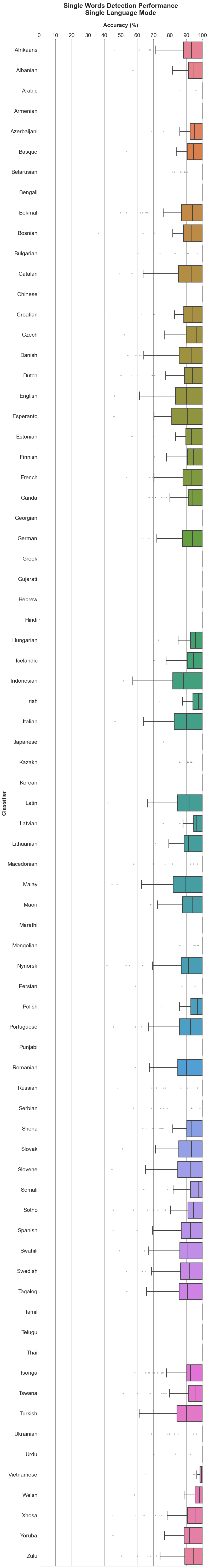

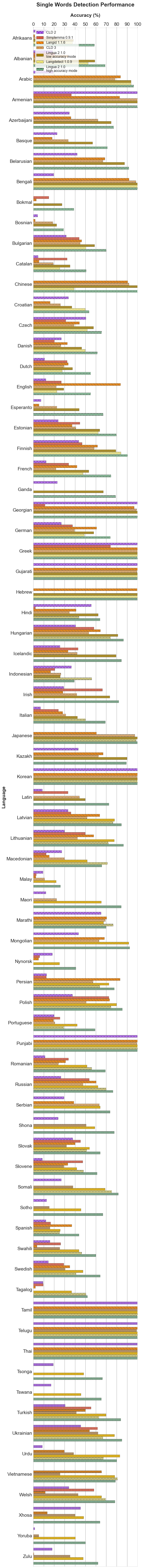

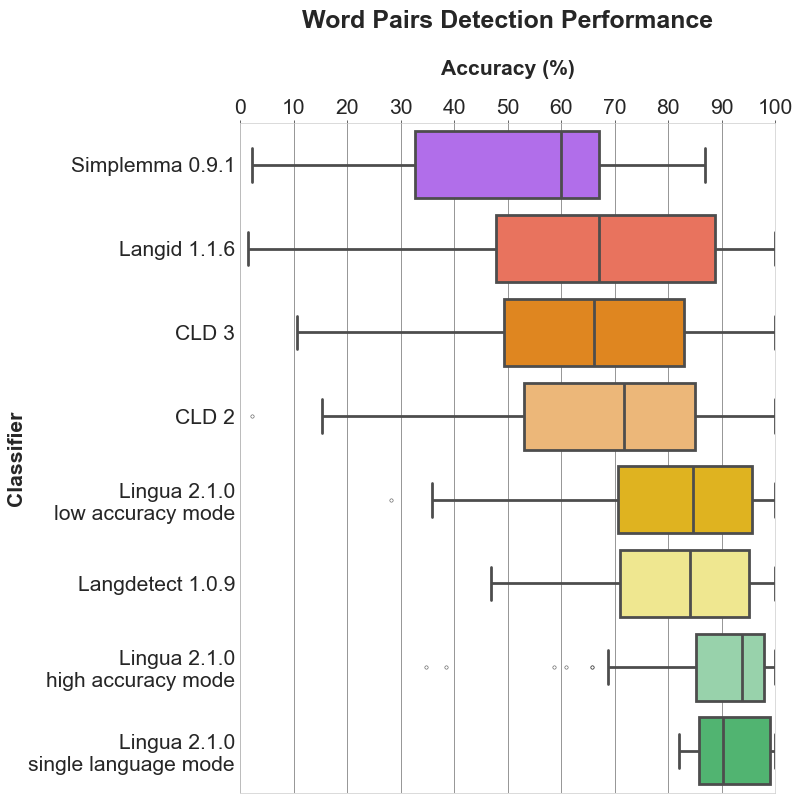

Given the generated test data, I have compared the detection results of Lingua, Langdetect, Langid, Simplemma, CLD 2 and CLD 3 on the data of the 75 languages supported by Lingua. Languages that a given detector does not support are simply ignored for that detector during the detection process.

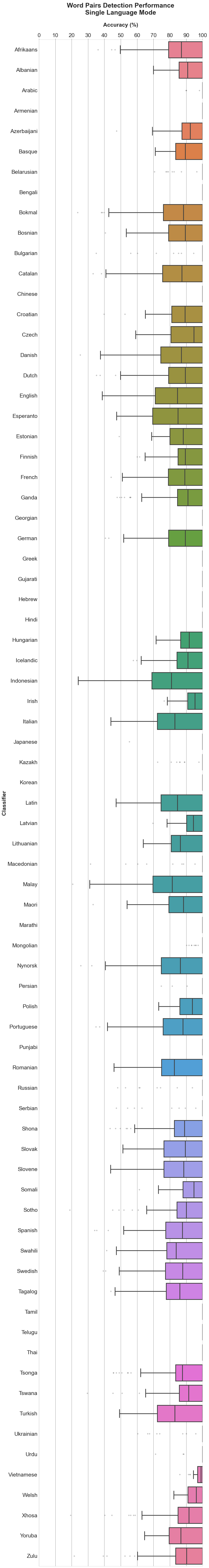

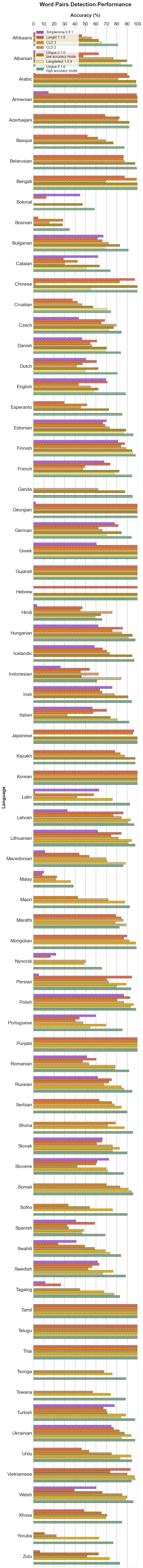

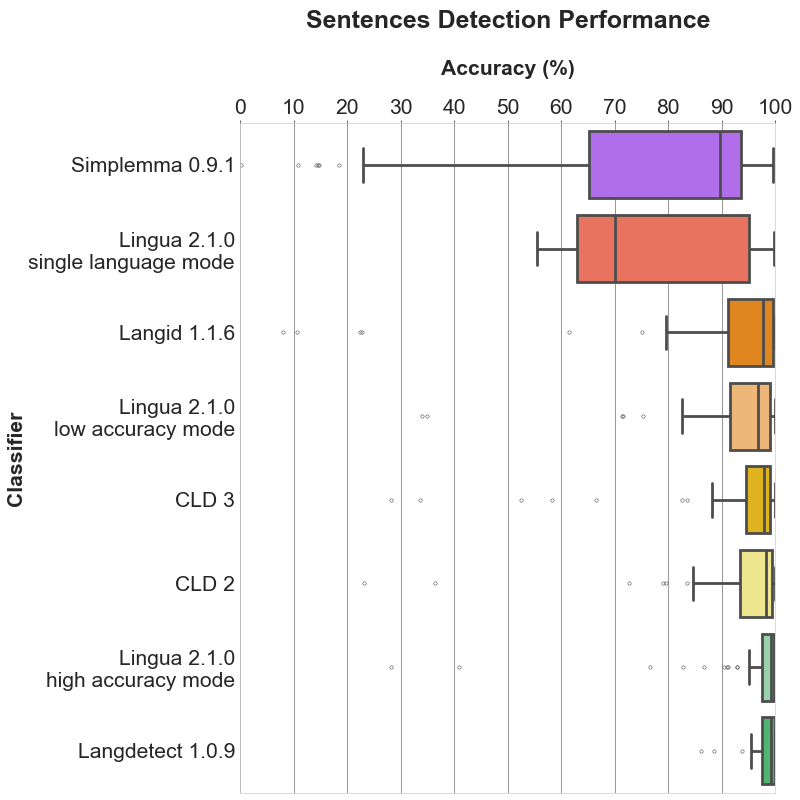

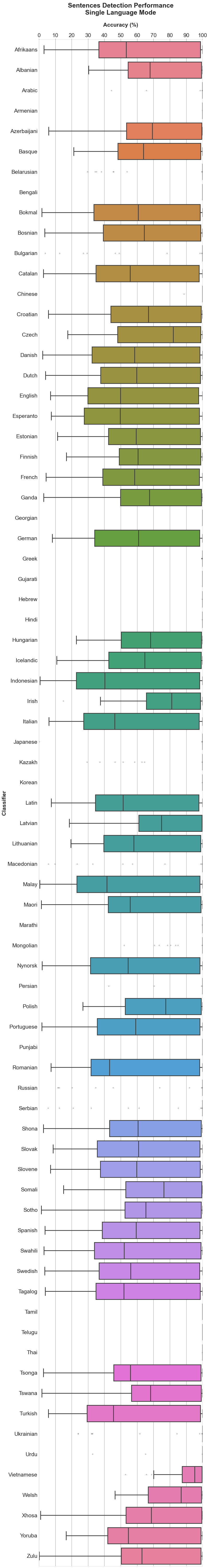

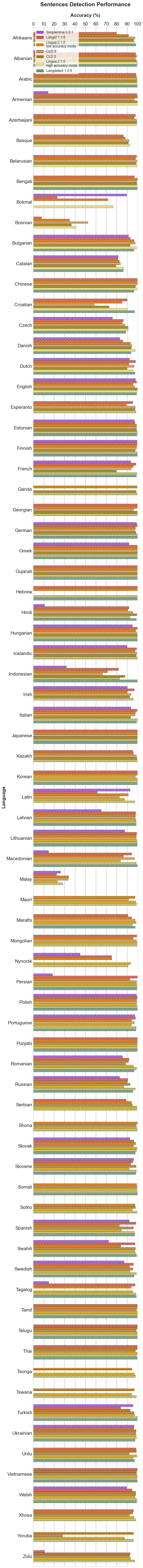

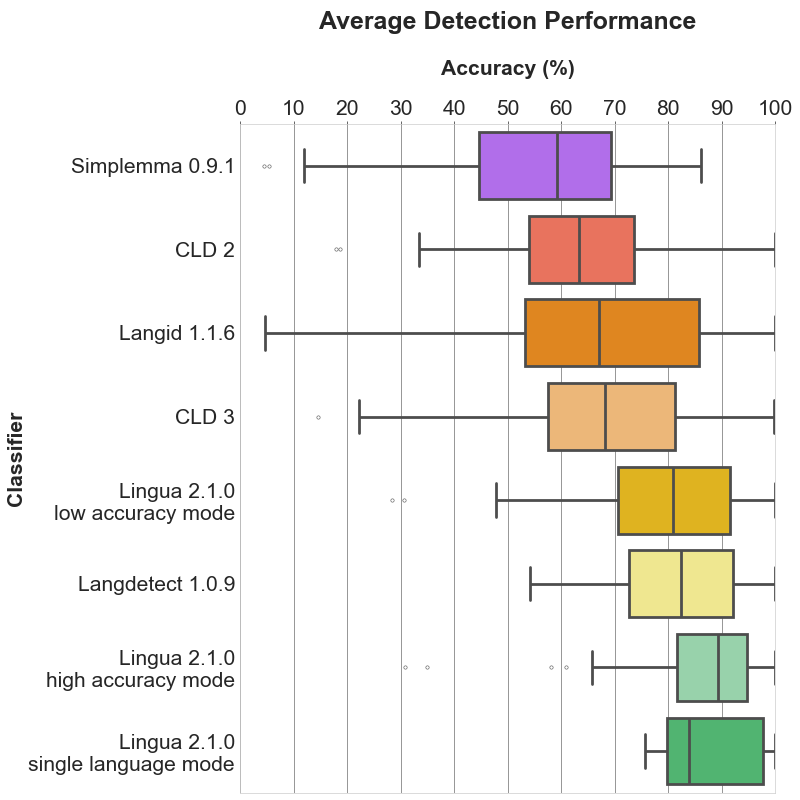

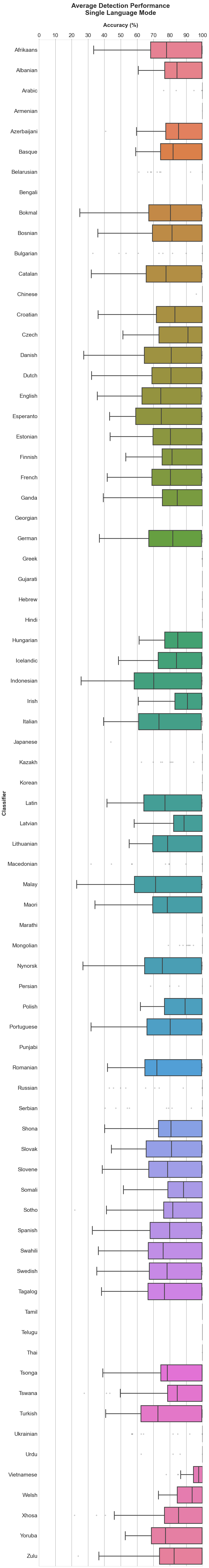

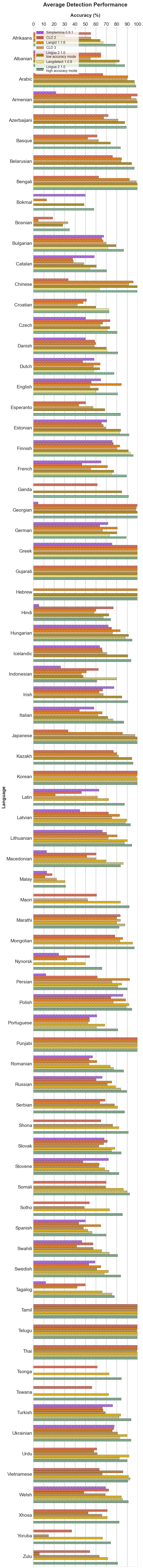

Each of the following sections contains three plots. The bar plot shows the detailed accuracy results for each supported language. The box plots illustrate the distributions of the accuracy values for each classifier. The boxes themselves represent the interquartile range, i.e. the range within which the middle 50% of the data lie. Within the colored boxes, the horizontal lines mark the medians of the distributions.

5.1 Single word detection

Bar plot

5.2 Word pair detection

Bar plot

5.3 Sentence detection

Bar plot

5.4 Average detection

Bar plot

5.5 Mean, median and standard deviation

The tables found here show detailed statistics for each language and classifier including mean, median and standard deviation.
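As a rough illustration of what the box plots and the statistics tables summarize, the following sketch computes mean, median, standard deviation and quartiles for a list of per-language accuracy values with Python's statistics module. The accuracy values are invented for illustration and are not Lingua's measured results. Whether the plots use this quartile convention (linear interpolation, the "inclusive" method) and whether the tables report the sample or the population standard deviation is an assumption.

```python
from statistics import mean, median, quantiles, stdev

# Hypothetical per-language accuracy values (in percent) for one classifier.
accuracies = [70.0, 80.0, 90.0, 95.0, 99.0]

# Quartiles with linear interpolation between data points ("inclusive" method).
q1, q2, q3 = quantiles(accuracies, n=4, method="inclusive")

summary = {
    "mean": mean(accuracies),
    "median": median(accuracies),  # equals q2
    "stdev": stdev(accuracies),  # sample standard deviation
    "box": (q1, q3),  # the box spans the middle 50% of the values
}

for name, value in summary.items():
    print(name, value)
```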

6. How fast is it?

The accuracy reporter script measures the time each language detector needs to classify 3000 input texts for each of the 75 supported languages. The results below were produced on an iMac with a 3.6 GHz 8-core Intel Core i9 and 40 GB of RAM.

Lingua in multi-threaded mode is one of the fastest detectors in this comparison. CLD 2 and CLD 3 are similarly fast because they are implemented in C or C++. Pure Python libraries such as Simplemma, Langid and Langdetect are significantly slower.

| Detector | Time |
|---|---|
| CLD 2 | 8.65 sec |
| CLD 3 | 16.77 sec |
| Lingua (low accuracy mode, multi-threaded) | 11.81 sec |
| Lingua (high accuracy mode, multi-threaded) | 21.13 sec |
| Simplemma | 2 min 36.44 sec |
| Langid | 3 min 50.40 sec |
| Langdetect | 10 min 43.96 sec |
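The timings are easier to compare as throughput. The sketch below converts the durations from the table into seconds and divides the total number of classified texts by them. It assumes that every detector processed all 3000 × 75 = 225,000 texts, which the paragraph above implies but does not state per detector.

```python
import re

# 3000 input texts for each of the 75 supported languages, as stated above.
TOTAL_TEXTS = 3000 * 75

# Durations copied from the table above.
TIMINGS = {
    "CLD 2": "8.65 sec",
    "CLD 3": "16.77 sec",
    "Lingua (low accuracy mode, multi-threaded)": "11.81 sec",
    "Lingua (high accuracy mode, multi-threaded)": "21.13 sec",
    "Simplemma": "2 min 36.44 sec",
    "Langid": "3 min 50.40 sec",
    "Langdetect": "10 min 43.96 sec",
}


def to_seconds(duration: str) -> float:
    """Convert strings such as '2 min 36.44 sec' into seconds."""
    minutes = re.search(r"(\d+)\s*min", duration)
    seconds = re.search(r"([\d.]+)\s*sec", duration)
    total = float(seconds.group(1)) if seconds else 0.0
    if minutes:
        total += 60 * int(minutes.group(1))
    return total


for detector, duration in TIMINGS.items():
    secs = to_seconds(duration)
    print(f"{detector}: {secs:.2f} s, about {TOTAL_TEXTS / secs:,.0f} texts per second")
```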

7. Why is it better than other libraries?

Every language detector uses a probabilistic n-gram model trained on the character distribution in some training corpus. Most libraries only use n-grams of size 3 (trigrams), which is satisfactory for detecting the language of longer text fragments consisting of multiple sentences. For short phrases or single words, however, trigrams are not enough. The shorter the input text is, the fewer n-grams are available, and probabilities estimated from so few n-grams are not reliable. This is why Lingua uses n-grams of sizes 1 up to 5, which results in much more accurate prediction of the correct language.
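To make the difference in available evidence concrete, the following sketch counts the character n-grams that can be extracted from a single word when using trigrams only versus n-grams of sizes 1 to 5. It only counts n-grams; it does not show how Lingua weights, stores or scores them.

```python
def ngrams(word: str, n: int) -> list[str]:
    """Return all contiguous character n-grams of length n (empty if the word is shorter)."""
    return [word[i : i + n] for i in range(len(word) - n + 1)]


word = "prologue"

trigrams_only = ngrams(word, 3)
sizes_1_to_5 = [gram for n in range(1, 6) for gram in ngrams(word, n)]

print(len(trigrams_only))  # 6
print(len(sizes_1_to_5))  # 8 + 7 + 6 + 5 + 4 = 30
```

For an 8-character word, trigrams yield only 6 observations, while sizes 1 to 5 yield 30, which is the intuition behind using the wider range for short input.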

A second important difference is that Lingua uses not only such a statistical model but also a rule-based engine. This engine first determines the alphabet of the input text and searches for characters which are unique to one or more languages. If exactly one language can be reliably chosen this way, the statistical model is no longer necessary. In any case, the rule-based engine filters out languages that do not satisfy the conditions of the input text. Only then, in a second step, is the probabilistic n-gram model taken into consideration. This makes sense because loading fewer language models means less memory consumption and better runtime performance.
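A toy version of this two-step filtering might look as follows. The tables are hypothetical and drastically reduced; they are not Lingua's real character mappings, and reading the script from the Unicode character name is a shortcut chosen for brevity.

```python
import unicodedata

# Hypothetical, drastically reduced tables (not Lingua's real data).
LANGUAGE_SCRIPTS = {
    "English": "LATIN",
    "German": "LATIN",
    "Hungarian": "LATIN",
    "Russian": "CYRILLIC",
    "Ukrainian": "CYRILLIC",
}
UNIQUE_CHARS = {
    "ß": {"German"},
    "ő": {"Hungarian"},
    "ű": {"Hungarian"},
    "ї": {"Ukrainian"},
}


def script_of(ch: str) -> str:
    # Unicode names start with the script, e.g. "CYRILLIC SMALL LETTER YA".
    return unicodedata.name(ch, "UNKNOWN").split(" ")[0]


def filter_languages(text: str) -> set[str]:
    """Keep only languages compatible with the alphabet and unique characters."""
    letters = [ch.lower() for ch in text if ch.isalpha()]
    scripts = {script_of(ch) for ch in letters}
    # Step 1: drop languages whose script does not occur in the text.
    candidates = {lang for lang, script in LANGUAGE_SCRIPTS.items() if script in scripts}
    # Step 2: narrow down further using characters unique to some languages.
    for ch in letters:
        narrowed = candidates & UNIQUE_CHARS.get(ch, candidates)
        if narrowed:
            candidates = narrowed
    return candidates


for sample in ["Straße", "їжак", "привет", "hello"]:
    remaining = filter_languages(sample)
    # A single remaining language means no n-gram model has to be consulted.
    status = "decided" if len(remaining) == 1 else "needs n-gram model"
    print(sample, sorted(remaining), status)
```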

In general, it is always a good idea to restrict the set of languages to be considered in the classification process using the respective API methods. If you know beforehand that certain languages will never occur in an input text, do not let them take part in the classification process. The filtering mechanism of the rule-based engine is quite good; however, filtering based on your own knowledge of the input text is always preferable.

Even when taking all language models into account, the library uses only a few dozen megabytes of memory during runtime. This is because the models are stored as finite-state transducers (FSTs), which can be searched on disk without reading them entirely into memory. This makes the library suitable for low-resource environments.

8. Test report generation

If you want to reproduce the accuracy results above, you can generate the test reports yourself for all classifiers and languages by installing Poetry and executing:

poetry install --no-root --only script

poetry run python3 scripts/accuracy_reporter.py

Accuracy reports for only a subset of classifiers and / or languages can be created by passing command line arguments:

poetry run python3 scripts/accuracy_reporter.py --detectors cld2 lingua-high-accuracy --languages bulgarian german

For each detector and language, a test report file is then written into /accuracy-reports.

As an example, here is the current output of the Lingua German report:

##### German #####

>>> Accuracy on average: 89.27%

>> Detection of 1000 single words (average length: 9 chars)

Accuracy: 74.20%

Erroneously classified as Dutch: 2.30%, Danish: 2.20%, English: 2.20%, Latin: 1.80%, Bokmal: 1.60%, Italian: 1.30%, Basque: 1.20%, Esperanto: 1.20%, French: 1.20%, Swedish: 0.90%, Afrikaans: 0.70%, Finnish: 0.60%, Nynorsk: 0.60%, Portuguese: 0.60%, Yoruba: 0.60%, Sotho: 0.50%, Tsonga: 0.50%, Welsh: 0.50%, Estonian: 0.40%, Irish: 0.40%, Polish: 0.40%, Spanish: 0.40%, Tswana: 0.40%, Albanian: 0.30%, Icelandic: 0.30%, Tagalog: 0.30%, Bosnian: 0.20%, Catalan: 0.20%, Croatian: 0.20%, Indonesian: 0.20%, Lithuanian: 0.20%, Romanian: 0.20%, Swahili: 0.20%, Zulu: 0.20%, Latvian: 0.10%, Malay: 0.10%, Maori: 0.10%, Slovak: 0.10%, Slovene: 0.10%, Somali: 0.10%, Turkish: 0.10%, Xhosa: 0.10%

>> Detection of 1000 word pairs (average length: 18 chars)

Accuracy: 93.90%

Erroneously classified as Dutch: 0.90%, Latin: 0.90%, English: 0.70%, Swedish: 0.60%, Danish: 0.50%, French: 0.40%, Bokmal: 0.30%, Irish: 0.20%, Tagalog: 0.20%, Tsonga: 0.20%, Afrikaans: 0.10%, Esperanto: 0.10%, Estonian: 0.10%, Finnish: 0.10%, Italian: 0.10%, Maori: 0.10%, Nynorsk: 0.10%, Somali: 0.10%, Swahili: 0.10%, Turkish: 0.10%, Welsh: 0.10%, Zulu: 0.10%

>> Detection of 1000 sentences (average length: 111 chars)

Accuracy: 99.70%

Erroneously classified as Dutch: 0.20%, Latin: 0.10%
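The reported average is consistent with the unweighted mean of the three category accuracies. The sketch below recomputes it under that assumption; the report script itself may compute it differently.

```python
from statistics import mean

# Accuracies (in percent) copied from the German report above.
german = {"single words": 74.20, "word pairs": 93.90, "sentences": 99.70}

average = mean(german.values())
print(f"Accuracy on average: {average:.2f}%")  # Accuracy on average: 89.27%
```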

9. How to add it to your project?

Lingua is available in the Python Package Index and can be installed with:

pip install lingua-language-detector

10. How to build?

Lingua requires Python >= 3.12.

First create a virtualenv and install the Python wheel for your platform with pip.

git clone https://github.com/pemistahl/lingua-py.git

cd lingua-py

python3 -m venv .venv

source .venv/bin/activate

pip install --find-links=lingua lingua-language-detector

In the scripts directory, there are Python scripts for writing accuracy reports, drawing plots and writing accuracy values in an HTML table. The dependencies for these scripts are managed by Poetry, which you need to install if you have not done so yet. To install the script dependencies in your virtualenv, run:

poetry install --no-root --only script

The project makes use of type annotations, which allow for static type checking with Mypy. Run the following commands to check the types:

poetry install --no-root --only dev

poetry run mypy

The Python source code is formatted with Black:

poetry run black .

11. How to use?

11.1 Basic usage

>>> from lingua import Language, LanguageDetectorBuilder
>>> languages = [Language.ENGLISH, Language.FRENCH, Language.GERMAN, Language.SPANISH]
>>> detector = LanguageDetectorBuilder.from_languages(*languages).build()
>>> language = detector.detect_language_of("languages are awesome")
>>> language
Language.ENGLISH
>>> language.iso_code_639_1
IsoCode639_1.EN
>>> language.iso_code_639_1.name
'EN'
>>> language.iso_code_639_3
IsoCode639_3.ENG
>>> language.iso_code_639_3.name
'ENG'

The entire library is thread-safe, i.e. you can use a single LanguageDetector instance and its methods in multiple threads. Multiple instances of LanguageDetector share thread-safe access to the language models, so every language model is loaded into memory just once, no matter how many instances of LanguageDetector have been created.

11.2 Minimum relative distance

By default, Lingua returns the most likely language for a given input text. However, certain words are spelled the same in more than one language. The word prologue, for instance, is a valid word in both English and French. Lingua would output either English or French, which might be wrong in the given context. For cases like that, it is possible to specify a minimum relative distance that the logarithmized and summed-up probabilities for each possible language have to satisfy. It can be stated in the following way:

>>> from lingua import Language, LanguageDetectorBuilder
>>> languages = [Language.ENGLISH, Language.FRENCH, Language.GERMAN, Language.SPANISH]
>>> detector = LanguageDetectorBuilder.from_languages(*languages)\
...     .with_minimum_relative_distance(0.9)\
...     .build()
>>> print(detector.detect_language_of("languages are awesome"))
None

Be aware that the distance between the language probabilities depends on the length of the input text. The longer the input text, the larger the distance between the languages. So if you want to classify very short text phrases, do not set the minimum relative distance too high. Otherwise, None will be returned most of the time, as in the example above. This is the return value for cases where language detection is not reliably possible.
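The sketch below shows how such a threshold can turn a ranking of candidate languages into either a language or None. It assumes, which this document does not confirm, that the distance is measured as the difference between the two highest confidence values. Under that assumption, the confidence values printed in section 11.3 for the same input give 0.93 - 0.04 = 0.89, which is below 0.9 and would be consistent with the None returned above. The function pick_language is hypothetical and not part of Lingua's API.

```python
from typing import Optional


def pick_language(confidences: dict[str, float], min_distance: float) -> Optional[str]:
    """Return the top language, or None if it is not far enough ahead.

    Assumption: distance = top confidence value - runner-up confidence value.
    """
    if not confidences:
        return None
    ranked = sorted(confidences.items(), key=lambda item: item[1], reverse=True)
    if len(ranked) == 1:
        return ranked[0][0]
    (best, best_value), (_, second_value) = ranked[0], ranked[1]
    if best_value - second_value < min_distance:
        return None
    return best


# Confidence values as printed in section 11.3 for "languages are awesome".
values = {"ENGLISH": 0.93, "FRENCH": 0.04, "GERMAN": 0.02, "SPANISH": 0.01}

print(pick_language(values, 0.0))  # ENGLISH
print(pick_language(values, 0.9))  # None
```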

11.3 Confidence values

Knowing the most likely language is nice, but how reliable is the computed likelihood? And how much less likely are the other examined languages in comparison to the most likely one? These questions can be answered as well:

>>> from lingua import Language, LanguageDetectorBuilder
>>> languages = [Language.ENGLISH, Language.FRENCH, Language.GERMAN, Language.SPANISH]
>>> detector = LanguageDetectorBuilder.from_languages(*languages).build()
>>> confidence_values = detector.compute_language_confidence_values("languages are awesome")
>>> for confidence in confidence_values:
...     print(f"{confidence.language.name}: {confidence.value:.2f}")
ENGLISH: 0.93
FRENCH: 0.04
GERMAN: 0.02
SPANISH: 0.01

In the example above, a list is returned containing those languages which the calling instance of LanguageDetector has been built from, sorted by their confidence value in descending order. Each value is a probability between 0.0 and 1.0. The probabilities of all languages will sum to 1.0. If the language is unambiguously identified by the rule engine, the value 1.0 will always be returned for this language. The other languages will receive a value of 0.0.
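One common way to turn summed log-probabilities into values between 0.0 and 1.0 that sum to 1.0 is to exponentiate and normalize them (a softmax). The sketch below assumes that approach; the log-probability sums are invented, and the code is not taken from Lingua's source. The optional rule_match parameter, a hypothetical name, mimics the rule-engine case described above, where one language receives 1.0 and all others 0.0.

```python
import math
from typing import Optional


def confidence_values(
    log_prob_sums: dict[str, float], rule_match: Optional[str] = None
) -> list[tuple[str, float]]:
    """Normalize summed log-probabilities into values that sum to 1.0."""
    if rule_match is not None:
        # The rule engine identified the language unambiguously.
        values = {lang: (1.0 if lang == rule_match else 0.0) for lang in log_prob_sums}
    else:
        # Subtract the maximum before exponentiating to avoid underflow.
        top = max(log_prob_sums.values())
        weights = {lang: math.exp(s - top) for lang, s in log_prob_sums.items()}
        total = sum(weights.values())
        values = {lang: w / total for lang, w in weights.items()}
    # Sort by confidence value in descending order.
    return sorted(values.items(), key=lambda item: item[1], reverse=True)


# Hypothetical summed log-probabilities for four candidate languages.
sums = {"ENGLISH": -40.0, "FRENCH": -43.1, "GERMAN": -43.8, "SPANISH": -44.5}
for language, value in confidence_values(sums):
    print(f"{language}: {value:.2f}")
```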

There is also a method for returning the confidence value for one specific language only:

>>> from lingua import Language, LanguageDetectorBuilder
>>> languages = [Language.ENGLISH, Language.FRENCH, Language.GERMAN, Language.SPANISH]
>>> detector = LanguageDetectorBuilder.from_languages(*languages).build()
>>> confidence_value = detector.compute_language_confidence("languages are awesome", Language.FRENCH)
>>> print(f"{confidence_value:.2f}")
0.04

The value that this method computes is a number between 0.0 and 1.0. If the language is unambiguously identified by the rule engine, the value 1.0 will always be returned. If the given language is not supported by this detector instance, the value 0.0 will always be returned.

11.4 Eager loading versus lazy loading

By default, Lingua uses lazy loading, i.e. it loads on demand only those language models that the rule-based filter engine considers relevant. For web services, for instance, it is beneficial to preload all language models into memory to avoid unexpected latency while waiting for the service response. If you want to enable eager loading, you can do it like this:

LanguageDetectorBuilder.from_all_languages().with_preloaded_language_models().build()

Multiple instances of LanguageDetector share the same language models in memory, which are accessed asynchronously by the instances.

11.5 Low accuracy mode versus high accuracy mode

Lingua's high detection accuracy comes at the cost of being noticeably slower than other language detectors. This cost might not be acceptable for systems running low on resources. If you want to classify mostly long texts or need to save resources, you can enable a low accuracy mode that loads only a small subset of the language models into memory:

LanguageDetectorBuilder.from_all_languages().with_low_accuracy_mode().build()

The downside of this approach is that detection accuracy for short texts consisting of fewer than 120 characters will drop significantly. However, detection accuracy for texts longer than 120 characters will remain mostly unaffected.

Another way to achieve faster performance is to reduce the set of languages when building the language detector. In most cases, it is not advisable to build the detector from all supported languages. When you know something about the texts you want to classify, you can almost always rule out certain languages as impossible or unlikely to occur.

11.6 Single-language mode

If you build a LanguageDetector from one language only, it will operate in single-language mode. This means the detector will try to find out whether a given text has been written in the given language or not. If not, then None will be returned, otherwise the given language. In single-language mode, the detector decides based on a set of unique and most common n-grams which have been collected beforehand for every supported language.

11.7 Detection of multiple languages in mixed-language texts

In contrast to most other language detectors, Lingua is able to detect multiple languages in mixed-language texts. This feature can yield quite reasonable results, but it is still experimental, so the detection result is highly dependent on the input text. It works best in high accuracy mode with multiple long words for each language. The shorter the phrases and their words are, the less accurate the results are. Reducing the set of languages when building the language detector can also improve accuracy for this task if the languages occurring in the text are exactly the languages supported by the respective language detector instance.

>>> from lingua import Language, LanguageDetectorBuilder
>>> languages = [Language.ENGLISH, Language.FRENCH, Language.GERMAN]
>>> detector = LanguageDetectorBuilder.from_languages(*languages).build()
>>> sentence = "Parlez-vous français? " + \
...            "Ich spreche Französisch nur ein bisschen. " + \
...            "A little bit is better than nothing."
>>> for result in detector.detect_multiple_languages_of(sentence):
...     print(f"{result.language.name}: '{sentence[result.start_index:result.end_index]}'")
FRENCH: 'Parlez-vous français? '
GERMAN: 'Ich spreche Französisch nur ein bisschen. '
ENGLISH: 'A little bit is better than nothing.'

In the example above, a list of DetectionResult objects is returned. Each entry in the list describes a contiguous single-language text section, providing start and end indices of the respective substring.
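How contiguous sections with start and end indices can be derived from per-word decisions is sketched below. The per-word labels are assigned by hand, standing in for some hypothetical per-word classifier; this document does not describe how Lingua actually finds the section boundaries. Whitespace after a word is attached to the preceding section, which reproduces the slices printed in the example above.

```python
import re


def merge_sections(text: str, labels: list[str]) -> list[tuple[str, int, int]]:
    """Merge per-word language labels into contiguous (language, start, end) sections."""
    words = list(re.finditer(r"\S+", text))
    if len(words) != len(labels):
        raise ValueError("expected exactly one label per word")
    sections: list[tuple[str, int, int]] = []
    for word, language in zip(words, labels):
        if sections and sections[-1][0] == language:
            continue  # same language: the current section keeps growing
        if sections:
            # Close the previous section right before the current word.
            lang, start, _ = sections[-1]
            sections[-1] = (lang, start, word.start())
        # Open a new section that provisionally runs to the end of the text.
        sections.append((language, word.start(), len(text)))
    return sections


sentence = (
    "Parlez-vous français? "
    "Ich spreche Französisch nur ein bisschen. "
    "A little bit is better than nothing."
)
# Hand-assigned labels: 2 French words, 6 German words, 7 English words.
labels = ["FRENCH"] * 2 + ["GERMAN"] * 6 + ["ENGLISH"] * 7

for language, start, end in merge_sections(sentence, labels):
    print(f"{language}: '{sentence[start:end]}'")
```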

11.8 Single-threaded versus multi-threaded language detection

The LanguageDetector methods explained above all operate in a single thread. If you want to classify a very large set of texts, you will probably want to use all available CPU cores efficiently in multiple threads for maximum performance. Every single-threaded method has a multi-threaded equivalent that accepts a list of texts and returns a list of results.

| Single-threaded | Multi-threaded |
|---|---|
| detect_language_of | detect_languages_in_parallel_of |
| detect_multiple_languages_of | detect_multiple_languages_in_parallel_of |
| compute_language_confidence_values | compute_language_confidence_values_in_parallel |
| compute_language_confidence | compute_language_confidence_in_parallel |
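The table pairs each single-text method with a list-in, list-out counterpart. The sketch below shows only that contract, using Python's ThreadPoolExecutor and a hypothetical toy_classify function. Lingua's own parallel methods run in native code; pure-Python work like this toy function gains little from threads in standard CPython builds because of the global interpreter lock.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Optional


def classify_in_parallel(
    classify: Callable[[str], Optional[str]], texts: list[str], workers: int = 4
) -> list[Optional[str]]:
    """Apply a single-text function to many texts; results keep the input order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(classify, texts))


def toy_classify(text: str) -> Optional[str]:
    """Hypothetical stand-in for a single-threaded detection method."""
    return "GERMAN" if "ß" in text else None


print(classify_in_parallel(toy_classify, ["Straße", "hello", "Fußball"]))
```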

11.9 Methods to build the LanguageDetector

There might be classification tasks where you know beforehand that your language data is definitely not written in Latin, for instance. Detection accuracy can improve in such cases if you exclude certain languages from the decision process or just explicitly include the relevant ones:

from lingua import LanguageDetectorBuilder, Language, IsoCode639_1, IsoCode639_3

# Include all languages available in the library.
LanguageDetectorBuilder.from_all_languages()

# Include only languages that are not yet extinct (= currently excludes Latin).
LanguageDetectorBuilder.from_all_spoken_languages()

# Include only languages written with Cyrillic script.
LanguageDetectorBuilder.from_all_languages_with_cyrillic_script()

# Exclude only the Spanish language from the decision algorithm.
LanguageDetectorBuilder.from_all_languages_without(Language.SPANISH)

# Only decide between English and German.
LanguageDetectorBuilder.from_languages(Language.ENGLISH, Language.GERMAN)

# Select languages by ISO 639-1 code.
LanguageDetectorBuilder.from_iso_codes_639_1(IsoCode639_1.EN, IsoCode639_1.DE)

# Select languages by ISO 639-3 code.
LanguageDetectorBuilder.from_iso_codes_639_3(IsoCode639_3.ENG, IsoCode639_3.DEU)

11.10 Differences to native Python enums

Since version 2.0, Lingua has been implemented in Rust with Python bindings created with PyO3, which imposes some limitations on enums. PyO3 does not yet support metaclasses, so Lingua's enums do not behave exactly like native Python enums.

If you want to iterate through all members of the Language enum, for instance, you can do it like this:

from lingua import Language

# This won't work
# for language in Language:
#     print(language)

# But this will work
for language in sorted(Language.all()):
    print(language)

PyO3 enums are not subscriptable. If you want to get an enum member dynamically, you can do it like this:

from lingua import Language

# This won't work
# assert Language["GERMAN"] == Language.GERMAN

# But this will work
assert Language.from_str("GERMAN") == Language.GERMAN
assert Language.from_str("german") == Language.GERMAN
assert Language.from_str("GeRmAn") == Language.GERMAN
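For comparison, a native Python enum.Enum supports iteration and subscripting directly. The NativeLanguage enum below is hypothetical and not part of Lingua; it only shows the native behavior this section contrasts against, plus a case-insensitive lookup similar in spirit to Language.from_str.

```python
from enum import Enum


class NativeLanguage(Enum):
    """Hypothetical native enum, for comparison only."""

    ENGLISH = 1
    GERMAN = 2

    @classmethod
    def from_str(cls, name: str) -> "NativeLanguage":
        # Case-insensitive lookup by member name.
        return cls[name.upper()]


# Both of these work on a native enum, unlike on Lingua's PyO3-based enums.
members = [language for language in NativeLanguage]
assert NativeLanguage["GERMAN"] is NativeLanguage.GERMAN
assert NativeLanguage.from_str("GeRmAn") is NativeLanguage.GERMAN
print(members)
```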

12. What's next for version 2.3.0?

Take a look at the planned issues.

Download files

Source Distributions

Built Distributions

File details

Details for the file lingua_language_detector-2.2.0-cp314-cp314-win_arm64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp314-cp314-win_arm64.whl

- Upload date:

- Size: 170.0 MB

- Tags: CPython 3.14, Windows ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 0ec27bc67813372baba2e0a3df2b13cd559c64bc45c5af92f6137fe5b153a525 |
| MD5 | 0f5fac67ca2279fd90b83bfb17217b0a |
| BLAKE2b-256 | 445ef73a74fb19c189c4070d66e9b15f1e4a032bf5e5203fb6bb6c622e16f9c0 |

File details

Details for the file lingua_language_detector-2.2.0-cp314-cp314-win_amd64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp314-cp314-win_amd64.whl

- Upload date:

- Size: 170.1 MB

- Tags: CPython 3.14, Windows x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 3423749db1861937443141e1871a726b8d70dc6e7fe4f6584c477eef5b87fc38 |
| MD5 | cefc2314aa215d60a778e3a7a7340057 |
| BLAKE2b-256 | 5fc12e55c62abc6653383917f9d008090820182d32b8e1f19213af1c06e16411 |

File details

Details for the file lingua_language_detector-2.2.0-cp314-cp314-musllinux_1_2_x86_64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp314-cp314-musllinux_1_2_x86_64.whl

- Upload date:

- Size: 170.6 MB

- Tags: CPython 3.14, musllinux: musl 1.2+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 145a11d7b7f0c8bf666de411585f53011d530c541a2cd55c2f86b3cff499f77e |
| MD5 | c11a64322cb8ac2666150b0cdd9cf7c9 |
| BLAKE2b-256 | 7f8969ea8b9de230b322ce8b60e9b95463cc4cbeed73476abd9214ab699ade73 |

File details

Details for the file lingua_language_detector-2.2.0-cp314-cp314-musllinux_1_2_aarch64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp314-cp314-musllinux_1_2_aarch64.whl

- Upload date:

- Size: 170.5 MB

- Tags: CPython 3.14, musllinux: musl 1.2+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | b7ef23811c8ceacbc10a08dd2f56d71590e7ca6c50e19dfd11a1e142d101199d |
| MD5 | 8b5111e360a31381280b0b7752f189a3 |
| BLAKE2b-256 | 2842efb8119a778f0b8df175f5f79a04a21b019c7b38058042866519953c5be1 |

File details

Details for the file lingua_language_detector-2.2.0-cp314-cp314-manylinux_2_17_x86_64.manylinux2014_x86_64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp314-cp314-manylinux_2_17_x86_64.manylinux2014_x86_64.whl

- Upload date:

- Size: 170.3 MB

- Tags: CPython 3.14, manylinux: glibc 2.17+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 83badc377b0d07f349753ec3d35cf1ad74afb3ad0dce3ee672240d437705872b |
| MD5 | 657bb94752b06f8ad376d40091779f48 |
| BLAKE2b-256 | 580f6dcd9de6f5257ea736693ea92b354dac0073466a1ed32ef1f9873cc4cafe |

File details

Details for the file lingua_language_detector-2.2.0-cp314-cp314-manylinux_2_17_aarch64.manylinux2014_aarch64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp314-cp314-manylinux_2_17_aarch64.manylinux2014_aarch64.whl

- Upload date:

- Size: 170.3 MB

- Tags: CPython 3.14, manylinux: glibc 2.17+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | b883aa34f03cd5cde7ee606bd2c18496f15b6cbd775be0dfd38311d47d6cf551 |
| MD5 | f6de3b93a7299772f60406cc4fee404e |
| BLAKE2b-256 | a6897367d0f7d3b5bcc89f47e223580ec57032dfc642f27cd2a0d06f40bda147 |

File details

Details for the file lingua_language_detector-2.2.0-cp314-cp314-macosx_11_0_arm64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp314-cp314-macosx_11_0_arm64.whl

- Upload date:

- Size: 172.5 MB

- Tags: CPython 3.14, macOS 11.0+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 066b56ca4e3bd324b4c76a861ab2b747d2d8d4e6eda0a4cf06291c6c039b90f4 |
| MD5 | a218ec1eedc178e98e739ea758cbf13a |
| BLAKE2b-256 | 280b3dd8a1eba4ac0da9987542849bae25344bb107e5b4a153ebe09e0c8feba3 |

File details

Details for the file lingua_language_detector-2.2.0-cp314-cp314-macosx_10_12_x86_64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp314-cp314-macosx_10_12_x86_64.whl

- Upload date:

- Size: 170.2 MB

- Tags: CPython 3.14, macOS 10.12+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 4cac0e0721425342e1b10cbddfb009a7fdc75e0a79cfd0451bffc29bee0574c1 |
| MD5 | cf483f3c30a827ae4cf16b040318e2a1 |
| BLAKE2b-256 | 0e53a7f52e45e7a71c3a749cc77fbc414c8948108ff406c9059197fdc77779e8 |

File details

Details for the file lingua_language_detector-2.2.0-cp313-cp313-win_arm64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp313-cp313-win_arm64.whl

- Upload date:

- Size: 170.0 MB

- Tags: CPython 3.13, Windows ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 9fc04412287d254982612dafe2dae2073e1feeedffbee8d4ddff4b961218cb69 |
| MD5 | c563cf580a8935f4bf86efd3bb9aaf43 |
| BLAKE2b-256 | 81e74ed636d7d7e4605ce170ce70a566b45f70eed79ec9cdb5c9bc821892c1cd |

File details

Details for the file lingua_language_detector-2.2.0-cp313-cp313-win_amd64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp313-cp313-win_amd64.whl

- Upload date:

- Size: 170.1 MB

- Tags: CPython 3.13, Windows x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 250517a581cfa098a451299aa913e9756aee9f738b0b248259fc634eeffeb2cf |
| MD5 | 84715bff8ac8220b549231197a9065d1 |
| BLAKE2b-256 | 35a6e087ba2c47eb86899020915fb6bf47b0f956eda9c61cabc742bc832c1b3c |

File details

Details for the file lingua_language_detector-2.2.0-cp313-cp313-musllinux_1_2_x86_64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp313-cp313-musllinux_1_2_x86_64.whl

- Upload date:

- Size: 170.6 MB

- Tags: CPython 3.13, musllinux: musl 1.2+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | c0961ec8f616897f5e91c7c3a5422d2d3aa48493954f2c425f2fca522a253916 |
| MD5 | b33b0db0af509053073c70a0b42be83d |
| BLAKE2b-256 | f47124d9d151ccf35cd001d8570d22dc1d305e632eee7ff1252764be8fb081f3 |

File details

Details for the file lingua_language_detector-2.2.0-cp313-cp313-musllinux_1_2_aarch64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp313-cp313-musllinux_1_2_aarch64.whl

- Upload date:

- Size: 170.5 MB

- Tags: CPython 3.13, musllinux: musl 1.2+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 126899985870ada7f9630fb984a0763741bb7fde42adfc077e6f415e49e407b5 |
| MD5 | bb02591f661913b6539fe2c641fb48fa |
| BLAKE2b-256 | 21907f0f4c131cd0686c0f77157545b599b5023b00fa44ffb4a1c24a4c861cb3 |

File details

Details for the file lingua_language_detector-2.2.0-cp313-cp313-manylinux_2_17_x86_64.manylinux2014_x86_64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp313-cp313-manylinux_2_17_x86_64.manylinux2014_x86_64.whl

- Upload date:

- Size: 170.3 MB

- Tags: CPython 3.13, manylinux: glibc 2.17+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 4fbf936b47ef4fdd7043ebb4159d4a5f1c3648028e19d6e3c60464abc5f5e195 |
| MD5 | 50c709324b1af098a368ac956e34ef45 |
| BLAKE2b-256 | 53a5b93c76728294e4eaf01f442fa7e9da913963d638915ce0aafd0220bc9902 |

File details

Details for the file lingua_language_detector-2.2.0-cp313-cp313-manylinux_2_17_aarch64.manylinux2014_aarch64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp313-cp313-manylinux_2_17_aarch64.manylinux2014_aarch64.whl

- Upload date:

- Size: 170.3 MB

- Tags: CPython 3.13, manylinux: glibc 2.17+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 4ed86c6e803a585853298623d9ee683bd08bcd15c2543c045ef059a090823fc8 |
| MD5 | cfdfb4f19f835e3e67b21f933c2f7c66 |
| BLAKE2b-256 | 2588ad5e9b8b21f4c5eeecd5d08539bf6ec869df87a491d779b8756501db6a71 |

File details

Details for the file lingua_language_detector-2.2.0-cp313-cp313-macosx_11_0_arm64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp313-cp313-macosx_11_0_arm64.whl

- Upload date:

- Size: 172.5 MB

- Tags: CPython 3.13, macOS 11.0+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 0bb20bfe60b64012cd71f85bfdf5c79fc2e916590a9f69c3a9b01a44fbfd2244 |
| MD5 | 19a11249af8eb12e23f250be42bf328c |
| BLAKE2b-256 | 6ecd248053f61de66faa866bb4eb7190af1c2e67fa363f8193444a5aee5c1706 |

File details

Details for the file lingua_language_detector-2.2.0-cp313-cp313-macosx_10_12_x86_64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp313-cp313-macosx_10_12_x86_64.whl

- Upload date:

- Size: 170.2 MB

- Tags: CPython 3.13, macOS 10.12+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | d52dc5a54bb245b1d9df54620810e7b72a247f8ca4276659a9893fe415faff37 |
| MD5 | 5a6ead0195869d653355ed8a44d15e8b |
| BLAKE2b-256 | 0cd3b4647a233d4d8ef411519c7259c5b607b20568cb993d976319ae3f260eea |

File details

Details for the file lingua_language_detector-2.2.0-cp312-cp312-win_arm64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp312-cp312-win_arm64.whl

- Upload date:

- Size: 170.0 MB

- Tags: CPython 3.12, Windows ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 581bfb3405dd99863b04753812021f2554545c4c2783d0faa41af44535c759a1 |
| MD5 | 667a8a496ae56a963fd5cfd260735f9e |
| BLAKE2b-256 | 45a8197f06b3d2da6ffb580d20e0b46181ef6d34fd750c7930ec04b322767cfb |

File details

Details for the file lingua_language_detector-2.2.0-cp312-cp312-win_amd64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp312-cp312-win_amd64.whl

- Upload date:

- Size: 170.1 MB

- Tags: CPython 3.12, Windows x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 98baee0c51e31d0b54a92a4795aca6ca7069de9b99dc783e3456a91abd2ff692 |
| MD5 | 46a865b3b26cd196f1fdea73216e4072 |
| BLAKE2b-256 | 9748bb581e0deda48169a11d25467d9fbe3ef4792b4d5363144bbea08caa9dd2 |

File details

Details for the file lingua_language_detector-2.2.0-cp312-cp312-musllinux_1_2_x86_64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp312-cp312-musllinux_1_2_x86_64.whl

- Upload date:

- Size: 170.6 MB

- Tags: CPython 3.12, musllinux: musl 1.2+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 362fbbc21da68c778f3521f42309d1ed6f54d4bd554a5701bf165419be9cc64b |
| MD5 | 019c3febab8cb6f2646a0e3534518ab7 |
| BLAKE2b-256 | e3f2ef84cc7f57854838f9b64f1b8aae07ee56827b5538b9609acb72aa6832e5 |

File details

Details for the file lingua_language_detector-2.2.0-cp312-cp312-musllinux_1_2_aarch64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp312-cp312-musllinux_1_2_aarch64.whl

- Upload date:

- Size: 170.5 MB

- Tags: CPython 3.12, musllinux: musl 1.2+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | cd54fe6505b671c0d1e33bf0436e8e9308e8802112eb5ba6fb37d2c5459ab685 |
| MD5 | c1653f1022545b488ce363968dff1821 |
| BLAKE2b-256 | 47b5e6d09c3cf08580088cc85807b1b28ef8b77d8c62d50ed56144a565205787 |

File details

Details for the file lingua_language_detector-2.2.0-cp312-cp312-manylinux_2_17_x86_64.manylinux2014_x86_64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp312-cp312-manylinux_2_17_x86_64.manylinux2014_x86_64.whl

- Upload date:

- Size: 170.3 MB

- Tags: CPython 3.12, manylinux: glibc 2.17+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 63d99c7570ba09525f1702e4e4b2362f8f1f7e0a0fba93a3a53d3f322e00659d |
| MD5 | 1646364bf3a050de79c294c7ec7bd082 |
| BLAKE2b-256 | 44a07322a0c50db8f82836ef40b14986dfcfad17bd837bfa5782562fec143bf0 |

File details

Details for the file lingua_language_detector-2.2.0-cp312-cp312-manylinux_2_17_aarch64.manylinux2014_aarch64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp312-cp312-manylinux_2_17_aarch64.manylinux2014_aarch64.whl

- Upload date:

- Size: 170.3 MB

- Tags: CPython 3.12, manylinux: glibc 2.17+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 9ac7453c08ab9699706a92f15480ae3d4b66761c15e1577a1ba31d1635780f3a |
| MD5 | a1ade16932c25e0742567b5a57cf35db |
| BLAKE2b-256 | c964b6212bc0eff72d76dd04649c13452318eb2abeafc397ac597242e47e3e07 |

File details

Details for the file lingua_language_detector-2.2.0-cp312-cp312-macosx_11_0_arm64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp312-cp312-macosx_11_0_arm64.whl

- Upload date:

- Size: 172.5 MB

- Tags: CPython 3.12, macOS 11.0+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 2fe367f7c112a0445218407e259338a88af770d5c84a550c20ebe11d5053f03d |
| MD5 | 05655f89413d03deffac6d84d8b679e7 |
| BLAKE2b-256 | 290532568a1afe29e8d2060e4ffefd9d1a67aa2e423db3ab4abbf4f604c81b39 |

File details

Details for the file lingua_language_detector-2.2.0-cp312-cp312-macosx_10_12_x86_64.whl.

File metadata

- Download URL: lingua_language_detector-2.2.0-cp312-cp312-macosx_10_12_x86_64.whl

- Upload date:

- Size: 170.2 MB

- Tags: CPython 3.12, macOS 10.12+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: maturin/1.12.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | df29270e5eef3c597e725e11eee778b7111412faab466d390d22ab1d5293bbb8 |
| MD5 | fbb726f0249759763a3991fc27f73efc |
| BLAKE2b-256 | c7c569636ba575cca9f507dd08ffdd4a2d084fdb193aa8e4246a5335bc077678 |
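The published SHA256 digests can be used to check that a downloaded wheel arrived intact. What follows is a minimal sketch using only Python's standard `hashlib` and `pathlib`, assuming the wheel has already been downloaded into the working directory. The helper names `sha256_of_file` and `verify_wheel` are hypothetical illustrations, not part of Lingua's API.

```python
import hashlib
from pathlib import Path


def sha256_of_file(path, chunk_size=1 << 20):
    """Return the hex SHA256 digest of a file, read in 1 MiB chunks.

    Hypothetical helper (not part of Lingua). Reading in chunks keeps
    memory use low even for wheels of roughly 170 MB.
    """
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_wheel(path, expected_sha256):
    """Return True if the file's SHA256 matches the published digest.

    The expected digest is normalized (whitespace stripped, lowercased)
    because hexdigest() always returns lowercase hex.
    """
    return sha256_of_file(path) == expected_sha256.strip().lower()


# Example: the CPython 3.12 macOS x86-64 wheel and its published SHA256.
wheel = Path("lingua_language_detector-2.2.0-cp312-cp312-macosx_10_12_x86_64.whl")
expected = "df29270e5eef3c597e725e11eee778b7111412faab466d390d22ab1d5293bbb8"

if wheel.exists():  # assumes the wheel was downloaded to the working directory
    print("OK" if verify_wheel(wheel, expected) else "MISMATCH")
```

pip can enforce the same check at install time in its hash-checking mode, by appending `--hash=sha256:<digest>` to the requirement line in a requirements file.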