A simple CLI to chat with LLM models


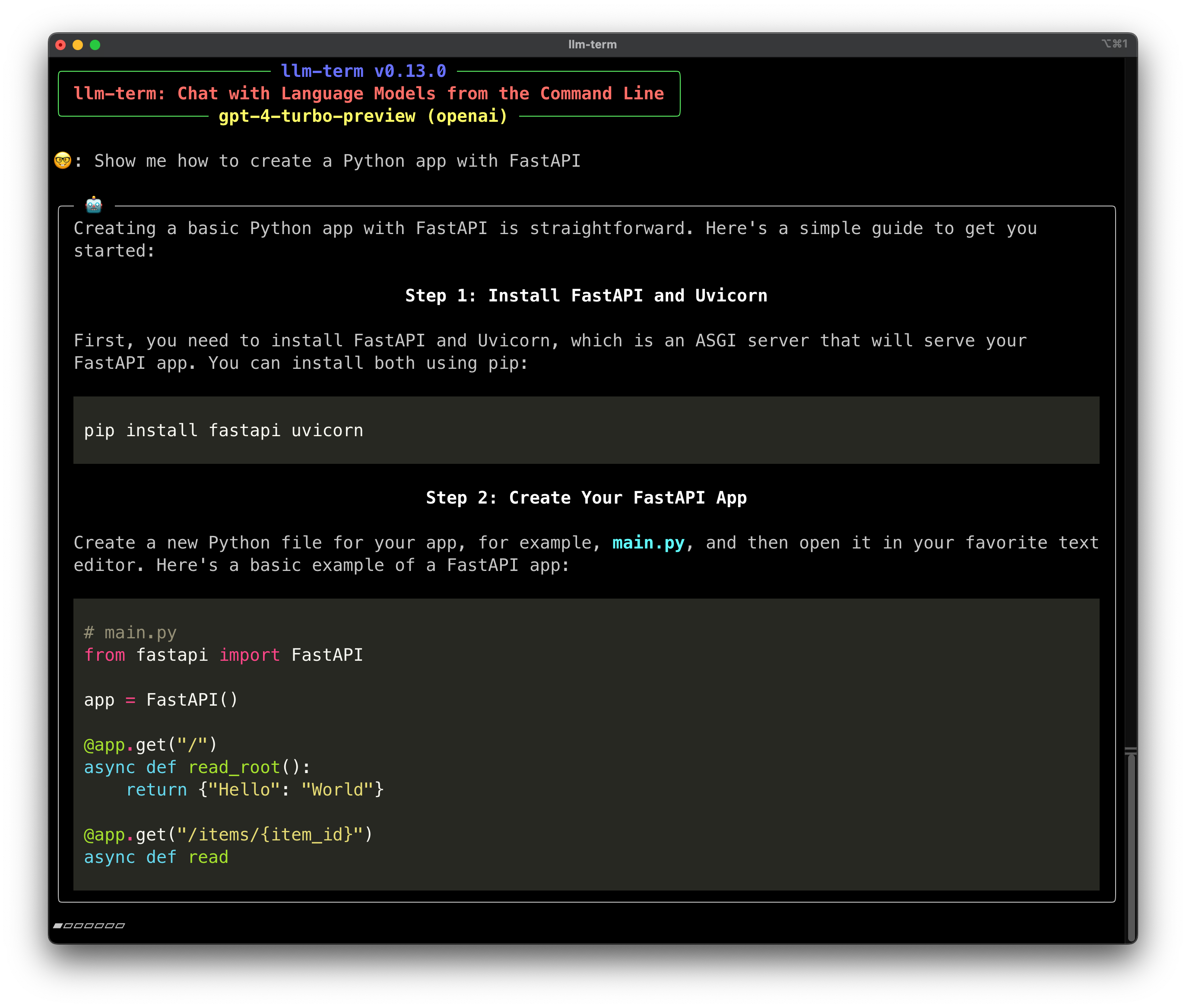

llm-term

Chat with LLM models directly from the command line.

Screen Recording

https://github.com/juftin/llm-term/assets/49741340/c305f636-dfcf-4d6f-884f-81d378cf0684

Check Out the Docs

Installation

pipx install llm-term

Install with Extras

You can install llm-term with extra dependencies for different providers:

pipx install "llm-term[anthropic]"

pipx install "llm-term[mistralai]"

Or, you can install all the extras:

pipx install "llm-term[all]"

Usage

Then, you can chat with the model directly from the command line:

llm-term

llm-term works with multiple LLM providers, but by default it uses OpenAI. Most providers require extra packages to be installed, so make sure you read the Providers section below. To use a different provider, set the --provider / -p flag:

llm-term --provider anthropic

If your provider requires an API key, make sure it is set as an environment variable (it can also be set via the --api-key / -k flag in the CLI). If your provider uses a specific environment variable for its API key, such as OPENAI_API_KEY, that variable will be detected automatically.

export LLM_API_KEY="xxxxxxxxxxxxxx"
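The paragraph above does not say which source wins when a key is available from more than one place. The Python sketch below shows one plausible lookup order. The helper `resolve_api_key` and its precedence (the flag, then `LLM_API_KEY`, then the provider's own variable) are assumptions for illustration, not llm-term's actual code; the provider-specific variable names come from the Providers section.

```python
import os
from typing import Mapping, Optional

# Provider-specific key variables named in the Providers section.
# Ollama runs locally and needs no key, so it has no entry here.
PROVIDER_KEY_VARS = {
    "openai": "OPENAI_API_KEY",
    "anthropic": "ANTHROPIC_API_KEY",
    "mistralai": "MISTRAL_API_KEY",
}


def resolve_api_key(
    provider: str,
    cli_key: Optional[str] = None,
    env: Optional[Mapping[str, str]] = None,
) -> Optional[str]:
    """Hypothetical helper: return the first non-empty API key found, or None.

    ASSUMED precedence (not confirmed by llm-term's docs):
    1. the --api-key / -k flag
    2. the generic LLM_API_KEY variable
    3. the provider-specific variable, e.g. OPENAI_API_KEY
    """
    env = os.environ if env is None else env
    if cli_key:
        return cli_key
    if env.get("LLM_API_KEY"):
        return env["LLM_API_KEY"]
    var = PROVIDER_KEY_VARS.get(provider)
    return env.get(var) if var else None
```

For example, `resolve_api_key("anthropic", env={"ANTHROPIC_API_KEY": "..."})` returns the Anthropic key. Under this assumed order, a leftover `LLM_API_KEY` would shadow the provider-specific variable, so it is worth checking which variables are set if a key seems to be ignored.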

Optionally, you can set a custom model. With the default OpenAI provider, llm-term uses gpt-4o (the model can also be set via the --model / -m flag in the CLI):

export LLM_MODEL="gpt-4o-mini"
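A minimal sketch of how the model name could be chosen, using the per-provider defaults listed in the Providers section. The function `resolve_model` and its precedence (`--model` / `-m`, then `LLM_MODEL`, then the provider default) are hypothetical and not taken from llm-term's source.

```python
import os
from typing import Mapping, Optional

# Default models as listed in the Providers section of this README.
DEFAULT_MODELS = {
    "openai": "gpt-4o",
    "anthropic": "claude-3-5-sonnet-20240620",
    "mistralai": "mistral-small-latest",
    "ollama": "llama3",
}


def resolve_model(
    provider: str = "openai",
    cli_model: Optional[str] = None,
    env: Optional[Mapping[str, str]] = None,
) -> str:
    """Hypothetical helper. ASSUMED order: flag, then LLM_MODEL, then provider default."""
    env = os.environ if env is None else env
    return cli_model or env.get("LLM_MODEL") or DEFAULT_MODELS[provider]
```

If the order really works this way, a global `LLM_MODEL="gpt-4o-mini"` would also be used after switching to `--provider anthropic`, so passing `--model` per invocation may be safer when you use several providers.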

Want to start the conversation directly from the command line? No problem, just pass your prompt to llm-term:

llm-term show me python code to detect a palindrome
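In this example the shell passes each unquoted word as a separate argument, so the CLI has to join them back into a single prompt. The sketch below illustrates that idea with Python's `argparse`, using the flags documented in this README; llm-term's real parser may differ, and the `prompt` argument name and `opening_prompt` helper are assumptions. Because shells expand characters such as `?` and `*` and treat quotes specially, wrapping the prompt in quotes is a safe habit.

```python
import argparse
from typing import List, Optional


def build_parser() -> argparse.ArgumentParser:
    """Hypothetical stand-in for llm-term's parser, using flags from this README."""
    parser = argparse.ArgumentParser(prog="llm-term")
    parser.add_argument("-p", "--provider", default="openai")
    parser.add_argument("-m", "--model")
    parser.add_argument("-k", "--api-key")
    parser.add_argument("-s", "--system")
    # Any remaining words form the opening prompt (assumed behaviour).
    parser.add_argument("prompt", nargs="*")
    return parser


def opening_prompt(argv: List[str]) -> Optional[str]:
    """Join positional words into one prompt; None means start with an empty chat."""
    args = build_parser().parse_args(argv)
    return " ".join(args.prompt) or None
```

For instance, `opening_prompt(["-p", "anthropic", "hello", "there"])` yields `"hello there"` while the provider option is parsed separately.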

You can also set a custom system prompt. llm-term defaults to a reasonable prompt for chatting with the model, but you can set your own (this can also be set via the --system / -s flag in the CLI):

export LLM_SYSTEM_MESSAGE="You are a helpful assistant who talks like a pirate."
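OpenAI-style chat APIs take the conversation as a list of role-tagged messages, with the system prompt first (Anthropic's Messages API instead takes the system prompt as a separate parameter). The sketch below shows where a custom `LLM_SYSTEM_MESSAGE` would sit in an OpenAI-style list. The helper `start_history`, its precedence, and the fallback text are hypothetical; llm-term's real default prompt is not reproduced here.

```python
import os
from typing import Dict, List, Mapping, Optional

Message = Dict[str, str]

# Placeholder only: llm-term ships its own default prompt, not this text.
FALLBACK_SYSTEM_MESSAGE = "You are a helpful assistant."


def start_history(
    first_prompt: Optional[str] = None,
    cli_system: Optional[str] = None,
    env: Optional[Mapping[str, str]] = None,
) -> List[Message]:
    """Build an OpenAI-style message list with the system prompt first.

    ASSUMED order: --system / -s, then LLM_SYSTEM_MESSAGE, then the fallback.
    """
    env = os.environ if env is None else env
    system = cli_system or env.get("LLM_SYSTEM_MESSAGE") or FALLBACK_SYSTEM_MESSAGE
    history: List[Message] = [{"role": "system", "content": system}]
    if first_prompt:
        history.append({"role": "user", "content": first_prompt})
    return history
```

Every later user and assistant turn would be appended after the system message, so the custom prompt shapes the whole conversation rather than only the first reply.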

Providers

OpenAI

By default, llm-term uses OpenAI as your LLM provider. The default model is gpt-4o, and you can use the OPENAI_API_KEY environment variable to set your API key.

Anthropic

You can request API access from Anthropic. The default model is claude-3-5-sonnet-20240620, and you can use the ANTHROPIC_API_KEY environment variable. To use Anthropic as your provider, you must install the anthropic extra.

pipx install "llm-term[anthropic]"

llm-term --provider anthropic

MistralAI

You can request API access from MistralAI. The default model is mistral-small-latest, and you can use the MISTRAL_API_KEY environment variable. To use MistralAI as your provider, install the mistralai extra.

pipx install "llm-term[mistralai]"

llm-term --provider mistralai

Ollama

Ollama is an open-source LLM provider. Its models run locally on your machine, so you don't need to worry about API keys or rate limits. The default model is llama3, and you can see which models are available on the Ollama website. Make sure to download Ollama first, then pull the model you want to use:

ollama pull llama3

llm-term --provider ollama --model llama3
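Before running `llm-term --provider ollama`, it can help to confirm that the Ollama server is running and the model has been pulled. The sketch below queries Ollama's local REST API (`GET /api/tags`, which lists pulled models) on its default port 11434. The helper names are hypothetical, and the URL assumes a default local install.

```python
import json
import urllib.request
from typing import Any, Dict, List

# Default address of a local Ollama server (assumption: unchanged host/port).
OLLAMA_TAGS_URL = "http://localhost:11434/api/tags"


def pulled_models(payload: Dict[str, Any]) -> List[str]:
    """Extract model names (e.g. 'llama3:latest') from an /api/tags response."""
    return [m["name"] for m in payload.get("models", [])]


def has_model(names: List[str], wanted: str) -> bool:
    """Treat 'llama3' as 'llama3:latest', since ':latest' is Ollama's implied tag."""
    target = wanted if ":" in wanted else f"{wanted}:latest"
    return target in names or wanted in names


def fetch_pulled_models(url: str = OLLAMA_TAGS_URL, timeout: float = 2.0) -> List[str]:
    """Ask the local Ollama server for its models; raises URLError if it is not running."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return pulled_models(json.load(resp))
```

With the server running, `has_model(fetch_pulled_models(), "llama3")` should return `True` once `ollama pull llama3` has finished.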


Download files


Source Distribution: llm_term-0.14.0.tar.gz

Built Distribution: llm_term-0.14.0-py3-none-any.whl


File details

Details for the file llm_term-0.14.0.tar.gz.

File metadata

- Download URL: llm_term-0.14.0.tar.gz

- Upload date:

- Size: 153.0 kB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/5.1.0 CPython/3.12.4

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 50cf719a566f136674532995e8d9ef4db9ed314ada29383765e394809a3eaa2c |
| MD5 | ade6a9a0e6ca1d4cee2f0fb025933738 |
| BLAKE2b-256 | 327731278afc8d4744d07c2b1377d539fb874b542a9782b50d792f5dba59d50e |

File details

Details for the file llm_term-0.14.0-py3-none-any.whl.

File metadata

- Download URL: llm_term-0.14.0-py3-none-any.whl

- Upload date:

- Size: 9.1 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/5.1.0 CPython/3.12.4

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | cfad27a25fba9d782a4542f6e15a27ccc1461e5af87c0ac3f0a93fb75dd3cb3b |
| MD5 | d05ef528ae57c03cecd5ee09d36f5cbe |
| BLAKE2b-256 | 456d43715bea59894a2d193c4f03a01fef294551f0b8c741f5d4f9eca62612b9 |
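The published digests let you check a downloaded file before installing it. A minimal sketch using Python's standard `hashlib` module; the helper names are hypothetical, and the expected values are the SHA256 digests listed for the 0.14.0 source distribution and wheel.

```python
import hashlib
from pathlib import Path

# SHA256 digests copied from the "File hashes" tables for release 0.14.0.
PUBLISHED_SHA256 = {
    "llm_term-0.14.0.tar.gz": "50cf719a566f136674532995e8d9ef4db9ed314ada29383765e394809a3eaa2c",
    "llm_term-0.14.0-py3-none-any.whl": "cfad27a25fba9d782a4542f6e15a27ccc1461e5af87c0ac3f0a93fb75dd3cb3b",
}


def sha256_of(path: Path, chunk_size: int = 1 << 16) -> str:
    """Hash the file in chunks so large downloads need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published(path: Path) -> bool:
    """True only if the file name is known and its SHA256 equals the published digest."""
    expected = PUBLISHED_SHA256.get(path.name)
    return expected is not None and sha256_of(path) == expected
```

pip also has a built-in hash-checking mode (`--require-hashes`) that performs this comparison automatically during installation.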