LMU metapackage for installing various LMU implementations

Project description

Legendre Memory Units: Continuous-Time Representation in Recurrent Neural Networks

The LMU is a novel memory cell for recurrent neural networks that dynamically maintains information across long windows of time using relatively few resources. It has been shown to perform as well as standard LSTMs or other RNN-based models on a variety of tasks, generally with fewer internal parameters (see the paper cited below for more details). On the Permuted Sequential MNIST (psMNIST) task in particular, it has been shown to outperform the state-of-the-art results reported at the time of publication. See the note below for instructions on how to get access to this model.

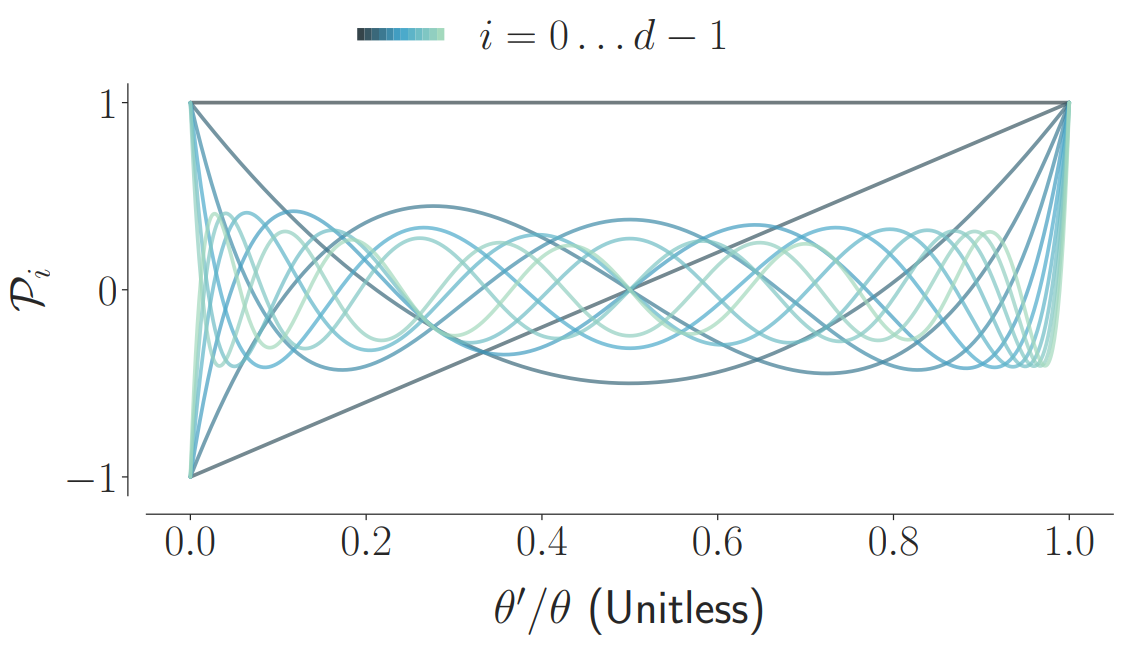

The LMU is mathematically derived to orthogonalize its continuous-time history. It does so by solving d coupled ordinary differential equations (ODEs) whose phase space linearly maps onto sliding windows of time via the Legendre polynomials up to degree d − 1 (the example for d = 12 is shown below).

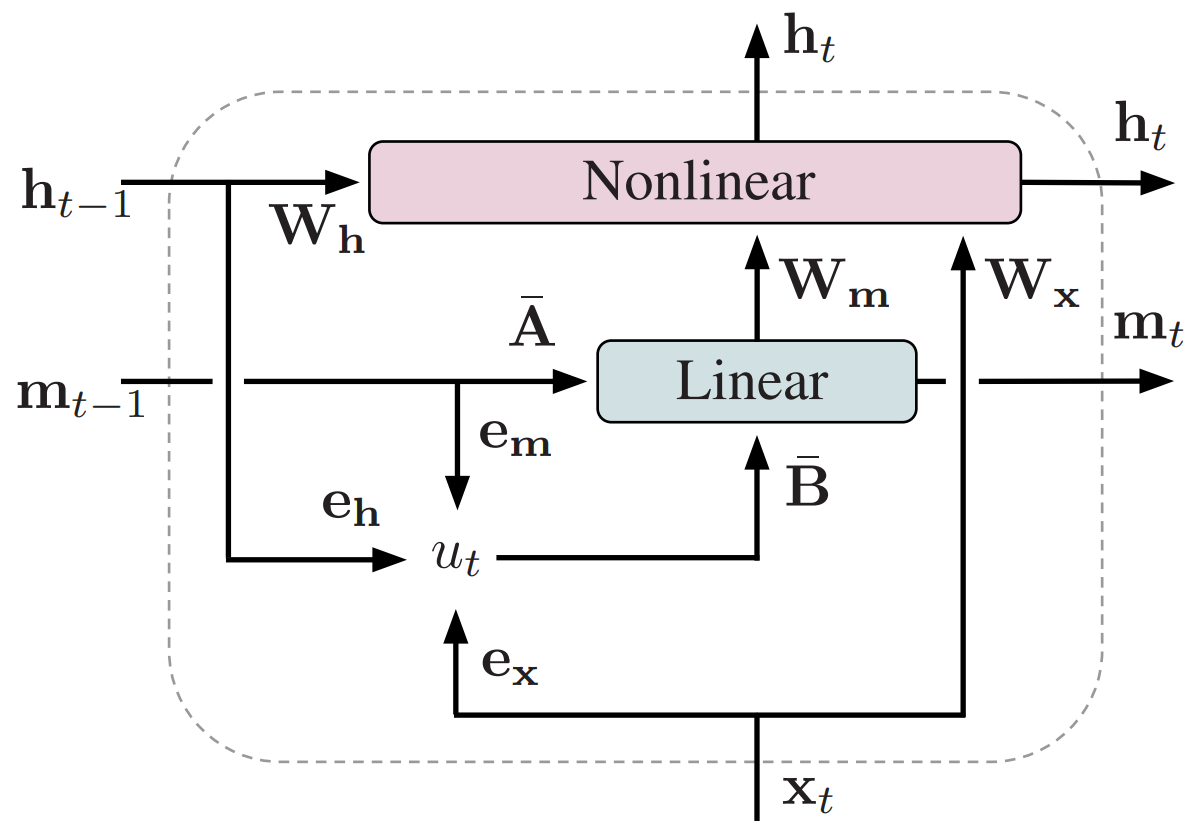
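A minimal NumPy/SciPy sketch of these ODEs, assuming the closed form given in the LMU paper cited below: the memory obeys θ dm/dt = A m(t) + B u(t), with A_ij = (2i + 1)(−1) for i < j, A_ij = (2i + 1)(−1)^(i−j+1) for i ≥ j, and B_i = (2i + 1)(−1)^i, for i, j = 0, ..., d − 1. The window is read back out as u(t − θ′) ≈ Σ_i P̃_i(θ′/θ) m_i(t), where P̃_i(r) = P_i(2r − 1) is the shifted Legendre polynomial of degree i. The helper names `lmu_continuous` and `legendre_readout` are hypothetical and are not part of KerasLMU's API.

```python
import numpy as np
from scipy.special import eval_legendre


def lmu_continuous(d):
    """Continuous-time LMU matrices (A, B) for memory order d.

    Closed form from the LMU paper; theta is applied separately,
    since the ODE is theta * dm/dt = A m + B u.
    """
    q = np.arange(d, dtype=np.float64)
    i, j = np.meshgrid(q, q, indexing="ij")   # i indexes rows, j indexes columns
    scale = (2 * q + 1)[:, None]              # (2i + 1) for each row
    A = np.where(i < j, -1.0, (-1.0) ** (i - j + 1)) * scale
    B = ((-1.0) ** q)[:, None] * scale        # column vector, shape (d, 1)
    return A, B


def legendre_readout(d, r):
    """Weights w such that u(t - r * theta) is approximately w @ m(t), 0 <= r <= 1.

    Uses the shifted Legendre polynomials P~_i(r) = P_i(2r - 1).
    """
    return np.array([eval_legendre(i, 2.0 * r - 1.0) for i in range(d)])


A, B = lmu_continuous(2)
# For d = 2: A = [[-1, -1], [3, -3]] and B = [[1], [-3]]
w_now = legendre_readout(4, 0.0)     # [1, -1, 1, -1]: decodes the current input
w_oldest = legendre_readout(4, 1.0)  # [1, 1, 1, 1]: decodes the input from theta ago
```

As a sanity check on the sign conventions, for d = 2 the full-window readout (r = 1) gives the transfer function (6 − 2θs)/(θ²s² + 4θs + 6) from u to the decoded output, which is the [1/2] Padé approximant of a pure delay of θ.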

A single LMU cell expresses the following computational graph, which takes in an input signal, x, and couples an optimal linear memory, m, with a nonlinear hidden state, h. By default, this coupling is trained via backpropagation, while the dynamics of the memory remain fixed.

The discretized A and B matrices are initialized according to the LMU’s mathematical derivation with respect to some chosen window length, θ. Backpropagation can be used to learn this time-scale, or fine-tune A and B, if necessary.
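The discretization step can be sketched as follows, assuming zero-order-hold (ZOH) discretization with a step of one sample and θ measured in samples; both are assumptions for illustration, and `discretize_zoh` is a hypothetical helper. ZOH is computed with the standard augmented matrix exponential, and the check at the end feeds a slow sine wave into the discretized memory and decodes the input from θ steps earlier using the shifted Legendre readout P_i(2r − 1) at r = 1.

```python
import numpy as np
from scipy.linalg import expm
from scipy.special import eval_legendre


def lmu_continuous(d):
    """Continuous-time LMU (A, B) for order d, as given in the LMU paper."""
    q = np.arange(d, dtype=np.float64)
    i, j = np.meshgrid(q, q, indexing="ij")
    scale = (2 * q + 1)[:, None]
    A = np.where(i < j, -1.0, (-1.0) ** (i - j + 1)) * scale
    B = ((-1.0) ** q)[:, None] * scale
    return A, B


def discretize_zoh(d, theta, dt=1.0):
    """Zero-order-hold discretization of theta * dm/dt = A m + B u.

    Returns (Abar, Bbar) so that m[k+1] = Abar @ m[k] + Bbar * u[k],
    assuming u is held constant over each step of length dt.
    """
    A, B = lmu_continuous(d)
    A, B = A / theta, B / theta
    # expm([[A, B], [0, 0]] * dt) = [[Abar, Bbar], [0, I]]
    M = np.zeros((d + 1, d + 1))
    M[:d, :d] = A
    M[:d, d:] = B
    E = expm(M * dt)
    return E[:d, :d], E[:d, d:]


# Feed a slow sine wave into the memory, then decode the input theta steps ago.
d, theta = 12, 100
Abar, Bbar = discretize_zoh(d, theta)
u = np.sin(2 * np.pi * np.arange(3000) / 1000.0)
m = np.zeros((d, 1))
for k in range(len(u)):
    m = Abar @ m + Bbar * u[k]
delay_weights = np.array([eval_legendre(i, 1.0) for i in range(d)])  # r = 1
estimate = float(delay_weights @ m.ravel())
print(estimate, u[len(u) - theta])  # the two values should nearly agree
```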

Both the kernels, W, and the encoders, e, are learned. Intuitively, the kernels learn to compute nonlinear functions across the memory, while the encoders learn to project the relevant information into the memory (see the paper for details).
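A single step of the cell can be sketched in NumPy using the update equations from the LMU paper: u_t = e_xᵀx_t + e_hᵀh_{t−1} + e_mᵀm_{t−1}, then m_t = Ā m_{t−1} + B̄ u_t, then h_t = f(W_x x_t + W_h h_{t−1} + W_m m_t). This sketch assumes a scalar memory input, f = tanh, ZOH-discretized Ā and B̄, and random weights standing in for the learned encoders and kernels; `lmu_cell_step`, `lmu_discrete`, and the layer sizes are hypothetical and are not KerasLMU's implementation.

```python
import numpy as np
from scipy.linalg import expm


def lmu_discrete(d, theta):
    """ZOH-discretized LMU memory matrices (step of one sample)."""
    q = np.arange(d, dtype=np.float64)
    i, j = np.meshgrid(q, q, indexing="ij")
    scale = (2 * q + 1)[:, None] / theta
    A = np.where(i < j, -1.0, (-1.0) ** (i - j + 1)) * scale
    B = ((-1.0) ** q)[:, None] * scale
    M = np.zeros((d + 1, d + 1))
    M[:d, :d], M[:d, d:] = A, B
    E = expm(M)
    return E[:d, :d], E[:d, d]  # Bbar returned as a 1-D vector of length d


def lmu_cell_step(x, h, m, p):
    """One LMU step; p holds the fixed memory matrices and the weights."""
    # Encoders project input, hidden state and memory onto the scalar u_t.
    u = p["e_x"] @ x + p["e_h"] @ h + p["e_m"] @ m
    # Linear memory update with the fixed, discretized dynamics.
    m_new = p["Abar"] @ m + p["Bbar"] * u
    # Kernels compute a nonlinear function of input, hidden state and new memory.
    h_new = np.tanh(p["W_x"] @ x + p["W_h"] @ h + p["W_m"] @ m_new)
    return h_new, m_new


rng = np.random.default_rng(0)
n_in, n_hidden, d, theta = 3, 8, 6, 20.0  # hypothetical sizes
Abar, Bbar = lmu_discrete(d, theta)
p = {
    "Abar": Abar, "Bbar": Bbar,
    "e_x": rng.normal(size=n_in), "e_h": rng.normal(size=n_hidden),
    "e_m": rng.normal(size=d) * 0.1,
    "W_x": rng.normal(size=(n_hidden, n_in)) * 0.1,
    "W_h": rng.normal(size=(n_hidden, n_hidden)) * 0.1,
    "W_m": rng.normal(size=(n_hidden, d)) * 0.1,
}
h, m = np.zeros(n_hidden), np.zeros(d)
for x in rng.normal(size=(50, n_in)):  # a random input sequence
    h, m = lmu_cell_step(x, h, m, p)
print(h.shape, m.shape)  # (8,) (6,)
```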

LMU implementations

KerasLMU: Implementation of LMUs in Keras (this is the original LMU implementation, which used to be referred to generically as the LMU repo).

Examples

Citation

@inproceedings{voelker2019lmu,
  title={Legendre Memory Units: Continuous-Time Representation in Recurrent Neural Networks},
  author={Aaron R. Voelker and Ivana Kaji\'c and Chris Eliasmith},
  booktitle={Advances in Neural Information Processing Systems},
  pages={15544--15553},
  year={2019}
}

Release history

0.4.0 (November 10, 2021)

Changed

Updated to use KerasLMU 0.4. (#2)

0.3.0 (November 6, 2020)

The version of this package as it existed prior to 0.3.0 has been renamed to keras-lmu. This package will continue as a metapackage for installing multiple LMU implementations.

Download files

File details

Details for the file lmu-0.4.0.tar.gz.

File metadata

- Download URL: lmu-0.4.0.tar.gz

- Upload date:

- Size: 7.8 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.6.0 importlib_metadata/4.8.2 pkginfo/1.7.1 requests/2.26.0 requests-toolbelt/0.9.1 tqdm/4.62.3 CPython/3.8.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 382393a4bd7093921ad60eec7bd9b67e40e253f026489e9bb01afb6e1721ebfb |
| MD5 | 01b77f15df780bfb7e2bacd6bb389090 |
| BLAKE2b-256 | 3d7f68f54c2b461457d64390e98c9b6c2e770d8023bc95e2878041c16da52270 |

File details

Details for the file lmu-0.4.0-py3-none-any.whl.

File metadata

- Download URL: lmu-0.4.0-py3-none-any.whl

- Upload date:

- Size: 5.5 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.6.0 importlib_metadata/4.8.2 pkginfo/1.7.1 requests/2.26.0 requests-toolbelt/0.9.1 tqdm/4.62.3 CPython/3.8.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 58218e187a4dde75e5dc3961db2d2915e1405ae7b47f988866c9ca3b42310ebb |
| MD5 | ecfd7ed53f05a2758ad39c5a79124fd7 |
| BLAKE2b-256 | 02a9fc8ad151178c0cbc594960d77ff9904de3c44962d14c95ebd571a8312e81 |