Machine Learning Operations Toolkit

Project description

⏳ Tempo: The MLOps Software Development Kit

Vision

Enable data scientists to see a productionised machine learning model within moments, not months. Tempo is easy to work with locally and on Kubernetes, whatever your preferred data science tools.

Overview

Tempo provides a unified interface to multiple MLOps projects that enable data scientists to deploy and productionise machine learning systems.

- Package your trained model artifacts into optimized server runtimes (TensorFlow, PyTorch, scikit-learn, XGBoost, etc.).

- Package custom business logic to production servers.

- Build an inference pipeline of models and orchestration steps.

- Include any custom Python components as needed. Examples:

  - Outlier detectors with Alibi-Detect.

  - Explainers with Alibi-Explain.

- Deploy locally to Docker to test with Docker runtimes.

- Deploy to production on Kubernetes with configurable runtimes.

  - Seldon customers can deploy with Seldon Deploy runtime.

- Run with local unit tests.

- Create stateful services. Examples:

  - Multi-Armed Bandits.

- Extract declarative Kubernetes YAML to follow GitOps workflows.

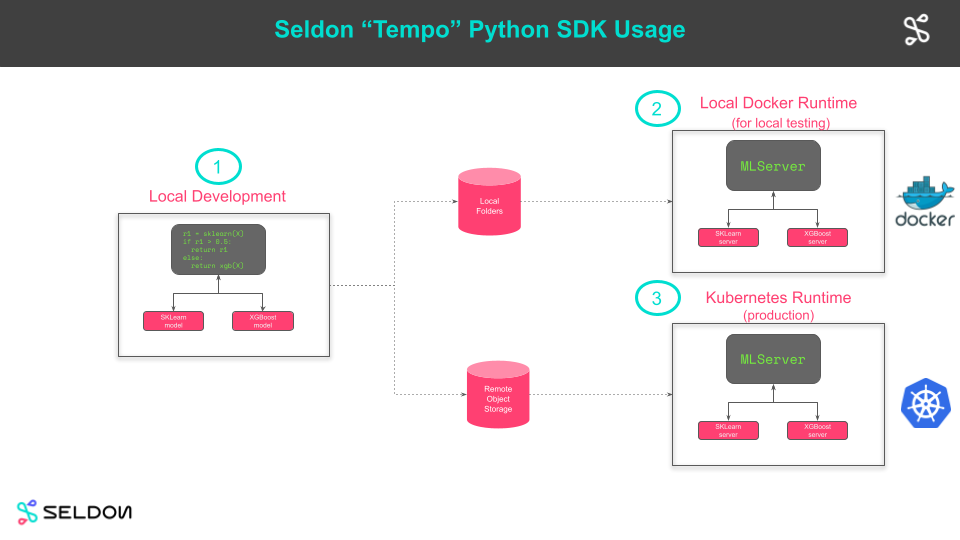

Workflow

- Develop locally.

- Test locally on Docker with production artifacts.

- Push artifacts to a remote bucket store and launch remotely (on Kubernetes).

Motivating Example

Tempo allows you to interact with scalable orchestration engines like Seldon Core and KFServing, and to leverage a broad range of machine learning services like TensorFlow Serving, Triton, MLflow, etc.

    import numpy as np

    # Model, ModelFramework, pipeline, and predictmethod come from the tempo
    # package; their import paths are not shown in the source.

    sklearn_model = Model(
        name="test-iris-sklearn",
        platform=ModelFramework.SKLearn,
        uri="gs://seldon-models/sklearn/iris")

    # Second model used by the pipeline below. The name, platform, and uri are
    # assumed by analogy with the sklearn model.
    xgboost_model = Model(
        name="test-iris-xgboost",
        platform=ModelFramework.XGBoost,
        uri="gs://seldon-models/xgboost/iris")

    @pipeline(name="mypipeline",
              uri="gs://seldon-models/custom",
              models=[sklearn_model, xgboost_model])
    class MyPipeline(object):

        @predictmethod
        def predict(self, payload: np.ndarray) -> np.ndarray:
            res1 = sklearn_model(payload)
            if res1[0][0] > 0.7:
                return res1
            else:
                return xgboost_model(payload)

    my_pipeline = MyPipeline()

    # Deploy only the models into Kubernetes
    my_pipeline.deploy_models()
    my_pipeline.wait_ready()

    # Run the request using the local pipeline function, reaching out to the remote models
    my_pipeline.predict(np.array([[4.9, 3.1, 1.5, 0.2]]))
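Because the routing decision in `predict` is plain Python, it can be exercised in a local unit test without any deployed models. The sketch below is not Tempo code: `route`, `confident_model`, `unsure_model`, and `fallback_model` are hypothetical stand-ins, and plain lists replace `np.ndarray` so the example has no dependencies. It reuses the 0.7 threshold from the pipeline in this section.

```python
from typing import Callable, List

Batch = List[List[float]]
ModelFn = Callable[[Batch], Batch]


def route(payload: Batch, primary: ModelFn, fallback: ModelFn,
          threshold: float = 0.7) -> Batch:
    """Return the primary result if its first score exceeds the threshold,
    otherwise return the fallback model's result."""
    res1 = primary(payload)
    if res1[0][0] > threshold:
        return res1
    return fallback(payload)


# Hypothetical stand-ins for deployed models; each returns fixed scores.
def confident_model(payload: Batch) -> Batch:
    return [[0.9, 0.05, 0.05] for _ in payload]


def unsure_model(payload: Batch) -> Batch:
    return [[0.4, 0.3, 0.3] for _ in payload]


def fallback_model(payload: Batch) -> Batch:
    return [[0.1, 0.8, 0.1] for _ in payload]


sample = [[4.9, 3.1, 1.5, 0.2]]
confident_result = route(sample, confident_model, fallback_model)
fallback_result = route(sample, unsure_model, fallback_model)
```

With the confident stand-in the primary result is returned unchanged; with the unsure one the call falls through to the fallback, which is the same branch structure as `MyPipeline.predict`.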

Productionisation Workflows

Declarative Interface

Even though Tempo provides a dynamic imperative interface, you can convert a pipeline into a declarative representation of its components.

    yaml = my_pipeline.to_k8s_yaml()
    print(yaml)
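For a GitOps workflow, the generated manifest would typically be written to a file that is committed to a repository. This is a minimal sketch, assuming `to_k8s_yaml()` returns the manifest as a string (the source only shows it being printed). `write_manifest`, the `manifests/` directory layout, and the placeholder manifest text are hypothetical.

```python
import tempfile
from pathlib import Path


def write_manifest(manifest: str, repo_dir: Path, name: str) -> Path:
    """Write a manifest string to <repo_dir>/manifests/<name>.yaml and return the path."""
    target_dir = repo_dir / "manifests"
    target_dir.mkdir(parents=True, exist_ok=True)
    target = target_dir / f"{name}.yaml"
    # End the file with exactly one newline so diffs stay clean.
    target.write_text(manifest.rstrip("\n") + "\n", encoding="utf-8")
    return target


# Placeholder text standing in for the output of my_pipeline.to_k8s_yaml().
example_manifest = "kind: Placeholder\nmetadata:\n  name: mypipeline"

with tempfile.TemporaryDirectory() as tmp:
    written = write_manifest(example_manifest, Path(tmp), "mypipeline")
    written_name = written.name
    written_text = written.read_text(encoding="utf-8")
```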

Environment Packaging

You can also manage the environments of your pipelines so that local and production environments are reproducible.

    @pipeline(name="mypipeline",
              uri="gs://seldon-models/custom",
              conda_env="tempo",
              models=[sklearn_model, xgboost_model])
    class MyPipeline(object):
        ...  # predict method as in the motivating example

    my_pipeline = MyPipeline()

    # Save the full conda environment of the pipeline
    my_pipeline.save()

    # Upload the full conda environment
    my_pipeline.upload()

    # Deploy the full pipeline remotely
    my_pipeline.deploy()
    my_pipeline.wait_ready()

    # Run the request to the remote deployed pipeline
    my_pipeline.remote(np.array([[4.9, 3.1, 1.5, 0.2]]))
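The save, upload, deploy, and wait steps run in a fixed order, so they can be wrapped in one helper and checked with a test double. This is a sketch, not part of Tempo: `release`, `Deployable`, and `RecordingPipeline` are hypothetical, and the only assumption about a pipeline object is that it exposes the four methods used in this section (their real return values are not shown in the source).

```python
from typing import List, Protocol


class Deployable(Protocol):
    """Anything exposing the four steps used in this section."""

    def save(self) -> None: ...
    def upload(self) -> None: ...
    def deploy(self) -> None: ...
    def wait_ready(self) -> None: ...


def release(pipeline: Deployable) -> None:
    """Run the packaging and deployment steps in order."""
    pipeline.save()
    pipeline.upload()
    pipeline.deploy()
    pipeline.wait_ready()


class RecordingPipeline:
    """Hypothetical test double that records the order of calls."""

    def __init__(self) -> None:
        self.calls: List[str] = []

    def save(self) -> None:
        self.calls.append("save")

    def upload(self) -> None:
        self.calls.append("upload")

    def deploy(self) -> None:
        self.calls.append("deploy")

    def wait_ready(self) -> None:
        self.calls.append("wait_ready")


fake = RecordingPipeline()
release(fake)
```

Typing the helper against a `Protocol` keeps it independent of any concrete pipeline class, so the ordering can be unit tested locally before anything is uploaded.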

Examples


Download files


File details

Details for the file mlops-tempo-0.1.0.dev7.tar.gz.

File metadata

- Download URL: mlops-tempo-0.1.0.dev7.tar.gz

- Upload date:

- Size: 25.2 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.3.0 pkginfo/1.7.0 requests/2.25.1 setuptools/51.1.2 requests-toolbelt/0.9.1 tqdm/4.56.2 CPython/3.7.8

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 339fd31b188009c376b0a32717e863dcab8bda5b905c74b81cdbda4ed026cc56 |
| MD5 | 3fa1cf48a99799b7e4bea3152806c1e0 |
| BLAKE2b-256 | 5d28fd982898526d1c183cc23d1507da4106179d59f216a8781ff74bb57cf066 |

File details

Details for the file mlops_tempo-0.1.0.dev7-py3-none-any.whl.

File metadata

- Download URL: mlops_tempo-0.1.0.dev7-py3-none-any.whl

- Upload date:

- Size: 40.6 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.3.0 pkginfo/1.7.0 requests/2.25.1 setuptools/51.1.2 requests-toolbelt/0.9.1 tqdm/4.56.2 CPython/3.7.8

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | ef631b618bda54f820ddc2cedeac5a9ecbda2c70d6dd14e1f600caf1a7c565df |
| MD5 | b8b5f907f0c79c2be3ac796d0d22a354 |
| BLAKE2b-256 | 968def9d8cf20fdce73a5dabc16ef802c7c5fbe9e1006701ca10d7d530bdf7bd |
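The published digests can be compared against a downloaded file before installing it. This is a minimal sketch using Python's standard `hashlib`; `digest_of_file` and `matches` are hypothetical helper names, and the sample file is created only to demonstrate the check. BLAKE2b-256 is computed as BLAKE2b with a 32-byte digest.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path


def digest_of_file(path: Path, algorithm: str = "sha256",
                   chunk_size: int = 65536) -> str:
    """Return the hex digest of a file, reading it in chunks."""
    if algorithm == "blake2b-256":
        hasher = hashlib.blake2b(digest_size=32)
    else:
        hasher = hashlib.new(algorithm)
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            hasher.update(chunk)
    return hasher.hexdigest()


def matches(path: Path, expected_hex: str, algorithm: str = "sha256") -> bool:
    """Compare a file's digest with an expected hex string."""
    return hmac.compare_digest(digest_of_file(path, algorithm),
                               expected_hex.lower())


# Demonstration on a throwaway file with known content.
with tempfile.TemporaryDirectory() as tmp:
    sample = Path(tmp) / "sample.bin"
    sample.write_bytes(b"tempo")
    sha_ok = matches(sample, hashlib.sha256(b"tempo").hexdigest())
    blake_ok = matches(
        sample,
        hashlib.blake2b(b"tempo", digest_size=32).hexdigest(),
        "blake2b-256",
    )
    wrong = matches(sample, "0" * 64)
```

For the source distribution listed above, the call would be `matches(Path("mlops-tempo-0.1.0.dev7.tar.gz"), "339fd31b188009c376b0a32717e863dcab8bda5b905c74b81cdbda4ed026cc56")`, with the path adjusted to wherever the archive was saved.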