A unified approach to explain the output of any machine learning model.

SHAP (SHapley Additive exPlanations) is a game theoretic approach to explain the output of any machine learning model. It connects optimal credit allocation with local explanations using the classic Shapley values from game theory and their related extensions (see papers for details and citations).
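To make the credit allocation idea concrete, here is a minimal sketch of exact Shapley values computed by brute-force enumeration of coalitions. It does not use the SHAP package: the toy `model`, the instance `x`, and the all-zeros `baseline` (standing in for "feature missing") are hypothetical choices made only for illustration, and the enumeration is exponential in the number of features.

```python
from itertools import combinations
from math import factorial


def shapley_values(value_fn, n_features):
    """Exact Shapley values by enumerating every coalition (O(2^n) calls)."""
    players = range(n_features)
    phi = [0.0] * n_features
    for i in players:
        others = [j for j in players if j != i]
        for size in range(len(others) + 1):
            # Shapley weight shared by all coalitions of this size
            weight = (factorial(size) * factorial(n_features - size - 1)
                      / factorial(n_features))
            for subset in combinations(others, size):
                s = frozenset(subset)
                phi[i] += weight * (value_fn(s | {i}) - value_fn(s))
    return phi


# Hypothetical toy model and inputs (not part of the SHAP package).
def model(x):
    return 2.0 * x[0] + x[1] * x[2]


x = [1.0, 2.0, 3.0]          # instance to explain
baseline = [0.0, 0.0, 0.0]   # reference values used for "missing" features


def value_fn(coalition):
    # Features in the coalition keep their real value, the rest use the baseline.
    masked = [x[j] if j in coalition else baseline[j] for j in range(len(x))]
    return model(masked)


phi = shapley_values(value_fn, len(x))
base_value = model(baseline)
print(phi)                     # per-feature credit
print(base_value + sum(phi))   # matches model(x)
```

The base value plus the per-feature credits reproduces the model output for `x`, which is the additive property the plots in this README visualize.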

Install

SHAP can be installed from either PyPI or conda-forge:

pip install shap or conda install -c conda-forge shap

Tree ensemble example (XGBoost/LightGBM/CatBoost/scikit-learn/pyspark models)

While SHAP can explain the output of any machine learning model, we have developed a high-speed exact algorithm for tree ensemble methods (see our Nature MI paper). Fast C++ implementations are supported for XGBoost, LightGBM, CatBoost, scikit-learn and pyspark tree models:

import xgboost

import shap

# train an XGBoost model

X, y = shap.datasets.boston()

model = xgboost.XGBRegressor().fit(X, y)

# explain the model's predictions using SHAP

# (same syntax works for LightGBM, CatBoost, scikit-learn, transformers, Spark, etc.)

explainer = shap.Explainer(model)

shap_values = explainer(X)

# visualize the first prediction's explanation

shap.plots.waterfall(shap_values[0])

The above explanation shows features each contributing to push the model output from the base value (the average model output over the training dataset we passed) to the model output. Features pushing the prediction higher are shown in red, those pushing the prediction lower are in blue. Another way to visualize the same explanation is to use a force plot (these are introduced in our Nature BME paper):

# visualize the first prediction's explanation with a force plot

shap.plots.force(shap_values[0])

If we take many force plot explanations such as the one shown above, rotate them 90 degrees, and then stack them horizontally, we can see explanations for an entire dataset (in the notebook this plot is interactive):

# visualize all the training set predictions

shap.plots.force(shap_values)

To understand how a single feature affects the output of the model we can plot the SHAP value of that feature vs. the value of the feature for all the examples in a dataset. Since SHAP values represent a feature's responsibility for a change in the model output, the plot below represents the change in predicted house price as RM (the average number of rooms per house in an area) changes. Vertical dispersion at a single value of RM represents interaction effects with other features. To help reveal these interactions we can color by another feature. If we pass the whole explanation tensor to the color argument the scatter plot will pick the best feature to color by. In this case it picks RAD (index of accessibility to radial highways), since that highlights that the average number of rooms per house has less impact on home price for areas with a high RAD value.

# create a dependence scatter plot to show the effect of a single feature across the whole dataset

shap.plots.scatter(shap_values[:,"RM"], color=shap_values)

To get an overview of which features are most important for a model we can plot the SHAP values of every feature for every sample. The plot below sorts features by the sum of SHAP value magnitudes over all samples, and uses SHAP values to show the distribution of the impacts each feature has on the model output. The color represents the feature value (red high, blue low). This reveals for example that a high LSTAT (% lower status of the population) lowers the predicted home price.

# summarize the effects of all the features

shap.plots.beeswarm(shap_values)

We can also just take the mean absolute value of the SHAP values for each feature to get a standard bar plot (produces stacked bars for multi-class outputs):

shap.plots.bar(shap_values)

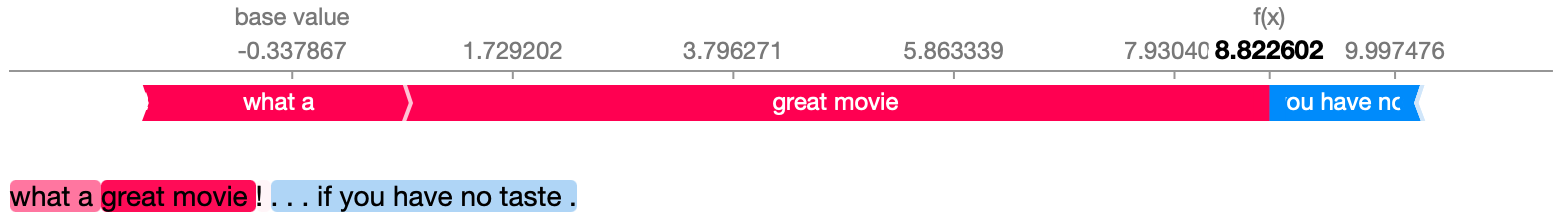
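The quantity behind the bar plot, the mean absolute SHAP value per feature, can be sketched in a few lines of plain Python. The feature names are borrowed from the housing example above, but the SHAP values in `shap_matrix` are made-up numbers for illustration, not output from a real model.

```python
# Hypothetical SHAP values: rows are samples, columns are features.
feature_names = ["RM", "LSTAT", "RAD"]
shap_matrix = [
    [1.5, -2.0, 0.1],
    [-0.5, -1.0, 0.3],
    [2.0, 3.0, -0.2],
    [0.0, -2.0, 0.2],
]

n_samples = len(shap_matrix)
# Mean absolute SHAP value of each feature across all samples.
mean_abs = [
    sum(abs(row[j]) for row in shap_matrix) / n_samples
    for j in range(len(feature_names))
]

# Rank features from most to least important.
ranking = sorted(zip(feature_names, mean_abs), key=lambda p: p[1], reverse=True)
for name, score in ranking:
    print(f"{name}: {score:.2f}")
```

Taking absolute values before averaging keeps positive and negative contributions from cancelling, so a feature that pushes predictions strongly in both directions still ranks as important.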

Natural language example (transformers)

SHAP has specific support for natural language models like those in the Hugging Face transformers library. By adding coalitional rules to traditional Shapley values we can form games that explain large modern NLP models using very few function evaluations. Using this functionality is as simple as passing a supported transformers pipeline to SHAP:

import transformers

import shap

# load a transformers pipeline model

model = transformers.pipeline('sentiment-analysis', return_all_scores=True)

# explain the model on a sample input

explainer = shap.Explainer(model)

shap_values = explainer(["What a great movie! ...if you have no taste."])

# visualize the first prediction's explanation for the POSITIVE output class

shap.plots.text(shap_values[0, :, "POSITIVE"])

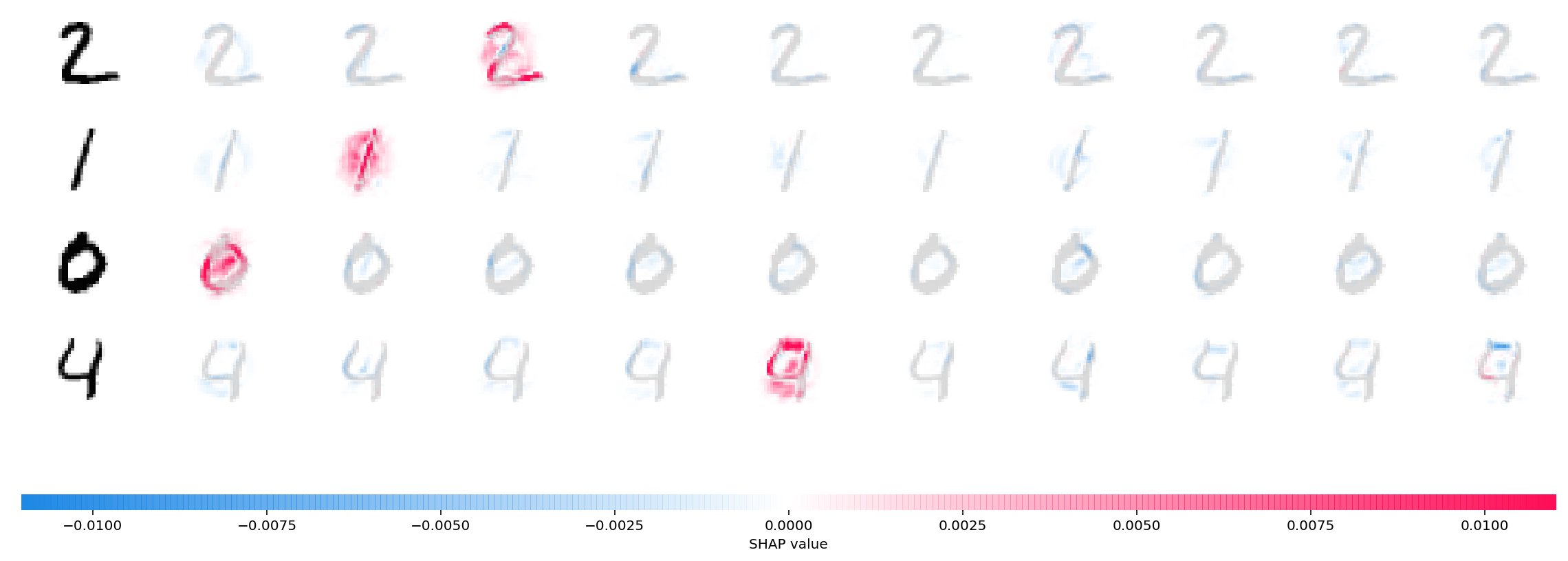

Deep learning example with DeepExplainer (TensorFlow/Keras models)

Deep SHAP is a high-speed approximation algorithm for SHAP values in deep learning models that builds on a connection with DeepLIFT described in the SHAP NIPS paper. The implementation here differs from the original DeepLIFT by using a distribution of background samples instead of a single reference value, and using Shapley equations to linearize components such as max, softmax, products, divisions, etc. Note that some of these enhancements have since been integrated into DeepLIFT. TensorFlow models and Keras models using the TensorFlow backend are supported (there is also preliminary support for PyTorch):

# ...include code from https://github.com/keras-team/keras/blob/master/examples/mnist_cnn.py

import shap

import numpy as np

# select a set of background examples to take an expectation over

background = x_train[np.random.choice(x_train.shape[0], 100, replace=False)]

# explain predictions of the model on four images

e = shap.DeepExplainer(model, background)

# ...or pass tensors directly

# e = shap.DeepExplainer((model.layers[0].input, model.layers[-1].output), background)

shap_values = e.shap_values(x_test[1:5])

# plot the feature attributions

shap.image_plot(shap_values, -x_test[1:5])

The plot above explains ten outputs (digits 0-9) for four different images. Red pixels increase the model's output while blue pixels decrease the output. The input images are shown on the left, and as nearly transparent grayscale backings behind each of the explanations. The sum of the SHAP values equals the difference between the expected model output (averaged over the background dataset) and the current model output. Note that for the 'zero' image the blank middle is important, while for the 'four' image the lack of a connection on top makes it a four instead of a nine.

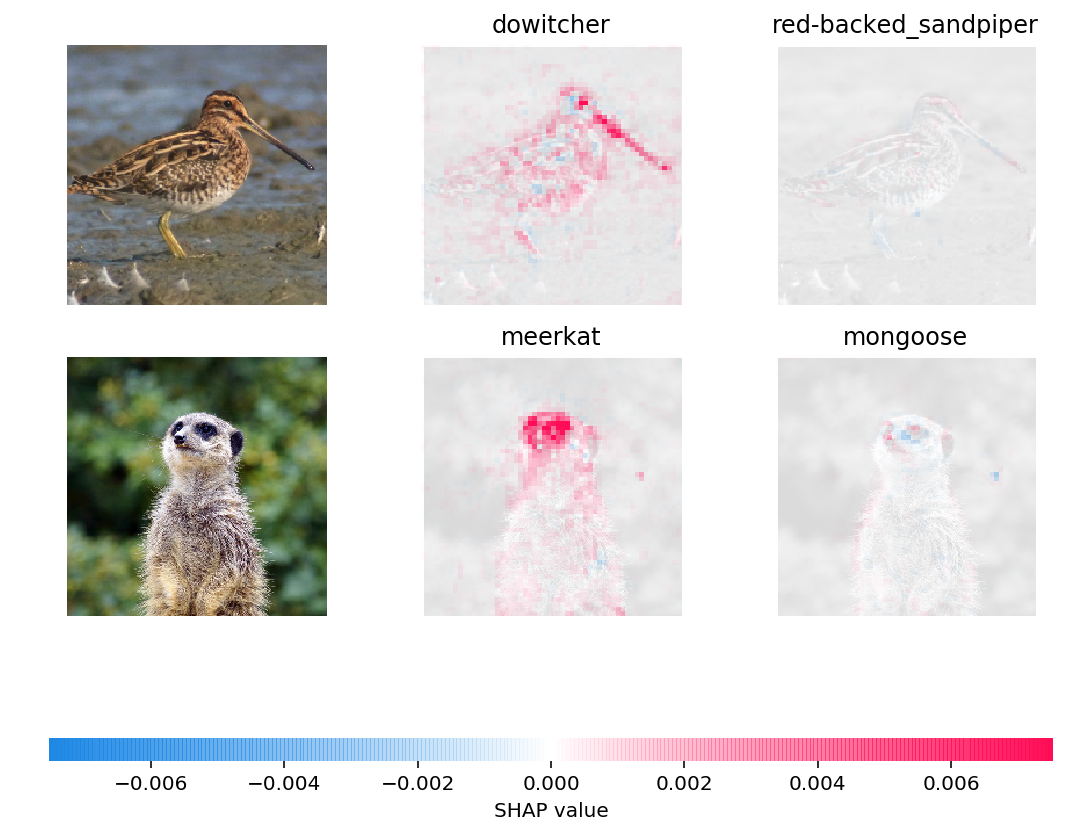

Deep learning example with GradientExplainer (TensorFlow/Keras/PyTorch models)

Expected gradients combines ideas from Integrated Gradients, SHAP, and SmoothGrad into a single expected value equation. This allows an entire dataset to be used as the background distribution (as opposed to a single reference value) and allows local smoothing. If we approximate the model with a linear function between each background data sample and the current input to be explained, and we assume the input features are independent, then expected gradients will compute approximate SHAP values. In the example below we explain how the 7th intermediate layer of the VGG16 ImageNet model impacts the output probabilities.

from keras.applications.vgg16 import VGG16

from keras.applications.vgg16 import preprocess_input

import keras.backend as K

import numpy as np

import json

import shap

# load pre-trained model and choose two images to explain

model = VGG16(weights='imagenet', include_top=True)

X,y = shap.datasets.imagenet50()

to_explain = X[[39,41]]

# load the ImageNet class names

url = "https://s3.amazonaws.com/deep-learning-models/image-models/imagenet_class_index.json"

fname = shap.datasets.cache(url)

with open(fname) as f:

    class_names = json.load(f)

# explain how the input to the 7th layer of the model explains the top two classes

def map2layer(x, layer):

    feed_dict = dict(zip([model.layers[0].input], [preprocess_input(x.copy())]))

    return K.get_session().run(model.layers[layer].input, feed_dict)

e = shap.GradientExplainer(

    (model.layers[7].input, model.layers[-1].output),

    map2layer(X, 7),

    local_smoothing=0  # std dev of smoothing noise

)

shap_values,indexes = e.shap_values(map2layer(to_explain, 7), ranked_outputs=2)

# get the names for the classes

index_names = np.vectorize(lambda x: class_names[str(x)][1])(indexes)

# plot the explanations

shap.image_plot(shap_values, to_explain, index_names)

Predictions for two input images are explained in the plot above. Red pixels represent positive SHAP values that increase the probability of the class, while blue pixels represent negative SHAP values that reduce the probability of the class. By using ranked_outputs=2 we explain only the two most likely classes for each input (this spares us from explaining all 1,000 classes).

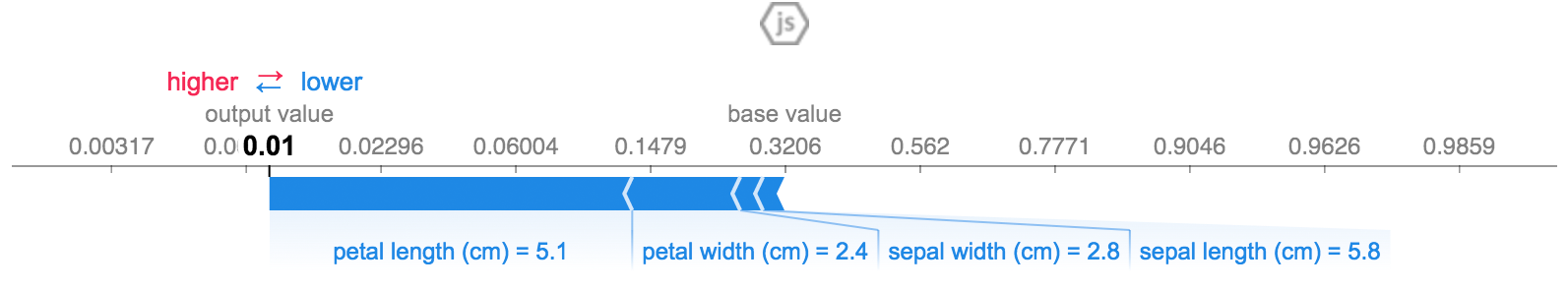
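The expected gradients idea described in this section can be sketched on a toy function without any deep learning framework. The model `f`, its hand-written gradient `grad_f`, the input `x`, and the `background` samples below are all hypothetical. A fixed midpoint grid over the interpolation coefficient is used instead of random sampling so the result is reproducible; this is a simplification for illustration, not a description of how GradientExplainer is implemented.

```python
# Hypothetical differentiable model with a hand-written gradient.
def f(x):
    return x[0] * x[1] + x[2] ** 2


def grad_f(x):
    return [x[1], x[0], 2.0 * x[2]]


def expected_gradients(x, background, n_steps=50):
    """Average (x - x') * grad f(x' + a * (x - x')) over background x' and a in (0, 1)."""
    n = len(x)
    attributions = [0.0] * n
    for ref in background:
        for k in range(n_steps):
            a = (k + 0.5) / n_steps  # midpoint grid in place of random sampling
            point = [ref[i] + a * (x[i] - ref[i]) for i in range(n)]
            g = grad_f(point)
            for i in range(n):
                attributions[i] += (x[i] - ref[i]) * g[i]
    scale = len(background) * n_steps
    return [v / scale for v in attributions]


x = [1.0, 2.0, 3.0]
background = [[0.0, 0.0, 0.0], [1.0, 1.0, 1.0], [2.0, 0.5, -1.0]]

phi = expected_gradients(x, background)
expected_output = sum(f(ref) for ref in background) / len(background)
print(phi)
print(sum(phi), f(x) - expected_output)  # the two numbers agree
```

For this quadratic toy function the attributions sum to the difference between the output at `x` and the average output over the background, up to floating point error. For a real network the agreement is only approximate and depends on how many samples are drawn.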

Model agnostic example with KernelExplainer (explains any function)

Kernel SHAP uses a specially-weighted local linear regression to estimate SHAP values for any model. Below is a simple example for explaining a multi-class SVM on the classic iris dataset.

import sklearn

import shap

from sklearn.model_selection import train_test_split

# print the JS visualization code to the notebook

shap.initjs()

# train a SVM classifier

X_train,X_test,Y_train,Y_test = train_test_split(*shap.datasets.iris(), test_size=0.2, random_state=0)

svm = sklearn.svm.SVC(kernel='rbf', probability=True)

svm.fit(X_train, Y_train)

# use Kernel SHAP to explain test set predictions

explainer = shap.KernelExplainer(svm.predict_proba, X_train, link="logit")

shap_values = explainer.shap_values(X_test, nsamples=100)

# plot the SHAP values for the Setosa output of the first instance

shap.force_plot(explainer.expected_value[0], shap_values[0][0,:], X_test.iloc[0,:], link="logit")

The above explanation shows four features each contributing to push the model output from the base value (the average model output over the training dataset we passed) towards zero. If there were any features pushing the class label higher they would be shown in red.

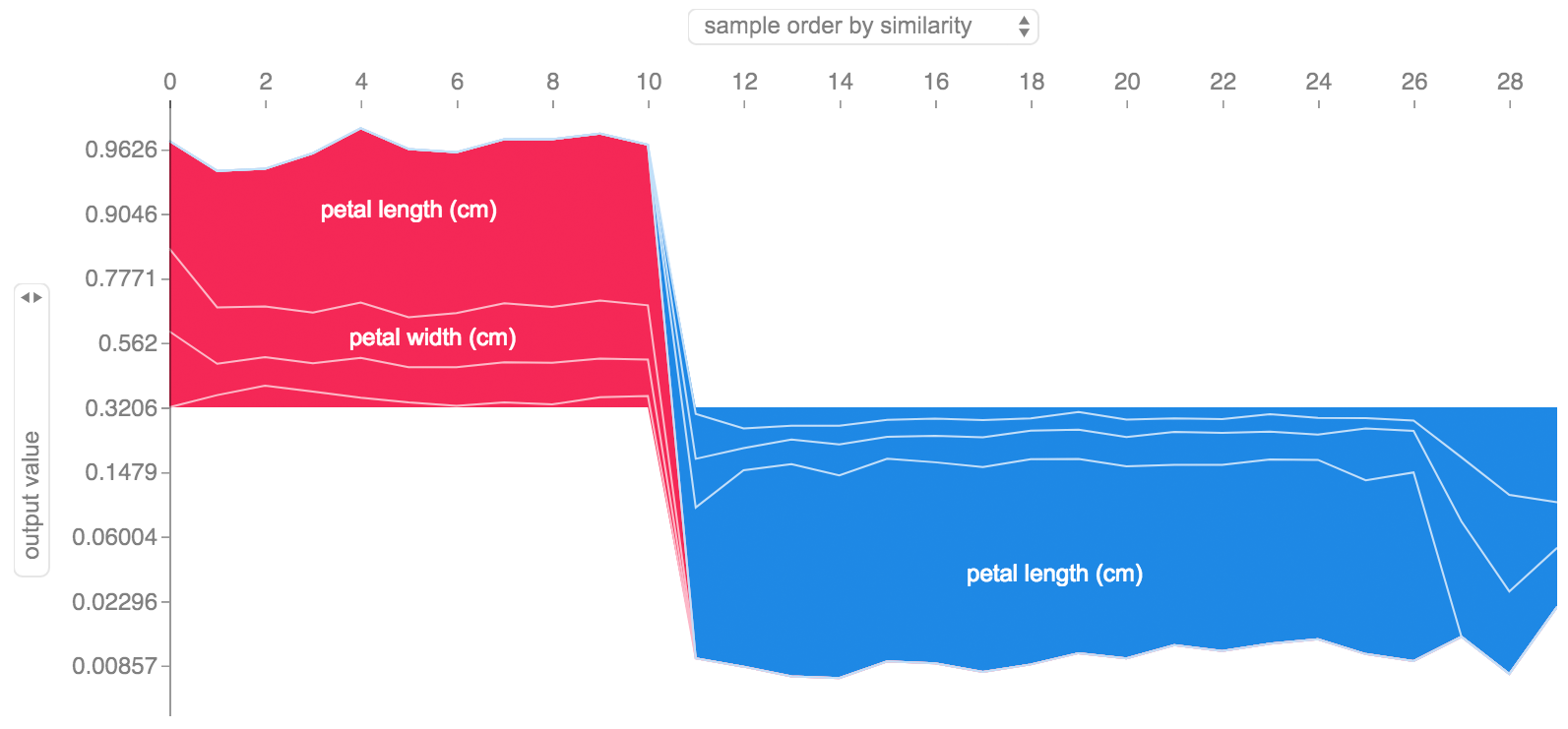

If we take many explanations such as the one shown above, rotate them 90 degrees, and then stack them horizontally, we can see explanations for an entire dataset. This is exactly what we do below for all the examples in the iris test set:

# plot the SHAP values for the Setosa output of all instances

shap.force_plot(explainer.expected_value[0], shap_values[0], X_test, link="logit")
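The "specially-weighted" regression in Kernel SHAP relies on the Shapley kernel from the SHAP NeurIPS paper, which weights a coalition by how many features it contains. The sketch below only evaluates that weighting formula. The helper name `shapley_kernel_weight` is hypothetical and is not a function of the shap package.

```python
from math import comb


def shapley_kernel_weight(n_features, coalition_size):
    """Shapley kernel weight for a coalition with `coalition_size` present features."""
    if coalition_size == 0 or coalition_size == n_features:
        # The empty and full coalitions have infinite weight in theory;
        # they are typically handled as constraints on the regression instead.
        return float("inf")
    return (n_features - 1) / (
        comb(n_features, coalition_size)
        * coalition_size
        * (n_features - coalition_size)
    )


M = 4  # the iris dataset used above has four features
for size in range(1, M):
    print(size, shapley_kernel_weight(M, size))
```

The weights are symmetric in the coalition size and largest for very small and very large coalitions, because those isolate the effect of individual features most directly.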

SHAP Interaction Values

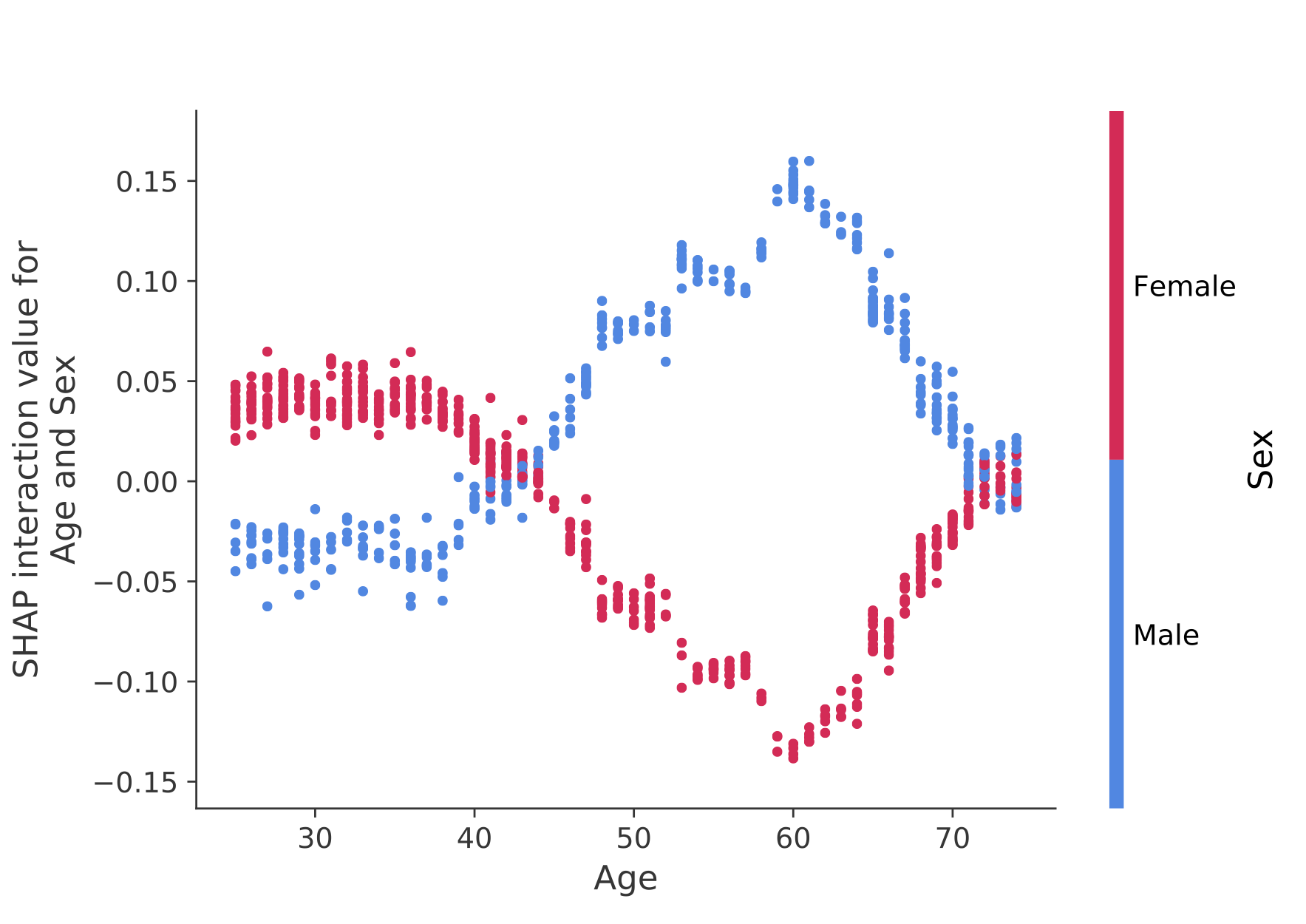

SHAP interaction values are a generalization of SHAP values to higher order interactions. Fast exact computation of pairwise interactions is implemented for tree models with shap.TreeExplainer(model).shap_interaction_values(X). This returns a matrix for every prediction, where the main effects are on the diagonal and the interaction effects are off-diagonal. These values often reveal interesting hidden relationships, such as how the increased risk of death peaks for men at age 60 (see the NHANES notebook for details).
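The structure of that matrix can be illustrated with a brute-force computation on a toy function. This is a sketch under stated assumptions, not the Tree SHAP algorithm: the `model`, the instance `x`, and the all-zeros `baseline` are hypothetical, and coalitions are enumerated exhaustively using the pairwise Shapley interaction index.

```python
from itertools import combinations
from math import factorial


# Hypothetical toy model: features 0 and 1 interact, feature 2 acts alone.
def model(x):
    return x[0] * x[1] + x[2]


x = [1.0, 1.0, 1.0]
baseline = [0.0, 0.0, 0.0]
M = len(x)


def v(coalition):
    # Present features keep their value, the others use the baseline.
    return model([x[k] if k in coalition else baseline[k] for k in range(M)])


def shapley_value(i):
    others = [k for k in range(M) if k != i]
    total = 0.0
    for size in range(M):
        w = factorial(size) * factorial(M - size - 1) / factorial(M)
        for s in combinations(others, size):
            s = frozenset(s)
            total += w * (v(s | {i}) - v(s))
    return total


def interaction(i, j):
    others = [k for k in range(M) if k not in (i, j)]
    total = 0.0
    for size in range(M - 1):
        w = factorial(size) * factorial(M - size - 2) / (2 * factorial(M - 1))
        for s in combinations(others, size):
            s = frozenset(s)
            total += w * (v(s | {i, j}) - v(s | {i}) - v(s | {j}) + v(s))
    return total


matrix = [[0.0] * M for _ in range(M)]
for i in range(M):
    for j in range(M):
        if i != j:
            matrix[i][j] = interaction(i, j)
    # Main effect: the SHAP value minus the off-diagonal interactions in the row.
    matrix[i][i] = shapley_value(i) - sum(matrix[i][j] for j in range(M) if j != i)

for row in matrix:
    print(row)
```

Each row sums to the SHAP value of the corresponding feature, and the product term between features 0 and 1 is split evenly between the two off-diagonal entries for that pair.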

Sample notebooks

The notebooks below demonstrate different use cases for SHAP. Look inside the notebooks directory of the repository if you want to try playing with the original notebooks yourself.

TreeExplainer

An implementation of Tree SHAP, a fast and exact algorithm to compute SHAP values for trees and ensembles of trees.

- NHANES survival model with XGBoost and SHAP interaction values - Using mortality data from 20 years of followup this notebook demonstrates how to use XGBoost and shap to uncover complex risk factor relationships.

- Census income classification with LightGBM - Using the standard adult census income dataset, this notebook trains a gradient boosting tree model with LightGBM and then explains predictions using shap.

- League of Legends Win Prediction with XGBoost - Using a Kaggle dataset of 180,000 ranked matches from League of Legends we train and explain a gradient boosting tree model with XGBoost to predict if a player will win their match.

DeepExplainer

An implementation of Deep SHAP, a faster (but only approximate) algorithm to compute SHAP values for deep learning models that is based on connections between SHAP and the DeepLIFT algorithm.

- MNIST Digit classification with Keras - Using the MNIST handwriting recognition dataset, this notebook trains a neural network with Keras and then explains predictions using shap.

- Keras LSTM for IMDB Sentiment Classification - This notebook trains an LSTM with Keras on the IMDB text sentiment analysis dataset and then explains predictions using shap.

GradientExplainer

An implementation of expected gradients to approximate SHAP values for deep learning models. It is based on connections between SHAP and the Integrated Gradients algorithm. GradientExplainer is slower than DeepExplainer and makes different approximation assumptions.

- Explain an Intermediate Layer of VGG16 on ImageNet - This notebook demonstrates how to explain the output of a pre-trained VGG16 ImageNet model using an internal convolutional layer.

LinearExplainer

For a linear model with independent features we can analytically compute the exact SHAP values. We can also account for feature correlation if we are willing to estimate the feature covariance matrix. LinearExplainer supports both of these options.

- Sentiment Analysis with Logistic Regression - This notebook demonstrates how to explain a linear logistic regression sentiment analysis model.
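For the independent-features case, the analytic result is that each SHAP value is the feature's coefficient times the feature's deviation from its mean. The sketch below checks this on a hypothetical linear model with made-up `weights`, `bias`, and `background` data. It does not call LinearExplainer and does not cover the correlated-features option.

```python
# Hypothetical linear model f(x) = w . x + b and a small background dataset.
weights = [2.0, -1.0, 0.5]
bias = 3.0
background = [
    [1.0, 0.0, 2.0],
    [3.0, 2.0, 4.0],
    [2.0, 4.0, 0.0],
]


def predict(x):
    return sum(w * xi for w, xi in zip(weights, x)) + bias


# Feature means over the background data.
means = [sum(col) / len(background) for col in zip(*background)]

x = [4.0, 1.0, 2.0]  # instance to explain
# Under feature independence: phi_i = w_i * (x_i - E[x_i]).
phi = [w * (xi - m) for w, xi, m in zip(weights, x, means)]

base_value = sum(predict(row) for row in background) / len(background)
print(phi)
print(base_value + sum(phi), predict(x))  # both equal the prediction
```

A feature sitting exactly at its background mean receives zero credit even when its coefficient is nonzero, as the third feature shows here.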

KernelExplainer

An implementation of Kernel SHAP, a model agnostic method to estimate SHAP values for any model. Because it makes no assumptions about the model type, KernelExplainer is slower than the other model type specific algorithms.

- Census income classification with scikit-learn - Using the standard adult census income dataset, this notebook trains a k-nearest neighbors classifier using scikit-learn and then explains predictions using shap.

- ImageNet VGG16 Model with Keras - Explain the classic VGG16 convolutional neural network's predictions for an image. This works by applying the model agnostic Kernel SHAP method to a super-pixel segmented image.

- Iris classification - A basic demonstration using the popular iris species dataset. It explains predictions from six different models in scikit-learn using shap.

Documentation notebooks

These notebooks comprehensively demonstrate how to use specific functions and objects.

Methods Unified by SHAP

- LIME: Ribeiro, Marco Tulio, Sameer Singh, and Carlos Guestrin. "Why should i trust you?: Explaining the predictions of any classifier." Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining. ACM, 2016.

- Shapley sampling values: Strumbelj, Erik, and Igor Kononenko. "Explaining prediction models and individual predictions with feature contributions." Knowledge and information systems 41.3 (2014): 647-665.

- DeepLIFT: Shrikumar, Avanti, Peyton Greenside, and Anshul Kundaje. "Learning important features through propagating activation differences." arXiv preprint arXiv:1704.02685 (2017).

- QII: Datta, Anupam, Shayak Sen, and Yair Zick. "Algorithmic transparency via quantitative input influence: Theory and experiments with learning systems." Security and Privacy (SP), 2016 IEEE Symposium on. IEEE, 2016.

- Layer-wise relevance propagation: Bach, Sebastian, et al. "On pixel-wise explanations for non-linear classifier decisions by layer-wise relevance propagation." PloS one 10.7 (2015): e0130140.

- Shapley regression values: Lipovetsky, Stan, and Michael Conklin. "Analysis of regression in game theory approach." Applied Stochastic Models in Business and Industry 17.4 (2001): 319-330.

- Tree interpreter: Saabas, Ando. Interpreting random forests. http://blog.datadive.net/interpreting-random-forests/

Citations

The algorithms and visualizations used in this package came primarily out of research in Su-In Lee's lab at the University of Washington, and Microsoft Research. If you use SHAP in your research we would appreciate a citation to the appropriate paper(s):

- For general use of SHAP you can read/cite our NeurIPS paper (bibtex).

- For TreeExplainer you can read/cite our Nature Machine Intelligence paper (bibtex; free access).

- For GPUTreeExplainer you can read/cite this article.

- For force_plot visualizations and medical applications you can read/cite our Nature Biomedical Engineering paper (bibtex; free access).
