
spo4onnx

Simple tool for partial optimization of ONNX.

Further optimizes, by several tens of percent, some models that cannot be optimized with onnx-optimizer and onnxsim, in particular models containing Einsum and OneHot. In other words, the goal is to raise the optimization capacity of onnxsim. "Optimization" in the usual sense is easy to achieve, because every Einsum is decomposed into MatMul when the model is built with the opt-einsum package. What this tool aims to do, however, is to optimize ONNX while leaving Einsum as it is, without generating many MatMul nodes. The only benefit you get with spo4onnx is that you can rejoice that you are a geek.
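To make the Einsum/MatMul relationship concrete, here is a minimal pure-Python sketch that evaluates two Einsum equations on small nested lists and compares them with an explicit matrix product. The helper names `einsum_2d`, `matmul`, and `transpose` are made up for this illustration and belong to neither spo4onnx nor opt-einsum. The point is only that `ij,jk->ik` is a plain MatMul, while `ij,kj->ik` already needs an extra Transpose once the Einsum is expanded.

```python
from itertools import product


def einsum_2d(equation, a, b):
    """Evaluate a two-operand Einsum such as "ij,jk->ik" on 2-D nested lists."""
    inputs, out = equation.split("->")
    labels_a, labels_b = inputs.split(",")
    # Record the size of every index label from the operand shapes.
    size = {}
    for labels, matrix in ((labels_a, a), (labels_b, b)):
        size[labels[0]] = len(matrix)
        size[labels[1]] = len(matrix[0])
    result = [[0] * size[out[1]] for _ in range(size[out[0]])]
    # Visit every combination of index values; labels that are missing
    # from the output (here "j") are summed over implicitly.
    for values in product(*(range(size[label]) for label in size)):
        idx = dict(zip(size, values))
        result[idx[out[0]]][idx[out[1]]] += (
            a[idx[labels_a[0]]][idx[labels_a[1]]]
            * b[idx[labels_b[0]]][idx[labels_b[1]]]
        )
    return result


def matmul(a, b):
    """Plain matrix product of two 2-D nested lists."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(m):
    """Swap the two axes of a 2-D nested list."""
    return [list(col) for col in zip(*m)]


A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]

# "ij,jk->ik" is exactly one MatMul.
print(einsum_2d("ij,jk->ik", A, B) == matmul(A, B))
# "ij,kj->ik" expands to Transpose followed by MatMul.
print(einsum_2d("ij,kj->ik", A, B) == matmul(A, transpose(B)))
```

Real models use higher-rank tensors and longer equations, so the expanded form can contain many more nodes than this two-node case suggests.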

https://github.com/PINTO0309/simple-onnx-processing-tools

pip install -U spo4onnx \
&& pip install -U onnx \
&& pip install -U onnxruntime \
&& pip install onnxsim \
&& pip install tqdm==4.66.1 \
&& python3 -m pip install -U onnx_graphsurgeon --index-url https://pypi.ngc.nvidia.com
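After installing, it can be handy to confirm which versions actually ended up in the environment. The following is a minimal sketch using only the standard library. The function name `installed_versions` is made up for this example, and the package list simply mirrors the install command above.

```python
from importlib import metadata

# Distribution names taken from the install command above.
PACKAGES = ["spo4onnx", "onnx", "onnxruntime", "onnxsim", "tqdm", "onnx_graphsurgeon"]


def installed_versions(packages):
    """Map each distribution name to its installed version, or None if missing."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


for name, version in installed_versions(PACKAGES).items():
    print(f"{name}: {version if version is not None else 'not installed'}")
```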

https://github.com/PINTO0309/spo4onnx/releases/download/model/wd-v1-4-moat-tagger-v2.onnx

spo4onnx -if wd-v1-4-moat-tagger-v2.onnx

- Temporarily downgrade onnxsim to `0.4.30` to perform my own optimization sequence.
- After the optimization process is complete, reinstall the original onnxsim version to restore the environment.
- The first version modifies two OPs, `Einsum` and `OneHot`, which hinder optimization, so that the optimization by onnxsim can reach its maximum performance.
- Not every model benefits, but the larger and more structurally complex the model, the more effective this unique optimization behavior is.
- Processing a model that contains OPs with non-deterministic output shapes, such as `NonZero` or `NonMaxSuppression`, with this tool will break the model.
- I have already identified models whose redundant operations can be reduced by 30% to 60%.
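Because models containing such OPs are broken by the tool, it may be worth scanning a model before processing it. The sketch below is one way to do that under stated assumptions: `find_shape_unsafe_ops` and `op_types_from_file` are hypothetical helper names, the OP set contains only the two OPs named above (the text says "such as", so others may exist), and nodes inside `If`/`Loop` subgraphs are not visited.

```python
from collections import Counter

# Only the two OPs named in the text; this set is probably not exhaustive.
SHAPE_UNSAFE_OPS = {"NonZero", "NonMaxSuppression"}


def find_shape_unsafe_ops(op_types):
    """Count occurrences of OPs whose output shape depends on the input data."""
    counts = Counter(op_types)
    return {op: n for op, n in counts.items() if op in SHAPE_UNSAFE_OPS}


def op_types_from_file(path):
    """Collect the op_type of every top-level node (requires the onnx package)."""
    import onnx  # imported lazily so the pure-Python check works without onnx

    model = onnx.load(path)
    return [node.op_type for node in model.graph.node]


# Usage with a real file (not executed here):
#   found = find_shape_unsafe_ops(op_types_from_file("model.onnx"))
#   if found:
#       print("Do not process this model with spo4onnx:", found)
print(find_shape_unsafe_ops(["Conv", "NonZero", "Einsum", "NonZero"]))
```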

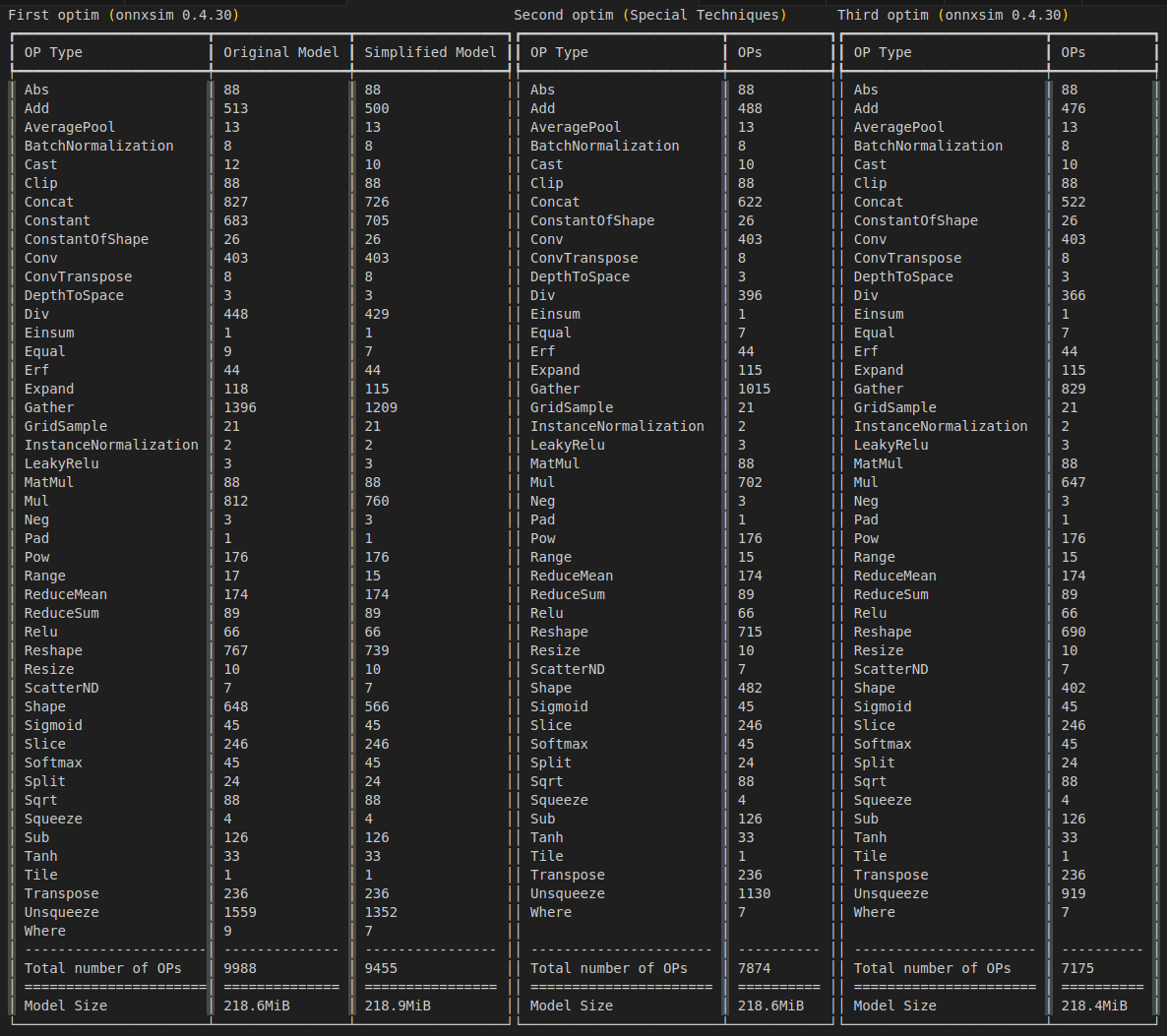

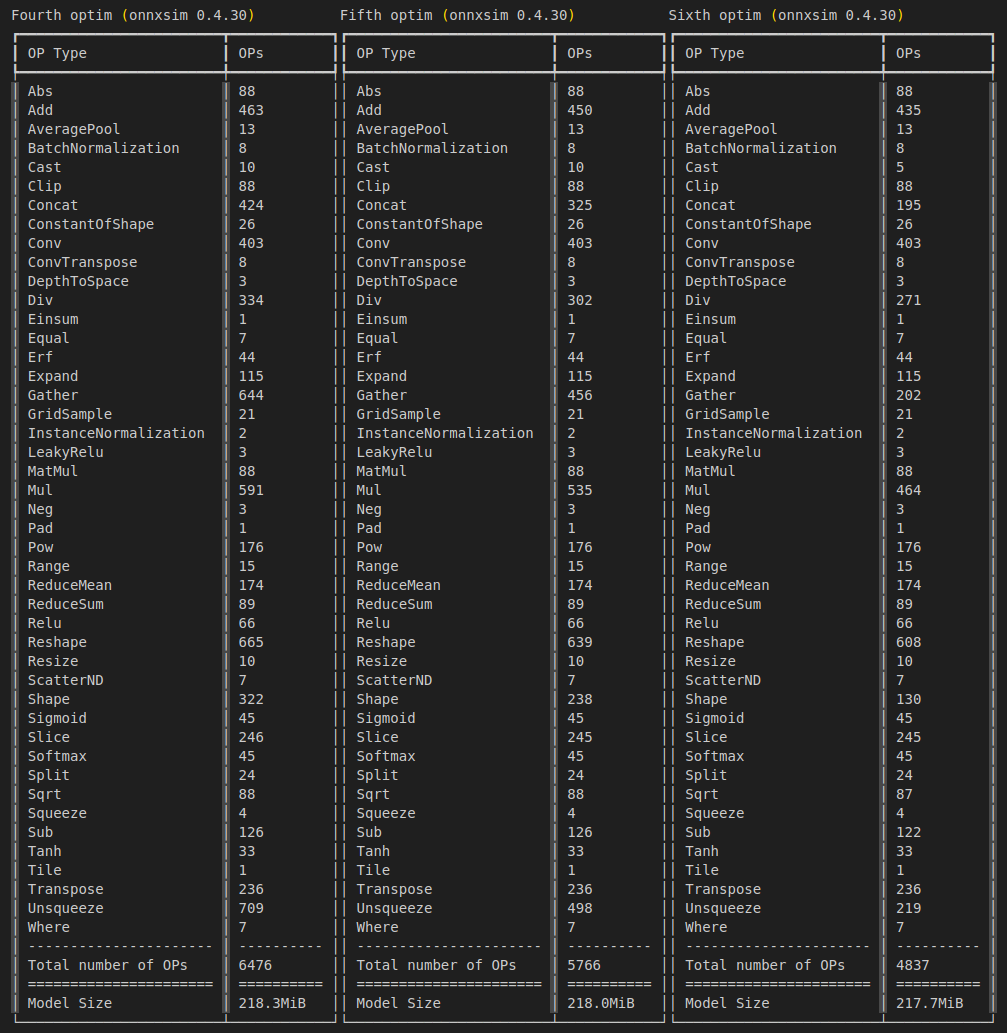

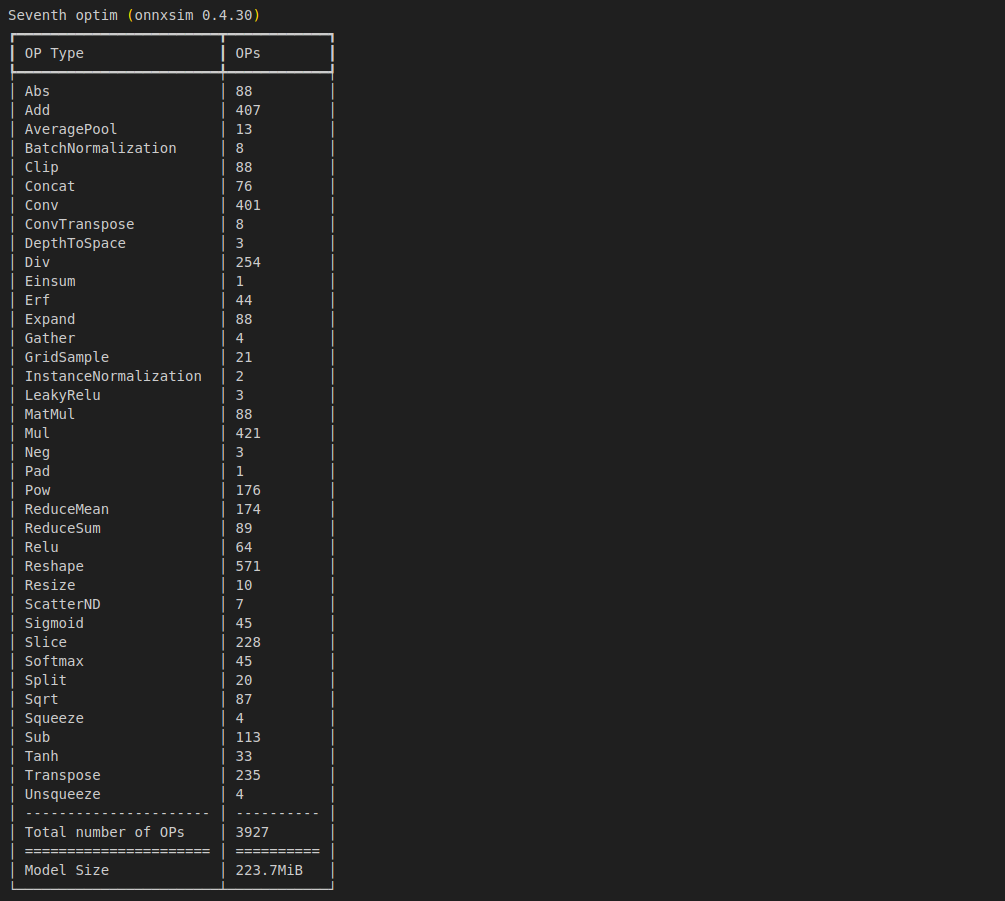

- An example of the most extreme optimization among my models is shown in the figure below: a reduction from 9,988 OPs to 3,927 OPs. This is a huge ONNX model whose undefined Height and Width dimensions were set to a fixed resolution before my special optimization technique was applied. Because OPs such as `Tile` disappear and are embedded in the model as INT64 constants, the final model file size increases, but the model structure is greatly optimized.
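As a quick check of the numbers above, the reduction from 9,988 to 3,927 OPs can be expressed as a percentage. The function name `reduction_percent` is only an illustration and is not part of the tool.

```python
def reduction_percent(ops_before, ops_after):
    """Percentage of OPs removed relative to the original OP count."""
    if ops_before <= 0:
        raise ValueError("ops_before must be positive")
    return (ops_before - ops_after) / ops_before * 100.0


removed = 9988 - 3927
print(f"removed {removed} OPs, {reduction_percent(9988, 3927):.1f}% of the original")
```

The result is a little over 60%, which matches the upper end of the 30%-60% range quoted earlier.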

Verify that the inference works properly.

sit4onnx \
-if high_frequency_stereo_matching_kitti_iter05_1x3x192x320.onnx \
-oep tensorrt

INFO: file: high_frequency_stereo_matching_kitti_iter05_1x3x192x320.onnx
INFO: providers: ['TensorrtExecutionProvider', 'CPUExecutionProvider']
INFO: input_name.1: left shape: [1, 3, 192, 320] dtype: float32
INFO: input_name.2: right shape: [1, 3, 192, 320] dtype: float32
INFO: test_loop_count: 10
INFO: total elapsed time: 185.7011318206787 ms
INFO: avg elapsed time per pred: 18.57011318206787 ms
INFO: output_name.1: output shape: [1, 1, 192, 320] dtype: float32

sit4onnx \
-if high_frequency_stereo_matching_kitti_iter05_1x3x192x320.onnx \
-oep cpu

INFO: file: high_frequency_stereo_matching_kitti_iter05_1x3x192x320.onnx
INFO: providers: ['CPUExecutionProvider']
INFO: input_name.1: left shape: [1, 3, 192, 320] dtype: float32
INFO: input_name.2: right shape: [1, 3, 192, 320] dtype: float32
INFO: test_loop_count: 10
INFO: total elapsed time: 4090.1401042938232 ms
INFO: avg elapsed time per pred: 409.0140104293823 ms
INFO: output_name.1: output shape: [1, 1, 192, 320] dtype: float32
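The two logs can be compared directly. The sketch below pulls the `avg elapsed time per pred` value out of each log and computes the ratio. The regular expression assumes the exact log format shown above, and `avg_ms` is a name invented for this example rather than anything provided by sit4onnx.

```python
import re

# Matches lines such as "INFO: avg elapsed time per pred: 18.57 ms".
AVG_PATTERN = re.compile(r"avg elapsed time per pred:\s*([0-9.]+)\s*ms")


def avg_ms(log_text):
    """Return the average per-prediction time in milliseconds from a log."""
    match = AVG_PATTERN.search(log_text)
    if match is None:
        raise ValueError("no 'avg elapsed time per pred' line found")
    return float(match.group(1))


# Values copied from the two runs above.
tensorrt_log = "INFO: avg elapsed time per pred: 18.57011318206787 ms"
cpu_log = "INFO: avg elapsed time per pred: 409.0140104293823 ms"

speedup = avg_ms(cpu_log) / avg_ms(tensorrt_log)
print(f"TensorRT is about {speedup:.1f}x faster than CPU for this model")
```

Both runs use the same optimized model, so the ratio reflects the execution provider, not the effect of spo4onnx itself.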

1. CLI Usage

spo4onnx -h

spo4onnx \
[-h] \
-if INPUT_ONNX_FILE_PATH \
[-of OUTPUT_ONNX_FILE_PATH] \
[-ois OVERWRITE_INPUT_SHAPE [OVERWRITE_INPUT_SHAPE ...]] \
[-ot OPTIMIZATION_TIMES] \
[-tov TARGET_ONNXSIM_VERSION] \
[-n]

options:
  -h, --help
    show this help message and exit

  -if INPUT_ONNX_FILE_PATH, --input_onnx_file_path INPUT_ONNX_FILE_PATH
    Input onnx file path.

  -of OUTPUT_ONNX_FILE_PATH, --output_onnx_file_path OUTPUT_ONNX_FILE_PATH
    Output onnx file path. If not specified,
    it will overwrite the onnx specified in --input_onnx_file_path.

  -ois OVERWRITE_INPUT_SHAPE [OVERWRITE_INPUT_SHAPE ...], \
    --overwrite_input_shape OVERWRITE_INPUT_SHAPE [OVERWRITE_INPUT_SHAPE ...]
    Overwrite the input shape.
    The format is "input_1:dim0,...,dimN" "input_2:dim0,...,dimN" "input_3:dim0,...,dimN"
    When there is only one input, for example, "data:1,3,224,224"
    When there are multiple inputs, for example, "data1:1,3,224,224" "data2:1,3,112,112" "data3:5"
    A value of 1 or more must be specified.
    Numerical values other than dynamic dimensions are ignored.

  -ot OPTIMIZATION_TIMES, --optimization_times OPTIMIZATION_TIMES
    Number of times the optimization process is performed.
    If zero is specified, the tool automatically calculates the number of optimization times.
    Default: 0

  -tov TARGET_ONNXSIM_VERSION, --target_onnxsim_version TARGET_ONNXSIM_VERSION
    Version number of the onnxsim used for optimization.
    Default: 0.4.30

  -n, --non_verbose
    Do not show all information logs. Only error logs are displayed.
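The `-ois` string format and the dictionary accepted by the Python API describe the same information. As an illustration, the sketch below converts the CLI form into that dictionary form. `parse_overwrite_input_shape` is a hypothetical helper written for this example and is not the tool's own parser; the check for values of 1 or more follows the option description above.

```python
def parse_overwrite_input_shape(specs):
    """Turn ["data1:1,3,224,224", "data3:5"] into {"data1": [1, 3, 224, 224], "data3": [5]}."""
    shapes = {}
    for spec in specs:
        # Split on the last colon so an input name containing ":" still works.
        name, _, dims_text = spec.rpartition(":")
        if not name or not dims_text:
            raise ValueError(f"expected 'name:dim0,...,dimN', got {spec!r}")
        dims = [int(d) for d in dims_text.split(",")]
        if any(d < 1 for d in dims):
            raise ValueError(f"every dimension must be 1 or more: {spec!r}")
        shapes[name] = dims
    return shapes


print(parse_overwrite_input_shape(["data1:1,3,224,224", "data2:1,3,112,112", "data3:5"]))
```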

2. In-script Usage

$ python
>>> from spo4onnx import partial_optimization
>>> help(partial_optimization)

Help on function partial_optimization in module spo4onnx.onnx_partial_optimization:

partial_optimization(
    input_onnx_file_path: Optional[str] = '',
    onnx_graph: Optional[onnx.onnx_ml_pb2.ModelProto] = None,
    output_onnx_file_path: Optional[str] = '',
    overwrite_input_shape: Optional[Dict] = None,
    optimization_times: Optional[int] = 0,
    target_onnxsim_version: Optional[str] = '0.4.30',
    non_verbose: Optional[bool] = False,
) -> onnx.onnx_ml_pb2.ModelProto

    Parameters
    ----------
    input_onnx_file_path: Optional[str]
        Input onnx file path.
        Either input_onnx_file_path or onnx_graph must be specified.
        Default: ''

    onnx_graph: Optional[onnx.ModelProto]
        onnx.ModelProto.
        Either input_onnx_file_path or onnx_graph must be specified.
        If onnx_graph is specified, input_onnx_file_path is ignored and onnx_graph is processed.

    output_onnx_file_path: Optional[str]
        Output onnx file path.
        If not specified, it will overwrite the onnx specified in input_onnx_file_path.

    overwrite_input_shape: Optional[Dict]
        Overwrite the input shape.
        The format is
        {'data1': [1, 3, 224, 224], 'data2': [1, 224], 'data3': [1]}

    optimization_times: Optional[int]
        Number of times the optimization process is performed.
        If zero is specified, the tool automatically calculates the number of optimization times.
        Default: 0

    non_verbose: Optional[bool]
        Do not show all information logs. Only error logs are displayed.

    Returns
    -------
    onnx_graph: onnx.ModelProto
        Optimized onnx ModelProto
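Putting the signature to use, a call from a script might look like the following sketch. The wrapper name `optimize_file` and the file names in the comment are placeholders invented for this example; only the keyword arguments come from the help output above. The import sits inside the function so the snippet can be loaded even where spo4onnx is not installed.

```python
def optimize_file(input_path, output_path, input_shapes=None):
    """Run spo4onnx on one file and return the optimized ModelProto."""
    # Lazy import: spo4onnx (and its dependencies) are only needed when called.
    from spo4onnx import partial_optimization

    return partial_optimization(
        input_onnx_file_path=input_path,
        output_onnx_file_path=output_path,
        overwrite_input_shape=input_shapes,  # e.g. {"data1": [1, 3, 224, 224]}
        optimization_times=0,                # 0 lets the tool pick the count
        target_onnxsim_version="0.4.30",     # default shown in the signature
        non_verbose=True,                    # only error logs
    )


# Example call (placeholder file names, not executed here):
#   model = optimize_file("model.onnx", "model_opt.onnx")
```

Passing a separate output path avoids overwriting the input file, which is what happens when the output path is left unspecified.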

3. Acknowledgments

