
bilingual_book_maker

The bilingual_book_maker is an AI translation tool that uses ChatGPT to assist users in creating multi-language versions of epub/txt/srt files and books. This tool is exclusively designed for translating epub books that have entered the public domain and is not intended for copyrighted works. Before using this tool, please review the project's disclaimer.

Supported Models

gpt-4, gpt-3.5-turbo, claude-2, palm, llama-2, azure-openai, command-nightly, gemini, qwen-mt-turbo, qwen-mt-plus

To use non-OpenAI models, use the liteLLM() class; liteLLM supports all of the models above.

More information on using liteLLM: https://github.com/BerriAI/litellm/blob/main/setup.py

Preparation

- ChatGPT or OpenAI token

- epub/txt books

- Environment with internet access or proxy

- Python 3.8+

Quick Start

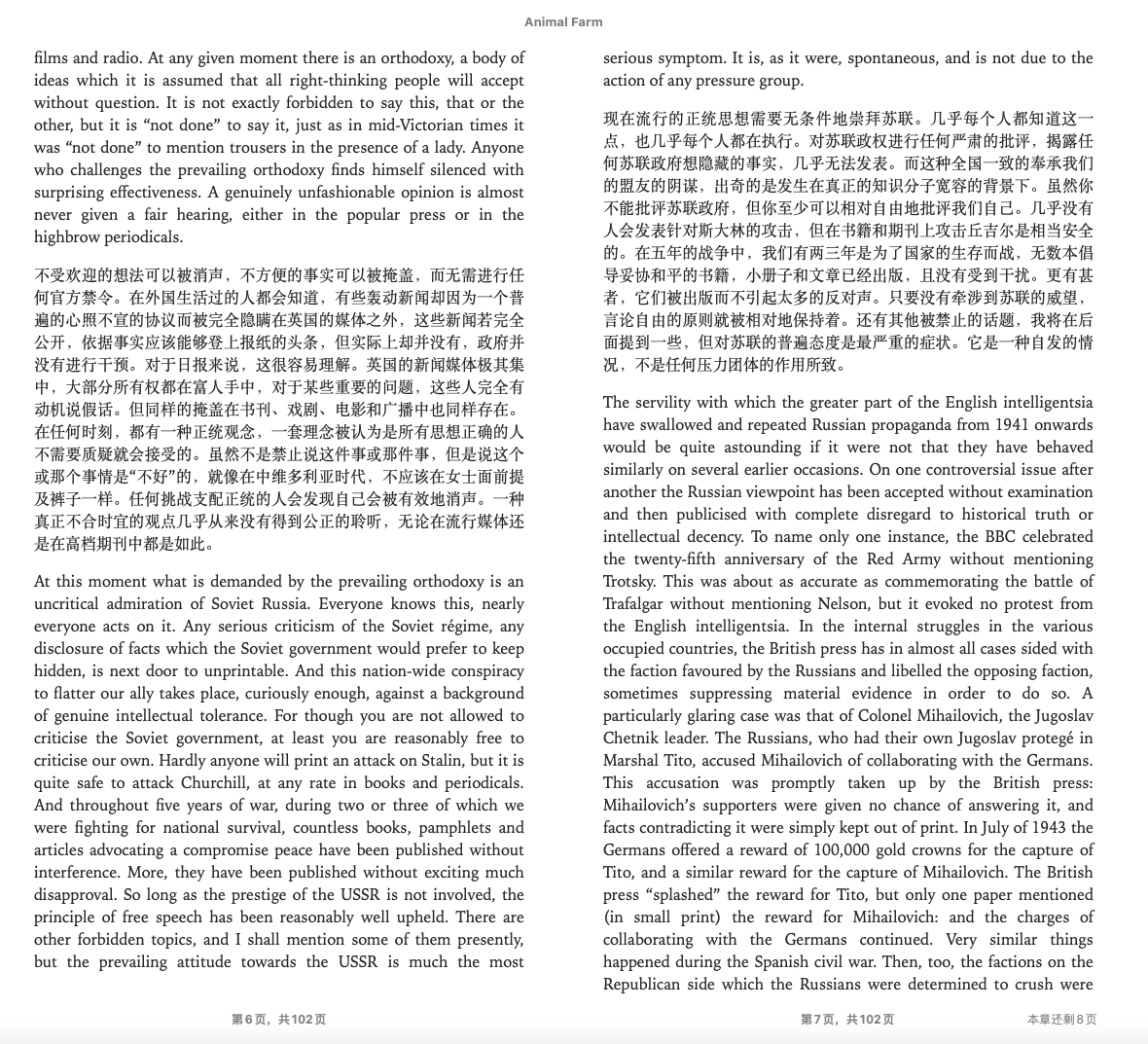

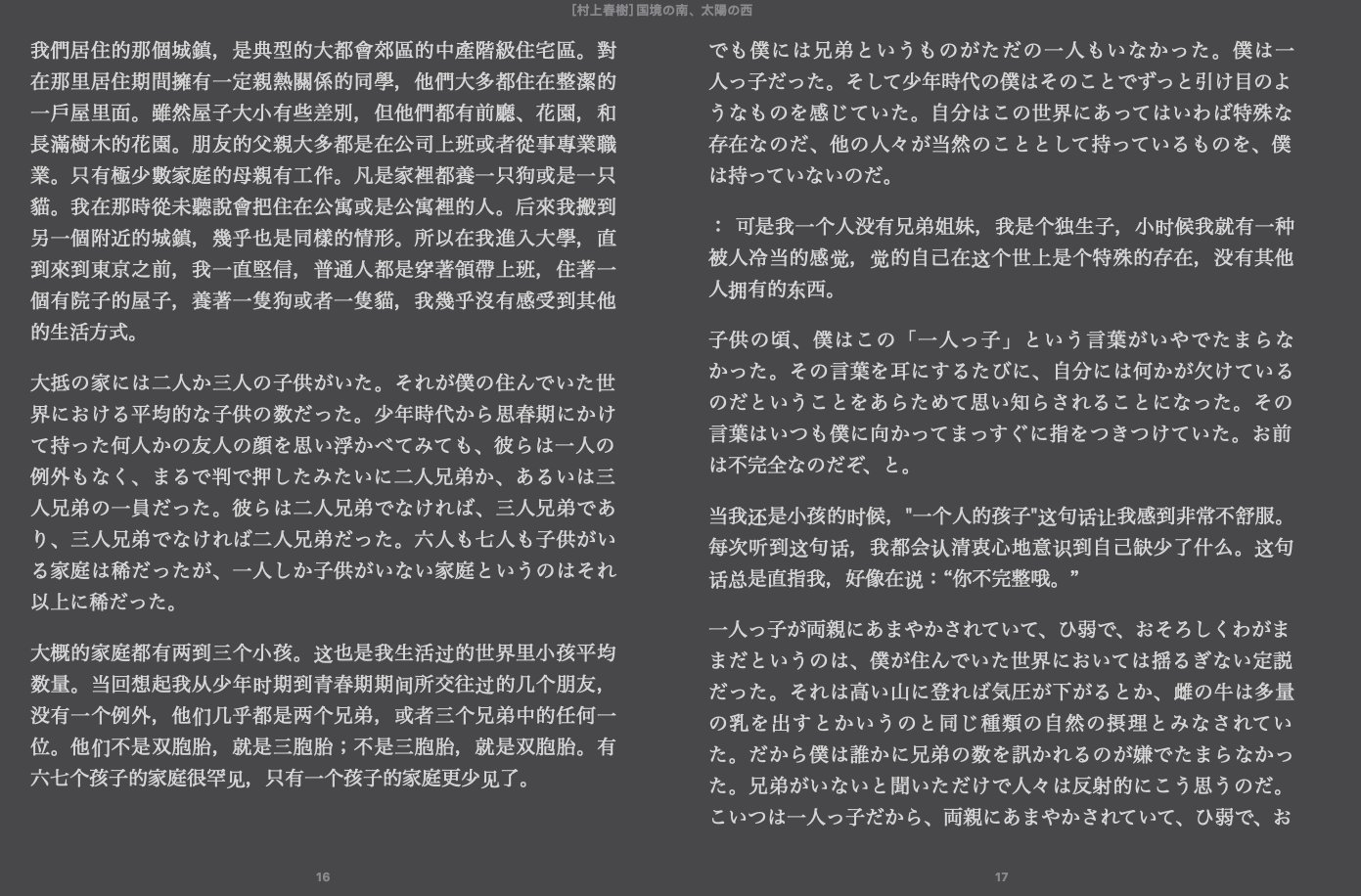

A sample book, test_books/animal_farm.epub, is provided for testing purposes.

pip install -r requirements.txt

python3 make_book.py --book_name test_books/animal_farm.epub --openai_key ${openai_key} --test

OR

pip install -U bbook_maker

bbook --book_name test_books/animal_farm.epub --openai_key ${openai_key} --test

Translate Service

- Use the `--openai_key` option to specify your OpenAI API key. If you have multiple keys, separate them with commas (xxx,xxx,xxx) to reduce errors caused by API call limits. Alternatively, set the environment variable `BBM_OPENAI_API_KEY` instead.

- The default underlying model is GPT-3.5-turbo, the model currently used by ChatGPT. Use `--model gpt4` to change the underlying model to `GPT4`. You can also use `GPT4omini`.

- Note that `gpt-4` is significantly more expensive than `gpt-4-turbo`. To avoid bumping into rate limits, we automatically balance queries across `gpt-4-1106-preview`, `gpt-4`, `gpt-4-32k`, `gpt-4-0613` and `gpt-4-32k-0613`.

- If you want to use a specific model alias with OpenAI (e.g. `gpt-4-1106-preview` or `gpt-3.5-turbo-0125`), you can use `--model openai --model_list gpt-4-1106-preview,gpt-3.5-turbo-0125`. `--model_list` takes a comma-separated list of model aliases.

- If using chatgptapi, you can add `--use_context` to add a context paragraph to each passage sent to the model for translation (see below).
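The comma-separated key list and the `BBM_OPENAI_API_KEY` fallback described above can be pictured with the short Python sketch below. It is an illustration under assumptions, not bbook_maker's actual code: the helper names `load_keys` and `KeyRotator` are hypothetical, and simple round-robin rotation is assumed as one plausible way of spreading calls across several keys.

```python
import itertools
import os


def load_keys(cli_value=None, env_var="BBM_OPENAI_API_KEY"):
    """Return API keys from a comma-separated string (hypothetical helper).

    The --openai_key value wins; otherwise the environment variable is read.
    Blank entries and surrounding spaces are ignored.
    """
    raw = cli_value or os.environ.get(env_var, "")
    keys = [k.strip() for k in raw.split(",") if k.strip()]
    if not keys:
        raise ValueError("no API key given via option or " + env_var)
    return keys


class KeyRotator:
    """Hand out keys in round-robin order so no single key absorbs every call."""

    def __init__(self, keys):
        self._cycle = itertools.cycle(keys)

    def next_key(self):
        return next(self._cycle)


if __name__ == "__main__":
    rotator = KeyRotator(load_keys("sk-aaa, sk-bbb,sk-ccc"))
    print([rotator.next_key() for _ in range(4)])
    # -> ['sk-aaa', 'sk-bbb', 'sk-ccc', 'sk-aaa']
```

Round-robin keeps the per-key request rate roughly even; a real client might instead skip a key after it returns a rate-limit error.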

- DeepL

Supports the DeepL model. DeepL Translator requires payment to get the token.

python3 make_book.py --book_name test_books/animal_farm.epub --model deepl --deepl_key ${deepl_key}

- DeepL free

python3 make_book.py --book_name test_books/animal_farm.epub --model deeplfree

- Claude

Use the Claude model to translate.

python3 make_book.py --book_name test_books/animal_farm.epub --model claude --claude_key ${claude_key}

- Google Translate

python3 make_book.py --book_name test_books/animal_farm.epub --model google

- Caiyun Translate

python3 make_book.py --book_name test_books/animal_farm.epub --model caiyun --caiyun_key ${caiyun_key}

- Gemini

Supports Google Gemini models. Use `--model gemini` for Gemini Flash or `--model geminipro` for Gemini Pro. If you want to use a specific model alias with Gemini (e.g. `gemini-1.5-flash-002` or `gemini-1.5-flash-8b-exp-0924`), you can use `--model gemini --model_list gemini-1.5-flash-002,gemini-1.5-flash-8b-exp-0924`. `--model_list` takes a comma-separated list of model aliases.

python3 make_book.py --book_name test_books/animal_farm.epub --model gemini --gemini_key ${gemini_key}

- Qwen

Supports the Alibaba Cloud Qwen-MT specialized translation model, which covers 92 languages and offers features such as terminology intervention and translation memory. Use `--model qwen-mt-turbo` for faster, cheaper translation, or `--model qwen-mt-plus` for higher quality. Use `--source_lang` to specify the source language explicitly, or leave it empty for auto-detection.

python3 make_book.py --book_name test_books/animal_farm.epub --qwen_key ${qwen_key} --model qwen-mt-turbo --language "Simplified Chinese"

python3 make_book.py --book_name test_books/animal_farm.epub --qwen_key ${qwen_key} --model qwen-mt-plus --language "Japanese" --source_lang "English"

- Tencent TranSmart

python3 make_book.py --book_name test_books/animal_farm.epub --model tencentransmart

- xAI

python3 make_book.py --book_name test_books/animal_farm.epub --model xai --xai_key ${xai_key}

- Ollama

Supports self-hosted Ollama models. If the Ollama server is not running on localhost, use `--api_base http://x.x.x.x:port/v1` to point to the Ollama server address.

python3 make_book.py --book_name test_books/animal_farm.epub --ollama_model ${ollama_model_name}

- Groq

The models GroqCloud currently supports are listed in its Supported Models page.

python3 make_book.py --book_name test_books/animal_farm.epub --groq_key [your_key] --model groq --model_list llama3-8b-8192

Use

- Once the translation is complete, a bilingual book named `${book_name}_bilingual.epub` will be generated.

- If there are any errors, or if you interrupt the translation by pressing `CTRL+C`, a book named `{book_name}_bilingual_temp.epub` will be generated. You can simply rename it to any desired name.

Params

- `--test`: Use the `--test` option to preview the result if you haven't paid for the service. Note that there is a limit and it may take some time.

- `--language`: Set the target language, e.g. `--language "Simplified Chinese"`. The default target language is `"Simplified Chinese"`. The available languages are listed in the help message: `python make_book.py --help`

- `--proxy`: Use the `--proxy` option to specify a proxy server for internet access. Enter a string such as `http://127.0.0.1:7890`.

- `--resume`: Use the `--resume` option to manually resume the process after an interruption.

python3 make_book.py --book_name test_books/animal_farm.epub --model google --resume

- `--translate-tags`: An epub is made of HTML files. By default, we only translate the contents of `<p>`. Use `--translate-tags` to specify the tags that need translation, separating multiple tags with commas. For example: `--translate-tags h1,h2,h3,p,div`

- `--book_from`: Use the `--book_from` option to specify the e-reader type (currently only `kobo` is available), and use `--device_path` to specify the mount point.

- `--api_base`: If you want to change the API base, for example to use Cloudflare Workers, use `--api_base <URL>`. Note: the API URL should be `'https://xxxx/v1'`. Quotation marks are required.

- `--allow_navigable_strings`: If you want to translate strings in an e-book that aren't wrapped in any tags, use the `--allow_navigable_strings` parameter. This adds those strings to the translation queue. Note that, where possible, it is better to look for e-books that are more standardized.

- `--prompt`: To tweak the prompt, use the `--prompt` parameter. Valid placeholders for the `user` role template include `{text}` and `{language}`. There are a few ways to configure the prompt:

  - If you don't need to set the `system` role content, you can simply pass the template: `--prompt "Translate {text} to {language}."` or `--prompt prompt_template_sample.txt` (an example text file can be found at ./prompt_template_sample.txt).

  - If you need to set the `system` role content, use the following format: `--prompt '{"user":"Translate {text} to {language}", "system": "You are a professional translator."}'` or `--prompt prompt_template_sample.json` (an example JSON file can be found at ./prompt_template_sample.json).

  - You can now use the PromptDown format (`.md` files) for more structured prompts: `--prompt prompt_md.prompt.md`. PromptDown supports both traditional system messages and developer messages (used by newer AI models). Example:

        # Translation Prompt

        ## Developer Message

        You are a professional translator who specializes in accurate translations.

        ## Conversation

        | Role | Content                                                        |
        | ---- | -------------------------------------------------------------- |
        | User | Please translate the following text into {language}:\n\n{text} |

  - You can also set the `user` and `system` role prompts through the environment variables `BBM_CHATGPTAPI_USER_MSG_TEMPLATE` and `BBM_CHATGPTAPI_SYS_MSG`.

- `--batch_size`: Use the `--batch_size` parameter to specify the number of lines for batch translation (default is 10; currently only effective for txt files).

- `--accumulated_num`: The number of tokens to accumulate before starting the translation. gpt-3.5 limits total_token to 4090. For example, if you use `--accumulated_num 1600`, OpenAI may output 2200 tokens, and the system and user messages may take another 200 tokens: 1600 + 2200 + 200 = 4000, which is close to the limit. You have to choose your own value; there is no way to know whether the limit will be reached before sending.

- `--use_context`: Prompts the model to create a three-paragraph summary. At the beginning of the translation, it summarizes the entire passage sent (its size depends on `--accumulated_num`). For subsequent passages, it amends the summary to include details from the most recent passage, creating a running one-paragraph context payload of the important details of the entire translated work. This improves the consistency of flow and tone throughout the translation. This option is available for all ChatGPT-compatible models and Gemini models.

- `--context_paragraph_limit`: Use `--context_paragraph_limit` to limit the number of context paragraphs when using the `--use_context` option.

- `--temperature`: Use `--temperature` to set the temperature parameter for `chatgptapi`/`gpt4`/`claude` models. For example: `--temperature 0.7`.

- `--block_size`: Use `--block_size` to merge multiple paragraphs into one block. This may increase accuracy and speed up the process, but it can disturb the original format. Must be used with `--single_translate`. For example: `--block_size 5 --single_translate`.

- `--single_translate`: Use `--single_translate` to output only the translated book, without creating a bilingual version.

- `--translation_style`: Example: `--translation_style "color: #808080; font-style: italic;"`

- `--retranslate "$translated_filepath" "file_name_in_epub" "start_str" "end_str"` (optional): Retranslate from the tag containing start_str through the tag containing end_str:

python3 "make_book.py" --book_name "test_books/animal_farm.epub" --retranslate 'test_books/animal_farm_bilingual.epub' 'index_split_002.html' 'in spite of the present book shortage which' 'This kind of thing is not a good symptom. Obviously'

Retranslate only the tag containing start_str:

python3 "make_book.py" --book_name "test_books/animal_farm.epub" --retranslate 'test_books/animal_farm_bilingual.epub' 'index_split_002.html' 'in spite of the present book shortage which'
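To make the `--prompt` options more concrete, here is a hedged Python sketch of how an inline `--prompt` value (plain text, or a JSON object with `user` and `system` keys) could be turned into chat messages. The function names `load_prompt` and `render_messages` are hypothetical and this is not bbook_maker's parser; reading `.txt`, `.json` or PromptDown files is left out. Plain `str.replace` is used instead of `str.format` so that other braces in a template or in the book text are left alone.

```python
import json


def load_prompt(prompt_arg):
    """Parse an inline --prompt value (hypothetical helper).

    A JSON object with a "user" key supplies both templates; anything else
    is treated as a plain user template with no system message.
    """
    try:
        parsed = json.loads(prompt_arg)
    except json.JSONDecodeError:
        parsed = None
    if isinstance(parsed, dict) and "user" in parsed:
        return {"user": parsed["user"], "system": parsed.get("system")}
    return {"user": prompt_arg, "system": None}


def render_messages(prompt, text, language):
    """Fill the {language} and {text} placeholders and build a chat message list."""
    # Substitute {language} first and {text} last, so placeholder-like
    # strings inside the book text are never expanded.
    user = prompt["user"].replace("{language}", language).replace("{text}", text)
    messages = []
    if prompt["system"]:
        messages.append({"role": "system", "content": prompt["system"]})
    messages.append({"role": "user", "content": user})
    return messages


if __name__ == "__main__":
    p = load_prompt(
        '{"user": "Translate {text} to {language}", '
        '"system": "You are a professional translator."}'
    )
    for message in render_messages(p, "Hello", "Japanese"):
        print(message)
    # {'role': 'system', 'content': 'You are a professional translator.'}
    # {'role': 'user', 'content': 'Translate Hello to Japanese'}
```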
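The `--accumulated_num` budget arithmetic in the parameter list can be checked with a few lines of Python. This is only a back-of-the-envelope helper (the names `token_headroom` and `max_accumulated` are hypothetical). The 4090 limit and the 2200/200 token estimates are the README's example figures, and the real output length is not known before a request is sent, so the result is an estimate.

```python
GPT35_TOTAL_TOKEN_LIMIT = 4090  # limit quoted in the --accumulated_num description


def token_headroom(accumulated, expected_output, overhead, limit=GPT35_TOTAL_TOKEN_LIMIT):
    """Tokens left under the limit; a negative value means the request is over budget."""
    return limit - (accumulated + expected_output + overhead)


def max_accumulated(expected_output, overhead, limit=GPT35_TOTAL_TOKEN_LIMIT):
    """Largest --accumulated_num that still fits, given the estimated output and overhead."""
    return limit - expected_output - overhead


if __name__ == "__main__":
    # README example: --accumulated_num 1600, ~2200 output tokens, ~200 for system/user messages
    print(token_headroom(1600, 2200, 200))  # 90, since 1600 + 2200 + 200 = 4000
    print(max_accumulated(2200, 200))  # 1690
```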
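For txt files, `--batch_size` groups lines before they are sent for translation (default 10). The Python sketch below shows one straightforward way to do that grouping. `batch_lines` is a hypothetical name, and joining each batch with newlines into a single request text is an assumption; how bbook_maker joins and splits the lines of a batch internally is not described in this README.

```python
def batch_lines(lines, batch_size=10):
    """Split a list of lines into consecutive batches of at most batch_size lines."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return [lines[i:i + batch_size] for i in range(0, len(lines), batch_size)]


if __name__ == "__main__":
    lines = [f"line {n}" for n in range(1, 26)]
    batches = batch_lines(lines, batch_size=10)
    print([len(b) for b in batches])  # [10, 10, 5]
    # Assumption: each batch is sent as one newline-joined request text.
    request_texts = ["\n".join(b) for b in batches]
    print(request_texts[2].splitlines()[0])  # line 21
```

Larger batches mean fewer requests but longer prompts, so the same token-limit trade-off described under `--accumulated_num` applies.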
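The `--retranslate` range rule (from the tag containing start_str through the tag containing end_str, or only the start tag when end_str is omitted) can be sketched in Python over a plain list of paragraph texts. This is an illustrative assumption, not bbook_maker's implementation: the function name `select_retranslate_range` is hypothetical, and the real tool works on HTML tags inside the epub rather than on a list of strings.

```python
def select_retranslate_range(paragraphs, start_str, end_str=None):
    """Return indices from the paragraph containing start_str through the one containing end_str.

    With end_str omitted, only the paragraph containing start_str is selected.
    The search for end_str begins at the start paragraph.
    """
    start = next((i for i, p in enumerate(paragraphs) if start_str in p), None)
    if start is None:
        raise ValueError("start_str not found")
    if end_str is None:
        return [start]
    end = next(
        (i for i in range(start, len(paragraphs)) if end_str in paragraphs[i]), None
    )
    if end is None:
        raise ValueError("end_str not found at or after start_str")
    return list(range(start, end + 1))


if __name__ == "__main__":
    paras = [
        "Preface",
        "in spite of the present book shortage which ...",
        "A middle paragraph.",
        "This kind of thing is not a good symptom. Obviously ...",
        "Closing paragraph.",
    ]
    print(select_retranslate_range(paras, "in spite of", "This kind of thing"))  # [1, 2, 3]
    print(select_retranslate_range(paras, "in spite of"))  # [1]
```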

Examples

Note: if you install with `pip install bbook_maker`, all commands can be changed to `bbook_maker args`.

# Test quickly

python3 make_book.py --book_name test_books/animal_farm.epub --openai_key ${openai_key} --test --language zh-hans

# Test quickly for srt

python3 make_book.py --book_name test_books/Lex_Fridman_episode_322.srt --openai_key ${openai_key} --test

# Or translate the whole book

python3 make_book.py --book_name test_books/animal_farm.epub --openai_key ${openai_key} --language zh-hans

# Or translate the whole book using Gemini flash

python3 make_book.py --book_name test_books/animal_farm.epub --gemini_key ${gemini_key} --model gemini

# Use a specific list of Gemini model aliases

python3 make_book.py --book_name test_books/animal_farm.epub --gemini_key ${gemini_key} --model gemini --model_list gemini-1.5-flash-002,gemini-1.5-flash-8b-exp-0924

# Set the env var OPENAI_API_KEY so you can omit the --openai_key option

export OPENAI_API_KEY=${your_api_key}

# Use the GPT-4 model with context, translating to Japanese

python3 make_book.py --book_name test_books/animal_farm.epub --model gpt4 --use_context --language ja

# Use a specific OpenAI model alias

python3 make_book.py --book_name test_books/animal_farm.epub --model openai --model_list gpt-4-1106-preview --openai_key ${openai_key}

**Note**: you can use other OpenAI-like models in this way

python3 make_book.py --book_name test_books/animal_farm.epub --model openai --model_list yi-34b-chat-0205 --openai_key ${openai_key} --api_base "https://api.lingyiwanwu.com/v1"

# Use a specific list of OpenAI model aliases

python3 make_book.py --book_name test_books/animal_farm.epub --model openai --model_list gpt-4-1106-preview,gpt-4-0125-preview,gpt-3.5-turbo-0125 --openai_key ${openai_key}

# Use the DeepL model with Japanese

python3 make_book.py --book_name test_books/animal_farm.epub --model deepl --deepl_key ${deepl_key} --language ja

# Use the Claude model with Japanese

python3 make_book.py --book_name test_books/animal_farm.epub --model claude --claude_key ${claude_key} --language ja

# Use the CustomAPI model with Japanese

python3 make_book.py --book_name test_books/animal_farm.epub --model customapi --custom_api ${custom_api} --language ja

# Translate contents in <div> and <p>

python3 make_book.py --book_name test_books/animal_farm.epub --translate-tags div,p

# Tweaking the prompt

python3 make_book.py --book_name test_books/animal_farm.epub --prompt prompt_template_sample.txt

# or

python3 make_book.py --book_name test_books/animal_farm.epub --prompt prompt_template_sample.json

# or

python3 make_book.py --book_name test_books/animal_farm.epub --prompt "Please translate \`{text}\` to {language}"

# Translate books downloaded from Rakuten Kobo on a Kobo e-reader

python3 make_book.py --book_from kobo --device_path /tmp/kobo

# Translate a txt file

python3 make_book.py --book_name test_books/the_little_prince.txt --test --language zh-hans

# Translate a txt file with aggregated (batched) translation

python3 make_book.py --book_name test_books/the_little_prince.txt --test --batch_size 20

# Use the Caiyun model to translate

# (the API currently only supports Simplified Chinese <-> English and Simplified Chinese <-> Japanese)

# Caiyun officially provides a test token (3975l6lr5pcbvidl6jl2)

# you can apply for your own token by following this tutorial (https://bobtranslate.com/service/translate/caiyun.html)

python3 make_book.py --model caiyun --caiyun_key 3975l6lr5pcbvidl6jl2 --book_name test_books/animal_farm.epub

# Set the env var BBM_CAIYUN_API_KEY so you can omit the --caiyun_key option

export BBM_CAIYUN_API_KEY=${your_api_key}

More understandable example

python3 make_book.py --book_name 'animal_farm.epub' --openai_key sk-XXXXX --api_base 'https://xxxxx/v1'

# Or, if python3 is not in your PATH

python make_book.py --book_name 'animal_farm.epub' --openai_key sk-XXXXX --api_base 'https://xxxxx/v1'

Microsoft Azure Endpoints

python3 make_book.py --book_name 'animal_farm.epub' --openai_key XXXXX --api_base 'https://example-endpoint.openai.azure.com' --deployment_id 'deployment-name'

# Or, if python3 is not in your PATH

python make_book.py --book_name 'animal_farm.epub' --openai_key XXXXX --api_base 'https://example-endpoint.openai.azure.com' --deployment_id 'deployment-name'

Docker

You can use Docker if you don't want to deal with setting up the environment.

# Build image

docker build --tag bilingual_book_maker .

# Run container

# "$folder_path" is the folder where your book file is located. It is also where the processed file will be stored.

# Windows PowerShell

$folder_path=your_folder_path # $folder_path="C:\Users\user\mybook\"

$book_name=your_book_name # $book_name="animal_farm.epub"

$openai_key=your_api_key # $openai_key="sk-xxx"

$language=your_language # see utils.py

docker run --rm --name bilingual_book_maker --mount type=bind,source=$folder_path,target='/app/test_books' bilingual_book_maker --book_name "/app/test_books/$book_name" --openai_key $openai_key --language $language

# Linux

export folder_path=${your_folder_path}

export book_name=${your_book_name}

export openai_key=${your_api_key}

export language=${your_language}

docker run --rm --name bilingual_book_maker --mount type=bind,source=${folder_path},target='/app/test_books' bilingual_book_maker --book_name "/app/test_books/${book_name}" --openai_key ${openai_key} --language "${language}"

For example:

# Linux

docker run --rm --name bilingual_book_maker --mount type=bind,source=/home/user/my_books,target='/app/test_books' bilingual_book_maker --book_name /app/test_books/animal_farm.epub --openai_key sk-XXX --test --test_num 1 --language zh-hant

Notes

- API tokens from the free trial have limits. If you want to speed up the process, consider paying for the service or using multiple OpenAI tokens.

- PRs are welcome

Thanks

Contribution

- Any issues or PRs are welcome.

- TODOs in the issue can also be selected.

- Please run `black make_book.py` before submitting the code.

Other, better tools

- 书译 iOS -> AI whole-book translation tool

Appreciation

Thank you, that's enough.


File details

Details for the file bbook_maker-1.1.0.tar.gz.

File metadata

- Download URL: bbook_maker-1.1.0.tar.gz

- Upload date:

- Size: 53.8 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.12.9

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 151e3a9a743d9753661bac23eb6c92762b3c55391e9bf34be5aa498caaa23da1 |
| MD5 | a50eebed91eda39de7cf4415d0f955a4 |
| BLAKE2b-256 | eb032eda7dbfb689bf930076abedc0983449d3979f93263254431c034fc83190 |

File details

Details for the file bbook_maker-1.1.0-py3-none-any.whl.

File metadata

- Download URL: bbook_maker-1.1.0-py3-none-any.whl

- Upload date:

- Size: 60.8 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.12.9

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | c4a37814835d9c218ff00ab2c7f4c706077ed35a747552b9365bc6f158665154 |
| MD5 | 9081a6a1e64ebb9864cb0a00bdee6b34 |
| BLAKE2b-256 | 8c44242f7a2889037ab8953afc9a89cfef5f9cac9f3331a422ab4285080ac8b3 |