Benchmark interpretability methods.


BIM - Benchmark Interpretability Method

This repository contains the dataset, models, and metrics for benchmarking interpretability methods (BIM), as described in the paper:

- Title: "BIM: Towards Quantitative Evaluation of Interpretability Methods with Ground Truth"

- Authors: Sherry (Mengjiao) Yang, Been Kim

If you use this library, please cite:

    @Article{BIM2019,
      title = {{BIM: Towards Quantitative Evaluation of Interpretability Methods with Ground Truth}},
      author = {Yang, Mengjiao and Kim, Been},
      year = {2019}
    }

Setup

Run the following from the root directory of this repository to install the Python dependencies, download the BIM models, download MSCOCO and MiniPlaces, and construct the BIM dataset.

pip install bim

source scripts/download_models.sh

source scripts/download_datasets.sh

python scripts/construct_bim_dataset.py

Dataset

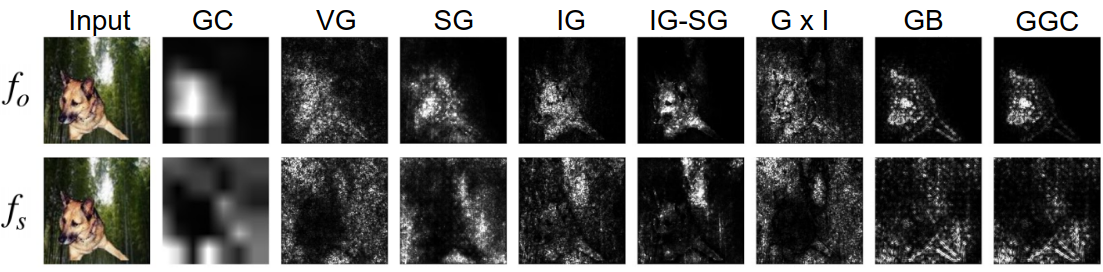

Images in data/obj and data/scene are the same but have object and scene labels respectively, as shown in the figure above. val_loc.txt records the top-left and bottom-right corners of the object, and val_mask contains the binary masks of the object in the validation set. Additional sets and their usage are described in the table below.

| Name | Training | Validation | Usage | Description |
|---|---|---|---|---|
| obj | 90,000 | 10,000 | Model contrast | Objects and scenes with object labels |
| scene | 90,000 | 10,000 | Model contrast & Input dependence | Objects and scenes with scene labels |
| scene_only | 90,000 | 10,000 | Input dependence | Scene-only images with scene labels |
| dog_bedroom | - | 200 | Relative model contrast | Dog in bedroom labeled as bedroom |
| bamboo_forest | - | 100 | Input independence | Scene-only images of bamboo forest |
| bamboo_forest_patch | - | 100 | Input independence | Bamboo forest with functionally insignificant dog patch |
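The bounding boxes in val_loc.txt and the binary masks in val_mask both mark the object region. As a rough illustration of how the two relate, the sketch below turns a box into a binary mask. The function name `box_to_mask`, the `(x_min, y_min, x_max, y_max)` ordering, and the exclusive bottom-right corner are assumptions made for this example; the actual layout of val_loc.txt is not documented here and may differ.

```python
def box_to_mask(height, width, box):
    """Build a binary mask (nested lists of 0/1) from a bounding box.

    Assumption: `box` is (x_min, y_min, x_max, y_max) in pixel coordinates,
    with the bottom-right corner treated as exclusive. This convention is
    hypothetical and not taken from the BIM data files.
    """
    x_min, y_min, x_max, y_max = box
    return [
        [1 if (y_min <= y < y_max and x_min <= x < x_max) else 0
         for x in range(width)]
        for y in range(height)
    ]


# A 3x4 image with the object occupying columns 1-2 of rows 0-1.
mask = box_to_mask(3, 4, (1, 0, 3, 2))
for row in mask:
    print(row)
```

Plain Python lists stand in for image arrays to keep the sketch dependency-free.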

Models

Models in models/obj, models/scene, and models/scene_only are trained on data/obj, data/scene, and data/scene_only respectively. Models in models/scenei for i in {1...10} are trained on images where a dog is added to i scene classes, while the remaining scene classes do not contain any added objects. All models are in TensorFlow's SavedModel format.

Metrics

BIM metrics compare how interpretability methods perform across models (model contrast), across inputs to the same model (input dependence), and across functionally equivalent inputs (input independence).

Model contrast scores

Given images that contain both objects and scenes, model contrast measures the difference in attributions between the model trained on object labels and the model trained on scene labels.

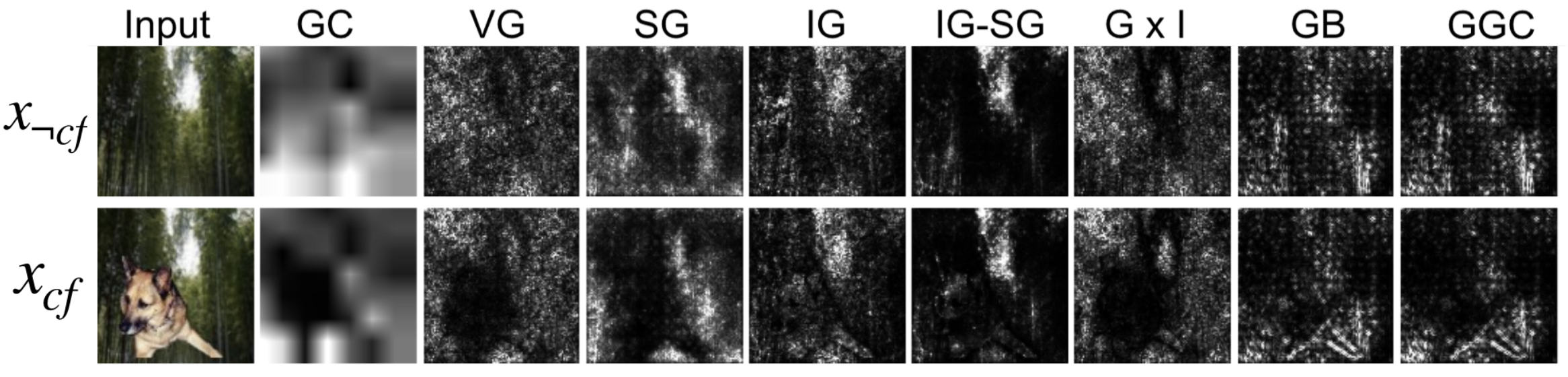
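One way to read the model contrast definition is sketched below: average each attribution map over the object region, then take the mean difference between the object model and the scene model. This is a simplified illustration, not the code in bim/metrics.py. The helper names `region_mean` and `model_contrast_score`, and the use of a plain mean over the masked pixels, are assumptions for this example.

```python
def region_mean(attribution, mask):
    """Average attribution over the pixels where mask is nonzero."""
    total, count = 0.0, 0
    for attr_row, mask_row in zip(attribution, mask):
        for value, inside in zip(attr_row, mask_row):
            if inside:
                total += value
                count += 1
    return total / count if count else 0.0


def model_contrast_score(obj_attrs, scene_attrs, masks):
    """Mean difference in object-region attribution between two models.

    obj_attrs / scene_attrs: per-image attribution maps (nested lists)
    from the object-label model and the scene-label model (hypothetical
    inputs; any saliency method could produce them).
    masks: per-image binary masks of the object region.
    """
    diffs = [
        region_mean(a_obj, m) - region_mean(a_scene, m)
        for a_obj, a_scene, m in zip(obj_attrs, scene_attrs, masks)
    ]
    return sum(diffs) / len(diffs)


# One 2x2 image whose object occupies the right column.
masks = [[[0, 1], [0, 1]]]
obj_attrs = [[[0.0, 1.0], [0.0, 0.5]]]      # object model focuses on the object
scene_attrs = [[[0.5, 0.25], [0.5, 0.25]]]  # scene model focuses elsewhere
print(model_contrast_score(obj_attrs, scene_attrs, masks))
```

Under this reading, a larger score means the method attributes more importance to the object for the model that actually relies on it.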

Input dependence rate

Given a model trained on scene labels, input dependence measures the percentage of inputs where adding an object causes the object's region to be attributed as less important.

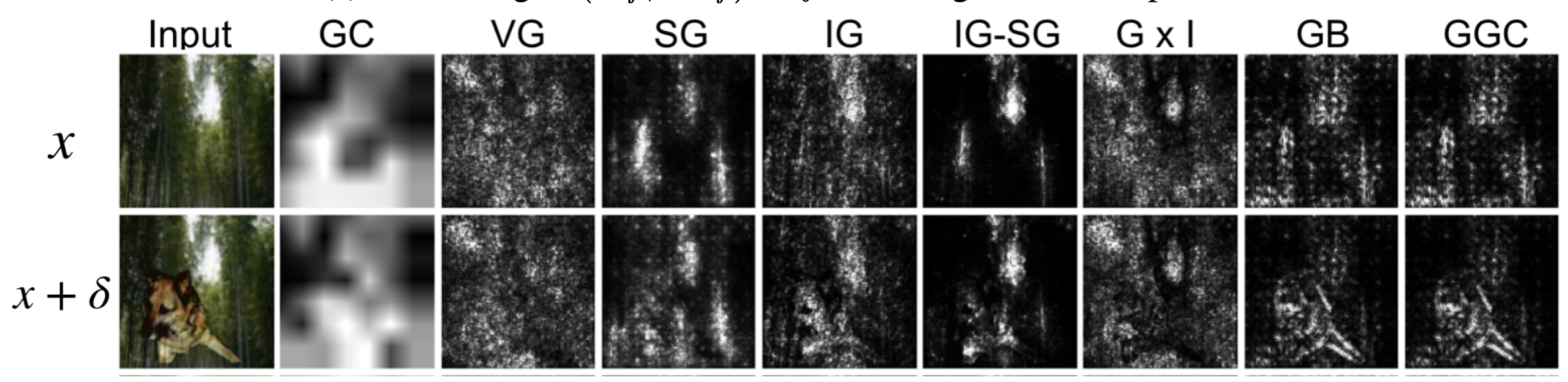
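The input dependence rate can be sketched as a simple count over paired images. The example assumes that a per-image region score (for instance, the mean attribution inside the object's region) has already been computed for each image with and without the object. The function name `input_dependence_rate` and this scalar-score interface are assumptions for illustration, not the interface of bim/metrics.py.

```python
def input_dependence_rate(scores_with_object, scores_without_object):
    """Fraction of image pairs where the region is attributed as less
    important once the object is added.

    Both arguments are hypothetical per-image region scores under the
    scene-label model, aligned so that index i refers to the same scene.
    """
    if len(scores_with_object) != len(scores_without_object):
        raise ValueError("score lists must have the same length")
    hits = sum(
        1 for with_obj, without_obj
        in zip(scores_with_object, scores_without_object)
        if with_obj < without_obj
    )
    return hits / len(scores_with_object)


with_object = [0.1, 0.4, 0.2, 0.3]
without_object = [0.3, 0.2, 0.5, 0.6]
print(input_dependence_rate(with_object, without_object))  # 0.75
```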

Input independence rate

Given a model trained on scene-only images, input independence measures the percentage of inputs where a functionally insignificant patch (e.g., a dog) does not affect explanations significantly.
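A minimal sketch of the input independence rate follows. It treats an explanation as "not significantly affected" when a per-image region score changes by less than a threshold. The function name `input_independence_rate`, the scalar region scores, and the `threshold` parameter are all assumptions for this example; the description above does not specify how significance is decided.

```python
def input_independence_rate(scores_original, scores_patched, threshold):
    """Fraction of images whose region score changes by less than
    `threshold` after a functionally insignificant patch is added.

    scores_original / scores_patched: hypothetical per-image region
    scores for the same images without and with the patch.
    threshold: assumed cutoff for a "significant" change.
    """
    if len(scores_original) != len(scores_patched):
        raise ValueError("score lists must have the same length")
    stable = sum(
        1 for before, after in zip(scores_original, scores_patched)
        if abs(after - before) < threshold
    )
    return stable / len(scores_original)


original = [0.10, 0.20, 0.30]
patched = [0.12, 0.50, 0.31]
# Images 1 and 3 change by less than 0.05; image 2 does not.
print(input_independence_rate(original, patched, threshold=0.05))
```

The result depends on the chosen threshold, so it should be reported alongside the rate.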

Evaluate saliency methods

To compute the model contrast score (MCS) over 10 randomly selected images, run

python bim/metrics.py --metrics=MCS --num_imgs=10

To compute the input dependence rate (IDR), change --metrics to IDR. To compute the input independence rate (IIR), first construct a set of functionally insignificant patches by running

python scripts/construct_delta_patch.py

and then evaluate IIR by running

python bim/metrics.py --metrics=IIR --num_imgs=10

Evaluate TCAV

TCAV is a global concept attribution method whose MCS can be measured by comparing the TCAV scores of a particular object concept for the object model and the scene model. Run the following to compute the TCAV scores of the dog concept for the object model.

python bim/run_tcav.py --model=obj

Disclaimer

This is not an officially supported Google product.
