ml4co-kit provides convenient dataset generators for combinatorial optimization problems


📚 Introductions

Combinatorial Optimization (CO) is a mathematical optimization area that involves finding the best solution from a large set of discrete possibilities, often under constraints. Widely applied in routing, logistics, hardware design, and biology, CO addresses NP-hard problems critical to computer science and industrial engineering.

ML4CO-Kit aims to provide foundational support for machine learning practices on CO problems.

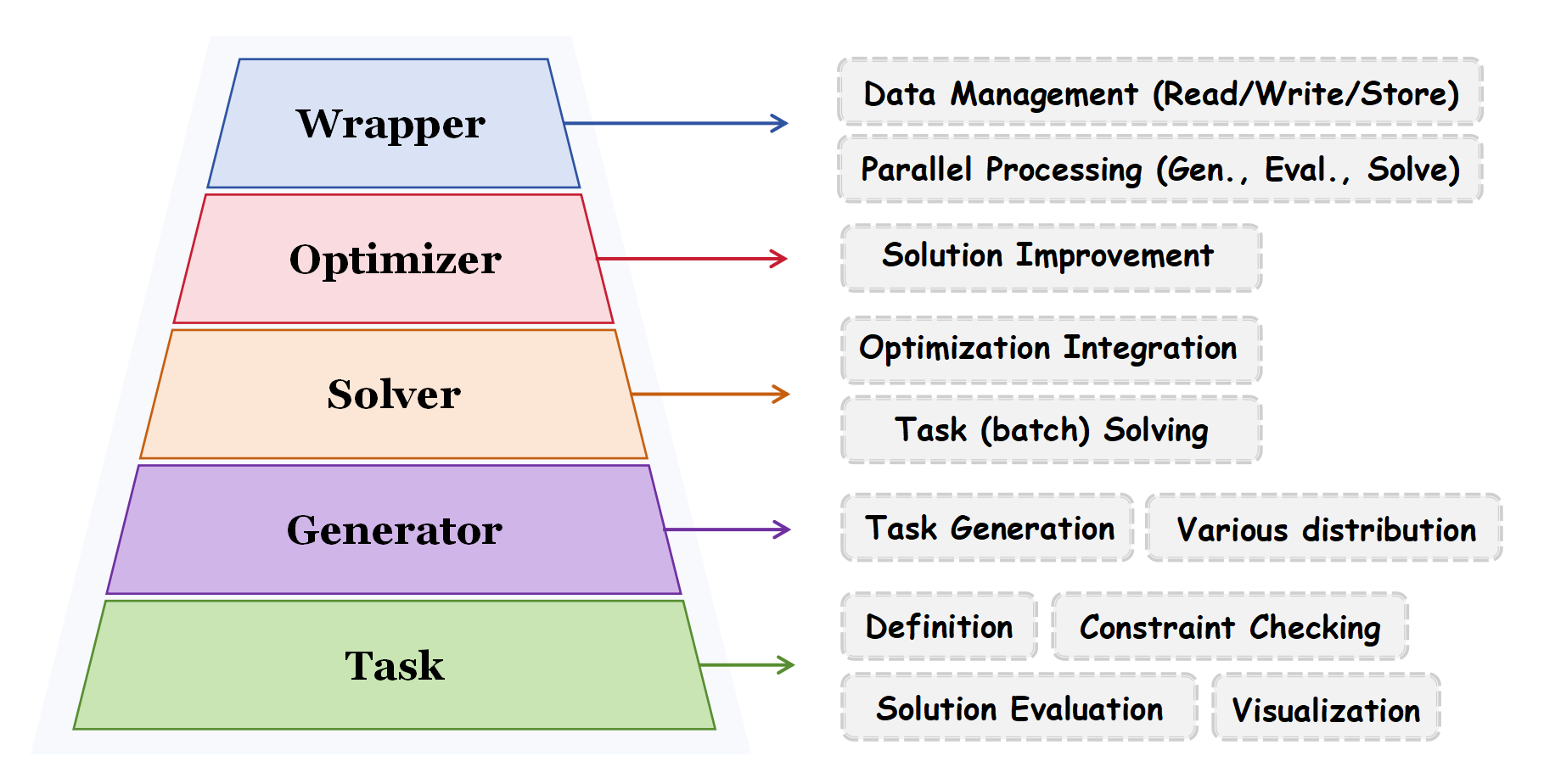

We have designed the ML4CO-Kit into five levels:

- Task (Level 1): the smallest processing unit, where each task represents a problem instance. The task level mainly covers the definition of CO problems, the evaluation of solutions (including constraint checking), and problem visualization.
- Generator (Level 2): creates task instances of a specific structure or distribution based on the set parameters.
- Solver (Level 3): a variety of solvers. Depending on its scope of application, each solver handles specific types of task instances and can be combined with optimizers to further improve its results.
- Optimizer (Level 4): further optimizes the initial solution obtained by the solver.
- Wrapper (Level 5): user-friendly wrappers that handle data reading and writing, task storage, and parallelized generation and solving.
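To make the division of labor between the five levels concrete, here is a minimal, self-contained sketch on a toy TSP. Every class name in it (`ToyTSPTask`, `ToyUniformGenerator`, `ToyNearestSolver`, `ToyTwoOptOptimizer`, `ToyWrapper`) is hypothetical and written only for illustration; none of them is part of the ML4CO-Kit API, and the real classes have richer interfaces.

```python
import math
import random
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class ToyTSPTask:
    """Level 1: one problem instance with constraint checking and evaluation."""
    points: List[Tuple[float, float]]
    sol: Optional[List[int]] = None  # a tour visiting every node once

    def check_constraints(self, sol: List[int]) -> bool:
        # A valid tour is a permutation of all node indices.
        return sorted(sol) == list(range(len(self.points)))

    def evaluate(self, sol: List[int]) -> float:
        # Length of the closed tour.
        if not self.check_constraints(sol):
            raise ValueError("invalid tour")
        n = len(sol)
        return sum(
            math.dist(self.points[sol[i]], self.points[sol[(i + 1) % n]])
            for i in range(n)
        )


class ToyUniformGenerator:
    """Level 2: creates instances from a chosen distribution (uniform here)."""
    def __init__(self, nodes_num: int, seed: int = 0):
        self.nodes_num = nodes_num
        self.rng = random.Random(seed)

    def generate(self) -> ToyTSPTask:
        pts = [(self.rng.random(), self.rng.random()) for _ in range(self.nodes_num)]
        return ToyTSPTask(points=pts)


class ToyTwoOptOptimizer:
    """Level 4: improves an existing solution by 2-opt local search."""
    def optimize(self, task: ToyTSPTask) -> None:
        best = task.sol[:]
        best_cost = task.evaluate(best)
        improved = True
        while improved:
            improved = False
            for i in range(1, len(best) - 1):
                for j in range(i + 1, len(best)):
                    # Reverse the segment best[i..j] and keep it if shorter.
                    cand = best[:i] + best[i:j + 1][::-1] + best[j + 1:]
                    cost = task.evaluate(cand)
                    if cost < best_cost - 1e-12:
                        best, best_cost, improved = cand, cost, True
        task.sol = best


class ToyNearestSolver:
    """Level 3: builds a solution, optionally refined by an optimizer."""
    def __init__(self, optimizer: Optional[ToyTwoOptOptimizer] = None):
        self.optimizer = optimizer

    def solve(self, task: ToyTSPTask) -> None:
        unvisited = set(range(1, len(task.points)))
        tour = [0]
        while unvisited:
            last = task.points[tour[-1]]
            nxt = min(unvisited, key=lambda k: math.dist(last, task.points[k]))
            unvisited.remove(nxt)
            tour.append(nxt)
        task.sol = tour
        if self.optimizer is not None:
            self.optimizer.optimize(task)


class ToyWrapper:
    """Level 5: manages a list of tasks and drives generation and solving."""
    def __init__(self):
        self.task_list: List[ToyTSPTask] = []

    def generate(self, generator: ToyUniformGenerator, num_samples: int) -> None:
        self.task_list = [generator.generate() for _ in range(num_samples)]

    def solve(self, solver: ToyNearestSolver) -> None:
        for task in self.task_list:
            solver.solve(task)

    def evaluate(self) -> float:
        costs = [t.evaluate(t.sol) for t in self.task_list]
        return sum(costs) / len(costs)


wrapper = ToyWrapper()
wrapper.generate(ToyUniformGenerator(nodes_num=12, seed=1), num_samples=4)
wrapper.solve(ToyNearestSolver())
cost_plain = wrapper.evaluate()
wrapper.solve(ToyNearestSolver(optimizer=ToyTwoOptOptimizer()))
cost_opt = wrapper.evaluate()
```

Because 2-opt only accepts strictly shorter tours, `cost_opt` can never exceed `cost_plain`, which mirrors how a Level 4 optimizer refines a Level 3 solver's output.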

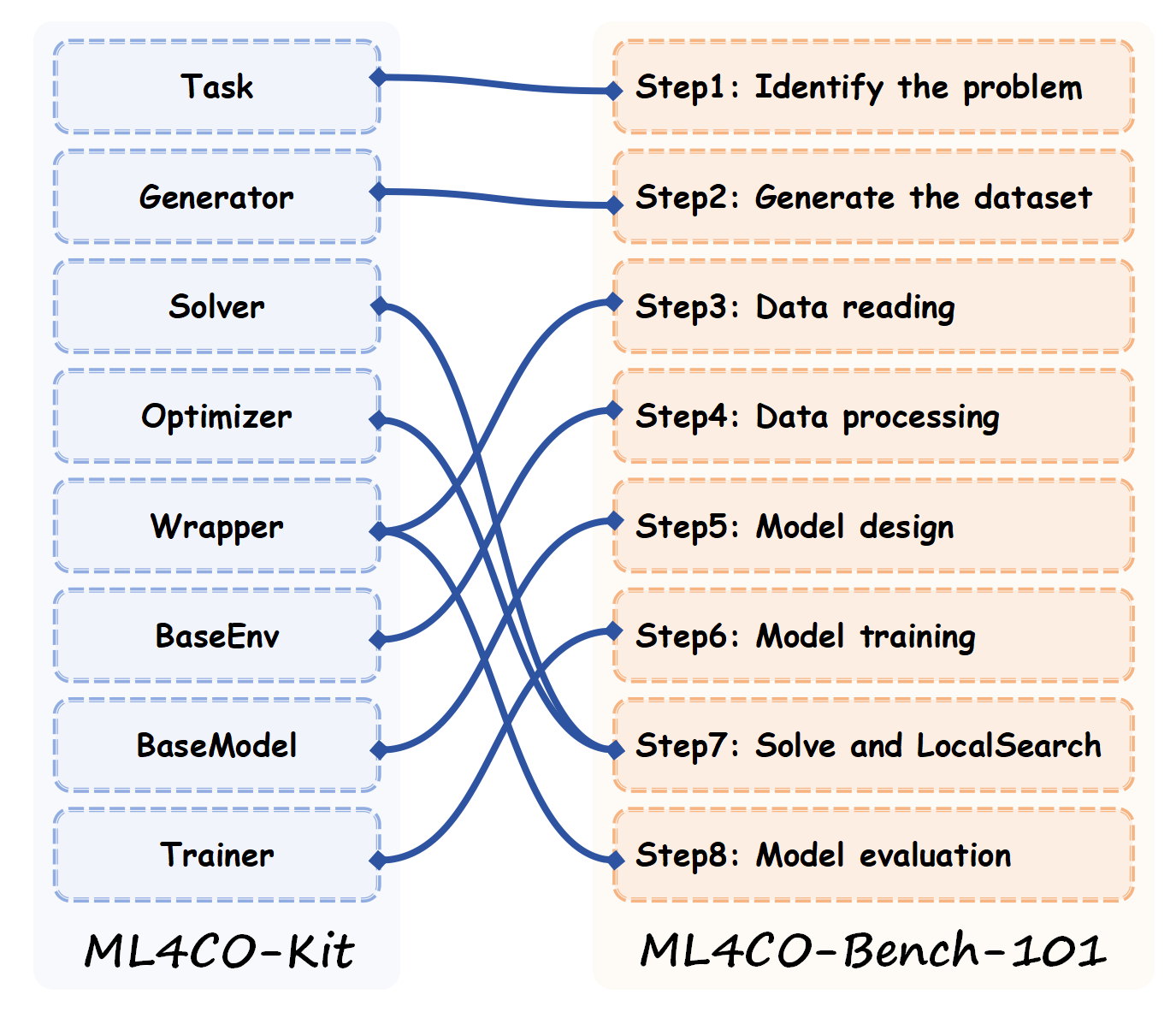

Additionally, for higher-level ML4CO services (see ML4CO-Bench-101), we also provide learning base classes (see ml4co_kit/learning) built on the PyTorch-Lightning framework, including BaseEnv, BaseModel, and Trainer. The following figure illustrates the relationship between the ML4CO-Kit and ML4CO-Bench-101.

We are still enriching the library and we welcome any contributions/ideas/suggestions from the community.

⭐ Official Documentation: https://ml4co-kit.readthedocs.io/en/latest/

⭐ Source Code: https://github.com/Thinklab-SJTU/ML4CO-Kit

🚀 Installation

You can install the stable release from PyPI:

$ pip install ml4co-kit

or get the latest version by running:

$ pip install -U https://github.com/Thinklab-SJTU/ML4CO-Kit/archive/master.zip # with --user for user install (no root)

The following packages are required and will be installed automatically by pip:

Python>=3.8

numpy>=1.24.3

networkx>=2.8.8

tqdm>=4.66.3

cython>=3.0.8

pulp>=2.8.0

scipy>=1.10.1

aiohttp>=3.10.11

requests>=2.32.0

matplotlib>=3.7.0

async_timeout>=4.0.3

pyvrp>=0.6.3

gurobipy>=11.0.3

scikit-learn>=1.3.0

ortools>=9.12.4544

huggingface_hub>=0.32.0

setuptools>=75.0.0

PySCIPOpt>=5.6.0

pybind11>=3.0.1

To access all features, you also need to install the pytorch_lightning-related environment. We provide an installation helper, which you can run with the following code.

    import sys
    from packaging import version
    from ml4co_kit import EnvInstallHelper

    if __name__ == "__main__":
        # Get python version
        python_version = sys.version.split()[0]

        # Get pytorch version
        if version.parse(python_version) < version.parse("3.12"):
            pytorch_version = "2.1.0"
        elif version.parse(python_version) < version.parse("3.13"):
            pytorch_version = "2.4.0"
        else:
            pytorch_version = "2.7.0"

        # Install pytorch environment
        env_install_helper = EnvInstallHelper(pytorch_version=pytorch_version)
        env_install_helper.install()

⚠️ 2025-10-14: While testing on NVIDIA GeForce RTX 50-series GPUs, we encountered the error below. To fix it, we recommend upgrading to a driver that supports CUDA 12.8 or later and installing the corresponding PyTorch build from the official PyTorch website.

XXX with CUDA capability sm_120 is not compatible with the current PyTorch installation.

The current PyTorch install supports CUDA capabilities sm_50 sm_60 sm_70 sm_75 sm_80 sm_86 sm_90.

    import os

    # download torch==2.8.0+cu128 from pytorch.org
    os.system("pip install torch==2.8.0+cu128 --index-url https://download.pytorch.org/whl/cu128")

    # download torch-X (scatter, sparse, spline-conv, cluster)
    html_link = "https://pytorch-geometric.com/whl/torch-2.8.0+cu128.html"
    os.system(f"pip install --no-index torch-scatter -f {html_link}")
    os.system(f"pip install --no-index torch-sparse -f {html_link}")
    os.system(f"pip install --no-index torch-spline-conv -f {html_link}")
    os.system(f"pip install --no-index torch-cluster -f {html_link}")

    # wandb (the requirement is quoted so the shell does not treat ">" as a redirection)
    os.system('pip install "wandb>=0.20.0"')

    # pytorch-lightning
    os.system("pip install pytorch-lightning==2.5.3")

    # torch_geometric
    os.system("pip install torch_geometric==2.7.0")

After the environment is installed, run the following command to confirm that the PyTorch build supports sm_120.

>>> import torch

>>> print(torch.cuda.get_arch_list())

['sm_70', 'sm_75', 'sm_80', 'sm_86', 'sm_90', 'sm_100', 'sm_120']
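The check above can also be automated. The sketch below is a simplified illustration: the helper names `capability_to_arch` and `build_supports_device` are hypothetical, and the logic only tests for an exact `sm_XY` match, which is stricter than what PyTorch itself may accept. With a real installation, the two inputs would presumably come from `torch.cuda.get_arch_list()` and `torch.cuda.get_device_capability()`; here they are hard-coded from the messages shown in this section so the example runs without a GPU.

```python
from typing import Iterable, Tuple


def capability_to_arch(capability: Tuple[int, int]) -> str:
    """Convert a (major, minor) compute capability into an ``sm_XY`` tag."""
    major, minor = capability
    return f"sm_{major}{minor}"


def build_supports_device(arch_list: Iterable[str], capability: Tuple[int, int]) -> bool:
    """Return True if the device's architecture tag appears in the build's arch list."""
    return capability_to_arch(capability) in set(arch_list)


# Arch lists copied from the error message and from the expected output above.
old_build = ["sm_50", "sm_60", "sm_70", "sm_75", "sm_80", "sm_86", "sm_90"]
new_build = ["sm_70", "sm_75", "sm_80", "sm_86", "sm_90", "sm_100", "sm_120"]
rtx50_capability = (12, 0)  # sm_120, as reported in the error message

old_ok = build_supports_device(old_build, rtx50_capability)
new_ok = build_supports_device(new_build, rtx50_capability)
```

Here `old_ok` is false and `new_ok` is true, matching the situation described in the warning.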

⚠️ 2025-10-21: We find that on macOS, the gurobipy package does not support Python 3.8 or earlier. Therefore, please upgrade your Python to at least 3.9.

⚠️ 2026-03-13: For Python versions 3.9 to 3.11 on macOS, it is necessary to downgrade setuptools (recommended versions: 75.0.0 ~ 80.9.0).

📝 ML4CO-Kit Development status

We present the development status of ML4CO-Kit for each of the five levels above.

Graph: MCl & MCut & MIS & MVC; Routing: ATSP & OP & PCTSP & SPCTSP & TSP & CVRP (B/BL/BLTW/BTW/L/LTW/TW); Portfolio: MaxRetPO & MinVarPO & MOPO; SAT: SATA & SATP; EDA: EDAP

✔: Supported; 📆: Planned for future versions (contributions welcomed!).

Task (Level 1)

| Task | Definition | Check Constraint | Evaluation | Render | Special R/O |
|---|---|---|---|---|---|
| Routing Tasks | | | | | |
| Asymmetric TSP (ATSP) | ✔ | ✔ | ✔ | 📆 | tsplib |
| Orienteering Problem (OP) | ✔ | ✔ | ✔ | 📆 | |
| Prize Collection TSP (PCTSP) | ✔ | ✔ | ✔ | 📆 | |
| Stochastic PCTSP (SPCTSP) | ✔ | ✔ | ✔ | 📆 | |
| Traveling Salesman Problem (TSP) | ✔ | ✔ | ✔ | ✔ | tsplib |
| Capacitated Vehicle Routing Problem (CVRP) | ✔ | ✔ | ✔ | ✔ | vrplib |
| CVRP with Backhauls (CVRPB) | ✔ | ✔ | ✔ | 📆 | |
| CVRP with Backhauls and Length Limit (CVRPBL) | ✔ | ✔ | ✔ | 📆 | |
| CVRP with Backhauls, Length Limit and TW (CVRPBLTW) | ✔ | ✔ | ✔ | 📆 | |
| CVRP with Backhauls and Time Windows (CVRPBTW) | ✔ | ✔ | ✔ | 📆 | |
| CVRP with Length Limit (CVRPL) | ✔ | ✔ | ✔ | 📆 | |
| CVRP with Length Limit and Time Windows (CVRPLTW) | ✔ | ✔ | ✔ | 📆 | |
| CVRP with Time Windows (CVRPTW) | ✔ | ✔ | ✔ | 📆 | |
| Graph Tasks | | | | | |
| Maximum Clique (MCl) | ✔ | ✔ | ✔ | ✔ | gpickle, adj_matrix, networkx, csr |
| Maximum Cut (MCut) | ✔ | ✔ | ✔ | ✔ | gpickle, adj_matrix, networkx, csr |
| Maximum Independent Set (MIS) | ✔ | ✔ | ✔ | ✔ | gpickle, adj_matrix, networkx, csr |
| Minimum Vertex Cover (MVC) | ✔ | ✔ | ✔ | ✔ | gpickle, adj_matrix, networkx, csr |
| QAP Tasks | | | | | |
| Graph Matching (GM) | ✔ | ✔ | ✔ | 📆 | |
| Graph Edit Distance (GED) | ✔ | ✔ | ✔ | 📆 | |
| Koopmans-Beckmann QAP (KQAP) | ✔ | ✔ | ✔ | 📆 | |
| SAT Tasks | | | | | |
| Satisfiability Prediction (SATP) | ✔ | ✔ | ✔ | 📆 | cnf |
| Satisfying Assignment Prediction (SATA) | ✔ | ✔ | ✔ | 📆 | cnf |
| Portfolio Tasks | | | | | |
| Maximum Return Portfolio Optimization (MaxRetPO) | ✔ | ✔ | ✔ | 📆 | |
| Minimum Variance Portfolio Optimization (MinVarPO) | ✔ | ✔ | ✔ | 📆 | |
| Multi-Objective Portfolio Optimization (MOPO) | ✔ | ✔ | ✔ | 📆 | |
| EDA Tasks | | | | | |
| EDA Placement (EDAP) | ✔ | ✔ | ✔ | 📆 | bookshelf |
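As an illustration of what the "Check Constraint" and "Evaluation" columns mean for a graph task, the following sketch handles Maximum Independent Set on a plain edge list. The function names `check_mis_constraint` and `evaluate_mis` are hypothetical and the 0/1 node labeling is an assumed encoding; the actual task classes in ML4CO-Kit may represent graphs and solutions differently.

```python
from typing import Iterable, List, Optional, Tuple


def check_mis_constraint(edges: Iterable[Tuple[int, int]], sol: List[int]) -> bool:
    """A 0/1 node labeling is feasible if no edge has both endpoints selected."""
    return all(not (sol[u] and sol[v]) for u, v in edges)


def evaluate_mis(
    edges: List[Tuple[int, int]],
    sol: List[int],
    weights: Optional[List[float]] = None,
) -> float:
    """Return the (weighted) size of the selected set; reject infeasible input."""
    if not check_mis_constraint(edges, sol):
        raise ValueError("solution violates the independence constraint")
    if weights is None:
        weights = [1.0] * len(sol)
    return sum(w for w, s in zip(weights, sol) if s)


# A 4-cycle 0-1-2-3-0: opposite corners form an independent set.
edges = [(0, 1), (1, 2), (2, 3), (3, 0)]
feasible = [1, 0, 1, 0]
infeasible = [1, 1, 0, 0]  # nodes 0 and 1 are adjacent

feasible_value = evaluate_mis(edges, feasible)
```

Running the constraint check before evaluation keeps an infeasible labeling from being scored as if it were a valid solution.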

Generator (Level 2)

| Task | Distribution | Brief Intro. | State |
|---|---|---|---|
| Routing Tasks | | | |
| ATSP | Uniform | Random distance matrix with triangle inequality | ✔ |
| | SAT | SAT problem transformed to ATSP | ✔ |
| | HCP | Hamiltonian Cycle Problem transformed to ATSP | ✔ |
| CVRP | Uniform | Random coordinates with uniform distribution | ✔ |
| | Gaussian | Random coordinates with Gaussian distribution | ✔ |
| CVRPB | Uniform | CVRP with backhauls (uniform) | ✔ |
| | Gaussian | CVRP with backhauls (Gaussian) | ✔ |
| CVRPBL | Uniform | CVRPB + route length limit | ✔ |
| | Gaussian | CVRPB + route length limit (Gaussian) | ✔ |
| CVRPBLTW | Uniform | CVRPBL + time windows | ✔ |
| | Gaussian | CVRPBL + time windows (Gaussian) | ✔ |
| CVRPBTW | Uniform | CVRPB + time windows | ✔ |
| | Gaussian | CVRPB + time windows (Gaussian) | ✔ |
| CVRPL | Uniform | CVRP + route length limit | ✔ |
| | Gaussian | CVRP + route length limit (Gaussian) | ✔ |
| CVRPLTW | Uniform | CVRPL + time windows | ✔ |
| | Gaussian | CVRPL + time windows (Gaussian) | ✔ |
| CVRPTW | Uniform | CVRP + time windows | ✔ |
| | Gaussian | CVRP + time windows (Gaussian) | ✔ |
| OP | Uniform | Random prizes with uniform distribution | ✔ |
| | Constant | All prizes are constant | ✔ |
| | Distance | Prizes based on distance from depot | ✔ |
| PCTSP | Uniform | Random prizes with uniform distribution | ✔ |
| SPCTSP | Uniform | Random prizes with uniform distribution | ✔ |
| TSP | Uniform | Random coordinates with uniform distribution | ✔ |
| | Gaussian | Random coordinates with Gaussian distribution | ✔ |
| | Cluster | Coordinates clustered around random centers | ✔ |
| Graph Tasks | | | |
| (Graph) | ER (structure) | Erdos-Renyi random graph | ✔ |
| | BA (structure) | Barabasi-Albert scale-free graph | ✔ |
| | HK (structure) | Holme-Kim small-world graph | ✔ |
| | WS (structure) | Watts-Strogatz small-world graph | ✔ |
| | RB (structure) | RB-Model graph | ✔ |
| | Uniform (weighted) | Weights with Uniform distribution | ✔ |
| | Gaussian (weighted) | Weights with Gaussian distribution | ✔ |
| | Poisson (weighted) | Weights with Poisson distribution | ✔ |
| | Exponential (weighted) | Weights with Exponential distribution | ✔ |
| | Lognormal (weighted) | Weights with Lognormal distribution | ✔ |
| | Powerlaw (weighted) | Weights with Powerlaw distribution | ✔ |
| | Binomial (weighted) | Weights with Binomial distribution | ✔ |
| QAP Tasks | | | |
| GM | ISO | Isomorphic Graph matching | ✔ |
| GM | SUB | Subgraph Graph matching | ✔ |
| SAT Tasks | | | |
| (SAT) | PHASE | Near satisfiability phase transition | ✔ |
| | SR | SAT/UNSAT paired generation | ✔ |
| | CA | Community Attachment generator | ✔ |
| | PS | Popularity Similarity generator | ✔ |
| | K_CLIQUE | Reduction-based SAT instance generation | ✔ |
| | K_CLIQUE | Reduction-based SAT instance generation | ✔ |
| | K_CLIQUE | Reduction-based SAT instance generation | ✔ |
| Portfolio Tasks | | | |
| (Portfolio) | GBM | Geometric Brownian Motion model | ✔ |
| | Factor | Factor model with k factors and idiosyncratic noise | ✔ |
| | VAR(1) | Vector Autoregressive model of order 1 | ✔ |
| | MVT | Multivariate T distribution model | ✔ |
| | GRACH | GARCH model for volatility clustering | ✔ |
| | Jump | Merton Jump-Diffusion model | ✔ |
| | Regime | Regime-Switching model with multiple states | ✔ |
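For readers unfamiliar with the graph distributions listed above, here is a minimal sketch of what an "ER (structure)" plus "Uniform (weighted)" combination could look like, using only the standard library. The function names and parameters (`generate_er_edges`, `attach_uniform_weights`, `edge_prob`) are hypothetical, and the assumption that weights are attached to nodes is ours; this is not the generator shipped with ML4CO-Kit.

```python
import itertools
import random
from typing import List, Tuple


def generate_er_edges(nodes_num: int, edge_prob: float, seed: int = 0) -> List[Tuple[int, int]]:
    """Sample an undirected Erdos-Renyi G(n, p) graph as a list of edges (u < v)."""
    rng = random.Random(seed)
    return [
        (u, v)
        for u, v in itertools.combinations(range(nodes_num), 2)
        if rng.random() < edge_prob  # keep each possible edge independently
    ]


def attach_uniform_weights(
    nodes_num: int, low: float = 0.0, high: float = 1.0, seed: int = 0
) -> List[float]:
    """Draw one weight per node from a uniform distribution on [low, high]."""
    rng = random.Random(seed)
    return [rng.uniform(low, high) for _ in range(nodes_num)]


edges = generate_er_edges(nodes_num=20, edge_prob=0.15, seed=1234)
weights = attach_uniform_weights(nodes_num=20, low=0.0, high=1.0, seed=1234)
```

Separating structure from weights in this way matches how the table lists them as independent choices.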

Solver (Level 3)

| Solver | Support Task | Language | Source | Ref. / Implementation | State |
|---|---|---|---|---|---|
| ConcordeSolver | TSP | C/C++ | Concorde | PyConcorde | ✔ |
| GAEAXSolver | TSP | C/C++ | GA-EAX | GA-EAX | ✔ |
| GNN4COSolver(Beam) | MCl | Python | ML4CO-Kit | ML4CO-Kit | ✔ |
| | MIS | Python | ML4CO-Kit | ML4CO-Kit | ✔ |
| GNN4COSolver(Greedy) | ATSP | C/C++ | ML4CO-Kit | ML4CO-Kit | ✔ |
| | CVRP | Python | ML4CO-Kit | ML4CO-Kit | ✔ |
| | TSP | Cython | DIFUSCO | DIFUSCO | ✔ |
| | MCl | Python | ML4CO-Kit | ML4CO-Kit | ✔ |
| | MCut | Python | ML4CO-Kit | ML4CO-Kit | ✔ |
| | MIS | Python | ML4CO-Kit | ML4CO-Kit | ✔ |
| | MVC | Python | ML4CO-Kit | ML4CO-Kit | ✔ |
| GNN4COSolver(MCTS) | TSP | Python | Att-GCRN | ML4CO-Kit | ✔ |
| GpDegreeSolver | MCl | Python | ML4CO-Kit | ML4CO-Kit | ✔ |
| | MIS | Python | ML4CO-Kit | ML4CO-Kit | ✔ |
| | MVC | Python | ML4CO-Kit | ML4CO-Kit | ✔ |
| GurobiSolver | ATSP | C/C++ | Gurobi | ML4CO-Kit | ✔ |
| | CVRP | C/C++ | Gurobi | ML4CO-Kit | ✔ |
| | OP | C/C++ | Gurobi | ML4CO-Kit | ✔ |
| | TSP | C/C++ | Gurobi | ML4CO-Kit | ✔ |
| | MCl | C/C++ | Gurobi | DIffUCO | ✔ |
| | MCut | C/C++ | Gurobi | DIffUCO | ✔ |
| | MIS | C/C++ | Gurobi | DIffUCO | ✔ |
| | MVC | C/C++ | Gurobi | DIffUCO | ✔ |
| | MaxRetPO | C/C++ | Gurobi | ML4CO-Kit | ✔ |
| | MinVarPO | C/C++ | Gurobi | ML4CO-Kit | ✔ |
| | MOPO | C/C++ | Gurobi | ML4CO-Kit | ✔ |
| HGSSolver | CVRP | C/C++ | HGS-CVRP | HGS-CVRP | ✔ |
| ILSSolver | PCTSP | Python | PCTSP | PCTSP | ✔ |
| | SPCTSP | Python | Attention | Attention | ✔ |
| InsertionSolver | TSP | Python | GLOP | GLOP | ✔ |
| ISCOSolver | MCl | Python | ISCO | DISCS | ✔ |
| | MCut | Python | ISCO | DISCS | ✔ |
| | MIS | Python | ISCO | DISCS | ✔ |
| | MVC | Python | ISCO | DISCS | ✔ |
| FEMSolver | MCut | Python | FEM | ML4CO-Kit | ✔ |
| KaMISSolver | MIS | Python | KaMIS | MIS-Bench | ✔ |
| LcDegreeSolver | MCl | Python | ML4CO-Kit | ML4CO-Kit | ✔ |
| | MCut | Python | ML4CO-Kit | ML4CO-Kit | ✔ |
| | MIS | Python | ML4CO-Kit | ML4CO-Kit | ✔ |
| | MVC | Python | ML4CO-Kit | ML4CO-Kit | ✔ |
| LKHSolver | TSP | C/C++ | LKH | ML4CO-Kit | ✔ |
| | ATSP | C/C++ | LKH | ML4CO-Kit | ✔ |
| | CVRP | C/C++ | LKH | ML4CO-Kit | ✔ |
| NeuroLKHSolver | TSP | Python | NeuroLKH | ML4CO-Kit | ✔ |
| ORSolver | ATSP | C/C++ | OR-Tools | ML4CO-Kit | ✔ |
| | OP | C/C++ | OR-Tools | ML4CO-Kit | ✔ |
| | PCTSP | C/C++ | OR-Tools | ML4CO-Kit | ✔ |
| | TSP | C/C++ | OR-Tools | ML4CO-Kit | ✔ |
| | MCl | C/C++ | OR-Tools | ML4CO-Kit | ✔ |
| | MIS | C/C++ | OR-Tools | ML4CO-Kit | ✔ |
| | MVC | C/C++ | OR-Tools | ML4CO-Kit | ✔ |
| PyGMSolver | GM | Python | pygmtools | ML4CO-Kit | ✔ |
| | GED | Python | pygmtools | ML4CO-Kit | ✔ |
| | KQAP | Python | pygmtools | ML4CO-Kit | ✔ |
| PySATSolver | SATP | Python | PySAT | ML4CO-Kit | ✔ |
| | SATA | Python | PySAT | ML4CO-Kit | ✔ |
| RLSASolver | MCl | Python | RLSA | ML4CO-Kit | ✔ |
| | MCut | Python | RLSA | ML4CO-Kit | ✔ |
| | MIS | Python | RLSA | ML4CO-Kit | ✔ |
| SCIPSolver | MaxRetPO | C/C++ | PySCIPOpt | ML4CO-Kit | ✔ |
| | MinVarPO | C/C++ | PySCIPOpt | ML4CO-Kit | ✔ |
| | MOPO | C/C++ | PySCIPOpt | ML4CO-Kit | ✔ |
| PyVRPSolver | CVRP | Python | PyVRP | ML4CO-Kit | ✔ |
| | CVRPB | Python | PyVRP | ML4CO-Kit | ✔ |
| | CVRPBL | Python | PyVRP | ML4CO-Kit | ✔ |
| | CVRPBLTW | Python | PyVRP | ML4CO-Kit | ✔ |
| | CVRPBTW | Python | PyVRP | ML4CO-Kit | ✔ |
| | CVRPL | Python | PyVRP | ML4CO-Kit | ✔ |
| | CVRPLTW | Python | PyVRP | ML4CO-Kit | ✔ |
| | CVRPTW | Python | PyVRP | ML4CO-Kit | ✔ |
| NearestSolver | TSP | Python | ML4CO-Kit | ML4CO-Kit | ✔ |
| | CVRP | Python | ML4CO-Kit | ML4CO-Kit | ✔ |
| DreamPlaceSolver | EDAP | Python | DREAMPlace | ML4CO-Kit | ✔ |

Optimizer (Level 4)

| Optimizer | Support Task | IMPL | Source | Ref. / Implementation | State |
|---|---|---|---|---|---|
| CVRPLSOptimizer | CVRP | Ctypes | HGS-CVRP | ML4CO-Kit | ✔ |
| ISCOOptimizer | MCl | Numpy | ISCO | DISCS | ✔ |
| | MCut | Numpy | ISCO | DISCS | ✔ |
| | MIS | Numpy | ISCO | DISCS | ✔ |
| | MVC | Numpy | ISCO | DISCS | ✔ |
| MCTSOptimizer | TSP | Ctypes | Att-GCRN | ML4CO-Kit | ✔ |
| TwoOptOptimizer | ATSP | Ctypes | ML4CO-Kit | ML4CO-Kit | ✔ |
| | TSP | Torch | DIFUSCO | ML4CO-Kit | ✔ |
| | TSP | Pybind11 | GenSCO | GenSCO | ✔ |
| FastTwoOptOptimizer | TSP | Pybind11 | ML4CO-Kit | ML4CO-Kit | ✔ |
| MCMCOptimizer | TSP | Pybind11 | ML4CO-Kit | ML4CO-Kit | ✔ |
| | CVRP | Pybind11 | ML4CO-Kit | ML4CO-Kit | ✔ |
| | MIS | Pybind11 | ML4CO-Kit | ML4CO-Kit | ✔ |

Wrapper (Level 5)

| Wrapper | TXT | Other R&W |
|---|---|---|
| Routing Tasks | | |
| ATSPWrapper | "[dists] output [sol]" | tsplib |
| CVRPWrapper | "depots [depots] points [points] demands [demands] capacity [capacity] output [sol]" | vrplib |
| CVRPBWrapper | "depots [depots] points [points] demands [demands] capacity [capacity] output [sol]" | |
| CVRPBLWrapper | "depots [depots] points [points] demands [demands] capacity [capacity] max_route_length [max_route_length] output [sol]" | |
| CVRPBLTWWrapper | "depots [depots] points [points] demands [demands] capacity [capacity] tw [tw] service [service] max_route_length [max_route_length] output [sol]" | |
| CVRPBTWWrapper | "depots [depots] points [points] demands [demands] capacity [capacity] tw [tw] service [service] output [sol]" | |
| CVRPLWrapper | "depots [depots] points [points] demands [demands] capacity [capacity] max_route_length [max_route_length] output [sol]" | |
| CVRPLTWWrapper | "depots [depots] points [points] demands [demands] capacity [capacity] tw [tw] service [service] max_route_length [max_route_length] output [sol]" | |
| CVRPTWWrapper | "depots [depots] points [points] demands [demands] capacity [capacity] tw [tw] service [service] output [sol]" | |
| ORWrapper | "depots [depots] points [points] prizes [prizes] max_length [max_length] output [sol]" | |
| PCTSPWrapper | "depots [depots] points [points] penalties [penalties] prizes [prizes] required_prize [required_prize] output [sol]" | |
| SPCTSPWrapper | "depots [depots] points [points] penalties [penalties] expected_prizes [expected_prizes] actual_prizes [actual_prizes] required_prize [required_prize] output [sol]" | |
| TSPWrapper | "[points] output [sol]" | tsplib |
| Graph Tasks | | |
| (Graph)Wrapper | "[edge_index] label [sol]" | gpickle |
| (Graph)Wrapper [weighted] | "[edge_index] weights [weights] label [sol]" | gpickle |
| QAP Tasks | | |
| GMWrapper | -- | pickle |
| GEDWrapper | -- | pickle |
| KQAPWrapper | -- | pickle |
| SAT Tasks | | |
| SATPWrapper | "[vars_num] vars_num [clauses] output [sol]" | cnf |
| SATAWrapper | "[vars_num] vars_num [clauses] output [sol]" | cnf |
| Portfolio Tasks | | |
| MaxRetPOWrapper | "[returns] cov [cov] max_var [max_var] output [sol]" | |
| MinVarPOWrapper | "[returns] cov [cov] required_returns [required_returns] output [sol]" | |
| MOPOWrapper | "[returns] cov [cov] var_factor [var_factor] output [sol]" | |
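The TXT column describes one instance per line, with keywords such as `output` separating the fields. As a rough illustration for the TSP template `"[points] output [sol]"`, the sketch below parses and formats such a line. It assumes that `[points]` is a flat, whitespace-separated list of `x y` coordinates and that `[sol]` is a whitespace-separated list of integer node indices; both are assumptions about the layout, the sample line is made up, and the helper names are hypothetical. In practice the wrappers' own `from_txt` loaders should be used.

```python
from typing import List, Tuple


def parse_tsp_txt_line(line: str) -> Tuple[List[Tuple[float, float]], List[int]]:
    """Split a '[points] output [sol]' line into coordinates and a tour."""
    points_part, sol_part = line.strip().split(" output ")
    coords = [float(tok) for tok in points_part.split()]
    if len(coords) % 2 != 0:
        raise ValueError("expected an even number of coordinates")
    # Pair consecutive values as (x, y).
    points = list(zip(coords[0::2], coords[1::2]))
    sol = [int(tok) for tok in sol_part.split()]
    return points, sol


def format_tsp_txt_line(points: List[Tuple[float, float]], sol: List[int]) -> str:
    """Inverse of ``parse_tsp_txt_line`` under the same layout assumptions."""
    points_part = " ".join(f"{x} {y}" for x, y in points)
    sol_part = " ".join(str(i) for i in sol)
    return f"{points_part} output {sol_part}"


# Made-up three-node instance, for illustration only.
sample_line = "0.0 0.0 1.0 0.0 1.0 1.0 output 1 2 3 1"
points, sol = parse_tsp_txt_line(sample_line)
round_trip = format_tsp_txt_line(points, sol)
```

The other templates in the table follow the same idea, with additional keywords (for example `depots`, `demands`, `capacity`) marking where each field starts.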

🔎 How to use ML4CO-Kit

Case-01: How to use ML4CO-Kit to generate a dataset

    # We take the TSP as an example

    # Import the required classes.
    >>> import numpy as np  # Numpy
    >>> from ml4co_kit import TSPWrapper  # The wrapper for TSP, used to manage data and parallel generation.
    >>> from ml4co_kit import TSPGenerator  # The generator for TSP, used to generate a single instance.
    >>> from ml4co_kit import TSP_TYPE  # The distribution types supported by the generator.
    >>> from ml4co_kit import LKHSolver  # We choose LKHSolver to solve TSP instances

    # Check which distributions are supported by the TSP types.
    >>> for type in TSP_TYPE:
    ...     print(type)
    TSP_TYPE.UNIFORM
    TSP_TYPE.GAUSSIAN
    TSP_TYPE.CLUSTER

    # Set the generator parameters according to the requirements.
    >>> tsp_generator = TSPGenerator(
    ...     distribution_type=TSP_TYPE.GAUSSIAN,  # Generate a TSP instance with a Gaussian distribution
    ...     precision=np.float32,  # Floating-point precision: 32-bit
    ...     nodes_num=50,  # Number of nodes in TSP instance
    ...     gaussian_mean_x=0,  # Mean of Gaussian for x coordinate
    ...     gaussian_mean_y=0,  # Mean of Gaussian for y coordinate
    ...     gaussian_std=1,  # Standard deviation of Gaussian
    ... )

    # Set the LKH parameters.
    >>> tsp_solver = LKHSolver(
    ...     lkh_scale=1e6,  # Scaling factor to convert floating-point numbers to integers
    ...     lkh_max_trials=500,  # Maximum number of trials for the LKH algorithm
    ...     lkh_path="LKH",  # Path to the LKH executable
    ...     lkh_runs=1,  # Number of runs for the LKH algorithm
    ...     lkh_seed=1234,  # Random seed for the LKH algorithm
    ...     lkh_special=False,  # When set to True, disables 2-opt and 3-opt heuristics
    ... )

    # Create the TSP wrapper
    >>> tsp_wrapper = TSPWrapper(precision=np.float32)

    # Use ``generate_w_to_txt`` to generate a dataset of TSP.
    >>> tsp_wrapper.generate_w_to_txt(
    ...     file_path="tsp_gaussian_16ins.txt",  # Path to the output file where the generated TSP instances will be saved
    ...     generator=tsp_generator,  # The TSP instance generator to use
    ...     solver=tsp_solver,  # The TSP solver to use
    ...     num_samples=16,  # Number of TSP instances to generate
    ...     num_threads=4,  # Number of CPU threads for parallelization; num_threads and batch_size cannot both differ from 1
    ...     batch_size=1,  # Batch size for parallel processing; num_threads and batch_size cannot both differ from 1
    ...     write_per_iters=1,  # Number of sub-generation steps after which data will be written to the file
    ...     write_mode="a",  # Write mode for the output file ("a" for append)
    ...     show_time=True,  # Whether to display the time taken for the generation process
    ... )
    Generating TSP: 100%|██████████| 4/4 [00:00<00:00, 12.79it/s]

Case-02: How to use ML4CO-Kit to load problems and solve them

    # We take the MIS as an example

    # Import the required classes.
    >>> import numpy as np  # Numpy
    >>> from ml4co_kit import MISWrapper  # The wrapper for MIS, used to manage data and parallel solving.
    >>> from ml4co_kit import KaMISSolver  # We choose KaMISSolver to solve MIS instances

    # Set the KaMIS parameters.
    >>> mis_solver = KaMISSolver(
    ...     kamis_time_limit=10.0,  # The maximum solution time for a single problem
    ...     kamis_weighted_scale=1e5,  # Weight scaling factor, used when nodes have weights.
    ... )

    # Create the MIS wrapper
    >>> mis_wrapper = MISWrapper(precision=np.float32)

    # Load the problems to be solved.
    # You can use the corresponding loading function based on the file type,
    # such as ``from_txt`` for txt file and ``from_pickle`` for pickle file.
    >>> mis_wrapper.from_txt(
    ...     file_path="test_dataset/mis/wrapper/mis_rb-small_uniform-weighted_4ins.txt",
    ...     ref=True,  # TXT file contains labels. Set ``ref=True`` to set them as reference.
    ...     overwrite=True,  # Whether to overwrite the data. If not, only update according to the file data.
    ...     show_time=True  # Whether to display the time taken for the loading process
    ... )
    Loading data from test_dataset/mis/wrapper/mis_rb-small_uniform-weighted_4ins.txt: 4it [00:00, 75.41it/s]

    # Use ``solve`` to call the KaMISSolver to perform the solution.
    >>> mis_wrapper.solve(
    ...     solver=mis_solver,  # The solver to use
    ...     num_threads=2,  # Number of CPU threads for parallelization; num_threads and batch_size cannot both differ from 1
    ...     batch_size=1,  # Batch size for parallel processing; num_threads and batch_size cannot both differ from 1
    ...     show_time=True,  # Whether to display the time taken for the solving process
    ... )
    Solving MIS Using kamis: 100%|██████████| 2/2 [00:21<00:00, 10.97s/it]
    Using Time: 21.947036743164062

    # Use ``evaluate_w_gap`` to obtain the evaluation results.
    # Evaluation Results: average solution value, average reference value, gap (%), gap std.
    >>> eval_result = mis_wrapper.evaluate_w_gap()
    >>> print(eval_result)
    (14.827162742614746, 15.18349838256836, 2.5054726600646973, 2.5342845916748047)
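One detail of this output is worth a note: the printed gap (about 2.51%) is not the gap between the two printed averages (which would be about 2.35%), so the gap appears to be computed per instance and then averaged. The sketch below illustrates that reading. The formula `|sol - ref| / |ref| * 100`, the use of a population standard deviation, and the function name `evaluate_w_gap_sketch` are assumptions for illustration, not the library's confirmed implementation, and the input values are made up.

```python
import statistics
from typing import List, Tuple


def evaluate_w_gap_sketch(
    sol_values: List[float], ref_values: List[float]
) -> Tuple[float, float, float, float]:
    """Return (avg solution, avg reference, mean gap in %, std of gaps in %)."""
    # Gap of each instance relative to its own reference value.
    gaps = [abs(s - r) / abs(r) * 100.0 for s, r in zip(sol_values, ref_values)]
    return (
        statistics.fmean(sol_values),
        statistics.fmean(ref_values),
        statistics.fmean(gaps),
        statistics.pstdev(gaps),
    )


# Made-up objective values for two instances.
sol_values = [9.0, 20.0]
ref_values = [10.0, 20.0]
avg_sol, avg_ref, avg_gap, gap_std = evaluate_w_gap_sketch(sol_values, ref_values)

# Gap between the two averages, for comparison with the mean per-instance gap.
gap_of_averages = abs(avg_sol - avg_ref) / abs(avg_ref) * 100.0
```

With these inputs the mean per-instance gap is larger than `gap_of_averages`, the same kind of discrepancy visible in the output above.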

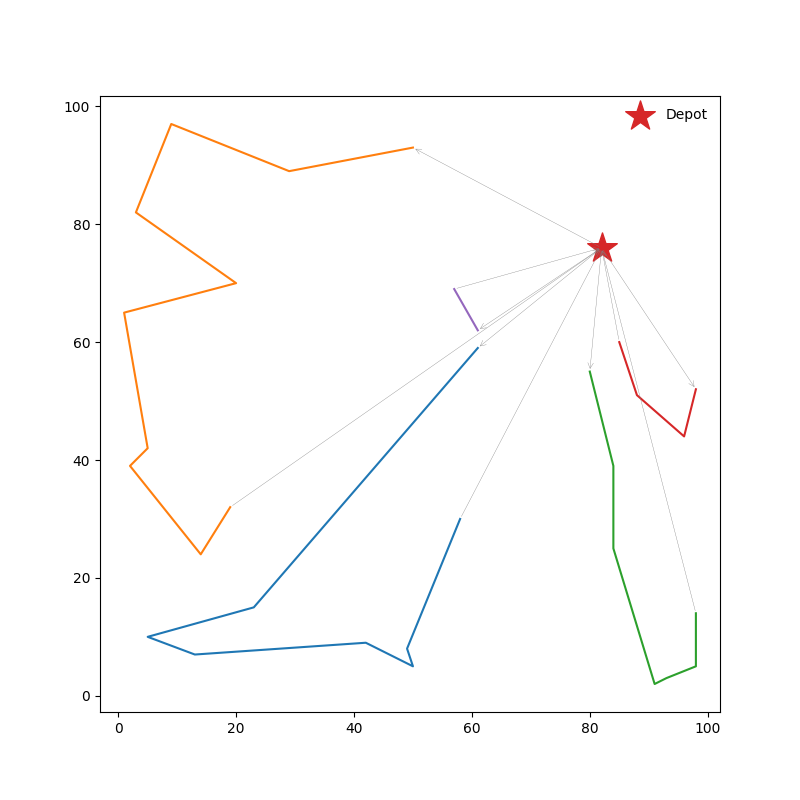

Case-03: How to use ML4CO-Kit to visualize the COPs

    # We take the CVRP as an example

    # Import the required classes.
    >>> import numpy as np  # Numpy
    >>> from ml4co_kit import CVRPTask  # CVRP Task.
    >>> from ml4co_kit import CVRPWrapper  # The wrapper for CVRP, used to manage data.

    # Case-1: data for multiple tasks (saved in ``txt``, ``pickle``, etc.) is loaded through the wrapper.
    >>> cvrp_wrapper = CVRPWrapper()
    >>> cvrp_wrapper.from_pickle("test_dataset/cvrp/wrapper/cvrp50_uniform_16ins.pkl")
    >>> cvrp_task = cvrp_wrapper.task_list[0]
    >>> print(cvrp_task)
    CVRPTask(2fb389cdafdb4e79a94572f01edf0b95)

    # Case-2: data for a single task (saved in pickle) is loaded through the task.
    >>> cvrp_task = CVRPTask()
    >>> cvrp_task.from_pickle("test_dataset/cvrp/task/cvrp50_uniform_task.pkl")
    >>> print(cvrp_task)
    CVRPTask(2fb389cdafdb4e79a94572f01edf0b95)

    # The loaded solution is usually a reference solution.
    # When drawing the image, it is the ``sol`` that is being drawn.
    # Therefore, it is necessary to assign ``ref_sol`` to ``sol``.
    >>> cvrp_task.sol = cvrp_task.ref_sol

    # Use ``render`` to get the visualization
    >>> cvrp_task.render(
    ...     save_path="./docs/assets/cvrp_solution.png",  # Path to save the rendered image
    ...     with_sol=True,  # Whether to draw the solution tour
    ...     figsize=(10, 10),  # Size of the image (width and height)
    ...     node_color="darkblue",  # Color of the nodes
    ...     edge_color="darkblue",  # Color of the edges
    ...     node_size=50  # Size of the nodes
    ... )

Case-04: A simple ML4CO example

    # We take the MCut as an example

    # Import the required classes.
    >>> import numpy as np  # Numpy
    >>> from ml4co_kit import TASK_TYPE  # The task type.
    >>> from ml4co_kit import MCutWrapper  # The wrapper for MCut, used to manage data.
    >>> from ml4co_kit import GNN4COSolver  # GNN4COSolver.
    >>> from ml4co_kit import RLSAOptimizer  # Using RLSA to perform local search.
    >>> from ml4co_kit.extension.gnn4co import GNN4COModel, GNN4COEnv, GNNEncoder, GNN4COGreedyDecoder

    # Set the GNN4COModel parameters. ``weight_path``: Pretrain weight path.
    # If it is not available locally, it will be automatically downloaded from Hugging Face.
    >>> gnn4mcut_model = GNN4COModel(
    ...     env=GNN4COEnv(
    ...         task_type=TASK_TYPE.MCUT,  # Task type: MCut.
    ...         wrapper=MCutWrapper(),  # The wrapper for MCut, used to manage data.
    ...         mode="solve",  # Mode: solving mode.
    ...         sparse_factor=1,  # Sparse factor: Controls the sparsity of the graph.
    ...         device="cuda"  # Device: 'cuda' or 'cpu'
    ...     ),
    ...     encoder=GNNEncoder(
    ...         task_type=TASK_TYPE.MCUT,  # Task type: MCut.
    ...         sparse=True,  # Graph data should set ``sparse`` to True.
    ...         block_layers=[2,4,4,2]  # Block layers: the number of layers in each block of the encoder.
    ...     ),
    ...     decoder=GNN4COGreedyDecoder(sparse_factor=1),
    ...     weight_path="weights/gnn4co_mcut_ba-large_sparse.pt"
    ... )
    gnn4co/gnn4co_mcut_ba-large_sparse.pt: 100% ███████████████ 19.6M/19.6M [00:03<00:00, 6.18MB/s]

    # Set the RLSAOptimizer parameters.
    >>> mcut_optimizer = RLSAOptimizer(
    ...     rlsa_kth_dim="both",  # Which dimension to consider for the k-th value calculation.
    ...     rlsa_tau=0.01,  # The temperature parameter in the Simulated Annealing process.
    ...     rlsa_d=2,  # Control the step size of each update.
    ...     rlsa_k=1000,  # The number of samples used in the optimization process.
    ...     rlsa_t=1000,  # The number of iterations in the optimization process.
    ...     rlsa_device="cuda",  # Device: 'cuda' or 'cpu'.
    ...     rlsa_seed=1234  # The random seed for reproducibility.
    ... )

    # Set the GNN4COSolver parameters.
    >>> mcut_solver_wo_opt = GNN4COSolver(
    ...     model=gnn4mcut_model,  # GNN4CO model for MCut
    ...     device="cuda",  # Device: 'cuda' or 'cpu'.
    ...     optimizer=None  # The optimizer to perform local search.
    ... )
    >>> mcut_solver_w_opt = GNN4COSolver(
    ...     model=gnn4mcut_model,  # GNN4CO model for MCut
    ...     device="cuda",  # Device: 'cuda' or 'cpu'.
    ...     optimizer=mcut_optimizer  # The optimizer to perform local search.
    ... )

    # Create the MCut wrapper
    >>> mcut_wrapper = MCutWrapper(precision=np.float32)

    # Load the problems to be solved.
    # You can use the corresponding loading function based on the file type,
    # such as ``from_txt`` for txt file and ``from_pickle`` for pickle file.
    >>> mcut_wrapper.from_txt(
    ...     file_path="test_dataset/mcut/wrapper/mcut_ba-large_no-weighted_4ins.txt",
    ...     ref=True,  # TXT file contains labels. Set ``ref=True`` to set them as reference.
    ...     overwrite=True,  # Whether to overwrite the data. If not, only update according to the file data.
    ...     show_time=True  # Whether to display the time taken for the loading process
    ... )
    Loading data from test_dataset/mcut/wrapper/mcut_ba-large_no-weighted_4ins.txt: 4it [00:00, 16.35it/s]

    # Use ``solve`` to get the solution (without optimizer)
    >>> mcut_wrapper.solve(
    ...     solver=mcut_solver_wo_opt,  # The solver to use
    ...     num_threads=1,  # Number of CPU threads for parallelization; num_threads and batch_size cannot both differ from 1
    ...     batch_size=1,  # Batch size for parallel processing; num_threads and batch_size cannot both differ from 1
    ...     show_time=True,  # Whether to display the time taken for the solving process
    ... )
    Solving MCut Using greedy: 100%|██████████| 4/4 [00:00<00:00, 12.34it/s]
    Using Time: 0.3261079788208008

    # Use ``evaluate_w_gap`` to obtain the evaluation results.
    # Evaluation Results: average solution value, average reference value, gap (%), gap std.
    >>> eval_result = mcut_wrapper.evaluate_w_gap()
    >>> print(eval_result)
    (2647.25, 2726.5, 2.838811523236064, 0.7528157058230817)

    # Use ``solve`` to get the solution (with optimizer)
    >>> mcut_wrapper.solve(
    ...     solver=mcut_solver_w_opt,  # The solver to use
    ...     num_threads=1,  # Number of CPU threads for parallelization; num_threads and batch_size cannot both differ from 1
    ...     batch_size=1,  # Batch size for parallel processing; num_threads and batch_size cannot both differ from 1
    ...     show_time=True,  # Whether to display the time taken for the solving process
    ... )
    Solving MCut Using greedy: 100%|██████████| 4/4 [00:02<00:00, 1.46it/s]
    Using Time: 2.738525867462158

    # Use ``evaluate_w_gap`` to obtain the evaluation results.
    # Evaluation Results: average solution value, average reference value, gap (%), gap std.
    >>> eval_result = mcut_wrapper.evaluate_w_gap()
    >>> print(eval_result)
    (2693.0, 2726.5, 1.2373146256952277, 0.29320238806274546)
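In this case the optimizer raises the average cut value from 2647.25 to 2693.0. To show in miniature what "improving a solution by local search" means for MCut, the sketch below computes the cut value of a 0/1 partition and applies a plain single-node flip search. This is a deliberately simple stand-in and not the RLSA algorithm; the function names and the tiny graph are hypothetical.

```python
from typing import List, Tuple

Edge = Tuple[int, int]


def cut_value(edges: List[Edge], sol: List[int]) -> int:
    """Number of edges whose endpoints lie on different sides of the partition."""
    return sum(1 for u, v in edges if sol[u] != sol[v])


def one_flip_local_search(edges: List[Edge], sol: List[int]) -> List[int]:
    """Flip single nodes for as long as a flip strictly increases the cut value."""
    sol = sol[:]
    improved = True
    while improved:
        improved = False
        for node in range(len(sol)):
            before = cut_value(edges, sol)
            sol[node] ^= 1  # move the node to the other side
            if cut_value(edges, sol) > before:
                improved = True
            else:
                sol[node] ^= 1  # undo a non-improving flip
    return sol


# Triangle (0, 1, 2) plus a pendant node 3; start from the trivial partition.
edges = [(0, 1), (1, 2), (0, 2), (2, 3)]
initial = [0, 0, 0, 0]
refined = one_flip_local_search(edges, initial)
```

Since only strictly improving flips are kept, the refined partition never has a smaller cut than the initial one, which is the same guarantee one would expect from attaching an optimizer to a solver.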

📈 Our Systematic Benchmark Works

We are systematically building a foundational framework for ML4CO with a collection of resources that complement each other in a cohesive manner.

- Awesome-ML4CO: a curated collection of literature in the ML4CO field, organized to support researchers in accessing both foundational and recent developments.
- ML4CO-Kit: a general-purpose toolkit that provides implementations of common algorithms used in ML4CO, along with basic training frameworks, traditional solvers and data generation tools. It aims to simplify the implementation of key techniques and offer a solid base for developing machine learning models for COPs.
- ML4TSPBench: a benchmark that focuses on the TSP as a representative problem. It builds a unified modular pipeline that incorporates dozens of existing techniques in both learning and search for transparent ablation, aiming to reassess the role of learning and to discern which parts of existing techniques are genuinely beneficial and which are not. It offers a deep dive into various methodology designs, enabling comparisons and the development of specialized algorithms.
- ML4CO-Bench-101: a benchmark that categorizes neural combinatorial optimization (NCO) solvers by solving paradigms, model designs, and learning strategies. It evaluates the applicability and generalization of different NCO approaches across a broad range of combinatorial optimization problems to uncover universal insights that can be transferred across various domains of ML4CO.
- PredictiveCO-Benchmark: a benchmark for decision-focused learning (DFL) approaches on predictive combinatorial optimization problems.

✨ Citation

If you find our code helpful in your research, please cite

    @inproceedings{ma2025mlcobench,
        title={ML4CO-Bench-101: Benchmark Machine Learning for Classic Combinatorial Problems on Graphs},
        author={Jiale Ma and Wenzheng Pan and Yang Li and Junchi Yan},
        booktitle={The Thirty-ninth Annual Conference on Neural Information Processing Systems Datasets and Benchmarks Track},
        year={2025},
        url={https://openreview.net/forum?id=ye4ntB1Kzi}
    }

    @inproceedings{li2025unify,
        title={Unify ml4tsp: Drawing methodological principles for tsp and beyond from streamlined design space of learning and search},
        author={Li, Yang and Ma, Jiale and Pan, Wenzheng and Wang, Runzhong and Geng, Haoyu and Yang, Nianzu and Yan, Junchi},
        booktitle={The Thirteenth International Conference on Learning Representations},
        year={2025}
    }

Project details

Release history Release notifications | RSS feed

Download files


File details

Details for the file ml4co_kit-0.5.2.tar.gz.

File metadata

- Download URL: ml4co_kit-0.5.2.tar.gz

- Upload date:

- Size: 4.8 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.10.20

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 7e0e1bf7d1e108f080a3ae579f97c6439a91c23d3f6a0d9cf01fedbe5a5c2e17 |
| MD5 | 93c1119a403a788d58800475ec4b051d |
| BLAKE2b-256 | 3952361ba97810dcc7a9f9a466346cf4da09c2d3ff17720112636ecc18f8ff0e |
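The digests listed above can be used to check a downloaded archive before installing it. The following is a minimal sketch using only Python's standard `hashlib` module; the helper names `sha256_of_file` and `matches_expected` and the local file path are assumptions made for illustration and are not part of ML4CO-Kit.

```python
import hashlib
from pathlib import Path

# SHA256 digest listed above for ml4co_kit-0.5.2.tar.gz
EXPECTED_SHA256 = "7e0e1bf7d1e108f080a3ae579f97c6439a91c23d3f6a0d9cf01fedbe5a5c2e17"


def sha256_of_file(path, chunk_size=1 << 20):
    """Return the hex SHA256 digest of a file, reading it in 1 MiB chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_expected(path, expected=EXPECTED_SHA256):
    """Hypothetical helper: compare a file's digest with the published one."""
    return sha256_of_file(path) == expected.lower()


if __name__ == "__main__":
    # Assumed location of the downloaded source distribution.
    archive = Path("ml4co_kit-0.5.2.tar.gz")
    if archive.exists():
        print("OK" if matches_expected(archive) else "MISMATCH")
    else:
        print(f"{archive} not found; download it first")
```

The same check works for any of the wheels below by passing the matching SHA256 digest as `expected`.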

File details

Details for the file ml4co_kit-0.5.2-cp313-cp313-manylinux2014_x86_64.whl.

File metadata

- Download URL: ml4co_kit-0.5.2-cp313-cp313-manylinux2014_x86_64.whl

- Upload date:

- Size: 12.0 MB

- Tags: CPython 3.13

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.13.13

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | f61cc03becfbc9e316e11aaed23562fc761bf9f9bc1bbf3a4c0f82ed8a25fd64 |
| MD5 | 29393d98189aad120f460f78d8008d2f |
| BLAKE2b-256 | c856fd46f795878eb22ba31429c376eedb82b7e5609a1cfdcb43827c19b9ac96 |

File details

Details for the file ml4co_kit-0.5.2-cp313-cp313-macosx_15_0_universal2.whl.

File metadata

- Download URL: ml4co_kit-0.5.2-cp313-cp313-macosx_15_0_universal2.whl

- Upload date:

- Size: 10.1 MB

- Tags: CPython 3.13, macOS 15.0+ universal2 (ARM64, x86-64)

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.13.13

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | f4dc0fe6eb1c42dcc320969260a3e9d43e3d8ab0eb7348bfe5750308d609cbd8 |
| MD5 | 4208cc1b946129beaf3674c6fbe6a5bd |
| BLAKE2b-256 | 5d1da695b92c8da9592b0f99f1eddc3283f36492e4a857b1eeb40001bfab4325 |

File details

Details for the file ml4co_kit-0.5.2-cp312-cp312-manylinux2014_x86_64.whl.

File metadata

- Download URL: ml4co_kit-0.5.2-cp312-cp312-manylinux2014_x86_64.whl

- Upload date:

- Size: 12.0 MB

- Tags: CPython 3.12

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.12.13

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 6ad48c28dda46839c8cefd632351dcee7edac1fb695dfd227b298a7298d92350 |
| MD5 | c3ee1104ae2067675b4a6274a9e0d6cc |
| BLAKE2b-256 | bc6df15a6ebe67e791c3c0126c0de14185882acbcf58a643f650124da3745145 |

File details

Details for the file ml4co_kit-0.5.2-cp312-cp312-macosx_15_0_universal2.whl.

File metadata

- Download URL: ml4co_kit-0.5.2-cp312-cp312-macosx_15_0_universal2.whl

- Upload date:

- Size: 10.1 MB

- Tags: CPython 3.12, macOS 15.0+ universal2 (ARM64, x86-64)

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.12.10

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 508d181b1dfc9250c0dc19aa02b7c0999e52e222444933d691f9f5e476da6260 |
| MD5 | 1898b868c3ec84831d43eac7f5002cc4 |
| BLAKE2b-256 | 791ce29bffa328474960b4529ba58b5ca800255f9dad5b803c613911f9fd792a |

File details

Details for the file ml4co_kit-0.5.2-cp311-cp311-manylinux2014_x86_64.whl.

File metadata

- Download URL: ml4co_kit-0.5.2-cp311-cp311-manylinux2014_x86_64.whl

- Upload date:

- Size: 12.1 MB

- Tags: CPython 3.11

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.11.15

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 71c2a59e22120d10dd5da6c2e7c717d35f532814b780b59449ce2e62b1040a54 |
| MD5 | fa045f74c5942837f0a7464cd5af9375 |
| BLAKE2b-256 | a6ae76ce574e8309c81ee5c80f109ca447ab40d0a6a8d64f5953b35d244f5fdf |

File details

Details for the file ml4co_kit-0.5.2-cp311-cp311-macosx_15_0_universal2.whl.

File metadata

- Download URL: ml4co_kit-0.5.2-cp311-cp311-macosx_15_0_universal2.whl

- Upload date:

- Size: 10.1 MB

- Tags: CPython 3.11, macOS 15.0+ universal2 (ARM64, x86-64)

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.11.9

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | ae6f59fcadfc0cb2fa7523c330b46ad7bf0c5405327da65dbefdfdef8fca020a |
| MD5 | c57554c76186733b3ad75db7cd562427 |
| BLAKE2b-256 | 1d11b5f3dca6a7d5ef18dedab8d227337519717c13eda09cbd2eec1a9365bd01 |

File details

Details for the file ml4co_kit-0.5.2-cp310-cp310-manylinux2014_x86_64.whl.

File metadata

- Download URL: ml4co_kit-0.5.2-cp310-cp310-manylinux2014_x86_64.whl

- Upload date:

- Size: 11.8 MB

- Tags: CPython 3.10

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.10.20

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 98a7f751735905418df6313513bdd31ca30cf5a08a4adeb1b489afb0ece51f76 |
| MD5 | b51a36c658b8d9e9cbbe7b5d3556d766 |
| BLAKE2b-256 | e0ed54ef102bc69d77be7bd5a79e0b42a9f9ae30c54a35d97934f5452e62d6f9 |

File details

Details for the file ml4co_kit-0.5.2-cp310-cp310-macosx_15_0_universal2.whl.

File metadata

- Download URL: ml4co_kit-0.5.2-cp310-cp310-macosx_15_0_universal2.whl

- Upload date:

- Size: 9.8 MB

- Tags: CPython 3.10, macOS 15.0+ universal2 (ARM64, x86-64)

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.10.11

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | f50fbc7531c13dc0b19b150e7fe596a54c84aacfe4080b88dd88abc67ab6122a |
| MD5 | 914c9b64ac3b4f836f2e26065e590629 |
| BLAKE2b-256 | 28f1ecd00f7ee95f66fe7d880f458e132959172bd0f77d2072062605e92c37a9 |

File details

Details for the file ml4co_kit-0.5.2-cp39-cp39-manylinux2014_x86_64.whl.

File metadata

- Download URL: ml4co_kit-0.5.2-cp39-cp39-manylinux2014_x86_64.whl

- Upload date:

- Size: 11.8 MB

- Tags: CPython 3.9

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.9.25

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | f2c8415f43a4a4cd07be8d317b4b7f81000fbef5334af2d25ccf61109fce3bc0 |
| MD5 | 6d89decba88a5e7a213e336fb5b30bf7 |
| BLAKE2b-256 | 853b6809e31d9b82d22e08ff883eeb5f2fe73d47948bffe6b836b52511e09f16 |

File details

Details for the file ml4co_kit-0.5.2-cp39-cp39-macosx_15_0_universal2.whl.

File metadata

- Download URL: ml4co_kit-0.5.2-cp39-cp39-macosx_15_0_universal2.whl

- Upload date:

- Size: 9.8 MB

- Tags: CPython 3.9, macOS 15.0+ universal2 (ARM64, x86-64)

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.9.13

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 075ada8bd9f7c86d97dc52e2447b48baa651e071b6f37380bf8aaf8765914589 |
| MD5 | 7baedaa411a2ecd995882cdb8655e1c7 |
| BLAKE2b-256 | b1131b46b07ce5abdd715849a71833e41f248ded161d8a539739128968938543 |

File details

Details for the file ml4co_kit-0.5.2-cp38-cp38-manylinux2014_x86_64.whl.

File metadata

- Download URL: ml4co_kit-0.5.2-cp38-cp38-manylinux2014_x86_64.whl

- Upload date:

- Size: 11.8 MB

- Tags: CPython 3.8

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.8.18

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 2797493601dc85b8aa39a7b95af930b25b52ca6928ba97bf8a9e12f4e8a0873b |
| MD5 | 317b18ce3d0507400ede08ca6b72fac6 |
| BLAKE2b-256 | 02147a019c46edfa0ad0918fe3a190bf67c78b0b60378bc5bd96e54cfecfde5a |