Exporting Segment Anything models to ONNX format

SAMExporter — SAM / SAM2 / SAM2.1 / SAM3 / MobileSAM → ONNX

Export Segment Anything, MobileSAM, Segment Anything 2 / 2.1, and Segment Anything 3 to ONNX for easy, dependency-free deployment.

Supported models:

| Model | Prompt types | Notes |
|---|---|---|
| SAM ViT-B / ViT-L / ViT-H | Point, Rectangle | Original Meta SAM |
| SAM ViT-B / ViT-L / ViT-H (quantized) | Point, Rectangle | Smaller, faster variants |
| MobileSAM | Point, Rectangle | Lightweight; fast on CPU |
| SAM2 Tiny / Small / Base+ / Large | Point, Rectangle | Meta SAM 2 |
| SAM2.1 Tiny / Small / Base+ / Large | Point, Rectangle | Improved SAM 2 |
| SAM3 ViT-H | Text, Point, Rectangle | Open-vocabulary text-driven segmentation |

Installation

Requires Python 3.11+.

pip install torch==2.10.0 torchvision==0.25.0 --index-url https://download.pytorch.org/whl/cpu

pip install samexporter

Note (Windows users): The optional onnxsim model simplifier (used during ONNX export) has no pre-built wheel for Windows. If you plan to export models and want simplification, install with pip install "samexporter[export]" or enable Windows Long Path support before installing.

From source

pip install torch==2.10.0 torchvision==0.25.0 --index-url https://download.pytorch.org/whl/cpu

git clone --recurse-submodules https://github.com/vietanhdev/samexporter

cd samexporter

pip install -e .

SAM / MobileSAM — Convert to ONNX

1. Download checkpoints

Place checkpoints in original_models/:

original_models/
  sam_vit_b_01ec64.pth
  sam_vit_l_0b3195.pth
  sam_vit_h_4b8939.pth
  mobile_sam.pt
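Before exporting, it can help to confirm that the checkpoints are where the commands expect them. The following is a minimal sketch, not part of samexporter: the helper names `missing_checkpoints` and `report` are hypothetical, and the file names are simply those from the layout above.

```python
from pathlib import Path

# File names copied from the directory layout above.
EXPECTED_CHECKPOINTS = [
    "sam_vit_b_01ec64.pth",
    "sam_vit_l_0b3195.pth",
    "sam_vit_h_4b8939.pth",
    "mobile_sam.pt",
]


def missing_checkpoints(directory="original_models"):
    """Return the expected checkpoint names that are not files in `directory`."""
    root = Path(directory)
    return [name for name in EXPECTED_CHECKPOINTS if not (root / name).is_file()]


def report(directory="original_models"):
    """Print a one-line summary of which checkpoints are missing, if any."""
    missing = missing_checkpoints(directory)
    if missing:
        print("Missing checkpoints:", ", ".join(missing))
    else:
        print("All checkpoints found in", directory)
```

Calling `report()` from the repository root lists anything absent. Each export command takes a single checkpoint, so a missing file only matters for the model you intend to export.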

Download links:

2. Export encoder

# SAM ViT-H (most accurate)
python -m samexporter.export_encoder \
  --checkpoint original_models/sam_vit_h_4b8939.pth \
  --output output_models/sam_vit_h_4b8939.encoder.onnx \
  --model-type vit_h \
  --quantize-out output_models/sam_vit_h_4b8939.encoder.quant.onnx \
  --use-preprocess

# SAM ViT-B (fastest)
python -m samexporter.export_encoder \
  --checkpoint original_models/sam_vit_b_01ec64.pth \
  --output output_models/sam_vit_b_01ec64.encoder.onnx \
  --model-type vit_b \
  --quantize-out output_models/sam_vit_b_01ec64.encoder.quant.onnx \
  --use-preprocess

3. Export decoder

python -m samexporter.export_decoder \
  --checkpoint original_models/sam_vit_h_4b8939.pth \
  --output output_models/sam_vit_h_4b8939.decoder.onnx \
  --model-type vit_h \
  --quantize-out output_models/sam_vit_h_4b8939.decoder.quant.onnx \
  --return-single-mask

Remove --return-single-mask to return multiple mask proposals.
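The encoder and decoder commands share the same checkpoint, model type, and output naming, so they can be generated from one place. The sketch below only assembles the flags shown above; `export_commands` and `run_export` are hypothetical helper names, and the output naming simply follows the pattern used in the examples.

```python
import subprocess
import sys
from pathlib import PurePosixPath


def export_commands(checkpoint, model_type, out_dir="output_models", single_mask=True):
    """Build the encoder and decoder export commands for one SAM checkpoint.

    Only flags that appear in the commands above are used.
    """
    stem = PurePosixPath(checkpoint).stem  # "sam_vit_h_4b8939" for the ViT-H file
    prefix = f"{out_dir}/{stem}"
    encoder = [
        sys.executable, "-m", "samexporter.export_encoder",
        "--checkpoint", checkpoint,
        "--output", f"{prefix}.encoder.onnx",
        "--model-type", model_type,
        "--quantize-out", f"{prefix}.encoder.quant.onnx",
        "--use-preprocess",
    ]
    decoder = [
        sys.executable, "-m", "samexporter.export_decoder",
        "--checkpoint", checkpoint,
        "--output", f"{prefix}.decoder.onnx",
        "--model-type", model_type,
        "--quantize-out", f"{prefix}.decoder.quant.onnx",
    ]
    if single_mask:
        # Omit this flag to get multiple mask proposals from the decoder.
        decoder.append("--return-single-mask")
    return encoder, decoder


def run_export(checkpoint, model_type, **kwargs):
    """Run both exports in order; raises CalledProcessError if either fails."""
    for command in export_commands(checkpoint, model_type, **kwargs):
        subprocess.run(command, check=True)
```

For example, `run_export("original_models/sam_vit_b_01ec64.pth", "vit_b", single_mask=False)` would export ViT-B with a multi-mask decoder, assuming samexporter is installed in the current environment.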

Batch convert all SAM models:

bash convert_all_meta_sam.sh

bash convert_mobile_sam.sh

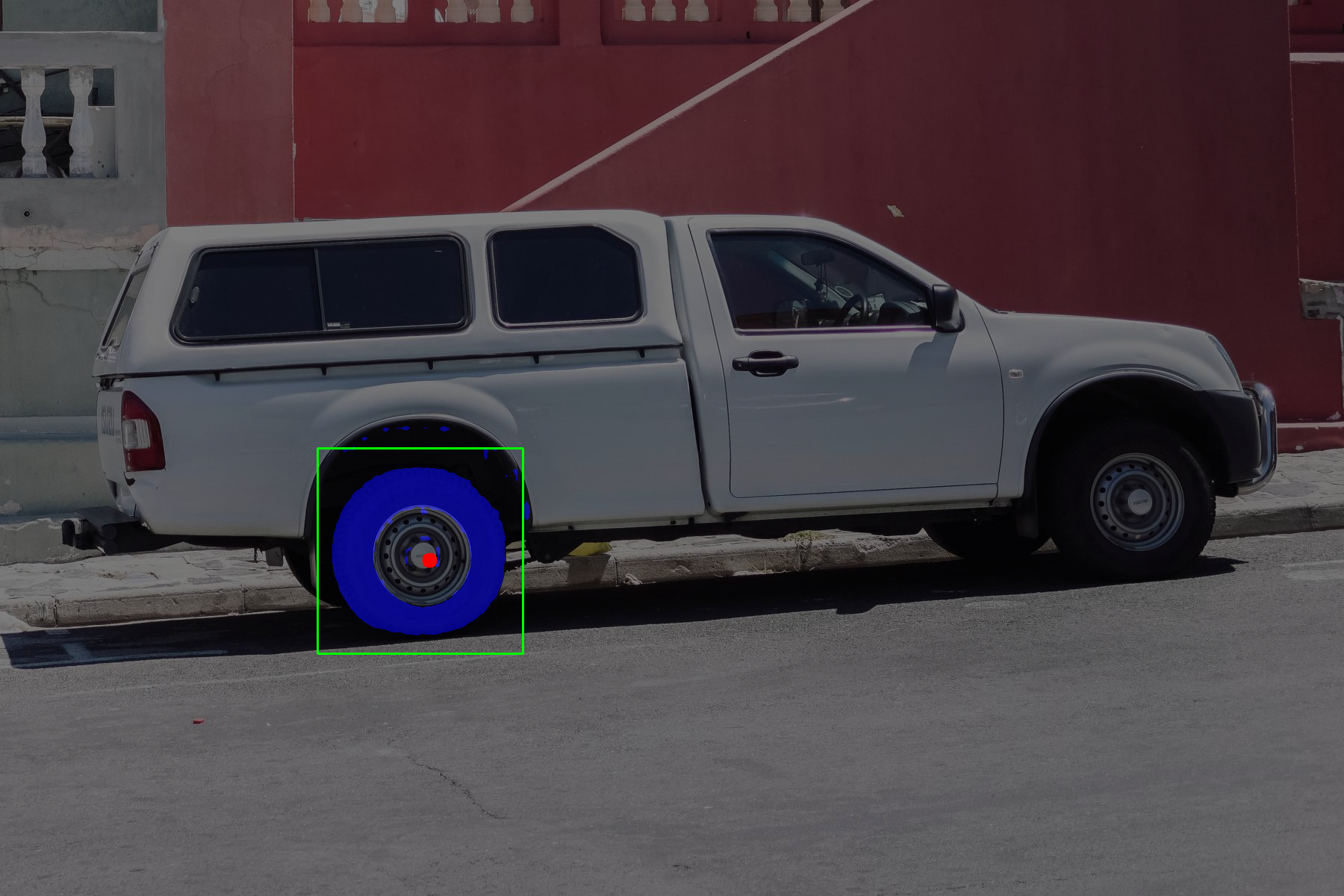

4. Run inference

python -m samexporter.inference \
  --encoder_model output_models/sam_vit_h_4b8939.encoder.onnx \
  --decoder_model output_models/sam_vit_h_4b8939.decoder.onnx \
  --image images/truck.jpg \
  --prompt images/truck_prompt.json \
  --output output_images/truck.png \
  --show

python -m samexporter.inference \
  --encoder_model output_models/sam_vit_h_4b8939.encoder.onnx \
  --decoder_model output_models/sam_vit_h_4b8939.decoder.onnx \
  --image images/plants.png \
  --prompt images/plants_prompt1.json \
  --output output_images/plants_01.png \
  --show

SAM2 / SAM2.1 — Convert to ONNX

1. Download checkpoints

cd original_models && bash download_sam2.sh

Or download manually:

original_models/
  sam2_hiera_tiny.pt
  sam2_hiera_small.pt
  sam2_hiera_base_plus.pt
  sam2_hiera_large.pt
  sam2.1_hiera_tiny.pt
  sam2.1_hiera_small.pt
  sam2.1_hiera_base_plus.pt
  sam2.1_hiera_large.pt

2. Install SAM2 PyTorch package

pip install git+https://github.com/facebookresearch/segment-anything-2.git

3. Export

# Single model example (SAM2 Tiny)
python -m samexporter.export_sam2 \
  --checkpoint original_models/sam2_hiera_tiny.pt \
  --output_encoder output_models/sam2_hiera_tiny.encoder.onnx \
  --output_decoder output_models/sam2_hiera_tiny.decoder.onnx \
  --model_type sam2_hiera_tiny

# SAM2.1 example
python -m samexporter.export_sam2 \
  --checkpoint original_models/sam2.1_hiera_tiny.pt \
  --output_encoder output_models/sam2.1_hiera_tiny.encoder.onnx \
  --output_decoder output_models/sam2.1_hiera_tiny.decoder.onnx \
  --model_type sam2.1_hiera_tiny

Batch convert all SAM2 / SAM2.1 models:

bash convert_all_meta_sam2.sh
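Where a shell script is inconvenient (for example on Windows), the same batch can be driven from Python. This is a rough sketch and not a description of what convert_all_meta_sam2.sh does internally. It assumes that --model_type always matches the checkpoint file name without its extension, which the two examples above show for the Tiny models only; `sam2_export_commands` is a hypothetical helper name.

```python
import sys

SIZES = ["tiny", "small", "base_plus", "large"]
VERSIONS = ["sam2", "sam2.1"]


def sam2_export_commands(in_dir="original_models", out_dir="output_models"):
    """Build one export_sam2 command per checkpoint listed above."""
    commands = []
    for version in VERSIONS:
        for size in SIZES:
            # Assumption: the model type equals the checkpoint stem.
            name = f"{version}_hiera_{size}"
            commands.append([
                sys.executable, "-m", "samexporter.export_sam2",
                "--checkpoint", f"{in_dir}/{name}.pt",
                "--output_encoder", f"{out_dir}/{name}.encoder.onnx",
                "--output_decoder", f"{out_dir}/{name}.decoder.onnx",
                "--model_type", name,
            ])
    return commands
```

Each returned list can be passed to `subprocess.run(command, check=True)` to run the exports one after another.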

4. Run inference

python -m samexporter.inference \
  --encoder_model output_models/sam2_hiera_tiny.encoder.onnx \
  --decoder_model output_models/sam2_hiera_tiny.decoder.onnx \
  --image images/truck.jpg \
  --prompt images/truck_prompt.json \
  --sam_variant sam2 \
  --output output_images/sam2_truck.png \
  --show

SAM3 — Convert to ONNX

SAM3 extends the SAM family with open-vocabulary, text-driven segmentation. In addition to point and rectangle prompts, it accepts text prompts (e.g., "truck", "person") to detect and segment objects without any prior training on those classes.

SAM3 is exported as three separate ONNX models: an image encoder, a language (text) encoder, and a decoder.

Pre-exported ONNX models

Pre-exported models are available on HuggingFace and are downloaded automatically:

vietanhdev/segment-anything-3-onnx-models
  sam3_image_encoder.onnx (+ .data)
  sam3_language_encoder.onnx (+ .data)
  sam3_decoder.onnx (+ .data)

Export from PyTorch (optional)

# Clone the SAM3 source (required for export only, not inference)
git submodule update --init sam3

# Install SAM3 dependencies
pip install osam

# Export (add --simplify for ONNX simplification, requires [export] extra on Windows)
python -m samexporter.export_sam3 \
  --output_dir output_models/sam3 \
  --opset 18

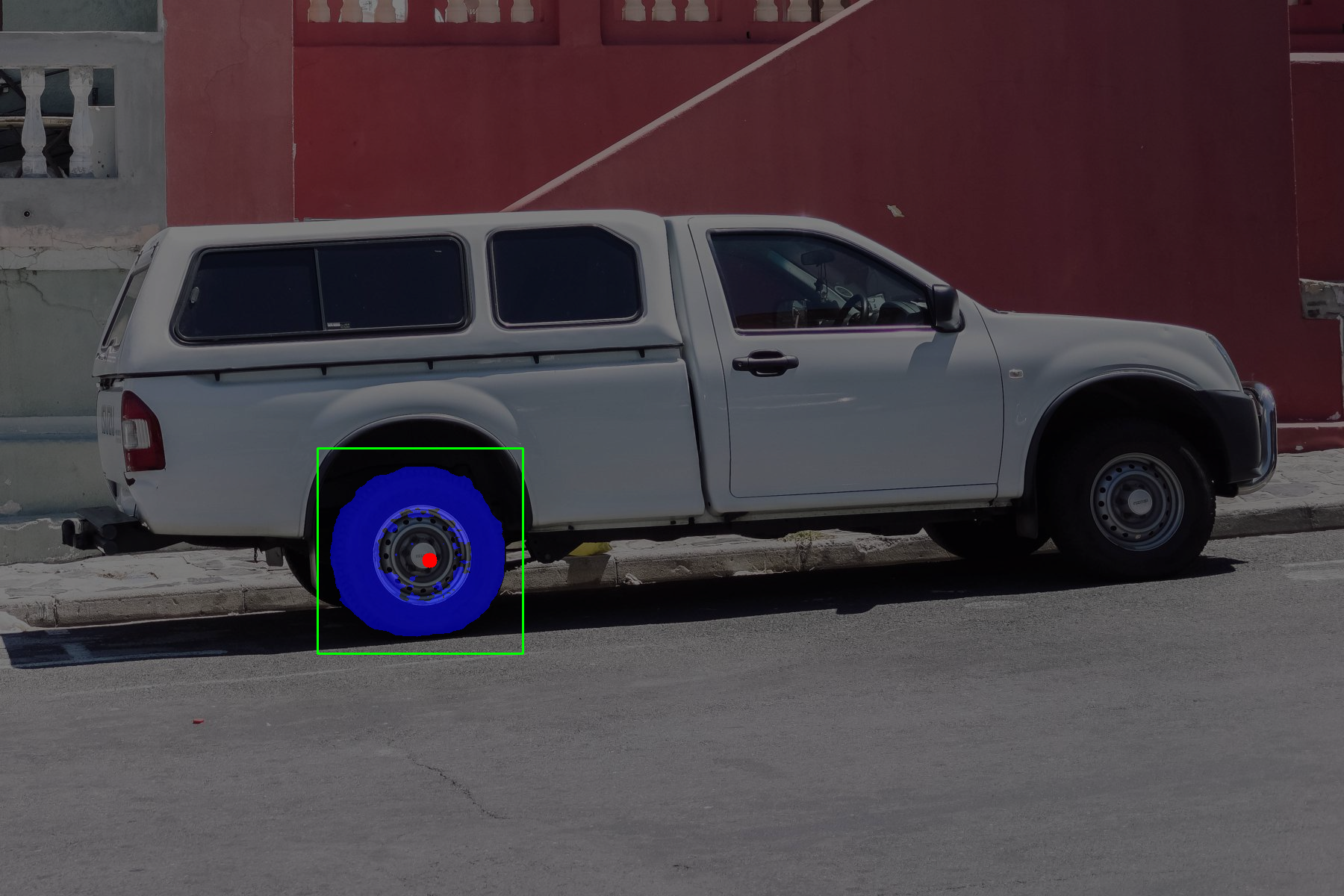

Run inference

Text-only prompt (detects all instances matching the text):

python -m samexporter.inference \
  --sam_variant sam3 \
  --encoder_model output_models/sam3/sam3_image_encoder.onnx \
  --decoder_model output_models/sam3/sam3_decoder.onnx \
  --language_encoder_model output_models/sam3/sam3_language_encoder.onnx \
  --image images/truck.jpg \
  --prompt images/truck_sam3.json \
  --text_prompt "truck" \
  --output output_images/truck_sam3.png \
  --show

Text + rectangle prompt (text guides detection, rectangle refines region):

python -m samexporter.inference \
  --sam_variant sam3 \
  --encoder_model output_models/sam3/sam3_image_encoder.onnx \
  --decoder_model output_models/sam3/sam3_decoder.onnx \
  --language_encoder_model output_models/sam3/sam3_language_encoder.onnx \
  --image images/truck.jpg \
  --prompt images/truck_sam3_box.json \
  --text_prompt "truck" \
  --output output_images/truck_sam3_box.png \
  --show

Text + point prompt:

python -m samexporter.inference \
  --sam_variant sam3 \
  --encoder_model output_models/sam3/sam3_image_encoder.onnx \
  --decoder_model output_models/sam3/sam3_decoder.onnx \
  --language_encoder_model output_models/sam3/sam3_language_encoder.onnx \
  --image images/truck.jpg \
  --prompt images/truck_sam3_point.json \
  --text_prompt "truck" \
  --output output_images/truck_sam3_point.png \
  --show

Note: Always pass --text_prompt for SAM3. Without it, the model defaults to a "visual" text token and may produce zero detections.
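When inference is scripted, the rule in the note can be enforced before the command runs. The sketch below assembles only the flags used in the commands above and refuses to build a SAM3 command without a text prompt. `inference_command` is a hypothetical helper, and the check is a design choice of this sketch; drop it if you supply the text through the prompt JSON instead.

```python
import sys


def inference_command(encoder, decoder, image, prompt, output,
                      sam_variant=None, language_encoder=None,
                      text_prompt=None, show=False):
    """Build a samexporter.inference command from the flags shown above."""
    if sam_variant == "sam3" and not text_prompt:
        # Mirrors the note: SAM3 without a text prompt may detect nothing.
        raise ValueError("SAM3 needs a text prompt, e.g. text_prompt='truck'")

    command = [sys.executable, "-m", "samexporter.inference"]
    if sam_variant:
        command += ["--sam_variant", sam_variant]
    command += ["--encoder_model", encoder, "--decoder_model", decoder]
    if language_encoder:
        command += ["--language_encoder_model", language_encoder]
    command += ["--image", image, "--prompt", prompt]
    if text_prompt:
        command += ["--text_prompt", text_prompt]
    command += ["--output", output]
    if show:
        command.append("--show")
    return command
```

The returned list can be handed to `subprocess.run(command, check=True)`; passing arguments as a list avoids shell quoting issues with multi-word text prompts.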

Prompt JSON format

Prompts are JSON files containing a list of mark objects:

[
  {"type": "point", "data": [x, y], "label": 1},
  {"type": "rectangle", "data": [x1, y1, x2, y2]},
  {"type": "text", "data": "object description"}
]

- label: 1 marks a foreground point; label: 0 marks a background point.
- type: "text" is specific to SAM3 (use --text_prompt on the CLI instead for convenience).
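Prompt files are easy to get subtly wrong when written by hand. The sketch below checks a list of marks against the layout described above and writes it to disk. It is not the validation samexporter itself performs; the function names and the coordinate values in the usage note are hypothetical.

```python
import json


def _is_numbers(value, count):
    """True if `value` is a list or tuple of exactly `count` numbers."""
    return (
        isinstance(value, (list, tuple))
        and len(value) == count
        and all(isinstance(v, (int, float)) and not isinstance(v, bool) for v in value)
    )


def validate_marks(marks):
    """Raise ValueError if `marks` does not follow the prompt layout above."""
    if not isinstance(marks, list):
        raise ValueError("a prompt must be a list of mark objects")
    for index, mark in enumerate(marks):
        if not isinstance(mark, dict):
            raise ValueError(f"mark {index}: expected an object")
        kind, data = mark.get("type"), mark.get("data")
        if kind == "point":
            if not _is_numbers(data, 2):
                raise ValueError(f"mark {index}: a point needs [x, y]")
            if mark.get("label") not in (0, 1):
                raise ValueError(f"mark {index}: label must be 0 or 1")
        elif kind == "rectangle":
            if not _is_numbers(data, 4):
                raise ValueError(f"mark {index}: a rectangle needs [x1, y1, x2, y2]")
        elif kind == "text":
            if not isinstance(data, str) or not data:
                raise ValueError(f"mark {index}: text needs a non-empty string")
        else:
            raise ValueError(f"mark {index}: unknown type {kind!r}")
    return marks


def save_prompt(marks, path):
    """Validate `marks` and write them to `path` as JSON."""
    with open(path, "w", encoding="utf-8") as handle:
        json.dump(validate_marks(marks), handle, indent=2)
```

For instance, `save_prompt([{"type": "point", "data": [120, 80], "label": 1}], "my_prompt.json")` writes a single foreground point, and the resulting file can be passed to --prompt.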

Tips

- Use quantized models (*.quant.onnx) for faster inference and smaller file size with minimal accuracy loss.

- SAM ViT-B is the fastest SAM1 variant; SAM ViT-H is the most accurate.

- SAM2 Tiny / SAM2.1 Tiny are good CPU-friendly choices for SAM2.

- SAM3 is slower due to its three-model pipeline but uniquely supports natural-language object queries.

- Run the encoder once per image; the lightweight decoder handles prompt changes in real time.
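The last tip, encoding once and decoding many times, amounts to caching the encoder output per image. The sketch below shows the pattern in isolation: `encode` and `decode` are hypothetical callables standing in for whatever runs your encoder and decoder ONNX sessions, and nothing here is samexporter's own API.

```python
_UNSET = object()  # sentinel so that any key, including None, is a valid image key


class EmbeddingCache:
    """Keep the most recent image embedding and reuse it across prompts."""

    def __init__(self, encode, decode):
        self._encode = encode  # hypothetical: image -> embedding (slow)
        self._decode = decode  # hypothetical: (embedding, prompt) -> masks (fast)
        self._key = _UNSET
        self._embedding = None

    def segment(self, image_key, image, prompt):
        """Run the encoder only when the image changes, then decode the prompt."""
        if self._key is _UNSET or image_key != self._key:
            self._embedding = self._encode(image)
            self._key = image_key
        return self._decode(self._embedding, prompt)
```

In an interactive tool, `image_key` could be the file path: each click or box on the same image then costs only a decoder call, while loading a new image triggers one encoder run.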

Running tests

pip install pytest

pytest tests/

AnyLabeling

This package was originally developed for the auto-labeling feature in AnyLabeling. However, it can be used independently for any ONNX-based deployment scenario.

License

MIT — see LICENSE for details.

References

- ONNX-SAM2-Segment-Anything: https://github.com/ibaiGorordo/ONNX-SAM2-Segment-Anything

- sam3-onnx: https://github.com/wkentaro/sam3-onnx
