Tensors and Dynamic neural networks in Python with strong GPU acceleration

Project description

PyTorch is a Python package that provides two high-level features:

- Tensor computation (like NumPy) with strong GPU acceleration

- Deep neural networks built on a tape-based autograd system

You can reuse your favorite Python packages such as NumPy, SciPy, and Cython to extend PyTorch when needed.

Our trunk health (Continuous Integration signals) can be found at hud.pytorch.org.

- More About PyTorch

- Installation

- Getting Started

- Resources

- Communication

- Releases and Contributing

- The Team

- License

More About PyTorch

At a granular level, PyTorch is a library that consists of the following components:

| Component | Description |
|---|---|
| torch | A Tensor library like NumPy, with strong GPU support |
| torch.autograd | A tape-based automatic differentiation library that supports all differentiable Tensor operations in torch |
| torch.jit | A compilation stack (TorchScript) to create serializable and optimizable models from PyTorch code |
| torch.nn | A neural networks library deeply integrated with autograd designed for maximum flexibility |
| torch.multiprocessing | Python multiprocessing, but with magical memory sharing of torch Tensors across processes. Useful for data loading and Hogwild training |
| torch.utils | DataLoader and other utility functions for convenience |
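To show how `torch.utils` is typically used, here is a minimal sketch that wraps two in-memory tensors in a `TensorDataset` and iterates over them in mini-batches with a `DataLoader`. The toy data and the batch size of 4 are arbitrary choices for illustration.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset

# Ten toy samples with three features each, plus a 0/1 label per sample.
features = torch.arange(30, dtype=torch.float32).reshape(10, 3)
labels = torch.arange(10) % 2

dataset = TensorDataset(features, labels)
# shuffle=False keeps the order deterministic; the final batch holds the remainder.
loader = DataLoader(dataset, batch_size=4, shuffle=False)

batch_sizes = []
for batch_features, batch_labels in loader:
    # Each batch is a pair of stacked tensors: (batch, 3) features and (batch,) labels.
    batch_sizes.append(batch_features.shape[0])

print(len(loader), batch_sizes)  # 3 [4, 4, 2]
```

With `num_workers` greater than 0, the `DataLoader` loads batches in worker processes started through `torch.multiprocessing`.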

Usually, PyTorch is used either as:

- A replacement for NumPy to use the power of GPUs.

- A deep learning research platform that provides maximum flexibility and speed.

Elaborating Further:

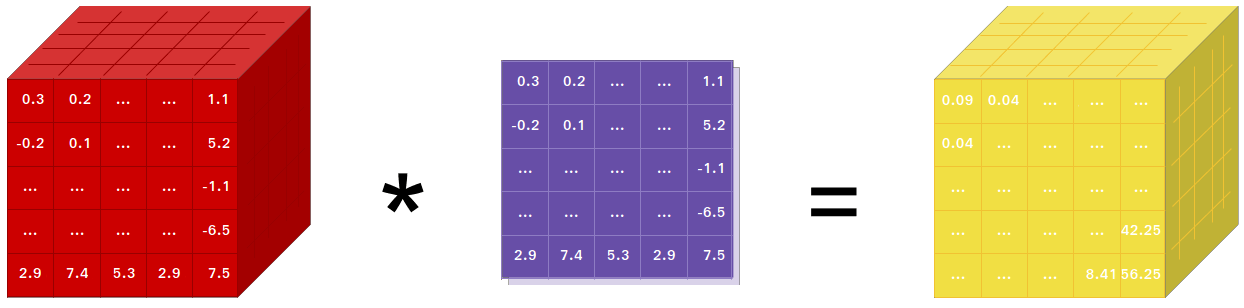

A GPU-Ready Tensor Library

If you use NumPy, then you have used Tensors (a.k.a. ndarray).

PyTorch provides Tensors that can live either on the CPU or the GPU; moving them to the GPU can accelerate computation by a huge amount.

We provide a wide variety of tensor routines to accelerate and fit your scientific computation needs, such as slicing, indexing, mathematical operations, linear algebra, and reductions. And they are fast!
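A short sketch of the routines named above, assuming only that `torch` is installed: the same code runs on a CUDA GPU when one is available and falls back to the CPU otherwise. The tensor values are arbitrary.

```python
import torch

# Use a CUDA GPU when one is available; otherwise fall back to the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# A 3x4 tensor holding 0., 1., ..., 11., created directly on the chosen device.
x = torch.arange(12, dtype=torch.float32, device=device).reshape(3, 4)

row = x[1]                 # indexing: the second row, [4., 5., 6., 7.]
block = x[:, 1:3]          # slicing: every row, columns 1 and 2 -> shape (3, 2)
gram = x @ x.T             # linear algebra: (3, 4) @ (4, 3) -> shape (3, 3)
total = x.sum()            # reduction over all elements -> 66.
col_means = x.mean(dim=0)  # reduction down each column -> [4., 5., 6., 7.]

# .item() and .tolist() copy results back into plain Python numbers.
print(device.type, total.item(), col_means.tolist())
```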

Dynamic Neural Networks: Tape-Based Autograd

PyTorch has a unique way of building neural networks: using and replaying a tape recorder.

Most frameworks such as TensorFlow, Theano, Caffe, and CNTK have a static view of the world. One has to build a neural network and reuse the same structure again and again. Changing the way the network behaves means that one has to start from scratch.

With PyTorch, we use a technique called reverse-mode auto-differentiation, which allows you to change the way your network behaves arbitrarily with zero lag or overhead. Our inspiration comes from several research papers on this topic, as well as current and past work such as torch-autograd, autograd, Chainer, etc.
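To make the tape idea concrete, the sketch below (the function `grow_until` is a made-up example, not a PyTorch API) uses an ordinary Python `while` loop whose number of iterations depends on the input values. Autograd records only the operations that actually ran, and `backward()` replays that record in reverse to compute gradients.

```python
import torch


def grow_until(x: torch.Tensor, limit: float = 10.0) -> tuple[torch.Tensor, int]:
    """Hypothetical example: keep doubling x until its norm reaches `limit`."""
    y = x
    steps = 0
    # The condition is evaluated eagerly on real values, so the recorded
    # graph contains exactly one multiplication per iteration that ran.
    while y.norm() < limit:
        y = y * 2
        steps += 1
    return y.sum(), steps


x = torch.tensor([1.0, 2.0], requires_grad=True)
out, steps = grow_until(x)
out.backward()  # reverse-mode pass over the operations recorded above
print(steps, out.item(), x.grad.tolist())  # 3 24.0 [8.0, 8.0]
```

Because the loop doubled `x` three times, the recorded graph computes `8 * x`, so each gradient entry is 8. An input whose norm is already 10 would record no multiplications at all, with no change to the code.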

While this technique is not unique to PyTorch, it's one of the fastest implementations of it to date. You get the best of speed and flexibility for your crazy research.

Python First

PyTorch is not a Python binding into a monolithic C++ framework. It is built to be deeply integrated into Python. You can use it naturally like you would use NumPy / SciPy / scikit-learn etc. You can write your new neural network layers in Python itself, using your favorite libraries and use packages such as Cython and Numba. Our goal is to not reinvent the wheel where appropriate.

Imperative Experiences

PyTorch is designed to be intuitive, linear in thought, and easy to use. When you execute a line of code, it gets executed. There isn't an asynchronous view of the world. When you drop into a debugger or receive error messages and stack traces, understanding them is straightforward. The stack trace points to exactly where your code was defined. We hope you never spend hours debugging your code because of bad stack traces or asynchronous and opaque execution engines.
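A small illustration of the eager behavior described above, assuming a standard `torch` install: each statement runs when it is reached, and a shape mismatch raises a `RuntimeError` on the offending line. The exact error text may vary between versions, so the sketch only captures it.

```python
import torch

a = torch.ones(2, 3)
b = torch.ones(4, 5)

# Each statement executes as soon as it is reached, so intermediate results
# are ordinary tensors you can print or inspect in a debugger.
c = a * 2
print(c.sum().item())  # 12.0

# An invalid operation fails immediately, on the line that caused it.
error_message = None
try:
    a @ b  # (2, 3) @ (4, 5): inner dimensions do not match
except RuntimeError as err:
    error_message = str(err)
    print("caught:", error_message)
```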

Fast and Lean

PyTorch has minimal framework overhead. We integrate acceleration libraries such as Intel MKL and NVIDIA (cuDNN, NCCL) to maximize speed. At the core, its CPU and GPU Tensor and neural network backends are mature and have been tested for years.

Hence, PyTorch is quite fast — whether you run small or large neural networks.

The memory usage in PyTorch is extremely efficient compared to Torch or some of the alternatives. We've written custom memory allocators for the GPU to make sure that your deep learning models are maximally memory efficient. This enables you to train bigger deep learning models than before.

Extensions Without Pain

Writing new neural network modules, or interfacing with PyTorch's Tensor API, was designed to be straightforward, with minimal abstractions.

You can write new neural network layers in Python using the torch API or your favorite NumPy-based libraries such as SciPy.
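As a minimal sketch of writing a layer purely in Python, the module below subclasses `torch.nn.Module`; the layer itself (`ScaledResidual`) is a hypothetical example, not something PyTorch ships. Only `forward` is written by hand; autograd supplies the backward pass.

```python
import torch
from torch import nn


class ScaledResidual(nn.Module):
    """Hypothetical layer computing x + alpha * relu(linear(x)), with alpha learned."""

    def __init__(self, features: int) -> None:
        super().__init__()
        self.linear = nn.Linear(features, features)
        # Starting alpha at zero makes the layer an exact identity map at init.
        self.alpha = nn.Parameter(torch.zeros(1))

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return x + self.alpha * torch.relu(self.linear(x))


layer = ScaledResidual(4)
x = torch.randn(2, 4)
out = layer(x)
out.sum().backward()  # gradients flow into linear.weight, linear.bias and alpha
print(torch.equal(out, x), layer.alpha.grad is not None)  # True True
```

The parameters registered this way (4x4 weights, 4 biases, 1 scale) are picked up automatically by `layer.parameters()` and therefore by any optimizer.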

If you want to write your layers in C/C++, we provide a convenient extension API that is efficient and has minimal boilerplate. No wrapper code needs to be written. You can see a tutorial here and an example here.

Installation

Binaries

Commands to install binaries via Conda or pip wheels are on our website: https://pytorch.org/get-started/locally/
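Once a binary is installed, a quick sanity check like the following (a sketch; the printed values depend on your platform and on the build you picked) shows what the installed build supports.

```python
import torch

# Report what this torch build was compiled with and what it sees at runtime.
# None of these calls require a GPU to be present.
print("torch version:", torch.__version__)
print("CUDA version built against:", torch.version.cuda)  # None on builds without CUDA
print("CUDA device available:", torch.cuda.is_available())
print("MPS (Apple GPU) available:", torch.backends.mps.is_available())
print("MKL available:", torch.backends.mkl.is_available())

# A tiny computation to confirm the install works end to end.
result = (torch.ones(2, 2) @ torch.ones(2, 2)).sum().item()
print("smoke test:", result)  # 8.0
```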

NVIDIA Jetson Platforms

Python wheels for NVIDIA's Jetson Nano, Jetson TX1/TX2, Jetson Xavier NX/AGX, and Jetson AGX Orin are provided here and the L4T container is published here.

They require JetPack 4.2 and above, and @dusty-nv and @ptrblck are maintaining them.

From Source

Prerequisites

If you are installing from source, you will need:

- Python 3.10 or later

- A compiler that fully supports C++20, such as clang or gcc (on Linux, gcc 11.3.0 or newer is required)

- Visual Studio or Visual Studio Build Tools (Windows only)

- At least 10 GB of free disk space

- 30-60 minutes for the initial build (subsequent rebuilds are much faster)

* PyTorch CI uses Visual C++ BuildTools, which come with Visual Studio Enterprise, Professional, or Community Editions. You can also install the build tools from https://visualstudio.microsoft.com/visual-cpp-build-tools/. The build tools do not come with Visual Studio Code by default.
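Before starting a long source build, it can help to check the basics from the list above. The sketch below uses only the Python standard library; `check_build_prerequisites` is a hypothetical helper, the compiler names are common defaults rather than an official list, and it does not verify compiler versions or C++20 support.

```python
import shutil
import sys

MIN_PYTHON = (3, 10)           # "Python 3.10 or later"
MIN_FREE_BYTES = 10 * 1000**3  # "at least 10 GB of free disk space", GB read as 10^9 bytes
COMPILER_NAMES = ("gcc", "g++", "clang", "clang++", "cl")  # cl is MSVC on Windows


def check_build_prerequisites(source_dir: str = ".") -> dict:
    """Rough pre-flight check: Python version, compilers on PATH, free disk space.

    It only looks for compiler executables on PATH; it does not check compiler
    versions, C++20 support, CUDA, or Visual Studio components.
    """
    free_bytes = shutil.disk_usage(source_dir).free
    return {
        "python_ok": sys.version_info[:2] >= MIN_PYTHON,
        "compilers_found": [name for name in COMPILER_NAMES if shutil.which(name)],
        "free_bytes": free_bytes,
        "disk_ok": free_bytes >= MIN_FREE_BYTES,
    }


if __name__ == "__main__":
    for key, value in check_build_prerequisites().items():
        print(f"{key}: {value}")
```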

An example of environment setup is shown below:

- Linux:

$ source <CONDA_INSTALL_DIR>/bin/activate

$ conda create -y -n <CONDA_NAME>

$ conda activate <CONDA_NAME>

- Windows:

$ call <CONDA_INSTALL_DIR>\Scripts\activate.bat

$ conda create -y -n <CONDA_NAME>

$ conda activate <CONDA_NAME>

$ call "C:\Program Files\Microsoft Visual Studio\<VERSION>\Community\VC\Auxiliary\Build\vcvarsall.bat" x64

A conda environment is not required. You can also do a PyTorch build in a standard virtual environment (e.g., one created with tools like uv), provided that your system has all the necessary dependencies that are unavailable as pip packages (e.g., CUDA, MKL) installed.

NVIDIA CUDA Support

If you want to compile with CUDA support, select a supported version of CUDA from our support matrix, then install the following:

- NVIDIA CUDA

- NVIDIA cuDNN v9.0 or above

- Compiler compatible with CUDA

Note: You could refer to the cuDNN Support Matrix for cuDNN versions with the various supported CUDA, CUDA driver, and NVIDIA hardware.

If you want to disable CUDA support, export the environment variable USE_CUDA=0.

Other potentially useful environment variables may be found in setup.py. If CUDA is installed in a non-standard location, set PATH so that the nvcc you want to use can be found (e.g., export PATH=/usr/local/cuda-12.8/bin:$PATH).

If you are building for NVIDIA's Jetson platforms (Jetson Nano, TX1, TX2, AGX Xavier), instructions to install PyTorch for Jetson Nano are available here.

AMD ROCm Support

If you want to compile with ROCm support, install:

- AMD ROCm 4.0 or above

- Note: ROCm is currently supported only on Linux systems.

By default, the build system expects ROCm to be installed in /opt/rocm. If ROCm is installed in a different directory, the ROCM_PATH environment variable must be set to the ROCm installation directory. The build system automatically detects the AMD GPU architecture. Optionally, the AMD GPU architecture can be explicitly set with the PYTORCH_ROCM_ARCH environment variable.

If you want to disable ROCm support, export the environment variable USE_ROCM=0.

Other potentially useful environment variables may be found in setup.py.

Intel GPU Support

If you want to compile with Intel GPU support:

- Follow the PyTorch Prerequisites for Intel GPUs instructions.

- Note: Intel GPU is supported on Linux and Windows.

If you want to disable Intel GPU support, export the environment variable USE_XPU=0.

Other potentially useful environment variables may be found in setup.py.
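Each backend section above comes down to environment variables that the build reads. As an illustration only, the sketch below collects the documented toggles (`USE_CUDA`, `USE_ROCM`, `USE_XPU`) and `ROCM_PATH` into an environment for the `python -m pip install --no-build-isolation -v -e .` command used later in this guide. `build_environment` and `run_build` are hypothetical helpers, not part of PyTorch; see setup.py for the full set of variables.

```python
import os
import subprocess
import sys
from typing import Dict, Mapping, Optional

# The editable-install command from the "Install PyTorch" section.
BUILD_COMMAND = [sys.executable, "-m", "pip", "install", "--no-build-isolation", "-v", "-e", "."]


def build_environment(
    cuda: bool = True,
    rocm: bool = True,
    xpu: bool = True,
    rocm_path: Optional[str] = None,
    base: Optional[Mapping[str, str]] = None,
) -> Dict[str, str]:
    """Hypothetical helper: copy `base` (default: os.environ) and apply backend toggles."""
    env = dict(os.environ if base is None else base)
    # Exporting a toggle as "0" disables that backend; leaving it unset keeps
    # whatever default the build system would otherwise use.
    for name, enabled in (("USE_CUDA", cuda), ("USE_ROCM", rocm), ("USE_XPU", xpu)):
        if not enabled:
            env[name] = "0"
    if rocm_path is not None:
        # Only needed when ROCm is installed somewhere other than /opt/rocm.
        env["ROCM_PATH"] = rocm_path
    return env


def run_build(source_dir: str, env: Mapping[str, str]) -> None:
    """Hypothetical helper: run the build; check=True raises CalledProcessError on failure."""
    subprocess.run(BUILD_COMMAND, cwd=source_dir, env=dict(env), check=True)


# Example (not executed here, since a full build takes a long time):
# run_build("pytorch", build_environment(cuda=False, rocm=False, xpu=False))
```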

Get the PyTorch Source

git clone https://github.com/pytorch/pytorch

cd pytorch

# if you are updating an existing checkout

git submodule sync

git submodule update --init --recursive

Install Dependencies

Common

# Run this command from the PyTorch directory after cloning the source code using the "Get the PyTorch Source" section above

pip install --group dev

On Linux

pip install mkl-static mkl-include

# CUDA only: Add LAPACK support for the GPU if needed

# Magma installation: run with an active conda environment, and specify the CUDA version to install

.ci/docker/common/install_magma_conda.sh 12.4

# (optional) If using torch.compile with inductor/triton, install the matching version of triton

# Run from the pytorch directory after cloning

# For Intel GPU support, please explicitly `export USE_XPU=1` before running this command.

make triton

On Windows

pip install mkl-static mkl-include

# Add these packages if torch.distributed is needed.

# Distributed package support on Windows is a prototype feature and is subject to changes.

conda install -c conda-forge libuv=1.51

Install PyTorch

On Linux

If you're compiling for AMD ROCm then first run this command:

# Only run this if you're compiling for ROCm

python tools/amd_build/build_amd.py

Then install PyTorch:

# the CMake prefix for conda environment

export CMAKE_PREFIX_PATH="${CONDA_PREFIX:-'$(dirname $(which conda))/../'}:${CMAKE_PREFIX_PATH}"

python -m pip install --no-build-isolation -v -e .

# the CMake prefix for a non-conda environment, e.g. a Python venv

# run the following after activating the venv, then run the pip install command above

export CMAKE_PREFIX_PATH="${VIRTUAL_ENV}:${CMAKE_PREFIX_PATH}"

On macOS

python -m pip install --no-build-isolation -v -e .

On Windows

If you want to build legacy Python code, please refer to Building on legacy code and CUDA.

CPU-only builds

In this mode PyTorch computations will run on your CPU, not your GPU.

python -m pip install --no-build-isolation -v -e .

Note on OpenMP: The desired OpenMP implementation is Intel OpenMP (iomp). In order to link against iomp, you'll need to manually download the library and set up the build environment by tweaking CMAKE_INCLUDE_PATH and LIB. The instructions here are an example of setting up both MKL and Intel OpenMP. Without these configurations for CMake, the Microsoft Visual C++ OpenMP runtime (vcomp) will be used.
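After the build finishes, one way to see which parallel backend and OpenMP settings were compiled in is to ask torch itself. This is a sketch: `torch.__config__.show()` and `torch.__config__.parallel_info()` return human-readable strings whose exact contents differ between builds and versions, so inspect them rather than relying on a fixed format.

```python
import torch

# Threading summary: parallel backend, thread counts, and OpenMP/MKL details
# where the build includes them.
info = torch.__config__.parallel_info()

# Lines that mention OpenMP, if any (the wording is not a stable format).
openmp_lines = [line for line in info.splitlines() if "openmp" in line.lower() or "omp_" in line]
for line in openmp_lines:
    print(line)

# Full build configuration: compiler, BLAS/LAPACK, CUDA and build flags.
config = torch.__config__.show()
print(config)
```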

CUDA based build

In this mode, PyTorch computations will leverage your GPU via CUDA for faster number crunching.

NVTX is needed to build PyTorch with CUDA. NVTX is part of the CUDA distribution, where it is called "Nsight Compute". To add it to an existing CUDA installation, run the CUDA installer again and check the corresponding checkbox. Make sure that CUDA with Nsight Compute is installed after Visual Studio.

Currently, VS 2017 / 2019 and Ninja are supported as CMake generators. If ninja.exe is detected in PATH, Ninja will be used as the default generator; otherwise, VS 2017 / 2019 will be used.

If Ninja is selected as the generator, the latest MSVC will get selected as the underlying toolchain.

Additional libraries such as Magma, oneDNN (a.k.a. MKLDNN or DNNL), and Sccache are often needed. Please refer to the installation-helper to install them.

You can refer to the build_pytorch.bat script for some other environment variable configurations.

cmd

:: Set the environment variables after you have downloaded and unzipped the mkl package,

:: otherwise CMake will fail with the error `Could NOT find OpenMP`.

set CMAKE_INCLUDE_PATH={Your directory}\mkl\include

set LIB={Your directory}\mkl\lib;%LIB%

:: Read the content in the previous section carefully before you proceed.

:: [Optional] If you want to override the underlying toolset used by Ninja and Visual Studio with CUDA, please run the following script block.

:: "Visual Studio 2019 Developer Command Prompt" will be run automatically.

:: Make sure you have CMake >= 3.12 before you do this when you use the Visual Studio generator.

set CMAKE_GENERATOR_TOOLSET_VERSION=14.27

set DISTUTILS_USE_SDK=1

for /f "usebackq tokens=*" %i in (`"%ProgramFiles(x86)%\Microsoft Visual Studio\Installer\vswhere.exe" -version [15^,17^) -products * -latest -property installationPath`) do call "%i\VC\Auxiliary\Build\vcvarsall.bat" x64 -vcvars_ver=%CMAKE_GENERATOR_TOOLSET_VERSION%

:: [Optional] If you want to override the CUDA host compiler

set CUDAHOSTCXX=C:\Program Files (x86)\Microsoft Visual Studio\2019\Community\VC\Tools\MSVC\14.27.29110\bin\HostX64\x64\cl.exe

python -m pip install --no-build-isolation -v -e .

Intel GPU builds

In this mode PyTorch with Intel GPU support will be built.

Please make sure the common prerequisites as well as the prerequisites for Intel GPU are properly installed and the environment variables are configured prior to starting the build. For build tool support, Visual Studio 2022 is required.

Then PyTorch can be built with the command:

:: CMD Commands:

:: Set the CMAKE_PREFIX_PATH to help find corresponding packages

:: %CONDA_PREFIX% only works after `conda activate custom_env`

if defined CMAKE_PREFIX_PATH (

set "CMAKE_PREFIX_PATH=%CONDA_PREFIX%\Library;%CMAKE_PREFIX_PATH%"

) else (

set "CMAKE_PREFIX_PATH=%CONDA_PREFIX%\Library"

)

python -m pip install --no-build-isolation -v -e .

Adjust Build Options (Optional)

Optionally, you can adjust the configuration of CMake variables (without building first) as follows. For example, this is how you can adjust the pre-detected directories for cuDNN or BLAS.

On Linux

export CMAKE_PREFIX_PATH="${CONDA_PREFIX:-'$(dirname $(which conda))/../'}:${CMAKE_PREFIX_PATH}"

CMAKE_ONLY=1 python setup.py build

ccmake build # or cmake-gui build

On macOS

export CMAKE_PREFIX_PATH="${CONDA_PREFIX:-'$(dirname $(which conda))/../'}:${CMAKE_PREFIX_PATH}"

MACOSX_DEPLOYMENT_TARGET=11.0 CMAKE_ONLY=1 python setup.py build

ccmake build # or cmake-gui build

Docker Image

Using pre-built images

You can also pull a pre-built Docker image from Docker Hub and run it with Docker v23.0+.

docker run --gpus all --rm -ti --ipc=host pytorch/pytorch:latest

Please note that PyTorch uses shared memory to share data between processes, so if torch multiprocessing is used (e.g., for multi-process data loaders), the default shared memory segment size that the container runs with is not enough, and you should increase the shared memory size with either the --ipc=host or the --shm-size command-line option to docker run.
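To check from inside a running container whether the shared memory segment is large enough, a standard-library sketch like the one below reads the size of the `/dev/shm` mount. It assumes a Linux container; the 64 MB comparison value is Docker's default `--shm-size`, used here only as a rough threshold, and `shm_size_bytes` is a hypothetical helper name.

```python
import os
import shutil
from typing import Optional

# Docker's default --shm-size is 64 MB; used here only as a rough threshold.
DOCKER_DEFAULT_SHM_BYTES = 64 * 1024**2


def shm_size_bytes(path: str = "/dev/shm") -> Optional[int]:
    """Total size of the filesystem mounted at `path`, or None if it does not exist."""
    if not os.path.isdir(path):
        return None
    return shutil.disk_usage(path).total


size = shm_size_bytes()
if size is None:
    print("No /dev/shm mount found; this check assumes a Linux container.")
elif size <= DOCKER_DEFAULT_SHM_BYTES:
    print(f"/dev/shm is only {size / 1024**2:.0f} MiB; consider --ipc=host or a larger --shm-size.")
else:
    print(f"/dev/shm is {size / 1024**2:.0f} MiB.")
```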

Building the image yourself

NOTE: Must be built with a Docker version >= 23.0

The Dockerfile is supplied to build images with CUDA 12.1 support and cuDNN v9.

You can pass the PYTHON_VERSION=x.y make variable to specify which Python version is to be used by Miniconda, or leave it unset to use the default, as the Dockerfile uses system Python.

make -f docker.Makefile

# images are tagged as docker.io/${your_docker_username}/pytorch

You can also pass the CMAKE_VARS="..." environment variable to specify additional CMake variables to be passed to CMake during the build. See setup.py for the list of available variables.

CMAKE_VARS="..." make -f docker.Makefile

Building the Documentation

To build documentation in various formats, you will need Sphinx and the pytorch_sphinx_theme2.

Before you build the documentation locally, ensure torch is installed in your environment. For small fixes, you can install the nightly version as described in Getting Started.

For more complex fixes, such as adding a new module and docstrings for the new module, you might need to install torch from source. See Docstring Guidelines for docstring conventions.

cd docs/

pip install -r requirements.txt

make html

make serve

Run make to get a list of all available output formats.

If you get a katex error, run npm install katex. If it persists, try npm install -g katex.

[!NOTE] If you see a numpy incompatibility error, run:

pip install 'numpy<2'

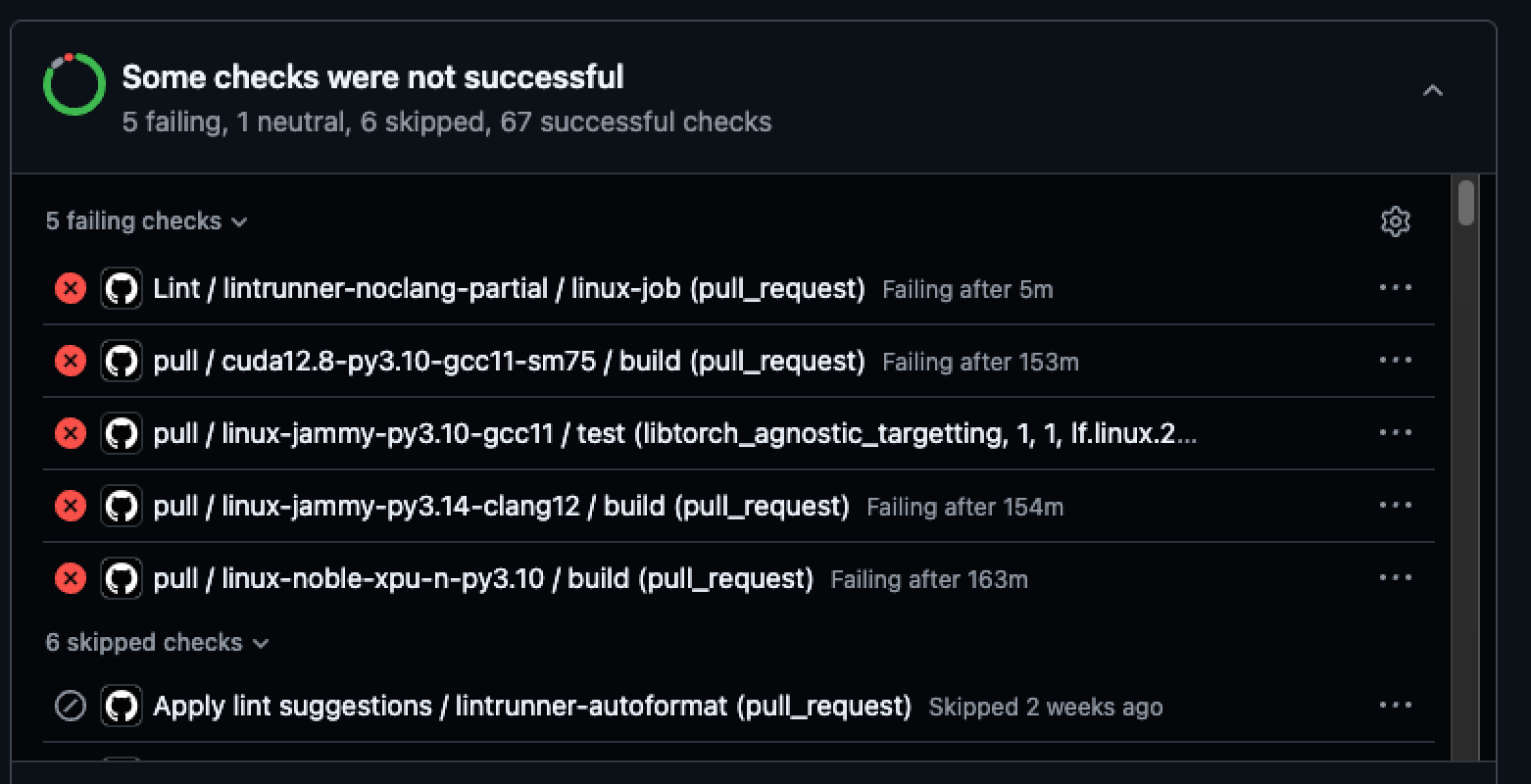

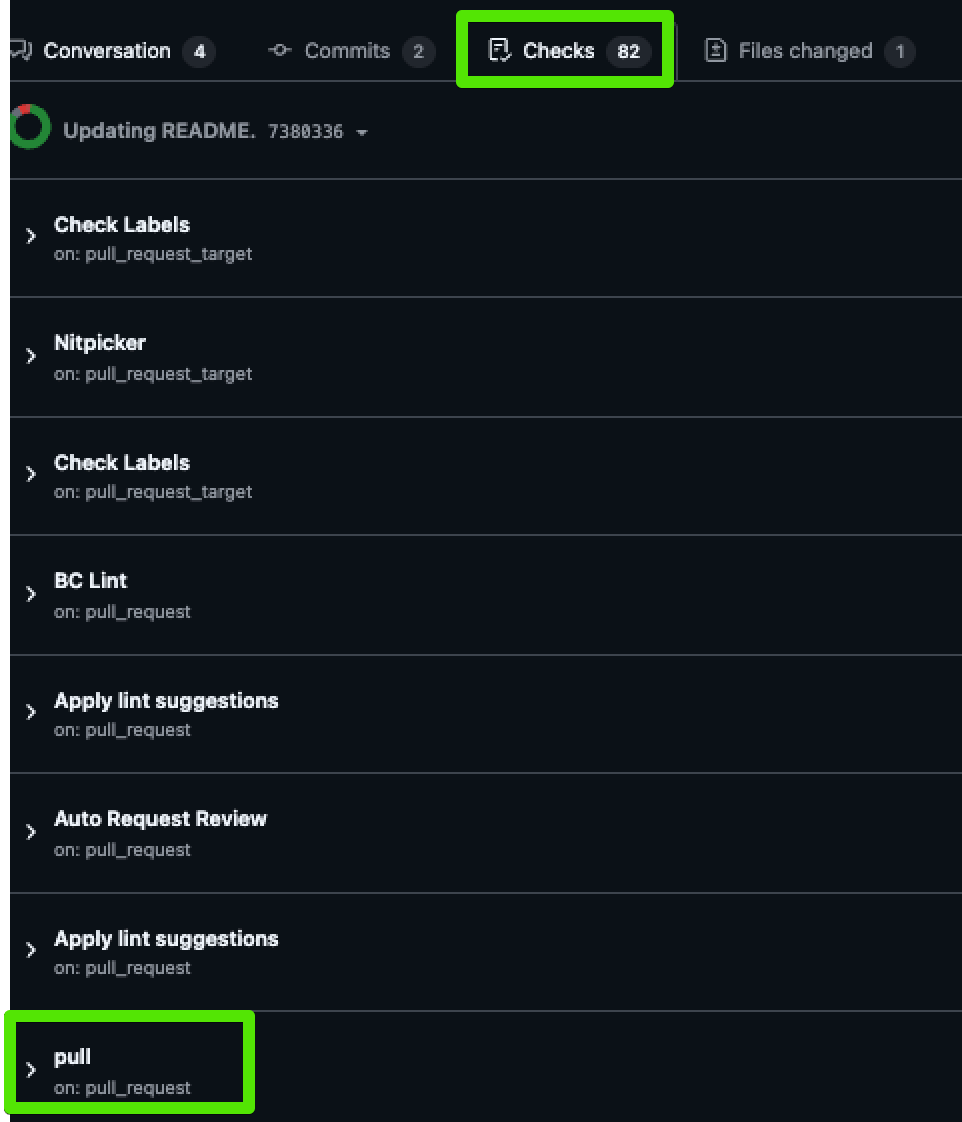

Troubleshooting CI Errors

Your CI build may show errors you didn't have locally. Here's how to find the errors relevant to the docs.

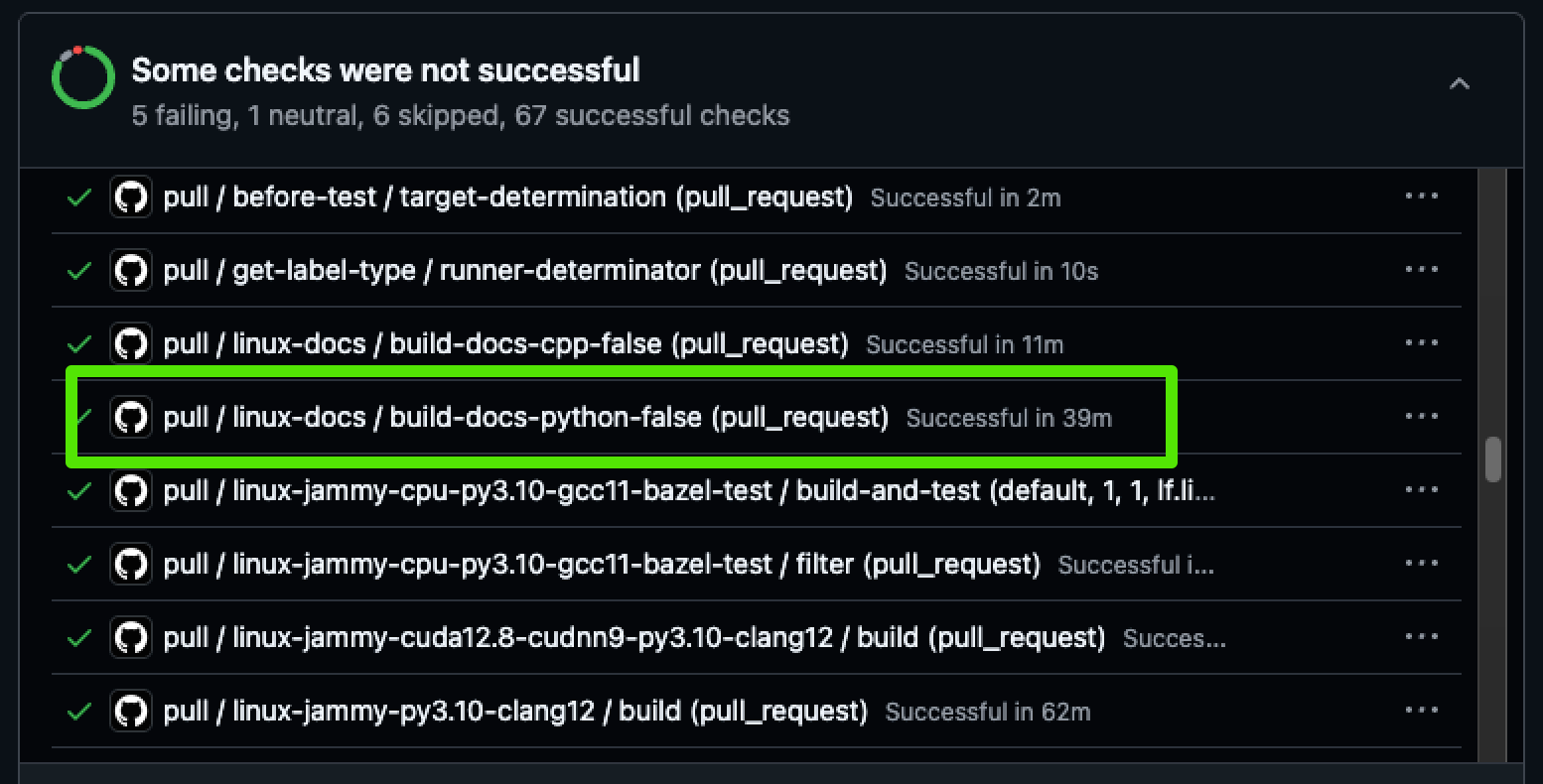

If the build has any errors, you will see something like this on the PR:

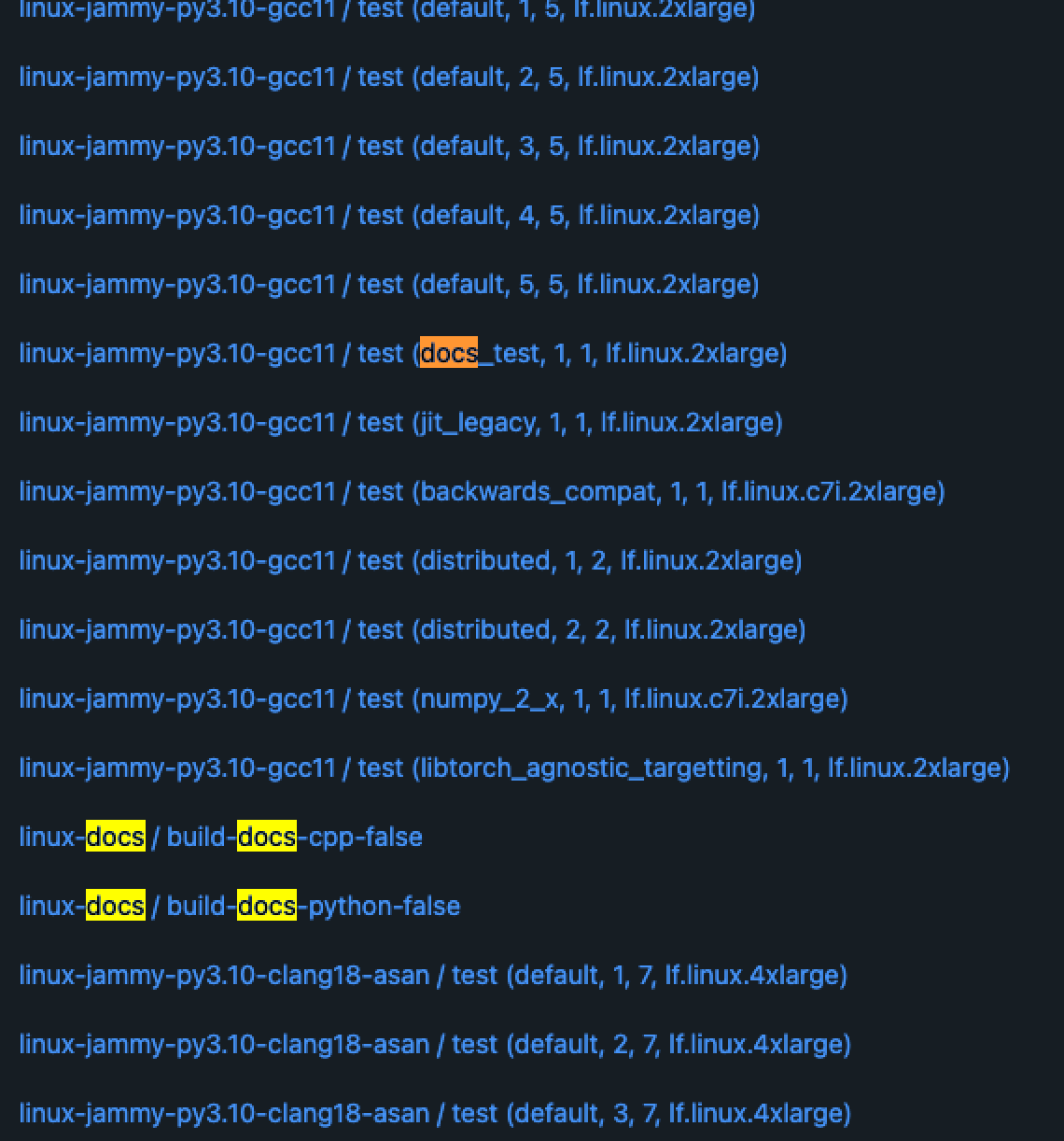

Any doc-related errors will occur in jobs that include "doc" somewhere in the title. It doesn't look like any of these jobs are relevant to our docs.

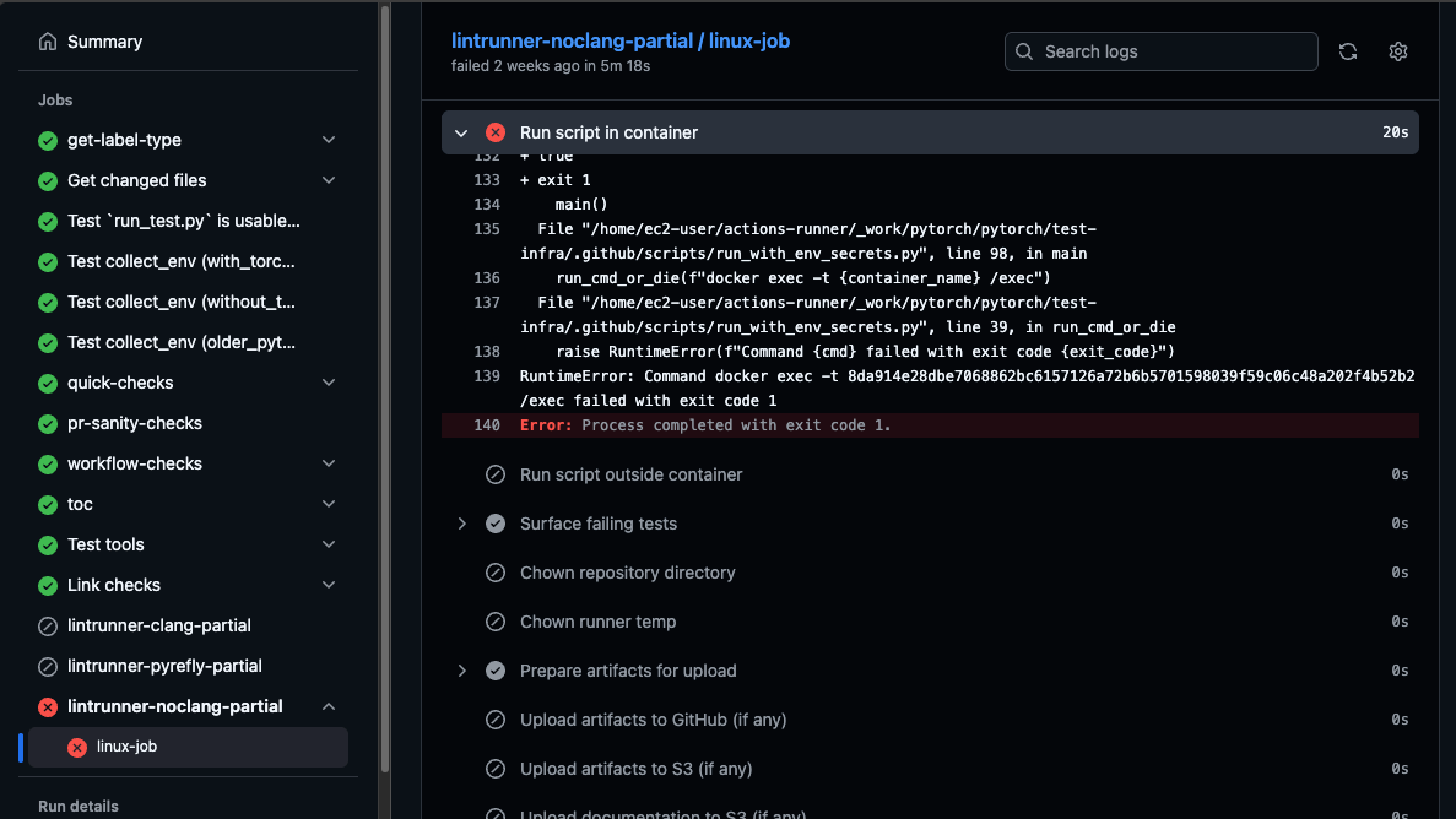

Let's take a look anyway. Click on the job to see the logs:

And we can be sure that this job does not involve docs.

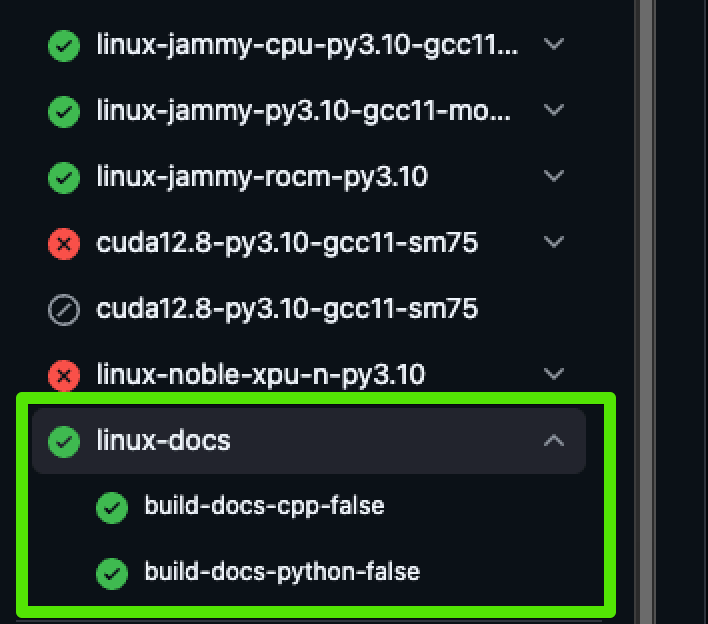

Looking at this build, we can see these jobs are relevant to our docs, and they didn't have any errors:

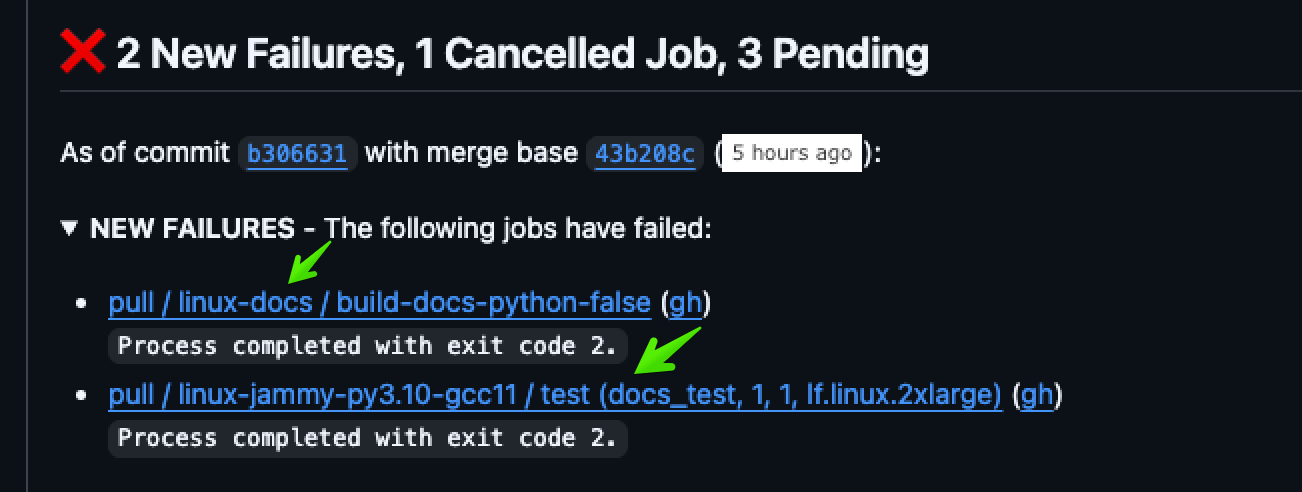

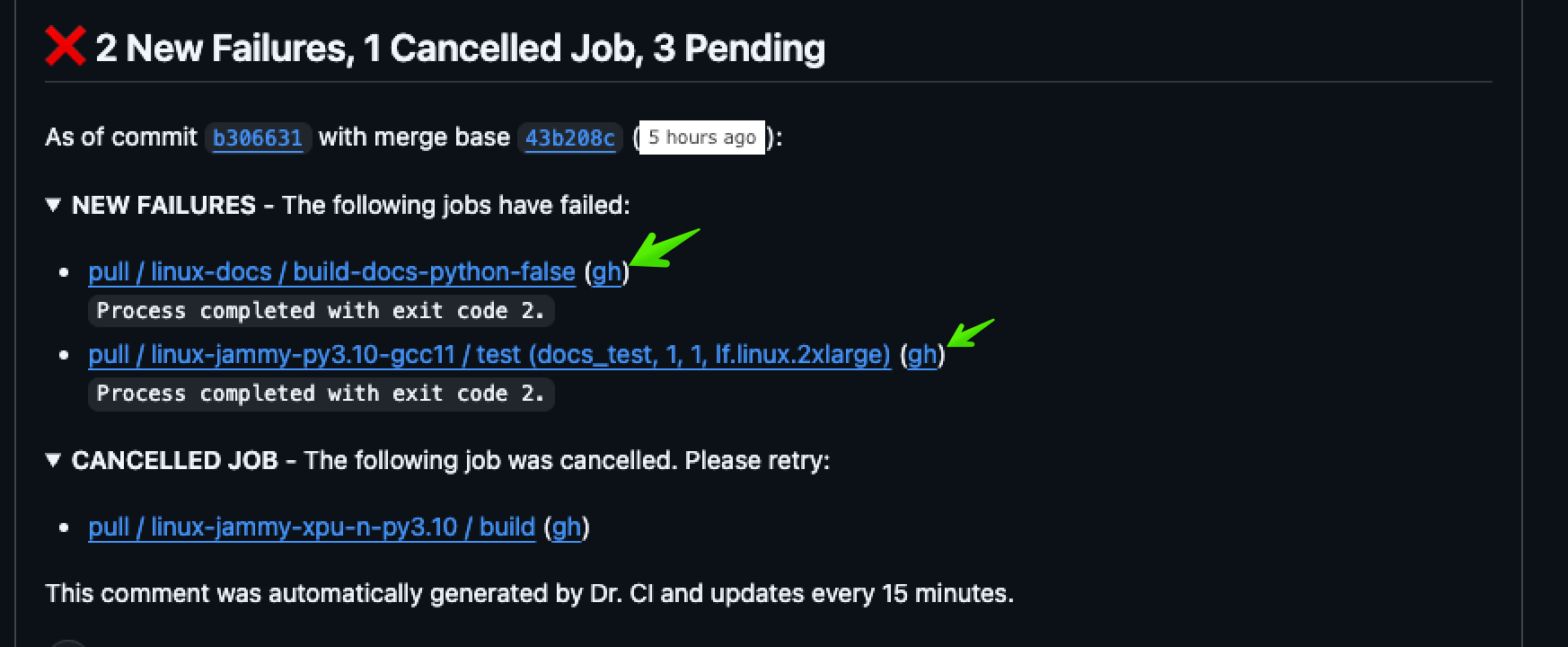

You might also see a comment on the PR like this:

We can see that some of these issues are relevant to our docs.

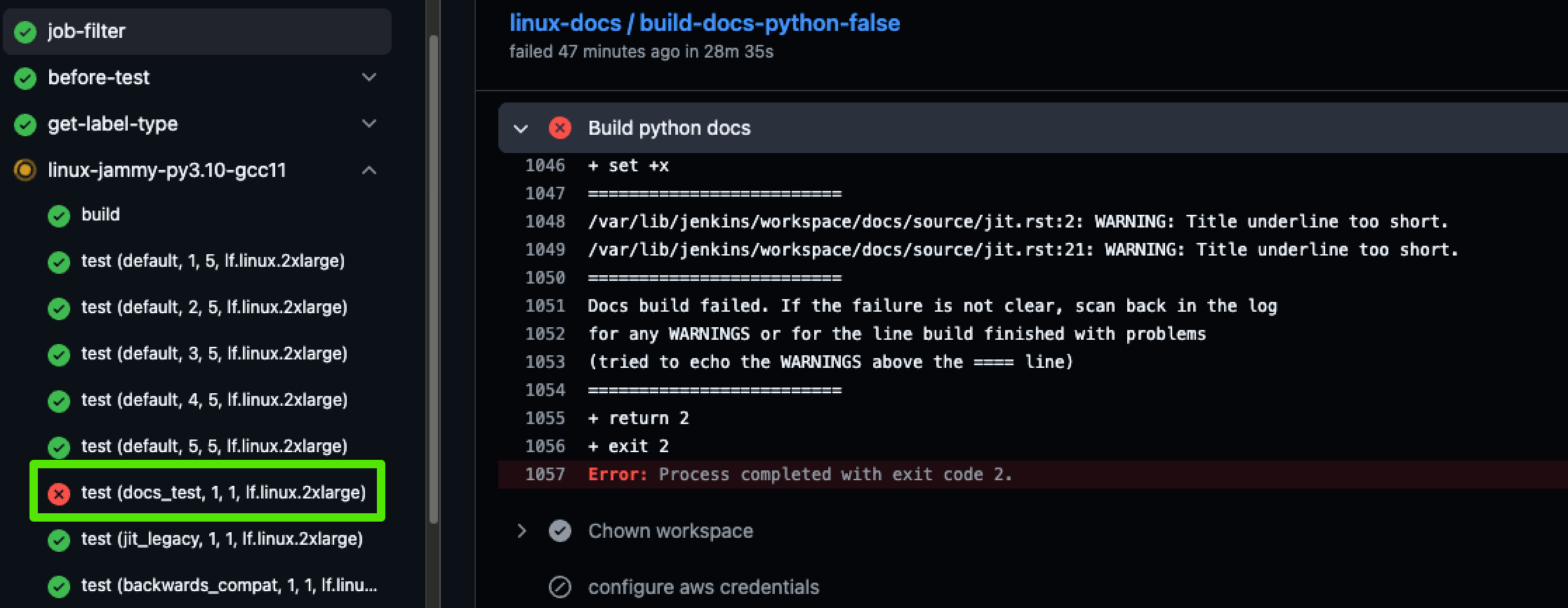

Open the logs by clicking on the gh link:

And here we can see there is a doc-related error:

You can always find the relevant doc builds by going to the Checks tab on your PR, and scrolling down to pull.

You can either click through or toggle the accordion to see all of the jobs here, where you can see the docs jobs highlighted:

If you click through, you'll see the doc jobs at the bottom, like this:

Building a PDF

To compile a PDF of all PyTorch documentation, ensure you have texlive and LaTeX installed. On macOS, you can install them using:

brew install --cask mactex

To create the PDF:

- Run `make latexpdf`. This will generate the necessary files in the `build/latex` directory.

- Navigate to this directory and execute `make LATEXOPTS="-interaction=nonstopmode"`. This will produce a `pytorch.pdf` with the desired content. Run this command one more time so that it generates the correct table of contents and index.

[!NOTE] To view the Table of Contents, switch to the Table of Contents view in your PDF viewer.

Previous Versions

Installation instructions and binaries for previous PyTorch versions may be found on our website.

Getting Started

Pointers to get you started:

- Tutorials: get you started with understanding and using PyTorch

- Examples: easy to understand PyTorch code across all domains

- The API Reference

- Glossary

Resources

- PyTorch.org

- PyTorch Tutorials

- PyTorch Examples

- PyTorch Models

- Intro to Deep Learning with PyTorch from Udacity

- Intro to Machine Learning with PyTorch from Udacity

- Deep Neural Networks with PyTorch from Coursera

- PyTorch Twitter

- PyTorch Blog

- PyTorch YouTube

Communication

- Forums: Discuss implementations, research, etc. https://discuss.pytorch.org

- GitHub Issues: Bug reports, feature requests, install issues, RFCs, thoughts, etc.

- Slack: The PyTorch Slack hosts a primary audience of moderate to experienced PyTorch users and developers for general chat, online discussions, collaboration, etc. If you are a beginner looking for help, the primary medium is PyTorch Forums. If you need a Slack invite, please fill this form: https://goo.gl/forms/PP1AGvNHpSaJP8to1

- Newsletter: A no-noise, one-way email newsletter with important announcements about PyTorch. You can sign up here: https://eepurl.com/cbG0rv

- Facebook Page: Important announcements about PyTorch. https://www.facebook.com/pytorch

- For brand guidelines, please visit our website at pytorch.org

Releases and Contributing

Typically, PyTorch has three minor releases a year. Please let us know if you encounter a bug by filing an issue.

We appreciate all contributions. If you are planning to contribute back bug-fixes, please do so without any further discussion.

If you plan to contribute new features, utility functions, or extensions to the core, please first open an issue and discuss the feature with us. Sending a PR without discussion might result in a rejected PR, because we might be taking the core in a different direction than you are aware of.

To learn more about making a contribution to PyTorch, please see our Contribution page. For more information about PyTorch releases, see the Release page.

The Team

PyTorch is a community-driven project with several skillful engineers and researchers contributing to it.

PyTorch is currently maintained by Soumith Chintala, Gregory Chanan, Dmytro Dzhulgakov, Edward Yang, Alban Desmaison, Piotr Bialecki and Nikita Shulga with major contributions coming from hundreds of talented individuals in various forms and means. A non-exhaustive but growing list needs to mention: Trevor Killeen, Sasank Chilamkurthy, Sergey Zagoruyko, Adam Lerer, Francisco Massa, Alykhan Tejani, Luca Antiga, Alban Desmaison, Andreas Koepf, James Bradbury, Zeming Lin, Yuandong Tian, Guillaume Lample, Marat Dukhan, Natalia Gimelshein, Christian Sarofeen, Martin Raison, Edward Yang, Zachary Devito.

Note: This project is unrelated to hughperkins/pytorch with the same name. Hugh is a valuable contributor to the Torch community and has helped with many things Torch and PyTorch.

License

PyTorch has a BSD-style license, as found in the LICENSE file.


Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distributions

Built Distributions


If you're not sure about the file name format, learn more about wheel file names.
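The file names listed below follow the wheel naming convention `{distribution}-{version}(-{build tag})?-{python tag}-{abi tag}-{platform tag}.whl`. A minimal sketch that splits a name into those fields is shown here (`WheelName` and `parse_wheel_filename` are illustrative names); for real tooling, `packaging.utils.parse_wheel_filename` from the `packaging` library handles normalization and edge cases more thoroughly.

```python
from typing import NamedTuple, Optional


class WheelName(NamedTuple):
    distribution: str
    version: str
    build: Optional[str]
    python_tag: str
    abi_tag: str
    platform_tag: str


def parse_wheel_filename(filename: str) -> WheelName:
    """Split {dist}-{version}(-{build})?-{python}-{abi}-{platform}.whl into its fields."""
    if not filename.endswith(".whl"):
        raise ValueError(f"not a wheel file name: {filename!r}")
    parts = filename[: -len(".whl")].split("-")
    if len(parts) == 5:
        dist, version, py, abi, plat = parts
        build = None
    elif len(parts) == 6:
        dist, version, build, py, abi, plat = parts
    else:
        raise ValueError(f"unexpected number of fields in {filename!r}")
    return WheelName(dist, version, build, py, abi, plat)


name = parse_wheel_filename("torch-2.12.0-cp314-cp314t-manylinux_2_28_x86_64.whl")
print(name.python_tag, name.abi_tag, name.platform_tag)
# cp314 cp314t manylinux_2_28_x86_64
```

A trailing `t` in the ABI tag, as in `cp314t`, marks a build for the free-threaded CPython interpreter, which is why those files are tagged "CPython 3.14t" below.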


File details

Details for the file torch-2.12.0-cp314-cp314t-win_amd64.whl.

File metadata

- Download URL: torch-2.12.0-cp314-cp314t-win_amd64.whl

- Upload date:

- Size: 123.2 MB

- Tags: CPython 3.14t, Windows x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 5f96b63f8287f66a005dd1b5a6abba2920f11156c5e5c4d815f3e2050fd1aa16 |
| MD5 | be89415865b2346c93f325990ea275b9 |
| BLAKE2b-256 | 8821afadd25ecd81b3cea1e11c73cf1ab41a983a50271548c3ec7ec3b9efc3e9 |
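To check a downloaded file against the digests in these tables, you can hash it locally. The sketch below uses only `hashlib` and reads the file in chunks, since the wheels are hundreds of megabytes; `HASHERS`, `stream_digest`, and `verify_file` are illustrative names, and the BLAKE2b-256 entry assumes BLAKE2b with a 32-byte digest, matching the 64-hex-character values listed.

```python
import hashlib
from typing import BinaryIO

# Constructors for the three digests listed in the "File hashes" tables.
HASHERS = {
    "sha256": hashlib.sha256,
    "md5": hashlib.md5,
    "blake2b-256": lambda: hashlib.blake2b(digest_size=32),  # 256-bit BLAKE2b
}


def stream_digest(stream: BinaryIO, algorithm: str = "sha256", chunk_size: int = 1 << 20) -> str:
    """Hash a binary stream chunk by chunk so a large wheel never sits in memory at once."""
    hasher = HASHERS[algorithm.lower()]()
    for chunk in iter(lambda: stream.read(chunk_size), b""):
        hasher.update(chunk)
    return hasher.hexdigest()


def verify_file(path: str, expected_hex: str, algorithm: str = "sha256") -> bool:
    """Return True if the file's digest matches the expected hex string."""
    with open(path, "rb") as f:
        return stream_digest(f, algorithm) == expected_hex.strip().lower()


# Example (assumes the wheel was downloaded into the current directory):
# verify_file(
#     "torch-2.12.0-cp314-cp314t-win_amd64.whl",
#     "5f96b63f8287f66a005dd1b5a6abba2920f11156c5e5c4d815f3e2050fd1aa16",
# )
```

For pip-based installs, pip's hash-checking mode (`--require-hashes`, with `--hash=sha256:...` entries in a requirements file) performs the same comparison automatically.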

File details

Details for the file torch-2.12.0-cp314-cp314t-manylinux_2_28_x86_64.whl.

File metadata

- Download URL: torch-2.12.0-cp314-cp314t-manylinux_2_28_x86_64.whl

- Upload date:

- Size: 532.2 MB

- Tags: CPython 3.14t, manylinux: glibc 2.28+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 6a7512adfdd7f6732e40de1c620831e3c75b39b98cef60b11d0c5f0a76473ec5 |
| MD5 | 14942792ed21de2d5f0eab81acbf597c |
| BLAKE2b-256 | c9e91a0b575d98d0afedd8f157d23fa3d2759421483660448e60d0a4b10b6daa |

File details

Details for the file torch-2.12.0-cp314-cp314t-manylinux_2_28_aarch64.whl.

File metadata

- Download URL: torch-2.12.0-cp314-cp314t-manylinux_2_28_aarch64.whl

- Upload date:

- Size: 426.4 MB

- Tags: CPython 3.14t, manylinux: glibc 2.28+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | a43ac605a5e13116c72b64c359644cce0229f213dde48d2ae0ae5eb5becf7feb |
| MD5 | fdcdd483db9d12254412c4270a81c048 |
| BLAKE2b-256 | 565e83c450ec7b0bb40a7b74611c1b5440f9260e33c54c90d556fd4a1f0fd955 |

File details

Details for the file torch-2.12.0-cp314-cp314t-macosx_14_0_arm64.whl.

File metadata

- Download URL: torch-2.12.0-cp314-cp314t-macosx_14_0_arm64.whl

- Upload date:

- Size: 88.3 MB

- Tags: CPython 3.14t, macOS 14.0+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | b4556715c8572758625d62b6e0ae3b1f76c440221913a6fb5e100f321fb4fb02 |
| MD5 | 94fead780314e126f289afadd1064cb4 |
| BLAKE2b-256 | 7b782e12b37ce50a19a037d7bc62d652a5a8f27385a7b05859d6bc9204f20cfe |

File details

Details for the file torch-2.12.0-cp314-cp314-win_amd64.whl.

File metadata

- Download URL: torch-2.12.0-cp314-cp314-win_amd64.whl

- Upload date:

- Size: 123.0 MB

- Tags: CPython 3.14, Windows x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | c66696857e987efb8bc1777a37357ec4f60ab5e8af6250b83d6034437fa2d8f3 |
| MD5 | 9b27f592ef26c3fe0bd41cd482b6cdfb |
| BLAKE2b-256 | f1b492c80d1bbfee1c0036c06d1d2155a3065bd2423134c83bf8a47e65cd6b9b |

File details

Details for the file torch-2.12.0-cp314-cp314-manylinux_2_28_x86_64.whl.

File metadata

- Download URL: torch-2.12.0-cp314-cp314-manylinux_2_28_x86_64.whl

- Upload date:

- Size: 532.3 MB

- Tags: CPython 3.14, manylinux: glibc 2.28+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | e2ad3eb85d39c3cab62dfa93ed5a73516e6a53c6713cb97d004004fe089f0f1f |
| MD5 | af20615206e4a9ad0e5a7952cbbded46 |
| BLAKE2b-256 | cdd47e730dba0c7032a4154dc9056b76cf9625515e030e269cfbf8098fcfee7d |

File details

Details for the file torch-2.12.0-cp314-cp314-manylinux_2_28_aarch64.whl.

File metadata

- Download URL: torch-2.12.0-cp314-cp314-manylinux_2_28_aarch64.whl

- Upload date:

- Size: 426.4 MB

- Tags: CPython 3.14, manylinux: glibc 2.28+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 891c769072637c74e9a5a77a3bc782894696d8ffec83b938df8536dee7f0ba78 |
| MD5 | 09d195686bb6118b4ba093e1a6196e88 |
| BLAKE2b-256 | 33c31c1eb00e34555b536dddf792676026a988d710ed36981aa00499b36b0620 |

File details

Details for the file torch-2.12.0-cp314-cp314-macosx_14_0_arm64.whl.

File metadata

- Download URL: torch-2.12.0-cp314-cp314-macosx_14_0_arm64.whl

- Upload date:

- Size: 88.0 MB

- Tags: CPython 3.14, macOS 14.0+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | f7dfae4a519197dfa050e98d8e36378a0fb5899625a875c2b54445005a2e404e |
| MD5 | 53803ea728166038db01d1db5869a6e3 |
| BLAKE2b-256 | 67dcac069f8d6e8be701535921141055293b0d4819d3d7f224a4612cf157c7f9 |

File details

Details for the file torch-2.12.0-cp313-cp313t-win_amd64.whl.

File metadata

- Download URL: torch-2.12.0-cp313-cp313t-win_amd64.whl

- Upload date:

- Size: 123.2 MB

- Tags: CPython 3.13t, Windows x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 2140e373e9a51a3e22ef62e8d14366d0b470d18f0adf19fdc757368077133a34 |
| MD5 | 4d77cbd484b3951126c360b86f65151f |
| BLAKE2b-256 | d8c8052405e6ad05d3237bfe5a4df78f917773956f8e17813a2d44c059068b74 |

File details

Details for the file torch-2.12.0-cp313-cp313t-manylinux_2_28_x86_64.whl.

File metadata

- Download URL: torch-2.12.0-cp313-cp313t-manylinux_2_28_x86_64.whl

- Upload date:

- Size: 532.2 MB

- Tags: CPython 3.13t, manylinux: glibc 2.28+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | a6a2eebb237d3b1d9ad3b378e86d9b9e0782afdea8b1e0eba6a13646b9b49c07 |
| MD5 | c0b3a16f4a47799f0e8a67c0a51fc44a |
| BLAKE2b-256 | 4394b0b4fdc3014122e0a7302fb90086d352aa48f2576f0b252561ebb38c01a8 |

File details

Details for the file torch-2.12.0-cp313-cp313t-manylinux_2_28_aarch64.whl.

File metadata

- Download URL: torch-2.12.0-cp313-cp313t-manylinux_2_28_aarch64.whl

- Upload date:

- Size: 426.4 MB

- Tags: CPython 3.13t, manylinux: glibc 2.28+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | af68dbf403439cae9ceaeaaf92f8352b460787dcd27b92aa05c40dd4a19c0f1e |
| MD5 | bf9ab081a4a60f67fc40644495ac2d22 |
| BLAKE2b-256 | b707055d06d985b445d67422d25b033c11cf55bbb81785d4c4e68e28bca5820e |

File details

Details for the file torch-2.12.0-cp313-cp313t-macosx_14_0_arm64.whl.

File metadata

- Download URL: torch-2.12.0-cp313-cp313t-macosx_14_0_arm64.whl

- Upload date:

- Size: 88.3 MB

- Tags: CPython 3.13t, macOS 14.0+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 10ee1448a9f304d3b987eb4656f664ba6e4d7b410ca7a5a7c642199777a2cf88 |
| MD5 | 514fec13d248b8b60c15492c2d88eb1a |
| BLAKE2b-256 | 9bade95e822f3538171e22640a7fbe839a1fdb666600bf6487025de2ff03b11a |

File details

Details for the file torch-2.12.0-cp313-cp313-win_amd64.whl.

File metadata

- Download URL: torch-2.12.0-cp313-cp313-win_amd64.whl

- Upload date:

- Size: 123.0 MB

- Tags: CPython 3.13, Windows x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 3fee918902090ade827643e758e98363278815de583c75d111fdd665ebffde9f |
| MD5 | e8ef02ec283efa8ff2e750cbee0c93a5 |
| BLAKE2b-256 | 3e2fbdbaaa267de519ef1b73054bf590d8c93c37a266c9a4e24a01bd38b6918f |

File details

Details for the file torch-2.12.0-cp313-cp313-manylinux_2_28_x86_64.whl.

File metadata

- Download URL: torch-2.12.0-cp313-cp313-manylinux_2_28_x86_64.whl

- Upload date:

- Size: 532.3 MB

- Tags: CPython 3.13, manylinux: glibc 2.28+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 5d6b560dfa7d56291c07d615c3bb73e8d9943d9b6d87f76cd0d9d570c4797fa6 |
| MD5 | 14a4c1f7df7ae52066273dfc4a4a961e |
| BLAKE2b-256 | 498a94bdecd13f5aaa90d45920b89789d9fe7c6f4af8c3cdd7ce01fcb59908fc |

File details

Details for the file torch-2.12.0-cp313-cp313-manylinux_2_28_aarch64.whl.

File metadata

- Download URL: torch-2.12.0-cp313-cp313-manylinux_2_28_aarch64.whl

- Upload date:

- Size: 426.4 MB

- Tags: CPython 3.13, manylinux: glibc 2.28+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 864392c73b7654f4d2b3ae712f607937d0dbb1101c4555fbb41848106b297f39 |
| MD5 | 80475dfea9f0d50b1ba21c2ac196ed70 |
| BLAKE2b-256 | a50452bdaf4787eab6ac7d7f5851dff934e4def0bc8ead9c8fd2b69b3e529699 |

File details

Details for the file torch-2.12.0-cp313-cp313-macosx_14_0_arm64.whl.

File metadata

- Download URL: torch-2.12.0-cp313-cp313-macosx_14_0_arm64.whl

- Upload date:

- Size: 88.0 MB

- Tags: CPython 3.13, macOS 14.0+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 90dd587a5f61bfe1307148b581e2084fc5bc4a06e2b90a20e9a36b81087ff16b |
| MD5 | 427eef2dbaf96a5606c40e0232f15d77 |
| BLAKE2b-256 | 86ca01896c80ba921676aa45886b2c5b8d774912de2a1f719de48169c6f755cd |

File details

Details for the file torch-2.12.0-cp312-cp312-win_amd64.whl.

File metadata

- Download URL: torch-2.12.0-cp312-cp312-win_amd64.whl

- Upload date:

- Size: 123.0 MB

- Tags: CPython 3.12, Windows x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 8b958caff4a14d3a3b0b2dfc6a378f64dda9728a9dad28c08a0db9ce4dafb549 |
| MD5 | 68679a26cfced6dfc5b36a2eb020f3b1 |
| BLAKE2b-256 | b9c264b06cbb7830fb3cd9be13e1158b31a3f36b68e6a209105ee3c9d9480be0 |

File details

Details for the file torch-2.12.0-cp312-cp312-manylinux_2_28_x86_64.whl.

File metadata

- Download URL: torch-2.12.0-cp312-cp312-manylinux_2_28_x86_64.whl

- Upload date:

- Size: 532.3 MB

- Tags: CPython 3.12, manylinux: glibc 2.28+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 4b4f64c2c2b11f7510d93dd6412b87025ff6eddd6bb61c3b5a3d892ea20c4756 |
| MD5 | 33b0d87d47fe05b8efdb2542e30bdfae |
| BLAKE2b-256 | def080026028b603c4650ff270fc3785bdef4bd6738765a9cc5a0f5a637d65a2 |

File details

Details for the file torch-2.12.0-cp312-cp312-manylinux_2_28_aarch64.whl.

File metadata

- Download URL: torch-2.12.0-cp312-cp312-manylinux_2_28_aarch64.whl

- Upload date:

- Size: 426.4 MB

- Tags: CPython 3.12, manylinux: glibc 2.28+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 8fbef9f108a863e7722a73740998967e3b074742a834fc5be3a535a2befa7057 |
| MD5 | 8590839682a0b6683662baddf661d13d |
| BLAKE2b-256 | 798176debf1db1343bd929bbb5d74c89fb437c2ed88eb144712557e7bd3eea45 |

File details

Details for the file torch-2.12.0-cp312-cp312-macosx_14_0_arm64.whl.

File metadata

- Download URL: torch-2.12.0-cp312-cp312-macosx_14_0_arm64.whl

- Upload date:

- Size: 88.0 MB

- Tags: CPython 3.12, macOS 14.0+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | b41339df93d491435e790ff8bcbae1c0ce777175889bfd1281d119862793e6a2 |
| MD5 | 3c6ef44e25df1d11e3faef83e3480e80 |
| BLAKE2b-256 | efbb285d643f254731294c9b595a007eac39db4600a98682d7bca688f42ca164 |

File details

Details for the file torch-2.12.0-cp311-cp311-win_amd64.whl.

File metadata

- Download URL: torch-2.12.0-cp311-cp311-win_amd64.whl

- Upload date:

- Size: 123.0 MB

- Tags: CPython 3.11, Windows x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | dd37188ea325042cb1f6cafa56822b11ada2520c04791a52629b0af25bdfbfd9 |
| MD5 | 7894738b24ad9a5bf2e47356d20c3eda |
| BLAKE2b-256 | 121ba61ce2004f9ab0ea8964a6e6168133a127795667639e2ff4f8f2bdb16a65 |

File details

Details for the file torch-2.12.0-cp311-cp311-manylinux_2_28_x86_64.whl.

File metadata

- Download URL: torch-2.12.0-cp311-cp311-manylinux_2_28_x86_64.whl

- Upload date:

- Size: 532.2 MB

- Tags: CPython 3.11, manylinux: glibc 2.28+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 415c1b8d0412f67551c8e89a2daca0fb3e56694af0281ba155eaa9da481f58b4 |
| MD5 | be5136a0a14b9c2dab7cedbe431a4e47 |
| BLAKE2b-256 | e2d2a7dd5a3f9bdaa7842124e8e2359202b317c48d47d2fc5816fafdf2049adb |

File details

Details for the file torch-2.12.0-cp311-cp311-manylinux_2_28_aarch64.whl.

File metadata

- Download URL: torch-2.12.0-cp311-cp311-manylinux_2_28_aarch64.whl

- Upload date:

- Size: 426.4 MB

- Tags: CPython 3.11, manylinux: glibc 2.28+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | c12592630aef72feaf18bd3f197ef587bbfa21131b31c38b23ab2e55fce92e36 |
| MD5 | be50f91d44796a476000132625466418 |
| BLAKE2b-256 | 129cdda0dbd547dc549839824135f223792fd0e725f28ed0715dda366b7acaa2 |

File details

Details for the file torch-2.12.0-cp311-cp311-macosx_14_0_arm64.whl.

File metadata

- Download URL: torch-2.12.0-cp311-cp311-macosx_14_0_arm64.whl

- Upload date:

- Size: 88.0 MB

- Tags: CPython 3.11, macOS 14.0+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 10802fd383bbfed646212e765a72c37d2185205d4f26eb197a254e8ac7ddcb25 |
| MD5 | a049ddb7de330cdb8efc4a90a0aa2d2b |
| BLAKE2b-256 | 1862131124fb95df03811b8260d1d43dcc5ee85ea1a344b964613d7efe77fb08 |

File details

Details for the file torch-2.12.0-cp310-cp310-win_amd64.whl.

File metadata

- Download URL: torch-2.12.0-cp310-cp310-win_amd64.whl

- Upload date:

- Size: 122.9 MB

- Tags: CPython 3.10, Windows x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | cf9839790285dd472e7a16aafcb4a4e6bf58ec1b494045044b0eefb0eb4bd1f2 |
| MD5 | e6e02f210242f5599fda5894820f96e9 |
| BLAKE2b-256 | 4a648a0d036e166a6aa85ee09bef072f3655d1ba5d5486a68d1b03b6813c01b3 |

File details

Details for the file torch-2.12.0-cp310-cp310-manylinux_2_28_x86_64.whl.

File metadata

- Download URL: torch-2.12.0-cp310-cp310-manylinux_2_28_x86_64.whl

- Upload date:

- Size: 532.1 MB

- Tags: CPython 3.10, manylinux: glibc 2.28+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | d47e7dee68ac4cd7a068b26bcd6b989935427709fae1c8f7bd0019978f829e15 |
| MD5 | 50a1dcb8136f32ecd7c8602c7f6a0354 |
| BLAKE2b-256 | 8e0cc76b6a087820bab55705b94dfc074e520de9ae91f5ef90da2ecbf2a3ef12 |

File details

Details for the file torch-2.12.0-cp310-cp310-manylinux_2_28_aarch64.whl.

File metadata

- Download URL: torch-2.12.0-cp310-cp310-manylinux_2_28_aarch64.whl

- Upload date:

- Size: 426.4 MB

- Tags: CPython 3.10, manylinux: glibc 2.28+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | d4d029801cb7b6df858804a2a21b00cc2aa0bf0ee5d2ab18d343c9e9e5681f35 |
| MD5 | 8929b56340caad20ee7e705ddd568375 |
| BLAKE2b-256 | 3460d930eac44c30de06ed16f6d1ba4e785e1632532b50d8f0bf9bf699a4d0c7 |

File details

Details for the file torch-2.12.0-cp310-cp310-macosx_14_0_arm64.whl.

File metadata

- Download URL: torch-2.12.0-cp310-cp310-macosx_14_0_arm64.whl

- Upload date:

- Size: 88.0 MB

- Tags: CPython 3.10, macOS 14.0+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.11.6

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 1834bd984f8a2f4f16bdfbeecca9146184b220aa46276bf5756735b5dae12812 |
| MD5 | 10c4e47917159218c6baf291d5713321 |
| BLAKE2b-256 | c2b753fe0436586716ab7aecff41e26b9302d57c85ded481fd83a2cd741e6b4e |
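The digests in the tables above can be checked locally after downloading a wheel. What follows is a minimal sketch using only the standard-library `hashlib`. The helper names `file_digests` and `verify_sha256` are hypothetical and illustrative. They are not part of PyTorch or pip. The usage block also assumes the cp313 x86-64 wheel was saved to the current directory.

```python
import hashlib
from pathlib import Path


def file_digests(path, chunk_size=1 << 20):
    """Return the SHA256, MD5 and BLAKE2b-256 hex digests of a file.

    The file is read in 1 MiB chunks so multi-hundred-MB wheels
    never have to fit in memory at once.
    """
    sha256 = hashlib.sha256()
    md5 = hashlib.md5()
    blake2b_256 = hashlib.blake2b(digest_size=32)  # BLAKE2b truncated to a 256-bit digest
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            sha256.update(chunk)
            md5.update(chunk)
            blake2b_256.update(chunk)
    return {
        "SHA256": sha256.hexdigest(),
        "MD5": md5.hexdigest(),
        "BLAKE2b-256": blake2b_256.hexdigest(),
    }


def verify_sha256(path, expected_hex):
    """True if the file's SHA256 matches the expected hex digest (case-insensitive)."""
    return file_digests(path)["SHA256"] == expected_hex.strip().lower()


if __name__ == "__main__":
    # Assumption: the wheel was downloaded into the current working directory.
    wheel = Path("torch-2.12.0-cp313-cp313-manylinux_2_28_x86_64.whl")
    expected = "5d6b560dfa7d56291c07d615c3bb73e8d9943d9b6d87f76cd0d9d570c4797fa6"
    if wheel.exists():
        print("SHA256 matches:", verify_sha256(wheel, expected))
    else:
        print(f"{wheel} not found; download it first")
```

SHA256 is the digest worth comparing. MD5 is listed only for completeness and should not be relied on for integrity against tampering. pip can also enforce a digest at install time in hash-checking mode. You pin a requirement such as `torch==2.12.0 --hash=sha256:<digest>` and install with `pip install --require-hashes -r requirements.txt`. That mode then requires pinned hashes for every dependency as well.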