HyperFlow: Next-Generation Computational Framework for Machine Learning & Deep Learning

🚀 Introduction

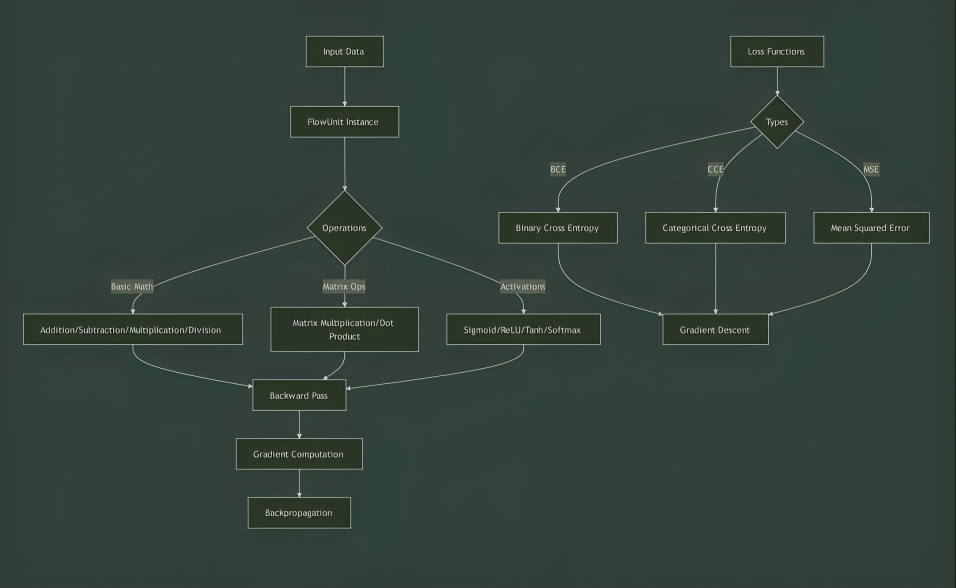

HyperFlow is an advanced computational framework designed to enhance machine learning and deep learning research. At its core is the FlowUnit class, an optimized and modular implementation supporting:

- 🔢 Mathematical operations

- ⚙️ Activation functions

- 📉 Optimization techniques

- 🔄 Backpropagation

HyperFlow aims to stay simple while remaining capable, which makes it well suited to learning how neural networks, automatic differentiation, and optimization techniques work.
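To make the idea of automatic differentiation concrete, here is a minimal sketch of a scalar autodiff node. The class name `TinyValue` and its internals are hypothetical and written only for illustration; this is not HyperFlow's `FlowUnit` implementation. Only the method name `backpropagate` is borrowed from the examples in this document.

```python
import math


class TinyValue:
    """Hypothetical scalar autodiff node (illustration only, not FlowUnit)."""

    def __init__(self, data, parents=()):
        self.data = float(data)
        self.grad = 0.0
        self._parents = parents
        self._backward = lambda: None  # replaced by the op that creates the node

    def __add__(self, other):
        out = TinyValue(self.data + other.data, (self, other))

        def _backward():
            # d(a + b)/da = 1 and d(a + b)/db = 1
            self.grad += out.grad
            other.grad += out.grad

        out._backward = _backward
        return out

    def __mul__(self, other):
        out = TinyValue(self.data * other.data, (self, other))

        def _backward():
            # d(a * b)/da = b and d(a * b)/db = a
            self.grad += other.data * out.grad
            other.grad += self.data * out.grad

        out._backward = _backward
        return out

    def tanh(self):
        t = math.tanh(self.data)
        out = TinyValue(t, (self,))

        def _backward():
            # d tanh(a)/da = 1 - tanh(a)^2
            self.grad += (1.0 - t * t) * out.grad

        out._backward = _backward
        return out

    def backpropagate(self):
        # Build a topological order so each node runs after all of its consumers.
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent in node._parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            node._backward()


x = TinyValue(2.0)
w = TinyValue(-3.0)
b = TinyValue(1.0)
y = (x * w + b).tanh()
y.backpropagate()
print(x.grad, w.grad, b.grad)
```

Each operation records how to push gradients back to its inputs, and `backpropagate` replays those steps from the output toward the leaves. Gradients are accumulated with `+=` so that a value used in several places receives the sum of all contributions.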

🎯 Inspiration

Inspired by Micrograd by Andrej Karpathy, HyperFlow extends its capabilities by offering:

- 🚀 Advanced mathematical operations

- 🎯 Broader activation function support

- ⚡ Optimized backpropagation

- 🔍 Enhanced modularity

It serves as a lightweight alternative to full deep learning frameworks such as PyTorch when the goal is to study how these systems work internally.

💡 Why HyperFlow?

✅ Lightweight & Transparent – Focuses on raw Python implementations to help understand ML/DL concepts.

⚡ Efficient & Optimized – Uses map functions for better performance.

🔧 Flexible & Powerful – Supports neural networks, including backpropagation.

📉 Minimal NumPy Dependency – Encourages learning without excessive reliance on pre-built libraries.
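As a rough illustration of what a raw-Python, `map`-based element-wise operation can look like, consider the sketch below. The helper `elementwise` is hypothetical; whether HyperFlow structures its operations this way internally is an assumption.

```python
from operator import add, mul


def elementwise(op, xs, ys):
    """Hypothetical helper: apply a binary operation pairwise using map."""
    if len(xs) != len(ys):
        raise ValueError("inputs must have the same length")
    return list(map(op, xs, ys))


a = [1.0, 2.0, 3.0]
b = [4.0, 5.0, 6.0]

summed = elementwise(add, a, b)    # [5.0, 7.0, 9.0]
product = elementwise(mul, a, b)   # [4.0, 10.0, 18.0]
print(summed, product)
```

Passing two iterables to `map` walks them in lockstep, so no index bookkeeping is needed.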

🔑 Core Functionalities

🔢 Mathematical Operations

create2darray, convert2darray, add, sub, mul, matmul, dot, pow
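For readers who want to see what `dot` and `matmul` compute, the following is a plain-Python sketch on nested lists. The function names `plain_dot` and `plain_matmul` are hypothetical stand-ins and are not the library's own functions, whose signatures are not shown here.

```python
def plain_dot(u, v):
    """Sum of pairwise products of two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    return sum(x * y for x, y in zip(u, v))


def plain_matmul(A, B):
    """Matrix product of A (n x k) and B (k x m) given as lists of rows."""
    if len(A[0]) != len(B):
        raise ValueError("inner dimensions must match")
    columns = list(zip(*B))  # transpose B so each column is easy to iterate
    return [[plain_dot(row, col) for col in columns] for row in A]


print(plain_dot([1, 2, 3], [4, 5, 6]))                   # 32
print(plain_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))  # [[19, 22], [43, 50]]
```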

⚙️ Activation Functions

sigmoid, tanh, ReLU, Leaky ReLU, softmax
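The standard definitions of these activations can be written in a few lines of plain Python. This is a sketch of the textbook formulas, not HyperFlow's code; the default `alpha=0.01` mirrors the value used in the activation example in this document, and the max-subtraction in `softmax` is a common numerical-stability choice that the library may or may not use.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def relu(x):
    return max(0.0, x)


def leaky_relu(x, alpha=0.01):
    # Negative inputs are scaled by alpha instead of being zeroed.
    return x if x > 0 else alpha * x


def softmax(xs):
    # Subtracting the maximum leaves the result unchanged but avoids overflow.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


values = [1.0, 2.0, 3.0]
print([sigmoid(v) for v in values])
print([math.tanh(v) for v in values])
print([relu(v) for v in values])
print([leaky_relu(v) for v in values])
print(softmax(values))
```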

📉 Loss Functions

categorical_cross_entropy, binary_cross_entropy, mse_loss
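The three losses listed above follow well-known formulas. The sketch below writes them as plain functions on Python lists; the names and signatures are hypothetical and do not reflect the library's `LossFunctions` API. The small `eps` clamp in the binary case is an assumption added to avoid `log(0)`.

```python
import math


def mse(predictions, targets):
    """Mean of squared differences."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)


def binary_cross_entropy(probabilities, targets, eps=1e-12):
    """Mean of -[t*log(p) + (1-t)*log(1-p)] over all samples."""
    total = 0.0
    for p, t in zip(probabilities, targets):
        p = min(max(p, eps), 1.0 - eps)  # keep p strictly inside (0, 1)
        total += -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
    return total / len(targets)


def categorical_cross_entropy(logits, one_hot):
    """-sum(t_i * log softmax(logits)_i), computed via log-sum-exp."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return -sum(t * (z - log_sum_exp) for z, t in zip(logits, one_hot))


print(mse([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))
print(binary_cross_entropy([0.9, 0.2], [1, 0]))
print(categorical_cross_entropy([2.0, 1.0, 0.1], [1, 0, 0]))
```

With logits `[2.0, 1.0, 0.1]` and target `[1, 0, 0]`, the categorical loss comes out at roughly 0.417.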

🔄 Optimization & Backpropagation

backpropagate, gradient_descent
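Gradient descent itself is a short loop: repeatedly step against the gradient. The sketch below minimizes a one-dimensional quadratic; the function `descend`, the learning rate, and the step count are illustrative assumptions and are unrelated to the signature of the library's `gradient_descent`.

```python
def descend(grad_fn, w0, learning_rate=0.1, steps=100):
    """Hypothetical helper: plain gradient descent on a single parameter."""
    w = w0
    for _ in range(steps):
        w -= learning_rate * grad_fn(w)  # move against the gradient
    return w


# Minimize f(w) = (w - 3)^2, whose gradient is 2 * (w - 3).
w_star = descend(lambda w: 2.0 * (w - 3.0), w0=0.0)
print(w_star)
```

In a neural network the gradient would come from backpropagation rather than from a hand-written formula, but the update rule is the same.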

🧠 Neural Network Implementation

The Neuron.py module simplifies the creation of:

- 🏗 Neurons

- 🔗 Layers

- 🏛 Complete Neural Networks

This module offers full control over weights, biases, and architecture for in-depth experimentation.
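The neuron, layer, and network hierarchy can be sketched as three small classes. The class names, the `tanh` activation, and the initialization scheme below are all assumptions chosen for illustration; the actual `Neuron.py` API is not shown in this document and may differ.

```python
import math
import random


class SketchNeuron:
    """Hypothetical neuron: weighted sum of inputs plus bias, then tanh."""

    def __init__(self, n_inputs, rng):
        self.weights = [rng.uniform(-1.0, 1.0) for _ in range(n_inputs)]
        self.bias = 0.0

    def __call__(self, inputs):
        z = sum(w * x for w, x in zip(self.weights, inputs)) + self.bias
        return math.tanh(z)


class SketchLayer:
    """Hypothetical layer: several neurons that all read the same inputs."""

    def __init__(self, n_inputs, n_outputs, rng):
        self.neurons = [SketchNeuron(n_inputs, rng) for _ in range(n_outputs)]

    def __call__(self, inputs):
        return [neuron(inputs) for neuron in self.neurons]


class SketchNetwork:
    """Hypothetical network: layers applied one after another."""

    def __init__(self, sizes, rng):
        self.layers = [SketchLayer(a, b, rng) for a, b in zip(sizes, sizes[1:])]

    def __call__(self, inputs):
        for layer in self.layers:
            inputs = layer(inputs)
        return inputs


rng = random.Random(0)                 # fixed seed for reproducible weights
net = SketchNetwork([3, 4, 1], rng)    # 3 inputs, one hidden layer of 4, 1 output
out = net([1.0, 2.0, 3.0])
print(out)
```

Because weights and biases are ordinary attributes, they can be inspected or overwritten directly, which is the kind of control the module description refers to.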

📌 Examples & Outputs

✅ Dot Product Calculation

    from hyperflow import FlowUnit

    a = FlowUnit([1, 2, 3])
    b = FlowUnit([4, 5, 6])

    # 1*4 + 2*5 + 3*6 = 32
    result = a.__dot__(b)
    print(f"Dot product result: {result.data}")

Output:

    Dot product result: 32

🔄 Backpropagation Test

    from hyperflow import FlowUnit

    def test_backpropagation():
        x = FlowUnit(2.0)
        y = FlowUnit(-3.0)
        z = FlowUnit(1.5)

        # x feeds two activations, so its gradient sums both paths
        a = x.sigmoid()
        b = y.tanh()
        c = z.relu()
        d = x.leaky_relu()

        loss = a + b + c + d
        loss.backpropagate()

        print(f"x.grad: {x.grad}")
        print(f"y.grad: {y.grad}")
        print(f"z.grad: {z.grad}")

    test_backpropagation()

Output:

    x.grad: 1.1049935854035067
    y.grad: 0.00986603716543999
    z.grad: 1.0
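The gradients printed above can be checked by hand with the standard derivative formulas, using only the `math` module. Since `x` is positive, the leaky ReLU contributes a slope of 1, and `x` also passes through the sigmoid, so the two contributions add up.

```python
import math


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


x, y, z = 2.0, -3.0, 1.5

s = sigmoid(x)
dx = s * (1.0 - s) + 1.0        # sigmoid'(x) + leaky_relu'(x) for x > 0
dy = 1.0 - math.tanh(y) ** 2    # tanh'(y)
dz = 1.0                        # relu'(z) for z > 0

print(dx, dy, dz)
```

These values agree with the reported `x.grad`, `y.grad`, and `z.grad` up to floating-point rounding.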

🔢 Activation Function Tests

    import numpy as np
    from hyperflow import FlowUnit

    def test_flow_unit_functions():
        data = np.array([1.0, 2.0, 3.0])
        flow_unit = FlowUnit(data)

        # Each activation returns a new FlowUnit; .data holds the values
        sigmoid_out = flow_unit.sigmoid()
        print("Sigmoid Output:", sigmoid_out.data)

        tanh_out = flow_unit.tanh()
        print("Tanh Output:", tanh_out.data)

        relu_out = flow_unit.relu()
        print("ReLU Output:", relu_out.data)

        leaky_relu_out = flow_unit.leaky_relu(alpha=0.01)
        print("Leaky ReLU Output:", leaky_relu_out.data)

        softmax_out = flow_unit.softmax()
        print("Softmax Output:", softmax_out.data)

    test_flow_unit_functions()

Output (values truncated to three decimal places):

    Sigmoid Output: [0.731 0.880 0.952]
    Tanh Output: [0.761 0.964 0.995]
    ReLU Output: [1.0 2.0 3.0]
    Leaky ReLU Output: [1.0 2.0 3.0]
    Softmax Output: [0.090 0.244 0.665]

📉 Loss Function Tests

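    import numpy as np
    # Assumption: LossFunctions is importable from the top-level package;
    # adjust the import if it lives in a submodule.
    from hyperflow import FlowUnit, LossFunctions

    def test_loss_functions():
        # Categorical cross-entropy on raw logits with a one-hot target
        logits = FlowUnit(np.array([2.0, 1.0, 0.1]))
        target = [1, 0, 0]
        cce_loss = LossFunctions.categorical_cross_entropy(logits, target)
        print("Categorical Cross-Entropy Loss:", cce_loss.data)

        # Binary cross-entropy; parameters are presumably (weights, bias)
        inputs = FlowUnit(np.array([[1.0, 2.0], [3.0, 4.0]]))
        target = FlowUnit(np.array([1, 0]))
        parameters = (FlowUnit(np.array([0.5, -0.5])), FlowUnit(0.1))
        bce_loss = LossFunctions.binary_cross_entropy_loss(inputs, target, parameters)
        print("Binary Cross-Entropy Loss:", bce_loss.data)

    test_loss_functions()

Output:

    Categorical Cross-Entropy Loss: 0.417
    Binary Cross-Entropy Loss: [0.715]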

📚 Explore More Use Cases

Find more examples and use cases in the GitHub repository.
