Unofficial implementation of Momentum Low-Rank Compression (MLorc) for memory-efficient LLM fine-tuning


MLorc - Momentum Low-Rank Compression for Memory-Efficient LLM Fine-tuning

Unofficial implementation of "MLorc: Momentum Low-rank Compression for Large Language Model Adaptation"

This repository implements MLorc (Momentum Low-rank Compression), a memory-efficient training paradigm that reduces the memory footprint of full-parameter fine-tuning for large language models. Based on the paper "MLorc: Momentum Low-rank Compression for Large Language Model Adaptation", the method offers an alternative to existing memory-efficient techniques.

How MLorc Works

MLorc's core innovation lies in its approach to momentum compression and reconstruction:

- Direct Momentum Compression: It directly compresses and reconstructs both the first- and second-order momentum using Randomized SVD (RSVD) at each optimization step.

- Adaptive Second-Order Momentum Handling: The second-order momentum must stay non-negative, so its reconstruction passes through ReLU. To keep the update stable, MLorc adaptively adds a small constant to the zero values that ReLU introduces.
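The two steps above can be sketched in NumPy as follows. This is a minimal illustration and not the package's code: the function names (`rsvd_compress`, `reconstruct`, `reconstruct_second_moment`, `adam_like_step`) are hypothetical, the fixed `fill` constant stands in for the adaptive constant described above, and Adam's bias correction is omitted for brevity.

```python
import numpy as np


def rsvd_compress(mat, rank, rng=None):
    """Randomized SVD: return factors (u, s, vt) with (u * s) @ vt ~= mat."""
    rng = np.random.default_rng() if rng is None else rng
    k = min(rank, *mat.shape)
    omega = rng.standard_normal((mat.shape[1], k))  # random test matrix
    q, _ = np.linalg.qr(mat @ omega)                # orthonormal basis of the sampled range
    u_small, s, vt = np.linalg.svd(q.T @ mat, full_matrices=False)
    return q @ u_small, s, vt


def reconstruct(u, s, vt):
    """Rebuild the dense matrix from its low-rank factors."""
    return (u * s) @ vt


def reconstruct_second_moment(u, s, vt, fill=1e-12):
    """Rebuild the second moment, clamp negatives with ReLU, then fill the zeros.

    `fill` is a fixed placeholder (an assumption); the method described above
    chooses the constant adaptively.
    """
    v = np.maximum(reconstruct(u, s, vt), 0.0)  # ReLU keeps the estimate non-negative
    v[v == 0.0] = fill                          # avoid exact zeros in the denominator
    return v


def adam_like_step(weight, grad, state, lr=1e-3, beta1=0.9, beta2=0.999,
                   eps=1e-8, rank=4):
    """One Adam-style update that stores both moments only as low-rank factors."""
    m = beta1 * reconstruct(*state["m"]) + (1.0 - beta1) * grad
    v = beta2 * reconstruct_second_moment(*state["v"]) + (1.0 - beta2) * grad**2
    state["m"] = rsvd_compress(m, rank)  # compress again before the next step
    state["v"] = rsvd_compress(v, rank)
    return weight - lr * m / (np.sqrt(v) + eps)


# Usage: an 8x6 weight whose moments are kept as rank-4 factors.
rng = np.random.default_rng(0)
w = rng.standard_normal((8, 6))
zeros = (np.zeros((8, 4)), np.zeros(4), np.zeros((4, 6)))
state = {"m": zeros, "v": zeros}
w = adam_like_step(w, rng.standard_normal((8, 6)), state)
```

Between steps, each moment of an `m x n` weight is stored as `rank * (m + n + 1)` numbers instead of `m * n`, which is where the memory saving comes from in this sketch.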

Key Advantages of MLorc

MLorc is broadly applicable to any momentum-based optimizer (e.g., Adam, Lion) and delivers superior performance:

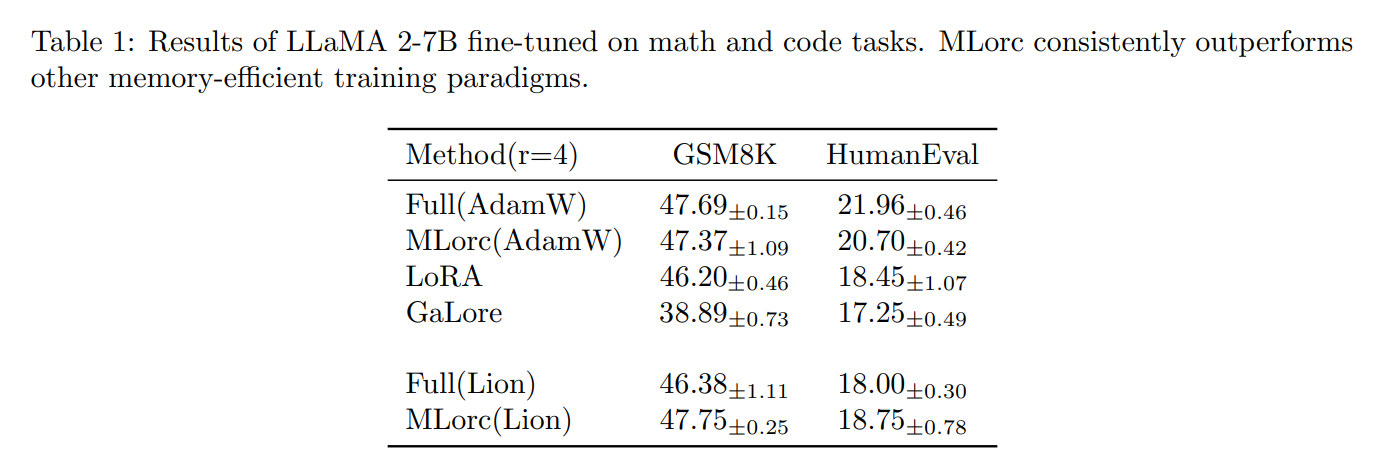

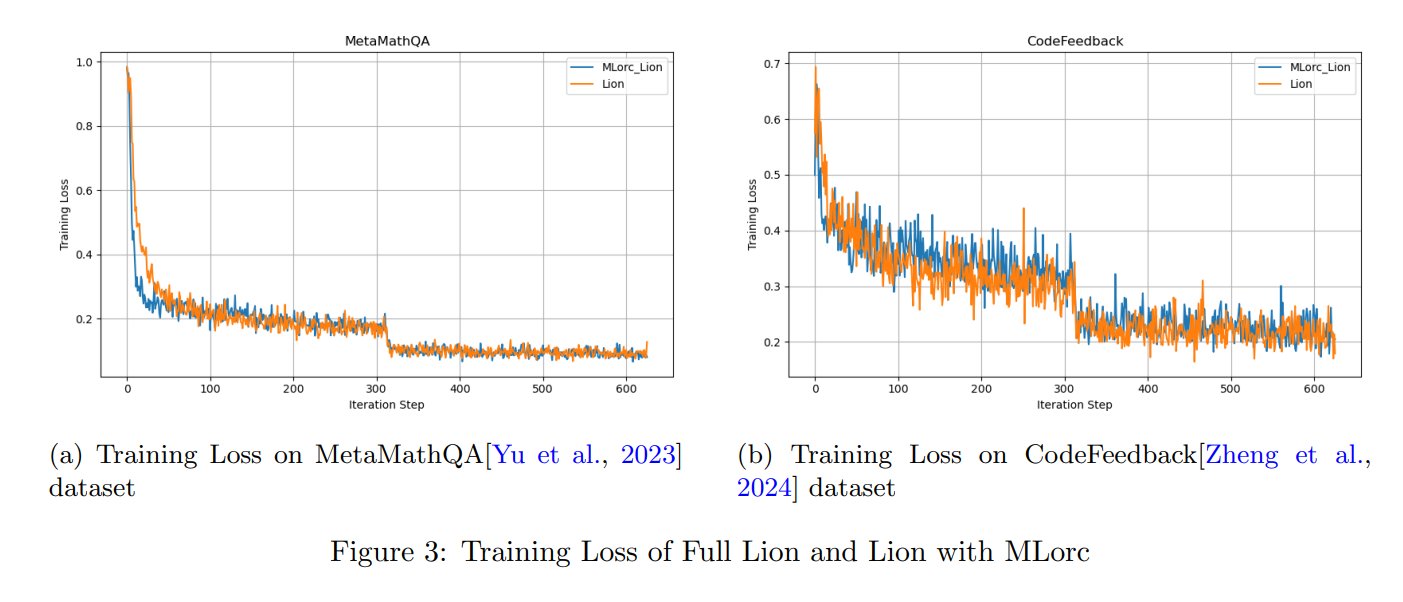

- State-of-the-Art Performance: Empirically, MLorc consistently outperforms other memory-efficient methods such as LoRA and GaLore in validation accuracy. It can even match or exceed the performance of full fine-tuning with a small rank (e.g., rank=4).

- Memory and Time Efficiency: It maintains memory efficiency comparable to LoRA while demonstrating improved time efficiency compared to GaLore.

- Theoretical Guarantees: MLorc offers a theoretical guarantee for convergence, matching the convergence rate of the original Lion optimizer under reasonable assumptions.

Included MLorc-Integrated Optimizers

This repository integrates MLorc into six momentum-based optimizers, each with additional enhancements for improved performance and stability:

- `MLorc_AdamW`: AdamW with MLorc compression, featuring:
  - Fused Backward Pass
  - Gradient Descent with Adaptive Momentum Scaling (Grams): for better performance and faster convergence.
  - `atan2` smoothing & scaling: a robust replacement for `eps` (no tuning required) that also incorporates gradient clipping. If enabled, `eps` is ignored.
  - OrthoGrad: prevents "naïve loss minimization" (NLM), which can lead to overfitting, by removing the gradient component parallel to the weight, thus improving generalization.

- `MLorc_Prodigy`:
  - Same features as `MLorc_AdamW`.
  - Incorporates MLorc with the Prodigy adaptive method and its associated features.

- `MLorc_Lion`: Lion with MLorc compression, featuring:
  - Fused Backward Pass
  - OrthoGrad
  - `use_cautious`: use the cautious variant of Lion.
  - `clip_threshold`: clips the gradient norm per parameter at this threshold, as proposed in the paper "Lions and Muons: Optimization via Stochastic Frank-Wolfe", to make Lion more stable (default: 5.0, from the paper).

- `MLorc_DAdapt_Lion`:
  - Same features as `MLorc_Lion`.
  - Integrates MLorc with the DAdaptation adaptive method for Lion and includes the `slice_p` feature (from Prodigy).

- `MLorc_Adopt`:
  - Same features as `MLorc_AdamW`.
  - Implements the method of "ADOPT: Modified Adam Can Converge with Any β_2 with the Optimal Rate".

- `MLorc_CAME`:
  - Same features as `MLorc_AdamW`.
  - The first moment (momentum) is compressed using the low-rank factorization from MLorc, while the adaptive pre-conditioning and confidence-guided updates are from "CAME: Confidence-guided Adaptive Memory Efficient Optimization".
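Two of the gradient transforms named in the list, OrthoGrad and per-parameter gradient-norm clipping, are simple enough to sketch in plain Python. This is an illustration under stated assumptions rather than the package's code: the function names are hypothetical, tensors are represented as flat lists of floats, and any rescaling that an actual OrthoGrad implementation may apply after the projection is left out.

```python
import math


def orthograd(weight, grad, eps=1e-30):
    """Remove from `grad` its component parallel to `weight`.

    Both arguments are flat lists of floats (an assumption for this sketch).
    """
    w_dot_g = sum(w * g for w, g in zip(weight, grad))
    w_dot_w = sum(w * w for w in weight)
    scale = w_dot_g / (w_dot_w + eps)  # projection coefficient of grad onto weight
    return [g - scale * w for w, g in zip(weight, grad)]


def clip_grad_norm(grad, clip_threshold=5.0):
    """Rescale one parameter's gradient so its L2 norm is at most `clip_threshold`."""
    norm = math.sqrt(sum(g * g for g in grad))
    if norm <= clip_threshold:
        return list(grad)
    factor = clip_threshold / norm
    return [g * factor for g in grad]


# Usage: the projected gradient is orthogonal to the weight.
weight = [1.0, 0.0]
grad = [3.0, 4.0]
projected = orthograd(weight, grad)      # the component along `weight` is removed
clipped = clip_grad_norm([6.0, 8.0])     # norm 10 is scaled down to norm 5
```

In this sketch the clipping is applied to each parameter's gradient separately, so one large gradient does not shrink the updates of every other parameter.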


Download files


File details

Details for the file MLorc_optim-0.1.3.tar.gz.

File metadata

- Download URL: MLorc_optim-0.1.3.tar.gz

- Upload date:

- Size: 19.3 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.10.11

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | `4d4a87f7407137f6f0083b3b1917baf8f7b7799bd9cc4282c222ba3e140a599c` |
| MD5 | `dd1e339593f36464739e8c9168e8e334` |
| BLAKE2b-256 | `1ba0e8b8ab54a38a48937a6410e2f27ab9ac5140ee7e07386223d408036041e9` |

File details

Details for the file MLorc_optim-0.1.3-py3-none-any.whl.

File metadata

- Download URL: MLorc_optim-0.1.3-py3-none-any.whl

- Upload date:

- Size: 29.4 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.10.11

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | `3b8d38423d23822ddcf21c87ea64e561bc6cd1f7cfe7f40c6b20576f83f2d783` |
| MD5 | `391029421054b48f6e4e34ddbe3df49d` |
| BLAKE2b-256 | `9530df261c52fdfa1ee0c61ee97ea827a9b8ce380a3309648899a6659431f4dc` |