Intelligence Begins with Memory


MemOS: Memory Operating System for AI Agents

MemOS is an open-source Agent Memory framework that empowers AI agents with long-term memory, personality consistency, and contextual recall. It enables agents to remember past interactions, learn over time, and build evolving identities across sessions.

Designed for AI companions, role-playing NPCs, and multi-agent systems, MemOS provides a unified API for memory representation, retrieval, and update — making it the foundation for next-generation memory-augmented AI agents.

🆕 MemOS 2.0 introduces a knowledge base system, multi-modal memory (images and documents), tool memory for Agent optimization, a memory feedback mechanism for precise control, and an enterprise-grade architecture with a Redis Streams scheduler and advanced DB optimizations.

Get Free API: Try API

MemOS is an operating system for Large Language Models (LLMs) that enhances them with long-term memory capabilities. It allows LLMs to store, retrieve, and manage information, enabling more context-aware, consistent, and personalized interactions. MemOS 2.0 features comprehensive knowledge base management, multi-modal memory support, tool memory for Agent enhancement, and enterprise-grade architecture optimizations.

- Website: https://memos.openmem.net/

- Documentation: https://memos-docs.openmem.net/home/overview/

- API Reference: https://memos-docs.openmem.net/api-reference/configure-memos/

- Source Code: https://github.com/MemTensor/MemOS

📰 News

Stay up to date with the latest MemOS announcements, releases, and community highlights.

- 2025-12-24 - 🎉 MemOS v2.0: Stardust (星尘) Release: Major upgrade featuring comprehensive Knowledge Base system with automatic document/URL parsing and cross-project sharing; Memory feedback mechanism for correction and precise deletion; Multi-modal memory supporting images and charts; Tool Memory to enhance Agent planning; Full architecture upgrade with Redis Streams multi-level queue scheduler and DB optimizations; New streaming/non-streaming Chat interfaces; Complete MCP upgrade; Lightweight deployment modes (quick & full).

- 2025-11-06 - 🎉 MemOS v1.1.3 (Async Memory & Preference): Millisecond-level async memory add (supports plaintext memory and preference memory); enhanced BM25, graph recall, and mixture search; full results and code for LoCoMo, LongMemEval, PersonaMem, and PrefEval released.

- 2025-10-30 - 🎉 MemOS v1.1.2 (API & MCP Update): API architecture overhaul and full MCP (Model Context Protocol) support — enabling models, IDEs, and agents to read/write external memory directly.

- 2025-09-10 - 🎉 MemOS v1.0.1 (Group Q&A Bot): Group Q&A bot based on MemOS Cube, updated KV-Cache performance comparison data across different GPU deployment schemes, optimized test benchmarks and statistics, Reranker sorting for plaintext memory, mitigated hallucination issues in plaintext memory, and Playground version updates.

- 2025-08-07 - 🎉 MemOS v1.0.0 (MemCube Release): First MemCube with word game demo, LongMemEval evaluation, BochaAISearchRetriever integration, NebulaGraph support, enhanced search capabilities, and official Playground launch.

- 2025-07-29 - 🎉 MemOS v0.2.2 (Nebula Update): Internet search + Nebula DB integration, refactored memory scheduler, KV Cache stress tests, MemCube Cookbook release (CN/EN), and 4b/1.7b/0.6b memory ops models.

- 2025-07-21 - 🎉 MemOS v0.2.1 (Neo Release): Lightweight Neo version with plaintext + KV Cache functionality, Docker/multi-tenant support, MCP expansion, and new Cookbook/Mud game examples.

- 2025-07-11 - 🎉 MemOS v0.2.0 (Cross-Platform): Added doc search/bilingual UI, MemReader-4B (local deploy), full Win/Mac/Linux support, and playground end-to-end connection.

- 2025-07-07 - 🎉 MemOS 1.0 (Stellar) Preview Release: A SOTA Memory OS for LLMs is now open-sourced.

- 2025-07-04 - 🎉 MemOS Paper Released: MemOS: A Memory OS for AI System was published on arXiv.

- 2025-05-28 - 🎉 Short Paper Uploaded: MemOS: An Operating System for Memory-Augmented Generation (MAG) in Large Language Models was published on arXiv.

- 2024-07-04 - 🎉 Memory3 Model Released at WAIC 2024: The new memory-layered architecture model was unveiled at the 2024 World Artificial Intelligence Conference.

- 2024-07-01 - 🎉 Memory3 Paper Released: Memory3: Language Modeling with Explicit Memory introduces the new approach to structured memory in LLMs.

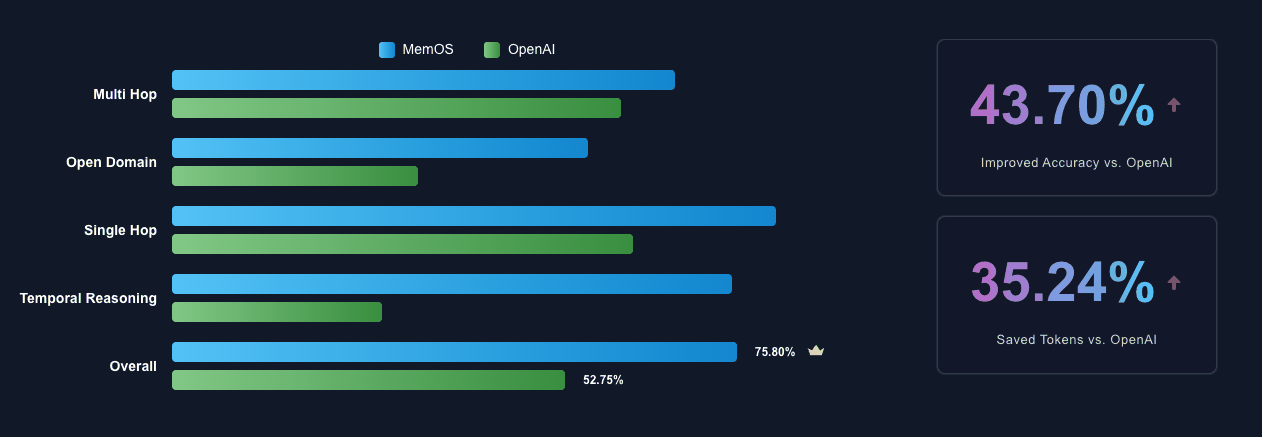

📈 Performance Benchmark

MemOS demonstrates significant improvements over baseline memory solutions in multiple memory tasks, showcasing its capabilities in information extraction, temporal and cross-session reasoning, and personalized preference responses.

| Model | LoCoMo | LongMemEval | PrefEval-10 | PersonaMem |
|---|---|---|---|---|
| GPT-4o-mini | 52.75 | 55.4 | 2.8 | 43.46 |
| MemOS | 75.80 | 77.80 | 71.90 | 61.17 |
| Improvement | +43.70% | +40.43% | +2468% | +40.75% |
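The Improvement row reads as the relative gain of MemOS over the GPT-4o-mini baseline. Below is a minimal sketch of that arithmetic, using only the scores listed in the table; the function name `relative_improvement` is chosen here for illustration and is not part of MemOS.

```python
# Scores copied from the benchmark table (baseline = GPT-4o-mini row).
baseline = {"LoCoMo": 52.75, "LongMemEval": 55.4, "PrefEval-10": 2.8, "PersonaMem": 43.46}
memos = {"LoCoMo": 75.80, "LongMemEval": 77.80, "PrefEval-10": 71.90, "PersonaMem": 61.17}


def relative_improvement(base: float, new: float) -> float:
    """Return the percentage gain of `new` over `base`."""
    return (new - base) / base * 100.0


# Print the gain for every benchmark column.
for name in baseline:
    gain = relative_improvement(baseline[name], memos[name])
    print(f"{name}: +{gain:.2f}%")
```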

Detailed Evaluation Results

- We use gpt-4o-mini as the processing and judging LLM and bge-m3 as the embedding model in the MemOS evaluation.

- The evaluation was conducted under conditions that align the various settings as closely as possible. Reproduce the results with our scripts in the evaluation directory.

- Check the full search and response details on Hugging Face: https://huggingface.co/datasets/MemTensor/MemOS_eval_result.

💡 MemOS outperforms all other methods (Mem0, Zep, Memobase, SuperMemory, and others) across all benchmarks!

✨ Key Features

- 🧠 Memory-Augmented Generation (MAG): Provides a unified API for memory operations, integrating with LLMs to enhance chat and reasoning with contextual memory retrieval.

- 📦 Modular Memory Architecture (MemCube): A flexible and modular architecture that allows for easy integration and management of different memory types.

- 💾 Multiple Memory Types:

    - Textual Memory: For storing and retrieving unstructured or structured text knowledge.

    - Activation Memory: Caches key-value pairs (KVCacheMemory) to accelerate LLM inference and context reuse.

    - Parametric Memory: Stores model adaptation parameters (e.g., LoRA weights).

    - Tool Memory 🆕: Records Agent tool call trajectories and experiences to improve planning capabilities.

- 📚 Knowledge Base System 🆕: Build multi-dimensional knowledge bases with automatic document/URL parsing, splitting, and cross-project sharing capabilities.

- 🔧 Memory Controllability 🆕:

    - Feedback Mechanism: Use the add_feedback API to correct, supplement, or replace existing memories with natural language.

    - Precise Deletion: Delete specific memories by User ID or Memory ID via API or MCP tools.

- 👁️ Multi-Modal Support 🆕: Support for image understanding and memory, including chart parsing in documents.

- ⚡ Advanced Architecture:

    - DB Optimization: Enhanced connection management and batch insertion for high-concurrency scenarios.

    - Advanced Retrieval: Custom tag and info field filtering with complex logical operations.

    - Redis Streams Scheduler: Multi-level queue architecture with intelligent orchestration for fair multi-tenant scheduling.

    - Stream & Non-Stream Chat: Ready-to-use streaming and non-streaming chat interfaces.

- 🔌 Extensible: Easily extend and customize memory modules, data sources, and LLM integrations.

- 🏂 Lightweight Deployment 🆕: Support for quick mode and complete mode deployment options.
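To make the controllability and retrieval features above more concrete, here is a toy, self-contained sketch of a memory store that supports feedback-style correction, deletion by memory ID or user ID, and tag filtering with AND/OR logic. Everything in it (`ToyMemoryStore`, its method names, and the tag model) is hypothetical and written for illustration only; it is not the MemOS API, and the real feedback mechanism works from natural-language input rather than a direct overwrite.

```python
from itertools import count
from typing import Dict, Iterable, List


class ToyMemoryStore:
    """Hypothetical in-memory store used only for illustration (not MemOS code)."""

    def __init__(self) -> None:
        self._items: Dict[int, dict] = {}
        self._next_id = count(1)

    def add(self, user_id: str, text: str, tags: Iterable[str] = ()) -> int:
        """Store one memory and return its memory ID."""
        memory_id = next(self._next_id)
        self._items[memory_id] = {"user_id": user_id, "text": text, "tags": set(tags)}
        return memory_id

    def replace(self, memory_id: int, new_text: str) -> None:
        """Feedback-style correction: overwrite the text of one memory."""
        self._items[memory_id]["text"] = new_text

    def delete_by_memory_id(self, memory_id: int) -> bool:
        """Delete a single memory; return True if it existed."""
        return self._items.pop(memory_id, None) is not None

    def delete_by_user_id(self, user_id: str) -> int:
        """Delete every memory of one user; return how many were removed."""
        doomed = [mid for mid, item in self._items.items() if item["user_id"] == user_id]
        for mid in doomed:
            del self._items[mid]
        return len(doomed)

    def search(
        self,
        user_id: str,
        all_tags: Iterable[str] = (),
        any_tags: Iterable[str] = (),
    ) -> List[str]:
        """Return a user's texts, requiring every tag in all_tags and, if given, at least one of any_tags."""
        required, optional = set(all_tags), set(any_tags)
        hits = []
        for item in self._items.values():
            if item["user_id"] != user_id:
                continue
            if not required <= item["tags"]:
                continue
            if optional and not (optional & item["tags"]):
                continue
            hits.append(item["text"])
        return hits


store = ToyMemoryStore()
football = store.add("alice", "Likes football", tags=["hobby", "sports"])
city = store.add("alice", "Lives in Paris", tags=["profile"])
store.add("bob", "Likes chess", tags=["hobby"])

store.replace(city, "Lives in Berlin")        # correct an outdated memory
print(store.search("alice", all_tags=["hobby"]))
store.delete_by_memory_id(football)           # precise deletion by memory ID
store.delete_by_user_id("bob")                # precise deletion by user ID
print(store.search("alice"))
```

Keeping deletion keyed on explicit IDs, rather than on text matching, is what makes removal precise: one call affects exactly one memory or exactly one user's memories.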

🚀 Getting Started

⭐️ MemOS online API

The easiest way to use MemOS. Equip your agent with memory in minutes!

Sign up and get started on the MemOS dashboard.

Self-Hosted Server

- Get the repository.

    git clone https://github.com/MemTensor/MemOS.git
    cd MemOS
    pip install -r ./docker/requirements.txt

- Configure docker/.env.example and copy it to MemOS/.env.

- Start the service.

    uvicorn memos.api.server_api:app --host 0.0.0.0 --port 8001 --workers 8
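Once the service is started, a quick way to confirm that something is listening on the configured port is a plain TCP connection check. The sketch below uses only the Python standard library and assumes the host and port from the command above (127.0.0.1 and 8001); it does not call any MemOS endpoint, since none are described here, and the helper name `is_port_open` is made up for this example.

```python
import socket


def is_port_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to (host, port) succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


if __name__ == "__main__":
    # Port 8001 matches the --port value passed to uvicorn.
    print("MemOS server reachable:", is_port_open("127.0.0.1", 8001))
```

An open port only shows that a process is accepting connections; it does not prove the API itself is healthy.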

Local SDK

Here's a quick example of how to create a MemCube, load it from a directory, access its memories, and save it.

    from memos.mem_cube.general import GeneralMemCube

    # Initialize a MemCube from a local directory
    mem_cube = GeneralMemCube.init_from_dir("examples/data/mem_cube_2")

    # Access and print all memories
    print("--- Textual Memories ---")
    for item in mem_cube.text_mem.get_all():
        print(item)

    print("\n--- Activation Memories ---")
    for item in mem_cube.act_mem.get_all():
        print(item)

    # Save the MemCube to a new directory
    mem_cube.dump("tmp/mem_cube")

MOS (Memory Operating System) is a higher-level orchestration layer that manages multiple MemCubes and provides a unified API for memory operations. Here's a quick example of how to use MOS:

    from memos.configs.mem_os import MOSConfig
    from memos.mem_os.main import MOS

    # Initialize MOS from a JSON config file
    mos_config = MOSConfig.from_json_file("examples/data/config/simple_memos_config.json")
    memory = MOS(mos_config)

    # Create a user
    user_id = "b41a34d5-5cae-4b46-8c49-d03794d206f5"
    memory.create_user(user_id=user_id)

    # Register a MemCube for the user
    memory.register_mem_cube("examples/data/mem_cube_2", user_id=user_id)

    # Add memories for the user
    memory.add(
        messages=[
            {"role": "user", "content": "I like playing football."},
            {"role": "assistant", "content": "I like playing football too."},
        ],
        user_id=user_id,
    )

    # Later, retrieve memories for the user
    retrieved_memories = memory.search(query="What do you like?", user_id=user_id)

    # Expected output: text_memories containing "I like playing football";
    # the result also carries activation and parametric memories
    print(f"text_memories: {retrieved_memories['text_mem']}")

For more detailed examples, please check out the examples directory.
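Retrieved memories are typically placed into the prompt that is sent to the LLM. The sketch below shows one possible way to do that, assuming the memories have already been reduced to plain strings; the exact structure returned by `memory.search` is not shown here, so that extraction step is left out. The helper `build_prompt` and the prompt wording are illustrative choices, not part of MemOS.

```python
from typing import List


def build_prompt(query: str, memory_texts: List[str], max_memories: int = 5) -> str:
    """Prepend up to `max_memories` retrieved memory snippets to a user query."""
    selected = memory_texts[:max_memories]
    if not selected:
        # Nothing was retrieved, so the query is passed through unchanged.
        return query
    bullet_list = "\n".join(f"- {text}" for text in selected)
    return f"Known facts about the user:\n{bullet_list}\n\nUser question: {query}"


# Hypothetical memory text, matching the message added in the MOS example.
print(build_prompt("What do you like?", ["I like playing football."]))
```

Capping the number of snippets keeps the prompt within a predictable size as the memory store grows.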

📦 Installation

Install via pip

pip install MemoryOS
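After installing, the installed version can be checked from Python with the standard library. This is a small sketch that assumes the distribution name is MemoryOS, as in the pip command above; the helper name `installed_version` is made up for this example.

```python
from importlib import metadata
from typing import Optional


def installed_version(dist_name: str = "MemoryOS") -> Optional[str]:
    """Return the installed version of a distribution, or None if it is not installed."""
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None


print("MemoryOS version:", installed_version() or "not installed")
```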

Optional Dependencies

MemOS provides several optional dependency groups for different features. You can install them based on your needs.

| Feature | Package Name |
|---|---|
| Tree Memory | MemoryOS[tree-mem] |
| Memory Reader | MemoryOS[mem-reader] |
| Memory Scheduler | MemoryOS[mem-scheduler] |

Example installation commands:

    pip install "MemoryOS[tree-mem]"
    pip install "MemoryOS[tree-mem,mem-reader]"
    pip install "MemoryOS[mem-scheduler]"
    pip install "MemoryOS[tree-mem,mem-reader,mem-scheduler]"

External Dependencies

Ollama Support

To use MemOS with Ollama, first install the Ollama CLI:

curl -fsSL https://ollama.com/install.sh | sh

Transformers Support

To use functionalities based on the transformers library, ensure you have PyTorch installed (CUDA version recommended for GPU acceleration).
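The two external dependencies above can be checked before running MemOS. The following sketch reports whether the `ollama` executable is on the PATH and whether PyTorch is importable, with or without CUDA. It assumes the Ollama CLI is installed under the name `ollama`; the function name `check_external_dependencies` is illustrative.

```python
import importlib.util
import shutil


def check_external_dependencies() -> dict:
    """Report availability of the Ollama CLI and of PyTorch (missing, cpu, or cuda)."""
    report = {"ollama_cli": shutil.which("ollama") is not None}
    if importlib.util.find_spec("torch") is None:
        # PyTorch is not installed in this environment.
        report["torch"] = "missing"
    else:
        import torch  # imported lazily so the check also works without PyTorch

        report["torch"] = "cuda" if torch.cuda.is_available() else "cpu"
    return report


print(check_external_dependencies())
```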

Download Examples

To download example code, data and configurations, run the following command:

memos download_examples

💬 Community & Support

Join our community to ask questions, share your projects, and connect with other developers.

- GitHub Issues: Report bugs or request features in our GitHub Issues.

- GitHub Pull Requests: Contribute code improvements via Pull Requests.

- GitHub Discussions: Participate in our GitHub Discussions to ask questions or share ideas.

- Discord: Join our Discord Server.

- WeChat: Scan the QR code to join our WeChat group.

📜 Citation

> [!NOTE]
> We publicly released the Short Version on May 28, 2025, making it the earliest work to propose the concept of a Memory Operating System for LLMs.

If you use MemOS in your research, we would appreciate citations to our papers.

@article{li2025memos_long,
  title={MemOS: A Memory OS for AI System},
  author={Li, Zhiyu and Song, Shichao and Xi, Chenyang and Wang, Hanyu and Tang, Chen and Niu, Simin and Chen, Ding and Yang, Jiawei and Li, Chunyu and Yu, Qingchen and Zhao, Jihao and Wang, Yezhaohui and Liu, Peng and Lin, Zehao and Wang, Pengyuan and Huo, Jiahao and Chen, Tianyi and Chen, Kai and Li, Kehang and Tao, Zhen and Ren, Junpeng and Lai, Huayi and Wu, Hao and Tang, Bo and Wang, Zhenren and Fan, Zhaoxin and Zhang, Ningyu and Zhang, Linfeng and Yan, Junchi and Yang, Mingchuan and Xu, Tong and Xu, Wei and Chen, Huajun and Wang, Haofeng and Yang, Hongkang and Zhang, Wentao and Xu, Zhi-Qin John and Chen, Siheng and Xiong, Feiyu},
  journal={arXiv preprint arXiv:2507.03724},
  year={2025},
  url={https://arxiv.org/abs/2507.03724}
}

@article{li2025memos_short,
  title={MemOS: An Operating System for Memory-Augmented Generation (MAG) in Large Language Models},
  author={Li, Zhiyu and Song, Shichao and Wang, Hanyu and Niu, Simin and Chen, Ding and Yang, Jiawei and Xi, Chenyang and Lai, Huayi and Zhao, Jihao and Wang, Yezhaohui and others},
  journal={arXiv preprint arXiv:2505.22101},
  year={2025},
  url={https://arxiv.org/abs/2505.22101}
}

@article{yang2024memory3,
  author = {Yang, Hongkang and Zehao, Lin and Wenjin, Wang and Wu, Hao and Zhiyu, Li and Tang, Bo and Wenqiang, Wei and Wang, Jinbo and Zeyun, Tang and Song, Shichao and Xi, Chenyang and Yu, Yu and Kai, Chen and Xiong, Feiyu and Tang, Linpeng and Weinan, E},
  title = {Memory$^3$: Language Modeling with Explicit Memory},
  journal = {Journal of Machine Learning},
  year = {2024},
  volume = {3},
  number = {3},
  pages = {300--346},
  issn = {2790-2048},
  doi = {10.4208/jml.240708},
  url = {https://global-sci.com/article/91443/memory3-language-modeling-with-explicit-memory}
}

🙌 Contributing

We welcome contributions from the community! Please read our contribution guidelines to get started.

📄 License

MemOS is licensed under the Apache 2.0 License.


File details

Details for the file memoryos-2.0.0.tar.gz.

File metadata

- Download URL: memoryos-2.0.0.tar.gz

- Upload date:

- Size: 596.4 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.9.25

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | b797daf676990c347e2fa520bd0ca99e8f78ab2a20c0ed3bda1ce8b0d2bc337e |
| MD5 | 19057d99f8d78f24e3b3881bac7890f3 |
| BLAKE2b-256 | b3782fe5b9974ccc2b6a6542f2f6ebbd58bef8df6991ead69712d89d761df5d0 |

File details

Details for the file memoryos-2.0.0-py3-none-any.whl.

File metadata

- Download URL: memoryos-2.0.0-py3-none-any.whl

- Upload date:

- Size: 763.6 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.9.25

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | ca89cf62d67ea8910d7ad11846c7568bd7d8e94cd545bedad8e5d3682a6a97c3 |
| MD5 | 9431c66e41bfa58ae8a032ce5cd7852c |
| BLAKE2b-256 | 37f66e39f86c26aa8f44e72118b3aa10da3c85517d9822c58a9b7a6bf407cb9c |
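A downloaded file can be checked against the SHA256 digests listed above. The sketch below streams the file through `hashlib` and compares the result with the digest for that file name. The helper names `sha256_of` and `verify` are illustrative, and the file is assumed to be saved under its original name.

```python
import hashlib
from pathlib import Path

# SHA256 digests copied from the file hash tables above.
EXPECTED_SHA256 = {
    "memoryos-2.0.0.tar.gz": "b797daf676990c347e2fa520bd0ca99e8f78ab2a20c0ed3bda1ce8b0d2bc337e",
    "memoryos-2.0.0-py3-none-any.whl": "ca89cf62d67ea8910d7ad11846c7568bd7d8e94cd545bedad8e5d3682a6a97c3",
}


def sha256_of(path, chunk_size: int = 1 << 20) -> str:
    """Compute the SHA256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify(path) -> bool:
    """Return True only if the file name is known and its digest matches."""
    name = Path(path).name
    return name in EXPECTED_SHA256 and sha256_of(path) == EXPECTED_SHA256[name]
```

For example, `verify("memoryos-2.0.0.tar.gz")` returns True only when the local file matches the published digest.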

MemOS 2.0: 星尘(Stardust)
