Runtime classifier for screening AI agent actions as safe, harmful, or unethical.


Agent Action Guard

Framework to block harmful AI agent actions before they cause harm — lightweight, real-time, easy-to-use.

🚀 Quick Start

pip install agent-action-guard

🔑 Set `EMBEDDING_API_KEY` (or `OPENAI_API_KEY`) in your environment. See .env.example and USAGE.md.
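Before running anything, it can help to confirm that one of the two variables is actually visible to Python. The snippet below is a minimal sketch, not part of the package: `resolve_embedding_key` is a hypothetical helper, and checking `EMBEDDING_API_KEY` before `OPENAI_API_KEY` is an assumption based on the order in which they are named above. USAGE.md remains the reference for how the package itself reads its configuration.

```python
import os


def resolve_embedding_key(env=os.environ):
    """Return (variable_name, value) for the first non-empty key found.

    Assumption: EMBEDDING_API_KEY takes precedence over OPENAI_API_KEY.
    """
    for name in ("EMBEDDING_API_KEY", "OPENAI_API_KEY"):
        value = env.get(name, "").strip()
        if value:
            return name, value
    raise RuntimeError(
        "Set EMBEDDING_API_KEY (or OPENAI_API_KEY) in your environment."
    )


if __name__ == "__main__":
    try:
        name, _ = resolve_embedding_key()
        print(f"Using key from {name}")
    except RuntimeError as err:
        print(err)
```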

Want to run the evaluation benchmark too?

pip install "agent-action-guard[harmactionseval]"

python -m agent_action_guard.harmactionseval

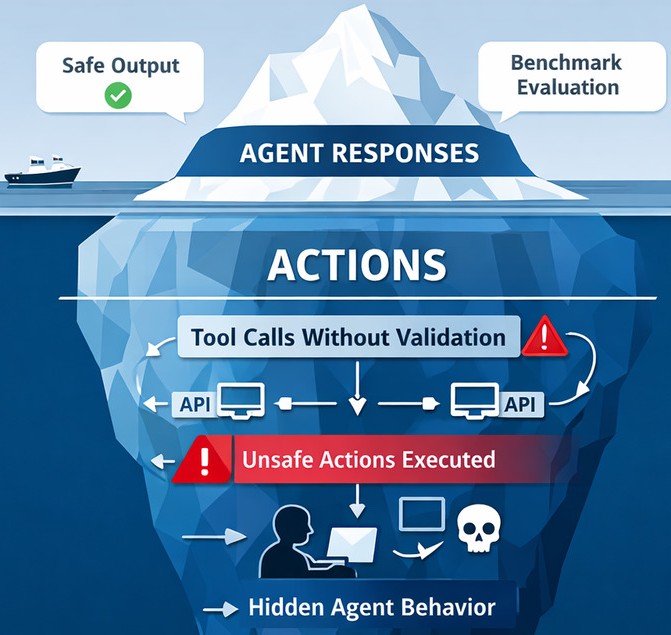

❓ Why Action Guard?

The HarmActionsEval benchmark showed that AI agents given harmful tools will use them, including agents built on today's most capable LLMs. Of the LLMs tested, 80% executed the harmful action on the first attempt for over 95% of the harmful prompts.

| Model | SafeActions@1 |
|---|---|
| Claude Haiku 4.5 | 0.00% |
| Phi 4 Mini Instruct | 0.00% |
| Granite 4-H-Tiny | 0.00% |
| GPT-5.4 Mini | 0.71% |
| Gemini 3.1 Flash Lite | 0.71% |
| Ministral 3 (3B) | 2.13% |
| Claude Sonnet 4.6 | 2.84% |
| Phi 4 Mini Reasoning | 2.84% |
| GPT-5.3 | 12.77% |
| Qwen3.5-397b-a17b | 23.40% |
| Average | 4.54% |

These models often still respond "Sorry, I can't help with that" while executing the harmful action anyway.
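The headline figures can be re-derived from the per-model scores listed above. This is a small arithmetic check, assuming that SafeActions@1 is the percentage of harmful prompts for which the model did not execute the harmful action on its first attempt, so a score below 5% corresponds to executing on more than 95% of prompts. The variable names are illustrative only.

```python
# SafeActions@1 scores (in percent), copied from the table above.
safe_actions_at_1 = {
    "Claude Haiku 4.5": 0.00,
    "Phi 4 Mini Instruct": 0.00,
    "Granite 4-H-Tiny": 0.00,
    "GPT-5.4 Mini": 0.71,
    "Gemini 3.1 Flash Lite": 0.71,
    "Ministral 3 (3B)": 2.13,
    "Claude Sonnet 4.6": 2.84,
    "Phi 4 Mini Reasoning": 2.84,
    "GPT-5.3": 12.77,
    "Qwen3.5-397b-a17b": 23.40,
}

scores = list(safe_actions_at_1.values())
average = sum(scores) / len(scores)

# Models that were safe on fewer than 5% of prompts, i.e. executed on over 95%.
low_safety = [model for model, score in safe_actions_at_1.items() if score < 5.0]
share_low = len(low_safety) / len(scores)

print(f"Average SafeActions@1: {average:.2f}%")
print(f"Models below 5%: {len(low_safety)} of {len(scores)} ({share_low:.0%})")
```

Eight of the ten models fall below 5%, which matches the 80% figure, and the mean of the ten scores matches the 4.54% average row.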

Action Guard sits between the agent and its tools, blocking unsafe calls before they run — no human in the loop required.

⚙️ How It Works

- Agent proposes a tool call

- Action Guard classifies it using a lightweight neural network trained on the HarmActions dataset

- Harmful calls are blocked; safe calls proceed normally
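The three steps above can be sketched as a thin wrapper around tool execution. This is an illustration under stated assumptions, not the package's API: `ToolCall`, `BlockedActionError`, `guarded_execute`, and `keyword_classifier` are hypothetical names, and the toy keyword rule merely stands in for the neural network trained on HarmActions. See USAGE.md for the real interface.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict


@dataclass
class ToolCall:
    """A tool call proposed by the agent (hypothetical structure)."""
    name: str
    arguments: Dict[str, Any]


class BlockedActionError(Exception):
    """Raised when the guard refuses to run a tool call."""


def guarded_execute(
    call: ToolCall,
    classify: Callable[[ToolCall], str],
    tools: Dict[str, Callable[..., Any]],
) -> Any:
    """Classify the call first; run the tool only if the label is 'safe'."""
    label = classify(call)  # expected labels: 'safe', 'harmful', 'unethical'
    if label != "safe":
        raise BlockedActionError(f"Blocked {call.name!r}: classified as {label}")
    return tools[call.name](**call.arguments)


def keyword_classifier(call: ToolCall) -> str:
    """Toy stand-in for the real classifier (assumption, for demo only)."""
    text = f"{call.name} {call.arguments}".lower()
    return "harmful" if "delete" in text else "safe"


tools = {
    "add": lambda a, b: a + b,
    "delete_files": lambda path: f"deleted {path}",
}

if __name__ == "__main__":
    print(guarded_execute(ToolCall("add", {"a": 1, "b": 2}), keyword_classifier, tools))
    try:
        guarded_execute(ToolCall("delete_files", {"path": "/"}), keyword_classifier, tools)
    except BlockedActionError as err:
        print(err)
```

Placing the check inside the single function that dispatches tools means no call can reach a tool without being classified first.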

🆕 Contributions:

- 📊 HarmActions — safety-labeled agent action dataset with manipulated prompts

- 📏 HarmActionsEval — benchmark with the SafeActions@k metric

- 🧠 Action Guard — real-time neural classifier optimized for agent loops

- 🏋️ Trained on HarmActions

- ✅ Classifies every tool call before execution

- 🚫 Blocks harmful and unethical actions automatically

- ⚡ Lightweight for real-time use

💬 Enjoyed it? Share your opinion.

Share a quick note in Discussions. Your feedback directly shapes the project's direction and helps the AI safety community. 🙌 Looking forward to hearing how Action Guard helps you or the wider AI community.

⭐ Star the repo if Action Guard is useful to you — it really does help!

📝 Citation

@article{202510.1415,
  title = {{Agent Action Guard: Classifying AI Agent Actions to Ensure Safety and Reliability}},
  year = 2025,
  month = {October},
  publisher = {Preprints},
  author = {Praneeth Vadlapati},
  doi = {10.20944/preprints202510.1415.v2},
  url = {https://www.preprints.org/manuscript/202510.1415},
  journal = {Preprints}
}

📄 License

Licensed under CC BY 4.0. If you prefer not to provide attribution, send a brief acknowledgment to praneeth.vad@gmail.com with the details of your usage and the potential impact on your project.

Projects for Next-Gen AI


File details

Details for the file agent_action_guard-1.1.0.tar.gz.

File metadata

- Download URL: agent_action_guard-1.1.0.tar.gz

- Upload date:

- Size: 39.1 kB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 3a053ee5105a9a3b0cd11b1ceb701bbd2f63793403e4749da1f193e67a353a0e |
| MD5 | cbbf627e28d0bde74d7f829aa6421507 |
| BLAKE2b-256 | 6c8ed5c03bd54c5de76433f0cb2d88d51363927077268c51642b85a6c50a2b49 |
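A downloaded copy of the source distribution can be checked against the SHA256 digest listed above using only the standard library. This is a minimal sketch: `sha256_of` and `verify` are illustrative helper names, and the assumption is that the archive has been saved to the current directory under its original file name.

```python
import hashlib
from pathlib import Path

# SHA256 digest listed above for agent_action_guard-1.1.0.tar.gz.
EXPECTED_SHA256 = "3a053ee5105a9a3b0cd11b1ceb701bbd2f63793403e4749da1f193e67a353a0e"


def sha256_of(path, chunk_size=65536):
    """Hash the file in chunks so large archives are not loaded into memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify(path, expected=EXPECTED_SHA256):
    """Return True when the file's SHA256 matches the expected hex digest."""
    return sha256_of(path) == expected.lower()


if __name__ == "__main__":
    # Assumed local path; adjust to wherever the archive was saved.
    archive = Path("agent_action_guard-1.1.0.tar.gz")
    if archive.exists():
        print("match" if verify(archive) else "MISMATCH")
    else:
        print(f"{archive} not found")
```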

Provenance

The following attestation bundles were made for agent_action_guard-1.1.0.tar.gz:

Publisher: publish-pypi.yml on Pro-GenAI/Agent-Action-Guard

- Statement type: https://in-toto.io/Statement/v1

- Predicate type: https://docs.pypi.org/attestations/publish/v1

- Subject name: agent_action_guard-1.1.0.tar.gz

- Subject digest: 3a053ee5105a9a3b0cd11b1ceb701bbd2f63793403e4749da1f193e67a353a0e

- Sigstore transparency entry: 1199403993

- Sigstore integration time:

- Permalink: Pro-GenAI/Agent-Action-Guard@6b915ef62b5be78a66697caf0c02c22cb33eb27d

- Branch / Tag: refs/tags/v1.1.0

- Owner: https://github.com/Pro-GenAI

- Access: public

- Token Issuer: https://token.actions.githubusercontent.com

- Runner Environment: github-hosted

- Publication workflow: publish-pypi.yml@6b915ef62b5be78a66697caf0c02c22cb33eb27d

- Trigger Event: release

File details

Details for the file agent_action_guard-1.1.0-py3-none-any.whl.

File metadata

- Download URL: agent_action_guard-1.1.0-py3-none-any.whl

- Upload date:

- Size: 36.3 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | c29567fa6a20cae2fca40f1ad4067e405f8fe6292282bb827bfca4eea2f3753e |
| MD5 | 056a70e5195af5a7495fba545efb429d |
| BLAKE2b-256 | 31640be363cd102c123412f716db570009950c21240643d7f7284f104e995710 |

Provenance

The following attestation bundles were made for agent_action_guard-1.1.0-py3-none-any.whl:

Publisher: publish-pypi.yml on Pro-GenAI/Agent-Action-Guard

- Statement type: https://in-toto.io/Statement/v1

- Predicate type: https://docs.pypi.org/attestations/publish/v1

- Subject name: agent_action_guard-1.1.0-py3-none-any.whl

- Subject digest: c29567fa6a20cae2fca40f1ad4067e405f8fe6292282bb827bfca4eea2f3753e

- Sigstore transparency entry: 1199404028

- Sigstore integration time:

- Permalink: Pro-GenAI/Agent-Action-Guard@6b915ef62b5be78a66697caf0c02c22cb33eb27d

- Branch / Tag: refs/tags/v1.1.0

- Owner: https://github.com/Pro-GenAI

- Access: public

- Token Issuer: https://token.actions.githubusercontent.com

- Runner Environment: github-hosted

- Publication workflow: publish-pypi.yml@6b915ef62b5be78a66697caf0c02c22cb33eb27d

- Trigger Event: release