IBM Analog Hardware Acceleration Kit

Description

IBM Analog Hardware Acceleration Kit is an open source Python toolkit for exploring and using the capabilities of in-memory computing devices in the context of artificial intelligence.

Warning: This library is currently in beta and under active development. Please be mindful of potential issues and keep an eye out for improvements, new features and bug fixes in upcoming versions.

The toolkit consists of two main components:

PyTorch integration

A series of primitives and features that allow using the toolkit within PyTorch:

- Analog neural network modules (fully connected layer, 1d/2d/3d convolution layers, LSTM layer, sequential container).

- Analog training using the torch training workflow:

  - Analog torch optimizers (SGD).

  - Analog in-situ training using customizable device models and algorithms (Tiki-Taka).

- Analog inference using the torch inference workflow:

  - State-of-the-art statistical model of a phase-change memory (PCM) array, calibrated on hardware measurements from a chip with 1 million PCM devices.

  - Hardware-aware training with hardware non-idealities and noise included in the forward pass, to make the trained models more robust during inference on analog hardware.
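To illustrate the idea behind hardware-aware training (noise injected into the forward pass while the clean weights receive the update), here is a toy pure-Python sketch for a single linear neuron. It is not how aihwkit implements this feature: the Gaussian noise model scaled by the largest weight magnitude, the function names, and the hyperparameters are all assumptions chosen for illustration.

```python
import random


def predict(w, x):
    """Output of a single linear neuron."""
    return sum(wi * xi for wi, xi in zip(w, x))


def mse(w, data):
    """Mean squared error of clean (noise-free) weights on the data."""
    return sum((predict(w, x) - y) ** 2 for x, y in data) / len(data)


def perturb(w, rel_std, rng):
    """Hypothetical noise model: Gaussian noise with std rel_std * max|w|."""
    scale = rel_std * max(abs(v) for v in w)
    return [v + rng.gauss(0.0, scale) for v in w]


def train_noise_aware(data, rel_std=0.1, epochs=500, lr=0.5, seed=0):
    """SGD where every forward pass sees perturbed weights."""
    rng = random.Random(seed)
    w = [0.0] * len(data[0][0])
    for _ in range(epochs):
        for x, y in data:
            # Forward pass with noisy weights, mimicking an analog array.
            w_fwd = perturb(w, rel_std, rng)
            err = predict(w_fwd, x) - y
            # The squared-error gradient is applied to the clean weights.
            w = [wi - lr * 2.0 * err * xi for wi, xi in zip(w, x)]
    return w
```

For example, `train_noise_aware([([0.1, 0.2, 0.4, 0.3], 1.0), ([0.2, 0.1, 0.1, 0.3], 0.7)])` fits the two samples despite never seeing the exact weights in the forward pass.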

Analog devices simulator

A high-performance (CUDA-capable) C++ simulator that allows for simulating a wide range of analog devices and crossbar configurations by using abstract functional models of material characteristics with adjustable parameters. Features include:

- Forward pass output-referred noise and device fluctuations, as well as adjustable ADC and DAC discretization and bounds

- Stochastic update pulse trains for rows and columns with finite weight update size per pulse coincidence

- Device-to-device systematic variations, cycle-to-cycle noise and adjustable asymmetry during analog update

- Adjustable device behavior for exploration of material specifications for training and inference

- State-of-the-art dynamic input scaling, bound management, and update management schemes
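The following toy, pure-Python sketch shows what some of the forward-pass and update non-idealities listed above mean for a single crossbar tile. It is not the aihwkit simulator or its API: the function names, the default bounds, bit widths and noise level, and the simplified pulse-coincidence update rule are all assumptions chosen for illustration.

```python
import random


def quantize(v, bound, bits):
    """Clip v to [-bound, bound] and round it to a symmetric uniform grid."""
    step = bound / (2 ** (bits - 1) - 1)
    v = max(-bound, min(bound, v))
    return round(v / step) * step


def analog_forward(weights, x, rng, inp_bound=1.0, out_bound=10.0,
                   dac_bits=7, adc_bits=9, out_noise=0.06):
    """Noisy, discretized y = W x, with W given as a list of rows (outputs x inputs)."""
    # DAC: inputs are clipped to the input range and discretized.
    x_q = [quantize(v, inp_bound, dac_bits) for v in x]
    y = []
    for row in weights:
        acc = sum(w * v for w, v in zip(row, x_q))
        # Output-referred noise: additive Gaussian noise on each analog output.
        acc += rng.gauss(0.0, out_noise)
        # ADC: outputs are clipped to the output bound and discretized.
        y.append(quantize(acc, out_bound, adc_bits))
    return y


def pulsed_update(weights, x, d, rng, lr=0.1, bl=31, dw_min=0.01, w_bound=1.0):
    """In-place stochastic approximation of W += lr * outer(d, x) using pulse trains."""
    # Each pulse fires with probability scale * |value| (capped at 1). A coincidence
    # of a row pulse and a column pulse moves that weight by dw_min, so while no
    # probability is capped and no weight saturates, the expected change is
    # bl * scale**2 * |d_i * x_j| * dw_min = lr * |d_i * x_j|, with the sign of d_i * x_j.
    scale = (lr / (bl * dw_min)) ** 0.5
    for _ in range(bl):
        col_fire = [rng.random() < min(1.0, scale * abs(v)) for v in x]
        row_fire = [rng.random() < min(1.0, scale * abs(v)) for v in d]
        for i, di in enumerate(d):
            if not row_fire[i]:
                continue
            for j, xj in enumerate(x):
                if col_fire[j]:
                    sign = 1.0 if di * xj > 0 else -1.0
                    # Device weights saturate at +/- w_bound.
                    weights[i][j] = max(-w_bound, min(w_bound, weights[i][j] + sign * dw_min))
```

Averaging `pulsed_update` over many trials recovers the intended update `lr * d * x`, while any single call only changes weights in integer multiples of `dw_min`.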

Other features

Along with the two main components, the toolkit includes other functionalities such as:

- A library of device presets that are calibrated to real hardware data and based on models in the literature, along with a configuration that specifies a particular device and optimizer choice.

- A module for executing high-level use cases ("experiments"), such as neural network training with minimal code overhead.

- A utility to automatically convert a downloaded model (e.g., pre-trained) to its equivalent analog model by replacing all linear/conv layers with analog layers (e.g., for convenient hardware-aware training).

- Integration with the AIHW Composer platform, a no-code web experience that allows executing experiments in the cloud.

How to cite?

If you use the IBM Analog Hardware Acceleration Kit in your research, please cite the AICAS21 paper that describes the toolkit:

Malte J. Rasch, Diego Moreda, Tayfun Gokmen, Manuel Le Gallo, Fabio Carta, Cindy Goldberg, Kaoutar El Maghraoui, Abu Sebastian, Vijay Narayanan. "A flexible and fast PyTorch toolkit for simulating training and inference on analog crossbar arrays" (2021 IEEE 3rd International Conference on Artificial Intelligence Circuits and Systems)

Usage

Training example

```python
from torch import Tensor
from torch.nn.functional import mse_loss

# Import the aihwkit constructs.
from aihwkit.nn import AnalogLinear
from aihwkit.optim import AnalogSGD

x = Tensor([[0.1, 0.2, 0.4, 0.3], [0.2, 0.1, 0.1, 0.3]])
y = Tensor([[1.0, 0.5], [0.7, 0.3]])

# Define a network using a single Analog layer.
model = AnalogLinear(4, 2)

# Use the analog-aware stochastic gradient descent optimizer.
opt = AnalogSGD(model.parameters(), lr=0.1)
opt.regroup_param_groups(model)

# Train the network.
for epoch in range(10):
    opt.zero_grad()  # Clear gradients left over from the previous iteration.
    pred = model(x)
    loss = mse_loss(pred, y)
    loss.backward()
    opt.step()
    print('Loss error: {:.16f}'.format(loss.item()))
```

You can find more examples in the examples/ folder of the project, and more information about the library in the documentation. Please note that the examples have some additional dependencies; you can install them via:

pip install -r requirements-examples.txt

You can find interactive notebooks and tutorials in the notebooks/ directory.

Further reading

We also recommend taking a look at the tutorial article that describes the usage of the toolkit:

Manuel Le Gallo, Corey Lammie, Julian Buechel, Fabio Carta, Omobayode Fagbohungbe, Charles Mackin, Hsinyu Tsai, Vijay Narayanan, Abu Sebastian, Kaoutar El Maghraoui, Malte J. Rasch. "Using the IBM Analog In-Memory Hardware Acceleration Kit for Neural Network Training and Inference" (APL Machine Learning Journal:1(4) 2023)

What is Analog AI?

In traditional hardware architecture, computation and memory are siloed in different locations. Information is moved back and forth between computation and memory units every time an operation is performed, creating a limitation called the von Neumann bottleneck.

Analog AI delivers radical performance improvements by combining compute and memory in a single device, eliminating the von Neumann bottleneck. By leveraging the physical properties of memory devices, computation happens at the same place where the data is stored. Such in-memory computing hardware provides the speed and energy efficiency needed for next-generation AI workloads.

What is an in-memory computing chip?

An in-memory computing chip typically consists of multiple arrays of memory devices that communicate with each other. Many types of memory devices such as phase-change memory (PCM), resistive random-access memory (RRAM), and Flash memory can be used for in-memory computing.

Memory devices can store synaptic weights in their analog charge (Flash) or conductance (PCM, RRAM) state. When these devices are arranged in a crossbar configuration, they can perform an analog matrix-vector multiplication in a single time step, exploiting their analog storage capability and Kirchhoff's circuit laws. You can learn more about it in our online demo.
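As a minimal illustration of the physics described above (a sketch, not aihwkit code), the snippet below computes the column currents of an ideal crossbar: each device contributes a current G·V by Ohm's law, and Kirchhoff's current law sums these currents along each column. The function name and example values are hypothetical, and wire resistance, device noise and converters are ignored.

```python
def crossbar_currents(conductances, voltages):
    """Column currents of an ideal crossbar: I[j] = sum_i G[i][j] * V[i].

    conductances[i][j] is the device at row i, column j; voltages[i] drives row i.
    """
    n_cols = len(conductances[0])
    currents = [0.0] * n_cols
    for g_row, v in zip(conductances, voltages):
        for j, g in enumerate(g_row):
            # Ohm's law gives the device current; Kirchhoff's current law
            # adds it to the total current collected on column j.
            currents[j] += g * v
    return currents
```

With conductances in microsiemens and voltages in volts, the result is in microamperes: `crossbar_currents([[1.0, 2.0], [3.0, 4.0]], [0.1, 0.2])` gives approximately `[0.7, 1.0]`, i.e. one full matrix-vector product per read.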

In deep learning, data propagation through multiple layers of a neural network involves a sequence of matrix multiplications, as each layer can be represented as a matrix of synaptic weights. The devices are arranged in multiple crossbar arrays, creating an artificial neural network where all matrix multiplications are performed in-place in an analog manner. This structure allows deep learning models to run with reduced energy consumption.
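Because conductances are non-negative, a common scheme maps each signed weight onto a pair of devices and subtracts the two resulting currents. The sketch below assumes that differential scheme, a maximum conductance `g_max` of 25 (arbitrary units) and a ReLU computed digitally between layers; none of these choices are taken from the text above, and all names are hypothetical. In the ideal, noise-free case it reproduces the digital result exactly.

```python
def to_conductance_pair(weights, g_max):
    """Map signed weights onto two non-negative conductance arrays (G_plus, G_minus)."""
    w_max = max(abs(w) for row in weights for w in row)
    scale = g_max / w_max if w_max > 0 else 1.0
    g_plus = [[scale * max(w, 0.0) for w in row] for row in weights]
    g_minus = [[scale * max(-w, 0.0) for w in row] for row in weights]
    return g_plus, g_minus, scale


def matvec(m, x):
    """Ideal product of a matrix (list of rows) with a vector."""
    return [sum(a * b for a, b in zip(row, x)) for row in m]


def analog_layer(weights, x, g_max=25.0):
    """One layer: difference of two crossbar outputs, rescaled to weight units."""
    g_plus, g_minus, scale = to_conductance_pair(weights, g_max)
    i_plus = matvec(g_plus, x)
    i_minus = matvec(g_minus, x)
    return [(p - m) / scale for p, m in zip(i_plus, i_minus)]


def relu(v):
    return [max(0.0, a) for a in v]


def two_layer_net(w1, w2, x):
    # Each layer's weights live in their own pair of crossbar arrays; the
    # nonlinearity between them is assumed to be computed digitally.
    return analog_layer(w2, relu(analog_layer(w1, x)))
```

For instance, `two_layer_net([[0.5, -1.0], [2.0, 0.25]], [[1.0, -0.5]], [1.0, 2.0])` returns `[-1.25]`, the same value a digital implementation gives.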

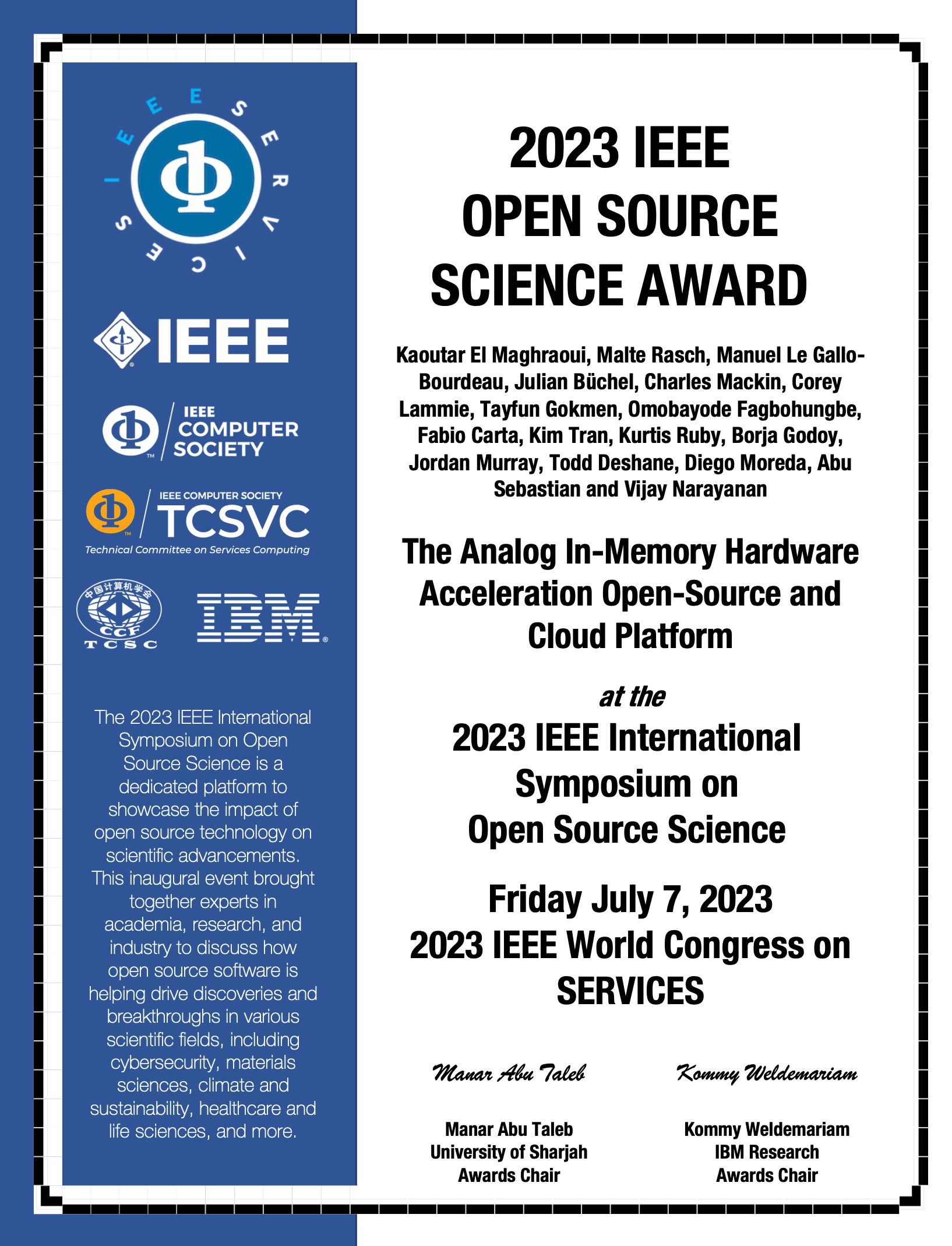

Awards and Media Mentions

- IBM Research blog: [Open-sourcing analog AI simulation](https://research.ibm.com/blog/analog-ai-for-efficient-computing)

- We are proud to share that the AIHWKIT and the companion cloud composer received the IEEE OPEN SOURCE SCIENCE award in 2023.

Installation

Installing from PyPI

The preferred way to install this package is by using the Python package index:

pip install aihwkit

Conda-based Installation

There is a conda package for aihwkit available in conda-forge. It can be installed in a conda environment running on Linux, or on WSL on a Windows system.

- CPU:

  conda install -c conda-forge aihwkit

- GPU:

  conda install -c conda-forge aihwkit-gpu

If you encounter any issues during download or want to compile the package for your environment, please take a look at the advanced installation guide. That section describes the additional libraries and tools required for compiling the sources using a build system based on cmake.

Docker Installation

For GPU support, you can also build a Docker container following the CUDA Dockerfile instructions. You can then run a GPU-enabled Docker container using the following command from your project directory:

docker run --rm -it --gpus all -v $(pwd):$HOME --name aihwkit aihwkit:cuda bash

Authors

IBM Research has developed IBM Analog Hardware Acceleration Kit, with Malte Rasch, Diego Moreda, Fabio Carta, Julian Büchel, Corey Lammie, Charles Mackin, Kim Tran, Tayfun Gokmen, Manuel Le Gallo-Bourdeau, and Kaoutar El Maghraoui as the initial core authors, along with many contributors.

You can contact us by opening a new issue in the repository or, alternatively, at the aihwkit@us.ibm.com email address.

License

This project is licensed under the MIT License.

Download files

File details

Details for the file aihwkit-1.1.0.tar.gz.

File metadata

- Download URL: aihwkit-1.1.0.tar.gz

- Upload date:

- Size: 644.9 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.12.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 10315a8bfde7e35772a3ae0082fc0340f69b8ab61854dee7b81b1f0e82da0544 |
| MD5 | b4668a2f9a00a34539bc7c4e0f89dd61 |
| BLAKE2b-256 | a5e71f1ba5d9317e418d51c90f75ea3d1e1c1003ee87f95b31d2885c07799bb5 |
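To check that a downloaded file matches the SHA256 digest published for it, one option is Python's standard `hashlib` module. This is a small sketch, not part of aihwkit; the helper names are hypothetical, and the file is read in chunks so large wheels do not need to fit in memory.

```python
import hashlib


def sha256_of_file(path, chunk_size=1 << 20):
    """Return the hex SHA256 digest of a file, reading it in 1 MiB chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_download(path, expected_sha256):
    """True if the file's SHA256 digest equals the expected hex string."""
    return sha256_of_file(path) == expected_sha256.strip().lower()
```

For the source distribution, `verify_download("aihwkit-1.1.0.tar.gz", "10315a8bfde7e35772a3ae0082fc0340f69b8ab61854dee7b81b1f0e82da0544")` should return `True` for an intact download.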

File details

Details for the file aihwkit-1.1.0-cp312-cp312-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl.

File metadata

- Download URL: aihwkit-1.1.0-cp312-cp312-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl

- Upload date:

- Size: 13.7 MB

- Tags: CPython 3.12, manylinux: glibc 2.27+ x86-64, manylinux: glibc 2.28+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.12.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 99073e3f70fef3875bfeb7ea068e8c6df45cf99516514f135563246b2d3b4537 |
| MD5 | 4a45ef9fdbc720ba033bcd9d7b425114 |
| BLAKE2b-256 | 0403ad7674fa693d96e9d3a3650d5be794276b9cc6e4c070f38e6b80cba7bcec |

File details

Details for the file aihwkit-1.1.0-cp312-cp312-macosx_26_0_arm64.whl.

File metadata

- Download URL: aihwkit-1.1.0-cp312-cp312-macosx_26_0_arm64.whl

- Upload date:

- Size: 1.3 MB

- Tags: CPython 3.12, macOS 26.0+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.12.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 4357cdd9ec90167939feef0319d2dbf619bcb824166bd57b11cd7099a03265ad |
| MD5 | 6e2ae908df09a1af34711b97003b4324 |
| BLAKE2b-256 | 4e14caea95780be2c5bf4f39691b3e6c49792381618cc84b6e8c086acc07ad99 |

File details

Details for the file aihwkit-1.1.0-cp311-cp311-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl.

File metadata

- Download URL: aihwkit-1.1.0-cp311-cp311-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl

- Upload date:

- Size: 13.7 MB

- Tags: CPython 3.11, manylinux: glibc 2.27+ x86-64, manylinux: glibc 2.28+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.12.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | b7eb748c8b847a4241cad1d1b08996da18d31068bcb0ad3ac1b2f3b9a3d4ea5b |
| MD5 | 8815d1e73c52253d8b994e709ba7884e |
| BLAKE2b-256 | d05565fede589bdc53b4bb0b279b77c1ec4c7a846c2ca68c281a7a6c98380f40 |

File details

Details for the file aihwkit-1.1.0-cp311-cp311-macosx_26_0_arm64.whl.

File metadata

- Download URL: aihwkit-1.1.0-cp311-cp311-macosx_26_0_arm64.whl

- Upload date:

- Size: 1.3 MB

- Tags: CPython 3.11, macOS 26.0+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.12.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 2fbe825c546c8dee291e429f04292436a9f80df1af2fde9ab9f156ca73e40df6 |
| MD5 | f82dfe7ba06a25fc39f9b2764073865d |
| BLAKE2b-256 | 03080d54fd9b22ca63d094a86fd273a11d6d9d04365d7c1ff7de17803373f49d |

File details

Details for the file aihwkit-1.1.0-cp310-cp310-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl.

File metadata

- Download URL: aihwkit-1.1.0-cp310-cp310-manylinux_2_27_x86_64.manylinux_2_28_x86_64.whl

- Upload date:

- Size: 13.6 MB

- Tags: CPython 3.10, manylinux: glibc 2.27+ x86-64, manylinux: glibc 2.28+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.12.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 7c5757f84c416dce9b0ae46e7c221ad281990c0c4dabb93a3274cbb9da3215ff |
| MD5 | d093aa4dde9807e7970ea0024f4d2ceb |
| BLAKE2b-256 | 2f860ea7ba91ae51043fbfd5e4dd9fde333ae7736f7c162178a6ba63a6094c8a |

File details

Details for the file aihwkit-1.1.0-cp310-cp310-macosx_26_0_arm64.whl.

File metadata

- Download URL: aihwkit-1.1.0-cp310-cp310-macosx_26_0_arm64.whl

- Upload date:

- Size: 1.3 MB

- Tags: CPython 3.10, macOS 26.0+ ARM64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.12.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | b5cb0a665bbd36533f034e395a8e4907bd03d9777db9dcf3cefd64f5db65b3e9 |
| MD5 | 04e421e9fcb49f43fc21c98650975d1c |
| BLAKE2b-256 | 97ec5c7d26f848840cbb101ce9f0b3497c5c498590342fda00b5750bf18491ac |