No project description provided

Project description

Breaking: AIxBlock is going to open-source its full code soon in Q1-2025. Please follow us for more updates. Here is a brief overview of our project.

AIxBlock

An On-Chain Open-Source Platform for Rapid AI Model Development and Productization Using Decentralized Resources with Flexibility and Scalability

🚀 Why AIxBlock?

AIxBlock is the first on-chain, open-source, comprehensive AI development platform designed to enable AI developers and businesses to:

- Develop and Productize AI Models effortlessly and cost-effectively.

- Access Decentralized Marketplaces of compute resources, datasets, pre-trained models, and labelers, saving up to 90% on costs.

- Build and monetize Multi-AI Agent Teams: Create AI teams where each agent has specific roles, tools, and goals, working together to automate any workflows, bringing unprecedented efficiency and scalability to your operations.

- Maintain Full Privacy Control with seamless self-hosting capabilities.

🌟 Features

- End-to-End AI Development Lifecycle: From data crawling, labeling, training, real-time demos, to deployment.

- Supports a Diversity of AI Models and a wide range of data formats: The platform is highly versatile and supports a wide range of model types, including: CV, ASR, NLP, LLM, Generative AI.

- Multi-AI Agent Infrastructure: Build AI teams where each agent has specific roles, tools, and goals. These agents work together seamlessly to automate complex workflows, enabling businesses to streamline operations with efficiency and flexibility.

- Flexibly Customizable: AIxBlock allows users to tailor workflows and features to meet their unique requirements.

- Decentralized Marketplaces:

- Globally distributed, high-end, and on-demand GPU resources.

- Diverse, high-quality decentralized datasets (coming soon).

-

This Decentralized Dataset Pool is A rich collection of data types, including but not limited to: - Thousands of real-world call center audio datasets in multiple languages and accents across various domains and industries (redacted for PII and PHI). - Doctor-patient conversations in English (redacted for sensitive information). - Real-world medical dictation audio in English. - Real-world medical datasets in DICOM format. - Real-world utterances in multiple languages. - Hundreds of thousands of real-world facial recognition datasets representing multiple ethnicities, all collected with consent forms. - Many other unique and high-quality datasets. - The pool is continuously enriched by contributions from new contributors globally. - Decentralized labelers from over 100 countries.

- Decentralized pre-trained models for auto-labeling, fine-tuning, and deployment.

-

- Training DDP (Distributed Data Parallel): One of the core technology backbones of AIxBlock is Distributed Data Parallel (DDP) training. This allows models, including large language models (LLMs), to be trained across multiple nodes, significantly accelerating the training process and reducing computation bottlenecks. As of now, we support both Pytorch and Tensorflow DDP.

- Instant Self-Hosting: Deploy on-premise in minutes with zero complex configurations.

- Monetization Opportunities: Monetize your models, AI agents or idle compute resources with just a few clicks through our integrated marketplaces.

🛠️ Getting Started

- Clone the Repository:

git clone https://github.com/<your-org>/aixblock.git cd aixblock

- Install Dependencies:

pip install -r requirements.txt

- Run the Platform:

python run.py

🤝 How to Contribute

- Check the Issues for open tasks.

- Fork the repository and create your feature branch:

git checkout -b feature/your-feature-name

- Submit a Pull Request with detailed notes.

🎁 Community Contribution Rewards

- Earn points for every meaningful contribution.

- Accumulate points that will be converted into tokens during our Token Generation Event (TGE).

- Post-TGE, receive monthly rewards based on your contribution level.

- Be part of our long-term profit-sharing ecosystem for every single contribution. To foster sustainable growth and reward valuable contributions, we allocate 15% of the total token supply for ecosystem growth. This allocation is divided into two main categories:

- Grants and Funding for Outstanding Projects (35%)

- Open-Source Contributor Rewards (65%)

This section outlines the mechanisms for allocating these tokens, including how contributions are rewarded, thresholds to ensure fairness, and strategies for maintaining reserves.

- Grants and Funding for Outstanding Projects (35%)

We dedicate 35% of the ecosystem growth allocation to fund innovative and impactful projects built on top of our ecosystem. These grants are designed to:

- Support developers creating tools, applications, or integrations that expand the ecosystem’s functionality.

- Encourage research and development of new use cases for the platform.

- Drive education, community growth, and user adoption through hackathons, tutorials, and outreach efforts.

- Open-Source Contributor Rewards (65%)

We allocate 65% of the ecosystem growth tokens to reward contributors for their efforts in maintaining and improving the open-source ecosystem. This ensures that contributors are fairly compensated for their time and expertise while fostering innovation and collaboration.

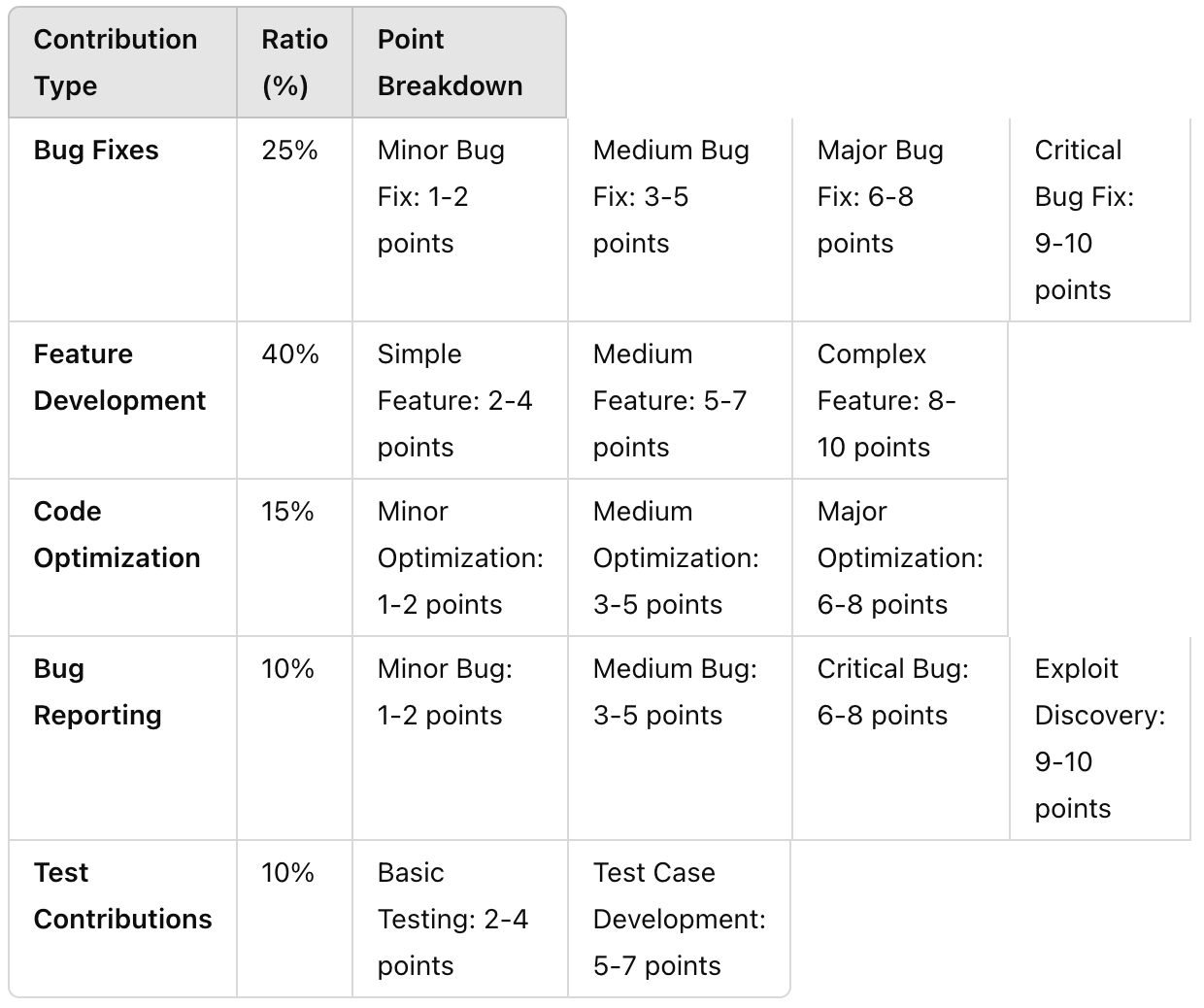

The contributions are categorized and weighted as follows:

- Monthly Token Distribution

Every month, a fixed number of tokens from the open-source contributor pool are unlocked and distributed based on the total points earned by contributors during that period.

Fairness Mechanism: Threshold for Token Distribution

To prevent scenarios where only a small number of contributors claim all tokens with minimal effort, we implement a threshold system:

- Minimum Points Threshold: If the total points earned by all contributors in a given month are less than 500 points, a reduced ratio of 50% of the monthly token allocation will be distributed. The remaining tokens will be added to a community reserve fund.

- Reasoning: A threshold of 500 points ensures that contributions reach a baseline level of activity and effort. Distributing only 50% of the allocation incentivizes more participation in subsequent months while maintaining fairness.

Point-to-Token Calculation:

Tokens are distributed proportionally based on the points earned:

Example Calculation:

- Monthly Token Pool: 10,000 tokens (for detailed monthly vesting, please check our whitepaper

- Total Contributor Points: 1,000 points

- Contributor A’s Points: 100 points → He earns: 100*10000/1000 tokens (equal to 1k tokens)

If the total points were below the threshold (e.g., 400 points):

- Only 50% of the monthly token pool (5,000 tokens) would be distributed.

- Contributor A’s Token Share with reduced distribution. → He earns: 100*5000/400 tokens (equal to 1250 tokens)

💬 Join the Community

- Discord: Join Us

- Twitter: Follow Us

- Telegram: Join the Discussion

- LinkedIn: Follow Us

- YouTube: Watch Our Channel

- Website: https://aixblock.io

- Platform: https://app.aixblock.io

🔖 Keywords

ai, llm, decentralized-ai, generative-ai, asr, computer-vision, nlp, privacy, security, open-source, distributed-computing, ddp, distributed-training, distributed-data-parrallel, self-hosted-ai platform, decentralized-dataset-pool, self-hosted-labeling-tool, self-hosted-ai-deployment, fine-tuning, ai-development-platform, ai-production-platform

⭐ Show Your Support

Give this repository a ⭐ and share it with your network to help grow the AIxBlock community.

Project details

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file aixblock_core-0.0.3.tar.gz.

File metadata

- Download URL: aixblock_core-0.0.3.tar.gz

- Upload date:

- Size: 38.1 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.10.13

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

dc9fff4d5027c9ffc942edd58e5ddd0fdb57d9079bcd353ba24fe5939bd41af7

|

|

| MD5 |

d66df4f68b5cae6a509e1aa47f2d535f

|

|

| BLAKE2b-256 |

ec9d0450fb46a24d10606d51cbb5005cefe9909b95e06757d581cc48331b9bc1

|

File details

Details for the file aixblock_core-0.0.3-py3-none-any.whl.

File metadata

- Download URL: aixblock_core-0.0.3-py3-none-any.whl

- Upload date:

- Size: 38.3 MB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.10.13

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

a8bede1f922afa1423ed3de8f869564b7d9b8c74378f4762718c22ac97c5be1d

|

|

| MD5 |

ce81570f55f27da246f900f847b939c0

|

|

| BLAKE2b-256 |

136081ffd9f6512822ecf0947958980cb58500b49b8eedf87ab98b831d8fcff5

|