Open-source tool for exploring, labeling, and monitoring data for NLP projects.

Project description

✨ Argilla ✨

Open-source data curation platform for LLMs

MLOps for NLP: from data labeling to model monitoring

https://github.com/argilla-io/argilla/assets/1107111/49e28d64-9799-4cac-be49-19dce0f6bd86


🚀 Quickstart

Argilla is an open-source data curation platform for LLMs. With Argilla, anyone can build robust language models through faster data curation that combines human and machine feedback. We provide support for each step in the MLOps cycle, from data labeling to model monitoring.

There are different options to get started:

- Take a look at our quickstart page 🚀
- Start contributing by looking at our contributor guidelines 🫱🏾🫲🏼
- Skip some steps with our cheatsheet 🎼

🎼 Cheatsheet

Python package

    pip install argilla

Deploy Locally

    docker run -d --name argilla -p 6900:6900 argilla/argilla-quickstart:latest

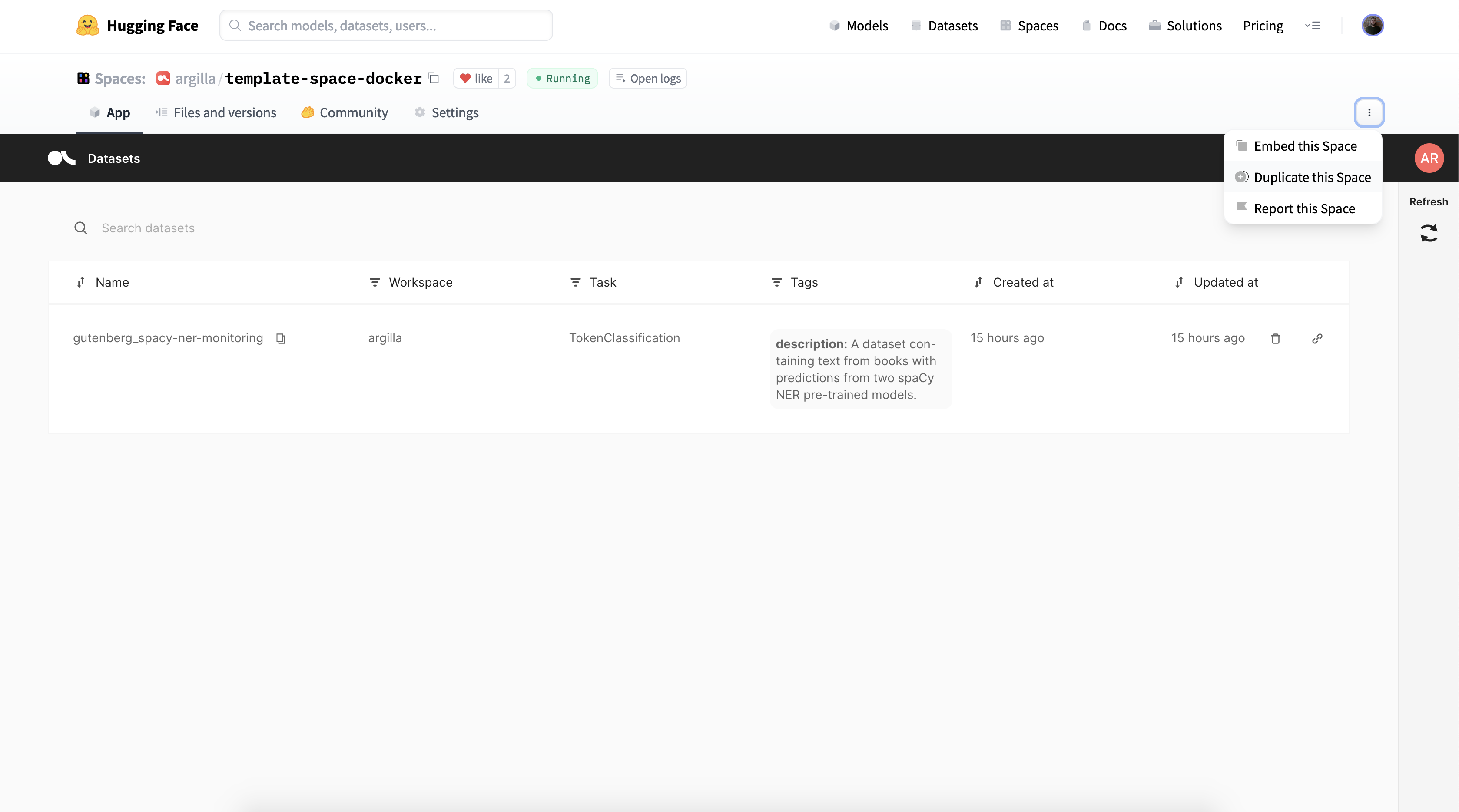

Deploy on Hugging Face Hub

Hugging Face Spaces now offer persistent storage, which Argilla supports from version 1.11.0 onwards, but you need to activate it manually in the Space settings. Without it, unless you are on a paid Space upgrade, the Space is shut down after 48 hours of inactivity and all data is lost. To avoid losing data, we highly recommend enabling the persistent storage layer offered by Hugging Face.

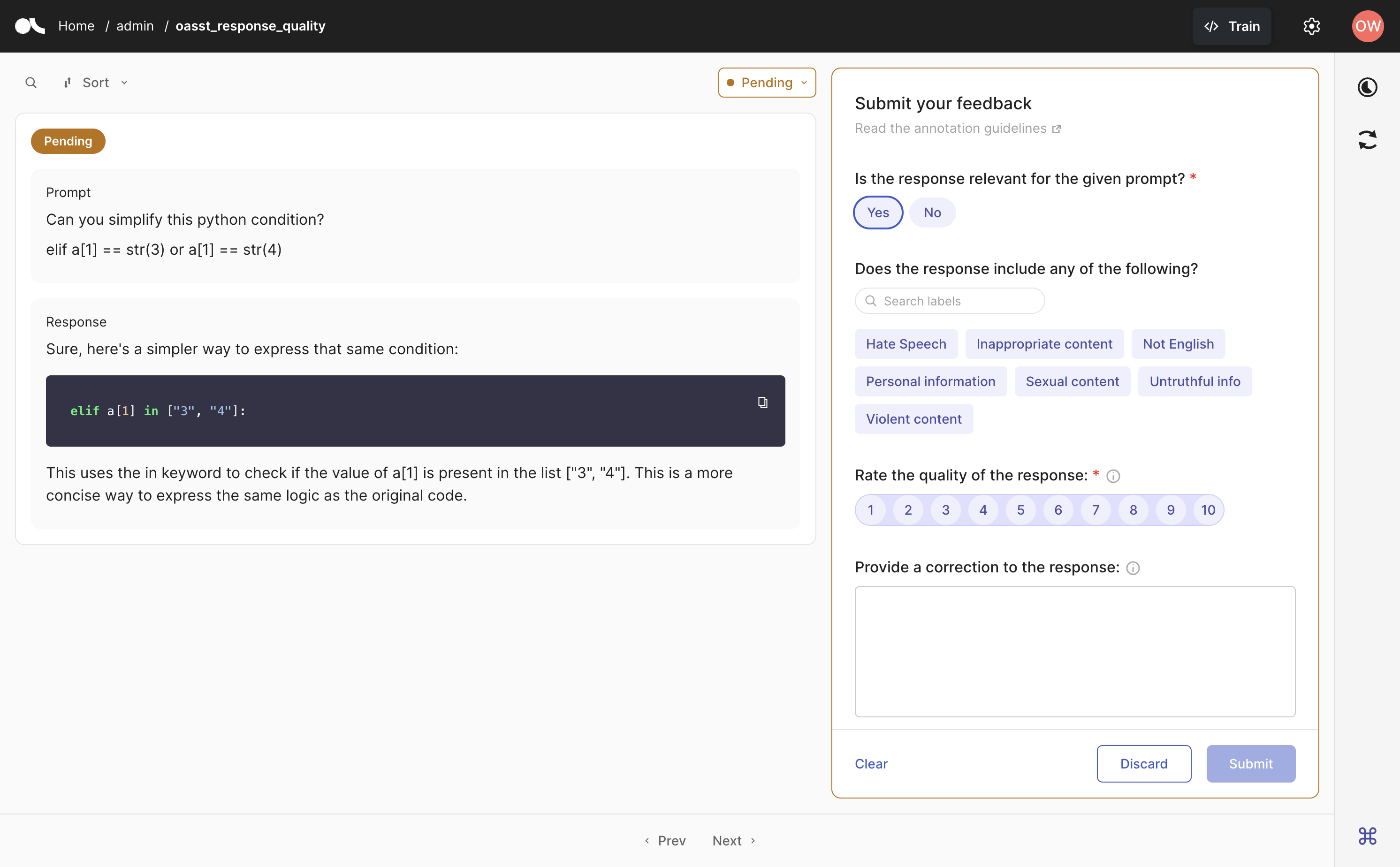

LLM support

    import argilla as rg

    dataset = rg.FeedbackDataset(
        guidelines="Please, read the question carefully and try to answer it as accurately as possible.",
        fields=[
            rg.TextField(name="question"),
            rg.TextField(name="answer"),
        ],
        questions=[
            rg.RatingQuestion(
                name="answer_quality",
                description="How would you rate the quality of the answer?",
                values=[1, 2, 3, 4, 5],
            ),
            rg.TextQuestion(
                name="answer_correction",
                description="If you think the answer is not accurate, please, correct it.",
                required=False,
            ),
        ],
    )

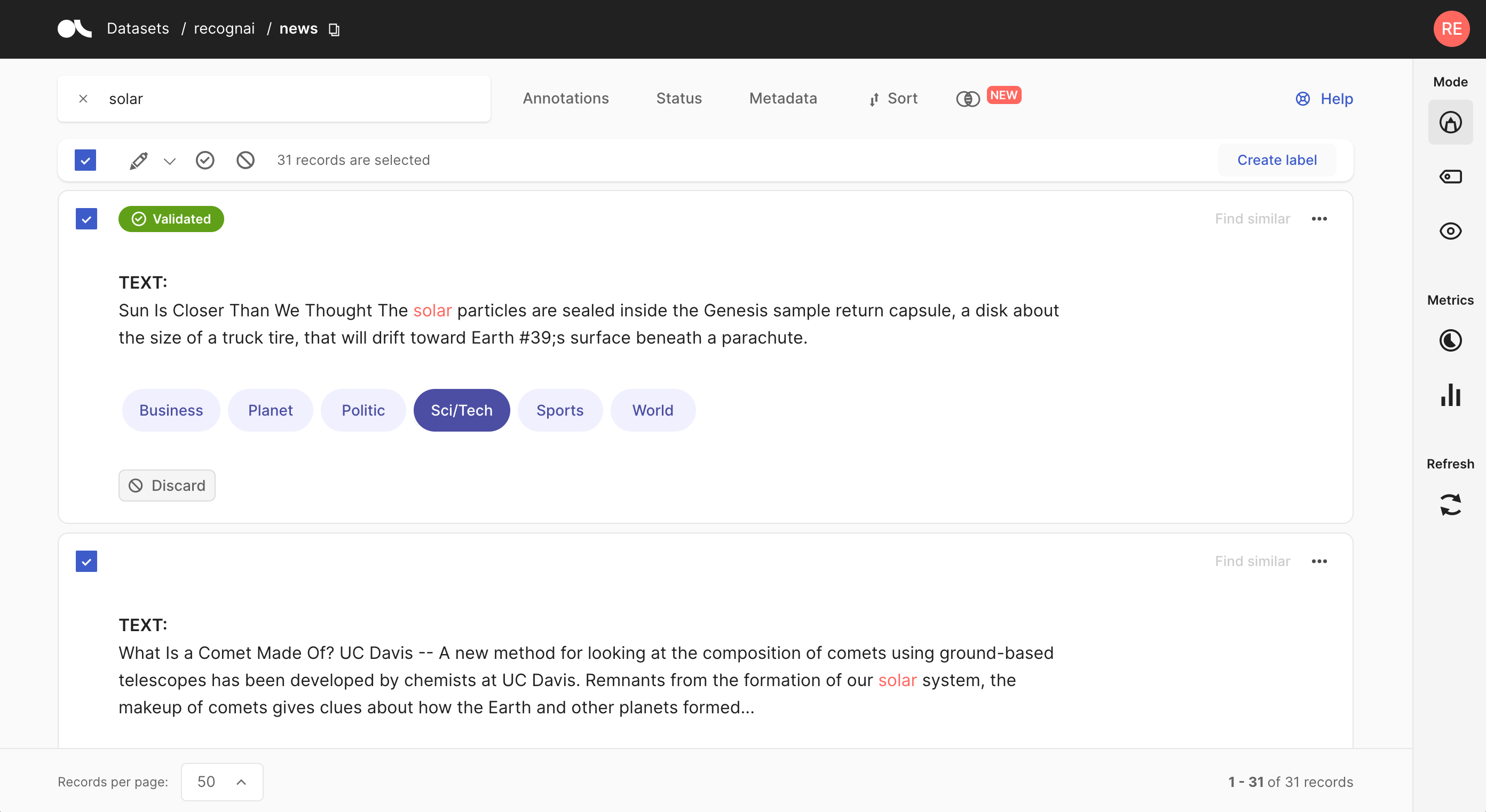
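To make the schema above more concrete, here is a minimal pure-Python sketch of what a well-formed annotator response to those two questions could look like. It does not use the Argilla client: `QUESTIONS`, `validate_response`, and the dictionary layout are hypothetical names chosen for illustration, and treating `answer_quality` as required is an assumption. Only the question names, the rating values, and `required=False` come from the snippet above.

```python
# Hypothetical stand-in for the question schema defined above (not Argilla's API).
# Assumption: the rating question is required; the text question is optional.
QUESTIONS = {
    "answer_quality": {"type": "rating", "values": [1, 2, 3, 4, 5], "required": True},
    "answer_correction": {"type": "text", "required": False},
}


def validate_response(response):
    """Return a list of problems; an empty list means the response is valid."""
    problems = []
    for name, spec in QUESTIONS.items():
        if name not in response:
            if spec["required"]:
                problems.append(f"missing required question: {name}")
            continue
        value = response[name]
        if spec["type"] == "rating" and value not in spec["values"]:
            problems.append(f"{name}: {value!r} is not one of {spec['values']}")
        if spec["type"] == "text" and not isinstance(value, str):
            problems.append(f"{name}: expected text, got {type(value).__name__}")
    # Flag answers to questions the schema does not define.
    for name in response:
        if name not in QUESTIONS:
            problems.append(f"unknown question: {name}")
    return problems


print(validate_response({"answer_quality": 4}))
print(validate_response({"answer_quality": 7, "answer_correction": "Paris"}))
```

The first call reports no problems because the optional correction may be omitted; the second is rejected because 7 is outside the allowed rating values.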

Create Records

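    import argilla as rg

    # Build a record with model predictions (label, score) and a human annotation.
    record = rg.TextClassificationRecord(
        text="Sun Is Closer... a parachute.",
        prediction=[("Sci/Tech", 0.75), ("World", 0.25)],
        annotation="Sci/Tech",
    )

    # Log the record to the "news" dataset.
    rg.log(records=record, name="news")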

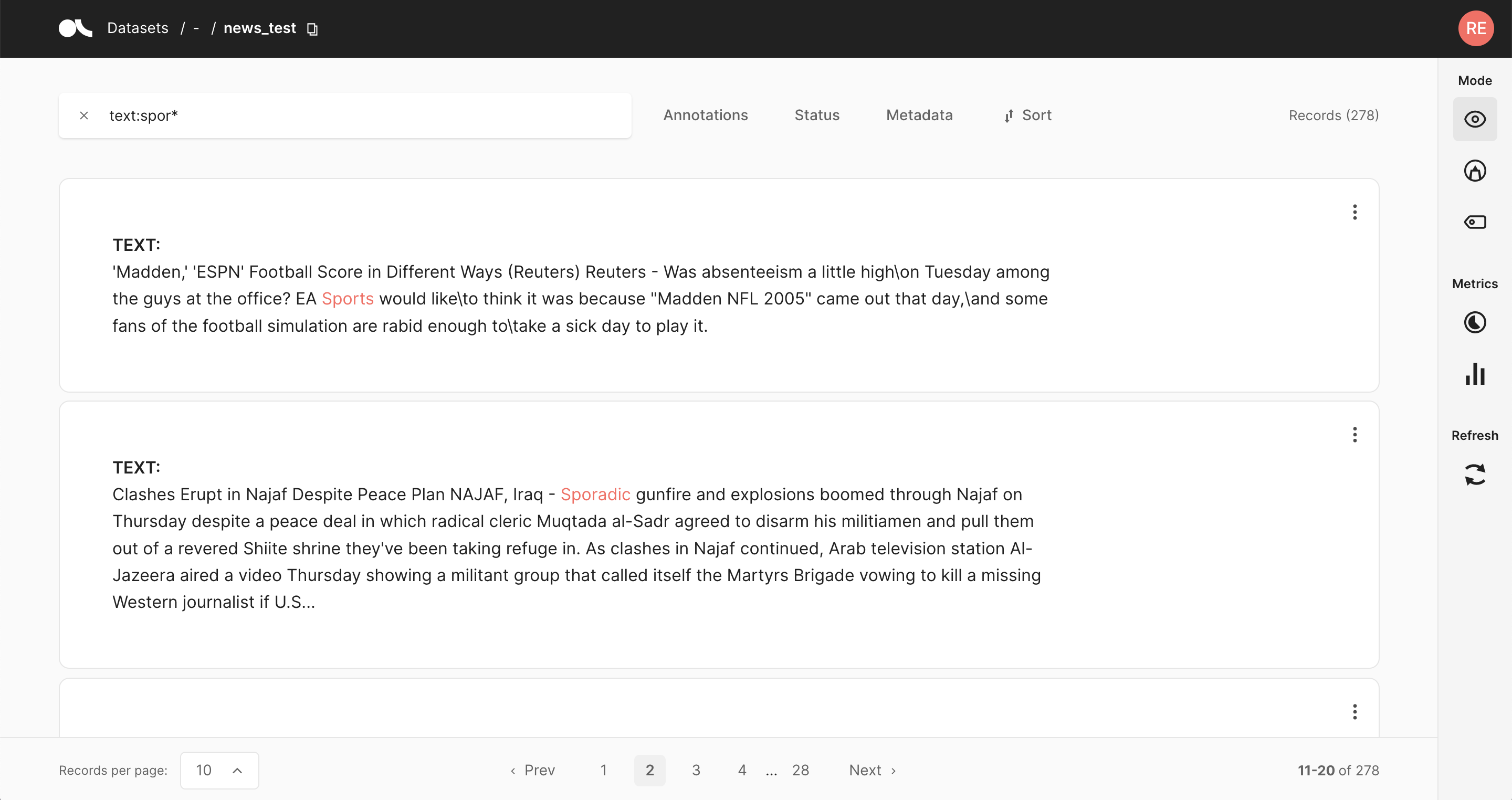
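A record of this kind pairs scored predictions with an optional human annotation, which makes it easy to spot where model and annotator disagree. The following is a minimal sketch of that comparison in plain Python. `SimpleRecord`, `top_prediction`, and `is_disagreement` are hypothetical helpers written for this example, not part of the Argilla client.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class SimpleRecord:
    """Hypothetical stand-in mirroring the fields used above (not Argilla's class)."""
    text: str
    prediction: List[Tuple[str, float]]
    annotation: Optional[str] = None


def top_prediction(record):
    """Return the (label, score) pair with the highest score."""
    return max(record.prediction, key=lambda pair: pair[1])


def is_disagreement(record):
    """True when a human annotation exists and differs from the top predicted label."""
    if record.annotation is None:
        return False
    return top_prediction(record)[0] != record.annotation


record = SimpleRecord(
    text="Sun Is Closer... a parachute.",
    prediction=[("Sci/Tech", 0.75), ("World", 0.25)],
    annotation="Sci/Tech",
)
print(top_prediction(record))   # ('Sci/Tech', 0.75)
print(is_disagreement(record))  # False
```

Records where `is_disagreement` returns `True` are natural candidates for review, since either the model or the label is likely wrong.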

Query datasets

    import argilla as rg

    rg.load(name="news", query="text:spor*")

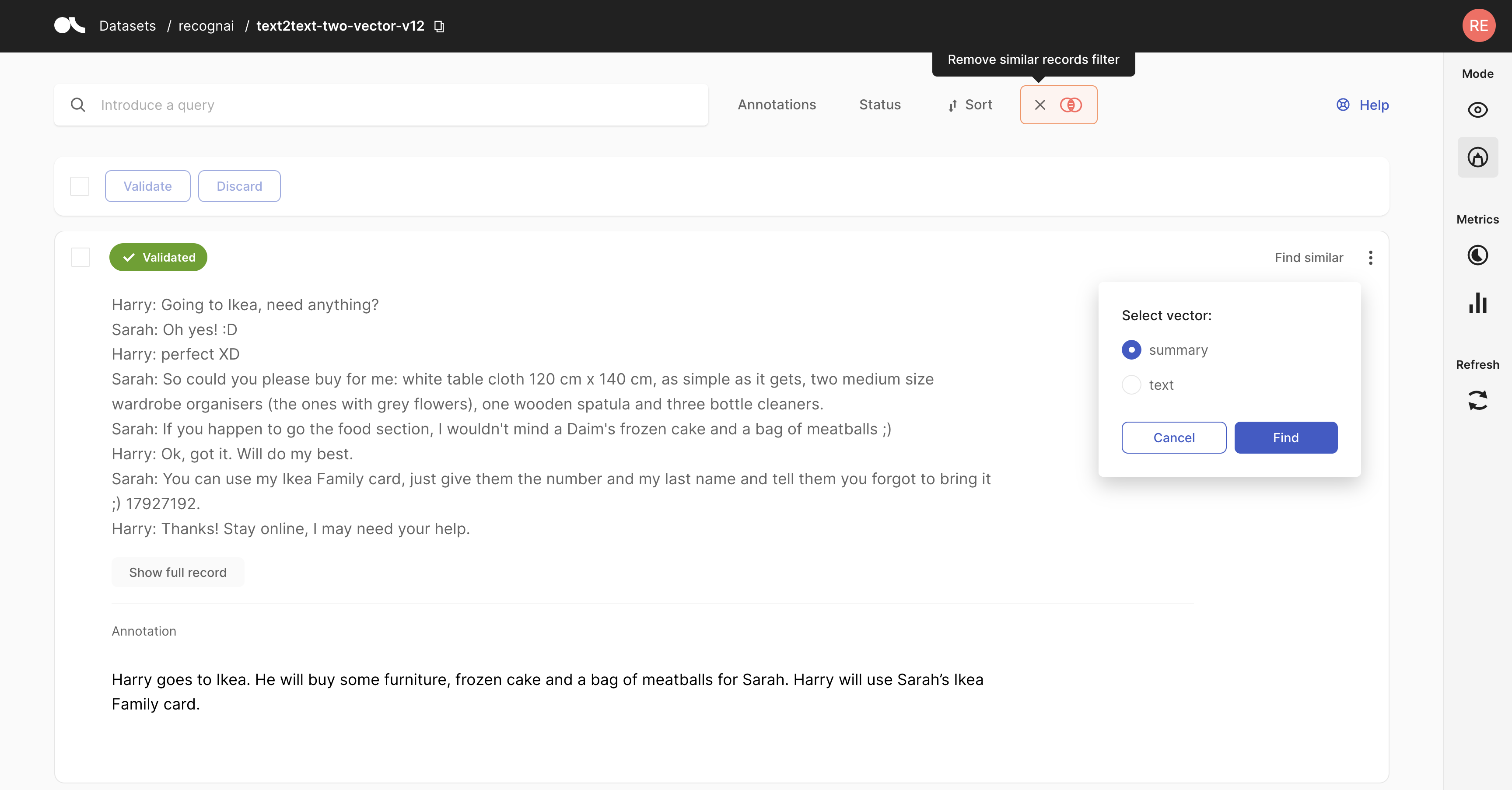
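The query `text:spor*` reads as "records whose `text` field contains a word starting with `spor`". The sketch below imitates that behaviour in plain Python so the effect of the trailing wildcard is easy to see. It is an illustration only: `matches_text_query` is a hypothetical helper, the word splitting is a simplification, and the real matching is performed by the search backend rather than by code like this.

```python
import fnmatch
import re


def matches_text_query(text, pattern):
    """Return True if any lowercased word in `text` matches the wildcard `pattern`."""
    tokens = re.findall(r"\w+", text.lower())
    return any(fnmatch.fnmatchcase(token, pattern.lower()) for token in tokens)


# Made-up example texts, not taken from any real dataset.
docs = ["Sports news of the day", "A sporadic signal", "Stock markets fall"]
print([doc for doc in docs if matches_text_query(doc, "spor*")])
# ['Sports news of the day', 'A sporadic signal']
```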

Semantic search

    import argilla as rg

    # Log a record that carries a named vector.
    record = rg.TextClassificationRecord(
        text="Hello world, I am a vector record!",
        vectors={"my_vector_name": [0, 42, 1984]},
    )
    rg.log(name="dataset", records=record)

    # Load the records closest to a query vector.
    rg.load(name="dataset", vector=("my_vector_name", [0, 43, 1985]))

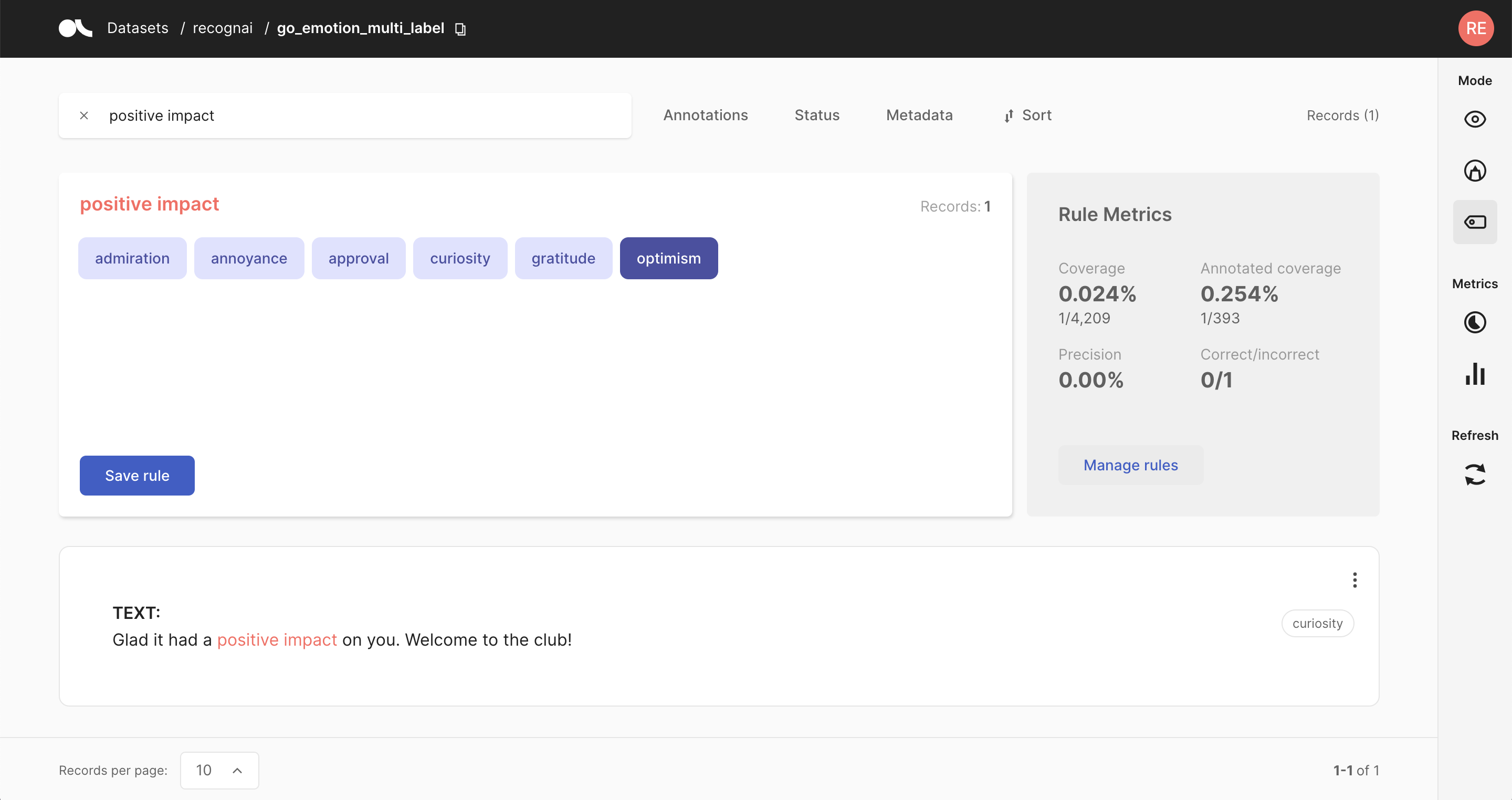
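Conceptually, a vector query ranks stored records by how close their vectors are to the query vector. Here is a minimal sketch of that ranking in plain Python, reusing the two vectors from the snippet above. Cosine similarity is an assumption made for the example (the metric actually used depends on the search backend), and `cosine_similarity`, `nearest`, and the `unrelated` entry are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def nearest(query, stored, k=1):
    """Return the names of the k stored vectors most similar to `query`."""
    ranked = sorted(
        stored.items(),
        key=lambda item: cosine_similarity(query, item[1]),
        reverse=True,
    )
    return [name for name, _ in ranked[:k]]


stored = {
    "hello_world": [0, 42, 1984],  # vector from the record logged above
    "unrelated": [100, -5, 3],     # made-up vector for contrast
}
print(nearest([0, 43, 1985], stored))  # ['hello_world']
```

A brute-force scan like this is fine for a handful of vectors; search backends typically use approximate nearest-neighbour indexes to keep queries fast on large datasets.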

Weak supervision

    from argilla.labeling.text_classification import add_rules, Rule

    rule = Rule(query="positive impact", label="optimism")
    add_rules(dataset="go_emotion", rules=[rule])

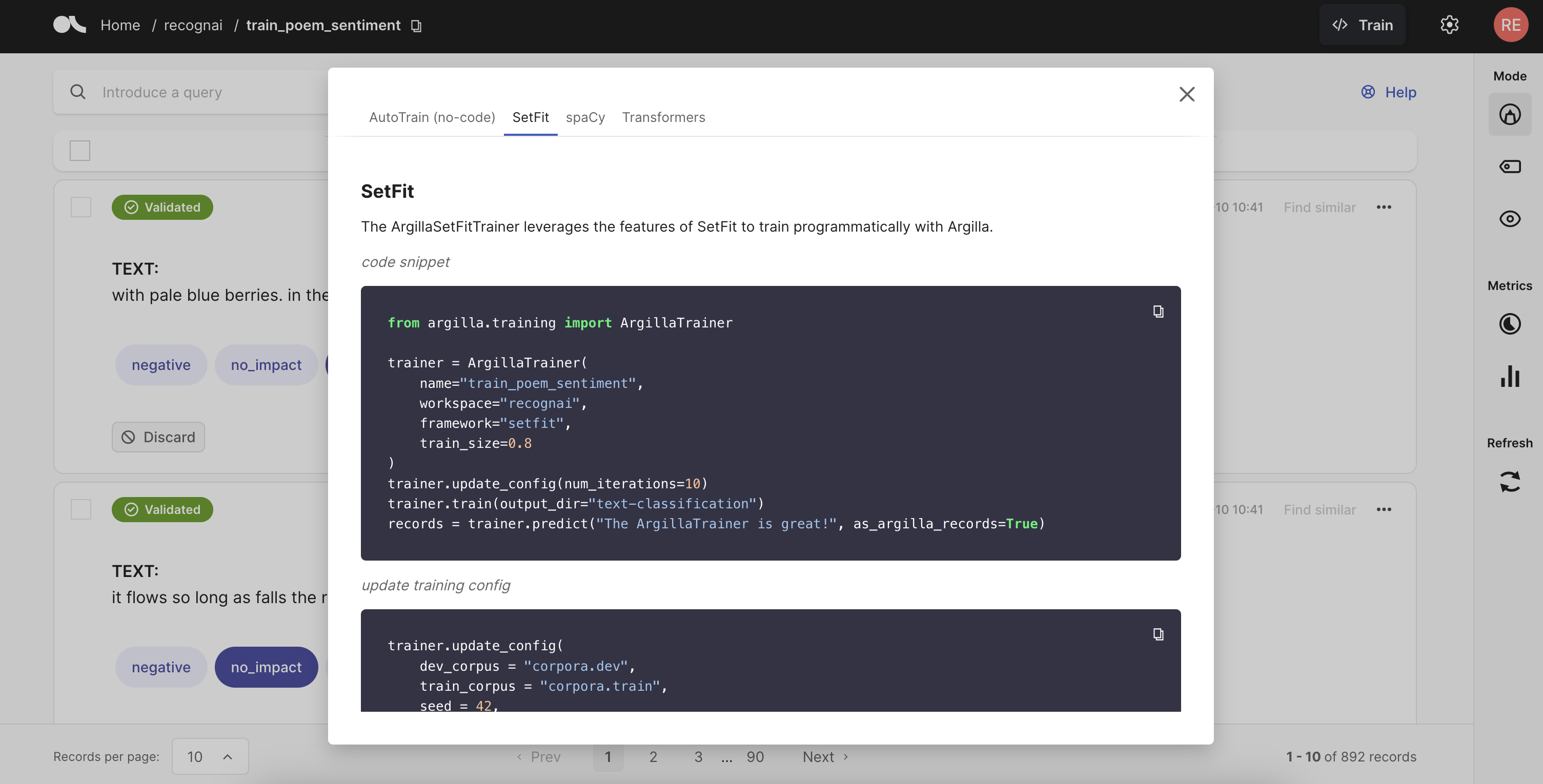
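The idea behind a rule is simple: every record that matches the query receives the rule's label as a noisy, "weak" label. The sketch below shows that idea in plain Python under simplifying assumptions: a rule matches when its query appears as a substring of the text, and conflicts between rules are settled by majority vote. `RULES`, `apply_rules`, `weak_label`, and the second rule are hypothetical, and this is not how Argilla evaluates rules internally.

```python
from collections import Counter

# Each rule is a (query, label) pair. The first mirrors the rule above;
# the second is a made-up rule added for illustration.
RULES = [
    ("positive impact", "optimism"),
    ("thank you", "gratitude"),
]


def apply_rules(text, rules):
    """Return the labels of every rule whose query occurs in the text (case-insensitive)."""
    lowered = text.lower()
    return [label for query, label in rules if query.lower() in lowered]


def weak_label(text, rules):
    """Return the most-voted label, or None when no rule matches (the record abstains)."""
    votes = apply_rules(text, rules)
    if not votes:
        return None
    return Counter(votes).most_common(1)[0][0]


print(weak_label("This will have a positive impact on everyone.", RULES))  # optimism
print(weak_label("Nothing to see here.", RULES))                           # None
```

Records that come back as `None` are not covered by any rule, which is a useful signal for where to write the next rule or label by hand.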

Train models

    from argilla.training import ArgillaTrainer

    trainer = ArgillaTrainer(name="news", workspace="recognai", framework="setfit")
    trainer.train()

📏 Principles

- Open: Argilla is free, open-source, and 100% compatible with major NLP libraries (Hugging Face transformers, spaCy, Stanford Stanza, Flair, etc.). In fact, you can use and combine your preferred libraries without implementing any specific interface.
- End-to-end: Most annotation tools treat data collection as a one-off activity at the beginning of each project. In real-world projects, data collection is a key activity of the iterative process of ML model development. Once a model goes into production, you want to monitor and analyze its predictions and collect more data to improve your model over time. Argilla is designed to close this gap, enabling you to iterate as much as you need.
- User and Developer Experience: The key to sustainable NLP solutions is to make it easier for everyone to contribute to projects. Domain experts should feel comfortable interpreting and annotating data. Data scientists should feel free to experiment and iterate. Engineers should feel in control of data pipelines. Argilla optimizes the experience for these core users to make your teams more productive.
- Beyond hand-labeling: Classical hand-labeling workflows are costly and inefficient, but having humans in the loop is essential. Easily combine hand-labeling with active learning, bulk-labeling, zero-shot models, and weak supervision in novel data annotation workflows.

🫱🏾🫲🏼 Contribute

We love contributors and have launched a collaboration with JustDiggit to hand out our very own bunds and help the re-greening of sub-Saharan Africa. To make contributing easier, we have created our developer and contributor docs. Additionally, you can always schedule a meeting with our Developer Advocacy team so they can get you up to speed.


🗺️ Roadmap

We continuously work on updating our plans and our roadmap and we love to discuss those with our community. Feel encouraged to participate.



File details

Details for the file argilla-1.14.0.tar.gz.

File metadata

- Download URL: argilla-1.14.0.tar.gz

- Upload date:

- Size: 2.1 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.9.17

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 3481997d84ba6b6ec4ebf553cff64fd7c49719867ee316fc527328b44cf83942 |
| MD5 | a5762aae322611b999029f9187aaa61c |
| BLAKE2b-256 | 91f2bb81f8259c62461b32c2580b2980bf8c6ee4f06567ef80561ea52ea5e0f9 |

File details

Details for the file argilla-1.14.0-py3-none-any.whl.

File metadata

- Download URL: argilla-1.14.0-py3-none-any.whl

- Upload date:

- Size: 2.5 MB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.9.17

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | f148041bf77dcaf4df58e31c515eb717013804167ee9f5796b31451c6681bc1b |
| MD5 | 42d097e9f10a942abd85ef7403451078 |
| BLAKE2b-256 | 2ac3ca79095ad404a8dc127d98872a00c1eee41eab730bdb99070935aa156636 |