Attribute statements generated by LLMs to preceding tokens using attention weights.

Project description

AT2: Learning to Attribute with Attention

[getting started]

[tutorials]

[paper]

[bib]

Maintained by Ben Cohen-Wang

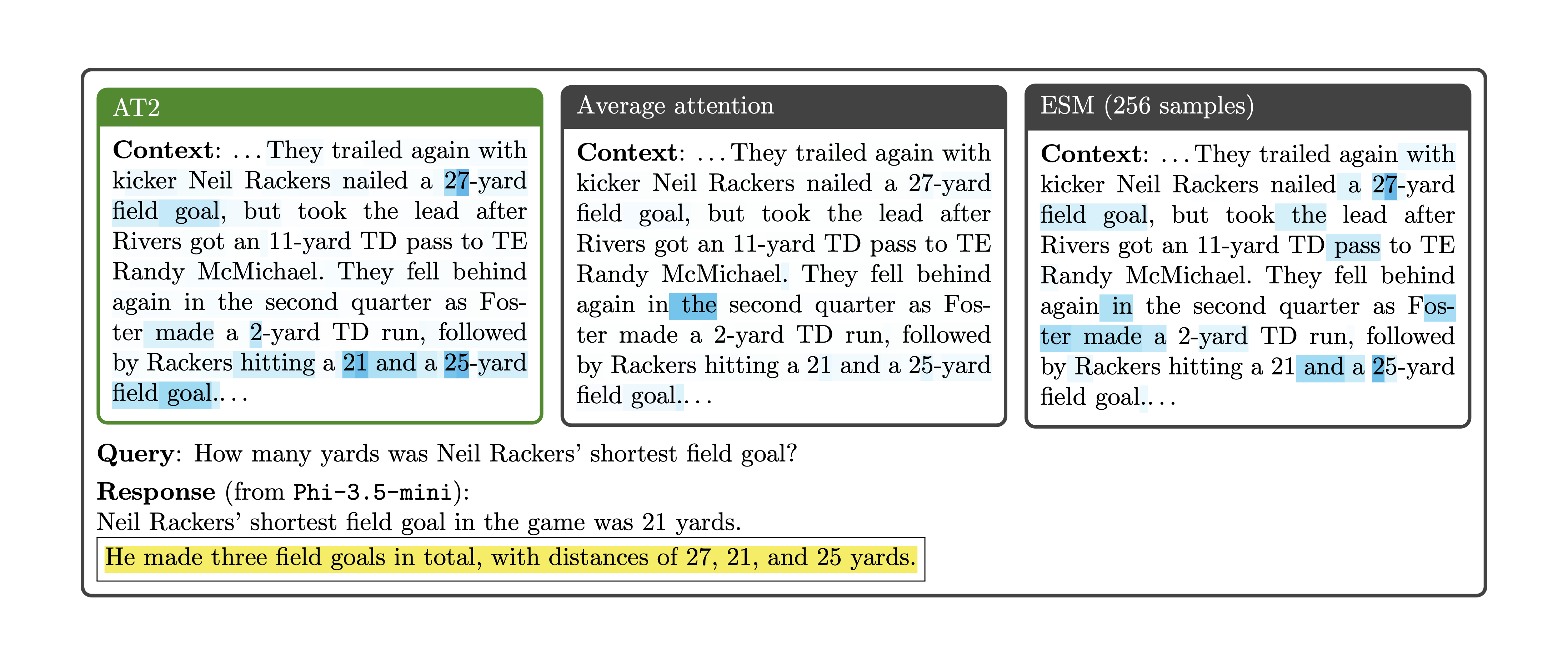

Attribution with Attention (AT2) is a method for using attention weights to pinpoint the specific information that a language model uses when generating a particular statement. This "information" can be, for example, a user-provided context, retrieved documents relevant to a query, or even a model's own intermediate thoughts. For each piece of information (or source), AT2 assigns a score indicating the extent to which the model uses it to generate a particular statement.

AT2 significantly outperforms naïve methods for leveraging attention for attribution and performs comparably to much more expensive approaches. See our paper for more details!

How does AT2 work?

When we say that a language model uses a piece of information (or source), we mean that removing this source would affect its generation. To directly pinpoint the sources that a model uses, we can individually ablate these sources and observe how the model's generation changes. Unfortunately, this is really expensive! AT2 instead learns to efficiently predict the effects of ablating sources using attention weights as its features. This process consists of the following steps:

- Collect training data: For each example in a training dataset, measure

- the attention weights of a model's generation to sources

- the effects of removing different sources on the model's generation

- Learn a score estimator: We learn a (linear) score estimator to predict the effect of ablating sources, using attention weights as its features.

- Attribute with the learned score estimator: We can apply the learned score estimator to attribute model behavior for unseen examples.

While steps (1) and (2) are expensive (in our experiments, the training dataset is a few thousand examples), performing attribution for unseen examples is cheap. We just need to apply the learned score estimator to the attention weights produced by the model during inference.

Getting started

Let's walk through a simple example of using the at2 package!

Install at2 with pip:

pip install at2

To use at2 we'll first need to (1) instantiate a model and (2) define an "attribution task."

We can start by creating the model:

import torch

from at2.tasks import SimpleContextAttributionTask

from at2.utils import get_model_and_tokenizer

from at2 import AT2Attributor, AT2ScoreEstimator

model_name = "microsoft/Phi-4-mini-instruct"

model, tokenizer = get_model_and_tokenizer(

model_name,

dtype=torch.bfloat16,

)

Next, we create an attribution task, which consists of an input sequence, the corresponding sequence generated by the model, and a set of sources to which we attribute the model's generation. In this case, the input sequence is a context and a query, and the sources are the individual sentences in the context. We can create an attribution task as follows:

context = """

Attention Is All You Need

Abstract

The dominant sequence transduction models are based on complex recurrent or convolutional neural networks that include an encoder and a decoder. The best performing models also connect the encoder and decoder through an attention mechanism. We propose a new simple network architecture, the Transformer, based solely on attention mechanisms, dispensing with recurrence and convolutions entirely. Experiments on two machine translation tasks show these models to be superior in quality while being more parallelizable and requiring significantly less time to train. Our model achieves 28.4 BLEU on the WMT 2014 English-to-German translation task, improving over the existing best results, including ensembles, by over 2 BLEU. On the WMT 2014 English-to-French translation task, our model establishes a new single-model state-of-the-art BLEU score of 41.8 after training for 3.5 days on eight GPUs, a small fraction of the training costs of the best models from the literature. We show that the Transformer generalizes well to other tasks by applying it successfully to English constituency parsing both with large and limited training data.

1 Introduction

Recurrent neural networks, long short-term memory [13] and gated recurrent [7] neural networks in particular, have been firmly established as state of the art approaches in sequence modeling and transduction problems such as language modeling and machine translation [35, 2, 5]. Numerous efforts have since continued to push the boundaries of recurrent language models and encoder-decoder architectures [38, 24, 15].

Recurrent models typically factor computation along the symbol positions of the input and output sequences. Aligning the positions to steps in computation time, they generate a sequence of hidden states ht, as a function of the previous hidden state ht-1 and the input for position t. This inherently sequential nature precludes parallelization within training examples, which becomes critical at longer sequence lengths, as memory constraints limit batching across examples. Recent work has achieved significant improvements in computational efficiency through factorization tricks [21] and conditional computation [32], while also improving model performance in case of the latter. The fundamental constraint of sequential computation, however, remains.

Attention mechanisms have become an integral part of compelling sequence modeling and transduction models in various tasks, allowing modeling of dependencies without regard to their distance in the input or output sequences [2, 19]. In all but a few cases [27], however, such attention mechanisms are used in conjunction with a recurrent network.

In this work we propose the Transformer, a model architecture eschewing recurrence and instead relying entirely on an attention mechanism to draw global dependencies between input and output. The Transformer allows for significantly more parallelization and can reach a new state of the art in translation quality after being trained for as little as twelve hours on eight P100 GPUs.

"""

query = "What type of GPUs did the authors use in this paper?"

task = SimpleContextAttributionTask(

context=context,

query=query,

model=model,

tokenizer=tokenizer,

source_type="sentence",

)

The task object we've created handles generating a response from the context and query for us:

In [1]: task.generation

Out[1]: The authors used P100 GPUs in this paper.

Finally, we can create an AT2Attributor (in this case, using an existing learned score estimator from HuggingFace), and attribute the model's generation!

attributor = AT2Attributor.from_hub(task, "madrylab/at2-phi-4-mini-instruct")

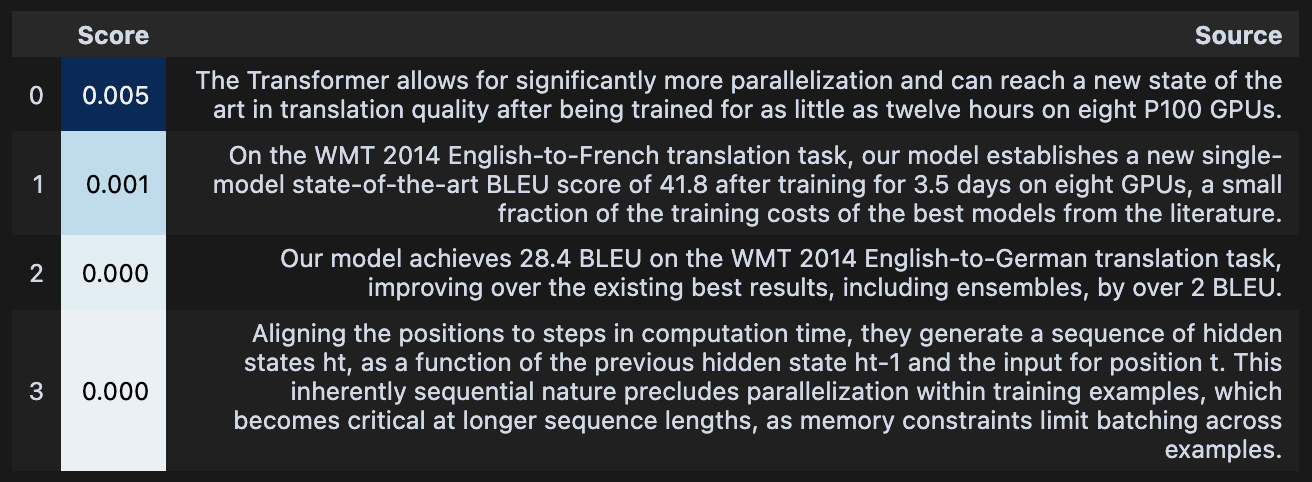

In [2]: attributor.show_attribution()

Out[2]:

Tutorials

We provide a few notebooks to walk through the different functionalities of AT2. AT2 learns to attribute a particular model's generation to preceding tokens.

- Getting started: We use an existing instance of AT2 to attribute the generations of

Phi-4-mini-instruct. - Training AT2: We train AT2 from scratch to learn to attribute a model's generations.

- Multi-document context attribution: We construct a custom attribution task with sources across multiple documents.

- Thought attribution: We attribute a reasoning model's final response to its intermediate thoughts.

Learned score estimators 🤗

We provide learned score estimators for a few popular models in this HuggingFace collection.

These estimators were trained using the training scripts in scripts.

Citation

@article{cohenwang2025learning,

title={Learning to Attribute with Attention},

author={Cohen-Wang, Benjamin and Chuang, Yung-Sung and Madry, Aleksander},

journal={arXiv preprint arXiv:2504.13752},

year={2025}

}

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file at2-0.0.1.tar.gz.

File metadata

- Download URL: at2-0.0.1.tar.gz

- Upload date:

- Size: 29.1 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.10.16

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

aa9a3226faf1c5ad6580bbf5f2f742ae94e4ee799933cedf83d811697b75dc57

|

|

| MD5 |

d9674abe40589e7311817ab0eb0386d8

|

|

| BLAKE2b-256 |

9f2c585745dddad0f1580e373495b5e39ae8f58d1f158db3a6bacce61d27a5ef

|

File details

Details for the file at2-0.0.1-py3-none-any.whl.

File metadata

- Download URL: at2-0.0.1-py3-none-any.whl

- Upload date:

- Size: 28.3 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.10.16

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

e5e24ff6f105f65aef2b33f8b244e050263c5ae1c1de9ba69767c672b64026ee

|

|

| MD5 |

88ec729d2e0c3dd5004b39533c9d0aff

|

|

| BLAKE2b-256 |

cd19f579578d80f9299380ebb8f0aabc932a0650873847cb986012c4b9d347cb

|