Fast and Reasonably Accurate Word Tokenizer for Thai


AttaCut: Fast and Reasonably Accurate Word Tokenizer for Thai

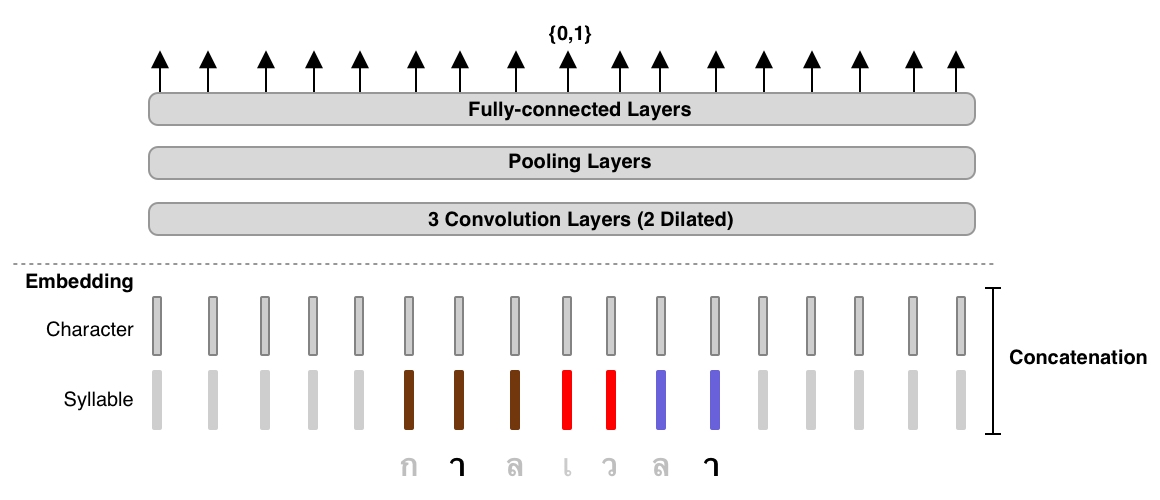

What does AttaCut look like?

TL;DR: AttaCut is a 3-layer dilated CNN over syllable and character features. It is 6x faster than DeepCut (the state of the art), while its word-level F1 (WL-f1) on the BEST corpus is 91%, only 2% lower than DeepCut's.
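To give a feel for the dilated-CNN idea, the sketch below stacks three dilated 1-D convolutions in plain Python. It is not AttaCut's model. The single channel, the kernel size of 3 and the dilations 1, 2, 4 are assumptions chosen for illustration, whereas the real model is built on PyTorch and runs over embedded syllable and character features. What the sketch does show is why dilation helps segmentation: spreading the kernel taps apart lets three layers see 15 neighboring characters, instead of the 7 that three undilated layers would cover.

```python
from typing import List

# Illustrative sketch only: kernel size 3 and dilations 1, 2, 4 are assumed
# values, not AttaCut's actual hyperparameters. One scalar channel is used
# instead of the embedded syllable/character features of the real model.


def dilated_conv1d(x: List[float], kernel: List[float], dilation: int) -> List[float]:
    """Same-length 1-D cross-correlation with zero padding.

    Output position i reads inputs at i + (j - k // 2) * dilation for each
    kernel tap j, so an odd-sized kernel stays centered on position i.
    """
    half = len(kernel) // 2
    out = []
    for i in range(len(x)):
        acc = 0.0
        for j, w in enumerate(kernel):
            pos = i + (j - half) * dilation
            if 0 <= pos < len(x):  # positions outside the sequence count as zero
                acc += w * x[pos]
        out.append(acc)
    return out


def relu(v: List[float]) -> List[float]:
    return [max(0.0, a) for a in v]


def dilated_stack(x: List[float], kernel: List[float], dilations: List[int]) -> List[float]:
    """Apply one dilated convolution plus ReLU per entry in `dilations`."""
    for d in dilations:
        x = relu(dilated_conv1d(x, kernel, d))
    return x


def receptive_field(kernel_size: int, dilations: List[int]) -> int:
    """Input positions visible to a single output after stacking the layers."""
    return 1 + sum((kernel_size - 1) * d for d in dilations)


if __name__ == "__main__":
    # A single "hot" character in the middle of a 31-character sequence.
    x = [0.0] * 31
    x[15] = 1.0
    y = dilated_stack(x, [1.0, 1.0, 1.0], [1, 2, 4])
    print(sum(1 for v in y if v != 0), receptive_field(3, [1, 2, 4]))
```

With an all-ones kernel, the impulse spreads to exactly as many output positions as the receptive-field formula predicts, which makes the effect of dilation easy to check by hand.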

Installation

$ pip install attacut

Remarks: Windows users need to install PyTorch before the command above. Please consult PyTorch.org for more details.
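Since Windows users need PyTorch in place before installing AttaCut, a quick standard-library check can confirm that `torch` is importable first. This is a minimal sketch; the helper name `is_installed` is hypothetical and not part of AttaCut.

```python
import importlib.util


def is_installed(module_name: str) -> bool:
    """Return True if the import system can locate `module_name`."""
    return importlib.util.find_spec(module_name) is not None


if __name__ == "__main__":
    if is_installed("torch"):
        print("PyTorch found; `pip install attacut` can be run next.")
    else:
        print("PyTorch not found; install it first (see PyTorch.org).")
```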

Usage

Command-Line Interface

    $ attacut-cli -h
    AttaCut: Fast and Reasonably Accurate Word Tokenizer for Thai

    Usage:
      attacut-cli <src> [--dest=<dest>] [--model=<model>]
      attacut-cli [-v | --version]
      attacut-cli [-h | --help]

    Arguments:
      <src>            Path to input text file to be tokenized

    Options:
      -h --help        Show this screen.
      --model=<model>  Model to be used [default: attacut-sc].
      --dest=<dest>    If not specified, it'll be <src>-tokenized-by-<model>.txt
      -v --version     Show version
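The CLI can also be driven from a Python script. The sketch below relies only on the options listed in the help text: `default_dest` is a literal reading of the `--dest` description (`<src>-tokenized-by-<model>.txt`), and `build_cli_args` just assembles the documented flags. Both helper names are hypothetical, and the exact output filename that `attacut-cli` picks may differ in details, so check it against your own files.

```python
from typing import List, Optional


def default_dest(src: str, model: str = "attacut-sc") -> str:
    """Literal reading of the documented default for --dest (assumption)."""
    return f"{src}-tokenized-by-{model}.txt"


def build_cli_args(src: str, dest: Optional[str] = None,
                   model: Optional[str] = None) -> List[str]:
    """Assemble an attacut-cli command line from the documented options."""
    args = ["attacut-cli", src]
    if dest is not None:
        args.append(f"--dest={dest}")
    if model is not None:
        args.append(f"--model={model}")
    return args
```

For example, `subprocess.run(build_cli_args("input.txt", model="attacut-c"), check=True)` would tokenize `input.txt` with the `attacut-c` model, provided `attacut-cli` is on your `PATH`.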

High-Level API

    from attacut import tokenize, Tokenizer

    txt = "ฉันรักภาษาไทย"  # sample input; replace with your own Thai text

    # tokenize `txt` using our best model `attacut-sc`
    words = tokenize(txt)

    # alternatively, an AttaCut tokenizer might be instantiated directly, allowing
    # one to specify whether to use `attacut-sc` or `attacut-c`.
    atta = Tokenizer(model="attacut-sc")
    words = atta.tokenize(txt)
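To apply the high-level API to a whole text file, one option is to tokenize it line by line. The helper below is a hypothetical sketch: its name, the `|` separator and the choice to pass empty lines through untouched are assumptions, not AttaCut behavior. It accepts any callable that maps a string to a list of words, so it can be tried without loading a model.

```python
from typing import Callable, Iterable, List


def tokenize_lines(lines: Iterable[str],
                   tokenize_fn: Callable[[str], List[str]],
                   sep: str = "|") -> List[str]:
    """Tokenize each line and join its words with `sep`."""
    out = []
    for line in lines:
        text = line.rstrip("\n")
        if not text:
            # Keep blank lines as blank output instead of calling the tokenizer.
            out.append("")
            continue
        out.append(sep.join(tokenize_fn(text)))
    return out
```

With AttaCut installed, pass `Tokenizer(model="attacut-c").tokenize` (or the module-level `tokenize`) as `tokenize_fn` and an open text file as `lines`.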

Benchmark Results

Below are brief summaries. More details can be found on our benchmarking page.
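The quality figures are word-level F1 (WL-f1) scores. A common way to compute this for word segmentation, sketched below under the assumption that the benchmark follows the same convention (AttaCut's own evaluation script may differ in details), is to turn each segmentation into a set of character-offset spans: a predicted word counts as correct only if both its start and end match a gold word.

```python
from typing import List, Set, Tuple


def word_spans(words: List[str]) -> Set[Tuple[int, int]]:
    """Convert a segmentation into a set of (start, end) character offsets."""
    spans = set()
    start = 0
    for w in words:
        end = start + len(w)
        spans.add((start, end))
        start = end
    return spans


def word_level_f1(gold: List[str], pred: List[str]) -> float:
    """Harmonic mean of span precision and recall."""
    if "".join(gold) != "".join(pred):
        raise ValueError("gold and predicted segmentations must cover the same text")
    g, p = word_spans(gold), word_spans(pred)
    correct = len(g & p)
    if correct == 0:
        return 0.0
    precision = correct / len(p)
    recall = correct / len(g)
    return 2 * precision * recall / (precision + recall)
```

For instance, with gold `["ฉัน", "รัก", "ภาษาไทย"]` and prediction `["ฉัน", "รัก", "ภาษา", "ไทย"]`, 2 of 4 predicted words and 2 of 3 gold words match, giving precision 0.5, recall 2/3 and F1 = 4/7 ≈ 0.57.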

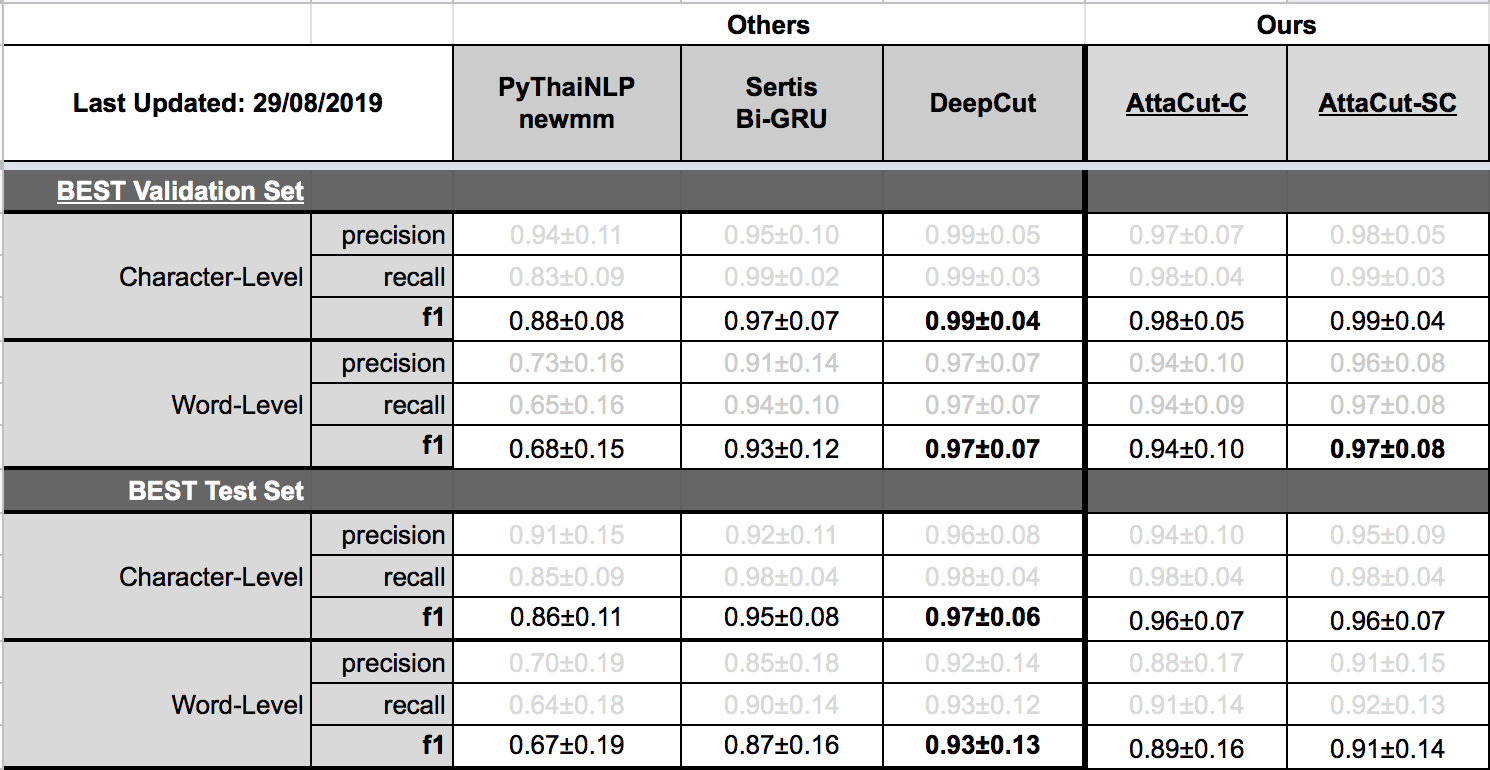

Tokenization Quality

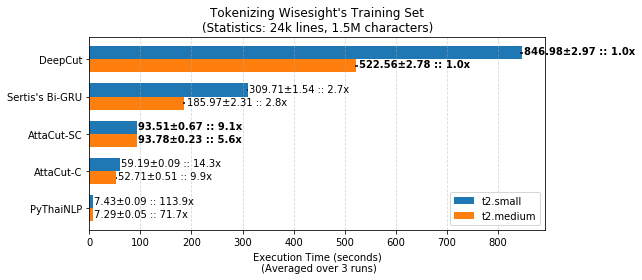

Speed

Retraining on Custom Dataset

Please refer to our retraining page.

Related Resources

Acknowledgements

This repository was initially developed by Pattarawat Chormai while interning at Dr. Attapol Thamrongrattanarit's NLP Lab, Chulalongkorn University, Bangkok, Thailand. Many people have been involved in this project; the complete list of names can be found on the Acknowledgement page.


Download files


File details

Details for the file attacut-1.0.6.tar.gz.

File metadata

- Download URL: attacut-1.0.6.tar.gz

- Upload date:

- Size: 1.3 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/1.13.0 pkginfo/1.5.0.1 requests/2.22.0 setuptools/41.2.0 requests-toolbelt/0.9.1 tqdm/4.32.2 CPython/3.7.4

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | ced9d8cd6b2817f6aeb441a2919b0f2da02b432294b08dc82702b40176a79bba |
| MD5 | 0d32368ece14466da30601e9181e996f |
| BLAKE2b-256 | 9c086b905097d1cd72dabc50b867c68bda1f971412a7dfee37b5b68fae997258 |
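To check a downloaded file against these digests, hash it locally and compare. A minimal standard-library sketch follows; the function names are hypothetical, and `blake2b-256` is taken to mean BLAKE2b with a 32-byte (256-bit) digest, which is how PyPI reports it.

```python
import hashlib

# Constructors for the algorithms listed in the File hashes tables.
HASHERS = {
    "sha256": hashlib.sha256,
    "md5": hashlib.md5,
    "blake2b-256": lambda: hashlib.blake2b(digest_size=32),
}


def file_digest_hex(path: str, algorithm: str = "sha256",
                    chunk_size: int = 1 << 16) -> str:
    """Hash a file in chunks so large archives need not fit in memory."""
    h = HASHERS[algorithm]()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def verify(path: str, expected_hex: str, algorithm: str = "sha256") -> bool:
    """Compare the local digest with a published one (case-insensitive)."""
    return file_digest_hex(path, algorithm) == expected_hex.strip().lower()
```

For example, `verify("attacut-1.0.6.tar.gz", "ced9d8cd6b2817f6aeb441a2919b0f2da02b432294b08dc82702b40176a79bba")` should return `True` for an intact copy of the source distribution.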

File details

Details for the file attacut-1.0.6-py3-none-any.whl.

File metadata

- Download URL: attacut-1.0.6-py3-none-any.whl

- Upload date:

- Size: 1.3 MB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/1.13.0 pkginfo/1.5.0.1 requests/2.22.0 setuptools/41.2.0 requests-toolbelt/0.9.1 tqdm/4.32.2 CPython/3.7.4

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | d04193149476d1c371c7d0177a4363ba70d1a6d6f7d2246a577669eb4ea93f2c |
| MD5 | 4d731a11321e0420dff1f9add2a82371 |
| BLAKE2b-256 | f6564ab7204bde7468be65d047578192975035d9bc4e786990a407a28a8f75b8 |