Reason this release was yanked:

Misnamed package - see `attention-smithy` instead

Project description

AttentionSmithy

The paper Attention Is All You Need reshaped the AI field. After it inspired models such as GPT and BERT, a large share of deep learning research turned to the attention mechanism behind transformers. That focus has produced hundreds of variations on the original architecture, meant either to improve it or to tailor it to new applications. Most of these developments happen in isolation, disconnected from the broader community and incompatible with tools made by other developers. For developers who want to combine these ideas to fit a new problem, this fragmentation is frustrating.

AttentionSmithy is designed as a platform for flexible experimentation with the attention mechanism across a variety of applications. It supports multiple positional embeddings and variations on the attention mechanism, among other components.

The baseline code was originally inspired by The Annotated Transformer blog code. We have created examples of transformer models in the following repositories:

- machine translation, including a neural architecture search (NAS) setup

- geneformer

Future Directions

🤝 Join the conversation! 🤝

As you read and have ideas, please go to the Discussions tab of this repository and share them with us. We have ideas for future extensions and applications, and would love your input.

AttentionSmithy Components

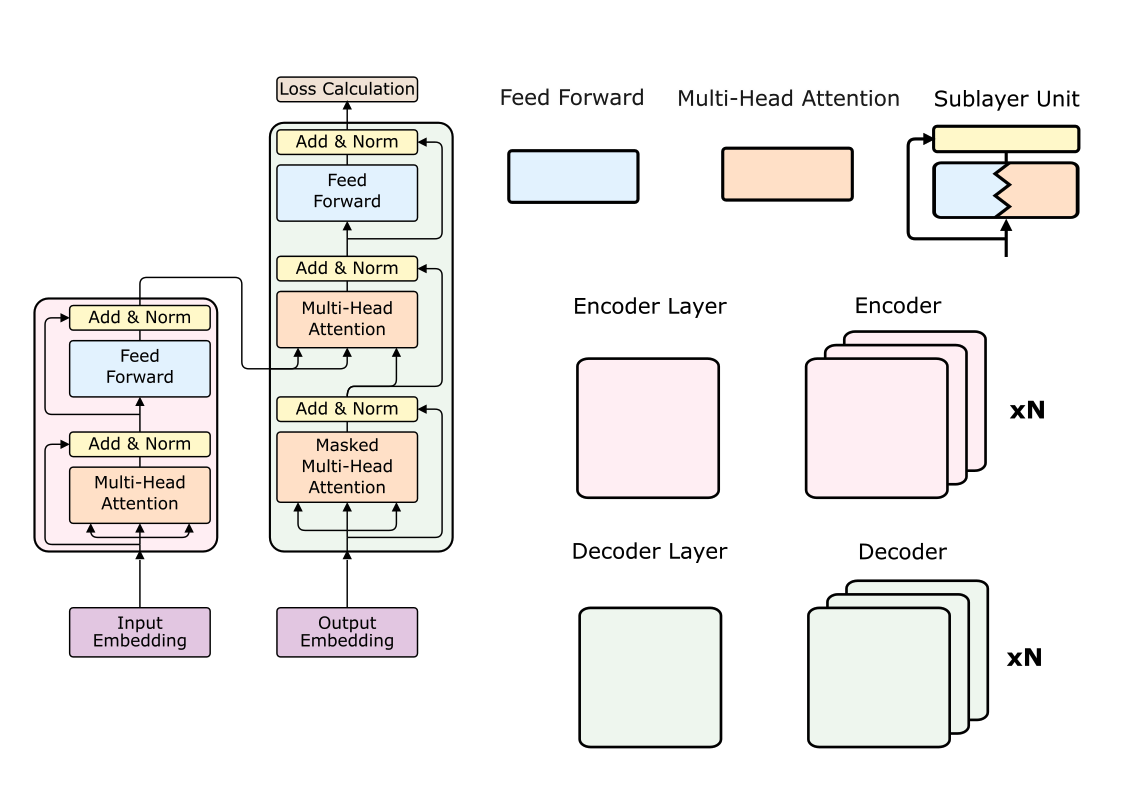

Here is a visual depiction of the different components of a transformer model, using Figure 1 from Attention Is All You Need as reference.

AttentionSmithy Numeric Embedding

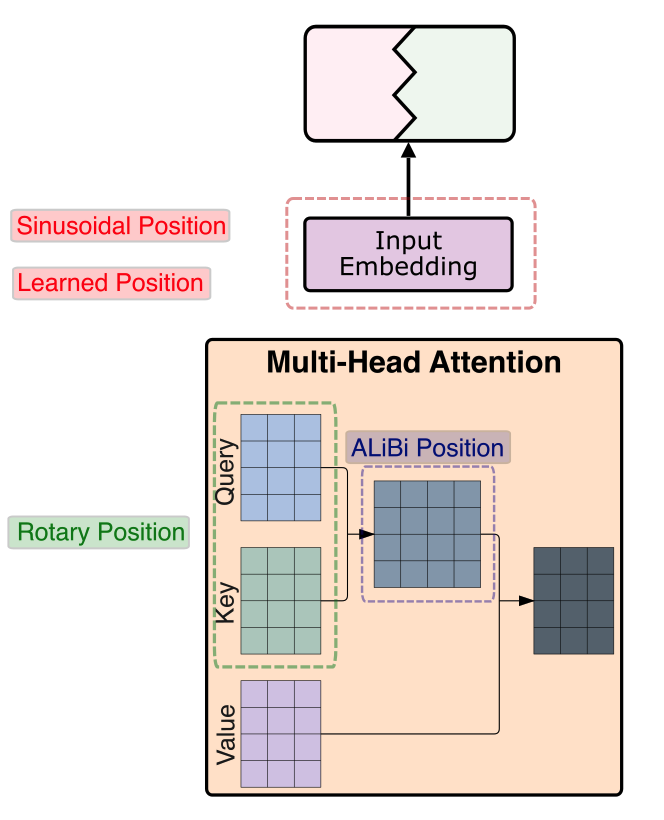

Here is a visual depiction of where each positional or numeric embedding fits into the original model. We have implemented four popular strategies (sinusoidal, learned, rotary, ALiBi), and would like to add more in the future.
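
To make the first of these strategies concrete, here is a minimal sketch of the sinusoidal encoding as defined in Attention Is All You Need, written in plain Python. It is not AttentionSmithy's implementation or API; the function name `sinusoidal_encoding` and its parameters are assumptions made for illustration only.

```python
import math


def sinusoidal_encoding(num_positions, dim):
    """Build a num_positions x dim table of sinusoidal position encodings.

    Hypothetical helper for illustration; not part of AttentionSmithy.
    Even columns hold sin(pos / 10000^(i/dim)), odd columns the matching cos.
    """
    if dim % 2 != 0:
        raise ValueError("dim must be even so sin/cos columns pair up")
    table = []
    for pos in range(num_positions):
        row = []
        # i steps over the even column indices 0, 2, 4, ...
        for i in range(0, dim, 2):
            angle = pos / (10000 ** (i / dim))
            row.append(math.sin(angle))  # column i
            row.append(math.cos(angle))  # column i + 1
        table.append(row)
    return table


if __name__ == "__main__":
    for row in sinusoidal_encoding(num_positions=3, dim=4):
        print([round(value, 4) for value in row])
```

In a transformer, a table like this is typically added to the token embeddings before the first layer, whereas rotary and ALiBi act inside the attention computation itself.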

AttentionSmithy Attention Methods

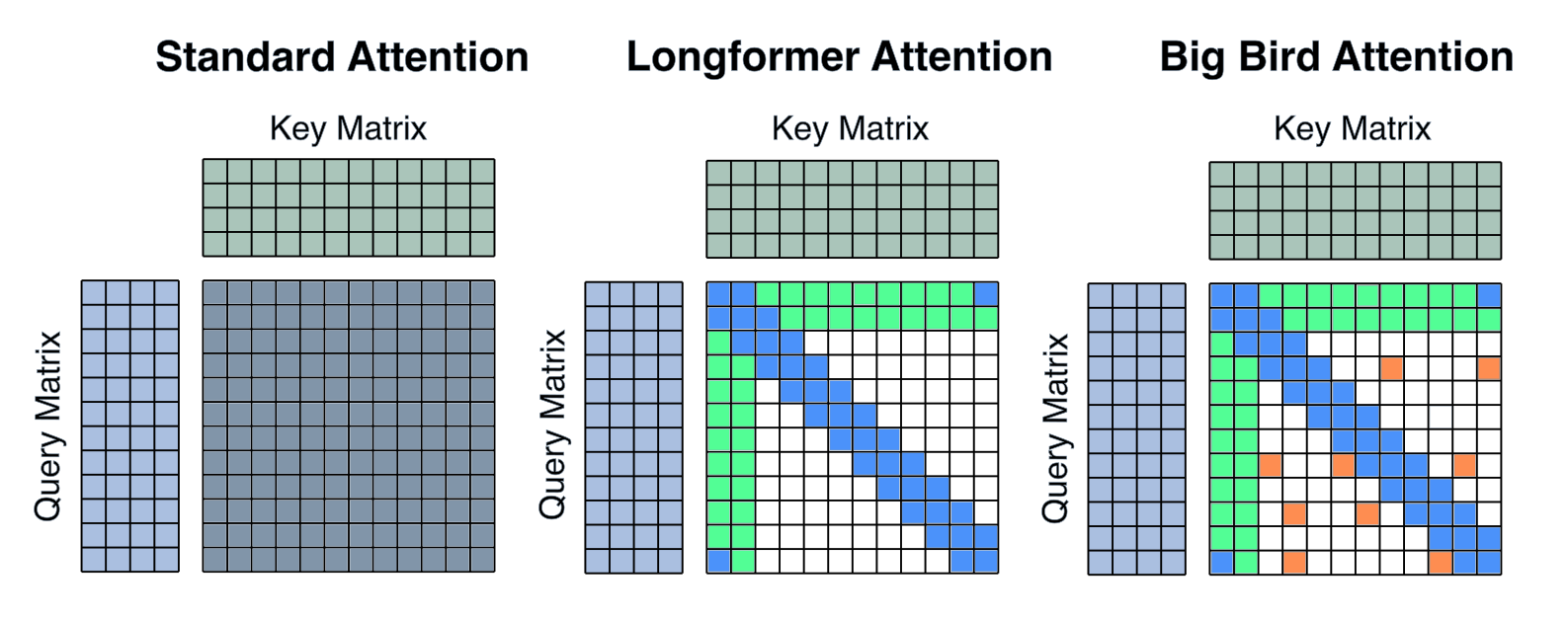

Here is a basic visual of possible attention mechanisms AttentionSmithy has been designed to incorporate in future development efforts. The provided examples include Longformer attention and Big Bird attention.
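
As a rough illustration of what such a mechanism restricts, the sketch below builds a boolean attention mask with a local sliding window plus optional global tokens, in the spirit of Longformer. It is a simplified assumption, not code from AttentionSmithy: the name `sliding_window_mask`, the one-sided `window` radius, and the `global_positions` parameter are all hypothetical. Big Bird additionally uses random attention links, which this sketch omits.

```python
def sliding_window_mask(seq_len, window, global_positions=()):
    """Return a seq_len x seq_len mask; mask[q][k] is True if q may attend to k.

    Hypothetical helper for illustration; not part of AttentionSmithy.
    `window` is the number of neighbours visible on each side of a token.
    Tokens listed in `global_positions` attend to, and are attended by, all tokens.
    """
    global_set = set(global_positions)
    mask = []
    for q in range(seq_len):
        row = []
        for k in range(seq_len):
            is_local = abs(q - k) <= window
            is_global = q in global_set or k in global_set
            row.append(is_local or is_global)
        mask.append(row)
    return mask


if __name__ == "__main__":
    # Print the pattern: 'x' marks an allowed query/key pair.
    for row in sliding_window_mask(seq_len=6, window=1, global_positions=(0,)):
        print("".join("x" if allowed else "." for allowed in row))
```

With a fixed window, the number of allowed pairs grows linearly with sequence length instead of quadratically, which is the main motivation for this family of methods.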

File details

Details for the file attention_smithyr-1.0.0.tar.gz.

File metadata

- Download URL: attention_smithyr-1.0.0.tar.gz

- Upload date:

- Size: 54.5 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.11.10

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 94b8057d99c2adec23778d0252eeec8314d546c07266be46fc1edd54538a12a4 |
| MD5 | 0243cbe2be600666f88f1d559e619072 |
| BLAKE2b-256 | 67933985b68ac8e45ccd3e78e598d05d4e30436a7723ceb194d316bed7b17d6a |

File details

Details for the file attention_smithyr-1.0.0-py3-none-any.whl.

File metadata

- Download URL: attention_smithyr-1.0.0-py3-none-any.whl

- Upload date:

- Size: 65.1 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.11.10

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | f367da6eb802f6c73ef7e5b6e3987279fcc3e3bbbefc845a4e61a1ad814c0a0d |
| MD5 | 1f41842f5902e0f07cb762d463391ed5 |
| BLAKE2b-256 | c4233ad4c086cfd91bd7b0b1712df0a60b63eeeb6b1c733d06775da963db37a6 |