Bagua is a deep learning training acceleration framework for PyTorch. It provides a one-stop training acceleration solution, including faster distributed training compared to PyTorch DDP, faster dataloader, kernel fusion, and more.


WARNING: THIS PROJECT IS CURRENTLY IN MAINTENANCE MODE, DUE TO COMPANY REORGANIZATION.

Bagua is a deep learning training acceleration framework for PyTorch developed by AI platform@Kuaishou Technology and DS3 Lab@ETH Zürich. Bagua currently supports:

- Advanced Distributed Training Algorithms: Users can extend training from a single GPU to multiple GPUs (possibly across multiple machines) by adding a few lines of code (optionally in elastic mode). One prominent feature of Bagua is a flexible system abstraction that supports state-of-the-art system relaxation techniques for distributed training. So far, Bagua has integrated communication primitives including:

  - Centralized Synchronous Communication (e.g. Gradient AllReduce)
  - Decentralized Synchronous Communication (e.g. Decentralized SGD)
  - Low Precision Communication (e.g. ByteGrad)
  - Asynchronous Communication (e.g. Async Model Average)

- Cached Dataset: When data loading is slow or data preprocessing is expensive, it can become a major bottleneck of the whole training process. Bagua provides a cached dataset to speed up this process by caching data samples in memory, so that reading these samples after the first time becomes much faster.

- TCP Communication Acceleration (Bagua-Net): Bagua-Net is a low-level communication acceleration feature provided by Bagua. It can greatly improve the throughput of AllReduce over TCP networks. You can enable the Bagua-Net optimization on any distributed training job that uses NCCL for GPU communication (this includes PyTorch DDP, Horovod, DeepSpeed, and more).

- Performance Autotuning: Bagua can automatically tune system parameters to achieve the highest throughput.

- Generic Fused Optimizer: Bagua provides a generic fused optimizer that improves optimizer performance by fusing the optimizer `.step()` operation across multiple layers. It can be applied to arbitrary PyTorch optimizers, in contrast to NVIDIA Apex's approach, where only some specific optimizers are implemented.

- Load Balanced Data Loader: When the computational cost of samples in the training data varies, for example in NLP and speech tasks where samples have different lengths, distributed training throughput can be greatly improved by using Bagua's load balanced data loader, which distributes samples so that each worker's workload is similar.

- Integration with PyTorch Lightning: Are you using PyTorch Lightning for your distributed training job? Now you can use Bagua in PyTorch Lightning by simply setting `strategy=BaguaStrategy` in your Trainer. This enables you to take advantage of a range of advanced training algorithms, including decentralized methods, asynchronous methods, communication compression, and their combinations!
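To make the difference between centralized and decentralized synchronous communication concrete, here is a toy simulation in plain Python. It is not Bagua code and does not use Bagua's API: the function names `allreduce_average` and `ring_neighbor_average` are hypothetical, and each "worker" is just one number in a list. The ring topology is an assumption chosen for illustration.

```python
from typing import List


def allreduce_average(values: List[float]) -> List[float]:
    """Centralized synchronous step: every worker receives the global mean."""
    mean = sum(values) / len(values)
    return [mean] * len(values)


def ring_neighbor_average(values: List[float]) -> List[float]:
    """Decentralized synchronous step: each worker averages only with
    its left and right neighbors on a ring (an illustrative topology)."""
    n = len(values)
    return [
        (values[(i - 1) % n] + values[i] + values[(i + 1) % n]) / 3.0
        for i in range(n)
    ]


if __name__ == "__main__":
    grads = [1.0, 2.0, 3.0, 6.0]  # one value per simulated worker

    # One centralized round: all workers agree immediately.
    print("allreduce:", allreduce_average(grads))

    # Repeated decentralized rounds: workers drift toward the same mean
    # while each round only involves neighbor-to-neighbor exchange.
    state = grads
    for _ in range(20):
        state = ring_neighbor_average(state)
    print("ring after 20 rounds:", state)
```

In this toy model, the centralized step reaches consensus in one round at the cost of global communication, while the decentralized step needs several rounds but each worker only talks to its neighbors.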

Bagua's effectiveness has been evaluated in various scenarios, including VGG and ResNet on ImageNet, BERT-Large, and many industrial applications at Kuaishou.
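The load balanced data loader in the feature list distributes samples so that workers get similar workloads. The sketch below illustrates that idea with a simple greedy heuristic (always give the next-longest sample to the least-loaded worker). This is an assumption made for illustration, not a description of how Bagua implements it, and `balance_by_length` is a hypothetical helper name.

```python
import heapq
from typing import List, Sequence


def balance_by_length(lengths: Sequence[int], num_workers: int) -> List[List[int]]:
    """Assign sample indices to workers so that the total sample length
    per worker is similar (greedy longest-first heuristic)."""
    # Min-heap of (current total load, worker id).
    heap = [(0, w) for w in range(num_workers)]
    heapq.heapify(heap)
    assignment: List[List[int]] = [[] for _ in range(num_workers)]

    # Visit samples from longest to shortest.
    order = sorted(range(len(lengths)), key=lambda i: lengths[i], reverse=True)
    for idx in order:
        load, worker = heapq.heappop(heap)  # least-loaded worker so far
        assignment[worker].append(idx)
        heapq.heappush(heap, (load + lengths[idx], worker))
    return assignment


if __name__ == "__main__":
    sample_lengths = [9, 1, 8, 2, 7, 3, 6, 4]  # e.g. token counts per sample
    parts = balance_by_length(sample_lengths, num_workers=2)
    for worker, indices in enumerate(parts):
        total = sum(sample_lengths[i] for i in indices)
        print(f"worker {worker}: samples {indices}, total length {total}")
```

With these example lengths, both workers end up with a total length of 20, whereas a naive split of the first and second half of the list would give 20 versus 20 only by coincidence of ordering and can be much more skewed on real data.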

Links

Performance

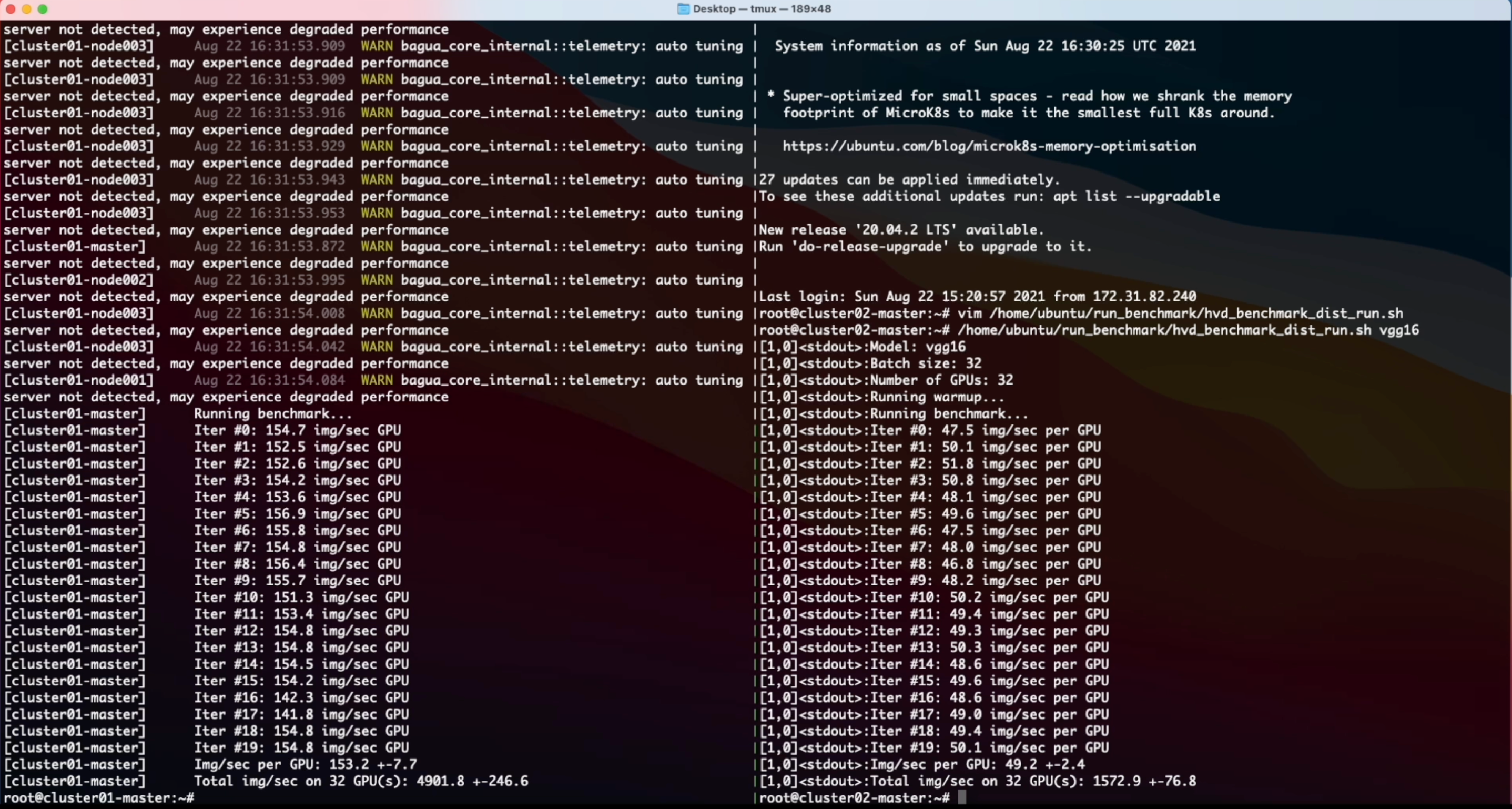

The performance of different systems and algorithms on VGG16 with 128 GPUs under different network bandwidths.

Epoch time of BERT-Large fine-tuning under different network conditions for different systems.

For more comprehensive and up-to-date results, refer to the Bagua benchmark page.

Installation

Wheels (precompiled binary packages) are available for Linux (x86_64). The package name depends on your CUDA Toolkit version (shown by `nvcc --version`).

| CUDA Toolkit version | Installation command |
|---|---|
| >= v10.2 | `pip install bagua-cuda102` |
| >= v11.1 | `pip install bagua-cuda111` |
| >= v11.3 | `pip install bagua-cuda113` |

Add `--pre` to the `pip install` commands to install pre-release (development) versions. See the Bagua tutorials for a quick start guide and more installation options.
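As a small illustration of the table above, the following sketch picks a package name from a CUDA Toolkit version string. It assumes the rows mean "use the package with the highest minimum version that your toolkit satisfies", which is an interpretation rather than something the table states explicitly. `bagua_package_for` is a hypothetical helper, not part of Bagua, and it expects a version of the form `major.minor` (extra components are ignored).

```python
from typing import Optional

# (minimum CUDA Toolkit version, package name), highest minimum first.
_PACKAGES = [
    ((11, 3), "bagua-cuda113"),
    ((11, 1), "bagua-cuda111"),
    ((10, 2), "bagua-cuda102"),
]


def bagua_package_for(cuda_version: str) -> Optional[str]:
    """Return the wheel name for a CUDA version like '11.3', or None
    if the version is older than every listed minimum."""
    major, minor = (int(part) for part in cuda_version.split(".")[:2])
    for minimum, name in _PACKAGES:
        if (major, minor) >= minimum:
            return name
    return None


if __name__ == "__main__":
    for version in ["10.1", "10.2", "11.2", "11.3"]:
        package = bagua_package_for(version)
        if package is None:
            print(f"CUDA {version}: no prebuilt wheel listed")
        else:
            print(f"CUDA {version}: pip install {package}")
```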

Quick Start on AWS

Thanks to Amazon Machine Images (AMIs), we can provide users with an easy way to deploy and run Bagua on AWS EC2 clusters with a flexible number of machines and a wide range of GPU types. Users can find our pre-installed Bagua image on EC2 by the unique AMI ID that we publish here. Note that an AMI is a regional resource, so please make sure you are using machines in the same region as our AMI.

| Bagua version | AMI ID | Region |
|---|---|---|
| 0.6.3 | ami-0e719d0e3e42b397e | us-east-1 |
| 0.9.0 | ami-0f01fd14e9a742624 | us-east-1 |

To manage the EC2 cluster more efficiently, we use StarCluster as a toolkit to manipulate the cluster. In the StarCluster config file, a few settings need to be set by users, including AWS credentials, cluster settings, etc. More information regarding StarCluster configuration can be found in this tutorial.

For example, suppose we create an EC2 cluster with 4 machines, each of which has 8 V100 GPUs (p3.16xlarge). The cluster is based on the Bagua AMI we pre-installed in the us-east-1 region. The StarCluster config file would then be:

    # region of EC2 instances, here we choose us-east-1
    AWS_REGION_NAME = us-east-1
    AWS_REGION_HOST = ec2.us-east-1.amazonaws.com
    # AMI ID of Bagua
    NODE_IMAGE_ID = ami-0e719d0e3e42b397e
    # number of instances
    CLUSTER_SIZE = 4
    # instance type
    NODE_INSTANCE_TYPE = p3.16xlarge
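As a quick sanity check on these settings, the sketch below parses the `KEY = value` lines and computes the total number of GPUs in the cluster. It is not part of StarCluster or Bagua: `parse_settings` is a hypothetical helper, and the GPU count per instance is taken from the text above (8 V100 GPUs per p3.16xlarge) rather than queried from AWS.

```python
from typing import Dict

CONFIG_TEXT = """
# region of EC2 instances
AWS_REGION_NAME = us-east-1
AWS_REGION_HOST = ec2.us-east-1.amazonaws.com
# AMI ID of Bagua
NODE_IMAGE_ID = ami-0e719d0e3e42b397e
# number of instances
CLUSTER_SIZE = 4
# instance type
NODE_INSTANCE_TYPE = p3.16xlarge
"""

# GPUs per instance type, as stated in the text (assumption for other types).
GPUS_PER_INSTANCE = {"p3.16xlarge": 8}


def parse_settings(text: str) -> Dict[str, str]:
    """Parse 'KEY = value' lines, skipping blanks and '#' comments."""
    settings: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        key, _, value = line.partition("=")
        settings[key.strip()] = value.strip()
    return settings


settings = parse_settings(CONFIG_TEXT)
total_gpus = int(settings["CLUSTER_SIZE"]) * GPUS_PER_INSTANCE[settings["NODE_INSTANCE_TYPE"]]
print(f"{settings['CLUSTER_SIZE']} x {settings['NODE_INSTANCE_TYPE']} = {total_gpus} GPUs")
```

For the example cluster this gives 4 x 8 = 32 GPUs in total.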

With the above setup, we created two identical clusters to benchmark a synthetic image classification task on Bagua and Horovod, respectively. Here is the screen recording video of this experiment.

Cite Bagua

    % System Overview
    @misc{gan2021bagua,
      title={BAGUA: Scaling up Distributed Learning with System Relaxations},
      author={Shaoduo Gan and Xiangru Lian and Rui Wang and Jianbin Chang and Chengjun Liu and Hongmei Shi and Shengzhuo Zhang and Xianghong Li and Tengxu Sun and Jiawei Jiang and Binhang Yuan and Sen Yang and Ji Liu and Ce Zhang},
      year={2021},
      eprint={2107.01499},
      archivePrefix={arXiv},
      primaryClass={cs.LG}
    }

    % Theory on System Relaxation Techniques
    @book{liu2020distributed,
      title={Distributed Learning Systems with First-Order Methods: An Introduction},
      author={Liu, J. and Zhang, C.},
      isbn={9781680837018},
      series={Foundations and trends in databases},
      url={https://books.google.com/books?id=vzQmzgEACAAJ},
      year={2020},
      publisher={now publishers}
    }

Contributors


Download files


Built Distributions


File details

Details for the file bagua_cuda113-0.8.3.dev189-cp39-cp39-manylinux_2_17_x86_64.manylinux2014_x86_64.whl.

File metadata

- Download URL: bagua_cuda113-0.8.3.dev189-cp39-cp39-manylinux_2_17_x86_64.manylinux2014_x86_64.whl

- Upload date:

- Size: 18.4 MB

- Tags: CPython 3.9, manylinux: glibc 2.17+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.0 CPython/3.9.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | c9136ebec4f907788b193196694bfee9f099a8fc8e6ec558fc726c24188af0b3 |
| MD5 | e8beaf318108197b39ba974b788ca872 |
| BLAKE2b-256 | c236db589b3592ea0ddb17d5b8ad4604a3711704f5482bbb2370948d2cc6d1e2 |

File details

Details for the file bagua_cuda113-0.8.3.dev189-cp38-cp38-manylinux_2_17_x86_64.manylinux2014_x86_64.whl.

File metadata

- Download URL: bagua_cuda113-0.8.3.dev189-cp38-cp38-manylinux_2_17_x86_64.manylinux2014_x86_64.whl

- Upload date:

- Size: 18.4 MB

- Tags: CPython 3.8, manylinux: glibc 2.17+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.0 CPython/3.9.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 6aa8eec476b9e56b1c936e085a9f5add80ecaa25d568f0cb8065116e8155500e |
| MD5 | f270a981d9873549c6f39d63215a02c9 |
| BLAKE2b-256 | 5f121227dcca43b0413d87d5032eef68969d6c52931687ea392e0bebf2ec0537 |

File details

Details for the file bagua_cuda113-0.8.3.dev189-cp37-cp37m-manylinux_2_17_x86_64.manylinux2014_x86_64.whl.

File metadata

- Download URL: bagua_cuda113-0.8.3.dev189-cp37-cp37m-manylinux_2_17_x86_64.manylinux2014_x86_64.whl

- Upload date:

- Size: 18.4 MB

- Tags: CPython 3.7m, manylinux: glibc 2.17+ x86-64

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.0 CPython/3.9.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | fad99e13aa789a865557a892ba00a9c3c559902464aea16a8443c63c0f7b183c |
| MD5 | 65a629c03edae2244bd27c3168bcd188 |
| BLAKE2b-256 | d16d20e73dc3a7dadbd12c0d4390a015755b5e612f6949c5f78d9f502659ca92 |