A lightweight library for generating synthetic instruction tuning datasets for your data without GPT.

Bonito

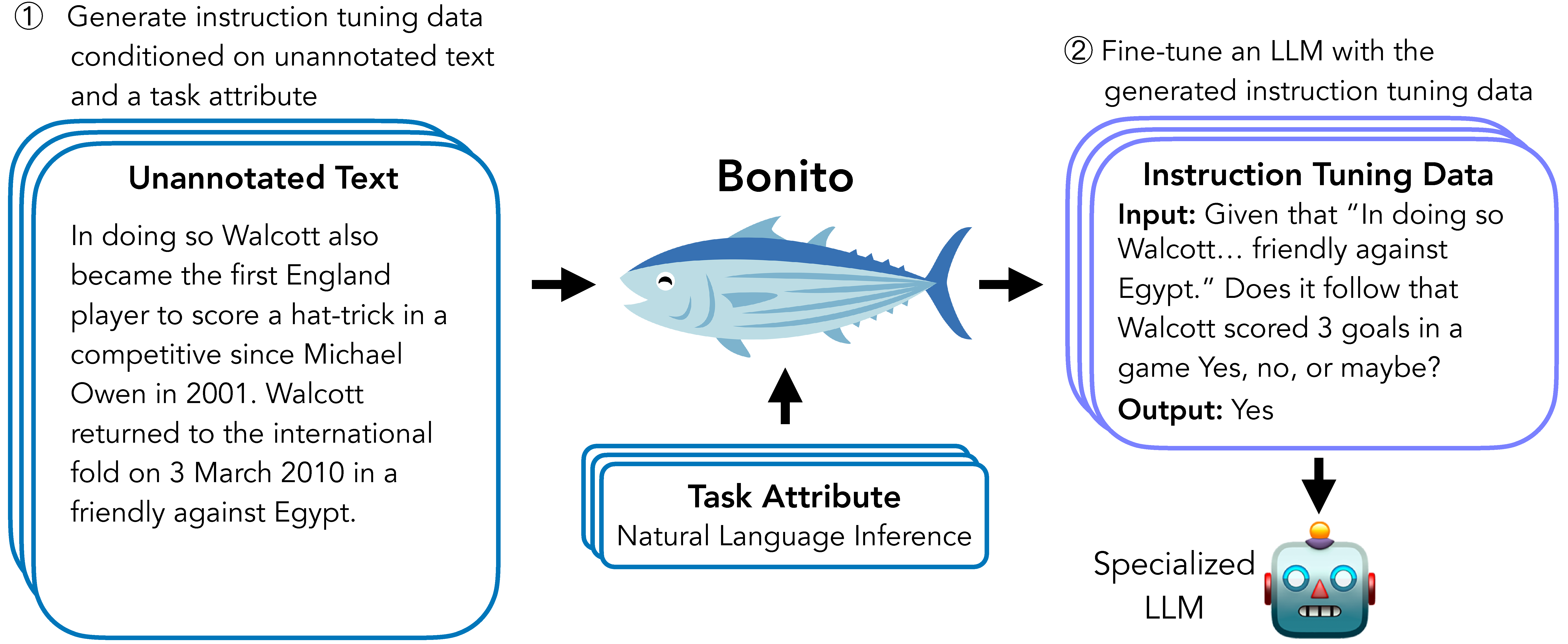

Bonito is an open-source model for conditional task generation: the task of converting unannotated text into task-specific training datasets for instruction tuning. This repo is a lightweight library for creating synthetic datasets with Bonito, built on top of the Hugging Face transformers and vllm libraries.

- Paper: Learning to Generate Instruction Tuning Datasets for Zero-Shot Task Adaptation

- Model: bonito-v1

- Demo: Bonito on Spaces

- Dataset: ctga-v1

- Code: To reproduce experiments in our paper, see nayak-aclfindings24-code.

News

- 🐠 February 2025: Uploaded bonito-llm to PyPI.

- 🐡 August 2024: Released new Bonito model with Meta Llama 3.1 as the base model.

- 🐟 June 2024: Bonito is accepted to ACL Findings 2024.

Installation

Create an environment and install the package using the following command:

pip3 install bonito-llm
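
To confirm that the install succeeded, a quick check like the following can help. This is a minimal sketch using only the Python standard library; the helper name `installed_version` is hypothetical and not part of Bonito.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def installed_version(distribution: str = "bonito-llm") -> Optional[str]:
    """Return the installed version of a distribution, or None if it is absent."""
    try:
        return version(distribution)
    except PackageNotFoundError:
        return None


if __name__ == "__main__":
    found = installed_version()
    if found is None:
        print("bonito-llm is not installed; run: pip3 install bonito-llm")
    else:
        print(f"bonito-llm {found} is installed")
```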

Basic Usage

To generate a synthetic instruction tuning dataset with Bonito, use the following code:

from bonito import Bonito
from vllm import SamplingParams
from datasets import load_dataset

# Initialize the Bonito model
bonito = Bonito("BatsResearch/bonito-v1")

# Load dataset with unannotated text
unannotated_text = load_dataset(
    "BatsResearch/bonito-experiment",
    "unannotated_contract_nli"
)["train"].select(range(10))

# Generate synthetic instruction tuning dataset
sampling_params = SamplingParams(max_tokens=256, top_p=0.95, temperature=0.5, n=1)
synthetic_dataset = bonito.generate_tasks(
    unannotated_text,
    context_col="input",
    task_type="nli",
    sampling_params=sampling_params
)
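
The call above produces a dataset for a single task type. If you want datasets for several task types from the same text, one option is a small wrapper that repeats the call. The sketch below is an illustration only: `generate_for_task_types` is a hypothetical helper, not part of the library, and it assumes nothing beyond the `generate_tasks` keyword arguments shown above.

```python
from typing import Any, Dict, Iterable


def generate_for_task_types(
    generator: Any,
    unannotated_text: Any,
    task_types: Iterable[str],
    sampling_params: Any,
    context_col: str = "input",
) -> Dict[str, Any]:
    """Call generator.generate_tasks once per task type and collect the results.

    `generator` is expected to be a Bonito instance (or any object exposing a
    compatible generate_tasks method). Results are keyed by task type.
    """
    results: Dict[str, Any] = {}
    for task_type in task_types:
        results[task_type] = generator.generate_tasks(
            unannotated_text,
            context_col=context_col,
            task_type=task_type,
            sampling_params=sampling_params,
        )
    return results
```

With the objects from the example above, `generate_for_task_types(bonito, unannotated_text, ["nli", "qa"], sampling_params)` would return a dictionary with one generated dataset per task type.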

Supported Task Types

Here we include the supported task types [full name (short form)]:

- extractive question answering (exqa)
- multiple-choice question answering (mcqa)
- question generation (qg)
- question answering without choices (qa)
- yes-no question answering (ynqa)
- coreference resolution (coref)
- paraphrase generation (paraphrase)
- paraphrase identification (paraphrase_id)
- sentence completion (sent_comp)
- sentiment (sentiment)
- summarization (summarization)
- text generation (text_gen)
- topic classification (topic_class)
- word sense disambiguation (wsd)
- textual entailment (te)
- natural language inference (nli)

You can use either the full name or the short form to specify the task_type in generate_tasks.
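
If you take task types from user input or a config file, it can be useful to validate them before loading the model. The following is a minimal sketch built from the list above; `TASK_TYPES` and `to_short_form` are hypothetical names for this example, not the library's own API, and the lowercasing and whitespace stripping are assumptions of this sketch rather than documented library behavior.

```python
# Full name -> short form, copied from the supported task types listed above.
TASK_TYPES = {
    "extractive question answering": "exqa",
    "multiple-choice question answering": "mcqa",
    "question generation": "qg",
    "question answering without choices": "qa",
    "yes-no question answering": "ynqa",
    "coreference resolution": "coref",
    "paraphrase generation": "paraphrase",
    "paraphrase identification": "paraphrase_id",
    "sentence completion": "sent_comp",
    "sentiment": "sentiment",
    "summarization": "summarization",
    "text generation": "text_gen",
    "topic classification": "topic_class",
    "word sense disambiguation": "wsd",
    "textual entailment": "te",
    "natural language inference": "nli",
}


def to_short_form(task_type: str) -> str:
    """Map a full name or short form to its short form, or raise ValueError."""
    key = task_type.strip().lower()
    if key in TASK_TYPES:
        return TASK_TYPES[key]
    if key in TASK_TYPES.values():
        return key
    raise ValueError(f"Unsupported task type: {task_type!r}")
```

For example, `to_short_form("natural language inference")` and `to_short_form("nli")` both return `"nli"`, while an unlisted name such as `"translation"` raises `ValueError`.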

Tutorial

We have created a tutorial here on using a quantized version of the model on a Google Colab T4 instance. The quantized version was graciously contributed by user alexandreteles. An additional tutorial here shows how to try out the Bonito model on an A100 GPU on Google Colab.

Citation

If you use Bonito in your research, please cite the following paper:

@inproceedings{bonito:aclfindings24,
  title = {Learning to Generate Instruction Tuning Datasets for Zero-Shot Task Adaptation},
  author = {Nayak, Nihal V. and Nan, Yiyang and Trost, Avi and Bach, Stephen H.},
  booktitle = {Findings of the Association for Computational Linguistics: ACL 2024},
  year = {2024}
}
