Communication with Spark controllers


Spark Service

The Spark service handles connectivity for the BrewPi Spark controller.

This includes USB/TCP communication with the controller, but also encoding, decoding, and broadcasting data.

Features

SparkConduit (communication.py)

Direct communication with the Spark is handled here, for both USB and TCP connections. Data is not decoded or interpreted, but passed on to the SparkCommander.

Controlbox Protocol (commands.py)

The Spark communicates using the Controlbox protocol. A set of commands is defined to manage blocks on the controller.

In the commands module, this protocol of bits and bytes is encapsulated by Command classes. They are capable of converting a Python dict to a hexadecimal byte string, and vice versa.

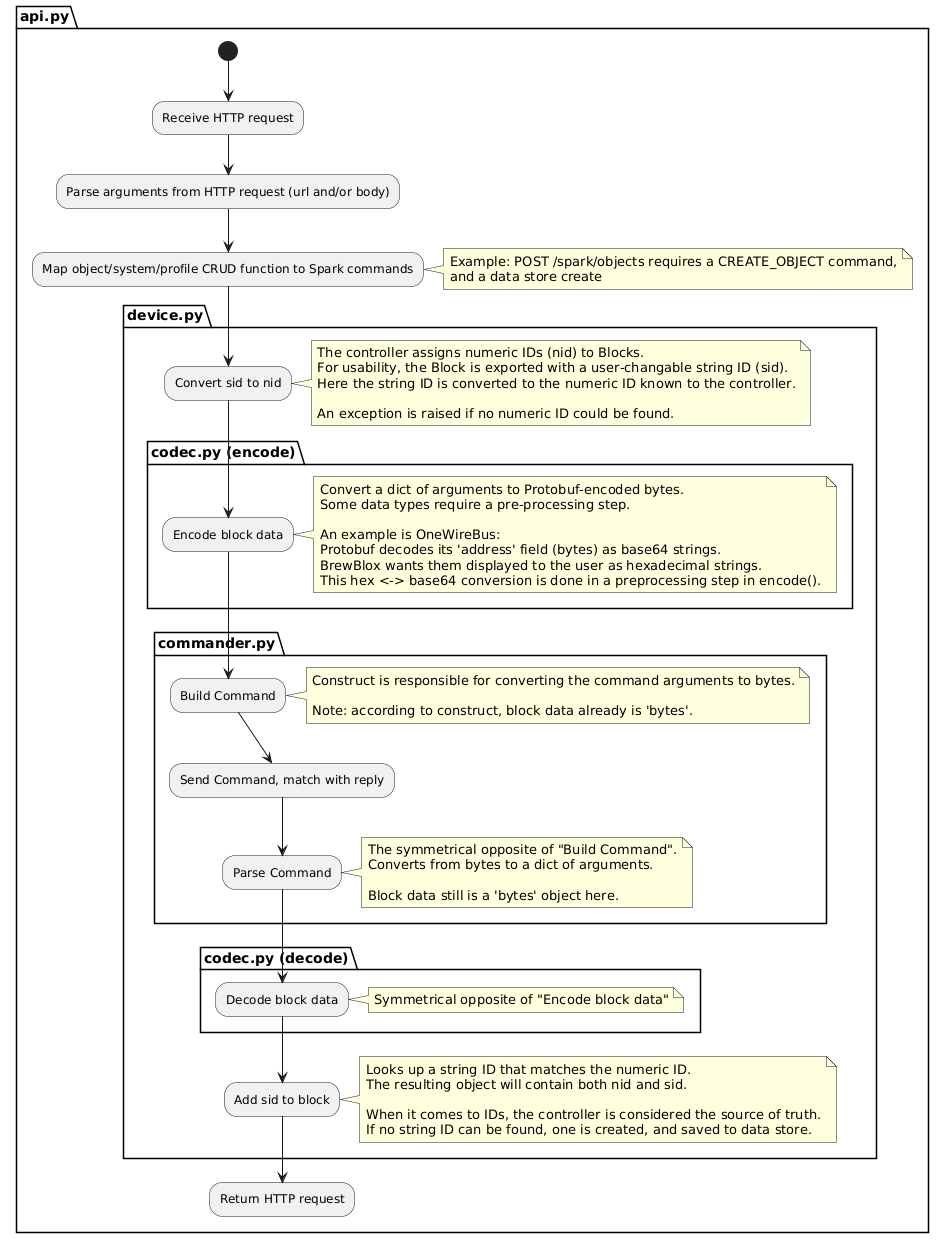
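As a rough illustration of the dict-to-hex conversion described above, the sketch below defines a single hypothetical command class. The opcode value, the field name `object_id`, and the byte layout are assumptions made for this example; they are not taken from the Controlbox specification or from commands.py.

```python
import struct
from binascii import hexlify, unhexlify

# Hypothetical opcode, chosen for illustration only.
READ_OBJECT_OPCODE = 1


class ReadObjectCommand:
    """Illustrative command: 1-byte opcode, then a 2-byte little-endian object ID."""

    _layout = struct.Struct('<BH')

    @classmethod
    def encode(cls, values: dict) -> str:
        # Python dict -> raw bytes -> hexadecimal string
        raw = cls._layout.pack(READ_OBJECT_OPCODE, values['object_id'])
        return hexlify(raw).decode('ascii')

    @classmethod
    def decode(cls, encoded: str) -> dict:
        # Hexadecimal string -> raw bytes -> Python dict
        opcode, object_id = cls._layout.unpack(unhexlify(encoded))
        if opcode != READ_OBJECT_OPCODE:
            raise ValueError(f'Unexpected opcode: {opcode}')
        return {'object_id': object_id}


encoded = ReadObjectCommand.encode({'object_id': 258})
decoded = ReadObjectCommand.decode(encoded)
```

Keeping `encode` and `decode` on the same class means the byte layout is declared once and used in both directions.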

SparkCommander (commander.py)

Serial communication is asynchronous: requests and responses are not linked at the transport layer.

SparkCommander is responsible for building and sending a command, and then matching it with a subsequently received response.
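One way to match responses to requests over an unlinked transport is to keep a queue of pending futures per request. The sketch below assumes that each incoming message echoes the request it answers, separated from the response by `|`. That message format, and the names `ResponseMatcher`, `expect`, and `on_data`, are hypothetical and only serve the example.

```python
import asyncio
from collections import defaultdict, deque


class ResponseMatcher:
    """Pairs incoming messages with the requests that are waiting for them."""

    def __init__(self) -> None:
        # Encoded request -> futures awaiting a response, oldest first
        self._pending = defaultdict(deque)

    def expect(self, request: str) -> asyncio.Future:
        future = asyncio.get_running_loop().create_future()
        self._pending[request].append(future)
        return future

    def on_data(self, message: str) -> bool:
        # Assumed format: '<echoed request>|<response>'
        request, _, response = message.partition('|')
        queue = self._pending.get(request)
        if not queue:
            return False  # nobody is waiting for this message
        queue.popleft().set_result(response)
        if not queue:
            del self._pending[request]
        return True


async def demo():
    matcher = ResponseMatcher()
    first = matcher.expect('0102')
    second = matcher.expect('0a0b')
    # Responses arrive in a different order than the requests were sent
    matcher.on_data('0a0b|ff')
    matcher.on_data('0102|00')
    return await first, await second


results = asyncio.run(demo())
```

Each caller awaits its own future, so the order in which responses arrive does not matter.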

SimulationCommander (commander_sim.py)

A simulation version is available for situations where using an actual Spark is inconvenient. It serves as a drop-in replacement for the real commander: it handles commands and returns sensible values. Commands are still encoded and decoded, so behavior closely matches the real situation.

Datastore (datastore.py)

The service must keep track of object metadata not directly persisted by the controller. This includes user-defined object names and descriptions.

The service can interact with a BrewPi Spark that has pre-existing blocks, but without this metadata it cannot display those objects with human-meaningful names.

Object metadata is persisted to files. This does not include object settings - these are the responsibility of the Spark itself.
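A minimal sketch of file-backed metadata, assuming a single JSON file that maps controller object IDs to a name and description. The class `MetadataStore`, its methods, and the file layout are hypothetical; the real datastore.py may be organized differently.

```python
import json
import tempfile
from pathlib import Path
from typing import Optional


class MetadataStore:
    """Keeps user-defined names and descriptions in a JSON file."""

    def __init__(self, path: Path) -> None:
        self._path = Path(path)
        # Load earlier metadata if the file exists, otherwise start empty
        self._items = json.loads(self._path.read_text()) if self._path.exists() else {}

    def set_name(self, controller_id: int, name: str, description: str = '') -> None:
        # JSON object keys are strings, so the numeric ID is stored as str
        self._items[str(controller_id)] = {'name': name, 'description': description}
        self._path.write_text(json.dumps(self._items, indent=2))

    def name_for(self, controller_id: int) -> Optional[str]:
        entry = self._items.get(str(controller_id))
        return entry['name'] if entry else None


with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / 'metadata.json'
    MetadataStore(path).set_name(7, 'fridge-sensor')
    reloaded = MetadataStore(path)  # simulates a service restart
    known = reloaded.name_for(7)
    unknown = reloaded.name_for(8)  # block exists on the Spark, but has no metadata
```

Only names and descriptions are written here. Object settings never pass through this store, in line with the split of responsibilities described above.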

Codec (codec.py)

While the controller <-> service communication uses the Controlbox protocol, individual objects are encoded separately, using Google's Protocol Buffers.

The codec is responsible for converting JSON-serializable dicts to byte arrays, and vice versa. A specific transcoder is defined for each object.

For this reason, the object payload in Controlbox consists of two parts: a numerical object_type ID, and the object_data bytes.
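The two-part payload can be sketched as a lookup from type to transcoder. In this example `struct` stands in for Protocol Buffers to keep the code self-contained, and the type name `TempSensor`, the type ID 6, and the field `value` are made up for illustration.

```python
import struct
from typing import Tuple


class TempSensorTranscoder:
    """Hypothetical transcoder; struct is a stand-in for a Protobuf message."""

    type_name = 'TempSensor'
    type_id = 6  # assumed numeric ID
    _layout = struct.Struct('<h')  # one signed 16-bit little-endian value

    @classmethod
    def encode(cls, data: dict) -> bytes:
        return cls._layout.pack(data['value'])

    @classmethod
    def decode(cls, raw: bytes) -> dict:
        (value,) = cls._layout.unpack(raw)
        return {'value': value}


_TRANSCODERS = (TempSensorTranscoder,)
_BY_NAME = {t.type_name: t for t in _TRANSCODERS}
_BY_ID = {t.type_id: t for t in _TRANSCODERS}


def encode(object_type: str, data: dict) -> Tuple[int, bytes]:
    # Returns the two payload parts: (object_type ID, object_data bytes)
    transcoder = _BY_NAME[object_type]
    return transcoder.type_id, transcoder.encode(data)


def decode(type_id: int, object_data: bytes) -> Tuple[str, dict]:
    # The type ID selects which transcoder interprets the bytes
    transcoder = _BY_ID[type_id]
    return transcoder.type_name, transcoder.decode(object_data)


payload = encode('TempSensor', {'value': 2050})
restored = decode(*payload)
```

The object_data bytes cannot be interpreted on their own, which is why the type ID travels alongside them.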

SparkController (device.py)

SparkController combines the functionality of commands, commander, datastore, and codec to allow interaction with the Spark using Pythonic functions.

Every command is translated in both directions: IDs are converted using the datastore, object data is passed through the codec, and the result is wrapped in the correct command before it is sent to SparkCommander.

Broadcaster (broadcaster.py)

The Spark service is not responsible for retaining any object data. Any requests are encoded and forwarded to the Spark.

Because every request requires a round trip to the controller, this can become a bottleneck. To reduce its impact, and to make historic data available, Broadcaster reads all objects every few seconds and broadcasts their values to the eventbus.

Here, the data will likely be picked up by the History Service.
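The read-and-publish cycle can be sketched as a small asyncio loop. The function `broadcast_loop` and its parameters are hypothetical: `read_all` stands in for reading all objects from the Spark, and `publish` stands in for the eventbus. The `cycles` argument only exists so the example terminates.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List


async def broadcast_loop(
    read_all: Callable[[], Awaitable[dict]],
    publish: Callable[[dict], None],
    interval_s: float,
    cycles: int,
) -> None:
    for _ in range(cycles):
        # Read current values from the controller, then hand them to the eventbus
        publish(await read_all())
        await asyncio.sleep(interval_s)


async def demo() -> List[Dict[str, float]]:
    published: List[Dict[str, float]] = []
    readings = iter([20.0, 20.5, 21.0])

    async def fake_read_all() -> dict:
        # Stand-in for a controller read
        return {'sensor-1': next(readings)}

    await broadcast_loop(fake_read_all, published.append, interval_s=0.01, cycles=3)
    return published


history = asyncio.run(demo())
```

Consumers that only need recent values can subscribe to the published data instead of sending their own requests to the controller.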

Seeder (seeder.py)

Some actions are required when connecting to a (new) Spark controller. The Seeder feature waits for a connection to be made, and then performs these one-time tasks.

Examples are:

- Setting the Spark system clock

- Reading controller-specific data from the remote datastore
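The wait-then-run behavior could look roughly like the sketch below, assuming the connection state is exposed as an `asyncio.Event`. The function `run_seeder` and the two task coroutines are hypothetical placeholders for the examples listed above.

```python
import asyncio


async def run_seeder(connected: asyncio.Event, tasks):
    # Block until a connection is reported, then run each task once, in order
    await connected.wait()
    return [await task() for task in tasks]


async def demo():
    connected = asyncio.Event()
    log = []

    async def set_clock():
        log.append('clock set')

    async def load_names():
        log.append('names loaded')

    seeder = asyncio.ensure_future(run_seeder(connected, [set_clock, load_names]))
    await asyncio.sleep(0)  # let the seeder start and reach its wait
    idle_before_connect = not log
    connected.set()  # simulates the controller connecting
    await seeder
    return idle_before_connect, log


seed_result = asyncio.run(demo())
```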

REST API

ObjectApi (object_api.py)

Offers full CRUD (Create, Read, Update, Delete) functionality for Spark objects.

SystemApi (system_api.py)

System objects are distinct from normal objects in that they can't be created or deleted by the user.

RemoteApi (remote_api.py)

Occasionally, it is desirable for multiple Sparks to work in concert. One might be connected to a temperature sensor, while the other controls a heater.

Remote blocks allow synchronization between master and slave blocks.

In the sensor/heater example, the Spark with the heater would be configured to have a dummy sensor object linked to the heater.

Instead of directly reading a sensor, this dummy object is updated by the service whenever it receives an update from the master object (the real sensor).
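The forwarding step in the sensor/heater example can be sketched as a table of master-to-slave links. `RemoteLinks`, its methods, and the object IDs are hypothetical, and a plain dict stands in for the Spark that controls the heater.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, Dict, List


class RemoteLinks:
    """Forwards updates from a master object to its linked slave objects."""

    def __init__(self, write_object: Callable[[str, dict], None]) -> None:
        self._write_object = write_object
        self._slaves: DefaultDict[str, List[str]] = defaultdict(list)

    def link(self, master_id: str, slave_id: str) -> None:
        self._slaves[master_id].append(slave_id)

    def on_master_update(self, master_id: str, values: dict) -> int:
        # Copy the master's values into every linked slave object
        slaves = self._slaves.get(master_id, [])
        for slave_id in slaves:
            self._write_object(slave_id, dict(values))
        return len(slaves)


# A dict stands in for the Spark that controls the heater
heater_spark: Dict[str, dict] = {}
links = RemoteLinks(heater_spark.__setitem__)
links.link('real-sensor', 'dummy-sensor')

forwarded = links.on_master_update('real-sensor', {'value': 19.5})
ignored = links.on_master_update('unlinked-sensor', {'value': 1.0})
```

The heater only ever reads its local dummy sensor, so its control logic does not need to know that the value originated on another Spark.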

AliasApi (alias_api.py)

All objects can have user-defined names. The AliasApi allows users to set or change those names.


File details

Details for the file brewblox-devcon-spark-0.5.3.dev178.tar.gz.

File metadata

- Download URL: brewblox-devcon-spark-0.5.3.dev178.tar.gz

- Upload date:

- Size: 1.1 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/1.13.0 pkginfo/1.5.0.1 requests/2.22.0 setuptools/40.6.2 requests-toolbelt/0.9.1 tqdm/4.32.2 CPython/3.7.2

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 92b5db6a67bbbd727e908221fa04eba67b47b01cca0414a6dbec2592c81e0358 |
| MD5 | 64f122403d8c61b3f53f7474e30789e3 |
| BLAKE2b-256 | d5b58b4de70e3ee343ec7434e3b99cba711f1f26b35ce1b3de924595e09fc8cd |