A high-performance cross-framework Parameter Server for Deep Learning

Project description

BytePS

BytePS is a high performance and general distributed training framework. It supports TensorFlow, Keras, PyTorch, and MXNet, and can run on either TCP or RDMA network.

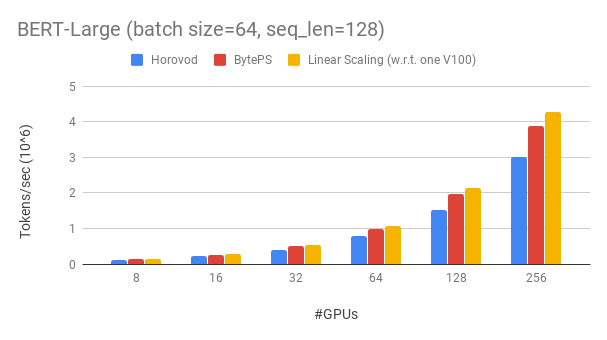

BytePS outperforms existing open-sourced distributed training frameworks by a large margin. For example, on BERT-large training, BytePS can achieve ~90% scaling efficiency with 256 GPUs (see below), which is much higher than Horovod+NCCL. In certain scenarios, BytePS can double the training speed compared with Horovod+NCCL.

News

- BytePS paper has been accepted to OSDI'20. The code to reproduce the end-to-end evaluation is available here.

- Support gradient compression.

- v0.2.4

- Fix compatibility issue with tf2 + standalone keras

- Add support for tensorflow.keras

- Improve robustness of broadcast

- v0.2.3

- Add DistributedDataParallel module for PyTorch

- Fix the problem of different CPU tensor using the same name

- Add skip_synchronize api for PyTorch

- Add the option for lazy/non-lazy init

- v0.2.0

- Largely improve RDMA performance by enforcing page aligned memory.

- Add IPC support for RDMA. Now support colocating servers and workers without sacrificing much performance.

- Fix a hanging bug in BytePS server.

- Fix RDMA-related segmentation fault problem during fork() (e.g., used by PyTorch data loader).

- New feature: Enable mixing use of colocate and non-colocate servers, along with a smart tensor allocation strategy.

- New feature: Add

bpslaunchas the command to launch tasks. - Add support for pip install:

pip3 install byteps

Performance

We show our experiment on BERT-large training, which is based on GluonNLP toolkit. The model uses mixed precision.

We use Tesla V100 32GB GPUs and set batch size equal to 64 per GPU. Each machine has 8 V100 GPUs (32GB memory) with NVLink-enabled. Machines are inter-connected with 100 Gbps RDMA network. This is the same hardware setup you can get on AWS.

BytePS achieves ~90% scaling efficiency for BERT-large with 256 GPUs. The code is available here. As a comparison, Horovod+NCCL has only ~70% scaling efficiency even after expert parameter tunning.

With slower network, BytePS offers even more performance advantages -- up to 2x of Horovod+NCCL. You can find more evaluation results at performance.md.

Goodbye MPI, Hello Cloud

How can BytePS outperform Horovod by so much? One of the main reasons is that BytePS is designed for cloud and shared clusters, and throws away MPI.

MPI was born in the HPC world and is good for a cluster built with homogeneous hardware and for running a single job. However, cloud (or in-house shared clusters) is different.

This leads us to rethink the best communication strategy, as explained in here. In short, BytePS only uses NCCL inside a machine, while re-implements the inter-machine communication.

BytePS also incorporates many acceleration techniques such as hierarchical strategy, pipelining, tensor partitioning, NUMA-aware local communication, priority-based scheduling, etc.

Quick Start

We provide a step-by-step tutorial for you to run benchmark training tasks. The simplest way to start is to use our docker images. Refer to Documentations for how to launch distributed jobs and more detailed configurations. After you can start BytePS, read best practice to get the best performance.

Below, we explain how to install BytePS by yourself. There are two options.

Install by pip

pip3 install byteps

Build from source code

You can try out the latest features by directly installing from master branch:

git clone --recursive https://github.com/bytedance/byteps

cd byteps

python3 setup.py install

Notes for above two options:

- BytePS assumes that you have already installed one or more of the following frameworks: TensorFlow / PyTorch / MXNet.

- BytePS depends on CUDA and NCCL. You should specify the NCCL path with

export BYTEPS_NCCL_HOME=/path/to/nccl. By default it points to/usr/local/nccl. - The installation requires gcc>=4.9. If you are working on CentOS/Redhat and have gcc<4.9, you can try

yum install devtoolset-7before everything else. In general, we recommend using gcc 4.9 for best compatibility (how to pin gcc). - RDMA support: During setup, the script will automatically detect the RDMA header file. If you want to use RDMA, make sure your RDMA environment has been properly installed and tested before install (install on Ubuntu-18.04).

Examples

Basic examples are provided under the example folder.

To reproduce the end-to-end evaluation in our OSDI'20 paper, find the code at this repo.

Use BytePS in Your Code

Though being totally different at its core, BytePS is highly compatible with Horovod interfaces (Thank you, Horovod community!). We chose Horovod interfaces in order to minimize your efforts for testing BytePS.

If your tasks only rely on Horovod's allreduce and broadcast, you should be able to switch to BytePS in 1 minute. Simply replace import horovod.tensorflow as hvd by import byteps.tensorflow as bps, and then replace all hvd in your code by bps. If your code invokes hvd.allreduce directly, you should also replace it by bps.push_pull.

Many of our examples were copied from Horovod and modified in this way. For instance, compare the MNIST example for BytePS and Horovod.

BytePS also supports other native APIs, e.g., PyTorch Distributed Data Parallel and TensorFlow Mirrored Strategy. See DistributedDataParallel.md and MirroredStrategy.md for usage.

Limitations and Future Plans

BytePS does not support pure CPU training for now. One reason is that the cheap PS assumption of BytePS do not hold for CPU training. Consequently, you need CUDA and NCCL to build and run BytePS.

We would like to have below features, and there is no fundamental difficulty to implement them in BytePS architecture. However, they are not implemented yet:

- Sparse model training

- Fault-tolerance

- Straggler-mitigation

Publications

-

[OSDI'20] "A Unified Architecture for Accelerating Distributed DNN Training in Heterogeneous GPU/CPU Clusters". Yimin Jiang, Yibo Zhu, Chang Lan, Bairen Yi, Yong Cui, Chuanxiong Guo.

-

[SOSP'19] "A Generic Communication Scheduler for Distributed DNN Training Acceleration". Yanghua Peng, Yibo Zhu, Yangrui Chen, Yixin Bao, Bairen Yi, Chang Lan, Chuan Wu, Chuanxiong Guo. (Code is at bytescheduler branch)

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

File details

Details for the file byteps-0.2.5.post15.tar.gz.

File metadata

- Download URL: byteps-0.2.5.post15.tar.gz

- Upload date:

- Size: 4.6 MB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/3.1.1 pkginfo/1.5.0.1 requests/2.24.0 setuptools/41.2.0 requests-toolbelt/0.9.1 tqdm/4.46.0 CPython/3.8.2

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

9d6a3c5be4c4b5a172739744bb32725c433f9a9f63794e1885e7376ff4eea99f

|

|

| MD5 |

2be57409a12e9de88c5fea1360f2f188

|

|

| BLAKE2b-256 |

131a215b1dde04b99cf13ded3e87eb4abcf521fa24cca184a13ef3e6ee324e8c

|