Compose Farm - run docker compose commands across multiple hosts


Compose Farm

A minimal CLI tool to run Docker Compose commands across multiple hosts via SSH.

[!NOTE] Run docker compose commands across multiple hosts via SSH. One YAML maps services to hosts. Change the mapping, run up, and it auto-migrates. No Kubernetes, no Swarm, no magic.

- Why Compose Farm?

- How It Works

- Requirements

- Limitations & Best Practices

- Installation

- Configuration

- Usage

- Traefik Multihost Ingress (File Provider)

- Comparison with Alternatives

- License

Why Compose Farm?

I used to run 100+ Docker Compose stacks on a single machine that kept running out of memory. I needed a way to distribute services across multiple machines without the complexity of:

- Kubernetes: Overkill for my use case. I don't need pods, services, ingress controllers, or YAML manifests 10x the size of my compose files.

- Docker Swarm: Effectively in maintenance mode—no longer being invested in by Docker.

Both require changes to your compose files. Compose Farm requires zero changes—your existing docker-compose.yml files work as-is.

I also wanted a declarative setup—one config file that defines where everything runs. Change the config, run up, and services migrate automatically. See Comparison with Alternatives for how this compares to other approaches.

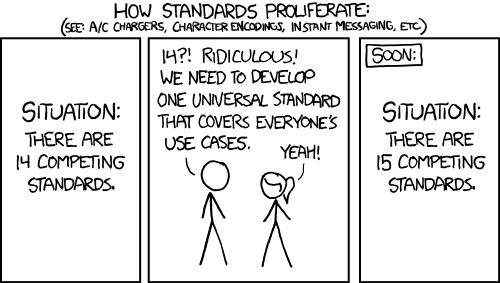

Before you say it—no, this is not a new standard. I changed nothing about my existing setup. When I added more hosts, I just mounted my drives at the same paths, and everything worked. You can do all of this manually today—SSH into a host and run docker compose up.

Compose Farm just automates what you'd do by hand:

- Runs docker compose commands over SSH

- Tracks which service runs on which host

- Auto-migrates services when you change the host assignment

- Generates Traefik file-provider config for cross-host routing

It's a convenience wrapper, not a new paradigm.

How It Works

- You run cf up plex

- Compose Farm looks up which host runs plex (e.g., server-1)

- It SSHs to server-1 (or runs locally if localhost)

- It executes docker compose -f /opt/compose/plex/docker-compose.yml up -d

- Output is streamed back with [plex] prefix

That's it. No orchestration, no service discovery, no magic.
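The lookup-and-dispatch steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Compose Farm's actual implementation: HOSTS, SERVICES, build_command, and prefix_output are hypothetical names, and the compose file is assumed to live at /opt/compose/{service}/docker-compose.yml as in the example.

```python
import shlex

# Hypothetical in-memory view of the config; names are illustrative only.
COMPOSE_DIR = "/opt/compose"
HOSTS = {
    "server-1": {"address": "192.168.1.10", "user": "docker"},
    "local": {"address": "localhost"},
}
SERVICES = {"plex": "server-1", "radarr": "local"}


def build_command(service: str, action: list[str]) -> list[str]:
    """Return the argv that would run `docker compose <action>` for a service."""
    host = HOSTS[SERVICES[service]]
    compose_file = f"{COMPOSE_DIR}/{service}/docker-compose.yml"
    compose = ["docker", "compose", "-f", compose_file, *action]
    if host["address"] == "localhost":
        return compose  # run locally, no SSH involved
    target = host["address"]
    if "user" in host:
        target = f'{host["user"]}@{target}'
    # ssh receives the remote command as one shell-quoted string.
    return ["ssh", target, shlex.join(compose)]


def prefix_output(service: str, line: str) -> str:
    """Tag a line of streamed output with the service name."""
    return f"[{service}] {line}"
```

Passing the resulting list to subprocess.run would execute the command; a remote service goes through ssh, while a service assigned to localhost runs directly.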

Requirements

- Python 3.11+ (we recommend uv for installation)

- SSH key-based authentication to your hosts (uses ssh-agent)

- Docker and Docker Compose installed on all target hosts

- Shared storage: All compose files must be accessible at the same path on all hosts

- Docker networks: External networks must exist on all hosts (use cf init-network to create)

Compose Farm assumes your compose files are accessible at the same path on all hosts. This is typically achieved via:

- NFS mount (e.g., /opt/compose mounted from a NAS)

- Synced folders (e.g., Syncthing, rsync)

- Shared filesystem (e.g., GlusterFS, Ceph)

# Example: NFS mount on all Docker hosts

nas:/volume1/compose → /opt/compose (on server-1)

nas:/volume1/compose → /opt/compose (on server-2)

nas:/volume1/compose → /opt/compose (on server-3)

Compose Farm simply runs docker compose -f /opt/compose/{service}/docker-compose.yml on the appropriate host—it doesn't copy or sync files.

Limitations & Best Practices

Compose Farm moves containers between hosts but does not provide cross-host networking. Docker's internal DNS and networks don't span hosts.

What breaks when you move a service

- Docker DNS - http://redis:6379 won't resolve from another host

- Docker networks - Containers can't reach each other via network names

- Environment variables - DATABASE_URL=postgres://db:5432 stops working

Best practices

- Keep dependent services together - If an app needs a database, redis, or worker, keep them in the same compose file on the same host

- Only migrate standalone services - Services that don't talk to other containers (or only talk to external APIs) are safe to move

- Expose ports for cross-host communication - If services must communicate across hosts, publish ports and use IP addresses instead of container names:

# Instead of: DATABASE_URL=postgres://db:5432
# Use: DATABASE_URL=postgres://192.168.1.66:5432

This includes Traefik routing: containers need published ports for the file-provider to reach them.
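As a small illustration of the last practice, the helper below swaps a container name in a connection URL for a host IP while keeping the port, credentials, and path. It is a hypothetical helper (use_host_address is not part of Compose Farm) built only on the standard library.

```python
from urllib.parse import urlsplit, urlunsplit


def use_host_address(url: str, host_ip: str) -> str:
    """Replace the hostname in a URL with a routable host IP."""
    parts = urlsplit(url)
    netloc = host_ip if parts.port is None else f"{host_ip}:{parts.port}"
    if parts.username:
        credentials = parts.username
        if parts.password:
            credentials += f":{parts.password}"
        netloc = f"{credentials}@{netloc}"
    return urlunsplit(parts._replace(netloc=netloc))


print(use_host_address("postgres://db:5432", "192.168.1.66"))
```

The database container still has to publish port 5432 on its host for the rewritten URL to be reachable.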

What Compose Farm doesn't do

- No overlay networking (use Docker Swarm or Kubernetes for that)

- No service discovery across hosts

- No automatic dependency tracking between compose files

If you need containers on different hosts to communicate seamlessly, you need Docker Swarm, Kubernetes, or a service mesh—which adds the complexity Compose Farm is designed to avoid.

Installation

uv tool install compose-farm

# or

pip install compose-farm

🐳 Docker

docker run --rm \
  -v $SSH_AUTH_SOCK:/ssh-agent -e SSH_AUTH_SOCK=/ssh-agent \
  -v ./compose-farm.yaml:/root/.config/compose-farm/compose-farm.yaml:ro \
  ghcr.io/basnijholt/compose-farm up --all

Or create an alias:

alias cf='docker run --rm -v $SSH_AUTH_SOCK:/ssh-agent -e SSH_AUTH_SOCK=/ssh-agent -v ./compose-farm.yaml:/root/.config/compose-farm/compose-farm.yaml:ro ghcr.io/basnijholt/compose-farm'

Configuration

Create ~/.config/compose-farm/compose-farm.yaml (or ./compose-farm.yaml in your working directory):

compose_dir: /opt/compose  # Must be the same path on all hosts

hosts:
  server-1:
    address: 192.168.1.10
    user: docker
  server-2:
    address: 192.168.1.11
    # user defaults to current user
  local: localhost  # Run locally without SSH

services:
  plex: server-1
  jellyfin: server-2
  sonarr: server-1
  radarr: local  # Runs on the machine where you invoke compose-farm

  # Multi-host services (run on multiple/all hosts)
  autokuma: all  # Runs on ALL configured hosts
  dozzle: [server-1, server-2]  # Explicit list of hosts

Compose files are expected at {compose_dir}/{service}/compose.yaml (also supports compose.yml, docker-compose.yml, docker-compose.yaml).
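A minimal sketch of that lookup follows. The function name find_compose_file is hypothetical, and the search order is an assumption taken from the order in which the filenames are listed above; the real tool may prioritize them differently.

```python
from __future__ import annotations

from pathlib import Path

# Candidate filenames, tried in the order the text lists them (assumed order).
CANDIDATES = (
    "compose.yaml",
    "compose.yml",
    "docker-compose.yml",
    "docker-compose.yaml",
)


def find_compose_file(compose_dir: str, service: str) -> Path | None:
    """Return the first existing compose file for a service, or None."""
    service_dir = Path(compose_dir) / service
    for name in CANDIDATES:
        candidate = service_dir / name
        if candidate.is_file():
            return candidate
    return None
```

For example, find_compose_file("/opt/compose", "plex") would return /opt/compose/plex/docker-compose.yml if that is the only compose file in the plex directory.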

Multi-Host Services

Some services need to run on every host. This is typically required for tools that access host-local resources like the Docker socket (/var/run/docker.sock), which cannot be accessed remotely without security risks.

Common use cases:

- AutoKuma - auto-creates Uptime Kuma monitors from container labels (needs local Docker socket)

- Dozzle - real-time log viewer (needs local Docker socket)

- Promtail/Alloy - log shipping agents (needs local Docker socket and log files)

- node-exporter - Prometheus host metrics (needs access to host /proc, /sys)

This is the same pattern as Docker Swarm's deploy.mode: global.

Use the all keyword or an explicit list:

services:
  # Run on all configured hosts
  autokuma: all
  dozzle: all

  # Run on specific hosts
  node-exporter: [server-1, server-2, server-3]

When you run cf up autokuma, it starts the service on all hosts in parallel. Multi-host services:

- Are excluded from migration logic (they always run everywhere)

- Show output with [service@host] prefix for each host

- Track all running hosts in state
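The three forms a service assignment can take (a single host, the all keyword, or an explicit list) can be normalized as in the sketch below. The helper names are hypothetical and the logic is an assumption about behavior described in this section, not the tool's actual code.

```python
from __future__ import annotations


def is_multi_host(assignment: str | list[str]) -> bool:
    """True for `all` or an explicit list of hosts."""
    return assignment == "all" or isinstance(assignment, list)


def resolve_hosts(assignment: str | list[str], configured: list[str]) -> list[str]:
    """Expand a service assignment into the list of hosts it runs on."""
    if assignment == "all":
        return list(configured)
    if isinstance(assignment, list):
        return list(assignment)
    return [assignment]


def output_prefix(service: str, host: str, multi_host: bool) -> str:
    """Multi-host services include the host in their log prefix."""
    return f"[{service}@{host}]" if multi_host else f"[{service}]"
```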

Usage

The CLI is available as both compose-farm and the shorter cf alias.

| Command | Description |
|---|---|
| cf up <svc> | Start service (auto-migrates if host changed) |
| cf down <svc> | Stop service |
| cf restart <svc> | down + up |
| cf update <svc> | pull + down + up |
| cf pull <svc> | Pull latest images |
| cf logs -f <svc> | Follow logs |
| cf ps | Show status of all services |
| cf sync | Discover running services + capture image digests |
| cf check | Validate config, mounts, networks |
| cf init-network | Create Docker network on hosts |
| cf traefik-file | Generate Traefik file-provider config |

All commands support --all to operate on all services.

# Start services (auto-migrates if host changed in config)

cf up plex jellyfin

cf up --all

cf up --migrate # only services needing migration (state ≠ config)

# Stop services

cf down plex

# Pull latest images

cf pull --all

# Restart (down + up)

cf restart plex

# Update (pull + down + up) - the end-to-end update command

cf update --all

# Sync state with reality (discovers running services + captures image digests)

cf sync # updates state.yaml and dockerfarm-log.toml

cf sync --dry-run # preview without writing

# Validate config, traefik labels, mounts, and networks

cf check # full validation (includes SSH checks)

cf check --local # fast validation (skip SSH)

cf check jellyfin # check service + show which hosts can run it

# Create Docker network on new hosts (before migrating services)

cf init-network nuc hp # create mynetwork on specific hosts

cf init-network # create on all hosts

# View logs

cf logs plex

cf logs -f plex # follow

# Show status

cf ps

Auto-Migration

When you change a service's host assignment in config and run up, Compose Farm automatically:

- Checks that required mounts and networks exist on the new host (aborts if missing)

- Runs down on the old host

- Runs up -d on the new host

- Updates state tracking

Use cf up --migrate (or -m) to automatically find and migrate all services where the current state differs from config—no need to list them manually.

# Before: plex runs on server-1
services:
  plex: server-1

# After: change to server-2, then run `cf up plex`
services:
  plex: server-2  # Compose Farm will migrate automatically
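The comparison behind cf up --migrate (state differs from config) can be sketched as follows. This is a simplified model under stated assumptions: state and config are plain dicts mapping service names to hosts, and the function names are hypothetical rather than taken from Compose Farm.

```python
from __future__ import annotations


def services_needing_migration(
    state: dict[str, str | list[str]],
    config: dict[str, str | list[str]],
) -> dict[str, tuple[str, str]]:
    """Map each service to (old_host, new_host) where state differs from config."""
    pending: dict[str, tuple[str, str]] = {}
    for service, wanted in config.items():
        # Multi-host services ("all" or a list) are excluded from migration.
        if not isinstance(wanted, str) or wanted == "all":
            continue
        current = state.get(service)
        if isinstance(current, str) and current != wanted:
            pending[service] = (current, wanted)
    return pending


def migration_steps(service: str, old_host: str, new_host: str) -> list[str]:
    """The order of operations described above, as human-readable steps."""
    return [
        f"{new_host}: check mounts and networks for {service} (abort if missing)",
        f"{old_host}: docker compose down",
        f"{new_host}: docker compose up -d",
        f"state: record {service} on {new_host}",
    ]
```

Checking the new host before stopping anything on the old one means a missing mount or network aborts the migration while the service is still running.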

Traefik Multihost Ingress (File Provider)

If you run a single Traefik instance on one "front‑door" host and want it to route to Compose Farm services on other hosts, Compose Farm can generate a Traefik file‑provider fragment from your existing compose labels.

How it works

- Your docker-compose.yml remains the source of truth. Put normal traefik.* labels on the container you want exposed.

- Labels and port specs may use ${VAR} / ${VAR:-default}; Compose Farm resolves these using the stack's .env file and your current environment, just like Docker Compose.

- Publish a host port for that container (via ports:). The generator prefers host‑published ports so Traefik can reach the service across hosts; if none are found, it warns and you'd need L3 reachability to container IPs.

- If a router label doesn't specify traefik.http.routers.<name>.service and there's only one Traefik service defined on that container, Compose Farm wires the router to it.

- compose-farm.yaml stays unchanged: just hosts and services: service → host.
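The ${VAR} / ${VAR:-default} substitution mentioned above can be approximated with a short regular-expression pass. This is a sketch covering only those two forms; interpolate is a hypothetical name, and merging the .env values under the shell environment follows Docker Compose's documented precedence, which Compose Farm is assumed to mirror.

```python
import os
import re

_VAR = re.compile(r"\$\{(?P<name>[A-Za-z_][A-Za-z0-9_]*)(?::-(?P<default>[^}]*))?\}")


def interpolate(text: str, env: dict[str, str]) -> str:
    """Resolve ${VAR} and ${VAR:-default} references in a label or port spec."""

    def replace(match: re.Match) -> str:
        value = env.get(match["name"])
        if value:  # set and non-empty
            return value
        # ${VAR:-default} falls back to the default; a bare ${VAR} becomes empty.
        return match["default"] if match["default"] is not None else ""

    return _VAR.sub(replace, text)


def merged_env(dotenv: dict[str, str]) -> dict[str, str]:
    """Shell environment values override those from the stack's .env file."""
    return {**dotenv, **os.environ}
```

For instance, interpolate("Host(`${SUB:-plex}.${DOMAIN}`)", {"DOMAIN": "lab.mydomain.org"}) yields the rule with plex.lab.mydomain.org filled in.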

Example docker-compose.yml pattern:

services:
  plex:
    ports: ["32400:32400"]
    labels:
      - traefik.enable=true
      - traefik.http.routers.plex.rule=Host(`plex.lab.mydomain.org`)
      - traefik.http.routers.plex.entrypoints=websecure
      - traefik.http.routers.plex.tls.certresolver=letsencrypt
      - traefik.http.services.plex.loadbalancer.server.port=32400
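To make the label-to-fragment idea concrete, the sketch below turns labels like the ones above into a dictionary shaped like Traefik's dynamic file configuration (http.routers and http.services). It is a deliberately reduced illustration that handles only the label kinds used in this example; labels_to_fragment is a hypothetical function, not Compose Farm's generator, and the host address and published port are passed in by hand.

```python
def labels_to_fragment(labels: list[str], host_address: str, host_port: int) -> dict:
    """Build a Traefik file-provider style dict from container labels."""
    routers: dict[str, dict] = {}
    services: dict[str, dict] = {}
    for label in labels:
        key, _, value = label.partition("=")
        parts = key.split(".")
        if parts[:2] != ["traefik", "http"] or len(parts) < 5:
            continue  # e.g. traefik.enable carries no routing information
        kind, name, field = parts[2], parts[3], ".".join(parts[4:])
        if kind == "routers":
            router = routers.setdefault(name, {})
            if field == "rule":
                router["rule"] = value
            elif field == "entrypoints":
                router["entryPoints"] = value.split(",")
            elif field == "service":
                router["service"] = value
            elif field == "tls.certresolver":
                router.setdefault("tls", {})["certResolver"] = value
        elif kind == "services" and field == "loadbalancer.server.port":
            # Point Traefik at the host-published port so it is reachable across hosts.
            url = f"http://{host_address}:{host_port}"
            services[name] = {"loadBalancer": {"servers": [{"url": url}]}}
    # A router without an explicit service is wired to the only service defined.
    if len(services) == 1:
        only_service = next(iter(services))
        for router in routers.values():
            router.setdefault("service", only_service)
    return {"http": {"routers": routers, "services": services}}
```

Serializing the returned dictionary as YAML would give a file that a Traefik file provider could load; the plex router here ends up pointing at http://<host address>:32400.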

One‑time Traefik setup

Enable a file provider watching a directory (any path is fine; a common choice is on your shared/NFS mount):

providers:
  file:
    directory: /mnt/data/traefik/dynamic.d
    watch: true

Generate the fragment

cf traefik-file --all --output /mnt/data/traefik/dynamic.d/compose-farm.yml

Re‑run this after changing Traefik labels, moving a service to another host, or changing published ports.

Auto-regeneration

To automatically regenerate the Traefik config after up, down, restart, or update, add traefik_file to your config:

compose_dir: /opt/compose
traefik_file: /opt/traefik/dynamic.d/compose-farm.yml  # auto-regenerate on up/down/restart/update
traefik_service: traefik  # skip services on same host (docker provider handles them)

hosts:
  # ...

services:
  traefik: server-1  # Traefik runs here
  plex: server-2  # Services on other hosts get file-provider entries
  # ...

The traefik_service option specifies which service runs Traefik. Services on the same host as Traefik are skipped in the file-provider config since Traefik's docker provider handles them directly.

Now cf up plex will update the Traefik config automatically; no separate traefik-file command is needed.
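The effect of traefik_service can be pictured as a simple filter over the service-to-host mapping. The sketch below is an assumption about the described behavior, with a hypothetical function name and single-host assignments only.

```python
def services_for_file_provider(services: dict[str, str], traefik_service: str) -> list[str]:
    """Return services that need file-provider entries: those not on Traefik's host."""
    traefik_host = services.get(traefik_service)
    return sorted(name for name, host in services.items() if host != traefik_host)


mapping = {"traefik": "server-1", "plex": "server-2", "sonarr": "server-1"}
print(services_for_file_provider(mapping, "traefik"))  # ['plex']
```

Here sonarr shares server-1 with Traefik, so only plex would appear in the generated fragment.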

Combining with existing config

If you already have a dynamic.yml with manual routes, middlewares, etc., move it into the directory and Traefik will merge all files:

mkdir -p /opt/traefik/dynamic.d

mv /opt/traefik/dynamic.yml /opt/traefik/dynamic.d/manual.yml

cf traefik-file --all -o /opt/traefik/dynamic.d/compose-farm.yml

Update your Traefik config to use directory watching instead of a single file:

# Before

- --providers.file.filename=/dynamic.yml

# After

- --providers.file.directory=/dynamic.d

- --providers.file.watch=true

Comparison with Alternatives

There are many ways to run containers on multiple hosts. Here is where Compose Farm sits:

| | Docker Contexts | K8s / Swarm | Ansible / Terraform | Portainer / Coolify | Compose Farm |
|---|---|---|---|---|---|
| No compose rewrites | ✅ | ❌ | ✅ | ✅ | ✅ |
| Version controlled | ✅ | ✅ | ✅ | ❌ | ✅ |
| State tracking | ❌ | ✅ | ✅ | ✅ | ✅ |
| Auto-migration | ❌ | ✅ | ❌ | ❌ | ✅ |
| Interactive CLI | ❌ | ❌ | ❌ | ❌ | ✅ |
| Parallel execution | ❌ | ✅ | ✅ | ✅ | ✅ |
| Agentless | ✅ | ❌ | ✅ | ❌ | ✅ |
| High availability | ❌ | ✅ | ❌ | ❌ | ❌ |

Docker Contexts — You can use docker context create remote ssh://... and docker compose --context remote up. But it's manual: you must remember which host runs which service, there's no global view, no parallel execution, and no auto-migration.

Kubernetes / Docker Swarm — Full orchestration that abstracts away the hardware. But they require cluster initialization, separate control planes, and often rewriting compose files. They introduce complexity (consensus, overlay networks) unnecessary for static "pet" servers.

Ansible / Terraform — Infrastructure-as-Code tools that can SSH in and deploy containers. But they're push-based configuration management, not interactive CLIs. Great for setting up state, clumsy for day-to-day operations like cf logs -f or quickly restarting a service.

Portainer / Coolify — Web-based management UIs. But they're UI-first and often require agents on your servers. Compose Farm is CLI-first and agentless.

Compose Farm is the middle ground: a robust CLI that productizes the manual SSH pattern. You get the "cluster feel" (unified commands, state tracking) without the "cluster cost" (complexity, agents, control planes).

License

MIT

