Conting Researcher - A fork of GPT-Researcher with enhanced features for comprehensive online research on a variety of tasks.


🔎 Conting Researcher

Note: This is a fork of GPT-Researcher with enhanced features and customizations.

Conting Researcher is an open deep research agent designed for both web and local research on any given task.

The agent produces detailed, factual, and unbiased research reports with citations. GPT Researcher provides a full suite of customization options to create tailor-made and domain-specific research agents. Inspired by the recent Plan-and-Solve and RAG papers, GPT Researcher addresses misinformation, speed, determinism, and reliability by offering stable performance and increased speed through parallelized agent work.

Our mission is to empower individuals and organizations with accurate, unbiased, and factual information through AI.

Why GPT Researcher?

- Reaching objective conclusions through manual research can take weeks and requires vast resources and time.

- LLMs trained on outdated information can hallucinate, becoming irrelevant for current research tasks.

- Current LLMs have token limitations, insufficient for generating long research reports.

- Limited web sources in existing services lead to misinformation and shallow results.

- Selective web sources can introduce bias into research tasks.

Demo

https://github.com/user-attachments/assets/8fcaaa4c-31e5-4814-89b4-94f1433d139d

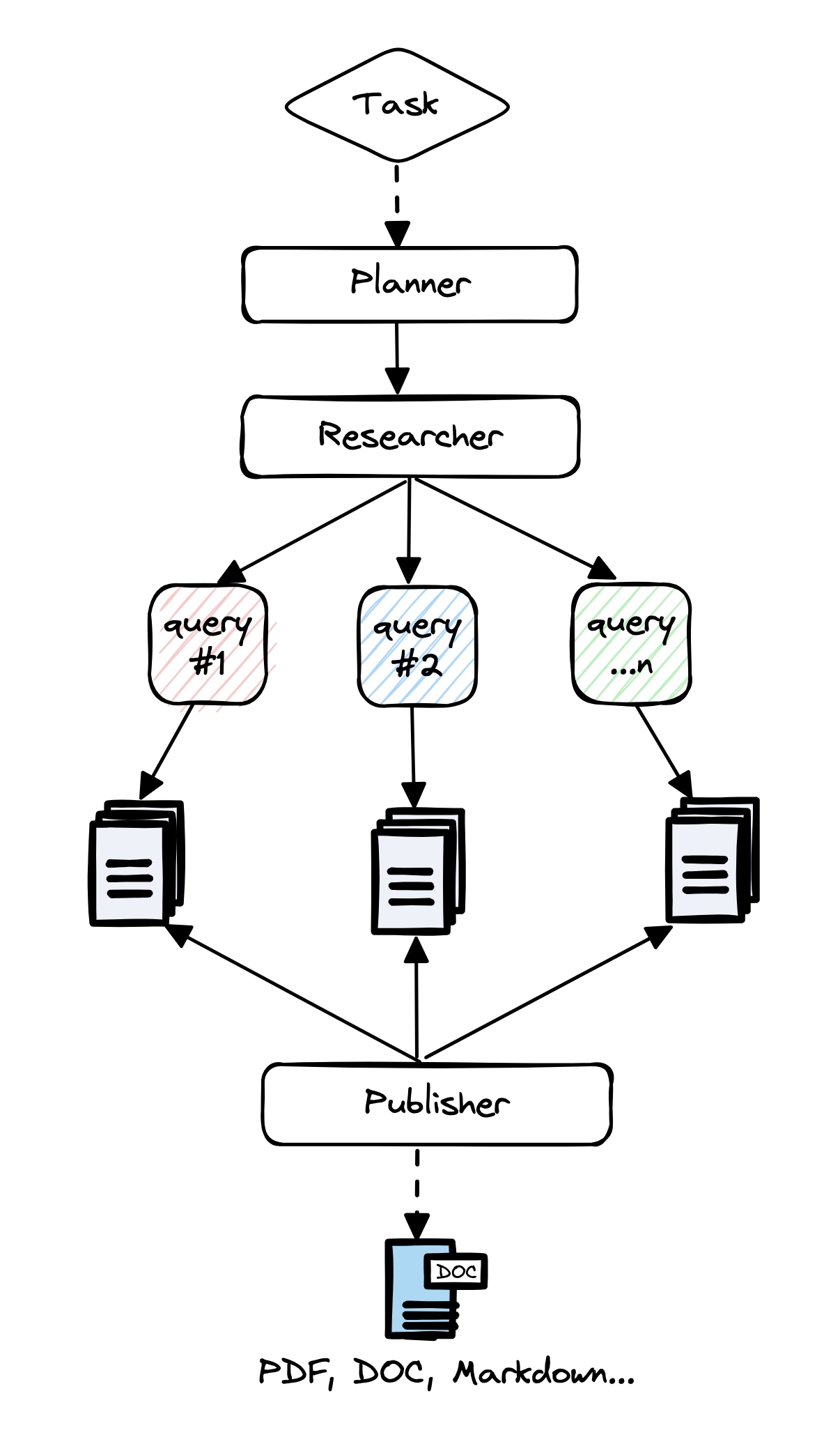

Architecture

The core idea is to utilize 'planner' and 'execution' agents. The planner generates research questions, while the execution agents gather relevant information. The publisher then aggregates all findings into a comprehensive report.

Steps:

- Create a task-specific agent based on a research query.

- Generate questions that collectively form an objective opinion on the task.

- Use a crawler agent for gathering information for each question.

- Summarize and source-track each resource.

- Filter and aggregate summaries into a final research report.
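The steps above can be sketched as a small asynchronous pipeline. This is a minimal illustration, not the project's implementation: `plan_questions`, `gather_and_summarize`, `run_pipeline`, and the `Summary` type are hypothetical names, and the planner and crawler are replaced by offline stubs.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class Summary:
    question: str
    source: str  # where the information came from (source tracking)
    text: str


def plan_questions(query: str) -> list[str]:
    # Hypothetical planner: a real one would ask an LLM for sub-questions.
    return [f"What is {query}?", f"Why does {query} matter?"]


async def gather_and_summarize(question: str) -> Summary:
    # Hypothetical execution agent: a real one would crawl and summarize pages.
    await asyncio.sleep(0)  # stand-in for network I/O
    return Summary(question, "https://example.com", f"Notes on: {question}")


async def run_pipeline(query: str) -> str:
    questions = plan_questions(query)
    # Execution agents run concurrently, one per question.
    summaries = await asyncio.gather(*(gather_and_summarize(q) for q in questions))
    # Publisher: drop empty summaries and aggregate the rest with their sources.
    kept = [s for s in summaries if s.text.strip()]
    return "\n".join(f"- {s.text} [{s.source}]" for s in kept)


if __name__ == "__main__":
    print(asyncio.run(run_pipeline("solar power")))
```

Running the execution agents through `asyncio.gather` is one way to get the parallelized agent work described earlier; the real agents may schedule their work differently.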


Features

- 📝 Generate detailed research reports using web and local documents.

- 🖼️ Smart image scraping and filtering for reports.

- 📜 Generate detailed reports exceeding 2,000 words.

- 🌐 Aggregate over 20 sources for objective conclusions.

- 🖥️ Frontend available in lightweight (HTML/CSS/JS) and production-ready (NextJS + Tailwind) versions.

- 🔍 JavaScript-enabled web scraping.

- 📂 Maintains memory and context throughout research.

- 📄 Export reports to PDF, Word, and other formats.

📖 Documentation

See the Documentation for:

- Installation and setup guides

- Configuration and customization options

- How-To examples

- Full API references

⚙️ Getting Started

Installation

- Install Python 3.11 or later. Guide.

- Clone the project and navigate to the directory:

git clone https://github.com/assafelovic/gpt-researcher.git
cd gpt-researcher

- Set up API keys by exporting them or storing them in a .env file:

export OPENAI_API_KEY={Your OpenAI API Key here}
export TAVILY_API_KEY={Your Tavily API Key here}

- Install dependencies and start the server:

pip install -r requirements.txt
python -m uvicorn main:app --reload

Visit http://localhost:8000 to start.

For other setups (e.g., Poetry or virtual environments), check the Getting Started page.
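Before starting the server, it can help to confirm that the two API keys from the setup steps are actually visible to the process. The sketch below is an optional check and not part of the project: `missing_api_keys` and `REQUIRED_KEYS` are hypothetical names, and the function only inspects environment variables (it does not parse a .env file).

```python
import os

# Key names taken from the setup steps above.
REQUIRED_KEYS = ("OPENAI_API_KEY", "TAVILY_API_KEY")


def missing_api_keys(env=None) -> list[str]:
    """Return the names of required keys that are unset or blank."""
    env = os.environ if env is None else env
    return [key for key in REQUIRED_KEYS if not env.get(key, "").strip()]


if __name__ == "__main__":
    missing = missing_api_keys()
    if missing:
        print(f"Missing API keys: {', '.join(missing)}")
    else:
        print("All required API keys are set.")
```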

Run as PIP package

pip install gpt-researcher

Example Usage:

...

from gpt_researcher import GPTResearcher

query = "why is Nvidia stock going up?"

researcher = GPTResearcher(query=query)

# Conduct research on the given query

research_result = await researcher.conduct_research()

# Write the report

report = await researcher.write_report()

...

For more examples and configurations, please refer to the PIP documentation page.
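The snippet above uses `await` at the top level, so in a plain script it needs to run inside a coroutine. One way to wrap it is sketched below, using only the calls shown above (`GPTResearcher(query=...)`, `conduct_research()`, `write_report()`). The `run_research` helper and the `FakeResearcher` stand-in are hypothetical and exist only so the example can run offline without API keys.

```python
import asyncio


async def run_research(query: str, researcher_cls=None) -> str:
    """Run the two steps shown above and return the report text."""
    if researcher_cls is None:
        # Imported lazily so the helper can be exercised with a stand-in class.
        from gpt_researcher import GPTResearcher
        researcher_cls = GPTResearcher
    researcher = researcher_cls(query=query)
    await researcher.conduct_research()
    return await researcher.write_report()


class FakeResearcher:
    """Hypothetical offline stand-in exposing the same two methods."""

    def __init__(self, query: str):
        self.query = query
        self.researched = False

    async def conduct_research(self):
        self.researched = True

    async def write_report(self) -> str:
        return f"Report on: {self.query}" if self.researched else ""


if __name__ == "__main__":
    # Pass None instead of FakeResearcher to use the real package (needs API keys).
    print(asyncio.run(run_research("why is Nvidia stock going up?", FakeResearcher)))
```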

🔧 MCP Client

GPT Researcher supports MCP integration to connect with specialized data sources like GitHub repositories, databases, and custom APIs. This enables research from data sources alongside web search.

export RETRIEVER=tavily,mcp # Enable hybrid web + MCP research

from gpt_researcher import GPTResearcher
import asyncio
import os

async def mcp_research_example():
    # Enable MCP with web search
    os.environ["RETRIEVER"] = "tavily,mcp"

    researcher = GPTResearcher(
        query="What are the top open source web research agents?",
        mcp_configs=[
            {
                "name": "github",
                "command": "npx",
                "args": ["-y", "@modelcontextprotocol/server-github"],
                "env": {"GITHUB_TOKEN": os.getenv("GITHUB_TOKEN")}
            }
        ]
    )

    research_result = await researcher.conduct_research()
    report = await researcher.write_report()
    return report

For comprehensive MCP documentation and advanced examples, visit the MCP Integration Guide.
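In the example above, `os.getenv("GITHUB_TOKEN")` returns `None` when the variable is unset, which may only surface later as an error from the MCP server process. A small guard can fail earlier. The sketch below builds the same config entry as the example; `github_mcp_config` is a hypothetical helper, not part of the package.

```python
import os


def github_mcp_config(env=None) -> dict:
    """Build the GitHub server entry used above, failing fast without a token."""
    env = os.environ if env is None else env
    token = env.get("GITHUB_TOKEN")
    if not token:
        raise ValueError("GITHUB_TOKEN is not set")
    return {
        "name": "github",
        "command": "npx",
        "args": ["-y", "@modelcontextprotocol/server-github"],
        "env": {"GITHUB_TOKEN": token},
    }
```

The result can then be passed as `mcp_configs=[github_mcp_config()]` when constructing `GPTResearcher`.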

✨ Deep Research

GPT Researcher now includes Deep Research - an advanced recursive research workflow that explores topics with agentic depth and breadth. This feature employs a tree-like exploration pattern, diving deeper into subtopics while maintaining a comprehensive view of the research subject.

- 🌳 Tree-like exploration with configurable depth and breadth

- ⚡️ Concurrent processing for faster results

- 🤝 Smart context management across research branches

- ⏱️ Takes ~5 minutes per deep research

- 💰 Costs ~$0.4 per research (using o3-mini on "high" reasoning effort)

Learn more about Deep Research in our documentation.
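To make the tree-like pattern concrete, here is a minimal sketch of recursive exploration with a depth and breadth limit and concurrent branches. It is not the Deep Research implementation: `explore` is a hypothetical function, subtopics are generated by a stub instead of search and LLM calls, and the semaphore size is an arbitrary choice.

```python
import asyncio


async def explore(topic: str, depth: int, breadth: int, limit: asyncio.Semaphore) -> list[str]:
    """Return every subtopic visited below `topic`, exploring branches concurrently."""
    if depth == 0:
        return []
    async with limit:
        # Hypothetical step: a real agent would search and ask an LLM for subtopics.
        await asyncio.sleep(0)
        subtopics = [f"{topic}/{i}" for i in range(breadth)]
    # Recurse into every subtopic at once; the semaphore caps in-flight work.
    nested = await asyncio.gather(
        *(explore(sub, depth - 1, breadth, limit) for sub in subtopics)
    )
    return subtopics + [t for branch in nested for t in branch]


async def main() -> list[str]:
    return await explore("root", depth=2, breadth=3, limit=asyncio.Semaphore(4))


if __name__ == "__main__":
    visited = asyncio.run(main())
    print(len(visited), "subtopics visited")
```

With a fixed breadth at every level, the number of visited subtopics grows as breadth + breadth² + … up to the chosen depth, which is why both limits matter for run time and cost.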

Run with Docker

Step 1 - Install Docker

Step 2 - Copy the '.env.example' file, add your API keys to the copy, and save it as '.env'

Step 3 - Within the docker-compose file, comment out the services that you don't want to run with Docker.

docker-compose up --build

If that doesn't work, try running it without the dash:

docker compose up --build

Step 4 - By default, if you haven't uncommented anything in your docker-compose file, this flow will start 2 processes:

- the Python server running on localhost:8000

- the React app running on localhost:3000

Visit localhost:3000 on any browser and enjoy researching!

📄 Research on Local Documents

You can instruct GPT Researcher to run research tasks based on your local documents. Currently supported file formats are: PDF, plain text, CSV, Excel, Markdown, PowerPoint, and Word documents.

Step 1: Add the env variable DOC_PATH pointing to the folder where your documents are located.

export DOC_PATH="./my-docs"

Step 2:

- If you're running the frontend app on localhost:8000, simply select "My Documents" from the "Report Source" Dropdown Options.

- If you're running GPT Researcher with the PIP package, pass the report_source argument as "local" when you instantiate the GPTResearcher class (code sample here).
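Before pointing a research task at a folder, it can be useful to see which files in it are likely to be picked up. The sketch below lists files under DOC_PATH by extension. The extension set is an assumption mapped from the formats listed above, and `list_local_documents` is a hypothetical helper; the package's own loader may accept a different set.

```python
import os
from pathlib import Path

# Assumed extensions for the formats listed above; the real loader may differ.
SUPPORTED = {".pdf", ".txt", ".csv", ".xlsx", ".md", ".pptx", ".docx"}


def list_local_documents(doc_path=None) -> list[Path]:
    """Return files under DOC_PATH whose extension looks supported."""
    root = Path(doc_path or os.environ.get("DOC_PATH", "./my-docs"))
    if not root.is_dir():
        return []
    return sorted(
        p for p in root.rglob("*") if p.is_file() and p.suffix.lower() in SUPPORTED
    )


if __name__ == "__main__":
    for path in list_local_documents():
        print(path)
```

An empty result usually means DOC_PATH points at the wrong folder or the folder holds only unsupported file types.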

🤖 MCP Server

We've moved our MCP server to a dedicated repository: gptr-mcp.

The GPT Researcher MCP Server enables AI applications like Claude to conduct deep research. While LLM apps can access web search tools with MCP, GPT Researcher MCP delivers deeper, more reliable research results.

Features:

- Deep research capabilities for AI assistants

- Higher quality information with optimized context usage

- Comprehensive results with better reasoning for LLMs

- Claude Desktop integration

For detailed installation and usage instructions, please visit the official repository.

👪 Multi-Agent Assistant

As AI evolves from prompt engineering and RAG to multi-agent systems, we're excited to introduce our new multi-agent assistant built with LangGraph.

By using LangGraph, the research process can be significantly improved in depth and quality by leveraging multiple agents with specialized skills. Inspired by the recent STORM paper, this project showcases how a team of AI agents can work together to conduct research on a given topic, from planning to publication.

An average run generates a 5-6 page research report in multiple formats such as PDF, Docx and Markdown.

Check it out here or head over to our documentation for more information.

🖥️ Frontend Applications

GPT-Researcher now features an enhanced frontend to improve the user experience and streamline the research process. The frontend offers:

- An intuitive interface for inputting research queries

- Real-time progress tracking of research tasks

- Interactive display of research findings

- Customizable settings for tailored research experiences

Two deployment options are available:

- A lightweight static frontend served by FastAPI

- A feature-rich NextJS application for advanced functionality

For detailed setup instructions and more information about the frontend features, please visit our documentation page.

🚀 Contributing

We highly welcome contributions! Please check out contributing if you're interested.

Please check out our roadmap page and reach out to us via our Discord community if you're interested in joining our mission.

✉️ Support / Contact us

- Community Discord

- Author Email: assaf.elovic@gmail.com

🛡 Disclaimer

This project, GPT Researcher, is an experimental application and is provided "as-is" without any warranty, express or implied. We are sharing code for academic purposes under the Apache 2 license. Nothing herein is academic advice, nor is it a recommendation to use in academic or research papers.

Our view on unbiased research claims:

- The main goal of GPT Researcher is to reduce incorrect and biased facts. How? We assume that the more sites we scrape, the lower the chance of incorrect data. By scraping multiple sites per research task and choosing the most frequent information, the chance that they are all wrong is extremely low.

- We do not aim to eliminate biases; we aim to reduce them as much as possible. We are here as a community to figure out the most effective human/LLM interactions.

- In research, people also tend towards biases, as most already have opinions on the topics they research. This tool scrapes many opinions and evenly explains diverse views that a biased person would never have read.
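The "most frequent information" idea in the first point can be illustrated with a simple vote across sources. This is a toy sketch under strong assumptions: claims are compared as normalized strings, the site names and claims are made up, and `most_frequent_claim` is a hypothetical function rather than how the tool aggregates content.

```python
from collections import Counter


def most_frequent_claim(claims_by_source: dict[str, str]) -> tuple[str, float]:
    """Return the most common claim and the share of sources that agree with it."""
    if not claims_by_source:
        raise ValueError("no sources provided")
    # Normalize lightly so trivial differences do not split the vote.
    counts = Counter(claim.strip().lower() for claim in claims_by_source.values())
    claim, votes = counts.most_common(1)[0]
    return claim, votes / len(claims_by_source)


if __name__ == "__main__":
    claims = {
        "site-a.example": "Founded in 1998",
        "site-b.example": "founded in 1998 ",
        "site-c.example": "Founded in 1996",
    }
    print(most_frequent_claim(claims))
```

The agreement share gives a rough signal of how contested a claim is; a low share suggests the sources disagree and the claim deserves a closer look.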


File details

Details for the file conting_researcher-0.14.0.tar.gz.

File metadata

- Download URL: conting_researcher-0.14.0.tar.gz

- Upload date:

- Size: 156.3 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.12.9

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 9be7478c2bd66e5b1322abdb1c1e8ac92027c5b06051d769cc85a27b042fdd58 |
| MD5 | f493d352bb4e363b146ae095761ae348 |
| BLAKE2b-256 | ef2971f3117718da7a37cd1d6ba6243c550e79cb8842cd00020e921cd81dcf02 |

File details

Details for the file conting_researcher-0.14.0-py3-none-any.whl.

File metadata

- Download URL: conting_researcher-0.14.0-py3-none-any.whl

- Upload date:

- Size: 195.7 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.12.9

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 2df152cb141fc234dfea1a02ab87371e8907d2d99107db10d08e16e8fb9190ae |
| MD5 | 6287970fd40282cb19a2f3aec7ba415d |
| BLAKE2b-256 | 55f22a10a2fd6503495518bf8c78d5c73067ec36ed221e1e3498032981e2b36f |