Command-line tool for ASR evaluation and analysis.

Project description

Installation • Usage • Understanding the Report • Normalization • Limitations

Canal is a command-line tool that helps you measure how well a speech-recognition model is performing. Give it a CSV with the correct transcripts alongside the model-generated output, and it produces a self-contained HTML report with overall accuracy metrics and a word-by-word comparison so you can see exactly where the model gets things right — and where it doesn't.

Installation

The recommended way to install Canal is with pipx, which installs CLI tools in isolated environments:

pipx install corti-canal

Don't have pipx installed?

Install pipx first, then install Canal:

# macOS

brew install pipx

pipx ensurepath

# Linux (Debian/Ubuntu)

sudo apt install pipx

pipx ensurepath

# With pip (any platform)

pip install --user pipx

pipx ensurepath

After installing pipx, restart your terminal and then run:

pipx install corti-canal

To upgrade to the latest version:

pipx upgrade corti-canal

Usage

canal report [OPTIONS] INPUT_PATH

Generate an ASR evaluation report from a CSV file. The CSV must contain at least two columns: one with reference transcripts (ground truth) and one with hypothesis transcripts (model output).

Use canal report --help to view the full command description and available options.

Arguments

| Argument | Description |

|---|---|

INPUT_PATH | Path to the CSV file containing the evaluation data. |

Options

| Option | Default |

|---|---|

--output-path | canal-report-{time}.html |

Path for the generated report. Defaults to a timestamped file in the current directory (e.g., canal-report-20251224-173020.html). | |

--ref-col | ref |

| Name of the CSV column containing the ground-truth reference transcripts. | |

--gen-col | gen |

| Name of the CSV column containing the model-generated transcripts. | |

--medical-terms | None |

| Path to a file containing medical terms (one per line) used to compute the Medical Term Recall metric. If not provided, Medical Term Recall will not be computed. | |

--disable-normalization | False |

| Disable text normalization before computing metrics. Basic normalization includes lowercasing and removing punctuation and diacritics. | |

--alignment-type | lv |

Algorithm used to align reference and hypothesis words. lv (Levenshtein) is a conventional word-level alignment used for metrics like WER and CER. ea (ErrorAlign) is a more advanced algorithm that aligns words closer to the way a human reader would. | |

--overwrite | False |

| Allow overwriting an existing report file. Without this flag, Canal will refuse to write over an existing file. | |

Example

# Columns are named "ref" and "gen" (the defaults)

canal report data.csv

# Custom column names and output path

canal report --ref-col text --gen-col transcript --output-path report.html data.csv

# With medical term recall

canal report --medical-terms medical_terms.txt data.csv

Input CSV format

The CSV file should contain at minimum a reference column and a hypothesis column. Any additional columns are ignored.

ref,gen

"The patient was prescribed amoxicillin.","The patient was prescribed amoxicilin."

"The colonoscopy revealed no significant abnormalities.","The colonoscopy revealed significant abnormalities."

Medical terms format

The medical terms file is a plain text file (e.g., a .txt file) with one term per line. Do not include a header line (first-line header), as it will be interpreted as a medical term. It is used with the --medical-terms option to compute the Medical Term Recall (MTR) metric, which measures how many of the listed terms were correctly transcribed.

amoxicillin

atrial fibrillation

colonoscopy

deep vein thrombosis

hypertension

...

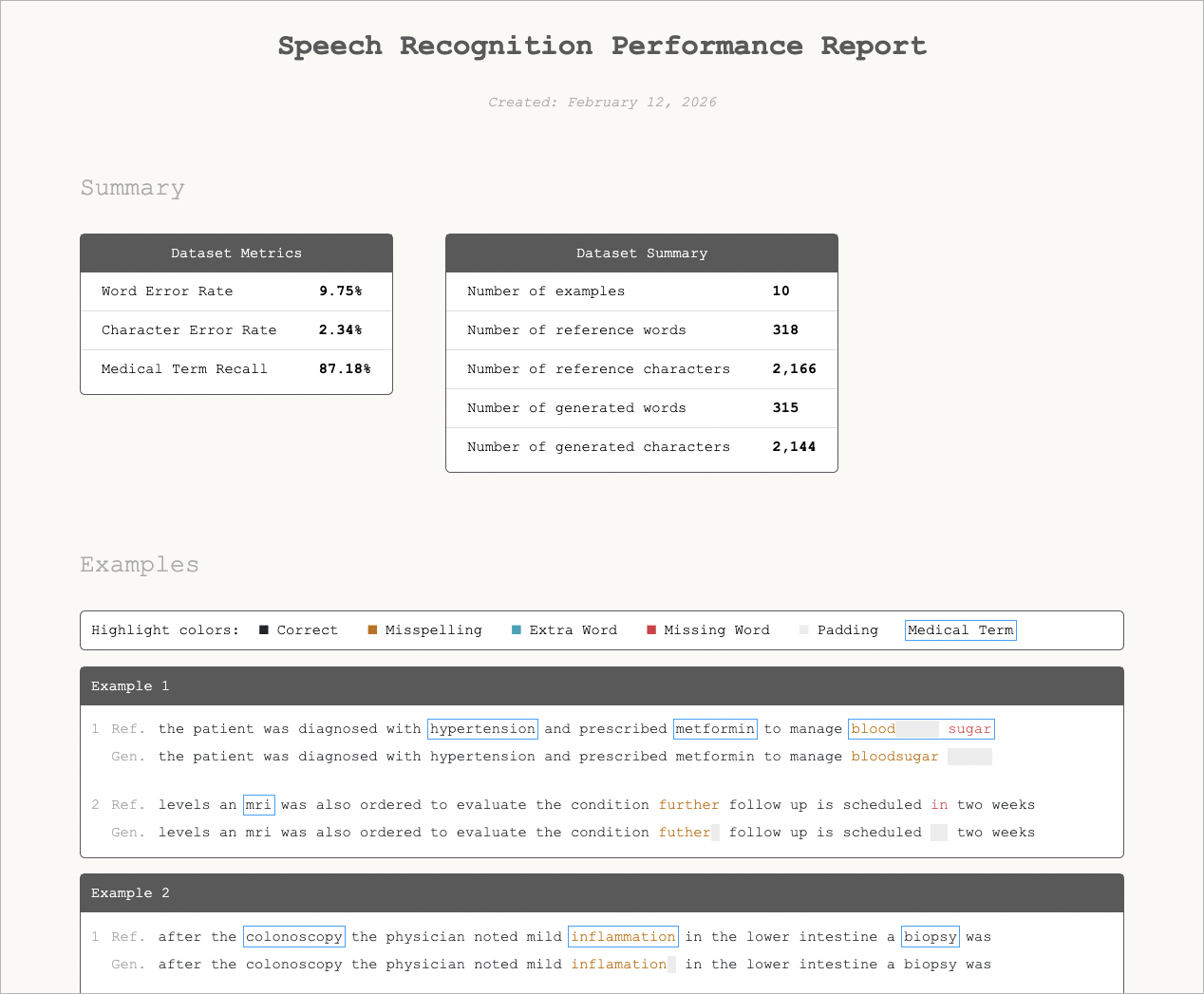

Understanding the Report

See it in action: The

example/folder contains sample input files and a pre-generated report you can open in your browser.

The generated HTML report is fully self-contained (no external dependencies) and can be opened in any browser. It has two main sections:

Summary

The summary section contains two tables side by side:

Dataset Metrics

| Metric | What it measures |

|---|---|

| Word Error Rate (WER) | The proportion of words the model got wrong — whether by misspelling them, adding extra words, or leaving words out. A WER of 0% means every word was correct; 6% means roughly 6 in 100 words had an error. Lower is better. |

| Character Error Rate (CER) | Like WER, but counted per character instead of per word. This gives a more fine-grained view: a single-letter typo (e.g. "amoxicillin" → "amoxicilin") counts as one full word error in WER but only a small character error in CER. Lower is better. |

| Medical Term Recall (MTR) | The proportion of medical terms from your keyword list that the model transcribed correctly. 100% means every term was captured. Only shown when the --medical-terms option is used. Higher is better. |

Dataset Summary

| Field | What it shows |

|---|---|

| Number of examples | How many transcript pairs were evaluated. |

| Number of reference words / characters | Total words and characters in the ground truth. |

| Number of generated words / characters | Total words and characters in the model output. |

Examples (Word Alignments)

Below the summary, the report lists every transcript pair with a word-level alignment visualization. Each example shows two rows:

- Ref. -- the ground-truth reference transcript

- Gen. -- the model-generated transcript

Words are color-coded to show the alignment result:

| Color | Label | Meaning |

|---|---|---|

| Black | Correct | The word was transcribed correctly. |

| Orange | Misspelling | The model produced a different word than the reference. |

| Teal | Extra Word | The model inserted a word that is not in the reference. |

| Red | Missing Word | A reference word was missing from the model output. |

| Grey | Padding | Visual padding to keep the reference and hypothesis rows aligned. |

| Blue box | Medical Term | Indicates a designated medical term for tracking recall. Only shown when the --medical-terms option is used. |

This visualization makes it easy to spot patterns -- for example, if the model consistently misspells certain medical terms or drops words at the end of sentences.

Normalization

By default, Canal normalizes both reference and generated text before computing metrics and alignments. This ensures that superficial differences in casing, punctuation, or accented characters don't inflate error rates. You can disable this with --disable-normalization.

The normalization pipeline applies the following steps in order:

- Unicode NFC composition -- Decomposes and recomposes Unicode characters into their canonical composed form (e.g.

e+ combining acute accent becomesé). - Tokenization -- Splits text into words. Punctuation at the boundaries of words is stripped, while internal punctuation is preserved (e.g. "don't" stays as one token). Hyphens and forward slashes act as word boundaries.

- Lowercasing -- Converts all characters to lowercase.

- Latin transliteration -- Converts accented Latin letters to their ASCII equivalents (e.g.

é→e,ñ→n,ü→u). Non-Latin scripts (Greek, Cyrillic, etc.) are left unchanged. See anyascii.com for an interactive demo of how this kind of transliteration works.

Limitations

- Latin script only. The normalization pipeline is designed for Latin script languages. Evaluating non-Latin script language data may lead to unexpected results.

- Overlapping medical terms are counted multiple times. If terms in the medical terms list overlap (e.g. "blood" and "blood sugar"), each match is counted independently for Medical Term Recall metric.

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file corti_canal-0.1.0b6.tar.gz.

File metadata

- Download URL: corti_canal-0.1.0b6.tar.gz

- Upload date:

- Size: 8.2 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

b126d7d37f41eef6ce3edb292954210f11d5de8e0156e4b4fabbf5a12cf0f0d7

|

|

| MD5 |

ebe84b6b43329dbf1be7ba61ea00acf8

|

|

| BLAKE2b-256 |

e820ddcabae89fddf7754275b21e2a2f264e693ce923e62c22cd41e53c0cb93d

|

File details

Details for the file corti_canal-0.1.0b6-py3-none-any.whl.

File metadata

- Download URL: corti_canal-0.1.0b6-py3-none-any.whl

- Upload date:

- Size: 8.2 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

8674f1e900ace2bbe3b4f29cf30b082172a40c276283c0f874c5fee2ea60d54c

|

|

| MD5 |

f4136d2a1f5d5682b0de684c770540c5

|

|

| BLAKE2b-256 |

ca04595bbbdb50df6584b1200561244230c5d6cdee078c629a101ccd777d9096

|