dask-databricks

Cluster tools for running Dask on Databricks multi-node clusters.

Quickstart

To launch a Dask cluster on Databricks, create an init script with the following contents and configure your multi-node cluster to use it.

#!/bin/bash

# Install Dask + Dask Databricks
/databricks/python/bin/pip install --upgrade dask[complete] dask-databricks

# Start Dask cluster components
dask databricks run

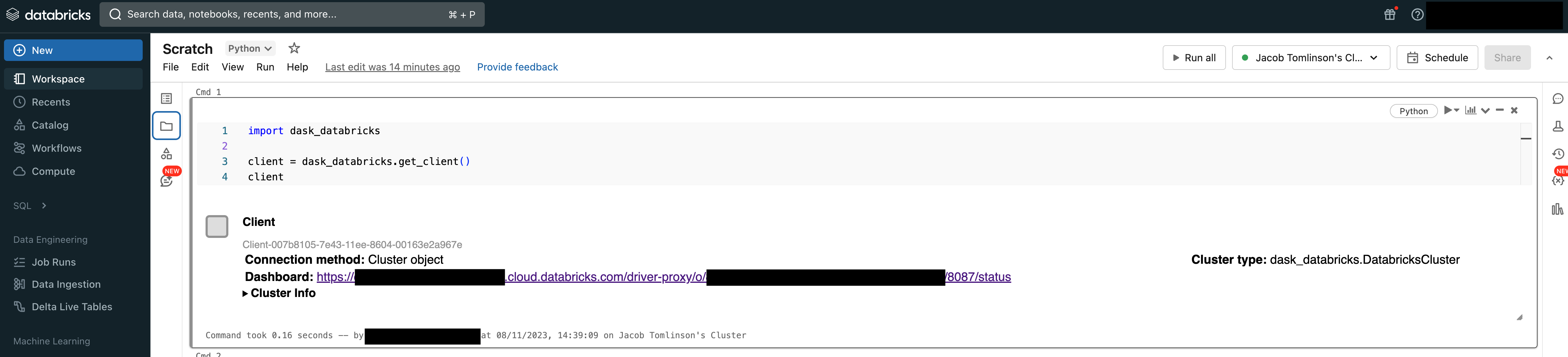

Then, from your Databricks notebook, you can connect a Dask Client to the scheduler running on the Spark driver node.

import dask_databricks

client = dask_databricks.get_client()

Now you can submit work from your notebook to the multi-node Dask cluster.

def inc(x):
    return x + 1

x = client.submit(inc, 10)
x.result()

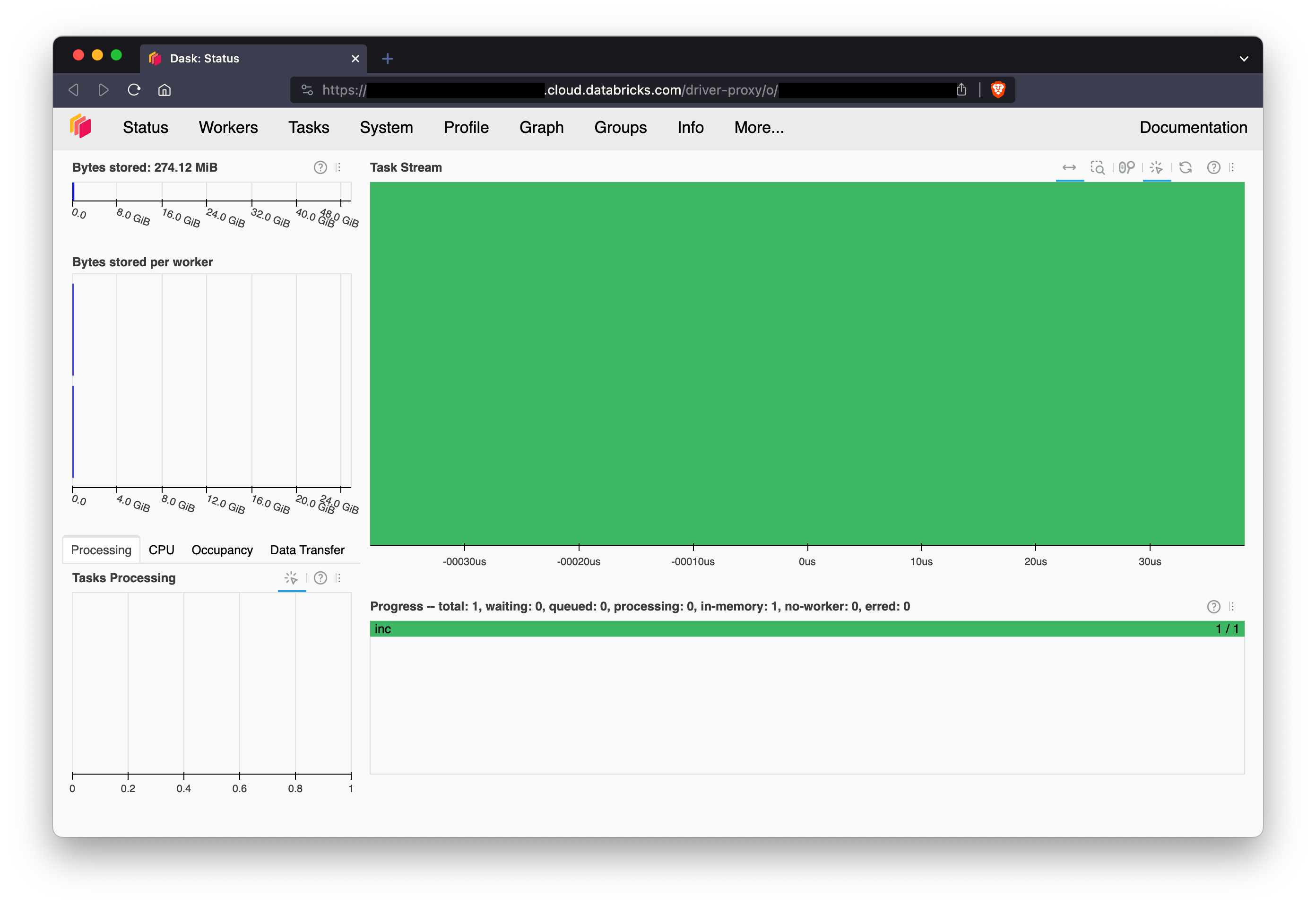
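The single-task example above extends naturally to a batch of inputs. The following is a minimal sketch, assuming the client returned by `dask_databricks.get_client()` behaves like a regular `distributed.Client` with `map` and `gather`; the helper name `run_batch` is hypothetical and not part of dask-databricks.

```python
def inc(x):
    return x + 1


def run_batch(client, values):
    """Apply inc to every value on the cluster and collect the results.

    `run_batch` is an illustrative helper, not part of dask-databricks.
    `client` is assumed to expose `map` and `gather` like distributed.Client.
    """
    futures = client.map(inc, values)  # one future per input value
    return client.gather(futures)      # blocks until every task has finished


# In a Databricks notebook (not executed here):
# import dask_databricks
# client = dask_databricks.get_client()
# run_batch(client, range(5))
```

Passing the client in as an argument keeps the helper independent of how the connection was created, so the same function can be exercised against a local cluster during development.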

Dashboard

You can access the Dask dashboard via the Databricks driver-node proxy. The link is shown in the repr of the Client or DatabricksCluster object and is also available as client.dashboard_link.

>>> print(client.dashboard_link)

https://dbc-dp-xxxx.cloud.databricks.com/driver-proxy/o/xxxx/xx-xxx-xxxx/8087/status
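To make the shape of that link easier to read, here is a small sketch that splits it into its parts using only the standard library. The function name `parse_dashboard_link` and the field names (`org_id`, `cluster_id`) are assumptions inferred from the example URL above, not something dask-databricks provides or documents.

```python
from urllib.parse import urlparse


def parse_dashboard_link(link):
    """Split a driver-proxy dashboard URL into its path components.

    The field names are guesses based on the example URL shape:
    /driver-proxy/o/<org_id>/<cluster_id>/<port>/<page>
    """
    parsed = urlparse(link)
    parts = parsed.path.strip("/").split("/")
    if len(parts) != 6 or parts[0] != "driver-proxy" or parts[1] != "o":
        raise ValueError(f"unexpected dashboard path: {parsed.path}")
    return {
        "host": parsed.netloc,
        "org_id": parts[2],      # assumed meaning
        "cluster_id": parts[3],  # assumed meaning
        "port": parts[4],        # kept as a string, exactly as it appears
        "page": parts[5],
    }
```

Calling it on `client.dashboard_link` would show which port the dashboard is proxied on, which can help when checking that the proxy path matches the cluster you expect.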

Releasing

Releases of this project are automated using GitHub Actions and the pypa/gh-action-pypi-publish action.

To create a new release, push a tag in the format x.x.x to the upstream repo. The package is then built and published to PyPI automatically, and is later picked up by conda-forge.

# Make sure you have an upstream remote

git remote add upstream git@github.com:dask-contrib/dask-databricks.git

# Create a tag and push it upstream

git tag x.x.x && git push upstream main --tags
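Because the release automation is triggered by the tag, it can be worth checking the tag format before pushing. The sketch below assumes each `x` in `x.x.x` stands for a non-negative integer with no `v` prefix; that reading, and the helper name `is_release_tag`, are assumptions rather than rules stated by the project.

```python
import re

# Assumed format: three dot-separated integers, e.g. 0.3.2
TAG_PATTERN = re.compile(r"\d+\.\d+\.\d+")


def is_release_tag(tag):
    """Return True if the tag matches the assumed x.x.x release format."""
    return TAG_PATTERN.fullmatch(tag) is not None
```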

Download files

File details

Details for the file dask_databricks-0.3.2.tar.gz.

File metadata

- Download URL: dask_databricks-0.3.2.tar.gz

- Upload date:

- Size: 8.9 kB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/5.0.0 CPython/3.12.3

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 09dc89dbb472270ab5491891f65199a4a258d9e6214e7bfb8243077aa71515bc |
| MD5 | 2d4ef22f462ac775a4facb79130c3862 |
| BLAKE2b-256 | 269352bed2f5a9f5c32abef821af49984d919e8a6b094d9562c91c99ab88baa2 |

File details

Details for the file dask_databricks-0.3.2-py3-none-any.whl.

File metadata

- Download URL: dask_databricks-0.3.2-py3-none-any.whl

- Upload date:

- Size: 7.7 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/5.0.0 CPython/3.12.3

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 37c3102917c9bd2da22204e66f9d8bec9ef3b79052e9e91f86d6e6d35bee3a9d |
| MD5 | c2cec7445bed4d35969215bb7c5c6c25 |
| BLAKE2b-256 | 11c338bd87b8451545e29bec360678dbf280ebbb4cc9685323688d48085eaf5a |
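The published digests can be used to check a downloaded file. The following is a minimal sketch using Python's standard `hashlib`; the helper names `file_digests` and `matches_published` are hypothetical, and BLAKE2b-256 is computed here as BLAKE2b with a 32-byte digest.

```python
import hashlib


def file_digests(path, chunk_size=65536):
    """Compute SHA256, MD5 and BLAKE2b-256 hex digests of a file in one pass."""
    hashers = {
        "SHA256": hashlib.sha256(),
        "MD5": hashlib.md5(),
        "BLAKE2b-256": hashlib.blake2b(digest_size=32),
    }
    with open(path, "rb") as fh:
        # Read in chunks so large files do not need to fit in memory
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            for hasher in hashers.values():
                hasher.update(chunk)
    return {name: hasher.hexdigest() for name, hasher in hashers.items()}


def matches_published(path, algorithm, expected_hex):
    """Return True if the file's digest equals the published hex digest."""
    return file_digests(path)[algorithm] == expected_hex.strip().lower()
```

For example, `matches_published("dask_databricks-0.3.2.tar.gz", "SHA256", "<digest from the table>")` should return `True` for an intact download of the source distribution.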