A data-centric training system for Large Language Models


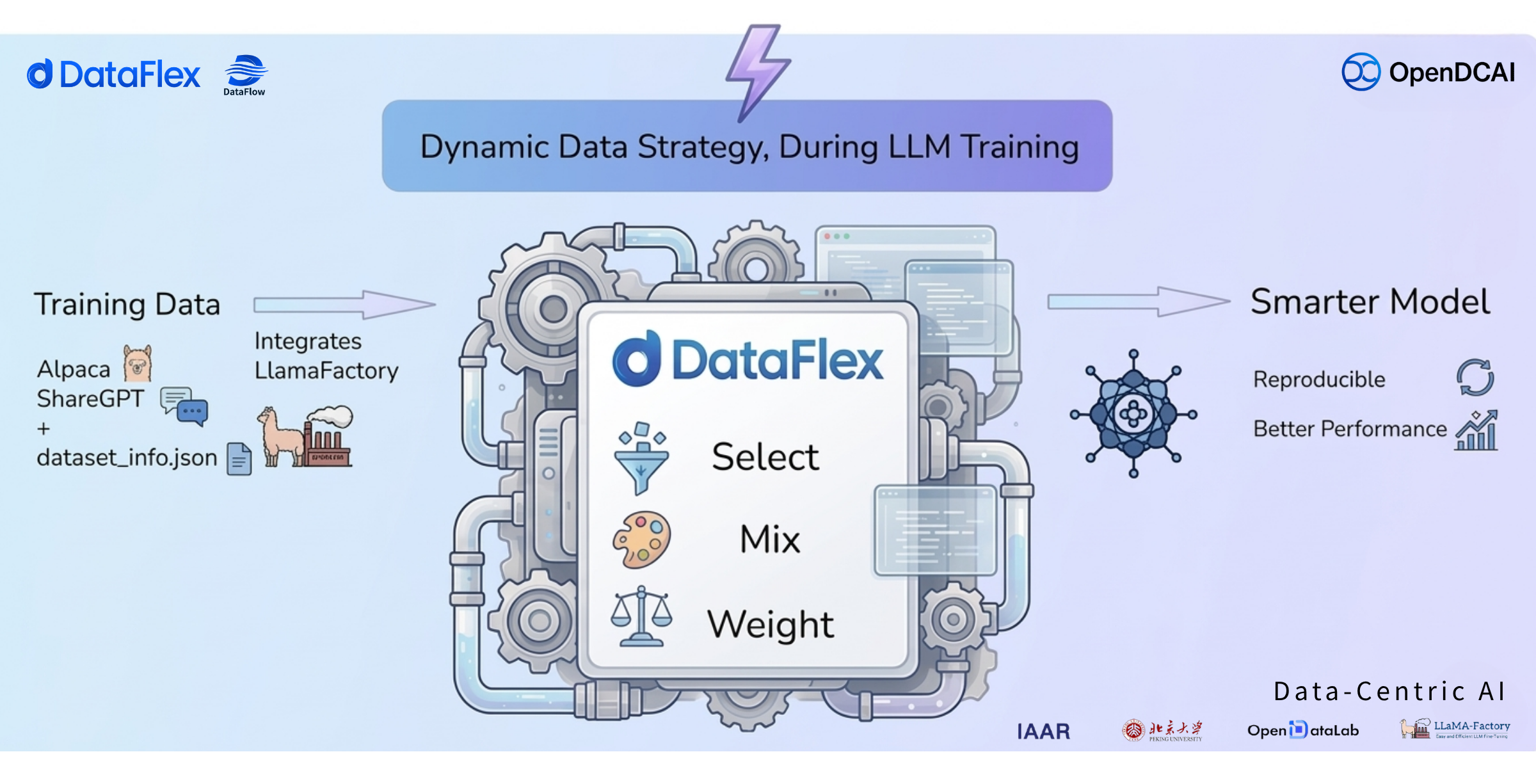

DataFlex

Data Select · Mix · Reweight — Right in the LLM Training Loop

📰 1. News

- [2026-04-04] 🎉 Our technical report ranked #1 on the Hugging Face Daily Papers leaderboard for that day.

- [2026-03-17] We now support gradient computation under DeepSpeed ZeRO-3, enabling training and analysis of larger-scale models.

- [2025-12-23] 🎉 We're excited to announce the release of DataFlex, the first data-centric training system! Stay tuned for future updates.

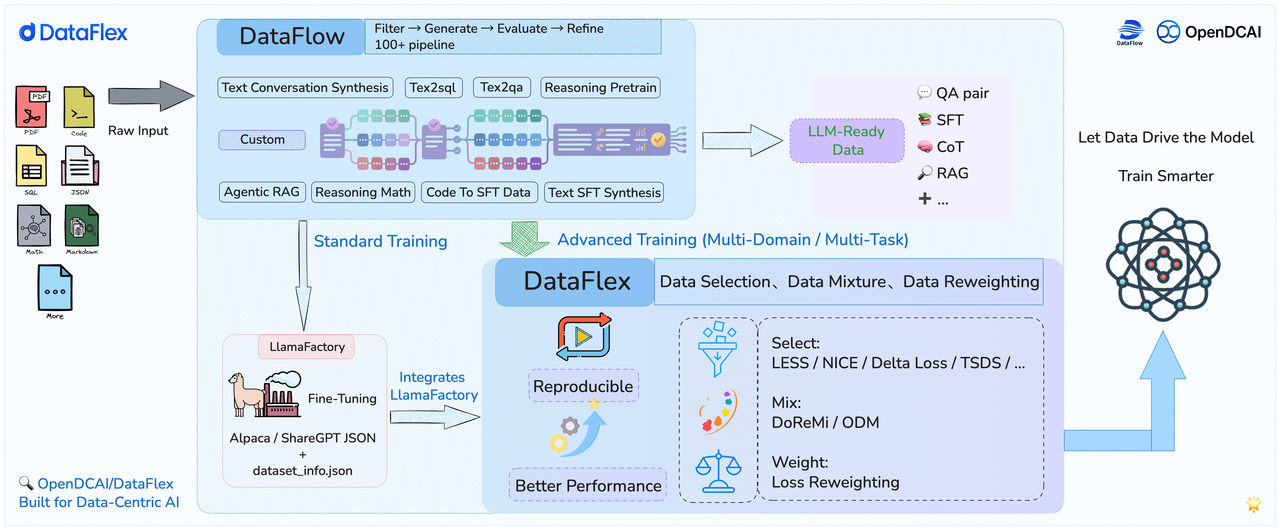

🔍 2. Overview

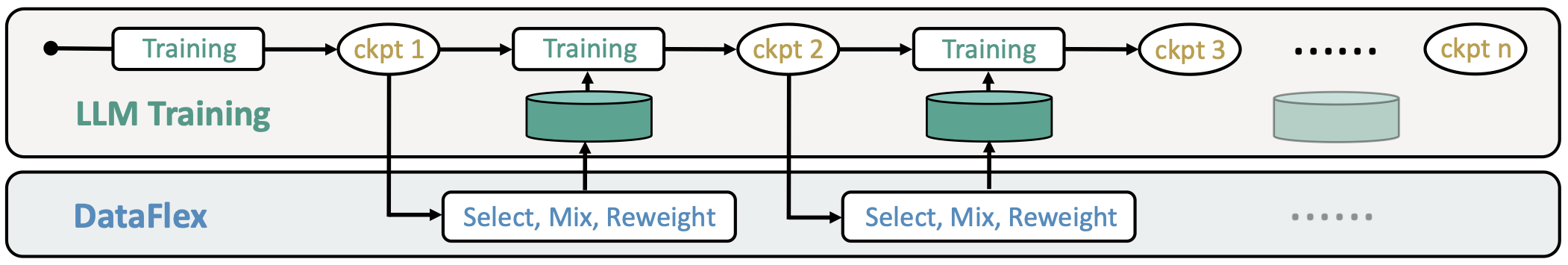

DataFlex is an advanced dynamic training framework built on top of LLaMA-Factory.

It intelligently schedules training data during optimization and integrates several difficult-to-reproduce repositories into a unified framework. The system provides reproducible implementations of Data Selection, Data Mixture, and Data Reweighting, thereby improving both experimental reproducibility and final model performance.

DataFlex integrates seamlessly with LLaMA-Factory, offering researchers and developers more flexible and powerful training control. For goals and design philosophy, please refer to DataFlex-Doc. We summarize repositories related to Data Selection, Data Mixture, and Data Reweighting. ❌ indicates that no official repository is available; ✅ indicates that an official repository is available; ⚠️ indicates that an official repository exists but contains issues.

- Data Selection: Dynamically selects training samples according to a given strategy (e.g., focus on “hard” samples). The data selection algorithms are summarized as follows:

| Method | Category | Requires Model-in-the-Loop? | Official Repo |
|---|---|---|---|
| LESS | Gradient-Based | ✅ Yes | ⚠️ official code |
| NICE | Gradient-Based | ✅ Yes | ⚠️ official code |
| Loss | Loss-Based | ✅ Yes | ❌ |
| Delta Loss | Loss-Based | ✅ Yes | ❌ |
| NEAR | Data Distribution-Based | ❌ No | ❌ |
| TSDS | Data Distribution-Based | ❌ No | ✅ official code |
| Static | No Selection | ❌ No | ❌ |
| Random | Random Sampling | ❌ No | ❌ |
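
To make the loss-based category concrete, here is a minimal sketch of picking the highest-loss ("hard") samples from a candidate pool. It is an illustration only and not DataFlex's implementation: the helper name `select_top_k_by_loss` and the loss values are hypothetical.

```python
from typing import List, Sequence


def select_top_k_by_loss(losses: Sequence[float], k: int) -> List[int]:
    """Return the indices of the k samples with the highest loss (hypothetical helper)."""
    if k < 0:
        raise ValueError("k must be non-negative")
    # Rank indices by loss, largest first; ties keep the lower index first.
    ranked = sorted(range(len(losses)), key=lambda i: (-losses[i], i))
    return ranked[:k]


if __name__ == "__main__":
    # Made-up per-sample losses, as if produced by a forward pass.
    per_sample_loss = [0.21, 1.35, 0.80, 2.10, 0.05]
    print(select_top_k_by_loss(per_sample_loss, k=2))
```

A model-in-the-loop selector would recompute the losses periodically during training, so the selected subset changes as the model improves.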

- Data Mixture: Dynamically adjusts the ratio of data from different domains during training. The data mixture algorithms are summarized as follows:

| Method | Category | Requires Model-in-the-Loop? | Official Repo |
|---|---|---|---|
| DoReMi | Offline Mixture | ✅ Yes | ⚠️ official code |
| ODM | Online Mixture | ✅ Yes | ⚠️ official code |
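
As a rough illustration of what "adjusting the ratio of data from different domains" can look like, the sketch below applies a multiplicative (exponentiated) update that shifts sampling probability toward domains with higher loss and then renormalizes. This is a simplified stand-in, not the DoReMi or ODM algorithm and not DataFlex's code: the function name, the step size, and the loss values are assumptions.

```python
import math
from typing import Dict


def update_mixture(
    weights: Dict[str, float],
    domain_loss: Dict[str, float],
    step_size: float = 1.0,
) -> Dict[str, float]:
    """Upweight domains with higher loss, then renormalize so the weights sum to 1."""
    if set(weights) != set(domain_loss):
        raise ValueError("weights and domain_loss must cover the same domains")
    # Multiplicative update: each weight grows with exp(step_size * loss).
    scaled = {d: w * math.exp(step_size * domain_loss[d]) for d, w in weights.items()}
    total = sum(scaled.values())
    return {d: v / total for d, v in scaled.items()}


if __name__ == "__main__":
    # Two domains, starting from a uniform mixture; loss values are made up.
    weights = {"CC": 0.5, "GitHub": 0.5}
    losses = {"CC": 4.3, "GitHub": 2.6}
    print(update_mixture(weights, losses, step_size=0.5))
```

In an online setting this update would run every few steps with fresh per-domain losses; an offline method would instead estimate the weights once and reuse them for the main training run.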

- Data Reweighting: Dynamically adjusts sample weights during backpropagation to emphasize data preferred by the model. The data reweighting algorithms are summarized as follows:

| Method | Category | Requires Model-in-the-Loop? | Official Repo |
|---|---|---|---|
| Loss Reweighting | Loss-Based | ✅ Yes | ❌ |
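
The idea of loss-based reweighting can be sketched as follows: per-sample losses are turned into weights, and the training objective becomes the weighted sum of those losses. The softmax-over-losses rule used here (which gives higher-loss samples more weight) is an assumption chosen for illustration, and the function names are hypothetical; DataFlex's actual weighting rule may differ.

```python
import math
from typing import List, Sequence


def softmax_loss_weights(losses: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Map per-sample losses to weights that sum to 1 (higher loss -> larger weight)."""
    if not losses:
        raise ValueError("losses must not be empty")
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    # Subtract the maximum before exponentiating for numerical stability.
    peak = max(losses)
    exps = [math.exp((loss - peak) / temperature) for loss in losses]
    total = sum(exps)
    return [e / total for e in exps]


def reweighted_loss(losses: Sequence[float], temperature: float = 1.0) -> float:
    """Weighted sum of per-sample losses under the softmax weights."""
    weights = softmax_loss_weights(losses, temperature)
    return sum(w * loss for w, loss in zip(weights, losses))


if __name__ == "__main__":
    batch_losses = [0.2, 1.5, 0.7]  # made-up values
    print(softmax_loss_weights(batch_losses))
    print(reweighted_loss(batch_losses))
```

In a real training loop the weights are typically treated as constants (detached from the computation graph) so that gradients flow only through the losses.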

- Compatibility: Fully compatible with LLaMA-Factory and usable as a drop-in replacement.

📌 3. Quick Start

Please use the following commands for environment setup and installation 👇

    git clone https://github.com/OpenDCAI/DataFlex.git
    cd DataFlex
    pip install -e .

Note: Python 3.11+ is recommended. The core dependencies (including `llamafactory`) will be installed automatically. If you are using Python 3.10, you need to install a compatible version of `llamafactory` manually.

The launch command is similar to that of LLaMA-Factory. Below is an example using LESS:

    dataflex-cli train examples/train_lora/selectors/less.yaml

Unlike vanilla LLaMA-Factory, your .yaml config file must also include DataFlex-specific parameters. For details, please refer to DataFlex-Doc.
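
If you prefer to launch training from a Python script (for example, to sweep over several configs), the command above can be assembled programmatically. This is a small convenience sketch: `build_train_command` is a hypothetical helper, and only the `dataflex-cli train <config>` form shown above is assumed.

```python
import shlex
from typing import List


def build_train_command(config_path: str) -> List[str]:
    """Build the argument list for `dataflex-cli train <config>` (hypothetical helper)."""
    if not config_path.endswith((".yaml", ".yml")):
        raise ValueError(f"expected a YAML config file, got: {config_path}")
    return ["dataflex-cli", "train", config_path]


if __name__ == "__main__":
    cmd = build_train_command("examples/train_lora/selectors/less.yaml")
    # Print the shell-quoted command; pass `cmd` to subprocess.run(cmd, check=True)
    # to actually start training.
    print(shlex.join(cmd))
```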

📖 Skills

- How to Use DataFlex — Installation, CLI commands, YAML configuration, training modes, and supported algorithms.

- How to Add a New Algorithm — Architecture overview, registry system, base class interfaces, and step-by-step guide for adding selectors/mixers/weighters.
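
For readers unfamiliar with registry-based plugin systems, the general pattern behind "registry system" and "base class interfaces" looks roughly like the sketch below. Every name here (`Selector`, `SELECTOR_REGISTRY`, `register_selector`, `FirstKSelector`, `build_selector`) is hypothetical and chosen for illustration; refer to the linked guide for DataFlex's actual interfaces.

```python
from typing import Callable, Dict, List, Sequence, Type


class Selector:
    """Hypothetical base class: maps per-sample scores to the indices to train on."""

    def select(self, scores: Sequence[float], k: int) -> List[int]:
        raise NotImplementedError


# Maps a string name (as it might appear in a config file) to a selector class.
SELECTOR_REGISTRY: Dict[str, Type[Selector]] = {}


def register_selector(name: str) -> Callable[[Type[Selector]], Type[Selector]]:
    """Class decorator that records a selector under the given name."""

    def decorator(cls: Type[Selector]) -> Type[Selector]:
        if name in SELECTOR_REGISTRY:
            raise KeyError(f"selector '{name}' is already registered")
        SELECTOR_REGISTRY[name] = cls
        return cls

    return decorator


@register_selector("first_k")
class FirstKSelector(Selector):
    """Toy selector that ignores the scores and keeps the first k samples."""

    def select(self, scores: Sequence[float], k: int) -> List[int]:
        return list(range(min(k, len(scores))))


def build_selector(name: str) -> Selector:
    """Instantiate a registered selector by name."""
    return SELECTOR_REGISTRY[name]()


if __name__ == "__main__":
    selector = build_selector("first_k")
    print(selector.select([0.1, 0.2, 0.3], k=2))
```

The benefit of this design is that adding an algorithm means adding one decorated class, without editing the training loop that looks components up by name.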

📚 4. Experimental Results

Using DataFlex can improve performance over the default LLaMA-Factory training.

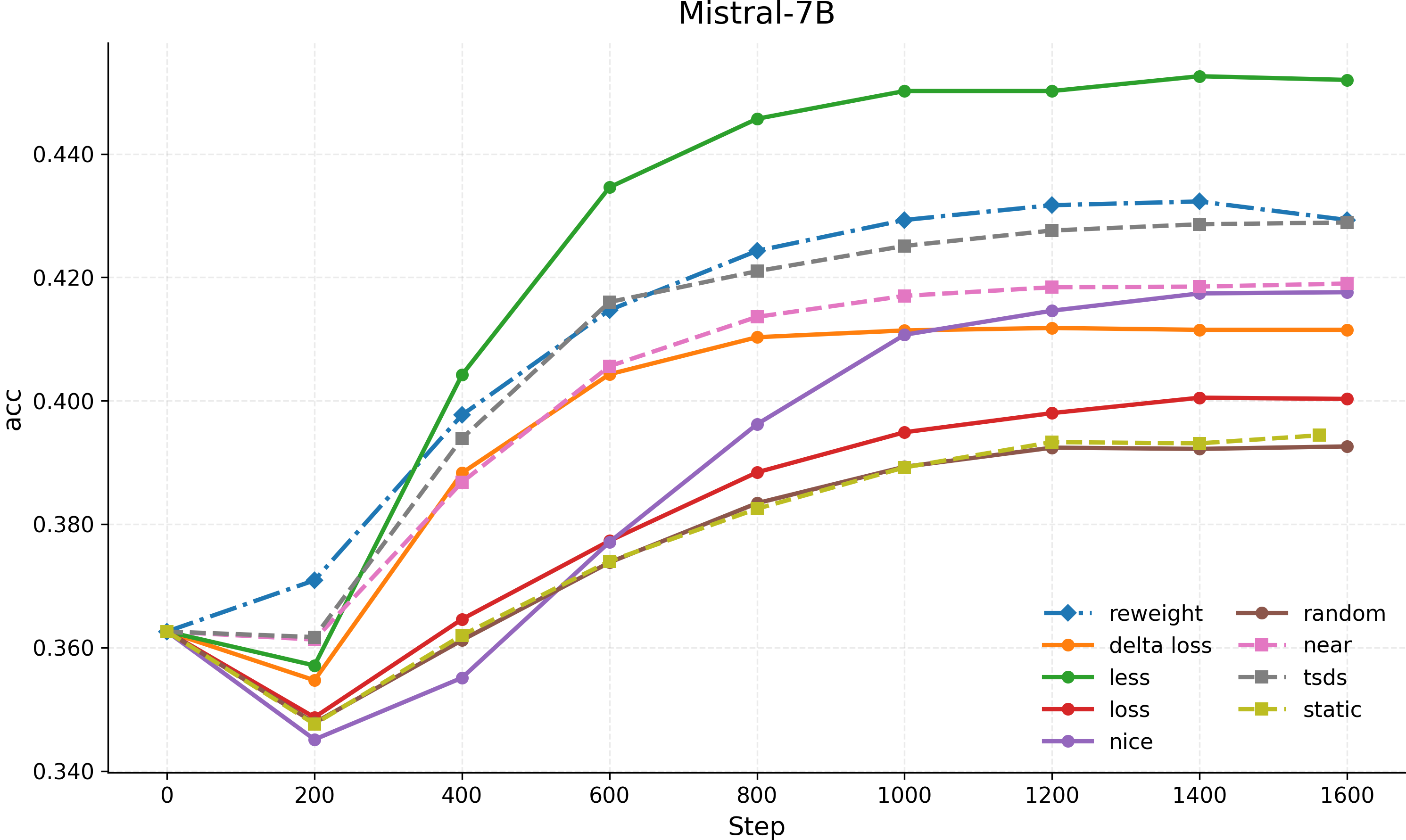

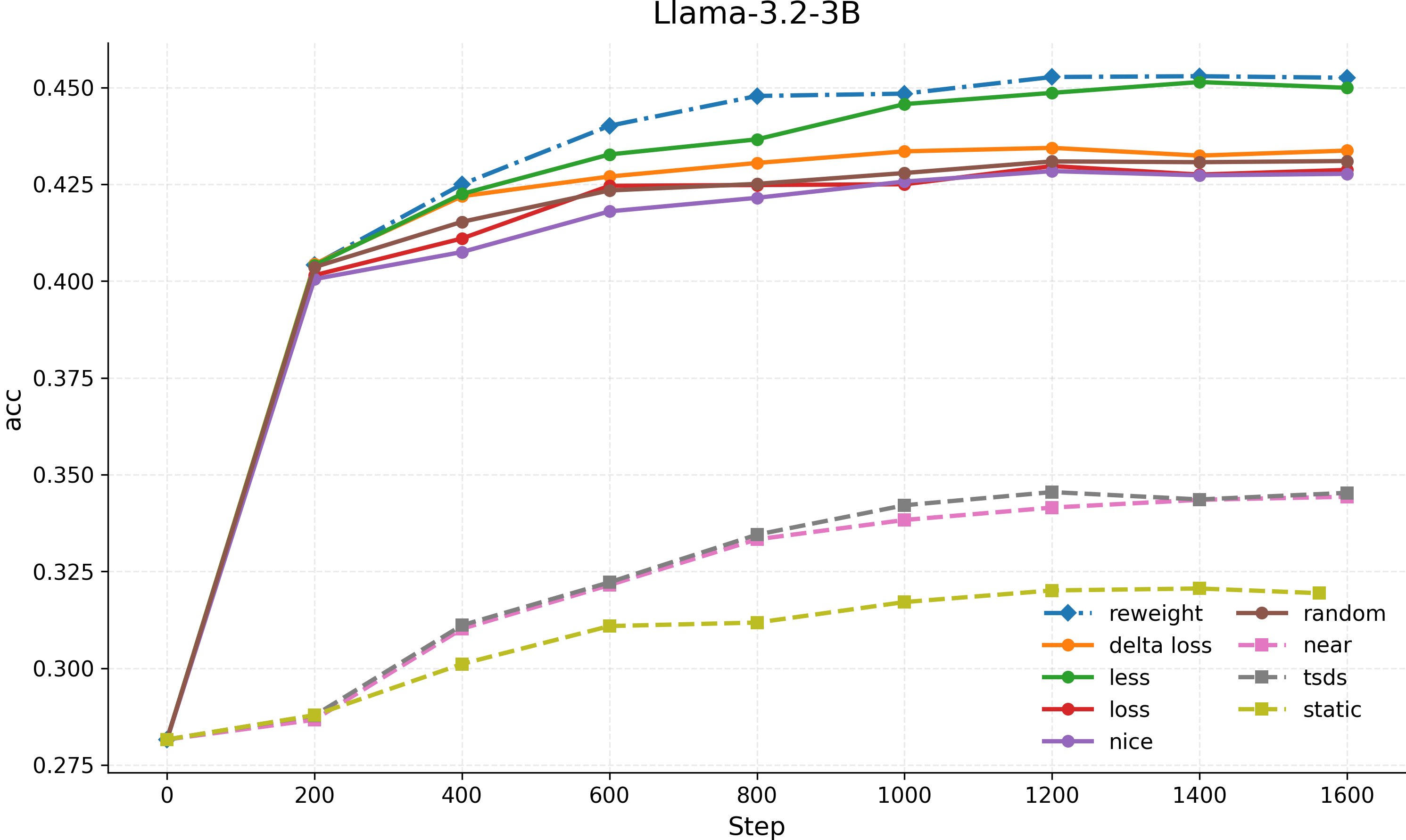

Data Selector & Reweighter Results

We use a subset of Open-Hermes-2.5 as the training dataset. Both the data selection algorithms and the data reweighting algorithm outperform the random selector baseline on the subset of the MMLU benchmark relevant to the training dataset. For the LESS and NICE algorithms, we use the MMLU validation set as the validation set, using a GPT-5-generated trajectory.

Data Mixture Results

We use subsets of SlimPajama-627B for data mixture. The data mixture algorithms outperform the baseline (default data mixture) on MMLU accuracy while also lowering perplexity in many, though not all, data domains.

MMLU reports accuracy (Acc ↑); the columns ALL through Book report perplexity (PPL ↓).

| Method | MMLU | ALL | CC | C4 | SE | Wiki | GitHub | ArXiv | Book |
|---|---|---|---|---|---|---|---|---|---|
| **Slim-Pajama-6B** | | | | | | | | | |
| Baseline | 25.27 | 4.217 | 4.278 | 4.532 | 3.402 | 3.546 | 2.640 | 3.508 | 4.778 |
| DoReMi | 25.84 | 4.134 | 4.108 | 4.358 | 3.788 | 3.997 | 3.420 | 3.413 | 4.661 |
| ODM | 26.04 | 4.244 | 4.326 | 4.555 | 3.243 | 3.699 | 2.704 | 2.904 | 4.613 |
| **Slim-Pajama-30B** | | | | | | | | | |
| Baseline | 25.51 | 3.584 | 3.723 | 3.505 | 2.850 | 3.215 | 3.163 | 4.540 | 5.329 |
| DoReMi | 25.97 | 3.562 | 3.731 | 3.503 | 2.706 | 2.985 | 2.973 | 4.441 | 5.214 |
| ODM | 25.63 | 3.429 | 3.598 | 3.519 | 2.382 | 2.713 | 2.255 | 3.487 | 4.746 |
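
To read the table in terms of changes against the baseline, the short script below computes the MMLU accuracy gain (in points) and the relative change in overall perplexity for the Slim-Pajama-6B rows. The numbers are copied from the table; the variable and function names are ours.

```python
from typing import Dict

# MMLU accuracy and overall (ALL) perplexity from the Slim-Pajama-6B rows.
RESULTS_6B: Dict[str, Dict[str, float]] = {
    "Baseline": {"mmlu_acc": 25.27, "ppl_all": 4.217},
    "DoReMi": {"mmlu_acc": 25.84, "ppl_all": 4.134},
    "ODM": {"mmlu_acc": 26.04, "ppl_all": 4.244},
}


def accuracy_gain(method: str) -> float:
    """MMLU accuracy difference versus the baseline, in percentage points."""
    return RESULTS_6B[method]["mmlu_acc"] - RESULTS_6B["Baseline"]["mmlu_acc"]


def relative_ppl_change(method: str) -> float:
    """Relative change in overall perplexity versus the baseline, in percent."""
    base = RESULTS_6B["Baseline"]["ppl_all"]
    return (RESULTS_6B[method]["ppl_all"] - base) / base * 100.0


if __name__ == "__main__":
    for name in ("DoReMi", "ODM"):
        print(f"{name}: acc {accuracy_gain(name):+.2f} pts, "
              f"PPL(ALL) {relative_ppl_change(name):+.2f}%")
```

On these numbers, DoReMi gains 0.57 accuracy points with roughly 2% lower overall perplexity, while ODM gains 0.77 points with roughly 0.6% higher overall perplexity.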

🧩 5. Ecosystem

DataFlex focuses on data scheduling during training. For a complete pipeline starting from raw data, it pairs well with DataFlow:

DataFlow converts raw files into LLM training data through composable operator pipelines — document parsing, knowledge cleaning, QA / CoT synthesis, and training format conversion. The output JSON can be fed directly into DataFlex. The two projects are independent with no code dependency, connected only by standard data formats. DataFlex accepts training data from any source — DataFlow, manual annotation, HuggingFace datasets, or custom processing scripts.
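
As an example of feeding data from a custom processing script, the sketch below converts question-answer pairs into Alpaca-style records (`instruction`, `input`, `output`) and serializes them as JSON. Alpaca-style JSON is one of the dataset formats LLaMA-Factory documents; whether a given DataFlex config expects exactly this layout is an assumption, and the helper name and sample data are hypothetical.

```python
import json
from typing import Dict, List, Tuple


def qa_pairs_to_alpaca(pairs: List[Tuple[str, str]]) -> List[Dict[str, str]]:
    """Convert (question, answer) pairs into Alpaca-style records."""
    return [{"instruction": q, "input": "", "output": a} for q, a in pairs]


if __name__ == "__main__":
    pairs = [
        ("What does data reweighting change?", "The weight of each sample's loss."),
        ("What does data mixture change?", "The sampling ratio across domains."),
    ]
    records = qa_pairs_to_alpaca(pairs)
    # ensure_ascii=False keeps non-ASCII text readable in the output file.
    print(json.dumps(records, ensure_ascii=False, indent=2))
```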

🤝 6. Acknowledgements

We thank LLaMA-Factory for offering an efficient and user-friendly framework for large model fine-tuning, which greatly facilitated rapid iteration in our training and experimentation workflows.

We thank Zhongguancun Academy for their API and GPU support.

Our gratitude extends to all contributors in the open-source community—their efforts collectively drive the development of DataFlex.

📜 7. Citation

If you use DataFlex in your research, please cite the following:

    @article{liang2026dataflex,
      title={DataFlex: A Unified Framework for Data-Centric Dynamic Training of Large Language Models},
      author={Liang, Hao and Zhao, Zhengyang and Qiang, Meiyi and Chen, Mingrui and Ma, Lu and Yu, Rongyi and Feng, Hengyi and Sun, Shixuan and Meng, Zimo and Ma, Xiaochen and others},
      journal={arXiv preprint arXiv:2603.26164},
      year={2026}
    }

    @article{liang2026towards,
      title={Towards Next-Generation LLM Training: From the Data-Centric Perspective},
      author={Liang, Hao and Zhao, Zhengyang and Han, Zhaoyang and Qiang, Meiyi and Ma, Xiaochen and Zeng, Bohan and Cai, Qifeng and Li, Zhiyu and Tang, Linpeng and Zhang, Wentao and others},
      journal={arXiv preprint arXiv:2603.14712},
      year={2026}
    }

🤝 8. Community & Support

We welcome contributions of new trainers and selectors! Please ensure code formatting is consistent with the existing style before submitting a PR.

We also welcome you to join the DataFlex and DataFlow open-source community to ask questions, share ideas, and collaborate with other developers!

- 📮 GitHub Issues: Report bugs or suggest features

- 🔧 GitHub Pull Requests: Contribute code improvements

- 💬 Join our community groups to connect with us and other contributors!
