A package for creating DataLoaders with dynamic batch sizes in PyTorch


dynbatcher - Dynamic Batch Size Dataloader Generator

dynbatcher is a Python package for creating and managing PyTorch DataLoaders with custom batch sizes and ratios. It is especially useful for training neural networks with dynamic batch sizes: you can divide a dataset into subsets, assign each subset its own batch size, and merge the result into a single DataLoader ready for training.

Features

- Split datasets based on custom ratios and batch sizes.

- Create DataLoaders for each subset with different batch sizes.

- Combine multiple DataLoaders into a single DataLoader.

- Plot the samples of a selected batch in the created DataLoader.
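To make the split-and-merge idea concrete, here is a minimal sketch written with plain PyTorch utilities only. It is not dynbatcher's implementation: the helper names `split_lengths` and `merged_batches` are hypothetical, and visiting the subsets one after another is an assumption made for illustration, since the package may order its batches differently.

```python
import itertools

import torch
from torch.utils.data import DataLoader, TensorDataset, random_split


def split_lengths(n_samples, ratios):
    """Turn ratios into integer subset sizes that sum to n_samples."""
    lengths = [round(n_samples * r) for r in ratios]
    lengths[-1] = n_samples - sum(lengths[:-1])  # last subset absorbs rounding
    return lengths


def merged_batches(dataset, batch_sizes, ratios, seed=0):
    """Yield batches subset by subset, each subset with its own batch size.

    Hypothetical helper: chaining the loaders sequentially is an assumption,
    not necessarily how dynbatcher merges them.
    """
    generator = torch.Generator().manual_seed(seed)
    lengths = split_lengths(len(dataset), ratios)
    subsets = random_split(dataset, lengths, generator=generator)
    loaders = [
        DataLoader(subset, batch_size=batch_size, shuffle=True)
        for subset, batch_size in zip(subsets, batch_sizes)
    ]
    return itertools.chain.from_iterable(loaders)


# Toy stand-in for MNIST: 1000 random 1x28x28 "images" with labels 0..9.
toy = TensorDataset(torch.randn(1000, 1, 28, 28), torch.randint(0, 10, (1000,)))
sizes = [x.shape[0] for x, _ in merged_batches(toy, [32, 64, 128], [0.5, 0.3, 0.2])]
```

Every sample appears exactly once per pass, and the batch size changes as iteration moves from one subset to the next. The last batch of each subset may be smaller than its nominal batch size.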

Installation

Install dynbatcher using pip:

pip install dynbatcher

Usage

Importing the package

from torch.utils.data import DataLoader

import torchvision

import torchvision.transforms as transforms

import dynbatcher

# Define batch sizes and ratios

ratios = [0.5, 0.3, 0.2] # Corresponding ratios for each subset

batch_sizes_train = [32, 64, 128] # Corresponding batch sizes for each subset

# Add transforms and download datasets

transform = transforms.Compose([transforms.ToTensor(), transforms.Normalize((0.5,), (0.5,))])

trainset = torchvision.datasets.MNIST(root='./data', train=True, download=True, transform=transform)

testset = torchvision.datasets.MNIST(root='./data', train=False, download=True, transform=transform)

# Set dataloaders and use DBS Merger & Generator

trainloader = dynbatcher.load_merged_trainloader(trainset, batch_sizes_train, ratios, print_info=True)

testloader = DataLoader(testset, batch_size=64, shuffle=False)

# Example: Access and print information for the selected batch, and display samples

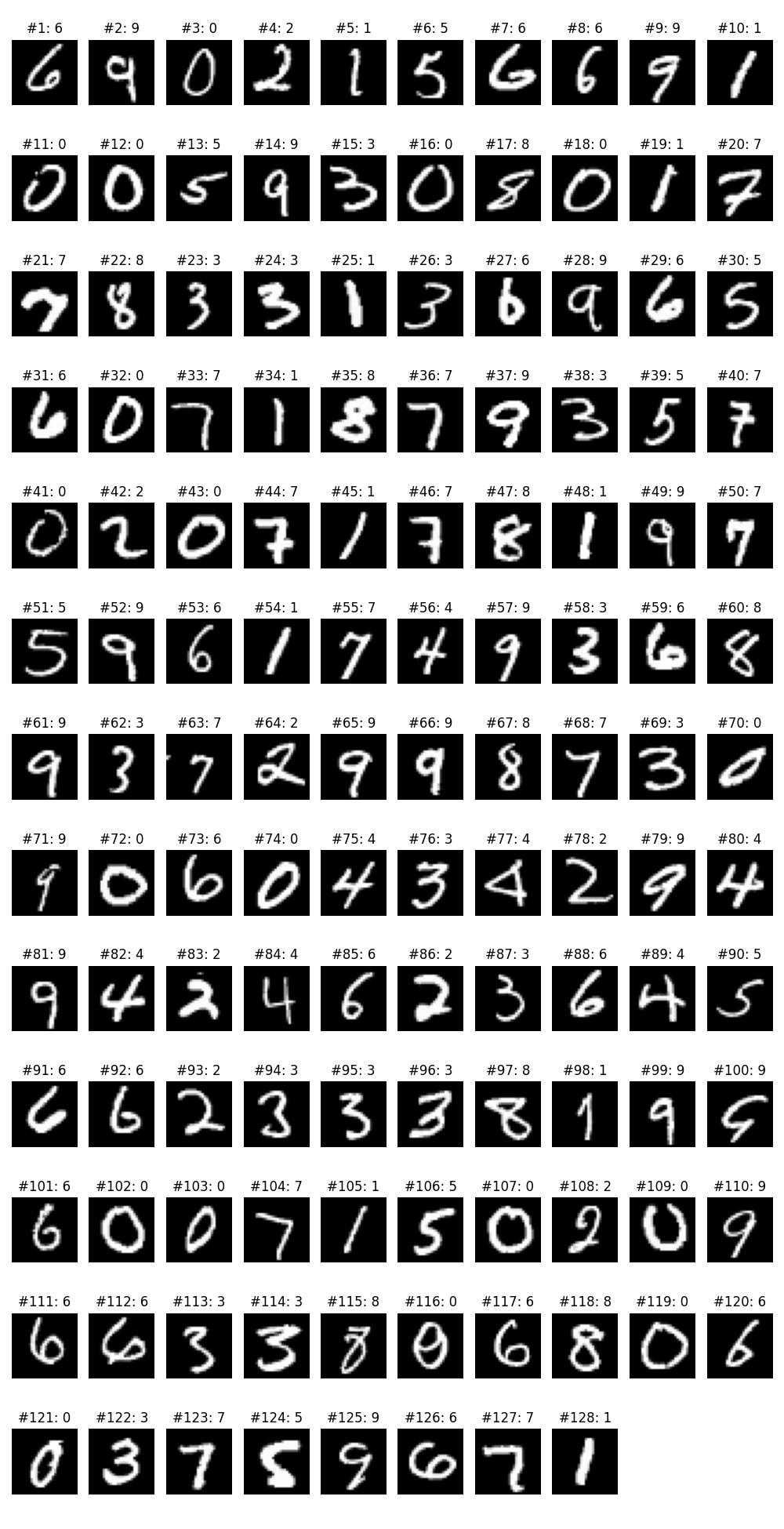

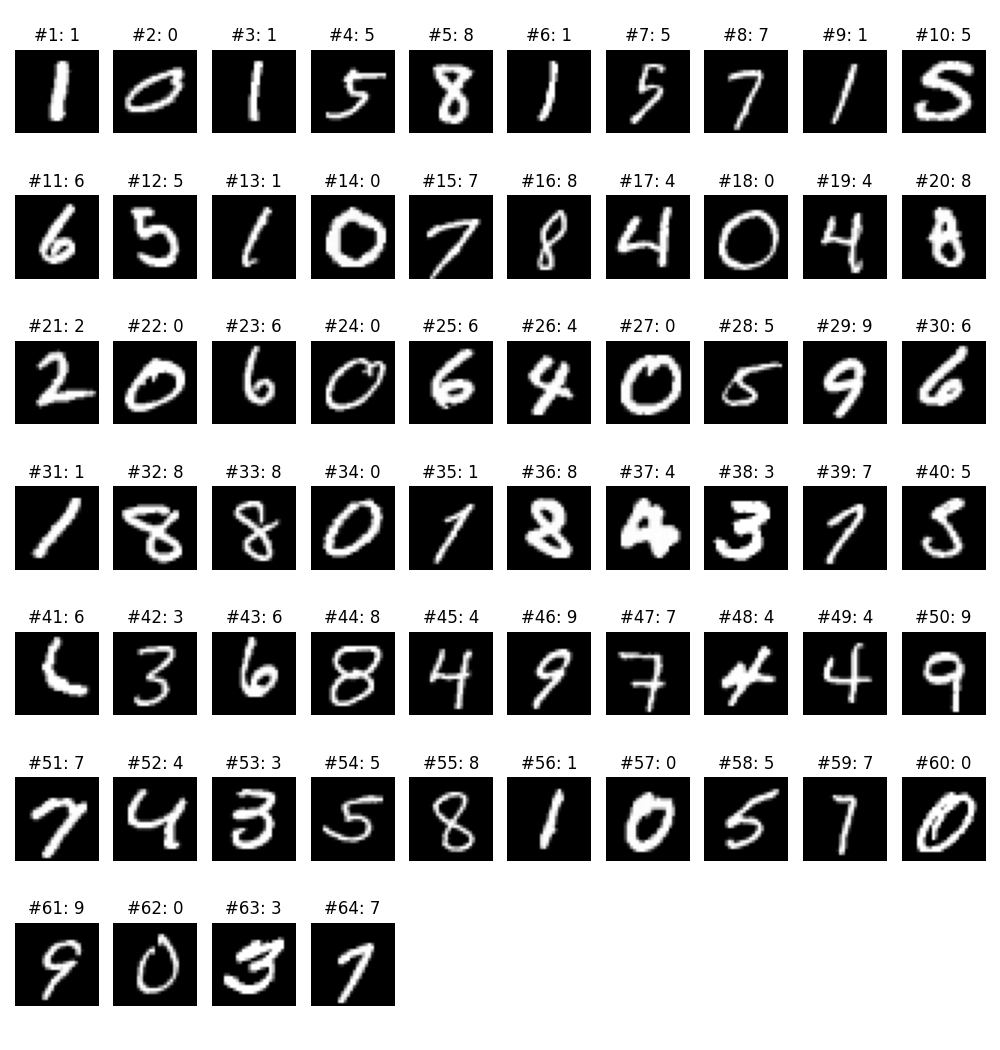

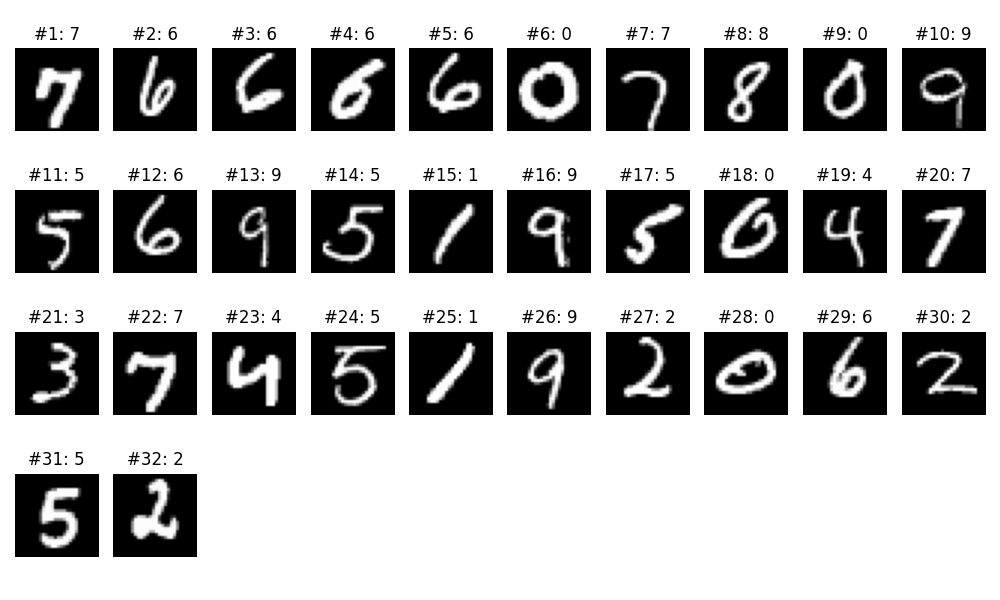

dynbatcher.print_batch_info(trainloader, index=127, display_samples=True)

Parameters

- batch_sizes_train: The batch sizes to use, one per subset of the dataset.

- ratios: The fraction of the dataset allocated to each batch size. If you do not specify ratios, an equal number of samples is allocated to each batch size.
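The arithmetic behind these two parameters can be sketched in pure Python. The helpers `resolve_ratios` and `plan_batches` below are hypothetical and not part of dynbatcher. The checks that the ratios match the batch sizes in length and sum to 1, and the choice to keep the final partial batch of each subset, are assumptions made for illustration.

```python
import math


def resolve_ratios(batch_sizes, ratios=None):
    """Return one ratio per batch size, defaulting to equal shares."""
    if ratios is None:
        return [1.0 / len(batch_sizes)] * len(batch_sizes)
    if len(ratios) != len(batch_sizes):
        raise ValueError("ratios and batch_sizes must have the same length")
    if not math.isclose(sum(ratios), 1.0):
        raise ValueError("ratios must sum to 1")  # assumed requirement
    return list(ratios)


def plan_batches(n_samples, batch_sizes, ratios=None):
    """List (subset_size, batch_size, n_batches) for each subset."""
    ratios = resolve_ratios(batch_sizes, ratios)
    sizes = [round(n_samples * r) for r in ratios]
    sizes[-1] = n_samples - sum(sizes[:-1])  # last subset absorbs rounding
    # ceil keeps the final, possibly smaller, batch of each subset
    return [(s, b, math.ceil(s / b)) for s, b in zip(sizes, batch_sizes)]


# The 60,000-sample MNIST training set with the settings from the usage example.
mnist_plan = plan_batches(60000, [32, 64, 128], [0.5, 0.3, 0.2])
# Same batch sizes without ratios: each subset gets an equal share.
equal_plan = plan_batches(60000, [32, 64, 128])
```

Under these assumptions, the usage example's settings give subsets of 30,000, 18,000, and 12,000 samples, which yield 938, 282, and 94 batches respectively.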

Functions

- load_merged_trainloader: Divides the given dataset according to the batch sizes and ratios you choose, then combines the subsets into a single DataLoader.

- print_batch_info: Displays information about any batch you select by index and, optionally, its samples so you can examine them.
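For readers curious how a batch can be looked up by index, the following sketch shows one way to do it for any iterable loader. It does not reproduce print_batch_info: `get_batch` and `describe_batch` are hypothetical helpers, and the fields they report are chosen for illustration.

```python
from itertools import islice

import torch
from torch.utils.data import DataLoader, TensorDataset


def get_batch(loader, index):
    """Return the batch at position `index` in one pass over `loader`."""
    batch = next(islice(iter(loader), index, None), None)
    if batch is None:
        raise IndexError(f"loader yields fewer than {index + 1} batches")
    return batch


def describe_batch(loader, index):
    """Summarise one batch: its size, input shape, and label counts."""
    inputs, labels = get_batch(loader, index)
    values, counts = torch.unique(labels, return_counts=True)
    return {
        "index": index,
        "batch_size": inputs.shape[0],
        "input_shape": tuple(inputs.shape[1:]),
        "label_counts": dict(zip(values.tolist(), counts.tolist())),
    }


# Toy data: 100 samples shaped like MNIST images, labels cycling 0..9.
toy = TensorDataset(torch.zeros(100, 1, 28, 28), torch.arange(100) % 10)
loader = DataLoader(toy, batch_size=32, shuffle=False)
info = describe_batch(loader, index=3)  # the last, partial batch of 4 samples
```

Because a DataLoader is not indexable, this approach loads every batch before the requested one, so the cost grows with the index. With a shuffling loader, the batch found at a given index also differs from one pass to the next.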

Investigating Batch Samples

Contributing

Contributions are welcome! Please open an issue or submit a pull request on GitHub.

License

This project is licensed under the MIT License.

Acknowledgments

Inspired by the need for flexible and efficient DataLoader management in PyTorch.



File details

Details for the file dynbatcher-1.1.0.tar.gz.

File metadata

- Download URL: dynbatcher-1.1.0.tar.gz

- Upload date:

- Size: 7.7 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/5.1.1 CPython/3.10.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 91365c19a7d6a04bcd8c54423d4a415975e9b2503423746e876ea61f655f601f |
| MD5 | a6568cdeb4a8ca6b8ee62d73010e241a |
| BLAKE2b-256 | f64c3a4bbdffd0ba0506f53ffe14b68f2d894d6f8827cf49e875ed60eee422d1 |

File details

Details for the file dynbatcher-1.1.0-py3-none-any.whl.

File metadata

- Download URL: dynbatcher-1.1.0-py3-none-any.whl

- Upload date:

- Size: 7.2 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/5.1.1 CPython/3.10.12

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | ad277b8eb191ef31c17aa692b8e69b7f5b1761b4e1490e712eddfd2232556cca |
| MD5 | 95fdaac36608e662f6e6b24288605304 |
| BLAKE2b-256 | e8dcb56199dfc8622d44a29392f631926ede32f0baec76af4283c44c19d0b988 |