Embed anything at lightning speed

Highly Performant, Modular and Memory Safe

Ingestion, Inference and Indexing in Rust 🦀

EmbedAnything is a minimalist yet highly performant, modular, lightning-fast, lightweight, multisource, multimodal, and local embedding pipeline built in Rust. Whether you're working with text, images, audio, PDFs, websites, or other media, EmbedAnything streamlines generating embeddings from these sources and streaming them to a vector database with memory-efficient indexing. It supports dense, sparse, ONNX, model2vec and late-interaction embeddings, offering flexibility for a wide range of use cases.

🚀 Key Features

- No Dependency on PyTorch: Easy to deploy on the cloud, with a low memory footprint.

- Highly Modular: Choose any vector DB adapter for RAG with 1 word of code.

- Candle Backend: Supports BERT, Jina, ColPali, Splade, ModernBERT, Reranker, Qwen.

- ONNX Backend: Supports BERT, Jina, ColPali, ColBERT, Splade, Reranker, ModernBERT, Qwen.

- Cloud Embedding Models: Supports OpenAI, Cohere, and Gemini.

- Multimodality: Works with text sources such as PDF, TXT and MD files, JPG images, and WAV audio.

- GPU Support: Hardware acceleration on GPU as well.

- Chunking: Built-in chunking methods such as semantic chunking and late-chunking.

- Vector Streaming: File processing, indexing and inference run on separate threads, which reduces latency.

💡What is Vector Streaming

Embedding models are computationally expensive and time-consuming. By separating document preprocessing from model inference, you can significantly reduce pipeline latency and improve throughput.

Vector streaming transforms a sequential bottleneck into an efficient, concurrent workflow.

The embedding process runs separately from the main process. Rust's MPSC channels keep performance high, and embeddings do not pile up in memory because they are saved directly to the vector database. See our blog for details.
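
The idea can be sketched with Python's standard library. This is only a conceptual illustration of the producer/consumer pattern, not EmbedAnything's implementation (which the text above attributes to Rust MPSC channels). `fake_embed`, the `index` list standing in for a vector database, and the buffer sizes are all hypothetical.

```python
import queue
import threading


def fake_embed(chunk):
    # Hypothetical stand-in for model inference.
    return [float(len(chunk)), float(sum(map(ord, chunk)) % 97)]


def producer(chunks, channel):
    # Embed chunks one by one and push them into the bounded channel.
    for chunk in chunks:
        channel.put((chunk, fake_embed(chunk)))
    channel.put(None)  # sentinel: no more work


def consumer(channel, index, flush_every=2):
    # Drain the channel and write to the "database" in small batches.
    batch = []
    while True:
        item = channel.get()
        if item is None:
            break
        batch.append(item)
        if len(batch) >= flush_every:
            index.extend(batch)
            batch.clear()
    index.extend(batch)  # flush whatever is left


chunks = ["alpha", "beta", "gamma", "delta", "epsilon"]
channel = queue.Queue(maxsize=4)  # bounded buffer applies back-pressure
index = []  # stand-in for a vector database

workers = [
    threading.Thread(target=producer, args=(chunks, channel)),
    threading.Thread(target=consumer, args=(channel, index)),
]
for worker in workers:
    worker.start()
for worker in workers:
    worker.join()

print(len(index), "embeddings indexed")
```

Because the channel is bounded, the embedding side blocks when the indexing side falls behind, so only a small number of embeddings are held in memory at any time.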

🦀 Why Embed Anything

➡️Faster execution.

➡️No PyTorch dependency, hence a low memory footprint and easy cloud deployment.

➡️True multithreading

➡️Running embedding models locally and efficiently

➡️In-built chunking methods like semantic, late-chunking

➡️Supports a range of models: dense, sparse, late-interaction, reranker, ModernBERT.

➡️Memory management: Rust manages memory through compile-time ownership rules, helping to prevent the memory leaks and crashes that can plague other languages.

🍓 Our Past Collaborations:

We have collaborated with reputed enterprises like Elastic, Weaviate, SingleStore, Milvus and Analytics Vidhya Datahours.

You can get in touch with us for further collaborations.

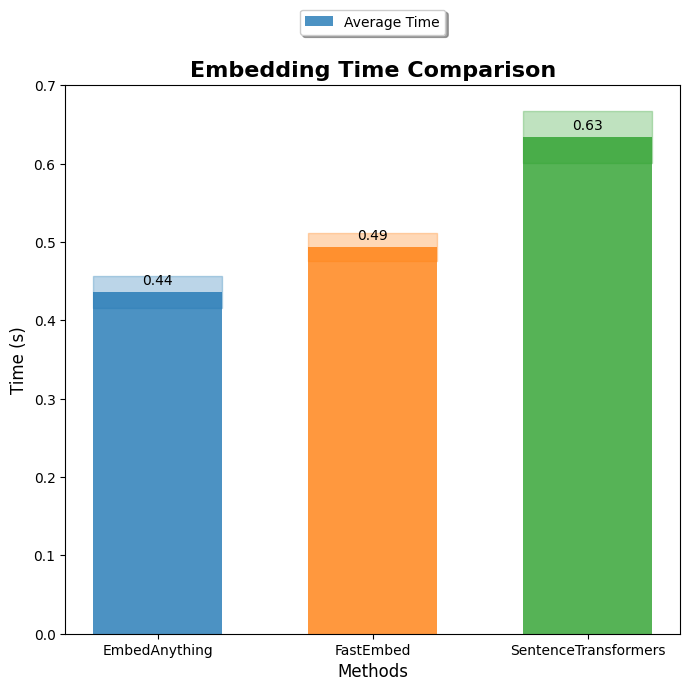

Benchmarks

Inference speed benchmarks.

These measure only embedding model inference speed on ONNX Runtime (see the benchmark code).

Benchmarks with other frameworks coming soon!! 🚀

⭐ Supported Models

We support any Hugging Face model on Candle. We also support ONNX Runtime for BERT and ColPali.

How to add a custom model on Candle: from_pretrained_hf

from embed_anything import EmbeddingModel, WhichModel, TextEmbedConfig
import embed_anything

# Load a custom BERT model from Hugging Face
model = EmbeddingModel.from_pretrained_hf(
    model_id="sentence-transformers/all-MiniLM-L12-v2"
)

# Configure embedding parameters
config = TextEmbedConfig(
    chunk_size=1000,  # Maximum characters per chunk
    batch_size=32,  # Number of chunks to process in parallel
    splitting_strategy="sentence",  # How to split text: "sentence", "word", or "semantic"
)

# Embed a file (supports PDF, TXT, MD, etc.)
data = embed_anything.embed_file("path/to/your/file.pdf", embedder=model, config=config)

# Access the embeddings and text
for item in data:
    print(f"Text: {item.text[:100]}...")  # First 100 characters
    print(f"Embedding shape: {len(item.embedding)}")
    print(f"Metadata: {item.metadata}")
    print("---" * 20)
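
To make `chunk_size` and `batch_size` more concrete, here is a minimal sketch of sentence-based chunking followed by batching. It is not the library's splitter: `split_into_chunks` and `batched` are hypothetical helpers, and the regex sentence split is a simplification.

```python
import re


def split_into_chunks(text, chunk_size):
    # Greedily pack whole sentences into chunks of up to chunk_size characters.
    # A single sentence longer than chunk_size is kept whole as its own chunk.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks, current = [], ""
    for sentence in sentences:
        candidate = f"{current} {sentence}".strip()
        if current and len(candidate) > chunk_size:
            chunks.append(current)
            current = sentence
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


def batched(items, batch_size):
    # Yield consecutive groups of at most batch_size items.
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


text = (
    "Rust is fast. Python is friendly. "
    "Embeddings map text to vectors. Chunks keep inputs small."
)
chunks = split_into_chunks(text, chunk_size=40)
batches = list(batched(chunks, batch_size=2))

for number, batch in enumerate(batches, start=1):
    print(f"Batch {number}: {batch}")
```

Smaller chunks give more precise retrieval units at the cost of more model calls; larger batches amortize per-call overhead but use more memory.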

| Model | HF link |
|---|---|
| Jina | Jina Models |
| BERT | All BERT-based models |
| CLIP | openai/clip-* |
| Whisper | OpenAI Whisper models |
| ColPali | starlight-ai/colpali-v1.2-merged-onnx |
| ColBERT | answerdotai/answerai-colbert-small-v1, jinaai/jina-colbert-v2 and more |
| Splade | Splade Models and other Splade-like models |
| Model2Vec | model2vec, minishlab/potion-base-8M |
| Qwen3-Embedding | Qwen/Qwen3-Embedding-0.6B |
| Reranker | Jina Reranker Models, Xenova/bge-reranker, Qwen/Qwen3-Reranker-4B |

Splade Models (Sparse Embeddings)

Sparse embeddings are useful for keyword-based retrieval and hybrid search scenarios.

from embed_anything import EmbeddingModel, WhichModel, TextEmbedConfig
import embed_anything

# Load a SPLADE model for sparse embeddings
model = EmbeddingModel.from_pretrained_hf(
    model_id="prithivida/Splade_PP_en_v1"
)

# Configure the embedding process
config = TextEmbedConfig(chunk_size=1000, batch_size=32)

# Embed text files
data = embed_anything.embed_file("test_files/document.txt", embedder=model, config=config)

# Sparse embeddings are useful for hybrid search (combining dense and sparse)
for item in data:
    print(f"Text: {item.text}")
    print(f"Sparse embedding (non-zero values): {sum(1 for x in item.embedding if x != 0)}")
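
As a rough illustration of hybrid search, the sketch below blends a dense cosine score with a sparse dot product. It is independent of EmbedAnything: the `hybrid_score` helper, the `alpha` weight, and the dict-based sparse representation are assumptions made for this example.

```python
import math


def cosine(a, b):
    # Cosine similarity between two dense vectors.
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def sparse_dot(a, b):
    # a and b map token index -> weight and store only non-zero entries.
    if len(a) > len(b):
        a, b = b, a
    return sum(weight * b.get(index, 0.0) for index, weight in a.items())


def hybrid_score(q_dense, d_dense, q_sparse, d_sparse, alpha=0.5):
    # alpha weights the dense score, (1 - alpha) the sparse score.
    return alpha * cosine(q_dense, d_dense) + (1 - alpha) * sparse_dot(q_sparse, d_sparse)


query_dense, query_sparse = [1.0, 0.0], {3: 0.5, 7: 2.0}
documents = {
    "A": ([1.0, 0.0], {3: 1.0}),  # close in dense space, weak keyword overlap
    "B": ([0.0, 1.0], {7: 1.0}),  # far in dense space, strong keyword overlap
}
scores = {
    name: hybrid_score(query_dense, dense, query_sparse, sparse)
    for name, (dense, sparse) in documents.items()
}
ranking = sorted(scores, key=scores.get, reverse=True)
print(ranking, scores)
```

Dense and sparse scores live on different scales, so practical systems usually normalize them or fuse ranks instead of adding raw values as done here.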

ONNX-Runtime: from_pretrained_onnx

ONNX models provide faster inference and lower memory usage. Use the ONNXModel enum for pre-configured models or provide a custom model path.

BERT Models

from embed_anything import EmbeddingModel, WhichModel, ONNXModel, Dtype, TextEmbedConfig
import embed_anything

# Option 1: Use a pre-configured ONNX model (recommended)
model = EmbeddingModel.from_pretrained_onnx(
    WhichModel.Bert,
    model_id=ONNXModel.BGESmallENV15Q  # Quantized BGE model for faster inference
)

# Option 2: Use a custom ONNX model from Hugging Face
model = EmbeddingModel.from_pretrained_onnx(
    WhichModel.Bert,
    model_id="onnx_model_link",
    dtype=Dtype.F16  # Use half precision for faster inference
)

# Embed files with ONNX model
config = TextEmbedConfig(chunk_size=1000, batch_size=32)
data = embed_anything.embed_file("test_files/document.pdf", embedder=model, config=config)

ModernBERT (Quantized)

ModernBERT is a state-of-the-art BERT variant optimized for efficiency.

from embed_anything import EmbeddingModel, WhichModel, ONNXModel, Dtype
import embed_anything

# Load quantized ModernBERT for maximum efficiency
model = EmbeddingModel.from_pretrained_onnx(
    WhichModel.Bert,
    model_id=ONNXModel.ModernBERTBase,
    dtype=Dtype.Q4F16  # 4-bit quantized for minimal memory usage
)

# Use it like any other model
data = embed_anything.embed_file("test_files/document.pdf", embedder=model)

ColPali (Document Embedding)

ColPali is optimized for document and image-text embedding tasks.

from embed_anything import ColpaliModel
import numpy as np

# Load ColPali ONNX model
model = ColpaliModel.from_pretrained_onnx(
    "starlight-ai/colpali-v1.2-merged-onnx",
    None
)

# Embed a PDF file (ColPali processes pages as images)
data = model.embed_file("test_files/document.pdf", batch_size=1)

# Query the embedded document
query = "What is the main topic?"
query_embedding = model.embed_query(query)

# Calculate similarity scores
file_embeddings = np.array([e.embedding for e in data])
query_emb = np.array([e.embedding for e in query_embedding])

# Find most relevant pages
scores = np.einsum("bnd,csd->bcns", query_emb, file_embeddings).max(axis=3).sum(axis=2).squeeze()
top_pages = np.argsort(scores)[::-1][:5]

for page_idx in top_pages:
    print(f"Page {data[page_idx].metadata['page_number']}: {data[page_idx].text[:200]}")

ColBERT (Late-Interaction Embeddings)

ColBERT provides token-level embeddings for fine-grained semantic matching.

from embed_anything import ColbertModel
import numpy as np

# Load ColBERT ONNX model
model = ColbertModel.from_pretrained_onnx(
    "jinaai/jina-colbert-v2",
    path_in_repo="onnx/model.onnx"
)

# Embed sentences
sentences = [
    "The quick brown fox jumps over the lazy dog",
    "The cat is sleeping on the mat",
    "The dog is barking at the moon",
    "I love pizza",
    "The dog is sitting in the park"
]

# ColBERT returns token-level embeddings
embeddings = model.embed(sentences, batch_size=2)

# Each embedding is a matrix: [num_tokens, embedding_dim]
for i, emb in enumerate(embeddings):
    print(f"Sentence {i+1}: {sentences[i]}")
    print(f"Embedding shape: {emb.shape}")  # Shape: (num_tokens, embedding_dim)
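
Token-level embeddings are typically compared with a late-interaction ("MaxSim") score: each query token is matched to its most similar document token, and those maxima are summed. This is the same max-then-sum pattern the ColPali example computes with `np.einsum`. The sketch below uses tiny hand-written vectors instead of model output, and the `maxsim` helper is hypothetical.

```python
def dot(u, v):
    return sum(x * y for x, y in zip(u, v))


def maxsim(query_tokens, doc_tokens):
    # For each query token take its best-matching document token, then sum.
    return sum(max(dot(q, d) for d in doc_tokens) for q in query_tokens)


# Toy token embeddings with shape [num_tokens, embedding_dim]
query = [[1.0, 0.0], [0.0, 1.0]]
doc_a = [[1.0, 0.0], [0.5, 0.5]]
doc_b = [[0.0, 1.0], [0.0, 0.5], [0.2, 0.9]]

scores = {"a": maxsim(query, doc_a), "b": maxsim(query, doc_b)}
best = max(scores, key=scores.get)
print(best, scores)
```

Because every query token picks its own best match, documents of different lengths can be scored without pooling them to a single vector first.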

ReRankers

Rerankers improve retrieval quality by re-scoring candidate documents.

from embed_anything import Reranker, Dtype, RerankerResult, DocumentRank

# Load a reranker model
reranker = Reranker.from_pretrained(
    "jinaai/jina-reranker-v1-turbo-en",
    dtype=Dtype.F16
)

# Query and candidate documents
query = "What is the capital of France?"
candidates = [
    "France is a country in Europe.",
    "Paris is the capital of France.",
    "The Eiffel Tower is in Paris."
]

# Rerank documents (returns top-k results)
results: list[RerankerResult] = reranker.rerank(
    [query],
    candidates,
    top_k=2  # Return top 2 results
)

# Access reranked results
for result in results:
    documents: list[DocumentRank] = result.documents
    for doc in documents:
        print(f"Score: {doc.score:.4f} | Text: {doc.text}")

Cloud Embedding Models (Cohere Embed v4)

Use cloud models for high-quality embeddings without local model deployment.

from embed_anything import EmbeddingModel, WhichModel
import embed_anything
import os

# Set your API key
os.environ["COHERE_API_KEY"] = "your-api-key-here"

# Initialize the cloud model
model = EmbeddingModel.from_pretrained_cloud(
    WhichModel.CohereVision,
    model_id="embed-v4.0"
)

# Use it like any other model
data = embed_anything.embed_file("test_files/document.pdf", embedder=model)

Qwen 3 - Embedding

Qwen3 supports over 100 languages including various programming languages.

from embed_anything import EmbeddingModel, WhichModel, TextEmbedConfig, Dtype
import numpy as np

# Initialize Qwen3 embedding model
model = EmbeddingModel.from_pretrained_hf(
    WhichModel.Qwen3,
    model_id="Qwen/Qwen3-Embedding-0.6B",
    dtype=Dtype.F32
)

# Configure embedding
config = TextEmbedConfig(
    chunk_size=1000,
    batch_size=2,
    splitting_strategy="sentence"
)

# Embed a file
data = model.embed_file("test_files/document.pdf", config=config)

# Query embedding
query = "Which GPU is used for training"
query_embedding = np.array(model.embed_query([query])[0].embedding)

# Calculate similarities
embedding_array = np.array([e.embedding for e in data])
similarities = np.matmul(query_embedding, embedding_array.T)

# Get top results
top_5_indices = np.argsort(similarities)[-5:][::-1]

for idx in top_5_indices:
    print(f"Score: {similarities[idx]:.4f} | {data[idx].text[:200]}")
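
A raw dot product, as used above, equals cosine similarity only when the vectors have unit length. Whether a given model's output is already normalized depends on the model, so the sketch below normalizes explicitly. The `normalize` and `top_k` helpers and the toy vectors are assumptions made for illustration.

```python
import math


def normalize(vector):
    # Scale a vector to unit length (zero vectors are returned unchanged).
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector] if norm else list(vector)


def top_k(query, docs, k):
    # Rank documents by cosine similarity and keep the k best (score, index) pairs.
    q = normalize(query)
    scored = [
        (sum(a * b for a, b in zip(q, normalize(d))), i)
        for i, d in enumerate(docs)
    ]
    return sorted(scored, reverse=True)[:k]


query = [1.0, 1.0]
docs = [[3.0, 4.0], [10.0, 0.0], [-1.0, 0.0]]

ranking = top_k(query, docs, k=2)
raw_dots = [sum(a * b for a, b in zip(query, d)) for d in docs]

print("cosine ranking:", ranking)
print("raw dot products:", raw_dots)
```

In this toy data the raw dot product favors document 1 simply because its vector is long, while cosine similarity ranks document 0 first.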

For Semantic Chunking

Semantic chunking preserves meaning by splitting text at semantically meaningful boundaries rather than fixed sizes.

from embed_anything import EmbeddingModel, WhichModel, TextEmbedConfig
import embed_anything

# Main embedding model for generating final embeddings
model = EmbeddingModel.from_pretrained_hf(
    WhichModel.Bert,
    model_id="sentence-transformers/all-MiniLM-L12-v2"
)

# Semantic encoder for determining chunk boundaries
# This model analyzes text to find natural semantic breaks
semantic_encoder = EmbeddingModel.from_pretrained_hf(
    model_id="jinaai/jina-embeddings-v2-small-en"
)

# Configure semantic chunking
config = TextEmbedConfig(
    chunk_size=1000,  # Target chunk size
    batch_size=32,  # Batch processing size
    splitting_strategy="semantic",  # Use semantic splitting
    semantic_encoder=semantic_encoder  # Model for semantic analysis
)

# Embed with semantic chunking
data = embed_anything.embed_file("test_files/document.pdf", embedder=model, config=config)

# Chunks will be split at semantically meaningful boundaries
for item in data:
    print(f"Chunk: {item.text[:200]}...")
    print("---" * 20)
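
One common way to find semantic boundaries is to compare neighbouring sentences and start a new chunk when their similarity drops below a threshold. The sketch below illustrates that idea only; it is not EmbedAnything's algorithm. `toy_embed` is a hypothetical bag-of-words stand-in for a real semantic encoder, and the threshold value is arbitrary.

```python
import math
import re
from collections import Counter


def toy_embed(sentence):
    # Hypothetical stand-in for a semantic encoder: bag-of-words counts.
    return Counter(re.findall(r"[a-z]+", sentence.lower()))


def cosine(a, b):
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def semantic_chunks(sentences, threshold):
    # Start a new chunk whenever adjacent sentences are less similar than threshold.
    if not sentences:
        return []
    groups = [[sentences[0]]]
    for previous, current in zip(sentences, sentences[1:]):
        if cosine(toy_embed(previous), toy_embed(current)) < threshold:
            groups.append([current])
        else:
            groups[-1].append(current)
    return [" ".join(group) for group in groups]


sentences = [
    "The cat sat on the mat.",
    "The cat chased the mouse.",
    "Stock prices fell sharply today.",
    "Stock markets fell again.",
]
chunks = semantic_chunks(sentences, threshold=0.3)
for chunk in chunks:
    print(chunk)
```

With a real encoder the same loop applies; only `toy_embed` changes, and the threshold would need tuning for that model.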

For Late-Chunking

Late-chunking splits text into smaller units first, then combines them during embedding for better context preservation.

from embed_anything import EmbeddingModel, WhichModel, TextEmbedConfig, EmbedData

# Load your embedding model
model = EmbeddingModel.from_pretrained_hf(
    model_id="sentence-transformers/all-MiniLM-L12-v2"
)

# Configure late-chunking
config = TextEmbedConfig(
    chunk_size=1000,  # Maximum chunk size
    batch_size=8,  # Batch size for processing
    splitting_strategy="sentence",  # Split by sentences first
    late_chunking=True,  # Enable late-chunking
)

# Embed a file with late-chunking
data: list[EmbedData] = model.embed_file("test_files/attention.pdf", config=config)

# Late-chunking helps preserve context across sentence boundaries
for item in data:
    print(f"Text: {item.text}")
    print(f"Embedding dimension: {len(item.embedding)}")
    print("---" * 20)
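
In the commonly described form of late chunking, token embeddings are computed over the longer context first and then pooled per chunk, so each chunk vector carries context from its neighbours. The sketch below assumes that form; EmbedAnything's internals may differ. The token vectors are hand-written placeholders and `late_chunk` is a hypothetical helper.

```python
def mean_pool(vectors):
    # Average a list of equal-length vectors component-wise.
    dim = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]


def late_chunk(token_embeddings, spans):
    # spans holds (start, end) token index pairs, with end exclusive.
    return [mean_pool(token_embeddings[start:end]) for start, end in spans]


# Placeholder for contextualized token embeddings of one whole document.
token_embeddings = [
    [1.0, 0.0],
    [3.0, 2.0],
    [0.0, 4.0],
    [2.0, 2.0],
    [4.0, 0.0],
]
# Two chunks: tokens 0-1 and tokens 2-4.
spans = [(0, 2), (2, 5)]

chunk_vectors = late_chunk(token_embeddings, spans)
print(chunk_vectors)
```

The key difference from ordinary chunking is the order of operations: the model sees the full context before the text is cut into chunks.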

🧑🚀 Getting Started

💚 Installation

pip install embed-anything

For GPU support and special models like ColPali:

pip install embed-anything-gpu

🚧❌ If you see a CUDA error while running on Windows, run the following in Python:

import os
os.add_dll_directory("C:/Program Files/NVIDIA GPU Computing Toolkit/CUDA/v12.6/bin")
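
A slightly more defensive variant is sketched below. It assumes the same install path as above (adjust the version folder to match your CUDA toolkit) and wraps the call in a hypothetical helper, `add_cuda_dll_directory`, so the script also runs unchanged on Linux and macOS. Calling it before importing `embed_anything` is an assumption based on how DLL search paths generally work.

```python
import os
import sys

# Assumed install location; change the version folder to match your toolkit.
CUDA_BIN = "C:/Program Files/NVIDIA GPU Computing Toolkit/CUDA/v12.6/bin"


def add_cuda_dll_directory(path=CUDA_BIN):
    # os.add_dll_directory exists only on Windows (Python 3.8+),
    # and it raises an error if the directory is missing.
    if sys.platform != "win32" or not os.path.isdir(path):
        return False
    os.add_dll_directory(path)
    return True


registered = add_cuda_dll_directory()
print("CUDA DLL directory registered:", registered)
```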

📒 Notebooks

- End-to-End Retrieval and Reranking using VectorDB Adapters

- ColPali-Onnx

- Adapters

- Qwen3 Embeddings

- Benchmarks

Usage

➡️ Usage for version 0.3 and later

Basic Text Embedding

from embed_anything import EmbeddingModel, WhichModel, TextEmbedConfig
import embed_anything

# Load a model from Hugging Face
model = EmbeddingModel.from_pretrained_local(
    WhichModel.Bert,
    model_id="sentence-transformers/all-MiniLM-L12-v2"
)

# Simple file embedding with default config
data = embed_anything.embed_file("test_files/test.pdf", embedder=model)

# Access results
for item in data:
    print(f"Text chunk: {item.text[:100]}...")
    print(f"Embedding shape: {len(item.embedding)}")

Advanced Usage with Configuration

from embed_anything import EmbeddingModel, WhichModel, TextEmbedConfig
import embed_anything

# Load model
model = EmbeddingModel.from_pretrained_hf(
    model_id="jinaai/jina-embeddings-v2-small-en"
)

# Configure embedding parameters
config = TextEmbedConfig(
    chunk_size=1000,  # Characters per chunk
    batch_size=32,  # Process 32 chunks at once
    buffer_size=64,  # Buffer size for streaming
    splitting_strategy="sentence"  # Split by sentences
)

# Embed with custom configuration
data = embed_anything.embed_file(
    "test_files/document.pdf",
    embedder=model,
    config=config
)

# Process embeddings
for item in data:
    print(f"Chunk: {item.text}")
    print(f"Metadata: {item.metadata}")

Embedding Queries

from embed_anything import EmbeddingModel, WhichModel
import embed_anything
import numpy as np

# Load model
model = EmbeddingModel.from_pretrained_hf(
    model_id="sentence-transformers/all-MiniLM-L12-v2"
)

# Embed a list of queries
queries = ["What is machine learning?", "How do neural networks work?"]
query_embeddings = embed_anything.embed_query(queries, embedder=model)

# Use embeddings for similarity search
for i, query_emb in enumerate(query_embeddings):
    print(f"Query: {queries[i]}")
    print(f"Embedding shape: {len(query_emb.embedding)}")

Embedding Directories

from embed_anything import EmbeddingModel, WhichModel, TextEmbedConfig
import embed_anything

# Load model
model = EmbeddingModel.from_pretrained_hf(
    model_id="sentence-transformers/all-MiniLM-L12-v2"
)

# Configure
config = TextEmbedConfig(chunk_size=1000, batch_size=32)

# Embed all files in a directory
data = embed_anything.embed_directory(
    "test_files/",
    embedder=model,
    config=config
)

print(f"Total chunks: {len(data)}")

Using ONNX Models

ONNX models provide faster inference and lower memory usage. You can use pre-configured models via the ONNXModel enum or load custom ONNX models.

Using Pre-configured ONNX Models (Recommended)

from embed_anything import EmbeddingModel, WhichModel, ONNXModel, Dtype, TextEmbedConfig
import embed_anything

# Use a pre-configured ONNX model (tested and optimized)
model = EmbeddingModel.from_pretrained_onnx(
    WhichModel.Bert,
    model_id=ONNXModel.BGESmallENV15Q,  # Quantized BGE model
    dtype=Dtype.Q4F16  # Quantized 4-bit float16
)

# Embed files
config = TextEmbedConfig(chunk_size=1000, batch_size=32)
data = embed_anything.embed_file("test_files/document.pdf", embedder=model, config=config)

Using Custom ONNX Models

For custom or fine-tuned models, specify the Hugging Face model ID and path to the ONNX file:

from embed_anything import EmbeddingModel, WhichModel, Dtype
import embed_anything

# Load a custom ONNX model from Hugging Face
model = EmbeddingModel.from_pretrained_onnx(
    WhichModel.Jina,
    hf_model_id="jinaai/jina-embeddings-v2-small-en",
    path_in_repo="model.onnx",  # Path to ONNX file in the repo
    dtype=Dtype.F16  # Use half precision
)

# Use the model
data = embed_anything.embed_file("test_files/document.pdf", embedder=model)

Note: Using pre-configured models (via ONNXModel enum) is recommended as these models are tested and optimized. For a complete list of supported ONNX models, see ONNX Models Guide.

⁉️FAQ

Do I need to know Rust to use or contribute to EmbedAnything?

No. EmbedAnything provides PyO3 bindings, so you can call any function from Python. To contribute, check out our guidelines and the adapter examples in the python folder.

How is it different from fastembed?

We provide both backends, Candle and ONNX. On top of that, we offer an end-to-end pipeline: you can ingest different data types, run inference with any supported model, and index into any vector database. Fastembed is just an ONNX wrapper.

We've received quite a few questions about why we're using Candle.

One of the main reasons is that Candle doesn't require any specific ONNX format models, which means it can work seamlessly with any Hugging Face model. This flexibility has been a key factor for us. However, we also recognize that we’ve been compromising a bit on speed in favor of that flexibility.

🚧 Contributing to EmbedAnything

First of all, thank you for taking the time to contribute to this project. We truly appreciate your contributions, whether it's bug reports, feature suggestions, or pull requests. Your time and effort are highly valued in this project. 🚀

This document provides guidelines and best practices to help you to contribute effectively. These are meant to serve as guidelines, not strict rules. We encourage you to use your best judgment and feel comfortable proposing changes to this document through a pull request.

🏎️ RoadMap

Accomplishments

One of the aims of EmbedAnything is to let AI engineers easily use state-of-the-art embedding models on typical files and documents. A lot has already been accomplished: the formats below are supported today, and a few more are still to come.

🖼️ Modalities and Source

We’re excited to share that we've expanded our platform to support multiple modalities, including:

- Audio files

- Markdown

- Websites

- Images

- Videos

- Graph

This gives you the flexibility to work with various data types all in one place! 🌐

⚙️ Performance

We now support both the Candle and ONNX backends.

➡️ Support for GGUF models

🫐Embeddings:

We have had multimodality in our infrastructure from day one. It already covers websites, images and audio, and we want to expand it further:

➡️ Graph embedding: build DeepWalk-style embeddings, depth-first, and word2vec

➡️ Video Embedding

➡️ Yolo Clip

🌊Expansion to other Vector Adapters

We currently support a wide range of vector databases for streaming embeddings, including:

- Elastic: thanks to the amazing and active Elastic team for the contribution

- Weaviate

- Pinecone

- Qdrant

- Milvus

- Chroma

How to add an adapter: https://starlight-search.com/blog/2024/02/25/adapter-development-guide.md

💥 Create WASM demos to integrate embedanything directly to the browser.

💜 Add support for ingestion from remote sources

➡️ Support for S3 bucket

➡️ Support for azure storage

➡️ Support for google drive/dropbox

But we're not stopping there! We're actively working to expand this list.

Want to contribute? If you’d like to add support for your favorite vector database, we’d love to have your help! Check out our contribution.md for guidelines, or feel free to reach out directly at turingatverge@gmail.com. Let's build something amazing together! 💡

AWESOME Projects built on EmbedAnything.

- A Rust-based, Cursor-like tool for chatting with your codebase: https://github.com/timpratim/cargo-chat

- A simple vector-based search engine that also supports ordinary text search: https://github.com/szuwgh/vectorbase2

- A semantic file tracker for the CLI, operated through a daemon and built with Rust: https://github.com/sam-salehi/sophist

- FogX-Store is a dataset store service that collects and serves large robotics datasets : https://github.com/J-HowHuang/FogX-Store

- A Dart Wrapper for EmbedAnything Crate: https://github.com/cotw-fabier/embedanythingindart

- Generate embeddings in Rust with tauri on MacOS : https://github.com/do-me/tauri-embedanything-ios

- RAG with EmbedAnything and Milvus: https://milvus.io/docs/v2.5.x/build_RAG_with_milvus_and_embedAnything.md

A big Thank you to all our StarGazers
