FAESM: A Drop-in Efficient Pytorch Implementation of ESM2

Flash Attention ESM (FAESM) is an efficient PyTorch implementation of Evolutionary Scale Modeling (ESM), a family of protein language models (pLMs) used for a range of protein sequence analysis tasks. Compared with the official ESM implementation, FAESM saves up to 60% of memory usage and up to 70% of inference time. The key features of FAESM are:

- Flash Attention: FAESM uses the FlashAttention implementation, by far the most efficient implementation of the self-attention mechanism.

- Scaled Dot-Product Attention (SDPA): FAESM also provides an implementation based on PyTorch Scaled Dot-Product Attention. It is a bit slower than FlashAttention, but it is compatible with most systems and still faster than the official ESM implementation.

- Same Checkpoint: FAESM is a drop-in replacement for ESM2, with the same API and checkpoints.
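Both attention backends compute the same quantity, softmax(QK^T / sqrt(d)) V, and differ in how the computation is carried out on the hardware. As a reference point, here is a minimal pure-Python sketch of scaled dot-product attention for a single head. It only illustrates the math and is not FAESM's code; the function name `sdpa` is made up for this example.

```python
import math


def sdpa(q, k, v):
    """Single-head scaled dot-product attention on nested lists.

    q: [L_q][d], k: [L_k][d], v: [L_k][d_v]. Returns [L_q][d_v].
    Hypothetical helper for illustration only (not part of FAESM).
    """
    d = len(q[0])
    scale = 1.0 / math.sqrt(d)
    out = []
    for q_row in q:
        # Scaled similarity between this query and every key.
        scores = [scale * sum(a * b for a, b in zip(q_row, k_row)) for k_row in k]
        # Numerically stable softmax over the keys.
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        # Weighted average of the value vectors.
        out.append(
            [sum(w * v_row[j] for w, v_row in zip(weights, v)) for j in range(len(v[0]))]
        )
    return out


# Two identical keys get equal weight, so the output is the mean of the values.
print(sdpa([[1.0, 0.0]], [[0.0, 0.0], [0.0, 0.0]], [[2.0], [4.0]]))  # [[3.0]]
```

Optimized kernels avoid materializing the full score matrix that this loop builds row by row, which is where the memory savings come from.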

Table of Contents

- FAESM: A Drop-in Efficient Pytorch Implementation of ESM2

- Table of Contents

- Installation

- Usage

- Benchmarking

- TODOs

- Appreciation

- Citation

Installation

- Install PyTorch 1.12 or above if you haven't already:

pip install torch

- [Optional] Install flash-attn if you want to use the flash attention implementation, which is the fastest and most efficient option. It can be a bit tricky to install, so you can skip this step without any problem; in that case FAESM uses PyTorch SDPA attention instead.

pip install flash-attn --no-build-isolation

Having trouble installing flash attention but still want to use it? A workaround is to use a Docker container. The official NVIDIA PyTorch containers include all the dependencies for flash attention.

- Install FAESM from PyPI:

# if you want to use flash attention

pip install faesm[flash_attn]

# if you want to forego flash attention and just use SDPA

pip install faesm
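After installing, it can be useful to confirm which attention backend your environment can support. The snippet below is a minimal sketch that only checks whether the `flash_attn` module (the import name of the flash-attn package) is importable. The helper name `pick_attention_backend` is hypothetical, and FAESM's own detection logic may differ.

```python
import importlib.util


def pick_attention_backend():
    """Return "flash_attn" if the flash-attn package is importable, else "sdpa".

    Hypothetical helper for illustration; not part of the FAESM API.
    """
    # find_spec looks the module up without importing it, so the check is
    # cheap and does not need a GPU.
    if importlib.util.find_spec("flash_attn") is not None:
        return "flash_attn"
    return "sdpa"


print("Attention backend available:", pick_attention_backend())
```

If this prints `sdpa`, the plain `pip install faesm` route above is the one that applies to your setup.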

Usage

ESM2

FAESM is a drop-in replacement for the official ESM implementation, so you can use the same code you would write for the official ESM. For example:

import torch

from faesm.esm import FAEsmForMaskedLM

# Step 1: Load the FAESM model

device = 'cuda' if torch.cuda.is_available() else 'cpu'

model = FAEsmForMaskedLM.from_pretrained("facebook/esm2_t33_650M_UR50D").to(device).eval().to(torch.float16)

# Step 2: Prepare a sample input sequence

sequence = "MAIVMGRWKGAR"

inputs = model.tokenizer(sequence, return_tensors="pt")

inputs = {k: v.to(device) for k, v in inputs.items()}

# Step 3: Run inference with the FAESM model

outputs = model(**inputs)

# Step 4: Process and print the output logits and repr.

print("Logits shape:", outputs['logits'].shape) # (batch_size, sequence_length, num_tokens)

print("Repr shape:", outputs['last_hidden_state'].shape) # (batch_size, sequence_length, hidden_size)

# Step 5: Star the repo if the code works for you!

ESM-C

Right after EvolutionaryScale released ESM-C, we followed up with a flash attention version of ESM-C in FAESM. You can run ESM-C with the following code:

from faesm.esmc import ESMC

sequence = ['MPGWFKKAWYGLASLLSFSSFI']

model = ESMC.from_pretrained("esmc_300m",use_flash_attn=True).to("cuda")

input_ids = model.tokenizer(sequence, return_tensors="pt")["input_ids"].to("cuda")

output = model(input_ids)

print(output.sequence_logits.shape)

print(output.embeddings.shape)

Training [WIP]

We are working on an example training script for MLM training on UniRef50. For now, you can use the same training logic you would use for the official ESM, since FAESM does not change the model architecture. We recommend flash attention for training: in the forward pass it unpads the input sequences to remove all padding tokens, which 1) speeds up training and reduces memory usage and 2) removes the need to batch sequences of similar length to avoid padding. If you can't install flash attention, SDPA is still a good alternative, as used in DPLM.
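To make the unpadding step concrete, here is a minimal pure-Python sketch of the idea: drop every padded position, concatenate the remaining tokens into one stream, and record cumulative sequence lengths so each sequence can still be located. This is an illustration under assumptions, not FAESM's implementation; the function name `unpad`, the variable names, and the token ids are chosen for this example.

```python
def unpad(input_ids, attention_mask):
    """Flatten a padded batch into one token stream plus sequence boundaries.

    Returns (tokens, cu_seqlens, max_seqlen), where
    tokens[cu_seqlens[i]:cu_seqlens[i + 1]] holds the tokens of sequence i.
    Hypothetical helper for illustration only.
    """
    tokens, cu_seqlens, max_seqlen = [], [0], 0
    for ids, mask in zip(input_ids, attention_mask):
        # Keep only the positions the mask marks as real tokens.
        kept = [t for t, m in zip(ids, mask) if m == 1]
        tokens.extend(kept)
        cu_seqlens.append(cu_seqlens[-1] + len(kept))
        max_seqlen = max(max_seqlen, len(kept))
    return tokens, cu_seqlens, max_seqlen


# Two sequences padded to length 5 (token id 1 stands in for the pad token).
input_ids = [[0, 5, 6, 2, 1], [0, 7, 2, 1, 1]]
attention_mask = [[1, 1, 1, 1, 0], [1, 1, 1, 0, 0]]

tokens, cu_seqlens, max_seqlen = unpad(input_ids, attention_mask)
print(tokens)      # [0, 5, 6, 2, 0, 7, 2]
print(cu_seqlens)  # [0, 4, 7]
print(max_seqlen)  # 4
```

Here 10 padded positions shrink to 7 real tokens, so no compute is spent on padding, and sequences of very different lengths can share a batch without that cost growing.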

Benchmarking

FAESM vs. Official ESM2

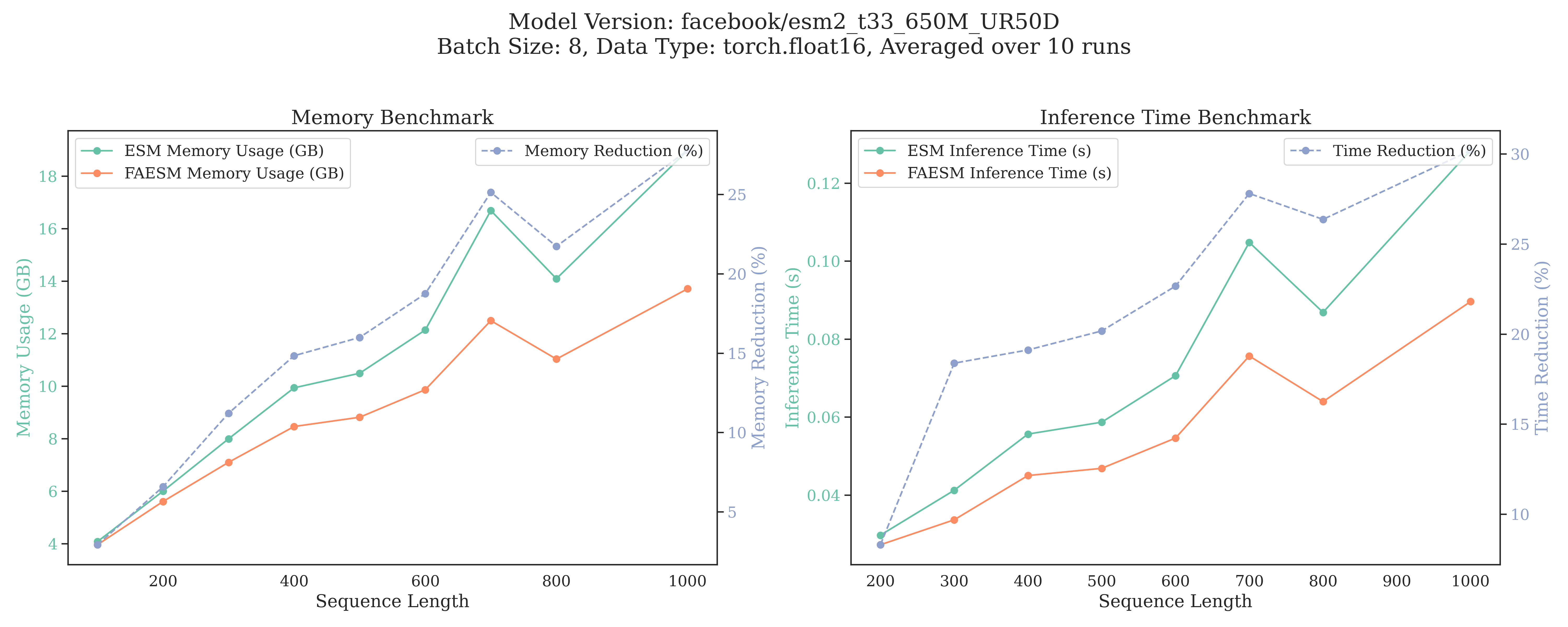

The figure compares the peak memory usage and inference time of FAESM with those of the official ESM2. FAESM reduces memory usage by up to 60% and inference time by up to 70% (at sequence length 1000). The benchmark uses ESM-650M with batch size 8 on a single A100 with 80GB of memory.

@ANaka also compared the SDPA implementation with the official ESM2 (see his PR): we still get a ~30% reduction with pure PyTorch alone, not too bad :)

You can reproduce the benchmarking of ESM2 by running the following command:

pytest tests/benchmark.py

To check the numerical error between FAESM and the official ESM2 implementation, you can run:

pytest tests/test_compare_esm.py
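The savings quoted in this section are relative reductions against the official implementation. As a minimal sketch of how such a percentage is derived from two measurements, the snippet below times two toy workloads and reports the reduction. The helper names and the workloads are hypothetical stand-ins, not real model calls; the actual benchmark is the one in tests/benchmark.py.

```python
import time


def median_runtime(fn, repeats=5):
    """Median wall-clock time of fn() over several runs, in seconds."""
    times = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        times.append(time.perf_counter() - start)
    times.sort()
    return times[len(times) // 2]


def percent_reduction(baseline, optimized):
    """Relative saving of `optimized` over `baseline`, in percent."""
    return 100.0 * (baseline - optimized) / baseline


# Toy stand-ins for the two implementations (NOT real model calls).
def slow():
    return sum(i * i for i in range(200_000))


def fast():
    return sum(i * i for i in range(50_000))


saving = percent_reduction(median_runtime(slow), median_runtime(fast))
print(f"time reduction: {saving:.1f}%")
```

The same formula applies to peak memory. When timing GPU code, the device has to be synchronized before reading the clock, otherwise the measurement only covers kernel launch.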

FAESM-C vs. Official ESM-C

We also compare how FAESM-C scales against the official ESM-C. Using FAESM-C, we can save 60% of memory usage and 70% of inference time.

Run the following script to reproduce the benchmarking:

pytest tests/benchmark_esmc.py

Run the following script to check the numerical error between FAESM-C and the official ESM-C implementation:

pytest tests/test_compare_esmc.py

TODOs

- Training script

- Integrate FAESM into ESMFold

Appreciation

- The Rotary code is from esm-efficient.

- The ESM modules and the SDPA attention module are inspired by ESM and DPLM.

- I want to highlight that esm-efficient also supports Flash Attention and offers more features such as quantization and LoRA. Please check it out!!

This project started from a frustration I shared with Alex Tong (@atong01): why is there no efficient implementation of ESM? We wasted a lot of compute training pLMs :( He later helped me debug the precision errors in my implementation and organize this repo. Along the way, I talked with @MuhammedHasan about his esm-efficient implementation (see issues 1 and 2), and with Tri Dao about flash attention (see the issue). Of course, a shoutout to the ESM teams for creating the ESM family. None of this code would be possible without their help.

License

This work is licensed under the MIT license. However, it contains altered and unaltered portions of code licensed under MIT, Apache 2.0, and the Cambrian Open License Agreement.

- ESM: MIT Licensed

- DPLM: Apache 2.0 Licensed

- ESMC: See the ESM licensing, in particular the Cambrian Open License Agreement

We thank the creators of these prior works for their contributions and open licensing. We also note that model weights may have separate licensing. The ESMC 300M Model is licensed under the EvolutionaryScale Cambrian Open License Agreement. The ESMC 600M Model is licensed under the EvolutionaryScale Cambrian Non-Commercial License Agreement.

Citation

Please cite this repo if you use it in your work.

@misc{faesm2024,

author = {Fred Zhangzhi Peng, Pranam Chatterjee, and contributors},

title = {FAESM: An efficient PyTorch implementation of Evolutionary Scale Modeling (ESM)},

year = {2024},

howpublished = {\url{https://github.com/pengzhangzhi/faesm}},

note = {Efficient PyTorch implementation of ESM with FlashAttention and Scaled Dot-Product Attention (SDPA)},

abstract = {FAESM is a drop-in replacement for the official ESM implementation, designed to save up to 60% memory usage and 70% inference time, while maintaining compatibility with the ESM API.},

}
