A package for managing and processing datasets for fairness research in machine learning


FairML Datasets

A comprehensive Python package for loading, processing, and working with datasets used in fair classification.

Overview

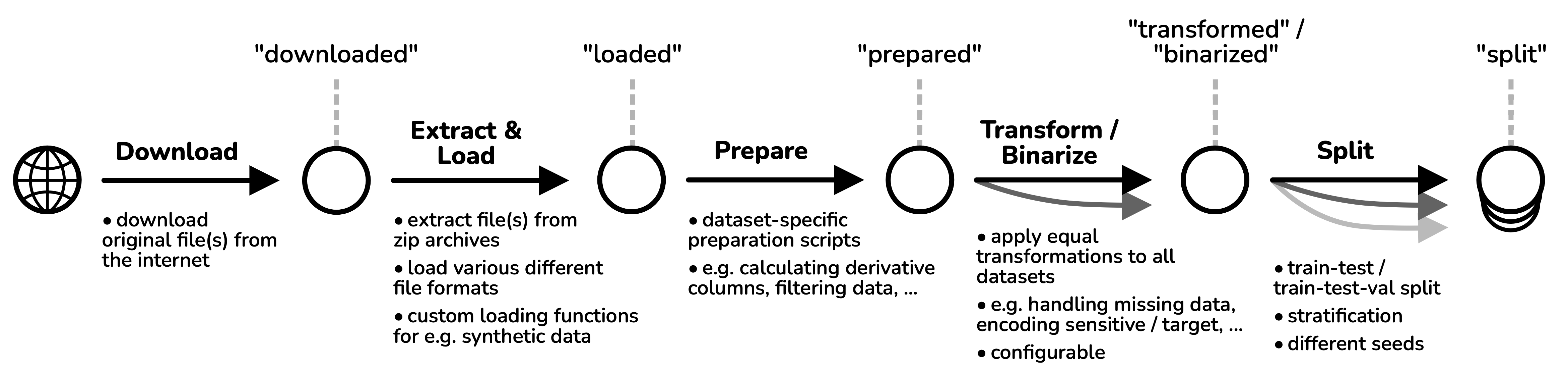

FairML Datasets provides tools and interfaces to download, load, transform, and analyze the datasets in the FairGround corpus. It handles sensitive attributes and supports fairness-aware machine learning experiments, covering the full data-processing pipeline from downloading raw data to splitting it for model training.

Key Features

- 📦 Loading: Easily download, load and prepare any of the 44 supported datasets in the corpus.

- 🗂️ Collections: Conveniently use any of our predefined collections, which were designed to maximize diversity in algorithmic performance.

- 🔄 Multi-Dataset Support: Evaluate your algorithm on one scenario, five, or forty with a simple loop.

- ⚙️ Processing: Automatically apply dataset (pre)processing, with configurable choices and ready-to-use defaults.

- 📊 Metadata Generation: Automatically calculate rich metadata features for datasets.

- 💻 Command-line Interface: Access common operations without writing code.
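The multi-dataset loop mentioned above can be sketched as follows. This is a minimal illustration under stated assumptions, not code from the package: `evaluate_on_datasets`, `load_fn` and `score_fn` are hypothetical names, and the toy data stands in for real datasets so the sketch runs offline.

```python
from typing import Any, Callable, Dict, Iterable


def evaluate_on_datasets(
    dataset_ids: Iterable[str],
    load_fn: Callable[[str], Any],
    score_fn: Callable[[Any], float],
) -> Dict[str, float]:
    """Run the same evaluation on every dataset ID and collect one score each.

    Hypothetical helper: load_fn maps a dataset ID to loaded data,
    score_fn maps that data to a single number.
    """
    results: Dict[str, float] = {}
    for dataset_id in dataset_ids:
        data = load_fn(dataset_id)
        results[dataset_id] = score_fn(data)
    return results


if __name__ == "__main__":
    # Toy stand-in data so the sketch runs without downloading anything.
    toy_data = {"toy_a": [1, 0, 1, 1], "toy_b": [0, 0, 1, 0]}
    scores = evaluate_on_datasets(
        toy_data.keys(),
        load_fn=lambda dataset_id: toy_data[dataset_id],
        score_fn=lambda labels: sum(labels) / len(labels),  # positive rate
    )
    print(scores)  # {'toy_a': 0.75, 'toy_b': 0.25}
```

With the package installed, passing `load_fn=lambda dataset_id: Dataset.from_id(dataset_id).load()` would plug in real datasets using only the calls shown in the Quick Start; injecting the loader keeps the loop itself independent of any one data source.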

Installation

```shell
pip install fairml-datasets
```

Or using uv:

```shell
uv add fairml-datasets
```

Quick Start

```python
from fairml_datasets import Dataset

# Access a specific dataset by ID directly
dataset = Dataset.from_id("folktables_acsincome_small")

# Load the dataset
df = dataset.load()

# Check sensitive attributes
print(f"Sensitive columns: {dataset.sensitive_columns}")

# Transform the dataset
df_transformed, info = dataset.transform(df)

# Create train/test/validation split
df_train, df_test, df_val = dataset.train_test_val_split(df_transformed)
```

Curious which datasets are available? See the Datasets Overview in the sidebar for the full list.
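Conceptually, a three-way split like the `train_test_val_split` call in the Quick Start partitions the rows into disjoint train, test and validation sets. The following is a minimal standard-library sketch of that idea, not the package's implementation: `three_way_split`, `groups_present`, the 60/20/20 fractions and the seed are all assumptions for illustration. The `groups_present` helper reflects a fairness-specific concern: a split that contains no rows from a sensitive group cannot be used to evaluate that group.

```python
import random
from typing import List, Sequence, Set, Tuple


def three_way_split(
    n_rows: int,
    test_frac: float = 0.2,
    val_frac: float = 0.2,
    seed: int = 42,
) -> Tuple[List[int], List[int], List[int]]:
    """Shuffle row indices and cut them into train, test and validation lists.

    Hypothetical helper: the fractions and seed are illustrative assumptions,
    not the defaults of fairml_datasets.
    """
    if test_frac < 0 or val_frac < 0 or test_frac + val_frac >= 1:
        raise ValueError("fractions must be non-negative and sum to less than 1")
    indices = list(range(n_rows))
    random.Random(seed).shuffle(indices)  # seeded, so the split is reproducible
    n_test = int(n_rows * test_frac)
    n_val = int(n_rows * val_frac)
    test_idx = indices[:n_test]
    val_idx = indices[n_test:n_test + n_val]
    train_idx = indices[n_test + n_val:]  # all remaining rows go to training
    return train_idx, test_idx, val_idx


def groups_present(idx: Sequence[int], sensitive: Sequence[str]) -> Set[str]:
    """Return the sensitive-group values that occur in the rows of one split."""
    return {sensitive[i] for i in idx}


if __name__ == "__main__":
    sensitive = ["group_a", "group_b"] * 50  # toy sensitive column, 100 rows
    train, test, val = three_way_split(len(sensitive))
    print(len(train), len(test), len(val))  # 60 20 20
    for name, idx in (("train", train), ("test", test), ("val", val)):
        print(name, sorted(groups_present(idx, sensitive)))
```

Splitting index lists rather than rows keeps the sketch independent of any dataframe library; the same indices could be used to select rows from a table.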

Command-line Usage

The package provides a command-line interface for common operations:

```shell
# Generate and export metadata
python -m fairml_datasets metadata

# Export datasets in various processing stages
python -m fairml_datasets export-datasets --stage prepared

# Export dataset citations in BibTeX format
python -m fairml_datasets export-citations
```
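To drive these commands from a Python script (for example, as one step of a reproducibility pipeline), you can call the same `python -m fairml_datasets` entry point through `subprocess`. A minimal sketch: `cli_command` and `run_cli` are hypothetical helper names, and only the subcommands and the `--stage prepared` flag shown above are taken from this page.

```python
import importlib.util
import subprocess
import sys
from typing import List


def cli_command(*args: str) -> List[str]:
    """Build the argument list for `python -m fairml_datasets ...`.

    Uses sys.executable so the CLI runs in the same environment as the caller.
    """
    return [sys.executable, "-m", "fairml_datasets", *args]


def run_cli(*args: str) -> subprocess.CompletedProcess:
    """Run one CLI subcommand; check=True raises CalledProcessError on failure."""
    return subprocess.run(cli_command(*args), check=True)


if __name__ == "__main__":
    # Only attempt the call when the package is actually installed.
    if importlib.util.find_spec("fairml_datasets") is None:
        print("fairml_datasets is not installed; skipping")
    else:
        run_cli("export-citations")
```

Using `sys.executable` rather than a bare `python` avoids accidentally invoking a different interpreter that lacks the package.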

Development

Development dependencies are managed via uv. For information on how to install uv, please refer to the official installation instructions.

To install all dependencies, run:

```shell
uv sync --dev
```

Formatting

We use ruff for linting and formatting. To lint the code (applying automatic fixes) and then format it, run:

```shell
ruff check . --fix && ruff format .
```

Tests

Tests are located in the tests/ directory. You can run all tests using pytest:

```shell
uv run pytest
```

License

Due to restrictions in some of the third-party code we include, this work is licensed under two licenses.

The primary license of this work is Creative Commons Attribution 4.0 International License (CC BY 4.0). This license applies to all assets generated by the authors of this work. It does NOT apply to the generate_synthetic_data.py script, which instead is licensed under GNU GPLv3.

The second license, which applies to the complete repository, is the more restrictive GNU GENERAL PUBLIC LICENSE 3 (GNU GPLv3).

Please note that this licensing information only refers to the code, annotations and generated metadata. Individual datasets which are loaded and exported by this package may have different licenses. Please refer to individual datasets and their sources for dataset-level information.


Download files


File details

Details for the file fairml_datasets-0.2.0.tar.gz.

File metadata

- Download URL: fairml_datasets-0.2.0.tar.gz

- Upload date:

- Size: 103.3 kB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 409165d309626cde7450dd9b1d9186286f6b2cec21575cd44c5eed06923fa99c |
| MD5 | 81c3ddd8be11b9f8a5adfe6f8e8fe294 |
| BLAKE2b-256 | 300d4d5e794d01e38485e15ad973b52464d0148d9340f2aba6264946b40849f9 |
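To check a downloaded file against a published SHA256 digest, hash it locally and compare. A minimal sketch using Python's standard `hashlib`; `local_digest` and `verify` are hypothetical helper names, and the local file name is an assumption about where the download was saved.

```python
import hashlib
from pathlib import Path


def local_digest(path: Path, algorithm: str = "sha256", chunk_size: int = 1 << 16) -> str:
    """Hash a file in chunks so large downloads need not fit in memory."""
    h = hashlib.new(algorithm)
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def verify(path: Path, expected_hex: str, algorithm: str = "sha256") -> bool:
    """Compare the local digest with a published one (case-insensitive)."""
    return local_digest(path, algorithm) == expected_hex.strip().lower()


if __name__ == "__main__":
    # Assumed download location; adjust to wherever the sdist was saved.
    sdist = Path("fairml_datasets-0.2.0.tar.gz")
    expected = "409165d309626cde7450dd9b1d9186286f6b2cec21575cd44c5eed06923fa99c"
    if sdist.exists():
        print("OK" if verify(sdist, expected) else "MISMATCH")
    else:
        print(f"{sdist} not found")
```

From the command line, pip's `pip hash` command computes the same SHA256 digest for a local file.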

Provenance

The following attestation bundles were made for fairml_datasets-0.2.0.tar.gz:

Publisher: publish.yml on reliable-ai/fairground

Statement:

- Statement type: https://in-toto.io/Statement/v1

- Predicate type: https://docs.pypi.org/attestations/publish/v1

- Subject name: fairml_datasets-0.2.0.tar.gz

- Subject digest: 409165d309626cde7450dd9b1d9186286f6b2cec21575cd44c5eed06923fa99c

- Sigstore transparency entry: 743336393

- Permalink: reliable-ai/fairground@4a1438fda6903d44e7d360efba219075b0eed9ed

- Branch / Tag: refs/tags/v0.2.0

- Owner: https://github.com/reliable-ai

- Access: public

- Token Issuer: https://token.actions.githubusercontent.com

- Runner Environment: github-hosted

- Publication workflow: publish.yml@4a1438fda6903d44e7d360efba219075b0eed9ed

- Trigger Event: release

File details

Details for the file fairml_datasets-0.2.0-py3-none-any.whl.

File metadata

- Download URL: fairml_datasets-0.2.0-py3-none-any.whl

- Upload date:

- Size: 105.0 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 44eb858496bbee5511305f11693dbce99680b0342d650836ba455a3b80186cc0 |
| MD5 | 96eff47295e3ef24584dc0336ef17f16 |
| BLAKE2b-256 | c93dd63875556cfdeeb634436e50e8734e2b7aed3c5f2362f72e2d8d04c929cd |

Provenance

The following attestation bundles were made for fairml_datasets-0.2.0-py3-none-any.whl:

Publisher: publish.yml on reliable-ai/fairground

Statement:

- Statement type: https://in-toto.io/Statement/v1

- Predicate type: https://docs.pypi.org/attestations/publish/v1

- Subject name: fairml_datasets-0.2.0-py3-none-any.whl

- Subject digest: 44eb858496bbee5511305f11693dbce99680b0342d650836ba455a3b80186cc0

- Sigstore transparency entry: 743336399

- Permalink: reliable-ai/fairground@4a1438fda6903d44e7d360efba219075b0eed9ed

- Branch / Tag: refs/tags/v0.2.0

- Owner: https://github.com/reliable-ai

- Access: public

- Token Issuer: https://token.actions.githubusercontent.com

- Runner Environment: github-hosted

- Publication workflow: publish.yml@4a1438fda6903d44e7d360efba219075b0eed9ed

- Trigger Event: release