Automatic response model inference for FastAPI using AST analysis.


FastAPI AST Inference


This library analyzes your FastAPI endpoint functions at startup time and automatically generates Pydantic models for OpenAPI documentation based on the dictionary structures you return.

Why?

Before (Standard Pydantic Approach)

```python
from fastapi import FastAPI
from pydantic import BaseModel
from typing import Any, Dict, List, Optional, Union


# You must define a separate model class for every response structure
class CustomerInfo(BaseModel):
    name: str
    vip_status: bool
    preferences: Dict[str, Union[bool, str]]


class Item(BaseModel):
    item_id: int
    name: str
    price: float
    in_stock: bool


class OrderResponse(BaseModel):
    order_id: str
    status: str
    total_amount: float
    tags: List[str]
    customer_info: CustomerInfo
    items: List[Item]
    metadata: Optional[Dict[str, Any]] = None


app = FastAPI()


@app.get("/orders/{order_id}", response_model=OrderResponse)
async def get_order(order_id: str):
    return {
        "order_id": order_id,
        "status": "processing",
        "total_amount": 150.50,
        "tags": ["urgent", "new_customer"],
        "customer_info": {
            "name": "John Doe",
            "vip_status": False,
            "preferences": {"notifications": True, "theme": "dark"},
        },
        "items": [
            {
                "item_id": 1,
                "name": "Laptop Stand",
                "price": 45.00,
                "in_stock": True,
            },
        ],
        "metadata": None,
    }
```

After (With AST Inference)

```python
from fastapi import FastAPI
from fastapi_ast_inference import infer_response

app = FastAPI()


# No model definition needed! Types are inferred from the return statement.
@app.get("/orders/{order_id}")
@infer_response
async def get_order(order_id: str):
    return {
        "order_id": order_id,
        "status": "processing",
        "total_amount": 150.50,
        "tags": ["urgent", "new_customer"],
        "customer_info": {
            "name": "John Doe",
            "vip_status": False,
            "preferences": {"notifications": True, "theme": "dark"},
        },
        "items": [
            {
                "item_id": 1,
                "name": "Laptop Stand",
                "price": 45.00,
                "in_stock": True,
            },
        ],
        "metadata": None,
    }
```

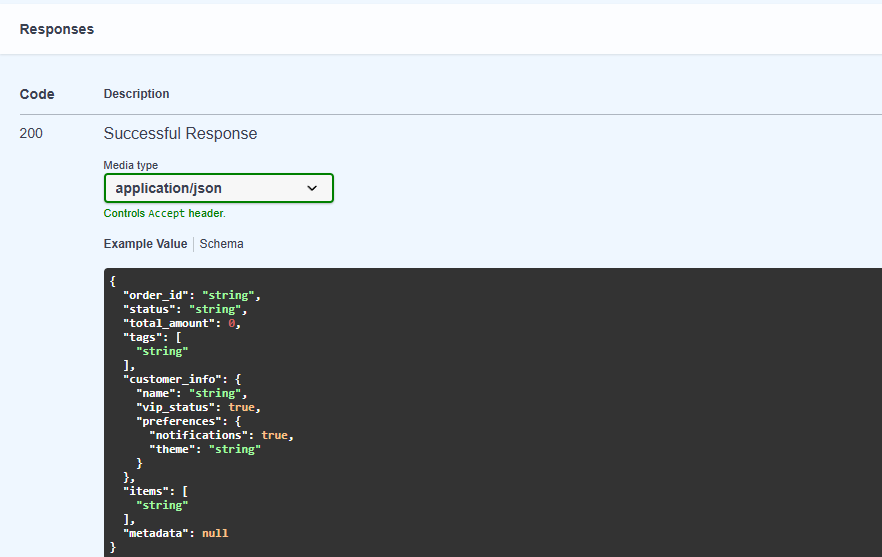

Result: Full OpenAPI schema with typed fields, zero boilerplate!

Installation

```shell
pip install fastapi_ast_inference
```

Usage

Option 1: Decorator (Recommended)

Use the @infer_response decorator on specific endpoints; no additional configuration is needed:

```python
from fastapi import FastAPI
from fastapi_ast_inference import infer_response

app = FastAPI()


@app.get("/")
@infer_response
async def root():
    return {"message": "Hello, World!", "count": 42}


@app.get("/users/{user_id}")
@infer_response
async def get_user(user_id: int):
    return {"id": user_id, "name": "John", "active": True}
```

Option 2: App-Wide Configuration

⚠️ Note: This affects ALL endpoints in your application.

Apply AST inference to all routes automatically:

```python
from fastapi import FastAPI
from fastapi_ast_inference import InferredAPIRoute

app = FastAPI()
app.router.route_class = InferredAPIRoute  # Affects entire app


@app.get("/")
async def root():
    return {"message": "Hello, World!", "count": 42}
```

Option 3: Router-Level Configuration

⚠️ Note: This affects all endpoints in the router.

Apply to specific routers:

```python
from fastapi import APIRouter
from fastapi_ast_inference import InferredAPIRoute, create_inferred_router

# Using the helper function
router = create_inferred_router(prefix="/api/v1", tags=["api"])

# Or manually
router = APIRouter(route_class=InferredAPIRoute)  # Affects entire router


@router.get("/items")
async def get_items():
    return {"items": ["a", "b", "c"], "total": 3}
```

Option 4: Programmatic API

Use the inference function directly for custom use cases:

```python
from fastapi_ast_inference import infer_response_model_from_ast


def my_endpoint():
    return {"name": "test", "value": 123}


model = infer_response_model_from_ast(my_endpoint)
# model is now a Pydantic BaseModel with 'name: str' and 'value: int' fields
```
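The section does not show what `infer_response_model_from_ast` does internally. As a rough sketch of the idea behind the `name: str` / `value: int` result, the stdlib-only code below parses a function's source and maps each constant in its returned dict literal to a Python type. `infer_fields_from_source` is a hypothetical name; it takes source text rather than a function object (so it does not depend on `inspect.getsource`) and returns a plain `{field: type}` dict where the library returns a Pydantic model.

```python
import ast
from typing import Dict, Optional


def infer_fields_from_source(source: str) -> Optional[Dict[str, type]]:
    """Map each key of a returned dict literal to the type of its constant value.

    Hypothetical helper for illustration only; returns None whenever the
    function does not have exactly one `return {...}` of string keys and
    literal values.
    """
    module = ast.parse(source)
    if not module.body or not isinstance(
        module.body[0], (ast.FunctionDef, ast.AsyncFunctionDef)
    ):
        return None
    func = module.body[0]
    returns = [node for node in ast.walk(func) if isinstance(node, ast.Return)]
    if len(returns) != 1 or not isinstance(returns[0].value, ast.Dict):
        return None
    fields: Dict[str, type] = {}
    for key, value in zip(returns[0].value.keys, returns[0].value.values):
        # `key` is None for `**other` unpacking, which this sketch rejects.
        if not (isinstance(key, ast.Constant) and isinstance(key.value, str)):
            return None
        if not isinstance(value, ast.Constant):
            return None
        fields[key.value] = type(value.value)  # e.g. "test" -> str, 123 -> int
    return fields


source = '''
def my_endpoint():
    return {"name": "test", "value": 123}
'''
print(infer_fields_from_source(source))
# {'name': <class 'str'>, 'value': <class 'int'>}
```

Returning None instead of raising keeps the "no model, fall back to default behavior" decision with the caller.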

Supported Patterns

✅ Direct Dictionary Literals

```python
@app.get("/")
async def endpoint():
    return {"key": "value", "number": 42, "flag": True}
```

✅ Variable Returns

```python
@app.get("/")
async def endpoint():
    data = {"status": "ok", "items": [1, 2, 3]}
    return data
```

✅ Annotated Variables

```python
from typing import Any, Dict


@app.get("/")
async def endpoint():
    result: Dict[str, Any] = {"count": 100, "active": True}
    return result
```
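Both variable patterns above require following a returned name back to the dict literal assigned to it. A minimal sketch of one way to do that with the standard `ast` module follows; `resolve_returned_dict` is a hypothetical name, it only scans the function's top-level statements, and it assumes (without confirmation from the library) that the literal rather than a vague annotation such as `Dict[str, Any]` drives inference.

```python
import ast
from typing import Dict, Optional


def resolve_returned_dict(source: str) -> Optional[ast.Dict]:
    """Return the dict literal a function returns, following simple variables.

    Hypothetical sketch: only the function's top-level statements are scanned,
    and a returned name resolves to its most recent `x = {...}` or
    `x: T = {...}` assignment before the return.
    """
    func = ast.parse(source).body[0]
    assigned: Dict[str, ast.expr] = {}
    for stmt in func.body:
        if isinstance(stmt, ast.Assign) and len(stmt.targets) == 1:
            target = stmt.targets[0]
            if isinstance(target, ast.Name):
                assigned[target.id] = stmt.value
        elif isinstance(stmt, ast.AnnAssign) and isinstance(stmt.target, ast.Name):
            # Assumption: the literal, not the annotation (e.g. Dict[str, Any]),
            # is what gets inspected, because the annotation is too vague.
            if stmt.value is not None:
                assigned[stmt.target.id] = stmt.value
        elif isinstance(stmt, ast.Return):
            value = stmt.value
            if isinstance(value, ast.Name):
                value = assigned.get(value.id)
            return value if isinstance(value, ast.Dict) else None
    return None


example = '''
async def endpoint():
    result: Dict[str, Any] = {"count": 100, "active": True}
    return result
'''
node = resolve_returned_dict(example)
print([key.value for key in node.keys])  # ['count', 'active']
```

Parsing never evaluates the annotation, so `Dict` and `Any` need not be importable for the analysis itself.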

✅ Nested Structures

```python
@app.get("/")
async def endpoint():
    return {
        "user": {"name": "John", "age": 30},
        "settings": {"theme": "dark", "notifications": True},
    }
```
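Nested dicts become nested models, which suggests a recursive walk over the dict literal. The sketch below shows that recursion under stated assumptions: it uses the standard library's `dataclasses.make_dataclass` as a stand-in for the Pydantic model the library generates, `model_from_dict_node` is a hypothetical name, and the `ResponseUser`-style class names are an invented naming scheme.

```python
import ast
from dataclasses import fields, make_dataclass


def model_from_dict_node(name: str, node: ast.Dict) -> type:
    """Build a class whose fields mirror a dict literal, recursing into nested dicts.

    Stand-in sketch: dataclasses.make_dataclass replaces the Pydantic model the
    library generates, and only constants and nested dicts are handled.
    """
    spec = []
    for key, value in zip(node.keys, node.values):
        field_name = key.value
        if isinstance(value, ast.Dict):
            # Nested dict -> nested class, named after the parent and the key
            # (hypothetical naming scheme), e.g. "Response" + "user" -> "ResponseUser".
            nested_name = name + "".join(p.title() for p in field_name.split("_"))
            field_type = model_from_dict_node(nested_name, value)
        elif isinstance(value, ast.Constant):
            field_type = type(value.value)
        else:
            raise TypeError(f"unsupported value for field {field_name!r}")
        spec.append((field_name, field_type))
    return make_dataclass(name, spec)


source = '''
def endpoint():
    return {
        "user": {"name": "John", "age": 30},
        "settings": {"theme": "dark", "notifications": True},
    }
'''
returned = ast.parse(source).body[0].body[0].value
Response = model_from_dict_node("Response", returned)
for fld in fields(Response):
    print(fld.name, fld.type.__name__)
# user ResponseUser
# settings ResponseSettings
```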

✅ Type Inference from Arguments

```python
@app.get("/items/{item_id}")
async def get_item(item_id: int, name: str):
    return {"id": item_id, "name": name}  # Types inferred from parameters
```
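Inferring `item_id` as `int` means looking up a returned name among the function's annotated parameters. A minimal stdlib sketch of that lookup follows. `param_types`, `infer_value_type` and the `BUILTIN_TYPES` table are hypothetical; a real implementation would have to resolve annotation names in the endpoint's module rather than hard-code a few builtins.

```python
import ast
from typing import Dict, Optional, Union

# Assumed lookup table: a real implementation would have to resolve annotation
# names in the endpoint's module (or evaluate them) rather than hard-code them.
BUILTIN_TYPES = {"int": int, "str": str, "float": float, "bool": bool}


def param_types(func: Union[ast.FunctionDef, ast.AsyncFunctionDef]) -> Dict[str, type]:
    """Collect parameters whose annotation is a simple builtin name."""
    args = func.args
    types: Dict[str, type] = {}
    for arg in args.posonlyargs + args.args + args.kwonlyargs:
        if isinstance(arg.annotation, ast.Name) and arg.annotation.id in BUILTIN_TYPES:
            types[arg.arg] = BUILTIN_TYPES[arg.annotation.id]
    return types


def infer_value_type(value: ast.expr, params: Dict[str, type]) -> Optional[type]:
    """Constants map to their own type; bare parameter names to their annotation."""
    if isinstance(value, ast.Constant):
        return type(value.value)
    if isinstance(value, ast.Name):
        return params.get(value.id)
    return None


source = '''
async def get_item(item_id: int, name: str):
    return {"id": item_id, "name": name}
'''
func = ast.parse(source).body[0]
params = param_types(func)
returned = func.body[0].value
inferred = {k.value: infer_value_type(v, params) for k, v in zip(returned.keys, returned.values)}
print(inferred)  # {'id': <class 'int'>, 'name': <class 'str'>}
```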

❌ Not Supported

- Multiple return statements (different structures in if/else)

- Dynamic dictionary construction (e.g., `dict(key=value)`)

- Function call returns (e.g., `return some_function()`)

- Non-string dictionary keys
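Each unsupported case can be recognized from the syntax tree alone. The hypothetical checker below mirrors that list with the standard `ast` module. `unsupported_reason` and its reason strings are illustrative only; they are not the library's actual log messages.

```python
import ast
from typing import Optional


def unsupported_reason(source: str) -> Optional[str]:
    """Explain why a function's return value can't be inferred, or return None.

    Hypothetical checker mirroring the "Not Supported" list; the reason
    strings are illustrative, not the library's actual log messages.
    """
    func = ast.parse(source).body[0]
    returns = [node for node in ast.walk(func) if isinstance(node, ast.Return)]
    if len(returns) != 1:
        return f"expected one return statement, found {len(returns)}"
    value = returns[0].value
    if isinstance(value, ast.Call):
        # Covers both `dict(key=value)` and `return some_function()`.
        return "return value is a function call"
    if not isinstance(value, (ast.Dict, ast.Name)):
        return "return value is not a dict literal or variable"
    if isinstance(value, ast.Dict):
        for key in value.keys:
            # `key` is None for `**other` unpacking; int keys fail the str check.
            if not (isinstance(key, ast.Constant) and isinstance(key.value, str)):
                return "dictionary has a non-string key"
    return None


examples = {
    "branches": "def f(x):\n    if x:\n        return {'a': 1}\n    return {'b': 2}\n",
    "dict_call": "def f():\n    return dict(key=1)\n",
    "int_key": "def f():\n    return {1: 'one'}\n",
    "ok": "def f():\n    return {'key': 'value'}\n",
}
for label, src in examples.items():
    print(label, "->", unsupported_reason(src))
```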

How It Works

1. At Application Startup: When FastAPI registers routes, the library intercepts endpoint functions.

2. AST Analysis: The source code is parsed into an Abstract Syntax Tree.

3. Type Inference: Return statements are analyzed to extract dictionary structure and infer types:

   - Constants → their Python types (`"hello"` → `str`, `42` → `int`)
   - Lists → `List[T]` where T is inferred from elements
   - Nested dicts → nested Pydantic models
   - Function arguments → types from annotations

4. Model Generation: A Pydantic model is dynamically created with the inferred fields.

5. OpenAPI Integration: The generated model is used for response documentation.
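Step 3 says lists become `List[T]` with `T` taken from the elements, but it does not say what happens with empty or mixed lists. The sketch below picks one plausible policy and labels it as an assumption: empty lists and lists with non-constant elements give `List[Any]`, and mixed constant types give `List[Union[...]]`. `infer_list_type` is a hypothetical name.

```python
import ast
from typing import Any, List, Union


def infer_list_type(node: ast.List) -> Any:
    """Infer List[T] from the elements of a list literal.

    Sketch assumptions (the README does not specify these cases): an empty list
    or any non-constant element gives List[Any], and mixed constant types give
    List[Union[...]] in order of first appearance.
    """
    element_types: List[type] = []
    for element in node.elts:
        if not isinstance(element, ast.Constant):
            return List[Any]
        if type(element.value) not in element_types:
            element_types.append(type(element.value))
    if not element_types:
        return List[Any]
    if len(element_types) == 1:
        return List[element_types[0]]
    return List[Union[tuple(element_types)]]


tags = ast.parse('["urgent", "new_customer"]', mode="eval").body
print(infer_list_type(tags))  # typing.List[str]
```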

Performance

- No per-request analysis: the AST is parsed once at startup, not on every request

- Cached results: Inferred models are stored and reused

- Graceful fallback: If inference fails, standard FastAPI behavior is preserved
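The three properties above can be combined in a small wrapper around any inference function. This is a hypothetical sketch, not the library's code: `cached_inference` and `fake_infer` are invented names, results are cached per function object, and any exception becomes `None` so the caller can keep FastAPI's default behavior.

```python
import functools
from typing import Callable, Dict, Optional


def cached_inference(
    infer: Callable[[Callable], Optional[type]],
) -> Callable[[Callable], Optional[type]]:
    """Wrap an inference function so each endpoint is analyzed at most once.

    Hypothetical sketch: the result (including None) is cached per function
    object, and any exception during analysis degrades to None.
    """
    cache: Dict[Callable, Optional[type]] = {}

    @functools.wraps(infer)
    def wrapper(func: Callable) -> Optional[type]:
        if func not in cache:
            try:
                cache[func] = infer(func)
            except Exception:  # graceful fallback: never fail route registration
                cache[func] = None
        return cache[func]

    return wrapper


calls = []


@cached_inference
def fake_infer(func):
    calls.append(func.__name__)
    if func.__name__ == "broken":
        raise SyntaxError("cannot parse")
    return dict  # stands in for a generated model class


def endpoint(): ...
def broken(): ...


print(fake_infer(endpoint), fake_infer(endpoint), fake_infer(broken))
print(calls)  # ['endpoint', 'broken']
```

Caching failures as `None` as well means a function that cannot be parsed is not re-analyzed on every lookup.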

Logging

Enable debug logging to see inference decisions:

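```python
import logging

logging.basicConfig()  # attach a stderr handler to the root logger; without one, DEBUG records are not shown
logging.getLogger("fastapi_ast_inference").setLevel(logging.DEBUG)
```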

Example output:

```text
DEBUG:fastapi_ast_inference:AST inference skipped for 'get_data': multiple return statements detected (2)
DEBUG:fastapi_ast_inference:AST inference skipped for 'get_external': return value is not a dict literal
```
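If configuring the root logger is too noisy, the standard `logging` module can scope debug output to a single logger. This sketch assumes, as the example output suggests, that the library logs under the name `fastapi_ast_inference`; it uses nothing else from the library.

```python
import logging

# Give only the library's logger a handler, instead of configuring the root
# logger, so enabling DEBUG here does not surface debug output from other
# packages. The format string reproduces the "LEVEL:logger:message" layout
# shown in the example output.
inference_logger = logging.getLogger("fastapi_ast_inference")
inference_logger.setLevel(logging.DEBUG)

handler = logging.StreamHandler()
handler.setFormatter(logging.Formatter("%(levelname)s:%(name)s:%(message)s"))
inference_logger.addHandler(handler)
inference_logger.propagate = False  # avoid printing each record twice via the root
```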

API Reference

infer_response_model_from_ast(func) -> Optional[Type[BaseModel]]

Analyze a function and return an inferred Pydantic model, or None if inference fails.

@infer_response

Decorator that pre-computes the inferred model and sets it as the function's return annotation. Works independently without InferredAPIRoute.

InferredAPIRoute

Custom route class that automatically applies AST inference to endpoints.

create_inferred_router(**kwargs) -> APIRouter

Create an APIRouter with InferredAPIRoute as the default route class.

get_inferred_model(func) -> Optional[Type[BaseModel]]

Retrieve the inferred model from a decorated function.
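The reference describes `@infer_response` as computing the model once and storing it as the function's return annotation, with `get_inferred_model` reading it back. The sketch below shows that mechanism with a pluggable `infer` callable. `make_infer_response`, `get_inferred_model_sketch`, and the `_inferred_model` attribute are hypothetical names, not the library's API, and `OrderModel` is a placeholder for a generated Pydantic model. It relies on FastAPI (0.89 and later) using a path operation's return annotation as its response model when no `response_model` is passed.

```python
import inspect
from typing import Callable, Optional, TypeVar

F = TypeVar("F", bound=Callable)


def make_infer_response(infer: Callable[[Callable], Optional[type]]) -> Callable[[F], F]:
    """Build a decorator in the spirit of @infer_response (hypothetical sketch).

    The decorator runs `infer` once, at decoration time, and stores the result as
    the function's return annotation. The function itself is returned unchanged,
    so no per-request wrapper is added.
    """
    def decorator(func: F) -> F:
        model = infer(func)
        if model is not None:
            func.__annotations__["return"] = model
        func._inferred_model = model  # hypothetical attribute read below
        return func
    return decorator


def get_inferred_model_sketch(func: Callable) -> Optional[type]:
    """Read back the stored model; None if undecorated or inference failed."""
    return getattr(func, "_inferred_model", None)


class OrderModel:  # placeholder for a generated Pydantic model
    pass


infer_response_sketch = make_infer_response(lambda func: OrderModel)


@infer_response_sketch
async def get_order(order_id: str):
    return {"order_id": order_id}


print(inspect.signature(get_order).return_annotation is OrderModel)  # True
```

Because the original function object is returned, a route decorator such as `@app.get(...)` applied on top still sees the original parameters, now with the added return annotation.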

License

MIT License - see LICENSE for details.

Contributing

Contributions are welcome! Please feel free to submit a Pull Request.


File details

Details for the file fastapi_ast_inference-0.1.2.tar.gz.

File metadata

- Download URL: fastapi_ast_inference-0.1.2.tar.gz

- Upload date:

- Size: 20.7 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.14.0

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | d0b675c263df230f7a3945a4cda86a1beb3619843092c495cc2702babcca1b5e |
| MD5 | 3578f09bebb64371edac7357318c2b09 |
| BLAKE2b-256 | 315603144a376557418223af6e55091187d198dd0ff9e2e04ea016287e58307c |