fastapi-fullstack is a CLI tool that generates production-ready FastAPI + Next.js projects with PydanticAI or LangChain agents, WebSocket streaming, authentication, 20+ enterprise integrations, and Logfire/LangSmith observability.


Full-Stack AI Agent Template

Production-Ready AI/LLM Applications — In Minutes, Not Weeks


🤖 PydanticAI • 🦜 LangChain, LangGraph & DeepAgents • 👥 CrewAI • 🎯 Fully Type-Safe

Related Projects

Building advanced AI agents? Check out pydantic-deepagents — a deepagent framework built on pydantic-ai for building Claude Code-style AI agents with filesystem tools, subagent delegation, persistent memory, context management, cost tracking, and an interactive CLI.

🎯 Why This Template

Building AI/LLM applications requires more than just an API wrapper. You need:

- Type-safe AI agents with tool/function calling

- Real-time streaming responses via WebSocket

- Conversation persistence and history management

- Production infrastructure - auth, rate limiting, observability

- Enterprise integrations - background tasks, webhooks, admin panels

This template gives you all of that out of the box, with 20+ configurable integrations so you can focus on building your AI product, not boilerplate.

Perfect For

- 🤖 AI Chatbots & Assistants - PydanticAI or LangChain agents with streaming responses

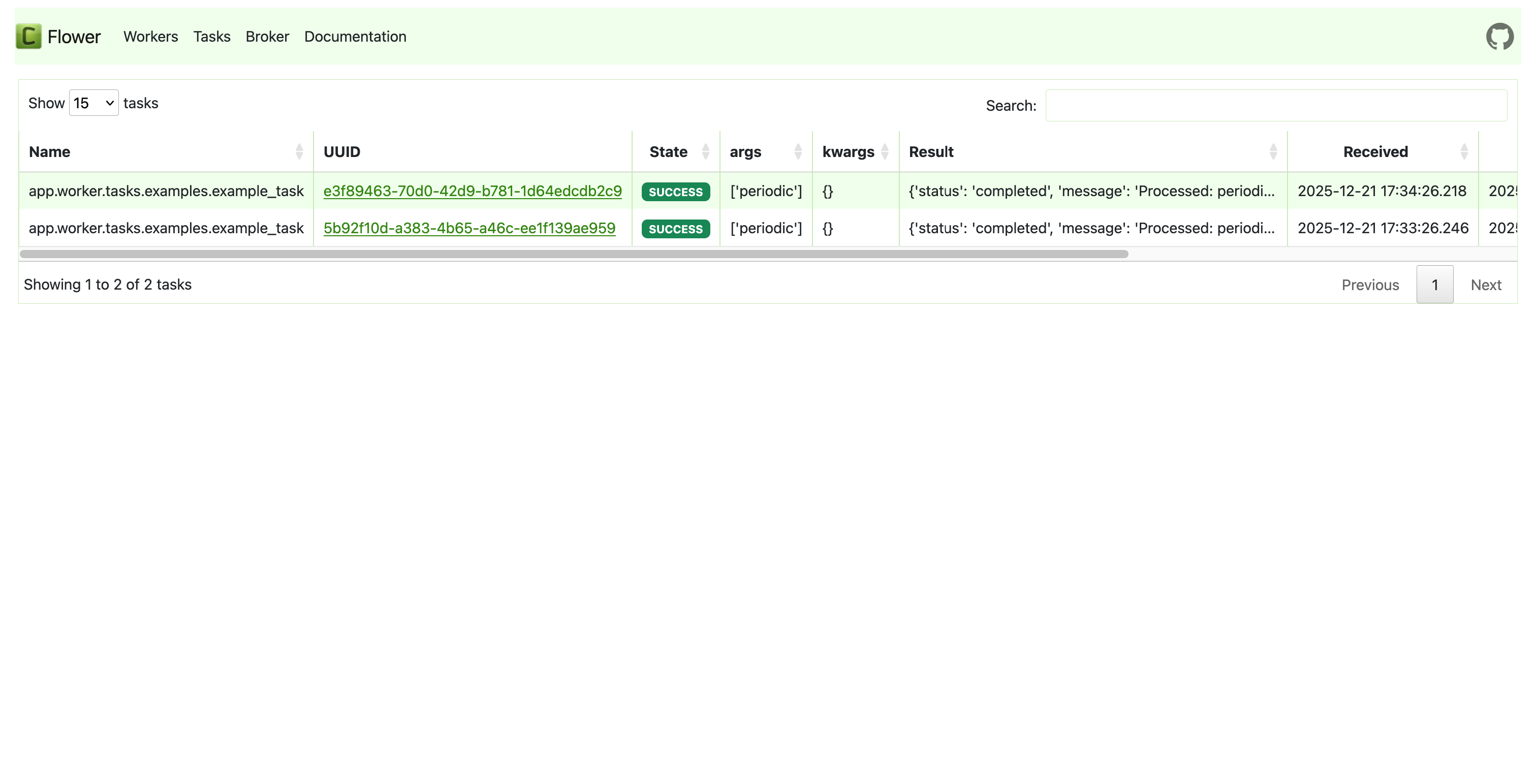

- 📊 ML Applications - Background task processing with Celery/Taskiq

- 🏢 Enterprise SaaS - Full auth, admin panel, webhooks, and more

- 🚀 Startups - Ship fast with production-ready infrastructure

AI-Agent Friendly

Generated projects include CLAUDE.md and AGENTS.md files optimized for AI coding assistants (Claude Code, Codex, Copilot, Cursor, Zed). The files follow progressive-disclosure best practices: a concise project overview with pointers to detailed docs when needed.

✨ Features

🤖 AI/LLM First

- PydanticAI or LangChain - Choose your preferred AI framework

- WebSocket Streaming - Real-time responses with full event access

- Conversation Persistence - Save chat history to database

- Custom Tools - Easily extend agent capabilities

- Multi-provider Support - OpenAI, Anthropic, OpenRouter

- Observability - Logfire for PydanticAI, LangSmith for LangChain

⚡ Backend (FastAPI)

- FastAPI + Pydantic v2 - High-performance async API

- Multiple Databases - PostgreSQL (async), MongoDB (async), SQLite

- Authentication - JWT + Refresh tokens, API Keys, OAuth2 (Google)

- Background Tasks - Celery, Taskiq, or ARQ

- Django-style CLI - Custom management commands with auto-discovery

🎨 Frontend (Next.js 15)

- React 19 + TypeScript + Tailwind CSS v4

- AI Chat Interface - WebSocket streaming, tool call visualization

- Authentication - HTTP-only cookies, auto-refresh

- Dark Mode + i18n (optional)

🔌 20+ Enterprise Integrations

| Category | Integrations |

|---|---|

| AI Frameworks | PydanticAI, LangChain |

| Caching & State | Redis, fastapi-cache2 |

| Security | Rate limiting, CORS, CSRF protection |

| Observability | Logfire, LangSmith, Sentry, Prometheus |

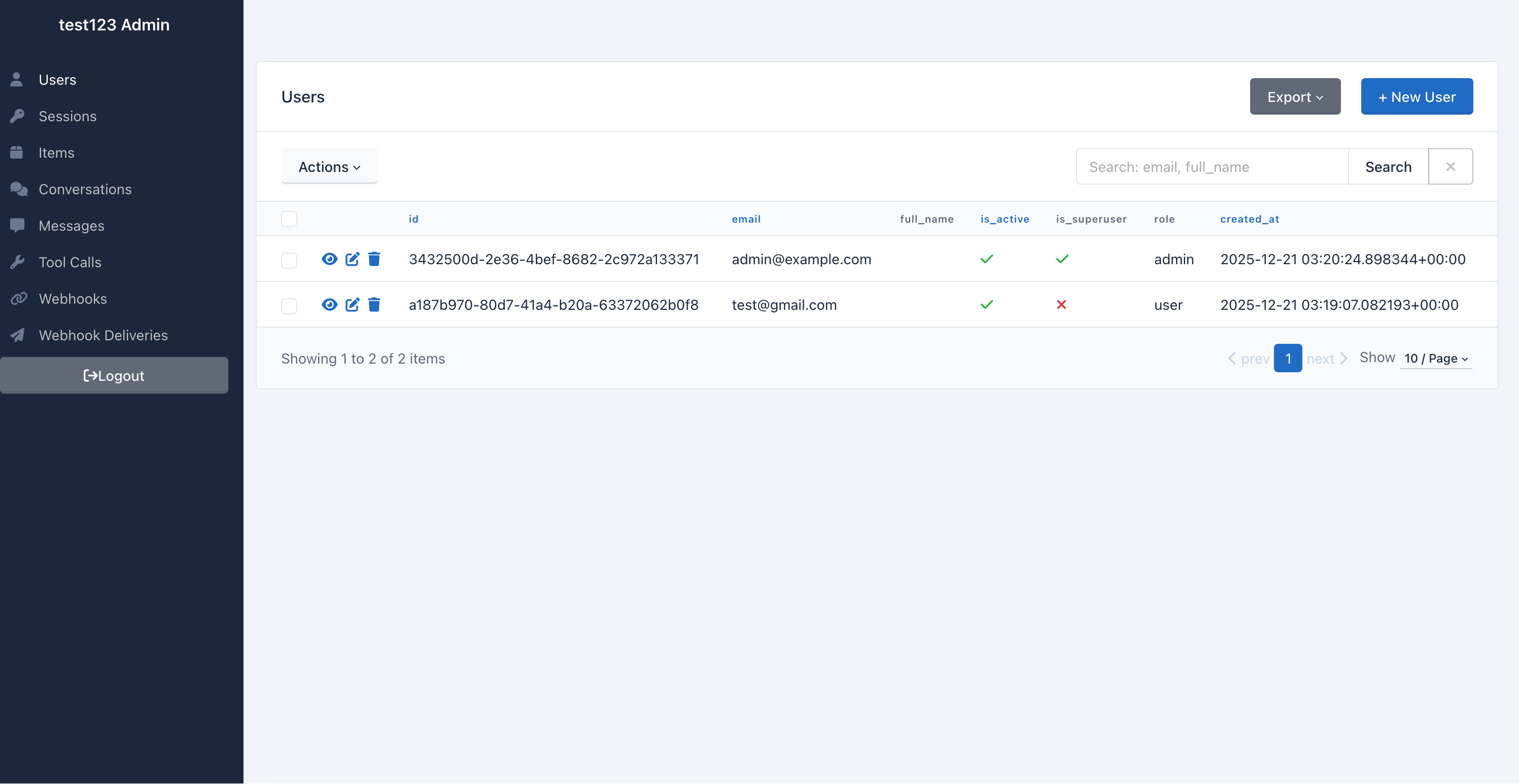

| Admin | SQLAdmin panel with auth |

| Events | Webhooks, WebSockets |

| DevOps | Docker, GitHub Actions, GitLab CI, Kubernetes |

🎬 Demo

📸 Screenshots

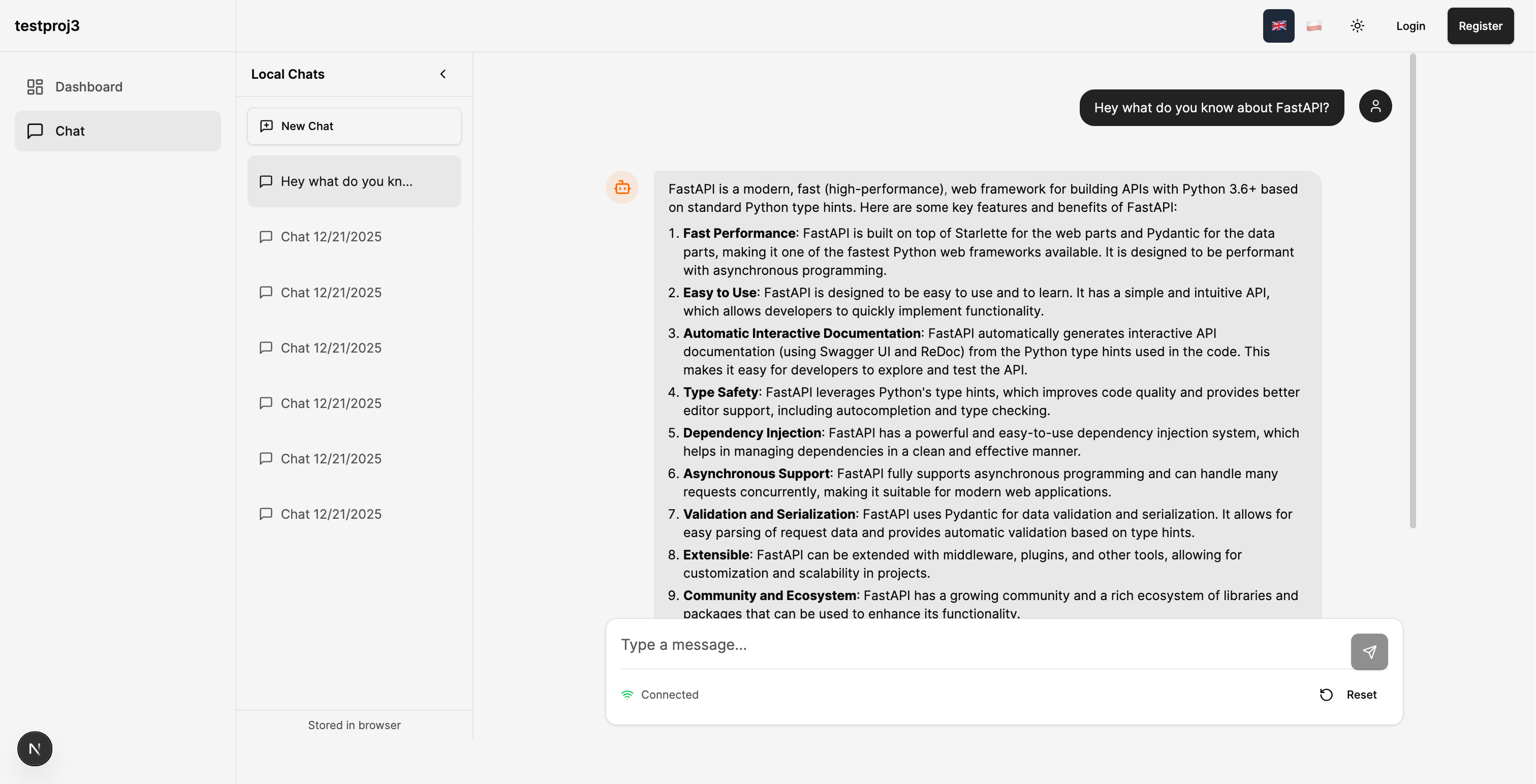

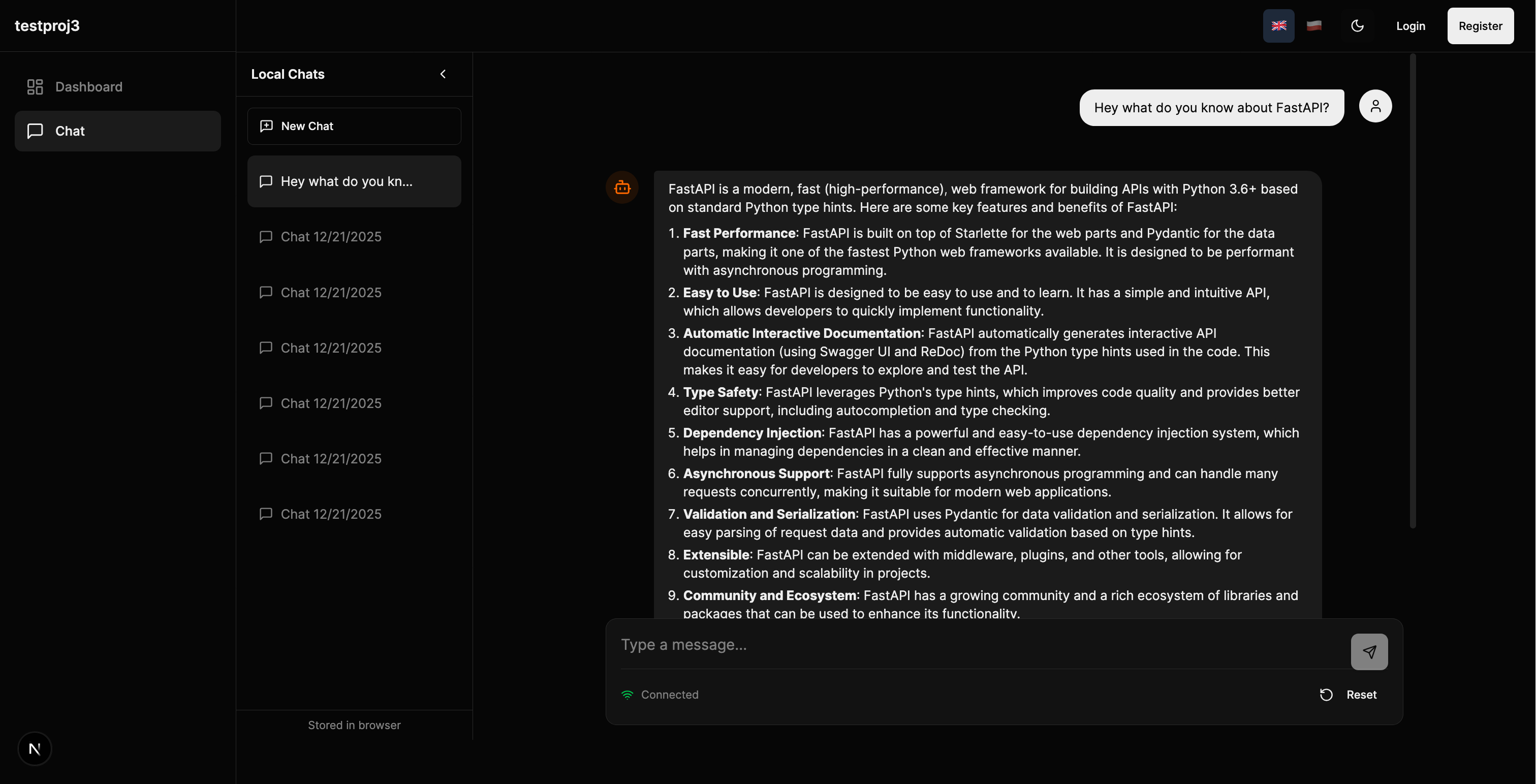

Chat Interface

| Light Mode | Dark Mode |

|---|---|

|

|

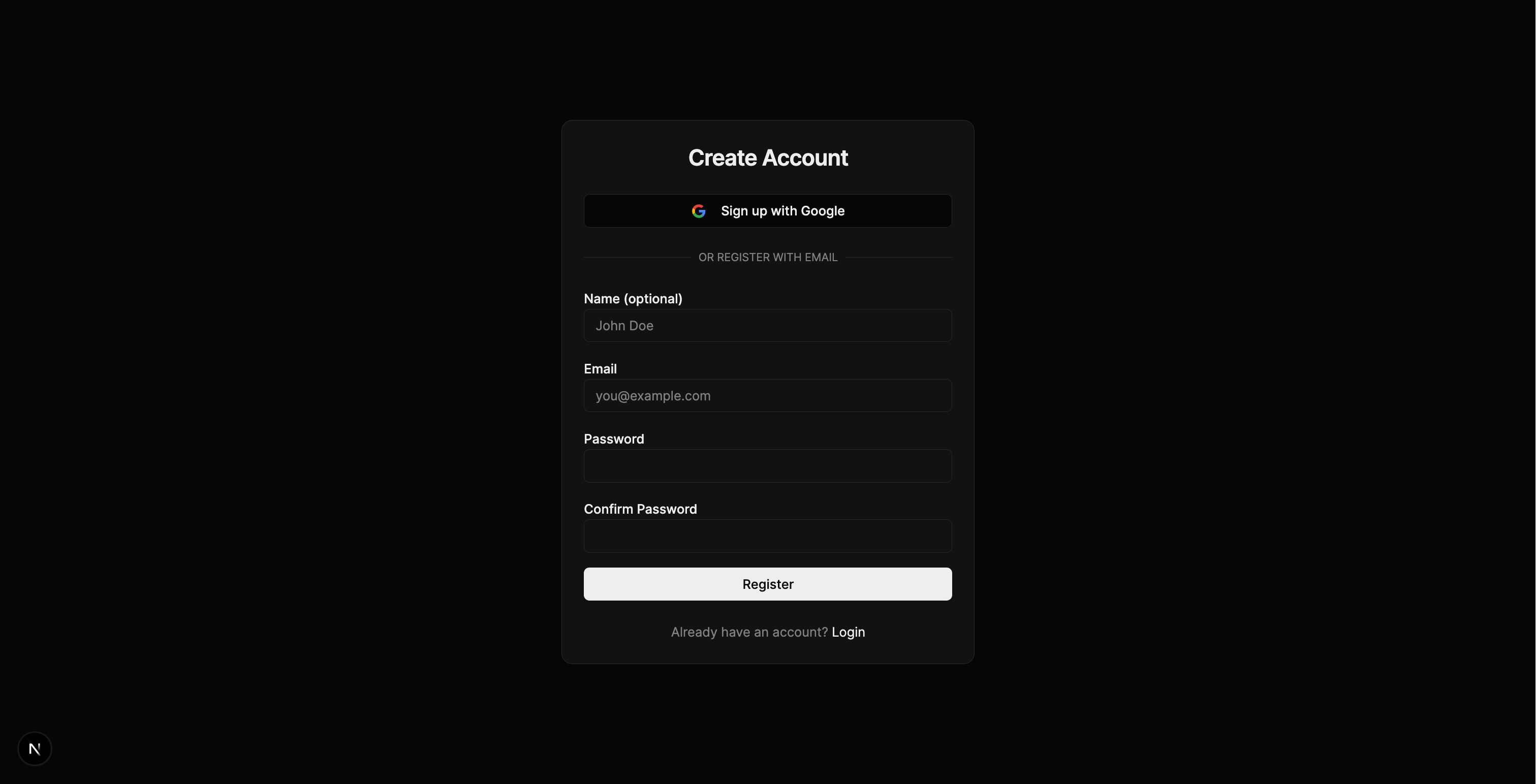

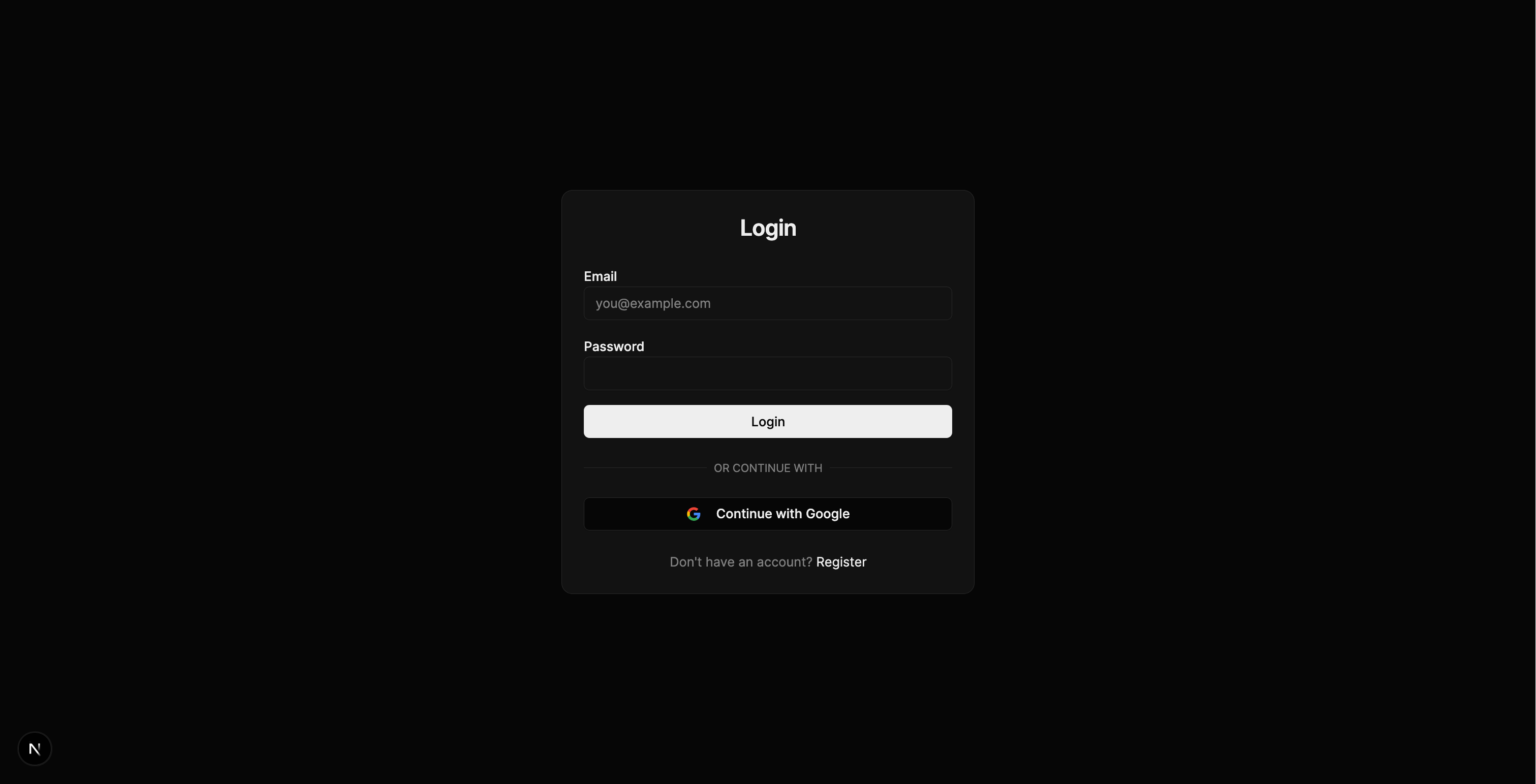

Authentication

| Register | Login |

|---|---|

|

|

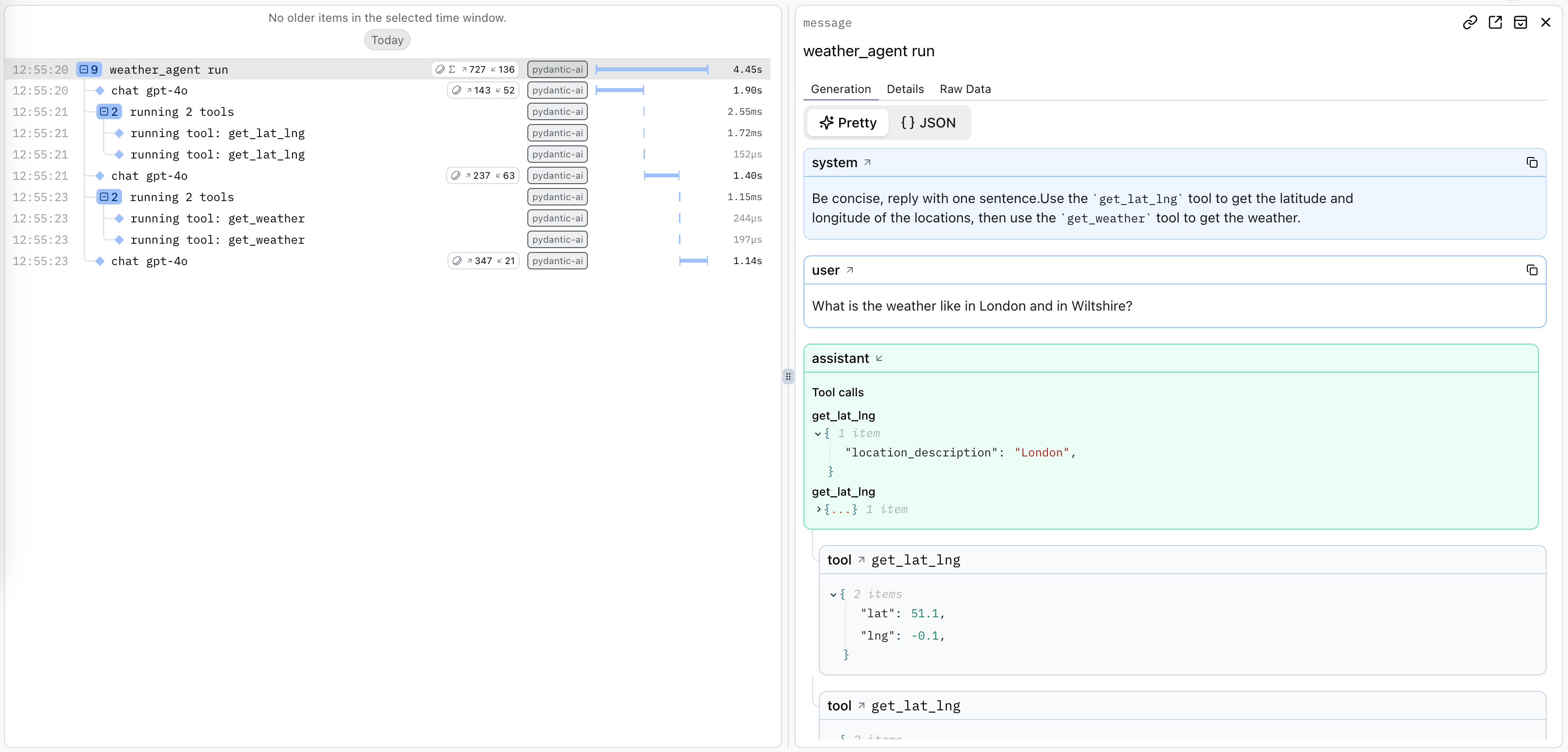

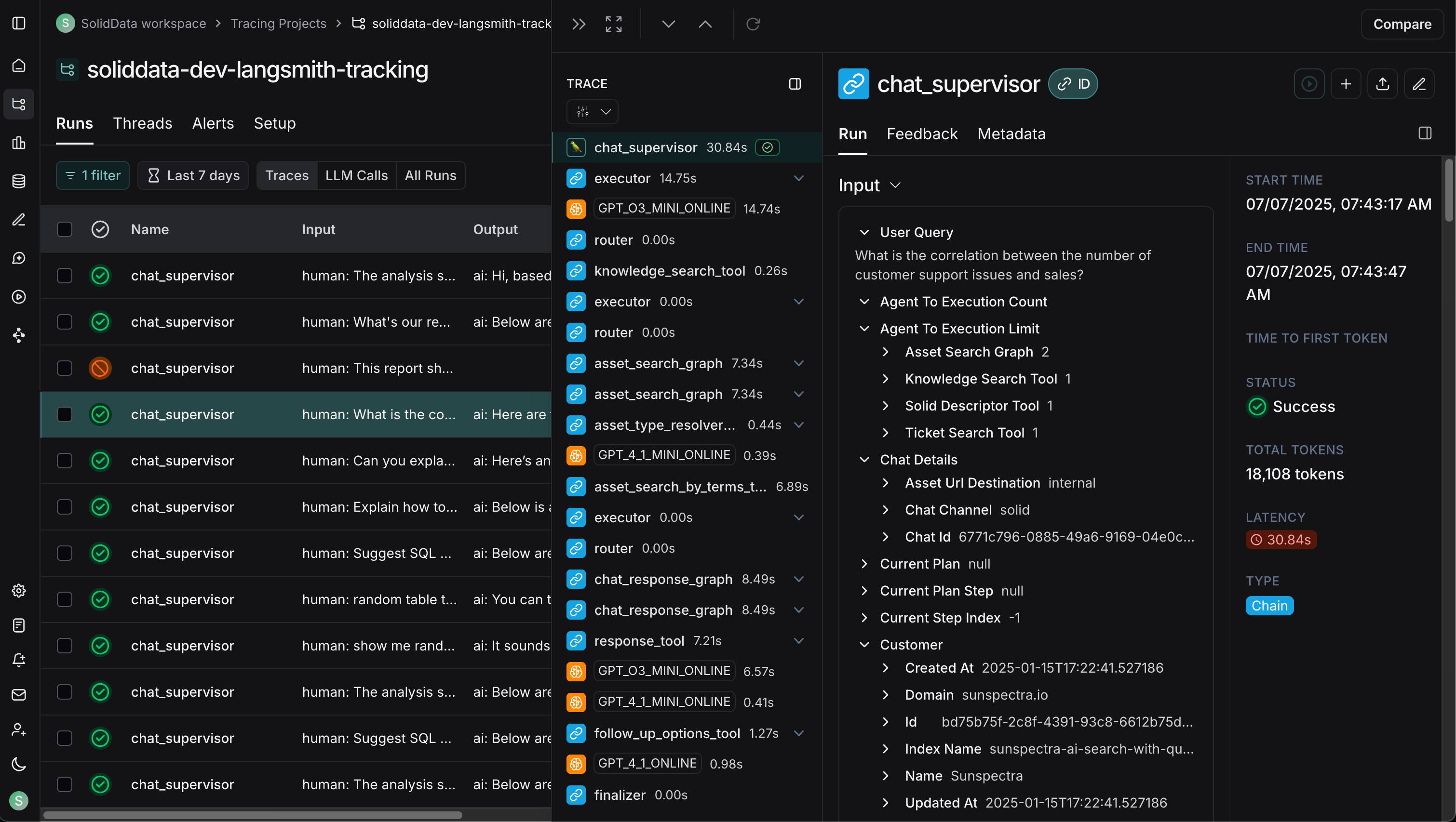

Observability

| Logfire (PydanticAI) | LangSmith (LangChain) |

|---|---|

|

|

Admin, Monitoring & API

| Celery Flower | SQLAdmin Panel |

|---|---|

|

|

| API Documentation |

|---|

|

🚀 Quick Start

Installation

# pip

pip install fastapi-fullstack

# uv (recommended)

uv tool install fastapi-fullstack

# pipx

pipx install fastapi-fullstack

Create Your Project

# Interactive wizard (recommended)

fastapi-fullstack new

# Quick mode with options

fastapi-fullstack create my_ai_app \

--database postgresql \

--auth jwt \

--frontend nextjs

# Use presets for common setups

fastapi-fullstack create my_ai_app --preset production # Full production setup

fastapi-fullstack create my_ai_app --preset ai-agent # AI agent with streaming

# Minimal project (no extras)

fastapi-fullstack create my_ai_app --minimal

Start Development

After generating your project, follow these steps:

1. Install dependencies

cd my_ai_app

make install

Windows Users: The make command requires GNU Make, which is not available by default on Windows. Install it via Chocolatey (choco install make), use WSL, or run the raw commands manually. Each generated project includes a "Manual Commands Reference" section in its README with all commands.

2. Start the database

# PostgreSQL (with Docker)

make docker-db

3. Create and apply database migrations

⚠️ Important: Both commands are required! db-migrate creates the migration file, and db-upgrade applies it to the database.

# Create initial migration (REQUIRED first time)

make db-migrate

# Enter message: "Initial migration"

# Apply migrations to create tables

make db-upgrade

4. Create admin user

make create-admin

# Enter email and password when prompted

5. Start the backend

make run

6. Start the frontend (new terminal)

cd frontend

bun install

bun dev

Access:

- API: http://localhost:8000

- Docs: http://localhost:8000/docs

- Admin Panel: http://localhost:8000/admin (login with admin user)

- Frontend: http://localhost:3000

Quick Start with Docker

Run everything in Docker:

make docker-up # Start backend + database

make docker-frontend # Start frontend

Using the Project CLI

Each generated project has a CLI named after your project_slug. For example, if you created my_ai_app:

cd backend

# The CLI command is: uv run <project_slug> <command>

uv run my_ai_app server run --reload # Start dev server

uv run my_ai_app db migrate -m "message" # Create migration

uv run my_ai_app db upgrade # Apply migrations

uv run my_ai_app user create-admin # Create admin user

Use make help to see all available Makefile shortcuts.

🏗️ Architecture

graph TB

subgraph Frontend["Frontend (Next.js 15)"]

UI[React Components]

WS[WebSocket Client]

Store[Zustand Stores]

end

subgraph Backend["Backend (FastAPI)"]

API[API Routes]

Services[Services Layer]

Repos[Repositories]

Agent[AI Agent]

end

subgraph Infrastructure

DB[(PostgreSQL/MongoDB)]

Redis[(Redis)]

Queue[Celery/Taskiq]

end

subgraph External

LLM[OpenAI/Anthropic]

Webhook[Webhook Endpoints]

end

UI --> API

WS <--> Agent

API --> Services

Services --> Repos

Services --> Agent

Repos --> DB

Agent --> LLM

Services --> Redis

Services --> Queue

Services --> Webhook

Layered Architecture

The backend follows a clean Repository + Service pattern:

graph LR

A[API Routes] --> B[Services]

B --> C[Repositories]

C --> D[(Database)]

B --> E[External APIs]

B --> F[AI Agents]

| Layer | Responsibility |

|---|---|

| Routes | HTTP handling, validation, auth |

| Services | Business logic, orchestration |

| Repositories | Data access, queries |

See Architecture Documentation for details.

🤖 AI Agent

Choose between PydanticAI and LangChain when generating your project, with support for multiple LLM providers:

# PydanticAI with OpenAI (default)

fastapi-fullstack create my_app --ai-agent --ai-framework pydantic_ai

# PydanticAI with Anthropic

fastapi-fullstack create my_app --ai-agent --ai-framework pydantic_ai --llm-provider anthropic

# PydanticAI with OpenRouter

fastapi-fullstack create my_app --ai-agent --ai-framework pydantic_ai --llm-provider openrouter

# LangChain with OpenAI

fastapi-fullstack create my_app --ai-agent --ai-framework langchain

# LangChain with Anthropic

fastapi-fullstack create my_app --ai-agent --ai-framework langchain --llm-provider anthropic

Supported LLM Providers

| Framework | OpenAI | Anthropic | OpenRouter |

|---|---|---|---|

| PydanticAI | ✓ | ✓ | ✓ |

| LangChain | ✓ | ✓ | - |

PydanticAI Integration

Type-safe agents with full dependency injection:

# app/agents/assistant.py
from dataclasses import dataclass

from pydantic_ai import Agent, RunContext
from sqlalchemy.ext.asyncio import AsyncSession  # assumes the SQLAlchemy async session


@dataclass
class Deps:
    """Per-request dependencies, available to tools as ctx.deps."""
    user_id: str | None = None
    db: AsyncSession | None = None


agent = Agent[Deps, str](
    model="openai:gpt-4o-mini",
    deps_type=Deps,
    system_prompt="You are a helpful assistant.",
)


@agent.tool
async def search_database(ctx: RunContext[Deps], query: str) -> list[dict]:
    """Search the database for relevant information."""
    # Access user context and database via ctx.deps
    ...

LangChain Integration

Flexible agents with LangGraph:

# app/agents/langchain_assistant.py
from langchain.tools import tool
from langchain_openai import ChatOpenAI
from langgraph.prebuilt import create_react_agent


@tool
def search_database(query: str) -> list[dict]:
    """Search the database for relevant information."""
    ...


agent = create_react_agent(
    model=ChatOpenAI(model="gpt-4o-mini"),
    tools=[search_database],
    prompt="You are a helpful assistant.",
)

WebSocket Streaming

Both frameworks use the same WebSocket endpoint with real-time streaming:

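from fastapi import APIRouter, WebSocket

router = APIRouter()


@router.websocket("/ws")
async def agent_ws(websocket: WebSocket):
    await websocket.accept()
    user_input = await websocket.receive_text()
    # Works with both PydanticAI and LangChain; agent.stream is a simplified
    # stand-in for the framework-specific streaming call.
    async for event in agent.stream(user_input):
        await websocket.send_json({
            "type": "text_delta",
            "content": event.content,
        })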
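To make the message shape concrete without a running server, the sketch below turns a stream of text chunks into the `text_delta` JSON messages shown above. `fake_agent_stream`, `to_ws_messages`, `collect`, and the final `done` message are assumptions made for illustration; the template's real event types may differ.

```python
import asyncio
import json
from collections.abc import AsyncIterator


async def fake_agent_stream(text: str) -> AsyncIterator[str]:
    """Hypothetical stand-in for an agent: yields the reply word by word."""
    for word in text.split():
        await asyncio.sleep(0)  # hand control back to the event loop
        yield word + " "


async def to_ws_messages(chunks: AsyncIterator[str]) -> AsyncIterator[str]:
    """Wrap each chunk in a text_delta message, then signal completion."""
    async for chunk in chunks:
        yield json.dumps({"type": "text_delta", "content": chunk})
    yield json.dumps({"type": "done"})  # assumed terminator, not from the template


async def collect(text: str) -> list[dict]:
    """Gather every message, as a client reading the socket would."""
    return [json.loads(m) async for m in to_ws_messages(fake_agent_stream(text))]


if __name__ == "__main__":
    for message in asyncio.run(collect("hello streaming world")):
        print(message)
```

A client can rebuild the full reply by concatenating the `content` of each `text_delta` message in arrival order.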

Observability

Each framework has its own observability solution:

| Framework | Observability | Dashboard |

|---|---|---|

| PydanticAI | Logfire | Agent runs, tool calls, token usage |

| LangChain | LangSmith | Traces, feedback, datasets |

See AI Agent Documentation for more.

📊 Observability

Logfire (for PydanticAI)

Logfire provides complete observability for your application - from AI agents to database queries. Built by the Pydantic team, it offers first-class support for the entire Python ecosystem.

graph LR

subgraph Your App

API[FastAPI]

Agent[PydanticAI]

DB[(Database)]

Cache[(Redis)]

Queue[Celery/Taskiq]

HTTP[HTTPX]

end

subgraph Logfire

Traces[Traces]

Metrics[Metrics]

Logs[Logs]

end

API --> Traces

Agent --> Traces

DB --> Traces

Cache --> Traces

Queue --> Traces

HTTP --> Traces

| Component | What You See |

|---|---|

| PydanticAI | Agent runs, tool calls, LLM requests, token usage, streaming events |

| FastAPI | Request/response traces, latency, status codes, route performance |

| PostgreSQL/MongoDB | Query execution time, slow queries, connection pool stats |

| Redis | Cache hits/misses, command latency, key patterns |

| Celery/Taskiq | Task execution, queue depth, worker performance |

| HTTPX | External API calls, response times, error rates |

LangSmith (for LangChain)

LangSmith provides observability specifically designed for LangChain applications:

| Feature | Description |

|---|---|

| Traces | Full execution traces for agent runs and chains |

| Feedback | Collect user feedback on agent responses |

| Datasets | Build evaluation datasets from production data |

| Monitoring | Track latency, errors, and token usage |

LangSmith is automatically configured when you choose LangChain:

# .env

LANGCHAIN_TRACING_V2=true

LANGCHAIN_API_KEY=your-api-key

LANGCHAIN_PROJECT=my_project
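If traces do not show up, a quick sanity check of these three variables can help. The helper below is a hypothetical sketch, not part of the template or of LangSmith; it only inspects the variable names listed above and assumes that `true` is the value that enables tracing.

```python
import os
from collections.abc import Mapping

REQUIRED = ("LANGCHAIN_TRACING_V2", "LANGCHAIN_API_KEY", "LANGCHAIN_PROJECT")


def langsmith_problems(env: Mapping[str, str] = os.environ) -> list[str]:
    """Return human-readable problems; an empty list means the settings look usable."""
    problems = [f"{name} is not set" for name in REQUIRED if not env.get(name)]
    if env.get("LANGCHAIN_TRACING_V2", "").lower() not in ("", "true"):
        problems.append("LANGCHAIN_TRACING_V2 should be 'true' to enable tracing")
    return problems


if __name__ == "__main__":
    for problem in langsmith_problems():
        print(problem)
```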

Configuration

Enable Logfire and select which components to instrument:

fastapi-fullstack new

# ✓ Enable Logfire observability

# ✓ Instrument FastAPI

# ✓ Instrument Database

# ✓ Instrument Redis

# ✓ Instrument Celery

# ✓ Instrument HTTPX

Usage

# Automatic instrumentation in app/main.py
import logfire

logfire.configure()
logfire.instrument_fastapi(app)
logfire.instrument_asyncpg()
logfire.instrument_redis()
logfire.instrument_httpx()

# Manual spans for custom logic
with logfire.span("process_order", order_id=order.id):
    await validate_order(order)
    await charge_payment(order)
    await send_confirmation(order)

For more details, see Logfire Documentation.

🛠️ Django-style CLI

Each generated project includes a powerful CLI inspired by Django's management commands:

Built-in Commands

# Server

my_app server run --reload

my_app server routes

# Database (Alembic wrapper)

my_app db init

my_app db migrate -m "Add users"

my_app db upgrade

# Users

my_app user create --email admin@example.com --superuser

my_app user list

Custom Commands

Create your own commands with auto-discovery:

# app/commands/seed.py
import click

from app.commands import command, error, info, success


@command("seed", help="Seed database with test data")
@click.option("--count", "-c", default=10, type=int)
@click.option("--dry-run", is_flag=True)
def seed_database(count: int, dry_run: bool):
    """Seed the database with sample data."""
    if dry_run:
        info(f"[DRY RUN] Would create {count} records")
        return
    # Your logic here
    success(f"Created {count} records!")

Commands are automatically discovered from app/commands/ - just create a file and use the @command decorator.

my_app cmd seed --count 100

my_app cmd seed --dry-run
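One common way to build this kind of auto-discovery is a decorator that records each command in a registry, plus a loader that imports every module in the commands package so the decorators run. The sketch below shows that idea with the standard library only. `COMMANDS`, `discover`, and `run` are hypothetical names, and this is not the template's actual implementation (which builds on click).

```python
import importlib
import pkgutil
from collections.abc import Callable

# Hypothetical registry: command name -> (function, help text).
COMMANDS: dict[str, tuple[Callable[..., object], str]] = {}


def command(name: str, help: str = "") -> Callable:
    """Register the decorated function under `name` and return it unchanged."""
    def decorator(func: Callable[..., object]) -> Callable[..., object]:
        if name in COMMANDS:
            raise ValueError(f"duplicate command: {name}")
        COMMANDS[name] = (func, help)
        return func
    return decorator


def discover(package_name: str) -> None:
    """Import every module in a package so its @command decorators execute."""
    package = importlib.import_module(package_name)
    for module in pkgutil.iter_modules(package.__path__):
        importlib.import_module(f"{package_name}.{module.name}")


@command("seed", help="Seed database with test data")
def seed_database(count: int = 10, dry_run: bool = False) -> str:
    prefix = "[DRY RUN] Would create" if dry_run else "Created"
    return f"{prefix} {count} records"


def run(name: str, **options: object) -> object:
    """Look up a registered command and call it with keyword options."""
    func, _help = COMMANDS[name]
    return func(**options)
```

With this shape, adding a command means adding a file; nothing else has to be edited because registration happens as a side effect of the import.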

📁 Generated Project Structure

my_project/

├── backend/

│ ├── app/

│ │ ├── main.py # FastAPI app with lifespan

│ │ ├── api/

│ │ │ ├── routes/v1/ # Versioned API endpoints

│ │ │ ├── deps.py # Dependency injection

│ │ │ └── router.py # Route aggregation

│ │ ├── core/ # Config, security, middleware

│ │ ├── db/models/ # SQLAlchemy/MongoDB models

│ │ ├── schemas/ # Pydantic schemas

│ │ ├── repositories/ # Data access layer

│ │ ├── services/ # Business logic

│ │ ├── agents/ # AI agents with centralized prompts

│ │ ├── commands/ # Django-style CLI commands

│ │ └── worker/ # Background tasks

│ ├── cli/ # Project CLI

│ ├── tests/ # pytest test suite

│ └── alembic/ # Database migrations

├── frontend/

│ ├── src/

│ │ ├── app/ # Next.js App Router

│ │ ├── components/ # React components

│ │ ├── hooks/ # useChat, useWebSocket, etc.

│ │ └── stores/ # Zustand state management

│ └── e2e/ # Playwright tests

├── docker-compose.yml

├── Makefile

└── README.md

Generated projects include version metadata in pyproject.toml for tracking:

[tool.fastapi-fullstack]

generator_version = "0.1.5"

generated_at = "2024-12-21T10:30:00+00:00"

⚙️ Configuration Options

Core Options

| Option | Values | Description |
|---|---|---|
| Database | postgresql, mongodb, sqlite, none | Async by default |
| ORM | sqlalchemy, sqlmodel | SQLModel for simplified syntax |
| Auth | jwt, api_key, both, none | JWT includes user management |
| OAuth | none, google | Social login |
| AI Framework | pydantic_ai, langchain | Choose your AI agent framework |
| LLM Provider | openai, anthropic, openrouter | OpenRouter only with PydanticAI |
| Background Tasks | none, celery, taskiq, arq | Distributed queues |
| Frontend | none, nextjs | Next.js 15 + React 19 |
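Some options constrain each other; the table notes that OpenRouter is only available with PydanticAI. The sketch below shows one way such a rule could be validated up front. `AgentChoice` and the two sets are hypothetical and only mirror the values listed in the table; the generator's own validation may be structured differently.

```python
from dataclasses import dataclass

AI_FRAMEWORKS = {"pydantic_ai", "langchain"}
LLM_PROVIDERS = {"openai", "anthropic", "openrouter"}


@dataclass(frozen=True)
class AgentChoice:
    ai_framework: str = "pydantic_ai"
    llm_provider: str = "openai"

    def __post_init__(self) -> None:
        if self.ai_framework not in AI_FRAMEWORKS:
            raise ValueError(f"unknown AI framework: {self.ai_framework}")
        if self.llm_provider not in LLM_PROVIDERS:
            raise ValueError(f"unknown LLM provider: {self.llm_provider}")
        # Constraint taken from the options table: OpenRouter needs PydanticAI.
        if self.llm_provider == "openrouter" and self.ai_framework != "pydantic_ai":
            raise ValueError("openrouter is only available with pydantic_ai")
```

Rejecting an invalid combination at construction time keeps the error close to the user's input instead of surfacing later during project generation.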

Presets

| Preset | Description |
|---|---|
| --preset production | Full production setup with Redis, Sentry, Kubernetes, Prometheus |
| --preset ai-agent | AI agent with WebSocket streaming and conversation persistence |
| --minimal | Minimal project with no extras |

Integrations

Select what you need:

fastapi-fullstack new

# ✓ Redis (caching/sessions)

# ✓ Rate limiting (slowapi)

# ✓ Pagination (fastapi-pagination)

# ✓ Admin Panel (SQLAdmin)

# ✓ AI Agent (PydanticAI or LangChain)

# ✓ Webhooks

# ✓ Sentry

# ✓ Logfire / LangSmith

# ✓ Prometheus

# ... and more

📚 Documentation

| Document | Description |

|---|---|

| Architecture | Repository + Service pattern, layered design |

| Frontend | Next.js setup, auth, state management |

| AI Agent | PydanticAI, tools, WebSocket streaming |

| Observability | Logfire integration, tracing, metrics |

| Deployment | Docker, Kubernetes, production setup |

| Development | Local setup, testing, debugging |

| Changelog | Version history and release notes |


🙏 Inspiration

This project is inspired by:

- full-stack-fastapi-template by @tiangolo

- fastapi-template by @s3rius

- FastAPI Best Practices by @zhanymkanov

- Django's management commands system

🤝 Contributing

Contributions are welcome! Please read our Contributing Guide for details.

📄 License

MIT License - see LICENSE for details.


File details

Details for the file fastapi_fullstack-0.2.0.tar.gz.

File metadata

- Download URL: fastapi_fullstack-0.2.0.tar.gz

- Upload date:

- Size: 232.8 kB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | b64c971fd35f7b2a4e1185c4ae77b0c5e6f6589df8bd8ad2bcc006d29657859d |
| MD5 | 57bbd83e5fc810aad33590068ce20b78 |
| BLAKE2b-256 | 4dd07bf7015069b5e47478896e2bcdaa67f06cbdbc0111d7d76d598b56c3a843 |
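A downloaded archive can be checked against the published SHA256 digest with the standard library. This is a minimal sketch; `sha256_of` and `matches_published` are hypothetical helper names, and the file path is whatever location the archive was saved to.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash the file in chunks so large archives do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        while chunk := handle.read(chunk_size):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published(path: Path, expected_hex: str) -> bool:
    """Compare against the published digest, ignoring case and surrounding whitespace."""
    return sha256_of(path) == expected_hex.strip().lower()
```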

Provenance

The following attestation bundles were made for fastapi_fullstack-0.2.0.tar.gz:

Publisher: release.yml on vstorm-co/full-stack-ai-agent-template

- Statement type: https://in-toto.io/Statement/v1
- Predicate type: https://docs.pypi.org/attestations/publish/v1
- Subject name: fastapi_fullstack-0.2.0.tar.gz
- Subject digest: b64c971fd35f7b2a4e1185c4ae77b0c5e6f6589df8bd8ad2bcc006d29657859d
- Sigstore transparency entry: 1004899067
- Sigstore integration time:
- Permalink: vstorm-co/full-stack-ai-agent-template@72f264090d8e0e144f6c5722c8ca159233e91249
- Branch / Tag: refs/tags/0.2.1
- Owner: https://github.com/vstorm-co
- Access: public
- Token Issuer: https://token.actions.githubusercontent.com
- Runner Environment: github-hosted
- Publication workflow: release.yml@72f264090d8e0e144f6c5722c8ca159233e91249
- Trigger Event: release

File details

Details for the file fastapi_fullstack-0.2.0-py3-none-any.whl.

File metadata

- Download URL: fastapi_fullstack-0.2.0-py3-none-any.whl

- Upload date:

- Size: 358.9 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | c63c34f2b8ac45c81a7acc42e74680116e0b175d701851068a4535eb5dabc5c8 |
| MD5 | ffdc4ff6b13a29941b9c0a99df3bf7b1 |
| BLAKE2b-256 | 0f99efcc5c73d2100fa96ee1f8458b0b4e7a3f932652de4dc39bf8e541afd000 |

Provenance

The following attestation bundles were made for fastapi_fullstack-0.2.0-py3-none-any.whl:

Publisher: release.yml on vstorm-co/full-stack-ai-agent-template

- Statement type: https://in-toto.io/Statement/v1
- Predicate type: https://docs.pypi.org/attestations/publish/v1
- Subject name: fastapi_fullstack-0.2.0-py3-none-any.whl
- Subject digest: c63c34f2b8ac45c81a7acc42e74680116e0b175d701851068a4535eb5dabc5c8
- Sigstore transparency entry: 1004899069
- Sigstore integration time:
- Permalink: vstorm-co/full-stack-ai-agent-template@72f264090d8e0e144f6c5722c8ca159233e91249
- Branch / Tag: refs/tags/0.2.1
- Owner: https://github.com/vstorm-co
- Access: public
- Token Issuer: https://token.actions.githubusercontent.com
- Runner Environment: github-hosted
- Publication workflow: release.yml@72f264090d8e0e144f6c5722c8ca159233e91249
- Trigger Event: release