A fully first-order (FFO) layer for differentiable convex optimization

Project description

This is the implementation of fully first-order bilevel gradient to replace the non-fully first-order methods in differentiable optimization.

The main comparison is qpth and cvxpylayer. Our method provides a fully first-order method to differentiate through optimization layers approximately.

It has the advantage in computation and memory efficiency, but in the tradeoff of the accuracy of the gradient.

Please install the required packages:

- torch

- cvxpy

- qpth

- cvxpylayer

- gurobi (python -m pip install gurobipy)

- cvxtorch (git clone https://github.com/cvxpy/cvxtorch.git, cd cvxtorch, pip install -e .)

1) Decision-focused learning (QP case)

Key files

main.py— entrypoint for all methodsffoqp.py- previous ffo version with l2 penaltyffoqp_eq_cst.py- new ffo version without l2 penalty (faster convergence in theory)ffoqp_eq_cst_schur/pdipm/parallelize.py- try to accelerate ffoqp_eq_cst

To run the code, please use:

python main.py --method=ffoqp_eq_cst --eps=0.1 --lr=0.00001 --ydim=200

python main.py --method=ffoqp --eps=0.1 --lr=0.00001 --ydim=200

python main.py --method=cvxpylayer --eps=0.1 --lr=0.00001 --ydim=200

python main.py --method=qpth --eps=0.1 --lr=0.00001 --ydim=200

(optional)

python main.py --method=ffoqp_eq_cst_pdipm --eps=0.1 --lr=0.00001 --ydim=200

python main.py --method=ffoqp_eq_cst_parallelize --eps=0.1 --lr=0.00001 --ydim=200

python main.py --method=ffoqp_eq_cst_schur --eps=0.1 --lr=0.00001 --ydim=200

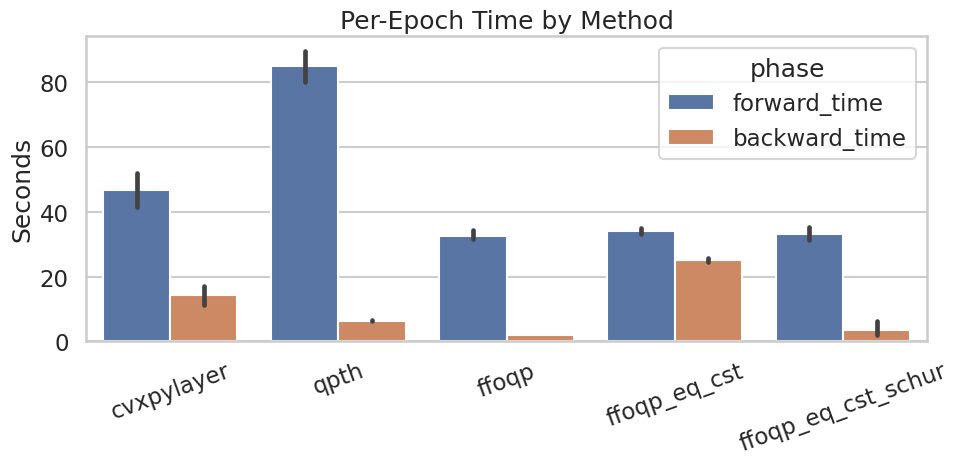

I ran a few simple experiments to compare the performance of the three methods. Each epoch includes 2048 samples (split into training and testing) to run decision-focused leanring (end-to-end learning).

For optimization problems of size 200, the computation results are as follows (please run plot_toy_dfl.ipynb to reproduce it)

The computation improvement can only be seen in larger instances of the optimization problem. This is likely due to the dominance of the overhead in the forward process to solve the optimization problem. Only when the size goes larger, the backward process of the optimization problem becomes more expensive than the forward process. In smaller instances, the overhead in the forward process dominates the computation time, and thus the improvement in the backward process is not significant enough to be observed. qpth also uses a faster algorithm to parallelize and implement the forward pass, which is why qpth is faster than cvxpylayer (in theory they are the same).

2) Second-order Cone program

To run the code, please run:

ffoqsocp_test.ipynb

We found that ffoqp's gradient approximation is more accurate than LPGD in this case.

3) Sudoku (differentiable optimization as a layer)

Key files

sudoku/main_sudoku.py— entrypoint for all methodsffocp_eq.py- new ffo version for general convex problem without l2 penaltycvxpylayers_local/cvxpylayer.py- incorporate the latest diffcp into cvxpylayer to support LPGD

To run the code, please use:

python sudoku/main_sudoku.py --method ffocp --n 3 --epoch 1 --batch_size 8

python sudoku/main_sudoku.py --method lpgd --n 3 --epoch 1 --batch_size 8

python sudoku/main_sudoku.py --method ffoqp --n 3 --epoch 1 --batch_size 8

python sudoku/main_sudoku.py --method cvxpylayer --n 3 --epoch 1 --batch_size 8

python sudoku/main_sudoku.py --method qpth --n 3 --epoch 1 --batch_size 8

To plot the results, please use

python sudoku/plot_results.py

Now we observed that LPGD has a very high test error (~0.9), while ffocp and other methods can achieve low test errors (~0.01-0.1).

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file ffolayer-0.1.2.tar.gz.

File metadata

- Download URL: ffolayer-0.1.2.tar.gz

- Upload date:

- Size: 33.4 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.10.14

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

70a6ee46ba2187fa62aec6bfe38714c5c10ca4ac0b6b640bae6bbac5b7a651f7

|

|

| MD5 |

e02cb82e9ecc178cfd7261a1e0151960

|

|

| BLAKE2b-256 |

913699b91396267f11796fecc616a1158775d22496ceee26612a385ff6360f97

|

File details

Details for the file ffolayer-0.1.2-py3-none-any.whl.

File metadata

- Download URL: ffolayer-0.1.2-py3-none-any.whl

- Upload date:

- Size: 26.6 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.2.0 CPython/3.10.14

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

f4177124140779be43ac5a058c5b79fd3d3eab49a21d87ad2ab576e8c656bdd7

|

|

| MD5 |

49b3eb33eb58ae15e91b35aa0e440cda

|

|

| BLAKE2b-256 |

e852287373912001ad0cc6a877ab8e108c9fc50d10eecdf5c8934be7e1b2bd7f

|