A chat interface over up-to-date Python library documentation.


🛩️ Fleet Context

A CLI tool over the top 1218 Python libraries.

Used for library Q&A and code generation with all available OpenAI models.

Website | Data Visualizer | PyPI | @fleet_ai

https://github.com/fleet-ai/context/assets/44193474/80381b25-551e-4602-8987-071e92354f6f

Quick Start

Install the package and run context to ask questions about the most up-to-date Python libraries. You will have to provide your OpenAI key to start a session.

pip install fleet-context

context

If you'd like to run the CLI tool locally, you can clone this repository, cd into it, then run:

pip install -e .

context

If you have an existing package that already uses the keyword context, you can also activate Fleet Context by running:

fleet-context

Limit libraries

Use -l or --libraries followed by a list of libraries to limit your session to those libraries. Defaults to all. View a list of all supported libraries on our website.

context -l langchain pydantic openai

Use a different OpenAI model

You can select a different OpenAI model by using -m or --model. Defaults to gpt-4. You can set your model to gpt-4-1106-preview (gpt-4-turbo), gpt-3.5-turbo, or gpt-3.5-turbo-16k.

context -m gpt-4-1106-preview

Advanced settings

You can control the number of retrieved chunks by using -k or --k_value (defaults to 15), and you can toggle whether the model cites its sources by using -c or --cite_sources (defaults to true).

context -k 25 -c false
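To make the flags above concrete, here is a minimal sketch of how options like these could be parsed with Python's argparse. It is not the project's actual implementation: the flag names and defaults come from this README, while `str_to_bool` and `build_parser` are hypothetical helpers introduced only for illustration.

```python
import argparse


def str_to_bool(value: str) -> bool:
    """Convert strings such as 'true' or 'false' into a bool."""
    lowered = value.lower()
    if lowered in {"true", "t", "yes", "1"}:
        return True
    if lowered in {"false", "f", "no", "0"}:
        return False
    raise argparse.ArgumentTypeError(f"expected true or false, got {value!r}")


def build_parser() -> argparse.ArgumentParser:
    # Hypothetical parser: flag names and defaults are taken from the README,
    # everything else is an assumption made for illustration.
    parser = argparse.ArgumentParser(prog="context")
    parser.add_argument("-l", "--libraries", nargs="+", default=None,
                        help="limit the session to these libraries (None means all)")
    parser.add_argument("-m", "--model", default="gpt-4",
                        help="OpenAI model to use")
    parser.add_argument("-k", "--k_value", type=int, default=15,
                        help="number of retrieved chunks")
    parser.add_argument("-c", "--cite_sources", type=str_to_bool, default=True,
                        help="whether the model cites its sources")
    return parser


# Parse an explicit argument list instead of sys.argv so the sketch runs anywhere.
args = build_parser().parse_args(["-l", "langchain", "pydantic", "-k", "25", "-c", "false"])
print(args.libraries, args.model, args.k_value, args.cite_sources)
```

Because `-c false` passes its value as a string, the sketch converts it explicitly; a plain `type=bool` would treat any non-empty string, including "false", as true.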

Evaluations

Results

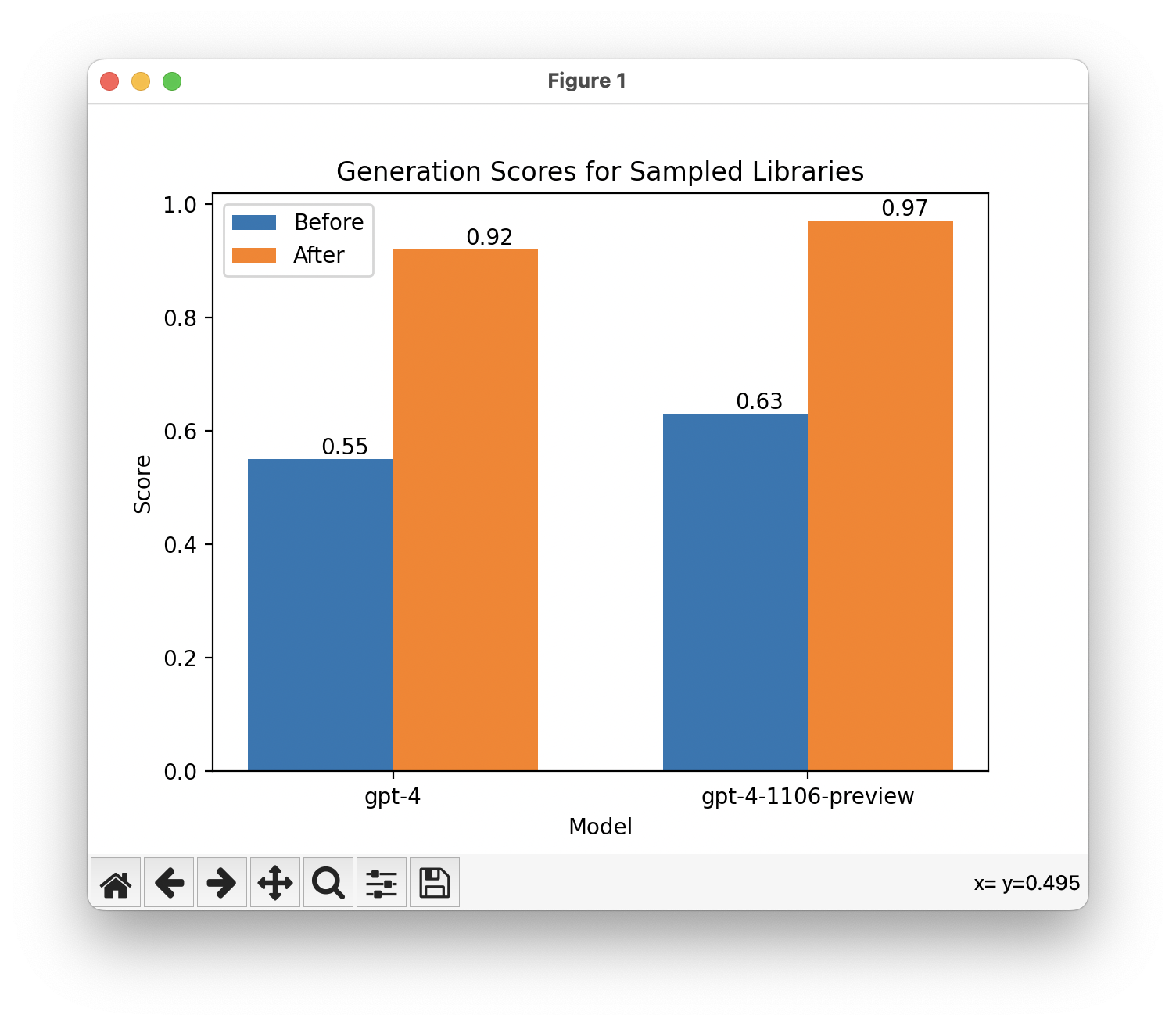

Sampled libraries

We saw a 37-point improvement for gpt-4 generation scores and a 34-point improvement for gpt-4-turbo generation scores amongst a randomly sampled set of 50 libraries.

For gpt-4, we attribute the improvement to the model's lack of knowledge of the most up-to-date library versions. For gpt-4-turbo, we attribute it to a combination of having relevant, up-to-date information to generate with and the relevance of the retrieved information.

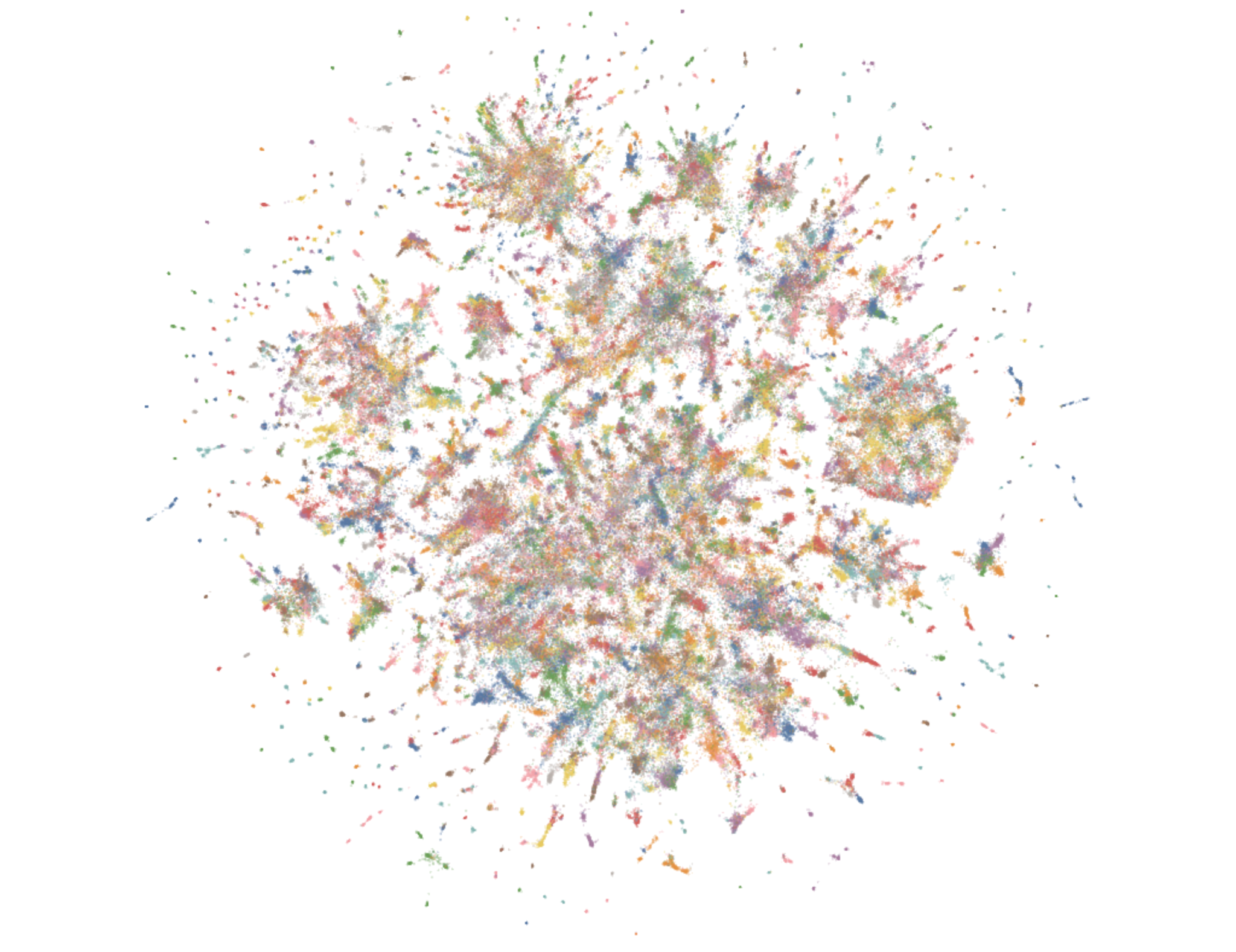

Embeddings

Check out our visualized data here.

You can download all embeddings here.
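As a rough illustration of what the -k option controls, the sketch below ranks a few toy chunks by cosine similarity to a query vector and keeps the top k. This is an assumption about how retrieval over embeddings typically works, not a description of Fleet Context's internals; the vectors, chunk texts, and function names are all made up for the example and say nothing about the format of the downloadable embeddings.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat zero vectors as dissimilar to everything
    return dot / (norm_a * norm_b)


def top_k_chunks(query_vector, chunks, k=15):
    """Return the texts of the k chunks most similar to query_vector.

    chunks is a list of (text, vector) pairs; this layout is hypothetical.
    """
    ranked = sorted(
        chunks,
        key=lambda chunk: cosine_similarity(query_vector, chunk[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:k]]


# Toy data: three-dimensional vectors stand in for real embeddings.
toy_chunks = [
    ("pydantic: BaseModel fields", [1.0, 0.0, 0.0]),
    ("langchain: chains overview", [0.0, 1.0, 0.0]),
    ("pydantic: validators", [0.9, 0.1, 0.0]),
]
print(top_k_chunks([1.0, 0.0, 0.0], toy_chunks, k=2))
```

A larger k hands the model more context at the cost of a longer prompt, which is the trade-off the -k flag exposes.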


Download files


Source Distribution

File details

Details for the file fleet-context-1.0.14.tar.gz.

File metadata

- Download URL: fleet-context-1.0.14.tar.gz

- Upload date:

- Size: 18.7 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/4.0.2 CPython/3.11.4

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | 52e58393f046fb860f801ffd86539c512d912f7d5177ecec487c86afc7ff8791 |
| MD5 | 47f81817275f51a3f9b4eebad6b2153e |
| BLAKE2b-256 | 376d10c591e671a1aace8bb710eefba1a7763136e6b22882ab870ecc7945ec9d |
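If you download the source distribution manually, you may want to check it against the SHA256 digest listed above. The following is a minimal sketch using Python's standard hashlib module; the helper names are hypothetical, and it assumes the archive has been saved locally under the file name shown in the file details.

```python
import hashlib

# SHA256 digest listed for fleet-context-1.0.14.tar.gz in the table above.
EXPECTED_SHA256 = "52e58393f046fb860f801ffd86539c512d912f7d5177ecec487c86afc7ff8791"


def sha256_of_file(path, chunk_size=65536):
    """Compute the SHA256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # iter() with a sentinel keeps reading until read() returns b"".
        for block in iter(lambda: handle.read(chunk_size), b""):
            digest.update(block)
    return digest.hexdigest()


def matches_expected(path, expected=EXPECTED_SHA256):
    """Return True if the file's SHA256 digest equals the expected digest."""
    return sha256_of_file(path) == expected.lower()
```

For example, `matches_expected("fleet-context-1.0.14.tar.gz")` would return True only if the local file's digest matches the one published here.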