Gradient boosting libraries integrated with pytorch

Project description

GBNet

XGBoost and LightGBM integrated into Pytorch as PyTorch Modules

Table of Contents

Install and Docs

pip install gbnet

Docs: https://gbnet.readthedocs.io/

Introduction

There are two main components of gbnet:

-

(1)

gbnet.xgbmodule,gbnet.lgbmoduleandgbnet.gblinearprovide the Pytorch Modules that allow fitting of XGBoost, LightGBM and Boosted Linear models using Pytorch's computational network and differentiation capabilities.- For example, if $

F(X)$ is the output of an XGBoost model, you can use Pytorch to define the loss function, $L(y, F(X))$. Pytorch handles the gradients of $L$ so, as a user, you only specify the loss function. - You can also fit two (or more) boosted models together with Pytorch-supported parametric components. For instance, a recommendation prediction might look like this: $

\sigma(F(user) \times G(item))$ where both $F$ and $G$ are separate boosting models producing embeddings of users and items respectively.gbnetmakes defining and fitting such a model almost as easy as using Pytorch itself.

- For example, if $

-

(2)

gbnet.modelsprovides specific example estimators that accomplish things that were not previously possible using only XGBoost or LightGBM. Current models:Forecastis a forecasting model similar in execution to Metas' Prophet algorithm. In the settings we tested,gbnet.models.forecasting.Forecastbeats the performance of Meta's Prophet algorithm (see the forecasting PR for a comparison).GBOrdis Ordinal Regression using GBMs (both XGBoost and LightGBM supported). The complex loss function (with fitable parameters) is specified in PyTorch and put on top of eitherXGBModuleorLGBModule.- Other models with plans to be integrated are time-varying Survival analysis and more with NLP.

Pytorch Modules

There are currently three Pytorch Modules in gbnet: lgbmodule.LGBModule, xgbmodule.XGBModule and gblinear.GBLinear. These create the interface between PyTorch and the boosting algorithms. LightGBM and XGBoost are wrapped in LGBModule and XGBModule respectively. GBLinear is a linear layer that is trained with boosting (rather than gradient descent) -- for some applications it trains much faster than gradient descent (see this PR for details).

Conceptually, how can Pytorch be used to fit XGBoost or LightGBM models?

Gradient Boosting Machines only require gradients and, for modern packages, hessians to train. Pytorch (and other neural network packages) calculates gradients and hessians. GBMs can therefore be fit as the first layer in neural networks using Pytorch.

CatBoost is also supported but in an experimental capacity since the current gbnet integration with CatBoost is not as performant as the other GBDT packages.

Is training a gbnet model closer to training a neural network or to training a GBM?

It's closer to training a GBM. Currently, the biggest difference between training using gbnet vs basic torch, is that gbnet, like basic usage of xgboost and lightgbm, requires the entire dataset to be fed in. Cached predictions allow these packages to train quickly, and caching cannot happen if input batches change with each training/boosting round. There are some ways around this but there is currently no native functionality in gbnet for true batch training. Additional info is provided in #12.

Basic training of a GBM for comparison to existing gradient boosting packages

import time

import lightgbm as lgb

import numpy as np

import xgboost as xgb

import torch

from gbnet import lgbmodule, xgbmodule

# Generate Dataset

np.random.seed(100)

n = 1000

input_dim = 20

output_dim = 1

X = np.random.random([n, input_dim])

B = np.random.random([input_dim, output_dim])

Y = X.dot(B) + np.random.random([n, output_dim])

iters = 100

t0 = time.time()

# XGBoost training for comparison

xbst = xgb.train(

params={'objective': 'reg:squarederror', 'base_score': 0.0},

dtrain=xgb.DMatrix(X, label=Y),

num_boost_round=iters

)

t1 = time.time()

# LightGBM training for comparison

lbst = lgb.train(

params={'verbose':-1},

train_set=lgb.Dataset(X, label=Y.flatten(), init_score=[0 for i in range(n)]),

num_boost_round=iters

)

t2 = time.time()

# XGBModule training

xnet = xgbmodule.XGBModule(n, input_dim, output_dim, params={})

xmse = torch.nn.MSELoss()

X_dmatrix = xgb.DMatrix(X)

for i in range(iters):

xnet.zero_grad()

xpred = xnet(X_dmatrix)

loss = 1/2 * xmse(xpred, torch.Tensor(Y)) # xgboost uses 1/2 (Y - P)^2

loss.backward(create_graph=True)

xnet.gb_step()

xnet.eval() # like any torch module, use eval mode for predictions

t3 = time.time()

# LGBModule training

lnet = lgbmodule.LGBModule(n, input_dim, output_dim, params={})

lmse = torch.nn.MSELoss()

X_dataset = lgb.Dataset(X)

for i in range(iters):

lnet.zero_grad()

lpred = lnet(X_dataset)

loss = lmse(lpred, torch.Tensor(Y))

loss.backward(create_graph=True)

lnet.gb_step()

lnet.eval() # use eval mode for predictions

t4 = time.time()

print(np.max(np.abs(xbst.predict(xgb.DMatrix(X)) - xnet(X_dmatrix).detach().numpy().flatten()))) # 9.537e-07

print(np.max(np.abs(lbst.predict(X) - lnet(X).detach().numpy().flatten()))) # 2.479e-07

print(f'xgboost time: {t1 - t0}') # 0.089

print(f'lightgbm time: {t2 - t1}') # 0.084

print(f'xgbmodule time: {t3 - t2}') # 0.166

print(f'lgbmodule time: {t4 - t3}') # 0.123

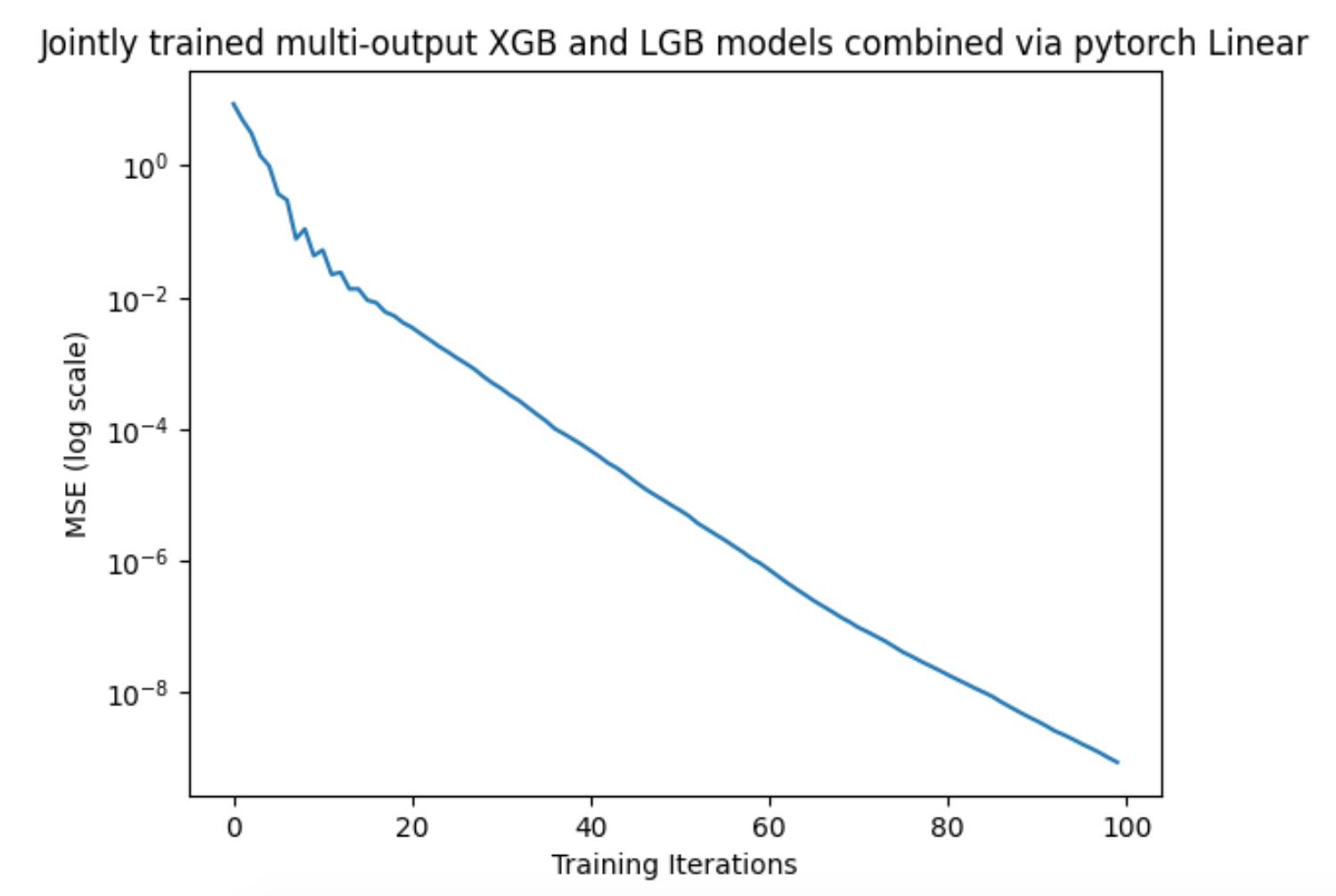

Training XGBoost and LightGBM together

import time

import numpy as np

import torch

from gbnet import lgbmodule, xgbmodule

# Create new module that jointly trains multi-output xgboost and lightgbm models

# the outputs of these gbm models is then combined by a linear layer

class GBPlus(torch.nn.Module):

def __init__(self, input_dim, intermediate_dim, output_dim):

super(GBPlus, self).__init__()

self.xgb = xgbmodule.XGBModule(n, input_dim, intermediate_dim, {'eta': 0.1})

self.lgb = lgbmodule.LGBModule(n, input_dim, intermediate_dim, {'eta': 0.1})

self.linear = torch.nn.Linear(intermediate_dim, output_dim)

def forward(self, input_array):

xpreds = self.xgb(input_array)

lpreds = self.lgb(input_array)

preds = self.linear(xpreds + lpreds)

return preds

def gb_step(self):

self.xgb.gb_step()

self.lgb.gb_step()

# Generate Dataset

np.random.seed(100)

n = 1000

input_dim = 10

output_dim = 1

X = np.random.random([n, input_dim])

B = np.random.random([input_dim, output_dim])

Y = X.dot(B) + np.random.random([n, output_dim])

intermediate_dim = 10

gbp = GBPlus(input_dim, intermediate_dim, output_dim)

mse = torch.nn.MSELoss()

optimizer = torch.optim.Adam(gbp.parameters(), lr=0.005)

t0 = time.time()

losses = []

for i in range(100):

optimizer.zero_grad()

preds = gbp(X)

loss = mse(preds, torch.Tensor(Y))

loss.backward(create_graph=True) # create_graph=True required for any gbnet

losses.append(loss.detach().numpy().copy())

gbp.gb_step() # required to update the gbms

optimizer.step()

t1 = time.time()

print(t1 - t0) # 5.821

Models

Forecasting

gbnet.models.forecasting.Forecast outperforms Meta's popular Prophet algorithm on basic benchmarks (see the forecasting PR for a comparison). Starter comparison code:

import pandas as pd

from prophet import Prophet

from sklearn.metrics import root_mean_squared_error

from gbnet.models import forecasting

## Load and split data

url = "https://raw.githubusercontent.com/facebook/prophet/main/examples/example_yosemite_temps.csv"

df = pd.read_csv(url)

df['ds'] = pd.to_datetime(df['ds'])

train = df[df['ds'] < df['ds'].median()].reset_index(drop=True).copy()

test = df[df['ds'] >= df['ds'].median()].reset_index(drop=True).copy()

## train and predict comparing out-of-the-box gbnet & prophet

# gbnet

gbnet_forecast_model = forecasting.Forecast()

gbnet_forecast_model.fit(train, train['y'])

test['gbnet_pred'] = gbnet_forecast_model.predict(test)['yhat']

# prophet

prophet_model = Prophet()

prophet_model.fit(train)

test['prophet_pred'] = prophet_model.predict(test)['yhat']

sel = test['y'].notnull()

print(f"gbnet rmse: {root_mean_squared_error(test[sel]['y'], test[sel]['gbnet_pred'])}")

print(f"prophet rmse: {root_mean_squared_error(test[sel]['y'], test[sel]['prophet_pred'])}")

# gbnet rmse: 8.757314439339462

# prophet rmse: 20.10509806878121

Ordinal Regression

See this notebook for examples.

from gbnet.models import ordinal_regression

sklearn_estimator = ordinal_regression.GBOrd(num_classes=10)

Contributing

Contributions are welcome! Here are some ways you can help:

- Report bugs and request features by opening issues

- Submit pull requests with bug fixes or new features

- Improve documentation and examples

- Add tests to increase code coverage

Before submitting a pull request:

- Fork the repository and create a new branch

- Add tests for any new functionality

- Ensure all tests pass by running

pytest - Update documentation as needed

- Follow the existing code style

For major changes, please open an issue first to discuss what you would like to change.

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file gbnet-0.5.0.tar.gz.

File metadata

- Download URL: gbnet-0.5.0.tar.gz

- Upload date:

- Size: 31.0 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.9.22

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

e90cd3c0c72938059026f9f52e864b314fc60cceed250b9aee2abab8ea89e92a

|

|

| MD5 |

3b5ac717e9ca227c0472a392d8ab2169

|

|

| BLAKE2b-256 |

f0c79d6b3972acbc2efb3ce93a28972b59bc9ad07cf32d3ebb0d79b2b6b17f55

|

File details

Details for the file gbnet-0.5.0-py3-none-any.whl.

File metadata

- Download URL: gbnet-0.5.0-py3-none-any.whl

- Upload date:

- Size: 35.9 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: twine/6.1.0 CPython/3.9.22

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

fb3615b764e65ff2ebbf43e871acd62276f8860a8b8b2eab48a6e2d27a608fd0

|

|

| MD5 |

bd353950c4daca19b4abf652481e114c

|

|

| BLAKE2b-256 |

64adc8aa9d72d1c4488e0db50cf933dc2599bf58fdca7d1d80a4ea0d32a701af

|