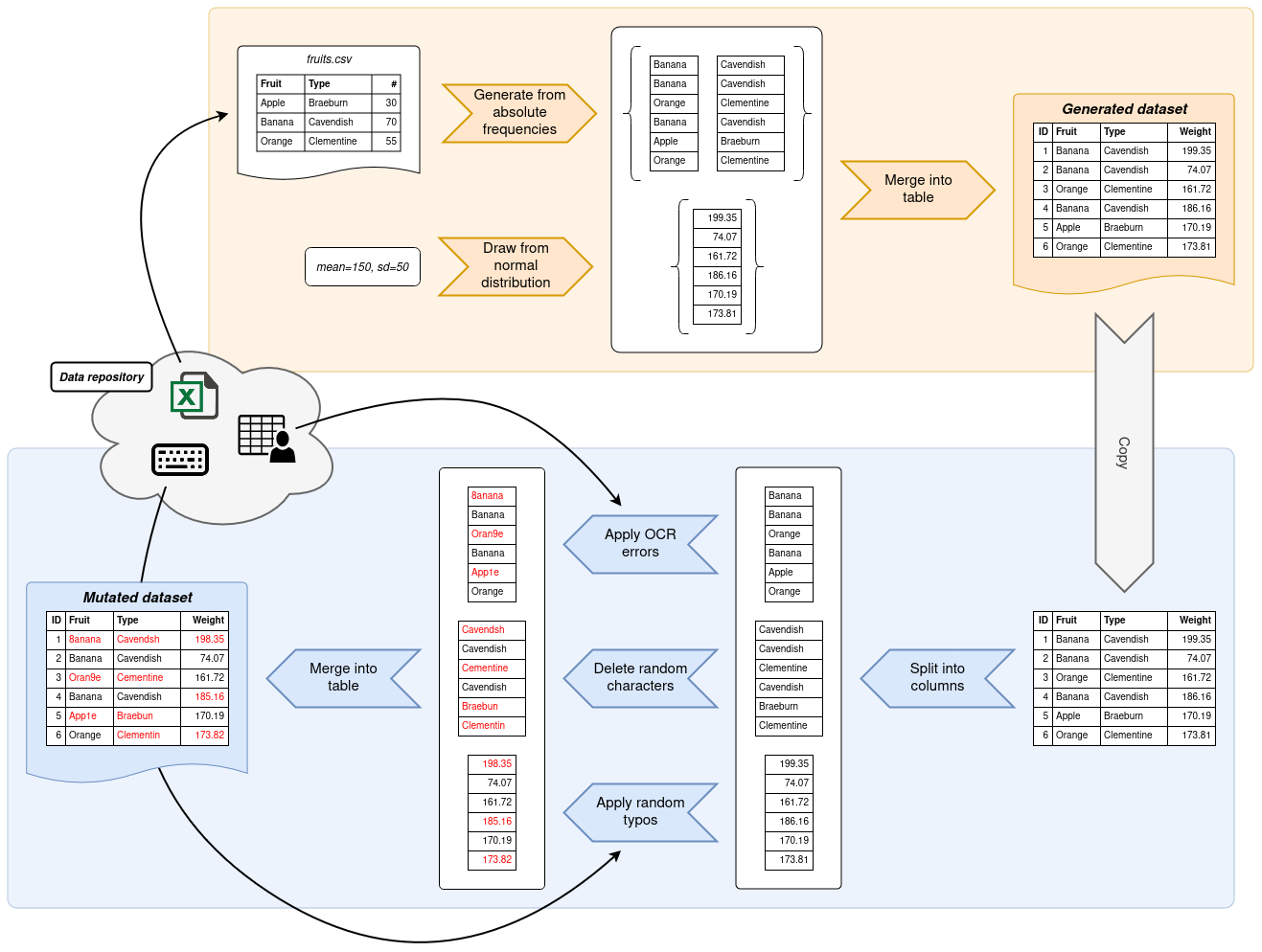

Generation and mutation of realistic data at scale.


Gecko is a Python library for the bulk generation and mutation of realistic personal data. It is a spiritual successor to the GeCo framework which was initially published by Tran, Vatsalan and Christen. Gecko reimplements the most promising aspects of the original framework for modern Python with a simplified API, adds extra features and massively improves performance thanks to NumPy and Pandas.

Installation

Install with pip:

pip install gecko-syndata

Install with Poetry:

poetry add gecko-syndata

Basic usage

Please see the docs for an in-depth guide on how to use the library.

Writing a data generation script with Gecko is usually split into two consecutive steps. In the first step, data is generated based on information that you provide. Most commonly, Gecko pulls the information it needs from frequency tables, although other means of generating data are possible. Gecko will then output a dataset to your specifications.
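To make the idea of generating values from a frequency table more concrete, here is a minimal sketch using only NumPy and Pandas. It is not Gecko's implementation. The table contents and the column names `last_name` and `count` are made up for illustration.

```python
import io

import numpy as np
import pandas as pd

# Hypothetical frequency table; a real one would be loaded from a CSV file.
csv_text = "last_name,count\nMüller,700\nSchmidt,200\nSchneider,100\n"
df_freq = pd.read_csv(io.StringIO(csv_text))

rng = np.random.default_rng(727)

# Convert absolute counts into probabilities that sum to one.
probabilities = (df_freq["count"] / df_freq["count"].sum()).to_numpy()

# Draw all values in one vectorized call; frequent names are drawn more often.
samples = rng.choice(df_freq["last_name"].to_numpy(), size=10_000, p=probabilities)

srs_last_name = pd.Series(samples, name="last_name")
print(srs_last_name.value_counts(normalize=True))
```

The relative frequencies in the output should land close to the shares in the table, which is the property that makes data generated this way look realistic.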

In the second step, a copy of this dataset is mutated. Gecko provides functions which deliberately introduce errors into your dataset. These errors can take the shape of typos, edit errors and other common error sources. By the end, you will have a generated dataset and a mutated copy thereof.
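The mutation step can be pictured with a small sketch that deletes one character from a random subset of rows. This is an illustration built on plain NumPy and Pandas, not Gecko's own mutator. The helper names `delete_random_char` and `mutate_fraction` are hypothetical.

```python
import numpy as np
import pandas as pd


def delete_random_char(value: str, rng: np.random.Generator) -> str:
    """Remove one randomly chosen character from a non-empty string."""
    if len(value) == 0:
        return value
    idx = int(rng.integers(len(value)))
    return value[:idx] + value[idx + 1:]


def mutate_fraction(srs: pd.Series, p: float, rng: np.random.Generator) -> pd.Series:
    """Return a copy of `srs` in which roughly a fraction `p` of rows lost a character."""
    srs_out = srs.copy()
    # Select the rows to mutate with a single vectorized draw.
    mask = rng.random(len(srs)) < p
    if mask.any():
        srs_out.loc[mask] = [delete_random_char(v, rng) for v in srs[mask]]
    return srs_out


rng = np.random.default_rng(727)
srs_original = pd.Series(["Müller", "Schmidt", "Schneider", "Fischer"] * 250, name="last_name")
srs_mutated = mutate_fraction(srs_original, 0.1, rng)

changed = int((srs_original != srs_mutated).sum())
print(f"{changed} of {len(srs_original)} rows were changed")
```

The original series is left untouched, which mirrors the idea of ending up with a clean dataset and a mutated copy.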

Gecko exposes two modules, generator and mutator, to help you write data generation scripts.

Both contain built-in functions covering the most common use cases for generating data from frequency information and mutating data based on common error sources, such as typos, OCR errors and much more.

The following example gives a very brief overview of what a data generation script with Gecko might look like. It uses frequency tables from the Gecko data repository which has been cloned into a directory next to the script itself.

from pathlib import Path

import numpy as np

from gecko import generator, mutator

# create a RNG with a set seed for reproducible results
rng = np.random.default_rng(727)

# path to the Gecko data repository
gecko_data_dir = Path("gecko-data")

# create a data frame with 10,000 rows and a single column called "last_name"
# which sources its values from the frequency table with the same name
df_generated = generator.to_data_frame(
    [
        ("last_name", generator.from_frequency_table(
            gecko_data_dir / "de_DE" / "last-name.csv",
            value_column="last_name",
            freq_column="count",
            rng=rng,
        )),
    ],
    10_000,
)

# mutate this data frame by randomly deleting characters in 1% of all rows
df_mutated = mutator.mutate_data_frame(
    df_generated,
    [
        ("last_name", (.01, mutator.with_delete(rng))),
    ],
)

# export both data frames using Pandas' to_csv function
df_generated.to_csv("german-generated.csv", index_label="id")
df_mutated.to_csv("german-mutated.csv", index_label="id")

For a more extensive usage guide, refer to the docs.
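Once a generated and a mutated data frame exist, it can be useful to check which rows actually differ. The following sketch uses only Pandas and small stand-in data frames. It assumes that the mutated frame keeps the same index and row order as the generated one, which is an assumption of this example and not a documented guarantee.

```python
import pandas as pd

# Small stand-ins for a generated data frame and its mutated copy.
df_generated = pd.DataFrame({"last_name": ["Müller", "Schmidt", "Schneider", "Fischer"]})
df_mutated = pd.DataFrame({"last_name": ["Müller", "Schmit", "Schneider", "Fischr"]})

# Assumption: both frames share the same index, so rows can be compared element-wise.
changed_mask = df_generated["last_name"] != df_mutated["last_name"]
print(f"{changed_mask.mean():.1%} of rows differ")

# Put original and mutated values side by side for the affected rows.
df_diff = pd.DataFrame({
    "original": df_generated.loc[changed_mask, "last_name"],
    "mutated": df_mutated.loc[changed_mask, "last_name"],
})
print(df_diff)
```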

Rationale

The GeCo framework was originally conceived to facilitate the generation and mutation of personal data to validate record linkage algorithms. In the field of record linkage, real-world personal data to test new algorithms on is hard to come by. Hence, GeCo took a synthetic approach using statistical models based on publicly available data. GeCo was built for Python 2.7 and has not seen any active development since its last publication in 2013. The general idea of providing shareable and reproducible Python scripts to generate personal data, however, still holds a lot of promise. This has led to the development of the Gecko library.

A lot of GeCo's weaknesses were rectified with this library.

- Vectorized functions from Pandas and NumPy provide significant performance boosts and aid integration into existing data science applications.

- A simplified API allows for a much easier development of custom generators and mutators.

- NumPy's random number generation routines instead of Python's built-in random module make fine-tuned reproducible results a breeze.

Gecko therefore seeks to be GeCo's "bigger brother" and aims to provide a much more refined experience to generate realistic personal data.
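The point about reproducible results with NumPy's random number generation can be sketched as follows. This example uses only NumPy and does not involve Gecko itself. The names in the array and the split into separate streams for generation and mutation are illustrative assumptions.

```python
import numpy as np

names = np.array(["Müller", "Schmidt", "Schneider"])

# Two generators created with the same seed produce identical draws.
rng_a = np.random.default_rng(727)
rng_b = np.random.default_rng(727)
draw_a = rng_a.choice(names, size=5)
draw_b = rng_b.choice(names, size=5)
print((draw_a == draw_b).all())

# A SeedSequence can spawn independent child streams from one root seed,
# for example one stream for generation and one for mutation.
seed_seq = np.random.SeedSequence(727)
child_generate, child_mutate = seed_seq.spawn(2)
rng_generate = np.random.default_rng(child_generate)
rng_mutate = np.random.default_rng(child_mutate)
print(rng_generate.random(), rng_mutate.random())
```

Keeping separate streams means that changing the number of draws in one step does not shift the random values consumed by the other.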

Disclaimer

Gecko is still very much in a "beta" state. As it stands, it satisfies our internal use cases within the Medical Data Science group, but we also seek wider adoption. If you find any issues with the library or have suggestions for improvements, do not hesitate to contact us.

License

Gecko is released under the MIT License.


Download files


File details

Details for the file gecko_syndata-0.6.4.tar.gz.

File metadata

- Download URL: gecko_syndata-0.6.4.tar.gz

- Upload date:

- Size: 25.9 kB

- Tags: Source

- Uploaded using Trusted Publishing? No

- Uploaded via: poetry/1.8.4 CPython/3.10.12 Linux/6.5.0-1025-azure

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | f6670b558051cc4e1eea9303f7f505e101cfbb704b5a26ad25876c7f5643dac4 |
| MD5 | 9d5a2605c6d2d353855e17fa1736ebe5 |
| BLAKE2b-256 | 111e32a530ba32eb6934c6b46a52446aade36c59f8c52bdb9e16eeeca729da29 |

File details

Details for the file gecko_syndata-0.6.4-py3-none-any.whl.

File metadata

- Download URL: gecko_syndata-0.6.4-py3-none-any.whl

- Upload date:

- Size: 26.5 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? No

- Uploaded via: poetry/1.8.4 CPython/3.10.12 Linux/6.5.0-1025-azure

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | c6867cd0de343487e752435e2e596383653853ca3b86c67cfe3410e4de2cd6c1 |
| MD5 | d77605a2747ed993cb261052b8d505e3 |
| BLAKE2b-256 | 87a25eafa8c04e6f87ea320da036e65aa23aedf17a961fe023056db471069a07 |
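A downloaded file can be checked against the SHA256 digests listed above with Python's standard `hashlib` module. The sketch below assumes the wheel was saved to the current working directory under its original file name. The helper `sha256_of_file` is a hypothetical name used for illustration.

```python
import hashlib
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 65536) -> str:
    """Compute the SHA256 hex digest of a file without loading it into memory at once."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


# Digest of the wheel as listed in the file hashes table.
expected = "c6867cd0de343487e752435e2e596383653853ca3b86c67cfe3410e4de2cd6c1"

# Assumption: the wheel was downloaded into the current working directory.
wheel_path = Path("gecko_syndata-0.6.4-py3-none-any.whl")

if wheel_path.exists():
    actual = sha256_of_file(wheel_path)
    print("digest matches" if actual == expected else "digest mismatch")
else:
    print(f"{wheel_path} not found, nothing to verify")
```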