MCP server for AI-powered research using Gemini: quick grounded search + Deep Research Agent


Gemini Research MCP Server

MCP server for AI-powered research using Gemini. Fast grounded search, URL extraction, comprehensive Deep Research, and session management.

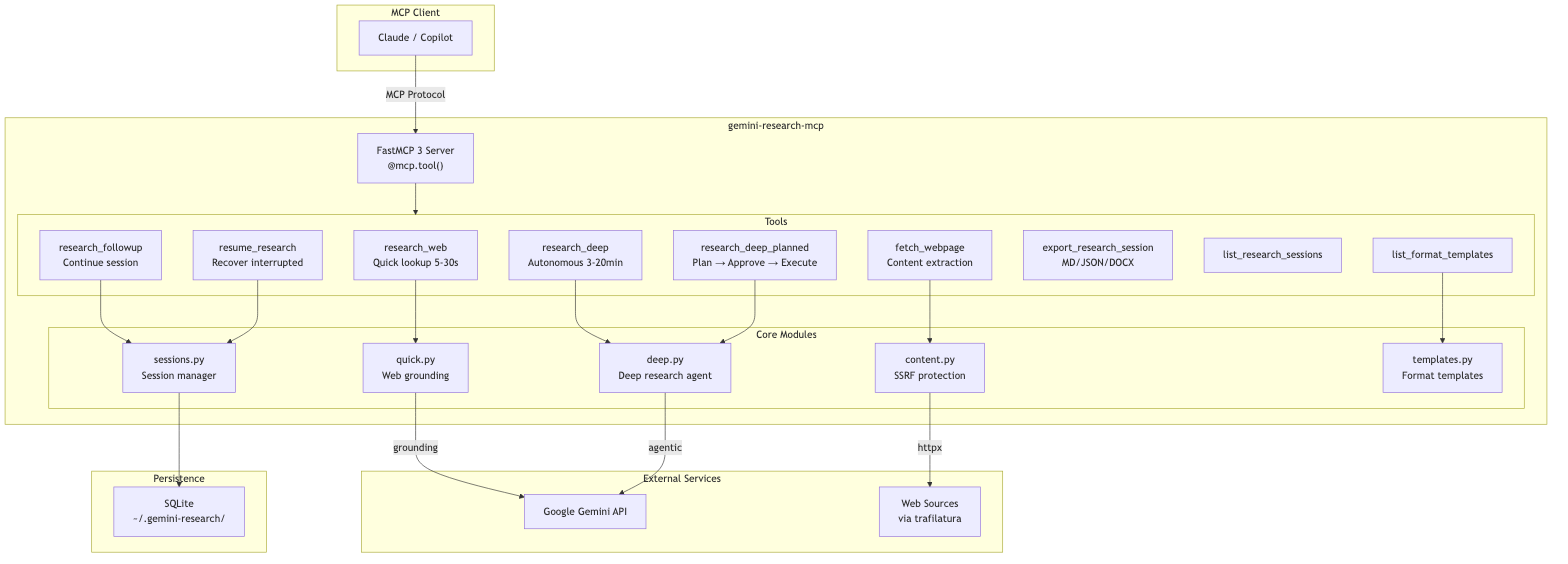

Architecture

Mermaid source

```mermaid
flowchart TB
    subgraph Client["MCP Client"]
        Claude["Claude / Copilot"]
    end

    subgraph Server["gemini-research-mcp"]
        direction TB
        FastMCP["FastMCP 3 Server<br/>@mcp.tool()"]

        subgraph Tools["Tools"]
            RW["research_web<br/>Quick lookup 5-30s"]
            RD["research_deep<br/>Autonomous 3-20min"]
            RF["research_followup<br/>Continue session"]
            RR["resume_research<br/>Recover interrupted"]
            FW["fetch_webpage<br/>Content extraction"]
            EX["export_research_session<br/>MD/JSON/DOCX"]
            LS["list_research_sessions"]
            LT["list_format_templates"]
        end

        subgraph Modules["Core Modules"]
            Quick["quick.py<br/>Web grounding"]
            Deep["deep.py<br/>Deep research agent"]
            Content["content.py<br/>SSRF protection"]
            StorageMod["storage.py<br/>Session manager"]
            Templates["templates.py<br/>Format templates"]
        end
    end

    subgraph External["External Services"]
        Gemini["Google Gemini API"]
        Web["Web Sources<br/>via trafilatura"]
    end

    subgraph Storage["Persistence"]
        SQLite["SQLite<br/>~/.gemini-research/"]
    end

    Claude -->|"MCP Protocol"| FastMCP
    FastMCP --> Tools
    RW --> Quick
    RD --> Deep
    RF --> StorageMod
    RR --> StorageMod
    FW --> Content
    LT --> Templates
    Quick -->|"grounding"| Gemini
    Deep -->|"agentic"| Gemini
    Content -->|"httpx"| Web
    StorageMod --> SQLite
```

Tools

| Tool | Description | Latency |
|---|---|---|
| `research_web` | Fast web search with citations | 5-30 sec |
| `research_deep` | Multi-step autonomous research (MCP Tasks) | 3-20 min |
| `resume_research` | Resume interrupted or in-progress sessions | instant |
| `research_followup` | Continue the conversation after research | 5-30 sec |
| `list_research_sessions` | List saved research sessions | instant |
| `list_format_templates` | Browse report format templates | instant |
| `export_research_session` | Export to Markdown, JSON, or DOCX | instant |
| `fetch_webpage` | Extract article content from a specific URL (SSRF-protected, chunkable) | 0.5-2 sec |

fetch_webpage Parameters

The fetch_webpage tool supports chunked reading for large pages and optional proxy routing:

| Parameter | Type | Default | Description |
|---|---|---|---|
| `url` | string | required | HTTP/HTTPS URL to fetch |
| `max_length` | integer or null | `null` | Maximum number of characters to return (the chunk size) |
| `start_index` | integer | `0` | Character offset at which to start reading (for pagination) |
| `proxy_url` | string or null | `null` | Optional HTTP(S) proxy URL for this request |
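The `max_length` and `start_index` parameters behave like a cursor over the extracted text. As a minimal sketch, assuming the server slices the extracted text as `text[start_index:start_index + max_length]` and that the next offset is simply the end of the previous slice, a client could page through a long article as follows. `read_chunk` and `read_all` are hypothetical helpers that model this behavior locally; they are not part of the package, and the real tool reports the next offset in a continuation hint rather than as a return value.

```python
from typing import List, Optional, Tuple


def read_chunk(
    text: str, start_index: int = 0, max_length: Optional[int] = None
) -> Tuple[str, Optional[int]]:
    """Hypothetical model of fetch_webpage chunking.

    Returns the chunk and the next start_index, or None once the text is exhausted.
    """
    if start_index < 0:
        raise ValueError("start_index must be >= 0")
    if max_length is None:
        # No chunk size: return everything from the offset onward.
        return text[start_index:], None
    if max_length <= 0:
        raise ValueError("max_length must be positive")
    end = start_index + max_length
    chunk = text[start_index:end]
    # A continuation offset only exists if text remains past this chunk.
    next_index = end if end < len(text) else None
    return chunk, next_index


def read_all(text: str, max_length: int) -> List[str]:
    """Walk the whole document chunk by chunk, as a client following hints would."""
    chunks: List[str] = []
    cursor: Optional[int] = 0
    while cursor is not None:
        chunk, cursor = read_chunk(text, cursor, max_length)
        chunks.append(chunk)
    return chunks


if __name__ == "__main__":
    print(read_all("abcdefghij", 4))  # ['abcd', 'efgh', 'ij']
```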

Notes:

- SSRF protection is always applied (private/internal hosts are blocked).
- `robots.txt` is checked before each fetch when `protego` is installed.
- When output is truncated, the response includes a continuation hint with the next `start_index`.
- If `proxy_url` is omitted, the server falls back to `FETCH_PROXY_URL` when it is set.
- `proxy_url` must be a public HTTP(S) host (private/internal proxy hosts are blocked).
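The SSRF and proxy rules above can be approximated with the standard library. The sketch below is an assumption about how such a check might work, not the code in `content.py`: it rejects non-HTTP(S) schemes, rejects hosts whose addresses are not globally routable according to `ipaddress.ip_address(...).is_global`, and applies the same test to the proxy chosen from `proxy_url` or `FETCH_PROXY_URL`. All function names are hypothetical.

```python
import ipaddress
import os
import socket
from typing import Optional
from urllib.parse import urlsplit


def _is_global_ip(addr: str) -> bool:
    # Drop an IPv6 zone suffix such as "%eth0" before parsing.
    return ipaddress.ip_address(addr.split("%", 1)[0]).is_global


def is_public_host(host: str) -> bool:
    """True only if the host (literal or resolved) maps exclusively to global addresses."""
    try:
        return _is_global_ip(host)
    except ValueError:
        pass  # Not an IP literal; resolve the name instead.
    try:
        infos = socket.getaddrinfo(host, None)
    except socket.gaierror:
        return False  # Unresolvable hosts are treated as unsafe.
    return bool(infos) and all(_is_global_ip(info[4][0]) for info in infos)


def is_allowed_url(url: str) -> bool:
    """Accept only http(s) URLs whose host is public."""
    parts = urlsplit(url)
    if parts.scheme not in ("http", "https") or not parts.hostname:
        return False
    return is_public_host(parts.hostname)


def resolve_proxy(proxy_url: Optional[str] = None) -> Optional[str]:
    """Use the per-call proxy, else FETCH_PROXY_URL; reject private proxy hosts."""
    chosen = proxy_url or os.environ.get("FETCH_PROXY_URL")
    if not chosen:
        return None
    if not is_allowed_url(chosen):
        raise ValueError(f"blocked proxy URL: {chosen!r}")
    return chosen
```

A check like this is only a first line of defense: because the name is resolved here and again when the request is made, a hardened implementation would also pin the vetted address for the actual connection (and re-check every redirect) to resist DNS rebinding.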

Install the `web` extra for the highest-quality `fetch_webpage` experience:

```bash
pip install 'gemini-research-mcp[web]'
# or
uv add 'gemini-research-mcp[web]'
```

Without `[web]`, `fetch_webpage` still works using the built-in HTML fallback, but trafilatura extraction and protego-based `robots.txt` checks are unavailable.
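The optional-dependency behavior described above follows a common Python pattern: try the richer extractor, then fall back to something built in. This sketch assumes `trafilatura.extract(html)` as the preferred path and uses the standard library's `html.parser` as a stand-in for the server's built-in fallback, whose actual implementation is not described here. `extract_text`, `fallback_extract`, and `_TextCollector` are hypothetical names.

```python
from html.parser import HTMLParser
from typing import List, Optional

try:
    import trafilatura  # Installed by the [web] extra.
except ImportError:
    trafilatura = None


class _TextCollector(HTMLParser):
    """Collect visible text, skipping script/style/noscript contents."""

    SKIP = {"script", "style", "noscript"}

    def __init__(self) -> None:
        super().__init__()
        self.parts: List[str] = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.parts.append(data.strip())


def fallback_extract(html: str) -> str:
    """Crude text extraction with no third-party dependencies."""
    collector = _TextCollector()
    collector.feed(html)
    collector.close()
    return " ".join(collector.parts)


def extract_text(html: str) -> str:
    """Prefer trafilatura when available; otherwise use the fallback."""
    if trafilatura is not None:
        extracted: Optional[str] = trafilatura.extract(html)
        if extracted:
            return extracted
    return fallback_extract(html)
```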

Power User Workflow

Key insight: Gemini Deep Research runs asynchronously on Google's servers. Even if VS Code disconnects, your research continues, and the `resume_research` tool retrieves the completed work.
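Because the job lives on Google's side, recovering it only requires a stored identifier and a status check. The loop below is a hypothetical sketch of that idea, not the `resume_research` implementation: `get_status` stands in for whatever call returns the job state, and the `"status"` values `in_progress`, `completed`, and `failed` are assumptions. The default 20-minute timeout mirrors the upper end of the `research_deep` latency listed above.

```python
import time
from typing import Any, Callable, Dict


def wait_for_completion(
    interaction_id: str,
    get_status: Callable[[str], Dict[str, Any]],  # hypothetical status lookup
    timeout_s: float = 20 * 60,
    initial_delay_s: float = 5.0,
    max_delay_s: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> Dict[str, Any]:
    """Poll a server-side research job until it finishes or the deadline passes."""
    deadline = clock() + timeout_s
    delay = initial_delay_s
    while True:
        state = get_status(interaction_id)
        if state.get("status") in ("completed", "failed"):
            return state
        if clock() + delay > deadline:
            # The job keeps running remotely; only this client stops waiting.
            raise TimeoutError(f"{interaction_id!r} still running after {timeout_s}s")
        sleep(delay)
        delay = min(delay * 2, max_delay_s)  # Exponential backoff, capped.
```

Injecting `sleep` and `clock` keeps the loop testable without real waiting, and a timeout here does not cancel anything: the session can still be resumed later.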

Features

- Auto-Clarification: `research_deep` asks clarifying questions for vague queries via MCP Elicitation
- MCP Tasks: Real-time progress with streaming updates
- Session Persistence: Research sessions are automatically saved and can be resumed later
- Export Formats: Export to Markdown, JSON, or professional DOCX with a Table of Contents
- File Search: Search your own data alongside the web using `file_search_store_names`
- Format Instructions: Control report structure (sections, tables, tone)
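Session persistence is backed by SQLite under `~/.gemini-research/` (see the architecture diagram). A minimal sketch of such a store, assuming a single `sessions` table keyed by an interaction ID, is shown below. The schema, the `sessions.db` file name, and the `SessionStore` class are hypothetical; the real `storage.py` may be organized differently.

```python
import json
import sqlite3
import time
from pathlib import Path
from typing import Any, Dict, List, Optional

# Hypothetical schema for illustration only.
SCHEMA = """
CREATE TABLE IF NOT EXISTS sessions (
    interaction_id TEXT PRIMARY KEY,
    query          TEXT NOT NULL,
    status         TEXT NOT NULL,
    report         TEXT,
    metadata       TEXT NOT NULL DEFAULT '{}',
    updated_at     REAL NOT NULL
)
"""


def default_db_path() -> Path:
    # The directory comes from the README; the file name is an assumption.
    directory = Path.home() / ".gemini-research"
    directory.mkdir(parents=True, exist_ok=True)
    return directory / "sessions.db"


class SessionStore:
    def __init__(self, path: str = ":memory:") -> None:
        self.conn = sqlite3.connect(path)
        self.conn.execute(SCHEMA)

    def save(self, interaction_id: str, query: str, status: str,
             report: Optional[str] = None,
             metadata: Optional[Dict[str, Any]] = None) -> None:
        # Upsert, so a resumed session overwrites its earlier in-progress row.
        with self.conn:
            self.conn.execute(
                "INSERT INTO sessions (interaction_id, query, status, report, metadata, updated_at) "
                "VALUES (?, ?, ?, ?, ?, ?) "
                "ON CONFLICT(interaction_id) DO UPDATE SET "
                "status = excluded.status, report = excluded.report, "
                "metadata = excluded.metadata, updated_at = excluded.updated_at",
                (interaction_id, query, status, report,
                 json.dumps(metadata or {}), time.time()),
            )

    def list_sessions(self) -> List[Dict[str, Any]]:
        rows = self.conn.execute(
            "SELECT interaction_id, query, status FROM sessions ORDER BY updated_at DESC"
        ).fetchall()
        return [{"interaction_id": r[0], "query": r[1], "status": r[2]} for r in rows]

    def pending(self) -> List[str]:
        """IDs of sessions that were still running when last saved."""
        rows = self.conn.execute(
            "SELECT interaction_id FROM sessions WHERE status = 'in_progress'"
        ).fetchall()
        return [r[0] for r in rows]
```

In a real deployment the store would be opened with `SessionStore(str(default_db_path()))`; the in-memory default keeps the sketch side-effect free.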

Installation

PyPI (recommended)

```bash
pip install gemini-research-mcp
# or
uv add gemini-research-mcp
```

Claude Desktop (MCPB Bundle)

Download the .mcpb bundle from GitHub Releases and open it in Claude Desktop for single-click installation.

The bundle uses the uv runtime: dependencies are installed automatically, and no separate Python installation is required.

Configuration

| Variable | Required | Default | Description |
|---|---|---|---|
| `GEMINI_API_KEY` | Yes | (none) | Google AI Studio API key |
| `GEMINI_MODEL` | No | `gemini-3.1-pro-preview` | Model for `research_web` |
| `GEMINI_SUMMARY_MODEL` | No | `gemini-3-flash-preview` | Model for session summaries (fast) |
| `DEEP_RESEARCH_AGENT` | No | `deep-research-pro-preview-12-2025` | Agent for `research_deep` |
| `FETCH_PROXY_URL` | No | (none) | Default HTTP(S) proxy for `fetch_webpage` |

```bash
cp .env.example .env
# Edit .env with your API key
```
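These variables resolve in the usual way: they are read from the process environment, and the defaults from the table apply when a variable is unset. The sketch below assumes exactly that; `Settings` and `load_settings` are hypothetical names rather than the package's configuration API, and loading `.env` itself is left to the client (for example, VS Code's `envFile`).

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class Settings:
    api_key: str
    model: str
    summary_model: str
    deep_research_agent: str
    fetch_proxy_url: Optional[str]


def load_settings(env: Mapping[str, str] = os.environ) -> Settings:
    """Build settings from the environment, applying the documented defaults."""
    api_key = env.get("GEMINI_API_KEY")
    if not api_key:
        raise RuntimeError("GEMINI_API_KEY is required")
    return Settings(
        api_key=api_key,
        model=env.get("GEMINI_MODEL", "gemini-3.1-pro-preview"),
        summary_model=env.get("GEMINI_SUMMARY_MODEL", "gemini-3-flash-preview"),
        deep_research_agent=env.get("DEEP_RESEARCH_AGENT", "deep-research-pro-preview-12-2025"),
        # An empty string is treated the same as "not set".
        fetch_proxy_url=env.get("FETCH_PROXY_URL") or None,
    )
```

Passing an explicit mapping instead of `os.environ` makes the defaults easy to check in isolation.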

Usage

VS Code MCP

Add to `.vscode/mcp.json`:

```json
{
  "servers": {
    "gemini-research": {
      "command": "uvx",
      "args": ["gemini-research-mcp"],
      "env": {
        "GEMINI_API_KEY": "your-api-key"
      }
    }
  }
}
```

Or run from source:

```json
{
  "servers": {
    "gemini-research": {
      "command": "uv",
      "args": ["run", "--directory", "path/to/gemini-research-mcp", "gemini-research-mcp"],
      "envFile": "${workspaceFolder}/path/to/gemini-research-mcp/.env"
    }
  }
}
```

Command Line

```bash
uv run gemini-research-mcp
# or
uvx gemini-research-mcp
```

DOCX Export

Export research sessions to professional Word documents with:

- Cover page with title, date, and research metadata

- Clickable Table of Contents with navigation to sections

- Professional typography: Calibri fonts, 1-inch margins, 1.5x line spacing

- Executive summary with elegant formatting

- Full research report with proper heading hierarchy

- Sources section with full clickable URLs

- Metadata table with session details

VS Code Setup

To enable DOCX export, install with the `[docx]` extra:

```json
{
  "servers": {
    "gemini-research": {
      "command": "uvx",
      "args": ["--from", "gemini-research-mcp[docx]", "gemini-research-mcp"],
      "env": {
        "GEMINI_API_KEY": "your-api-key"
      }
    }
  }
}
```

Downloading Files

After you run `export_research_session` with `format: "docx"`, the tool returns a resource URI:

```
research://exports/{export_id}
```

In VS Code Copilot Chat, you can:

- Click "Save" on the resource attachment to download the `.docx` file
- Drag and drop it from the chat into your workspace

Installation (pip/uv)

```bash
# Install with DOCX support
pip install 'gemini-research-mcp[docx]'
# or
uv add 'gemini-research-mcp[docx]'
```

Features

| Feature | Description |
|---|---|
| Cover Page | Title, date, duration, tokens, AI agent |
| Clickable TOC | Internal hyperlinks navigate to sections |
| Syntax Highlighting | Pygments-powered code blocks with GitHub colors |
| Professional Styling | Calibri fonts, proper heading hierarchy (H1-H4) |
| Page Margins | Standard 1-inch (2.54 cm) margins |
| Heading Spacing | `keep_with_next` prevents orphan headings |
| Sources | Full URLs as clickable hyperlinks |
| Pure Python | No external binaries (Pandoc not required) |

Resources

MCP Resources provide read-only data that clients can access:

| Resource | Description |
|---|---|
| `research://models` | Available models and their capabilities |
| `research://exports` | List of cached exports ready for download |
| `research://exports/{id}` | Download an exported file (Markdown, JSON, or DOCX) |

File Downloads

The `export_research_session` tool creates exports and returns a resource URI. Clients such as VS Code can then fetch the resource to download the file with proper MIME type handling.
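Resolving an export URI amounts to matching the `research://exports/{id}` template and looking up the cached file along with its MIME type. The sketch below assumes an in-memory cache keyed by export ID, IDs made of letters, digits, `_`, and `-`, and format keys `markdown`, `json`, and `docx`; the cache, the `Export` class, and `resolve_export` are hypothetical. The MIME strings themselves are the standard registered types.

```python
import re
from dataclasses import dataclass
from typing import Dict, Optional

# Standard MIME types; the dictionary keys are assumed format names.
MIME_TYPES = {
    "markdown": "text/markdown",
    "json": "application/json",
    "docx": "application/vnd.openxmlformats-officedocument.wordprocessingml.document",
}

# Assumed ID alphabet for the research://exports/{id} template.
EXPORT_URI = re.compile(r"^research://exports/(?P<export_id>[A-Za-z0-9_-]+)$")


@dataclass
class Export:
    filename: str
    fmt: str
    data: bytes

    @property
    def mime_type(self) -> str:
        return MIME_TYPES[self.fmt]


def resolve_export(uri: str, cache: Dict[str, Export]) -> Optional[Export]:
    """Map research://exports/{id} to a cached export, or None if it does not match or is unknown."""
    match = EXPORT_URI.match(uri)
    if match is None:
        return None
    return cache.get(match.group("export_id"))
```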

Development

```bash
uv sync --extra dev
uv run pytest
uv run mypy src/
uv run ruff check src/
```

Tests

```bash
uv run pytest                                  # Unit tests
uv run pytest -m e2e                           # E2E tests (requires GEMINI_API_KEY)
uv run pytest --cov=src/gemini_research_mcp    # With coverage
```

Pricing

| Tool | Typical Cost |
|---|---|
| `research_web` | ~$0.01-0.05 per query |
| `research_deep` | ~$2-5 per task |

Deep Research uses ~80-160 searches and ~250k-900k tokens per task.
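The typical per-call ranges make a rough budget estimate straightforward. This sketch only multiplies the ranges from the table; it is not a price quote, and actual charges depend on current Gemini pricing and on how many searches and tokens each task consumes. `estimate_cost` is a hypothetical helper.

```python
from typing import Tuple

# Typical per-call cost ranges in USD, copied from the pricing table above.
WEB_COST = (0.01, 0.05)
DEEP_COST = (2.0, 5.0)


def estimate_cost(web_queries: int, deep_tasks: int) -> Tuple[float, float]:
    """Return a (low, high) USD estimate for a mix of research_web and research_deep calls."""
    low = web_queries * WEB_COST[0] + deep_tasks * DEEP_COST[0]
    high = web_queries * WEB_COST[1] + deep_tasks * DEEP_COST[1]
    return round(low, 2), round(high, 2)
```

For example, 100 `research_web` queries plus 10 `research_deep` tasks come to roughly $21-55, with the deep tasks dominating the total.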

License

MIT


Download files



File details

Details for the file gemini_research_mcp-0.12.0.tar.gz.

File metadata

- Download URL: gemini_research_mcp-0.12.0.tar.gz
- Size: 25.2 MB
- Tags: Source
- Uploaded using Trusted Publishing? Yes
- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | `4b7161b28cb405835bdc847125ebbbe0d77c48d087cb54c1eb3eaf91283dba23` |
| MD5 | `e21a7285f8d39ae1753a08aa5148b3fb` |
| BLAKE2b-256 | `be833054c70ad6603ad70daad04aaaf25e17a71436a333bb4f64f79d828cf3bf` |

Provenance

The following attestation bundles were made for gemini_research_mcp-0.12.0.tar.gz:

- Publisher: publish.yml on machinemates-ai/gemini-research-mcp
- Statement:
  - Statement type: https://in-toto.io/Statement/v1
  - Predicate type: https://docs.pypi.org/attestations/publish/v1
  - Subject name: gemini_research_mcp-0.12.0.tar.gz
  - Subject digest: 4b7161b28cb405835bdc847125ebbbe0d77c48d087cb54c1eb3eaf91283dba23
  - Sigstore transparency entry: 1076375285
  - Permalink: machinemates-ai/gemini-research-mcp@7dbfc6c79062cc24c798ddf66682f4febe9c50d3
  - Branch / Tag: refs/tags/v0.12.0
  - Owner: https://github.com/machinemates-ai
  - Access: public
  - Token Issuer: https://token.actions.githubusercontent.com
  - Runner Environment: github-hosted
  - Publication workflow: publish.yml@7dbfc6c79062cc24c798ddf66682f4febe9c50d3
  - Trigger Event: release

File details

Details for the file gemini_research_mcp-0.12.0-py3-none-any.whl.

File metadata

- Download URL: gemini_research_mcp-0.12.0-py3-none-any.whl
- Size: 73.9 kB
- Tags: Python 3
- Uploaded using Trusted Publishing? Yes
- Uploaded via: twine/6.1.0 CPython/3.13.7

File hashes

| Algorithm | Hash digest |
|---|---|
| SHA256 | `c0eb5d9c2c20bb043d7dd1177b91968f8cc45ceecd123963315dc483d8c0644f` |
| MD5 | `81f5da455577e0bdb412b624391576fb` |
| BLAKE2b-256 | `d8a9e06beeeaab361aea4b5747f91d176353a7f60c008b9064393d7a9520eb99` |

Provenance

The following attestation bundles were made for gemini_research_mcp-0.12.0-py3-none-any.whl:

- Publisher: publish.yml on machinemates-ai/gemini-research-mcp
- Statement:
  - Statement type: https://in-toto.io/Statement/v1
  - Predicate type: https://docs.pypi.org/attestations/publish/v1
  - Subject name: gemini_research_mcp-0.12.0-py3-none-any.whl
  - Subject digest: c0eb5d9c2c20bb043d7dd1177b91968f8cc45ceecd123963315dc483d8c0644f
  - Sigstore transparency entry: 1076375361
  - Permalink: machinemates-ai/gemini-research-mcp@7dbfc6c79062cc24c798ddf66682f4febe9c50d3
  - Branch / Tag: refs/tags/v0.12.0
  - Owner: https://github.com/machinemates-ai
  - Access: public
  - Token Issuer: https://token.actions.githubusercontent.com
  - Runner Environment: github-hosted
  - Publication workflow: publish.yml@7dbfc6c79062cc24c798ddf66682f4febe9c50d3
  - Trigger Event: release