Geometric LLM hallucination detection. No second LLM. Deterministic. Auditable.

Project description

Geometric LLM hallucination detection. No second LLM. Deterministic. Auditable.

Documentation | Research Papers | Examples | Vision | Contributing

groundlens detects LLM hallucinations using embedding geometry instead of a second LLM. It computes deterministic, auditable scores from the spatial relationships between questions, responses, and source context in an embedding space. The result is a verification signal you can explain in an audit, reproduce on demand, and run in regulated environments.

Why groundlens?

| Problem | How groundlens solves it |

|---|---|

| Second-LLM judges are non-deterministic and expensive | Single embedding model (all-MiniLM-L6-v2), deterministic output, sub-second latency |

| Probabilistic scores cannot be audited | Geometric ratios and angular measurements with clear mathematical definitions |

| Regulatory compliance requires explainability | Every score traces to Euclidean distances and cosine similarities in $\mathbf{R}^n$ (n-dimensional real vector space query/anwser) |

| One method does not fit all use cases | SGI for RAG/context verification, DGI for context-free chat, evaluate() auto-selects |

SGI: Semantic Grounding Index | DGI: Directional Grounding Index

I want to...

| Goal | Start here |

|---|---|

| Verify my RAG pipeline outputs | SGI quick start · RAG verification guide |

| Score chat responses without context | DGI quick start · DGI deep dive |

| Evaluate a batch of outputs | Batch evaluation · Batch guide |

| Wrap my LLM provider with auto-scoring | Provider guard · Providers docs |

| Integrate with LangChain / CrewAI / etc. | Integrations · Integration docs |

| Improve accuracy for my domain | Domain calibration · Calibration guide |

| Comply with the EU AI Act | EU AI Act guide |

| Understand the math | How it works · Research papers |

| Understand what it can and cannot detect | Hallucination taxonomy |

| Check my environment is set up correctly | groundlens doctor |

| Contribute | CONTRIBUTING.md · CLAUDE.md · AGENTS.md |

Installation

pip install groundlens

With LLM provider support:

pip install "groundlens[openai]" # OpenAI

pip install "groundlens[anthropic]" # Anthropic

pip install "groundlens[google]" # Google Generative AI

pip install "groundlens[providers]" # All providers

With framework integrations:

pip install "groundlens[langchain]" # LangChain

pip install "groundlens[crewai]" # CrewAI

pip install "groundlens[semantic-kernel]" # Semantic Kernel

pip install "groundlens[autogen]" # AutoGen

pip install "groundlens[all]" # Everything

Requirements: Python 3.10+, numpy, sentence-transformers.

Quick start

SGI -- with context (RAG verification)

SGI (Semantic Grounding Index) measures whether a response engaged with the provided context or stayed anchored to the question. It requires three inputs.

from groundlens import compute_sgi

result = compute_sgi(

question="What is the capital of France?",

context="France is in Western Europe. Its capital is Paris.",

response="The capital of France is Paris.",

)

print(result.value) # 1.23 — ratio of distances

print(result.normalized) # 0.61 — mapped to [0, 1]

print(result.flagged) # False — above review threshold

print(result.explanation) # "SGI=1.230 — strong context engagement (pass)"

Interpretation: SGI > 1.0 means the response is closer to the context than to the question in embedding space. The response engaged with the source material.

DGI -- without context

DGI (Directional Grounding Index) detects hallucinations without requiring source context. It checks whether the question-to-response displacement vector aligns with the characteristic direction of verified grounded responses.

from groundlens import compute_dgi

result = compute_dgi(

question="What causes seasons on Earth?",

response="Seasons are caused by Earth's 23.5-degree axial tilt.",

)

print(result.value) # 0.42 — cosine similarity to reference direction

print(result.normalized) # 0.71 — mapped to [0, 1]

print(result.flagged) # False — above pass threshold (0.30)

Domain calibration improves DGI accuracy from AUROC ~0.8 with a basic calibration to 0.90-0.99 with domain-specific calibration:

from groundlens import compute_dgi

result = compute_dgi(

question="What is the statute of limitations for breach of contract in California?",

response="Four years under California Code of Civil Procedure Section 337.",

reference_csv="legal_calibration_pairs.csv",

)

evaluate() -- auto-select

The evaluate() function picks the right method automatically: SGI when context is provided, DGI when it is not.

from groundlens import evaluate

# With context -> SGI

score = evaluate(

question="What is X?",

response="X is Y.",

context="According to the manual, X is Y.",

)

assert score.method == "sgi"

# Without context -> DGI

score = evaluate(

question="What is X?",

response="X is Y.",

)

assert score.method == "dgi"

Batch evaluation

from groundlens import evaluate_batch

items = [

{"question": "Q1?", "response": "A1.", "context": "Source."},

{"question": "Q2?", "response": "A2."},

{"question": "Q3?", "response": "A3.", "context": "Reference."},

]

results = evaluate_batch(items)

flagged = [r for r in results if r.flagged]

print(f"{len(flagged)}/{len(results)} flagged for review")

CLI

# Check environment health

groundlens doctor

# Single response check

groundlens check \

--question "What is the capital of France?" \

--response "The capital of France is Paris." \

--context "France is in Western Europe. Its capital is Paris."

# Batch CSV evaluation

groundlens evaluate input.csv --output results.csv

# Domain calibration

groundlens calibrate --pairs domain_pairs.csv --output calibration.json

# Run the confabulation benchmark

groundlens benchmark

LLM provider guard

from groundlens.providers.openai import OpenAIProvider

provider = OpenAIProvider(model="gpt-4o")

response = provider.complete(

prompt="Summarize this document.",

context="The document text here...",

)

if response.groundlens_score and response.groundlens_score.flagged:

print("Hallucination risk detected — review recommended.")

else:

print(response.text)

Taxonomy of LLM hallucinations

Not all hallucinations are the same. Groundlens is built on a geometric taxonomy (arXiv:2602.13224) that classifies hallucinations by their geometric signature in embedding space — which determines whether they are detectable and which scoring method applies.

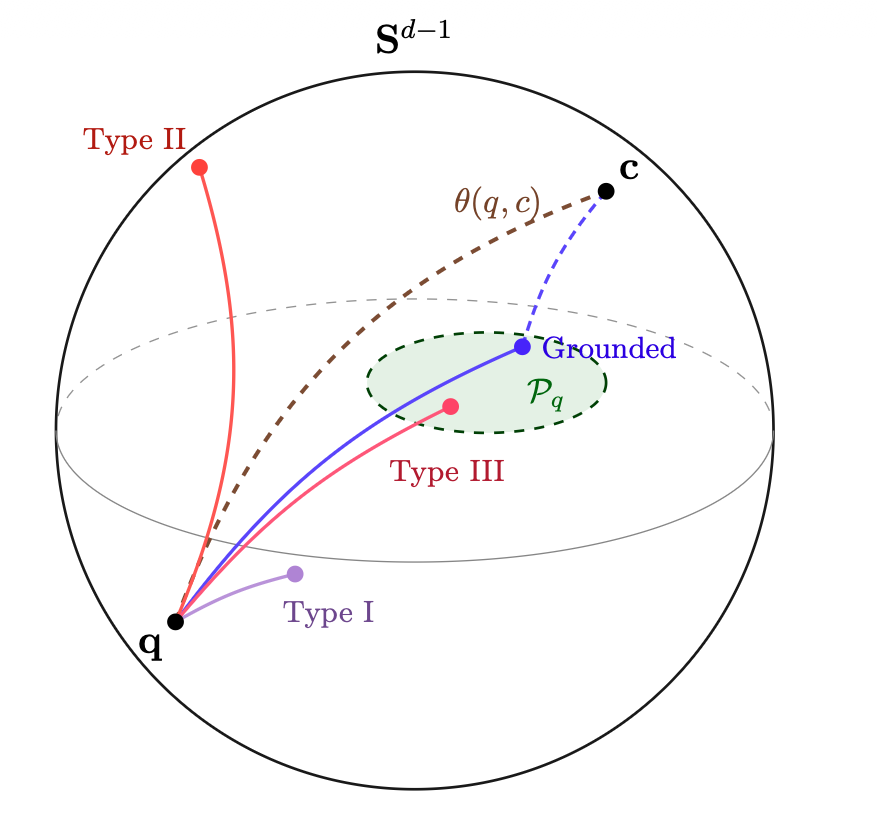

Every text maps to a point on the hypersphere Sd−1. The question q and context c define a geodesic arc. Grounded responses (blue) fall inside the plausibility region 𝒫q. Type I (purple) stays near q — the response ignored the context. Type II (red) deviates far from both q and c — invented content. Type III (pink) lands inside 𝒫q alongside the correct answer — same vocabulary and structure, wrong facts, geometrically indistinguishable.

| Type | What happens | Example | Detection |

|---|---|---|---|

| Type I — Unfaithfulness | Response ignores the provided source and defaults to the question | RAG system returns an answer from memory instead of from the retrieved document | SGI (distance ratio) |

| Type II — Confabulation | Response invents content outside the topic's vocabulary | Asked about CRISPR gene editing, the model describes protein-folding correction instead | DGI (displacement direction) |

| Type III — Within-frame error | Response uses the right vocabulary and structure but gets the facts wrong | "The capital of Australia is Canberra" vs. "The capital of Australia is Sydney" — same frame, wrong city | Undetectable by geometry |

Why Type III is undetectable: Sentence embeddings encode distributional similarity (vocabulary, syntax, co-occurrence), not truth value. Two responses that share the same words, entities, and syntactic frame land in the same region of embedding space regardless of which one is correct. This is not a limitation of groundlens — it is a property of the distributional hypothesis (Harris, 1954) that constrains every embedding-based method, including NLI (which inverts to AUROC 0.311 on TruthfulQA, actively favoring false answers over truthful ones).

Implications: Groundlens is verification triage — it detects the hallucination types that leave geometric traces (Types I and II), which are the most common and most damaging in production. For Type III errors in high-stakes domains (medical, legal, financial), complement groundlens with claim-level fact-checking tools on the outputs that pass geometric verification. See Complementary Tools for Type III.

Scoring methods

Each scoring method targets a specific hallucination type from the taxonomy above.

SGI (Semantic Grounding Index) — detects Type I

When context is available, SGI measures whether the response engaged with the source or stayed anchored to the question:

SGI = dist(phi(response), phi(question)) / dist(phi(response), phi(context))

| Score | Interpretation |

|---|---|

| SGI > 1.20 | Strong context engagement (pass) |

| 0.95 < SGI < 1.20 | Partial engagement (review recommended) |

| SGI < 0.95 | Weak engagement (flagged — possible Type I) |

DGI (Directional Grounding Index) — detects Type II

When no context is available, DGI checks whether the question-to-response displacement aligns with a learned "grounded direction":

delta = phi(response) - phi(question)

DGI = dot(delta / ||delta||, mu_hat)

| Score | Interpretation |

|---|---|

| DGI > 0.30 $^1$ | Aligns with grounded patterns (pass) |

| 0.00 < DGI < 0.30 | Weak alignment (flagged — possible Type II) |

| DGI < 0.00 | Opposes grounded direction (high risk) |

$^1$ This score corresponds to a general calibration. In domain-specific calibrations the score can vary.

Providers and integrations

| Component | Install extra | Description |

|---|---|---|

| OpenAI | openai |

Wraps openai SDK with automatic scoring |

| Anthropic | anthropic |

Wraps anthropic SDK with automatic scoring |

google |

Wraps google-generativeai with automatic scoring |

|

| LangChain | langchain |

Evaluator + callback handler |

| CrewAI | crewai |

Tool for agent pipelines |

| Semantic Kernel | semantic-kernel |

Function calling filter |

| AutoGen | autogen |

Agent chat checker |

Domain calibration

Generic DGI uses a bundled reference direction that achieves AUROC ~0.8 with a basic calibration. For production use, a domain-specific calibration can be applied (a minimum of 200 queries recommended):

from groundlens import calibrate

result = calibrate(csv_path="my_domain_pairs.csv")

print(f"Concentration: {result.concentration:.2f}")

result.save("calibration.json")

Domain-specific calibration typically reaches AUROC 0.90-0.99. The confabulation benchmark (arXiv:2603.13259) reports DGI AUROC 0.958 with domain calibration.

Architecture

┌─────────────────────────────────────────────┐

│ Public API (evaluate) │

├──────────────────┬──────────────────────────┤

│ SGI (sgi.py) │ DGI (dgi.py) │

├──────────────────┴──────────────────────────┤

│ _internal (geometry, embeddings) │

├─────────────────────────────────────────────┤

│ sentence-transformers (all-MiniLM-L6-v2) │

└─────────────────────────────────────────────┘

▲ ▲

│ │

┌─────┴──────┐ ┌───────┴──────┐

│ Providers │ │ Integrations │

│ (OpenAI, │ │ (LangChain, │

│ Anthropic,│ │ CrewAI, │

│ Google) │ │ SK, AutoGen │

└────────────┘ └──────────────┘

See AGENTS.md for detailed file-by-file documentation. See CLAUDE.md for AI-assisted development guidelines.

Research

groundlens implements the methods described in three research papers:

-

Semantic Grounding Index (SGI) Marin, J. (2025). Semantic Grounding Index for LLM Hallucination Detection. arXiv:2512.13771

-

Directional Grounding Index (DGI) Marin, J. (2026). A Geometric Taxonomy of Hallucinations in Large Language Models. arXiv:2602.13224

-

Mechanistic Interpretability Marin, J. (2026). Rotational Dynamics of Factual Constraint Processing in Large Language Models. arXiv:2603.13259

-

Hallucination Benchmark https://github.com/groundlens-dev/grounding-benchmark/blob/4abf98ec5d2f846850a44f713115323659c2a793/paper/A_Methodology_for_Building_Human_Confabulated_Hallucination_Benchmarks.pdf

Security

See SECURITY.md for vulnerability reporting, scope, and response timelines.

Contributing

See CONTRIBUTING.md for development setup, code standards, and PR process.

# Quick start for contributors

git clone https://github.com/groundlens-dev/groundlens.git

cd groundlens

pip install -e ".[dev]"

pre-commit install

groundlens doctor # verify your environment

pytest tests/unit/ # run fast tests

About

Groundlens is built and maintained by Javier Marin -- an engineer who has reinvented himself more times than most people change jobs. The math comes from engineering, the skepticism from regulated industries, and the stubbornness from experience. Read the origin story.

License

MIT -- Javier Marin (javier@jmarin.info)

Project details

Release history Release notifications | RSS feed

Download files

Download the file for your platform. If you're not sure which to choose, learn more about installing packages.

Source Distribution

Built Distribution

Filter files by name, interpreter, ABI, and platform.

If you're not sure about the file name format, learn more about wheel file names.

Copy a direct link to the current filters

File details

Details for the file groundlens-2026.5.10.tar.gz.

File metadata

- Download URL: groundlens-2026.5.10.tar.gz

- Upload date:

- Size: 55.7 kB

- Tags: Source

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.12

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

a354a449655511074d2f0f20fb5bb0dd00da56186ef93c63d3af660035e4deec

|

|

| MD5 |

0a9f53f7c40a7ab63dd8b396a72b94f4

|

|

| BLAKE2b-256 |

c63bd3f28afb2d51a04c04512c2ec8a77c7e353b1fd638c39a33fa9bf987b8c8

|

Provenance

The following attestation bundles were made for groundlens-2026.5.10.tar.gz:

Publisher:

release.yml on groundlens-dev/groundlens

-

Statement:

-

Statement type:

https://in-toto.io/Statement/v1 -

Predicate type:

https://docs.pypi.org/attestations/publish/v1 -

Subject name:

groundlens-2026.5.10.tar.gz -

Subject digest:

a354a449655511074d2f0f20fb5bb0dd00da56186ef93c63d3af660035e4deec - Sigstore transparency entry: 1509349585

- Sigstore integration time:

-

Permalink:

groundlens-dev/groundlens@bc6d60ed03d2757fb71fa9317cef44f1da7d7f79 -

Branch / Tag:

refs/tags/v2026.5.10 - Owner: https://github.com/groundlens-dev

-

Access:

public

-

Token Issuer:

https://token.actions.githubusercontent.com -

Runner Environment:

github-hosted -

Publication workflow:

release.yml@bc6d60ed03d2757fb71fa9317cef44f1da7d7f79 -

Trigger Event:

push

-

Statement type:

File details

Details for the file groundlens-2026.5.10-py3-none-any.whl.

File metadata

- Download URL: groundlens-2026.5.10-py3-none-any.whl

- Upload date:

- Size: 54.5 kB

- Tags: Python 3

- Uploaded using Trusted Publishing? Yes

- Uploaded via: twine/6.1.0 CPython/3.13.12

File hashes

| Algorithm | Hash digest | |

|---|---|---|

| SHA256 |

2445a9a24faad55d5f0b31063556030618a9a383eada07f8f0923355261e771c

|

|

| MD5 |

adc3c3fda40f578f2a4d8b62043ed3af

|

|

| BLAKE2b-256 |

d568d0100c912c6bedd6092bab720169644a6ead7f9a66a1ca1f96e9e0b34402

|

Provenance

The following attestation bundles were made for groundlens-2026.5.10-py3-none-any.whl:

Publisher:

release.yml on groundlens-dev/groundlens

-

Statement:

-

Statement type:

https://in-toto.io/Statement/v1 -

Predicate type:

https://docs.pypi.org/attestations/publish/v1 -

Subject name:

groundlens-2026.5.10-py3-none-any.whl -

Subject digest:

2445a9a24faad55d5f0b31063556030618a9a383eada07f8f0923355261e771c - Sigstore transparency entry: 1509349811

- Sigstore integration time:

-

Permalink:

groundlens-dev/groundlens@bc6d60ed03d2757fb71fa9317cef44f1da7d7f79 -

Branch / Tag:

refs/tags/v2026.5.10 - Owner: https://github.com/groundlens-dev

-

Access:

public

-

Token Issuer:

https://token.actions.githubusercontent.com -

Runner Environment:

github-hosted -

Publication workflow:

release.yml@bc6d60ed03d2757fb71fa9317cef44f1da7d7f79 -

Trigger Event:

push

-

Statement type: